Deploying a multi-node Kubernetes cluster with kubeadm

Set the hostnames

Run the matching command on each of the three hosts:

hostnamectl set-hostname master-1 && exec bash
hostnamectl set-hostname node-1 && exec bash
hostnamectl set-hostname node-2 && exec bash

Edit the hosts file

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4
::1 localhost localhost.localdomain localhost6 localhost6.localdomain6
192.168.10.29 master-1
192.168.10.30 node-1
192.168.10.31 node-2
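Because the same three entries go onto every host, the edit can be scripted. The following is only a minimal sketch, assuming the addresses listed above; `add_host_entry` is a hypothetical helper (not a standard tool), and it takes the target file as a parameter so it can be tried on a copy before touching /etc/hosts.

```shell
# add_host_entry FILE IP NAME
# Appends "IP NAME" to FILE unless that exact line is already present,
# so running it twice does not create duplicates.
add_host_entry() {
    file=$1 ip=$2 name=$3
    grep -qxF "$ip $name" "$file" 2>/dev/null || printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Example (commented out; run as root on each host):
# add_host_entry /etc/hosts 192.168.10.29 master-1
# add_host_entry /etc/hosts 192.168.10.30 node-1
# add_host_entry /etc/hosts 192.168.10.31 node-2
```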

Set up passwordless SSH between the hosts

ssh-keygen
ssh-copy-id master-1
ssh-copy-id node-1
ssh-copy-id node-2

Disable swap

Turn swap off now, then comment out the swap entry in /etc/fstab so it stays off after a reboot:

swapoff -a
vim /etc/fstab

#
# /etc/fstab
# Created by anaconda on Sun Feb 7 10:14:45 2021
#
# Accessible filesystems, by reference, are maintained under '/dev/disk'
# See man pages fstab(5), findfs(8), mount(8) and/or blkid(8) for more info
#
/dev/mapper/centos-root / xfs defaults 0 0
UUID=ec65c557-715f-4f2b-beae-ec564c71b66b /boot xfs defaults 0 0
#/dev/mapper/centos-swap swap swap defaults 0 0
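The manual edit in vim can also be done with sed. This is a sketch rather than a tested procedure for every fstab layout: `comment_out_swap` is a hypothetical helper that comments out any active line containing a whitespace-delimited `swap` field and keeps a `.bak` copy of the original file.

```shell
# comment_out_swap FILE
# Prefixes '#' to every line that is not already a comment and contains
# " swap " as a whitespace-delimited field. A backup is left in FILE.bak.
comment_out_swap() {
    sed -i.bak '/^[^#].*[[:space:]]swap[[:space:]]/ s/^/#/' "$1"
}

# Example (commented out; run as root):
# swapoff -a && comment_out_swap /etc/fstab
```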

Load the br_netfilter module and set the kernel parameters

modprobe br_netfilter
echo "modprobe br_netfilter" >> /etc/profile
cat > /etc/sysctl.d/k8s.conf <<EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
EOF
sysctl -p /etc/sysctl.d/k8s.conf
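Appending `modprobe` to /etc/profile only loads the module when someone logs in. On systemd hosts an alternative is a file under /etc/modules-load.d, which is read at boot. The sketch below writes that file together with the same sysctl settings; `write_k8s_kernel_config` and its ROOT parameter are hypothetical, added here so the result can be inspected in a scratch directory first.

```shell
# write_k8s_kernel_config ROOT
# Writes the module-load file and the sysctl file under ROOT
# (pass / on a real host, or a scratch directory to preview the result).
write_k8s_kernel_config() {
    root=${1%/}
    mkdir -p "$root/etc/modules-load.d" "$root/etc/sysctl.d"
    # systemd-modules-load reads this directory at boot, one module name per line
    printf 'br_netfilter\n' > "$root/etc/modules-load.d/k8s.conf"
    cat > "$root/etc/sysctl.d/k8s.conf" <<EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
EOF
}

# Example (commented out; run as root):
# write_k8s_kernel_config / && modprobe br_netfilter && sysctl --system
```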

Disable the firewall

systemctl disable firewalld.service
systemctl stop firewalld.service

Configure the Docker repository

# Step 1: install the required system tools
yum install -y yum-utils device-mapper-persistent-data lvm2
# Step 2: add the repository
yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo
# Step 3: refresh the cache and install Docker CE
yum makecache fast
yum -y install docker-ce
# Step 4: start the Docker service
service docker start

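Schedule time synchronization with cron

Install ntpdate and add an hourly job with crontab -e (a minute field of 0 keeps the job from running every minute):

yum -y install ntpdate
crontab -e
crontab -l
0 */1 * * * /usr/sbin/ntpdate time.windows.com >/dev/null
systemctl restart crond.service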

Load the IPVS modules

cd /etc/sysconfig/modules/

cat /etc/sysconfig/modules/ipvs.modules

#!/bin/bash
ipvs_modules="ip_vs ip_vs_lc ip_vs_wlc ip_vs_rr ip_vs_wrr ip_vs_lblc ip_vs_lblcr ip_vs_dh ip_vs_sh ip_vs_nq ip_vs_sed ip_vs_ftp nf_conntrack"
for kernel_module in ${ipvs_modules}; do
  # Load a module only if modinfo can find it for the running kernel
  /sbin/modinfo -F filename ${kernel_module} > /dev/null 2>&1
  if [ $? -eq 0 ]; then
    /sbin/modprobe ${kernel_module}
  fi
done

chmod +x ipvs.modules

bash ipvs.modules

lsmod | grep ip_vs

ip_vs_ftp 13079 0

nf_nat 26787 1 ip_vs_ftp

ip_vs_sed 12519 0

ip_vs_nq 12516 0

ip_vs_sh 12688 0

ip_vs_dh 12688 0

ip_vs_lblcr 12922 0

ip_vs_lblc 12819 0

ip_vs_wrr 12697 0

ip_vs_rr 12600 0

ip_vs_wlc 12519 0

ip_vs_lc 12516 0

ip_vs 145497 22 ip_vs_dh,ip_vs_lc,ip_vs_nq,ip_vs_rr,ip_vs_sh,ip_vs_ftp,ip_vs_sed,ip_vs_wlc,ip_vs_wrr,ip_vs_lblcr,ip_vs_lblc

nf_conntrack 133095 2 ip_vs,nf_nat

libcrc32c 12644 4 xfs,ip_vs,nf_nat,nf_conntrack
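Rather than reading the lsmod listing by eye, the check can be automated. A small sketch, assuming the module names from the script above; `missing_modules` is a hypothetical helper, and modules compiled into the kernel (which lsmod does not list) would be reported as missing.

```shell
# missing_modules NAME...
# Reads `lsmod` output on stdin and prints each NAME that does not
# appear in the first (module name) column.
missing_modules() {
    loaded=$(awk '{ print $1 }')
    for m in "$@"; do
        printf '%s\n' "$loaded" | grep -qxF "$m" || printf '%s\n' "$m"
    done
}

# Example (commented out):
# lsmod | missing_modules ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack
```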

Configure a registry mirror and switch Docker's cgroup driver to systemd

cat /etc/docker/daemon.json

{
  "registry-mirrors": ["http://f1361db2.m.daocloud.io"],
  "exec-opts": ["native.cgroupdriver=systemd"]
}

Docker was already started in the previous section, so restart it to pick up daemon.json:

systemctl restart docker.service && systemctl enable docker.service

Configure the Kubernetes repository

cat <<EOF > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
enabled=1
gpgcheck=1
repo_gpgcheck=1
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF
setenforce 0

Install the packages needed to initialize Kubernetes

yum install -y kubelet-1.20.6 kubeadm-1.20.6 kubectl-1.20.6

Enable kubelet at boot

systemctl enable kubelet

Initialize the cluster with kubeadm (run on master-1)

kubeadm init --kubernetes-version=1.20.6 --apiserver-advertise-address=192.168.10.29 --image-repository registry.aliyuncs.com/google_containers --pod-network-cidr=10.244.0.0/16 --ignore-preflight-errors=SystemVerification

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.10.29:6443 --token dhwkd2.n73yo7rt3x6bsdm8 \
    --discovery-token-ca-cert-hash sha256:2a85fc7081360f0d61dbb9930800951a8aa7bca0163cff236ce5da9b8c35ad50

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Join the worker nodes to the cluster

Run on node-1 and node-2:

kubeadm join 192.168.10.29:6443 --token dhwkd2.n73yo7rt3x6bsdm8 \
    --discovery-token-ca-cert-hash sha256:2a85fc7081360f0d61dbb9930800951a8aa7bca0163cff236ce5da9b8c35ad50
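A join usually fails in a confusing way when the token or hash was truncated while copying. The sketch below only checks the shape of the two values (a bootstrap token is six characters, a dot, then sixteen characters from a-z0-9; the hash is `sha256:` followed by 64 hex digits). `valid_join_args` is a hypothetical helper and does not contact the cluster.

```shell
# valid_join_args TOKEN HASH
# Returns 0 when TOKEN matches [a-z0-9]{6}.[a-z0-9]{16} and HASH matches
# sha256:<64 lowercase hex digits>. This is a format check only.
valid_join_args() {
    printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$' &&
    printf '%s\n' "$2" | grep -Eq '^sha256:[0-9a-f]{64}$'
}

# Bootstrap tokens expire (24 hours by default). A fresh join command
# can be printed on master-1 with:
# kubeadm token create --print-join-command
```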

Check the cluster status

[root@master-1 modules]# kubectl get nodes
NAME       STATUS     ROLES                  AGE     VERSION
master-1   NotReady   control-plane,master   7m26s   v1.20.6
node-1     NotReady   <none>                 2m      v1.20.6
node-2     NotReady   <none>                 111s    v1.20.6

Label the worker nodes with a role

[root@master-1 modules]# kubectl label node node-1 node-role.kubernetes.io/worker=worker
node/node-1 labeled
[root@master-1 modules]# kubectl label node node-2 node-role.kubernetes.io/worker=worker
node/node-2 labeled
[root@master-1 modules]# kubectl get nodes
NAME       STATUS     ROLES                  AGE     VERSION
master-1   NotReady   control-plane,master   10m     v1.20.6
node-1     NotReady   worker                 4m51s   v1.20.6
node-2     NotReady   worker                 4m42s   v1.20.6

All nodes above are NotReady because no network plugin has been installed yet.
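When scripting the installation it can help to detect this state automatically. A minimal sketch: `not_ready_nodes` is a hypothetical filter over the text output of `kubectl get nodes`. It treats anything other than exactly `Ready` as not ready, so cordoned nodes (`Ready,SchedulingDisabled`) are listed too.

```shell
# not_ready_nodes
# Reads `kubectl get nodes` output on stdin and prints the name of every
# node whose STATUS column is not exactly "Ready". The header row is skipped.
not_ready_nodes() {
    awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Example (commented out):
# kubectl get nodes | not_ready_nodes
```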

Install the Kubernetes network plugin: Calico

kubectl apply -f https://docs.projectcalico.org/manifests/calico.yaml
[root@master-1 modules]# kubectl get nodes
NAME       STATUS   ROLES                  AGE     VERSION
master-1   Ready    control-plane,master   13m     v1.20.6
node-1     Ready    worker                 8m24s   v1.20.6
node-2     Ready    worker                 8m15s   v1.20.6

Test that a pod created in the cluster can reach the network

[root@master-1 modules]# kubectl run busybox --image busybox:1.28 --restart=Never --rm -it busybox -- sh
If you don't see a command prompt, try pressing enter.
/ # ping baidu.com
PING baidu.com (220.181.38.148): 56 data bytes
64 bytes from 220.181.38.148: seq=0 ttl=127 time=365.628 ms
64 bytes from 220.181.38.148: seq=1 ttl=127 time=384.185 ms
^C
--- baidu.com ping statistics ---
2 packets transmitted, 2 packets received, 0% packet loss
round-trip min/avg/max = 365.628/374.906/384.185 ms

Test that CoreDNS works

[root@master-1 modules]# kubectl run busybox --image busybox:1.28 --restart=Never --rm -it busybox -- sh
If you don't see a command prompt, try pressing enter.
/ # nslookup kubernetes.default.svc.cluster.local
Server:    10.96.0.10
Address 1: 10.96.0.10 kube-dns.kube-system.svc.cluster.local

Name:      kubernetes.default.svc.cluster.local
Address 1: 10.96.0.1 kubernetes.default.svc.cluster.local

Install the dashboard

[root@master-1 ~]# cat kubernetes-dashboard.yaml

# Copyright 2017 The Kubernetes Authors.
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
#     http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.

apiVersion: v1
kind: Namespace
metadata:
  name: kubernetes-dashboard

---

apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard

---

kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  ports:
    - port: 443
      targetPort: 8443
  selector:
    k8s-app: kubernetes-dashboard

---

apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-certs
  namespace: kubernetes-dashboard
type: Opaque

---

apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-csrf
  namespace: kubernetes-dashboard
type: Opaque
data:
  csrf: ""

---

apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-key-holder
  namespace: kubernetes-dashboard
type: Opaque

---

kind: ConfigMap
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-settings
  namespace: kubernetes-dashboard

---

kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
rules:
  # Allow Dashboard to get, update and delete Dashboard exclusive secrets.
  - apiGroups: [""]
    resources: ["secrets"]
    resourceNames: ["kubernetes-dashboard-key-holder", "kubernetes-dashboard-certs", "kubernetes-dashboard-csrf"]
    verbs: ["get", "update", "delete"]
  # Allow Dashboard to get and update 'kubernetes-dashboard-settings' config map.
  - apiGroups: [""]
    resources: ["configmaps"]
    resourceNames: ["kubernetes-dashboard-settings"]
    verbs: ["get", "update"]
  # Allow Dashboard to get metrics.
  - apiGroups: [""]
    resources: ["services"]
    resourceNames: ["heapster", "dashboard-metrics-scraper"]
    verbs: ["proxy"]
  - apiGroups: [""]
    resources: ["services/proxy"]
    resourceNames: ["heapster", "http:heapster:", "https:heapster:", "dashboard-metrics-scraper", "http:dashboard-metrics-scraper"]
    verbs: ["get"]

---

kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
rules:
  # Allow Metrics Scraper to get metrics from the Metrics server
  - apiGroups: ["metrics.k8s.io"]
    resources: ["pods", "nodes"]
    verbs: ["get", "list", "watch"]

---

apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: kubernetes-dashboard
subjects:
  - kind: ServiceAccount
    name: kubernetes-dashboard
    namespace: kubernetes-dashboard

---

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: kubernetes-dashboard
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: kubernetes-dashboard
subjects:
  - kind: ServiceAccount
    name: kubernetes-dashboard
    namespace: kubernetes-dashboard

---

kind: Deployment
apiVersion: apps/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      k8s-app: kubernetes-dashboard
  template:
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
    spec:
      containers:
        - name: kubernetes-dashboard
          image: kubernetesui/dashboard:v2.0.0-beta8
          imagePullPolicy: IfNotPresent
          ports:
            - containerPort: 8443
              protocol: TCP
          args:
            - --auto-generate-certificates
            - --namespace=kubernetes-dashboard
            # Uncomment the following line to manually specify Kubernetes API server Host
            # If not specified, Dashboard will attempt to auto discover the API server and connect
            # to it. Uncomment only if the default does not work.
            # - --apiserver-host=http://my-address:port
          volumeMounts:
            - name: kubernetes-dashboard-certs
              mountPath: /certs
              # Create on-disk volume to store exec logs
            - mountPath: /tmp
              name: tmp-volume
          livenessProbe:
            httpGet:
              scheme: HTTPS
              path: /
              port: 8443
            initialDelaySeconds: 30
            timeoutSeconds: 30
          securityContext:
            allowPrivilegeEscalation: false
            readOnlyRootFilesystem: true
            runAsUser: 1001
            runAsGroup: 2001
      volumes:
        - name: kubernetes-dashboard-certs
          secret:
            secretName: kubernetes-dashboard-certs
        - name: tmp-volume
          emptyDir: {}
      serviceAccountName: kubernetes-dashboard
      nodeSelector:
        "beta.kubernetes.io/os": linux
      # Comment the following tolerations if Dashboard must not be deployed on master
      tolerations:
        - key: node-role.kubernetes.io/master
          effect: NoSchedule

---

kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: dashboard-metrics-scraper
  name: dashboard-metrics-scraper
  namespace: kubernetes-dashboard
spec:
  ports:
    - port: 8000
      targetPort: 8000
  selector:
    k8s-app: dashboard-metrics-scraper

---

kind: Deployment
apiVersion: apps/v1
metadata:
  labels:
    k8s-app: dashboard-metrics-scraper
  name: dashboard-metrics-scraper
  namespace: kubernetes-dashboard
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      k8s-app: dashboard-metrics-scraper
  template:
    metadata:
      labels:
        k8s-app: dashboard-metrics-scraper
      annotations:
        seccomp.security.alpha.kubernetes.io/pod: 'runtime/default'
    spec:
      containers:
        - name: dashboard-metrics-scraper
          image: kubernetesui/metrics-scraper:v1.0.1
          imagePullPolicy: IfNotPresent
          ports:
            - containerPort: 8000
              protocol: TCP
          livenessProbe:
            httpGet:
              scheme: HTTP
              path: /
              port: 8000
            initialDelaySeconds: 30
            timeoutSeconds: 30
          volumeMounts:
            - mountPath: /tmp
              name: tmp-volume
          securityContext:
            allowPrivilegeEscalation: false
            readOnlyRootFilesystem: true
            runAsUser: 1001
            runAsGroup: 2001
      serviceAccountName: kubernetes-dashboard
      nodeSelector:
        "beta.kubernetes.io/os": linux
      # Comment the following tolerations if Dashboard must not be deployed on master
      tolerations:
        - key: node-role.kubernetes.io/master
          effect: NoSchedule
      volumes:
        - name: tmp-volume
          emptyDir: {}

[root@master-1 ~]# kubectl apply -f kubernetes-dashboard.yaml

namespace/kubernetes-dashboard created

serviceaccount/kubernetes-dashboard created

service/kubernetes-dashboard created

secret/kubernetes-dashboard-certs created

secret/kubernetes-dashboard-csrf created

secret/kubernetes-dashboard-key-holder created

configmap/kubernetes-dashboard-settings created

role.rbac.authorization.k8s.io/kubernetes-dashboard created

clusterrole.rbac.authorization.k8s.io/kubernetes-dashboard created

rolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created

clusterrolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created

deployment.apps/kubernetes-dashboard created

service/dashboard-metrics-scraper created

deployment.apps/dashboard-metrics-scraper created

Check the dashboard status

[root@master-1 ~]# kubectl get pods -n kubernetes-dashboard
NAME                                         READY   STATUS    RESTARTS   AGE
dashboard-metrics-scraper-7445d59dfd-kldp7   1/1     Running   0          38h
kubernetes-dashboard-54f5b6dc4b-4v2np        1/1     Running   0          38h

Check the dashboard front-end service

[root@master-1 ~]# kubectl get svc -n kubernetes-dashboard
NAME                        TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)    AGE
dashboard-metrics-scraper   ClusterIP   10.108.226.98   <none>        8000/TCP   38h
kubernetes-dashboard        ClusterIP   10.108.44.41    <none>        443/TCP    38h

Change the service type to NodePort

kubectl edit svc kubernetes-dashboard -n kubernetes-dashboard

# Please edit the object below. Lines beginning with a '#' will be ignored,
# and an empty file will abort the edit. If an error occurs while saving this file will be
# reopened with the relevant failures.
#
apiVersion: v1
kind: Service
metadata:
  annotations:
    kubectl.kubernetes.io/last-applied-configuration: |
      {"apiVersion":"v1","kind":"Service","metadata":{"annotations":{},"labels":{"k8s-app":"kubernetes-dashboard"},"name":"kubernetes-dashboard","namespace":"kubernetes-dashboard"},"spec":{"ports":[{"port":443,"targetPort":8443}],"selector":{"k8s-app":"kubernetes-dashboard"}}}
  creationTimestamp: "2021-12-15T14:27:58Z"
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
  resourceVersion: "2903"
  uid: 94758740-9210-46bb-939b-2b742e9b01e6
spec:
  clusterIP: 10.108.44.41
  clusterIPs:
  - 10.108.44.41
  ports:
  - port: 443
    protocol: TCP
    targetPort: 8443
  selector:
    k8s-app: kubernetes-dashboard
  sessionAffinity: None
  #type: ClusterIP
  type: NodePort
status:
  loadBalancer: {}

Save, exit, and check the service again

[root@master-1 ~]# kubectl get svc -n kubernetes-dashboard
NAME                        TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)         AGE
dashboard-metrics-scraper   ClusterIP   10.108.226.98   <none>        8000/TCP        38h
kubernetes-dashboard        NodePort    10.108.44.41    <none>        443:32341/TCP   38h
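The node port is assigned by the cluster, so it will differ from 32341 on another installation. As a sketch, `node_port` below is a hypothetical helper that pulls the port out of a PORT(S) value; the commented commands show non-interactive alternatives to `kubectl edit` and to reading the table by eye.

```shell
# node_port PORTS
# Prints the node port from a PORT(S) value such as "443:32341/TCP".
# Prints nothing when the value has no node port (for example "8000/TCP").
node_port() {
    printf '%s\n' "$1" | sed -n 's/^[0-9]*:\([0-9]*\)\/.*$/\1/p'
}

# Non-interactive alternatives (commented out):
# kubectl patch svc kubernetes-dashboard -n kubernetes-dashboard -p '{"spec":{"type":"NodePort"}}'
# kubectl get svc kubernetes-dashboard -n kubernetes-dashboard -o jsonpath='{.spec.ports[0].nodePort}'
```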

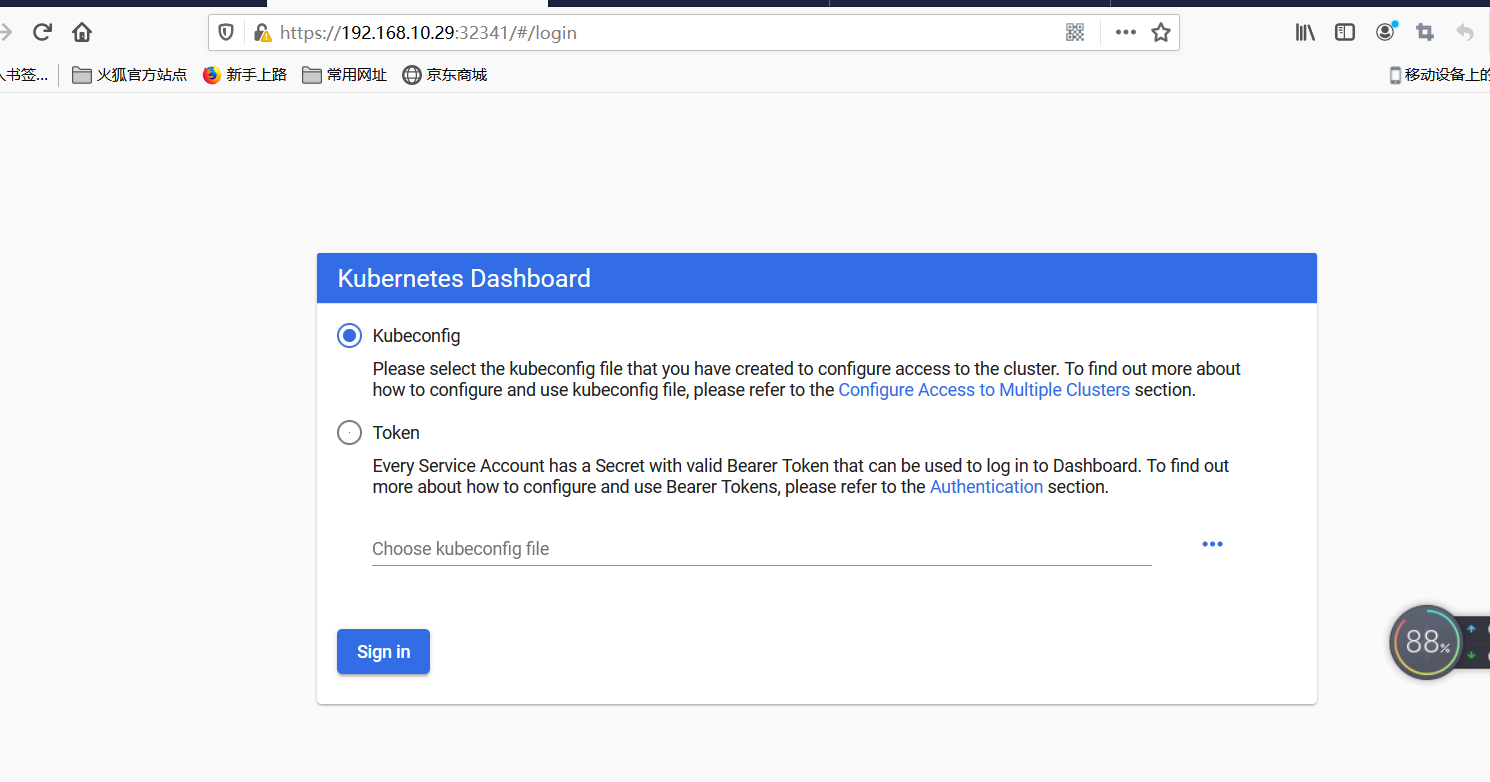

Open https://192.168.10.29:32341/#/login in a browser.

Log in to the dashboard with a token

Create an administrator token that can view every namespace and manage all resource objects:

[root@master-1 ~]# kubectl create clusterrolebinding dashboard-cluster-admin --clusterrole=cluster-admin --serviceaccount=kubernetes-dashboard:kubernetes-dashboard

clusterrolebinding.rbac.authorization.k8s.io/dashboard-cluster-admin created

[root@master-1 ~]# kubectl get secret -n kubernetes-dashboard

NAME TYPE DATA AGE

default-token-b8sqn kubernetes.io/service-account-token 3 38h

kubernetes-dashboard-certs Opaque 0 38h

kubernetes-dashboard-csrf Opaque 1 38h

kubernetes-dashboard-key-holder Opaque 2 38h

kubernetes-dashboard-token-jh4sz kubernetes.io/service-account-token 3 38h

[root@master-1 ~]# kubectl describe secret kubernetes-dashboard-token-jh4sz -n kubernetes-dashboard

Name: kubernetes-dashboard-token-jh4sz

Namespace: kubernetes-dashboard

Labels: <none>

Annotations: kubernetes.io/service-account.name: kubernetes-dashboard

kubernetes.io/service-account.uid: 6a0320fb-d304-43ca-898b-59156d2ee4a9

Type: kubernetes.io/service-account-token

Data

====

token: eyJhbGciOiJSUzI1NiIsImtpZCI6InlJM3BCZ2hXSGJNZVhMekFPaDlFRnJYVlhfeHpOWXVyODBOdlhrQlBCSXcifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJrdWJlcm5ldGVzLWRhc2hib2FyZC10b2tlbi1qaDRzeiIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50Lm5hbWUiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50LnVpZCI6IjZhMDMyMGZiLWQzMDQtNDNjYS04OThiLTU5MTU2ZDJlZTRhOSIsInN1YiI6InN5c3RlbTpzZXJ2aWNlYWNjb3VudDprdWJlcm5ldGVzLWRhc2hib2FyZDprdWJlcm5ldGVzLWRhc2hib2FyZCJ9.FMp3-Vudcl4UeTfZkNVDfrc3kGVQjKG1T6b3cufZG1T5t7BFMIhtBIUm2WeSlTy3tCrh95u-Ei5O-S_O6UolUAjZwDm4ncsjknBHenubAq_A9meAT9Jzvsp7g2pxpXobnNcF4_jJTt0FhQtSNwCzA7IgzxPwZLFOdY88p0YED0mpLk5wODZBnrmsj9rLNZ-u_vprFEnUhrJ5nRxk4W9-sHLFKzyZhyu2T4hf2E3Ahi0FzVT0kMBpg9lPcI02NTv4zq1GPe4y87IbzCP9Uwz8iJNkTQCB-DS8WWT_hs2Y0CUhgQimp39qLfoLX6Eqg2IG2vk0atLZFL5lft403-ONZA

ca.crt: 1066 bytes

namespace: 20 bytes

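Note: a token copied from the terminal contains line breaks. Remove them before pasting it into the login page, so that the token is a single line: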

eyJhbGciOiJSUzI1NiIsImtpZCI6InlJM3BCZ2hXSGJNZVhMekFPaDlFRnJYVlhfeHpOWXVyODBOdlhrQlBCSXcifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJrdWJlcm5ldGVzLWRhc2hib2FyZC10b2tlbi1qaDRzeiIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50Lm5hbWUiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50LnVpZCI6IjZhMDMyMGZiLWQzMDQtNDNjYS04OThiLTU5MTU2ZDJlZTRhOSIsInN1YiI6InN5c3RlbTpzZXJ2aWNlYWNjb3VudDprdWJlcm5ldGVzLWRhc2hib2FyZDprdWJlcm5ldGVzLWRhc2hib2FyZCJ9.FMp3-Vudcl4UeTfZkNVDfrc3kGVQjKG1T6b3cufZG1T5t7BFMIhtBIUm2WeSlTy3tCrh95u-Ei5O-S_O6UolUAjZwDm4ncsjknBHenubAq_A9meAT9Jzvsp7g2pxpXobnNcF4_jJTt0FhQtSNwCzA7IgzxPwZLFOdY88p0YED0mpLk5wODZBnrmsj9rLNZ-u_vprFEnUhrJ5nRxk4W9-sHLFKzyZhyu2T4hf2E3Ahi0FzVT0kMBpg9lPcI02NTv4zq1GPe4y87IbzCP9Uwz8iJNkTQCB-DS8WWT_hs2Y0CUhgQimp39qLfoLX6Eqg2IG2vk0atLZFL5lft403-ONZA
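Stripping the line breaks by hand is error-prone with a token this long. A small sketch: `clean_token` and `looks_like_jwt` are hypothetical helpers that remove all whitespace from pasted text and check that the result has the three dot-separated parts a service account token is expected to have.

```shell
# clean_token
# Reads a pasted token on stdin and prints it with all whitespace
# (spaces, tabs, line breaks) removed.
clean_token() {
    tr -d '[:space:]'
}

# looks_like_jwt TOKEN
# Returns 0 when TOKEN consists of three non-empty, dot-separated parts
# made of base64url characters. It does not verify the signature.
looks_like_jwt() {
    printf '%s\n' "$1" | grep -Eq '^[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+\.[A-Za-z0-9_-]+$'
}

# Example (commented out): print the token on one line straight from the secret
# kubectl get secret kubernetes-dashboard-token-jh4sz -n kubernetes-dashboard -o jsonpath='{.data.token}' | base64 -d | clean_token
```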

Log in to the dashboard with a kubeconfig file

[root@master-1 ~]# cd /etc/kubernetes/pki

[root@master-1 pki]# ls

apiserver.crt apiserver-etcd-client.key apiserver-kubelet-client.crt ca.crt etcd front-proxy-ca.key front-proxy-client.key sa.pub

apiserver-etcd-client.crt apiserver.key apiserver-kubelet-client.key ca.key front-proxy-ca.crt front-proxy-client.crt sa.key

[root@master-1 pki]# kubectl config set-cluster kubernetes --certificate-authority=./ca.crt --server="https://192.168.10.29:6443" --embed-certs=true --kubeconfig=/root/dashboard-admin.conf

Cluster "kubernetes" set.

[root@master-1 pki]# vim /root/dashboard-admin.conf

[root@master-1 pki]# DEF_NS_ADMIN_TOKEN=$(kubectl get secret kubernetes-dashboard-token-jh4sz -n kubernetes-dashboard -o jsonpath={.data.token}|base64 -d)

[root@master-1 pki]# kubectl config set-credentials dashboard-admin --token=$DEF_NS_ADMIN_TOKEN --kubeconfig=/root/dashboard-admin.conf

User "dashboard-admin" set.

[root@master-1 pki]# kubectl config set-context dashboard-admin@kubernetes --cluster=kubernetes --user=dashboard-admin --kubeconfig=/root/dashboard-admin.conf

Context "dashboard-admin@kubernetes" created.

[root@master-1 pki]# kubectl config use-context dashboard-admin@kubernetes --kubeconfig=/root/dashboard-admin.conf

Switched to context "dashboard-admin@kubernetes".

[root@master-1 pki]# cat /root/dashboard-admin.conf

apiVersion: v1
clusters:
- cluster:
    certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUM1ekNDQWMrZ0F3SUJBZ0lCQURBTkJna3Foa2lHOXcwQkFRc0ZBREFWTVJNd0VRWURWUVFERXdwcmRXSmwKY201bGRHVnpNQjRYRFRJeE1USXhOVEUwTURFMU1sb1hEVE14TVRJeE16RTBNREUxTWxvd0ZURVRNQkVHQTFVRQpBeE1LYTNWaVpYSnVaWFJsY3pDQ0FTSXdEUVlKS29aSWh2Y05BUUVCQlFBRGdnRVBBRENDQVFvQ2dnRUJBS3hKCmhwMVR0VDlVSmttb2VVYy82YkRNRFR5emQ1bTZEVFVGVGtad3ZrVVJGbW9pMGlzakhZQmp6dWRFeDNnRmlCL08KTGtrOW5tKzMrVVg1TjRoYU1ta3NsdytFTGJpTEhTM3ZvZExRWElOVVJQSVpNREpiQTRJVWZLeVI3MlZFT0ZlKwoyYVVOZDBsK2I0bElvTTBxRkpzb25WVE5mdXBlc1pCVFQ0cXF3cVZzRzc3ZHpSeWR4Y0ZLeHVFd2JEd1R2K05oCmZRWDk4SndjcGdtem41VDVHQVhSeWJ4WVhkYmd4cjFHNnBXOFlMcExuU3VpTFNoUHB4WGQ2cEZzMG1zZTgyckEKcDJGYm1jZDZRTTVvNTd5K2dXMDNNTU5pSElnOXVIRWdvQm55MkI4NW1TSjFqNmFEQlBIRkpOeU80aVRJb0V2VQpxeVZxZjhmN3c4dWp3T3RhN3FNQ0F3RUFBYU5DTUVBd0RnWURWUjBQQVFIL0JBUURBZ0trTUE4R0ExVWRFd0VCCi93UUZNQU1CQWY4d0hRWURWUjBPQkJZRUZIU0xHTUtBSGU0NXdIU3NqSW9jVEJoRDhGWnZNQTBHQ1NxR1NJYjMKRFFFQkN3VUFBNElCQVFDb0xVUGJwWDRmN1N6VWFxS1RweDF4Q2RZSTRQRnVFY0lVZ0pHWndLYkdzVHZqdWRmRgpSTkMxTXgyV0VyejhmbXFYRmRKQmVidFp1b0ovMCtFd3FiQ1FNMUI0UWdmMHdYSkF0elJlbTlTbUJ0dUlJbGI5Ci9iVEw4RnBueWFuaEZ1eFRQZFBzTGc0VWJPaEZCUVo1V2hYNEJOWnIwelRoK2FZeEpuUTg0c2lxbktRVStwMWkKYUx6Ky9NekVocTMvYnF5NDJTdnVsaW1iaXF3VDN5VDhDSmRwcEhjZ0F0emtweWw1eW1PdUdQK0F5blR0Y0svZAp2Ky9vUVdJODRxV2tzeHpxYUxNdGpFK1psaW1iMGdGV1ZsM1BRUFhZSTRGTFFQNDVBU2JoQUxpZmswYjNUNEZRCmh5M2pCS0YxTUtGYUZyUm0zUkl3SmRJTVN3WnhISVJCVm0vYgotLS0tLUVORCBDRVJUSUZJQ0FURS0tLS0tCg==
    server: https://192.168.10.29:6443
  name: kubernetes
contexts:
- context:
    cluster: kubernetes
    user: dashboard-admin
  name: dashboard-admin@kubernetes
current-context: dashboard-admin@kubernetes
kind: Config
preferences: {}
users:
- name: dashboard-admin
  user:
    token: eyJhbGciOiJSUzI1NiIsImtpZCI6InlJM3BCZ2hXSGJNZVhMekFPaDlFRnJYVlhfeHpOWXVyODBOdlhrQlBCSXcifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJrdWJlcm5ldGVzLWRhc2hib2FyZC10b2tlbi1qaDRzeiIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50Lm5hbWUiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50LnVpZCI6IjZhMDMyMGZiLWQzMDQtNDNjYS04OThiLTU5MTU2ZDJlZTRhOSIsInN1YiI6InN5c3RlbTpzZXJ2aWNlYWNjb3VudDprdWJlcm5ldGVzLWRhc2hib2FyZDprdWJlcm5ldGVzLWRhc2hib2FyZCJ9.FMp3-Vudcl4UeTfZkNVDfrc3kGVQjKG1T6b3cufZG1T5t7BFMIhtBIUm2WeSlTy3tCrh95u-Ei5O-S_O6UolUAjZwDm4ncsjknBHenubAq_A9meAT9Jzvsp7g2pxpXobnNcF4_jJTt0FhQtSNwCzA7IgzxPwZLFOdY88p0YED0mpLk5wODZBnrmsj9rLNZ-u_vprFEnUhrJ5nRxk4W9-sHLFKzyZhyu2T4hf2E3Ahi0FzVT0kMBpg9lPcI02NTv4zq1GPe4y87IbzCP9Uwz8iJNkTQCB-DS8WWT_hs2Y0CUhgQimp39qLfoLX6Eqg2IG2vk0atLZFL5lft403-ONZA

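Install the metrics-server component

Note: on Kubernetes 1.17 and later, add --enable-aggregator-routing=true to the kube-apiserver flags before deploying metrics-server (on 1.16 it can reportedly be left out). The flag makes the aggregator route requests to endpoint IPs rather than the cluster IP. The aggregation layer itself lets you extend the Kubernetes API without modifying the Kubernetes core code.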

[root@master-1 ~]# cat /etc/kubernetes/manifests/kube-apiserver.yaml

apiVersion: v1
kind: Pod
metadata:
  annotations:
    kubeadm.kubernetes.io/kube-apiserver.advertise-address.endpoint: 192.168.10.29:6443
  creationTimestamp: null
  labels:
    component: kube-apiserver
    tier: control-plane
  name: kube-apiserver
  namespace: kube-system
spec:
  containers:
  - command:
    - kube-apiserver
    - --advertise-address=192.168.10.29
    - --allow-privileged=true
    - --authorization-mode=Node,RBAC
    - --client-ca-file=/etc/kubernetes/pki/ca.crt
    - --enable-admission-plugins=NodeRestriction
    - --enable-bootstrap-token-auth=true
    - --enable-aggregator-routing=true # add this flag
    - --etcd-cafile=/etc/kubernetes/pki/etcd/ca.crt
    - --etcd-certfile=/etc/kubernetes/pki/apiserver-etcd-client.crt
    - --etcd-keyfile=/etc/kubernetes/pki/apiserver-etcd-client.key
    - --etcd-servers=https://127.0.0.1:2379
    - --insecure-port=0
    - --kubelet-client-certificate=/etc/kubernetes/pki/apiserver-kubelet-client.crt
    - --kubelet-client-key=/etc/kubernetes/pki/apiserver-kubelet-client.key
    - --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname
    - --proxy-client-cert-file=/etc/kubernetes/pki/front-proxy-client.crt
    - --proxy-client-key-file=/etc/kubernetes/pki/front-proxy-client.key
    - --requestheader-allowed-names=front-proxy-client
    - --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.crt
    - --requestheader-extra-headers-prefix=X-Remote-Extra-
    - --requestheader-group-headers=X-Remote-Group
    - --requestheader-username-headers=X-Remote-User
    - --secure-port=6443
    - --service-account-issuer=https://kubernetes.default.svc.cluster.local
    - --service-account-key-file=/etc/kubernetes/pki/sa.pub
    - --service-account-signing-key-file=/etc/kubernetes/pki/sa.key
    - --service-cluster-ip-range=10.96.0.0/12
    - --tls-cert-file=/etc/kubernetes/pki/apiserver.crt
    - --tls-private-key-file=/etc/kubernetes/pki/apiserver.key
    image: registry.aliyuncs.com/google_containers/kube-apiserver:v1.20.6
    imagePullPolicy: IfNotPresent
    livenessProbe:
      failureThreshold: 8
      httpGet:
        host: 192.168.10.29
        path: /livez
        port: 6443
        scheme: HTTPS
      initialDelaySeconds: 10
      periodSeconds: 10
      timeoutSeconds: 15
    name: kube-apiserver
    readinessProbe:
      failureThreshold: 3
      httpGet:
        host: 192.168.10.29
        path: /readyz
        port: 6443
        scheme: HTTPS
      periodSeconds: 1
      timeoutSeconds: 15
    resources:
      requests:
        cpu: 250m
    startupProbe:
      failureThreshold: 24
      httpGet:
        host: 192.168.10.29
        path: /livez
        port: 6443
        scheme: HTTPS
      initialDelaySeconds: 10
      periodSeconds: 10
      timeoutSeconds: 15
    volumeMounts:
    - mountPath: /etc/ssl/certs
      name: ca-certs
      readOnly: true
    - mountPath: /etc/pki
      name: etc-pki
      readOnly: true
    - mountPath: /etc/kubernetes/pki
      name: k8s-certs
      readOnly: true
  hostNetwork: true
  priorityClassName: system-node-critical
  volumes:
  - hostPath:
      path: /etc/ssl/certs
      type: DirectoryOrCreate
    name: ca-certs
  - hostPath:
      path: /etc/pki
      type: DirectoryOrCreate
    name: etc-pki
  - hostPath:
      path: /etc/kubernetes/pki
      type: DirectoryOrCreate
    name: k8s-certs
status: {}

Reload the apiserver configuration

The kubelet watches /etc/kubernetes/manifests and recreates the kube-apiserver-master-1 static pod on its own once the file is saved, so the kubectl apply below is not required. It creates an extra pod named kube-apiserver that ends up in CrashLoopBackOff and has to be deleted afterwards:

[root@master-1 ~]# kubectl apply -f /etc/kubernetes/manifests/kube-apiserver.yaml
pod/kube-apiserver created
[root@master-1 ~]# kubectl get pods -n kube-system
NAME                                       READY   STATUS             RESTARTS   AGE
calico-kube-controllers-558995777d-7g9x7   1/1     Running            0          2d12h
calico-node-2qrpn                          1/1     Running            0          2d12h
calico-node-6zh2p                          1/1     Running            0          2d12h
calico-node-thfh7                          1/1     Running            0          2d12h
coredns-7f89b7bc75-jbl2h                   1/1     Running            0          2d12h
coredns-7f89b7bc75-nr24n                   1/1     Running            0          2d12h
etcd-master-1                              1/1     Running            0          2d12h
kube-apiserver                             0/1     CrashLoopBackOff   1          12s
kube-apiserver-master-1                    1/1     Running            0          2m1s
kube-controller-manager-master-1           1/1     Running            1          2d12h
kube-proxy-5h29b                           1/1     Running            0          2d12h
kube-proxy-thxv4                           1/1     Running            0          2d12h
kube-proxy-txm52                           1/1     Running            0          2d12h
kube-scheduler-master-1                    1/1     Running            1          2d12h
[root@master-1 ~]# kubectl delete pods kube-apiserver -n kube-system   # delete the pod stuck in CrashLoopBackOff
pod "kube-apiserver" deleted
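Before moving on to metrics-server, it may be worth confirming that the flag really is in the manifest. A minimal sketch: `has_apiserver_flag` is a hypothetical helper that looks for a list item beginning with the given flag text in a manifest file. It is a plain text search, not a YAML parser.

```shell
# has_apiserver_flag MANIFEST FLAG
# Returns 0 when MANIFEST contains a "- FLAG" list item (fixed-string match).
has_apiserver_flag() {
    grep -qF -- "- $2" "$1"
}

# Example (commented out; run on master-1):
# has_apiserver_flag /etc/kubernetes/manifests/kube-apiserver.yaml --enable-aggregator-routing=true && echo "flag present"
```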

Review metrics.yaml and apply it

[root@master-1 ~]# cat metrics.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: metrics-server:system:auth-delegator

labels:

kubernetes.io/cluster-service: "true"

addonmanager.kubernetes.io/mode: Reconcile

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: system:auth-delegator

subjects:

- kind: ServiceAccount

name: metrics-server

namespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1

kind: RoleBinding

metadata:

name: metrics-server-auth-reader

namespace: kube-system

labels:

kubernetes.io/cluster-service: "true"

addonmanager.kubernetes.io/mode: Reconcile

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: Role

name: extension-apiserver-authentication-reader

subjects:

- kind: ServiceAccount

name: metrics-server

namespace: kube-system

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: metrics-server

namespace: kube-system

labels:

kubernetes.io/cluster-service: "true"

addonmanager.kubernetes.io/mode: Reconcile

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

name: system:metrics-server

labels:

kubernetes.io/cluster-service: "true"

addonmanager.kubernetes.io/mode: Reconcile

rules:

- apiGroups:

- ""

resources:

- pods

- nodes

- nodes/stats

- namespaces

verbs:

- get

- list

- watch

- apiGroups:

- "extensions"

resources:

- deployments

verbs:

- get

- list

- update

- watch

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: system:metrics-server

labels:

kubernetes.io/cluster-service: "true"

addonmanager.kubernetes.io/mode: Reconcile

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: system:metrics-server

subjects:

- kind: ServiceAccount

name: metrics-server

namespace: kube-system

---

apiVersion: v1

kind: ConfigMap

metadata:

name: metrics-server-config

namespace: kube-system

labels:

kubernetes.io/cluster-service: "true"

addonmanager.kubernetes.io/mode: EnsureExists

data:

NannyConfiguration: |-

apiVersion: nannyconfig/v1alpha1

kind: NannyConfiguration

---

apiVersion: apps/v1

kind: Deployment

metadata:

name: metrics-server

namespace: kube-system

labels:

k8s-app: metrics-server

kubernetes.io/cluster-service: "true"

addonmanager.kubernetes.io/mode: Reconcile

version: v0.3.6

spec:

selector:

matchLabels:

k8s-app: metrics-server

version: v0.3.6

template:

metadata:

name: metrics-server

labels:

k8s-app: metrics-server

version: v0.3.6

annotations:

scheduler.alpha.kubernetes.io/critical-pod: ''

seccomp.security.alpha.kubernetes.io/pod: 'docker/default'

spec:

priorityClassName: system-cluster-critical

serviceAccountName: metrics-server

containers:

- name: metrics-server

image: k8s.gcr.io/metrics-server-amd64:v0.3.6

imagePullPolicy: IfNotPresent

command:

- /metrics-server

- --metric-resolution=30s

- --kubelet-preferred-address-types=InternalIP

- --kubelet-insecure-tls

ports:

- containerPort: 443

name: https

protocol: TCP

- name: metrics-server-nanny

image: k8s.gcr.io/addon-resizer:1.8.4

imagePullPolicy: IfNotPresent

resources:

limits:

cpu: 100m

memory: 300Mi

requests:

cpu: 5m

memory: 50Mi

env:

- name: MY_POD_NAME

valueFrom:

fieldRef:

fieldPath: metadata.name

- name: MY_POD_NAMESPACE

valueFrom:

fieldRef:

fieldPath: metadata.namespace

volumeMounts:

- name: metrics-server-config-volume

mountPath: /etc/config

command:

- /pod_nanny

- --config-dir=/etc/config

- --cpu=300m

- --extra-cpu=20m

- --memory=200Mi

- --extra-memory=10Mi

- --threshold=5

- --deployment=metrics-server

- --container=metrics-server

- --poll-period=300000

- --estimator=exponential

- --minClusterSize=2

volumes:

- name: metrics-server-config-volume

configMap:

name: metrics-server-config

tolerations:

- key: "CriticalAddonsOnly"

operator: "Exists"

- key: node-role.kubernetes.io/master

effect: NoSchedule

---

apiVersion: v1
kind: Service
metadata:
  name: metrics-server
  namespace: kube-system
  labels:
    addonmanager.kubernetes.io/mode: Reconcile
    kubernetes.io/cluster-service: "true"
    kubernetes.io/name: "Metrics-server"
spec:
  selector:
    k8s-app: metrics-server
  ports:
  - port: 443
    protocol: TCP
    targetPort: https

---

apiVersion: apiregistration.k8s.io/v1
kind: APIService
metadata:
  name: v1beta1.metrics.k8s.io
  labels:
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile
spec:
  service:
    name: metrics-server
    namespace: kube-system
  group: metrics.k8s.io
  version: v1beta1
  insecureSkipTLSVerify: true
  groupPriorityMinimum: 100
  versionPriority: 100

[root@master-1 ~]# kubectl apply -f metrics.yaml
clusterrolebinding.rbac.authorization.k8s.io/metrics-server:system:auth-delegator created
rolebinding.rbac.authorization.k8s.io/metrics-server-auth-reader created
serviceaccount/metrics-server created
clusterrole.rbac.authorization.k8s.io/system:metrics-server created
clusterrolebinding.rbac.authorization.k8s.io/system:metrics-server created
configmap/metrics-server-config created
deployment.apps/metrics-server created
service/metrics-server created
apiservice.apiregistration.k8s.io/v1beta1.metrics.k8s.io created

[root@master-1 ~]# kubectl get pods -n kube-system | grep metrics
metrics-server-6595f875d6-jhf9p   2/2   Running   0   14s
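A Running pod does not by itself guarantee that kubectl top will work: the aggregated API registered by the APIService v1beta1.metrics.k8s.io also has to report Available. The following is a minimal sketch of such a check; the function name metrics_api_available is a hypothetical helper, not part of kubectl or metrics-server.

```shell
# Hypothetical helper: succeeds only when the metrics APIService reports
# the condition Available=True. Assumes kubectl is configured for this cluster.
metrics_api_available() {
  local status
  status="$(kubectl get apiservice v1beta1.metrics.k8s.io \
    -o jsonpath='{.status.conditions[?(@.type=="Available")].status}')" || return 1
  [ "$status" = "True" ]
}

# Usage (run on master-1):
#   kubectl -n kube-system rollout status deployment/metrics-server --timeout=120s
#   metrics_api_available && echo "metrics API is ready"
```

If the check fails shortly after applying metrics.yaml, waiting for the first scrape interval and retrying is usually enough.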

Test the kubectl top command

[root@master-1 ~]# kubectl top pods -n kube-system
NAME                                       CPU(cores)   MEMORY(bytes)
calico-kube-controllers-558995777d-7g9x7   2m           16Mi
calico-node-2qrpn                          33m          81Mi
calico-node-6zh2p                          23m          94Mi
calico-node-thfh7                          40m          108Mi
coredns-7f89b7bc75-jbl2h                   4m           25Mi
coredns-7f89b7bc75-nr24n                   3m           9Mi
etcd-master-1                              14m          122Mi
kube-apiserver-master-1                    112m         380Mi
kube-controller-manager-master-1           21m          48Mi
kube-proxy-5h29b                           1m           29Mi
kube-proxy-thxv4                           1m           17Mi
kube-proxy-txm52                           1m           15Mi
kube-scheduler-master-1                    3m           20Mi
metrics-server-6595f875d6-jhf9p            1m           16Mi

[root@master-1 ~]# kubectl top node
NAME       CPU(cores)   CPU%   MEMORY(bytes)   MEMORY%
master-1   181m         9%     2049Mi          55%
node-1     81m          4%     1652Mi          45%
node-2     97m          4%     1610Mi          43%
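To pick the heaviest pods out of this output, the table can be post-processed with standard text tools. The sketch below assumes every value in the MEMORY column carries the Mi suffix, as it does in the output above; top_mem_pods is a hypothetical helper name.

```shell
# Hypothetical helper: read `kubectl top pods` output on stdin and print the
# N pods with the highest memory usage (default N=3).
# Assumes all memory values end in "Mi".
top_mem_pods() {
  local count="${1:-3}"
  awk 'NR > 1 { mem = $3; sub(/Mi$/, "", mem); print mem, $1 }' \
    | sort -rn \
    | head -n "$count" \
    | awk '{ print $2, $1 "Mi" }'
}

# Usage (run on master-1):
#   kubectl top pods -n kube-system | top_mem_pods 3
```

Applied to the listing above, this would rank kube-apiserver-master-1 first, followed by etcd-master-1 and calico-node-thfh7.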

Make the scheduler and controller-manager ports listen on the host's IP

[root@master-1 ~]# kubectl get cs
Warning: v1 ComponentStatus is deprecated in v1.19+
NAME                 STATUS      MESSAGE                                                                                       ERROR
scheduler            Unhealthy   Get "http://127.0.0.1:10251/healthz": dial tcp 127.0.0.1:10251: connect: connection refused
controller-manager   Unhealthy   Get "http://127.0.0.1:10252/healthz": dial tcp 127.0.0.1:10252: connect: connection refused
etcd-0               Healthy     {"health":"true"}

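Since v1.19, ports 10251 and 10252 are bound to 127.0.0.1 by default. If you want to monitor these components with Prometheus, it cannot scrape any data from them, so bind the ports to the host's IP instead.

This can be done as follows: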

vim /etc/kubernetes/manifests/kube-scheduler.yaml

Make the following changes:

Change --bind-address=127.0.0.1 to --bind-address=192.168.10.29

Under each httpGet: field, change host from 127.0.0.1 to 192.168.10.29

Delete --port=0

# Note: 192.168.10.29 is the IP of the k8s control-plane node master-1

vim /etc/kubernetes/manifests/kube-controller-manager.yaml

Change --bind-address=127.0.0.1 to --bind-address=192.168.10.29

Under each httpGet: field, change host from 127.0.0.1 to 192.168.10.29

If this file also contains --port=0, delete it as well
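The manual edits above can also be scripted. The following is a minimal sketch using sed; patch_manifest is a hypothetical helper name, and the backup location under /root in the usage note is an assumption. Keep backups outside /etc/kubernetes/manifests, since kubelet treats files in that directory as static pod manifests.

```shell
# Hypothetical helper: apply the three edits described above to one manifest.
#   $1 = path to the manifest, $2 = IP address to bind to
patch_manifest() {
  local file="$1" ip="$2" tmp rc
  tmp="$(mktemp)" || return 1
  # 1) rebind the component, 2) point the probes at the new address,
  # 3) drop --port=0. Writing back with cat keeps the file's owner and mode.
  sed -e "s/--bind-address=127\.0\.0\.1/--bind-address=${ip}/" \
      -e "s/host: 127\.0\.0\.1/host: ${ip}/" \
      -e '/--port=0/d' "$file" > "$tmp" && cat "$tmp" > "$file"
  rc=$?
  rm -f "$tmp"
  return "$rc"
}

# Usage (run as root on master-1):
#   for name in kube-scheduler kube-controller-manager; do
#     cp "/etc/kubernetes/manifests/${name}.yaml" "/root/${name}.yaml.bak"
#     patch_manifest "/etc/kubernetes/manifests/${name}.yaml" 192.168.10.29
#   done
```

Compare the result with the backup (for example with diff) before relying on it, because the patterns only match the exact strings shown here.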

[root@master-1 ~]# cat /etc/kubernetes/manifests/kube-scheduler.yaml

apiVersion: v1
kind: Pod
metadata:
  creationTimestamp: null
  labels:
    component: kube-scheduler
    tier: control-plane
  name: kube-scheduler
  namespace: kube-system
spec:
  containers:
  - command:
    - kube-scheduler
    - --authentication-kubeconfig=/etc/kubernetes/scheduler.conf
    - --authorization-kubeconfig=/etc/kubernetes/scheduler.conf
    - --bind-address=192.168.10.29
    - --kubeconfig=/etc/kubernetes/scheduler.conf
    - --leader-elect=true
    image: registry.aliyuncs.com/google_containers/kube-scheduler:v1.20.6
    imagePullPolicy: IfNotPresent
    livenessProbe:
      failureThreshold: 8
      httpGet:
        host: 192.168.10.29
        path: /healthz
        port: 10259
        scheme: HTTPS
      initialDelaySeconds: 10
      periodSeconds: 10
      timeoutSeconds: 15
    name: kube-scheduler
    resources:
      requests:
        cpu: 100m
    startupProbe:
      failureThreshold: 24
      httpGet:
        host: 192.168.10.29
        path: /healthz
        port: 10259
        scheme: HTTPS
      initialDelaySeconds: 10
      periodSeconds: 10
      timeoutSeconds: 15
    volumeMounts:
    - mountPath: /etc/kubernetes/scheduler.conf
      name: kubeconfig
      readOnly: true
  hostNetwork: true
  priorityClassName: system-node-critical
  volumes:
  - hostPath:
      path: /etc/kubernetes/scheduler.conf
      type: FileOrCreate
    name: kubeconfig
status: {}

After making these changes, restart kubelet on each k8s node:

systemctl restart kubelet

[root@master-1 ~]# ss -antulp | grep 10251

tcp LISTEN 0 128 :::10251 :::* users:(("kube-scheduler",pid=7512,fd=7))
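The same check applies to the controller-manager port 10252. As a rough sketch, both ports can be verified in one pass; has_listener is a hypothetical helper that expects the output of ss -lnt (TCP listeners only, without the Netid column) on stdin.

```shell
# Hypothetical helper: succeeds if the `ss -lnt` output on stdin contains a
# LISTEN socket whose local address ends in ":<port>".
has_listener() {
  local port="$1"
  awk -v suffix=":${port}" '
    $1 == "LISTEN" {
      addr = $4
      if (substr(addr, length(addr) - length(suffix) + 1) == suffix) found = 1
    }
    END { exit !found }
  '
}

# Usage (run on master-1):
#   for port in 10251 10252; do
#     if ss -lnt | has_listener "$port"; then echo "$port listening"; else echo "$port NOT listening"; fi
#   done
```

Matching on the ":<port>" suffix avoids false positives from longer port numbers that merely contain the same digits.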

