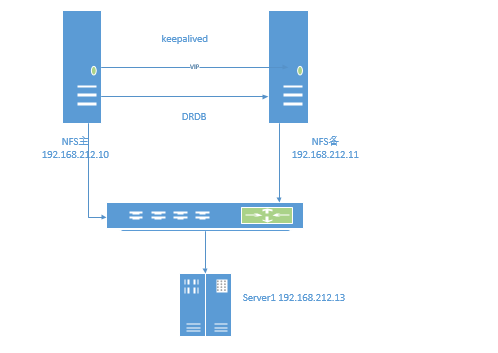

DRBD + keepalived + NFS high-availability setup

I. Architecture diagram

II. System environment

1. Operating system

[root@controller1 ~]# cat /etc/redhat-release
Fedora release 25 (Twenty Five)

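2. Make sure the firewall and SELinux are disabled on all servers.

3. Configure the hostnames so that each server's role can be told from its hostname.

4. Make sure /etc/hosts on every server can resolve the hostname of every server.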

[root@controller1 mnt]# cat /etc/hosts

10.0.0.21 controller1   # primary node

10.0.0.22 controller2   # backup node
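Before going further it can help to confirm that these entries really are in place on each node. The function below is a minimal sketch and not part of the original procedure: `check_hosts` is a hypothetical helper name, and it only inspects a hosts-format file (with comments stripped), so it does not consult DNS.

```shell
#!/bin/bash
# Minimal sketch: confirm that every cluster hostname has an entry in a
# hosts-format file. check_hosts is a hypothetical helper, not a system tool.

# check_hosts FILE NAME...
# Prints each NAME that has no entry in FILE and returns 1 if any are missing.
check_hosts() {
    local file=$1; shift
    local missing=0 name
    for name in "$@"; do
        # Strip comments, then look for NAME as a whole field after the address.
        if ! sed 's/#.*//' "$file" | awk -v n="$name" '
                { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
                END { exit !found }'; then
            echo "missing hosts entry: $name"
            missing=1
        fi
    done
    return "$missing"
}
```

Usage on each node: `check_hosts /etc/hosts controller1 controller2`. For a resolver-level check that also honours DNS, `getent hosts controller1` is an alternative.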

III. Install DRBD (note: perform the following steps identically on both nodes)

1. Install the software

# Install the dependencies

dnf install gcc flex libxslt kernel kernel-devel kernel-headers -y

# Install DRBD

# Download the package

wget http://dl.fedoraproject.org/pub/fedora/linux/releases/25/Everything/x86_64/os/Packages/d/drbd-8.9.6-1.fc25.x86_64.rpm

# Install the package

dnf install drbd-8.9.6-1.fc25.x86_64.rpm -y

# Download drbd-utils-8.9.6-1.fc25.x86_64.rpm (installed in the next step)

wget https://fedora.pkgs.org/25/fedora-x86_64/drbd-8.9.6-1.fc25.x86_64.rpm

# Install the package

dnf install drbd-utils-8.9.6-1.fc25.x86_64.rpm -y

# Add firewall rules (DRBD replicates over port 7789, as set in r0.res; service iptables save requires the iptables-services package)

iptables -I INPUT -p tcp -m state --state NEW -m tcp --dport 7789 -j ACCEPT
service iptables save

# Load the kernel module

[root@controller1 ~]# modprobe drbd
[root@controller1 ~]# lsmod |grep drbd
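`modprobe` only loads the module for the current boot. One way to have it loaded automatically is a file under /etc/modules-load.d/, which systemd-modules-load reads at boot (one module name per line). The helper below is a hypothetical sketch, and the file name drbd.conf is an assumption.

```shell
#!/bin/bash
# Minimal sketch: have a kernel module loaded at boot by systemd-modules-load,
# which reads one module name per line from /etc/modules-load.d/*.conf.
# persist_module and the MODULE.conf file name are illustrative choices.

# persist_module MODULE [DIR]
# Adds MODULE to DIR/MODULE.conf (DIR defaults to /etc/modules-load.d) unless
# it is already listed, so running it twice is harmless.
persist_module() {
    local module=$1 dir=${2:-/etc/modules-load.d}
    local conf="$dir/$module.conf"
    mkdir -p "$dir"
    if ! grep -qxF "$module" "$conf" 2>/dev/null; then
        echo "$module" >> "$conf"
    fi
}
```

Run as root on both nodes: `persist_module drbd`.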

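2. Configure DRBD

# Edit the files on controller1 (the transcript below was captured on controller2, which ends up with identical copies after the scp step)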

[root@controller2 drbd.d]# cd /etc/drbd.d

[root@controller2 drbd.d]# ll

total 12

-rw-r--r-- 1 root root 2385 Apr 25 09:40 global_common.conf

# Back up the configuration file

[root@controller2 drbd.d]# cp global_common.conf global_common.conf.bak

[root@controller2 drbd.d]# ll

total 12

-rw-r--r-- 1 root root 2385 Apr 25 09:40 global_common.conf

-rw-r--r-- 1 root root 2061 Mar 8 2016 global_common.conf.bak

-rw-r--r-- 1 root root 272 Apr 25 09:40 r0.res

# Edit the configuration files

#global_common.conf

[root@controller2 drbd.d]# cat global_common.conf

# DRBD is the result of over a decade of development by LINBIT.

# In case you need professional services for DRBD or have

# feature requests visit http://www.linbit.com

global {

usage-count yes;

# minor-count dialog-refresh disable-ip-verification

# cmd-timeout-short 5; cmd-timeout-medium 121; cmd-timeout-long 600;

}

common {

handlers {

# These are EXAMPLE handlers only.

# They may have severe implications,

# like hard resetting the node under certain circumstances.

# Be careful when chosing your poison.

pri-on-incon-degr "/usr/lib/drbd/notify-pri-on-incon-degr.sh; /usr/lib/drbd/notify-emergency-reboot.sh; echo b > /proc/sysrq-trigger ; reboot -f";

pri-lost-after-sb "/usr/lib/drbd/notify-pri-lost-after-sb.sh; /usr/lib/drbd/notify-emergency-reboot.sh; echo b > /proc/sysrq-trigger ; reboot -f";

local-io-error "/usr/lib/drbd/notify-io-error.sh; /usr/lib/drbd/notify-emergency-shutdown.sh; echo o > /proc/sysrq-trigger ; halt -f";

# fence-peer "/usr/lib/drbd/crm-fence-peer.sh";

# split-brain "/usr/lib/drbd/notify-split-brain.sh root";

# out-of-sync "/usr/lib/drbd/notify-out-of-sync.sh root";

# before-resync-target "/usr/lib/drbd/snapshot-resync-target-lvm.sh -p 15 -- -c 16k";

# after-resync-target /usr/lib/drbd/unsnapshot-resync-target-lvm.sh;

}

startup {

wfc-timeout 30;

degr-wfc-timeout 30;

outdated-wfc-timeout 30;

# wfc-timeout degr-wfc-timeout outdated-wfc-timeout wait-after-sb

}

options {

# cpu-mask on-no-data-accessible

}

disk {

on-io-error detach;

fencing resource-and-stonith;

resync-rate 50M;

# size on-io-error fencing disk-barrier disk-flushes

# disk-drain md-flushes resync-rate resync-after al-extents

# c-plan-ahead c-delay-target c-fill-target c-max-rate

# c-min-rate disk-timeout

}

net {

protocol C;

cram-hmac-alg sha1;

shared-secret "123456";

# protocol timeout max-epoch-size max-buffers unplug-watermark

# connect-int ping-int sndbuf-size rcvbuf-size ko-count

# allow-two-primaries cram-hmac-alg shared-secret after-sb-0pri

# after-sb-1pri after-sb-2pri always-asbp rr-conflict

# ping-timeout data-integrity-alg tcp-cork on-congestion

# congestion-fill congestion-extents csums-alg verify-alg

# use-rle

}

}

# r0.res

[root@controller2 drbd.d]# cat r0.res

resource r0 {

on controller1 {

device /dev/drbd1;

disk /dev/sda8;

address 10.0.0.21:7789;

meta-disk internal;

}

on controller2 {

device /dev/drbd1;

disk /dev/sda8;

address 10.0.0.22:7789;

meta-disk internal;

}

}
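The firewall rule for DRBD has to open the port named in the resource's `address` lines (7789 on both nodes here). A minimal sketch (`drbd_ports` is a hypothetical helper) that reads the ports straight from the resource file, so the iptables rules cannot drift from it; it assumes the simple `address IPv4:PORT;` form used in r0.res.

```shell
#!/bin/bash
# Minimal sketch: list the TCP ports a DRBD resource file uses, taken from
# its "address IP:PORT;" lines. drbd_ports is a hypothetical helper.

# drbd_ports FILE
# Prints each distinct port, one per line, in numeric order.
drbd_ports() {
    sed -n 's/^[[:space:]]*address[[:space:]]\{1,\}[^;]*:\([0-9]\{1,\}\);.*/\1/p' "$1" |
        sort -un
}
```

For example: `for p in $(drbd_ports /etc/drbd.d/r0.res); do iptables -I INPUT -p tcp -m state --state NEW -m tcp --dport "$p" -j ACCEPT; done`.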

# Copy the configuration files from controller1 to controller2

scp r0.res root@controller2:/etc/drbd.d/
scp global_common.conf root@controller2:/etc/drbd.d/

# Create the DRBD metadata (on both nodes)

drbdadm create-md r0

Error:

[root@controller1 drbd.d]# drbdadm create-md r0

--== Thank you for participating in the global usage survey ==--

The server's response is:

you are the 320th user to install this version

md_offset 10737414144

al_offset 10737381376

bm_offset 10737053696

Found ext3 filesystem

10485760 kB data area apparently used

10485404 kB left usable by current configuration

Device size would be truncated, which

would corrupt data and result in

'access beyond end of device' errors.

You need to either

* use external meta data (recommended)

* shrink that filesystem first

* zero out the device (destroy the filesystem)

Operation refused.

Command 'drbdmeta 1 v08 /dev/sda8 internal create-md' terminated with exit code 40

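Fix: overwrite the beginning of the partition with zeros. This destroys the old ext3 file system, along with any data on /dev/sda8: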

[root@controller1 drbd.d]# dd if=/dev/zero of=/dev/sda8 bs=1M count=100
100+0 records in
100+0 records out
104857600 bytes (105 MB, 100 MiB) copied, 0.145174 s, 722 MB/s

Then run it again:

[root@controller1 drbd.d]# drbdadm create-md r0
initializing activity log
NOT initializing bitmap
Writing meta data...
New drbd meta data block successfully created.
success

# On controller2

[root@controller2 drbd.d]# drbdadm create-md r0
--== Thank you for participating in the global usage survey ==--
The server's response is:
you are the 321th user to install this version
initializing activity log
NOT initializing bitmap
Writing meta data...
New drbd meta data block successfully created.

# Bring up resource r0 (on both nodes)

[root@controller2 ~]# drbdadm up r0

# Check the synchronization status

[root@controller1 ~]# cat /proc/drbd

version: 8.4.7 (api:1/proto:86-101)

srcversion: 2DCC561E7F1E3D63526E90D

1: cs:Connected ro:Secondary/Secondary ds:Inconsistent/Inconsistent C r-----

ns:0 nr:0 dw:0 dr:0 al:8 bm:0 lo:0 pe:0 ua:0 ap:0 ep:1 wo:f oos:10485404

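Note: ds:Inconsistent/Inconsistent means the data on the two nodes has not been synchronized yet (the C that follows is the replication protocol).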
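When scripting around DRBD it is handy to read the cs, ro and ds fields programmatically. A minimal sketch, assuming the DRBD 8.4 /proc/drbd layout shown above (`drbd_field` is a hypothetical helper; DRBD 9 reports resource state through `drbdadm status` rather than /proc/drbd).

```shell
#!/bin/bash
# Minimal sketch: extract the connection state (cs), roles (ro) or disk
# states (ds) of one DRBD minor from /proc/drbd-style text (DRBD 8.4 format).

# drbd_field FIELD [MINOR] [FILE]
# FIELD is cs, ro or ds; MINOR defaults to 1 (/dev/drbd1), FILE to /proc/drbd.
drbd_field() {
    local field=$1 minor=${2:-1} file=${3:-/proc/drbd}
    awk -v f="$field:" -v m="$minor:" '
        $1 == m {
            for (i = 2; i <= NF; i++)
                if (index($i, f) == 1) { print substr($i, length(f) + 1); exit }
        }' "$file"
}
```

For example, `drbd_field ds` prints the local/peer disk states of /dev/drbd1, such as `Inconsistent/Inconsistent` at this stage.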

3. Start DRBD

[root@controller1 ~]# systemctl start drbd

# List all block devices

[root@controller1 ~]# lsblk -a

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINT

sdb 8:16 0 119.2G 0 disk

└─sdb1 8:17 0 119.2G 0 part /var/lib/ceph/osd/ceph-2

sda 8:0 0 931.5G 0 disk

├─sda2 8:2 0 104G 0 part

│ ├─fedora-swap 253:1 0 4G 0 lvm [SWAP]

│ └─fedora-root 253:0 0 100G 0 lvm /

├─sda7 8:7 0 150G 0 part /mnt

├─sda5 8:5 0 300G 0 part /var/lib/ceph/osd/ceph-0

├─sda3 8:3 0 1K 0 part

├─sda1 8:1 0 1G 0 part /boot

├─sda8 8:8 0 10G 0 part

│ └─drbd1 147:1 0 10G 1 disk

└─sda6 8:6 0 300G 0 part /var/lib/ceph/osd/ceph-1

4. Set the primary node

[root@controller1 ~]# drbdadm -- --overwrite-data-of-peer primary all

# Initial synchronization of the disk mirror

[root@controller1 ~]# drbd-overview

1:r0/0 SyncSource Primary/Secondary UpToDate/Inconsistent

[>....................] sync'ed: 2.5% (9984/10236)M

5. Synchronization complete

[root@controller1 ~]# drbd-overview

1:r0/0 Connected Primary/Secondary UpToDate/UpToDate
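The initial sync can take a while; instead of re-running drbd-overview by hand, a script can wait until both disks report UpToDate. A minimal sketch, assuming DRBD 8.4 /proc/drbd output and the single resource on minor 1 used here; `wait_for_uptodate` and its one-second polling interval are illustrative choices.

```shell
#!/bin/bash
# Minimal sketch: wait until DRBD minor 1 reports ds:UpToDate/UpToDate in a
# /proc/drbd-style file, or give up after a timeout (DRBD 8.4 format assumed).

# wait_for_uptodate [TIMEOUT_SECONDS] [FILE]
# Returns 0 once both disks are UpToDate, 1 if the timeout expires first.
wait_for_uptodate() {
    local timeout=${1:-3600} file=${2:-/proc/drbd} waited=0
    until grep -Eq '^[[:space:]]*1: .*ds:UpToDate/UpToDate' "$file"; do
        if [ "$waited" -ge "$timeout" ]; then
            echo "DRBD still not UpToDate/UpToDate after ${timeout}s" >&2
            return 1
        fi
        sleep 1
        waited=$((waited + 1))
    done
    return 0
}
```

For example: `wait_for_uptodate 3600 && echo "initial sync finished"`.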

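6. Create the file system

The file system can only be mounted on the Primary node, and the DRBD device can only be formatted after a primary node has been set. Format the device and test a manual mount:

[root@controller1 ~]# mkfs.xfs /dev/drbd1    (Fedora 25; on CentOS 7.x use mkfs.ext4 /dev/drbd1 instead)

[root@controller1 ~]# mkdir -p /data

[root@controller1 ~]# mount /dev/drbd1 /data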

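7. Manually switch Primary and Secondary

In a Primary/Secondary DRBD setup only one node can be Primary at any given time. To swap the roles of the two nodes you must therefore first demote the current Primary to Secondary, and only then promote the former Secondary to Primary.

Manual DRBD switchover steps:

(1) On the primary node: stop NFS if /data is exported (systemctl stop nfs), then run umount /dev/drbd1 to unmount the device

(2) On the primary node: run drbdadm secondary all to demote it to Secondary

(3) On the secondary node: run drbdadm primary all to promote it to Primary

(4) On the secondary node: run mount /dev/drbd1 /data to mount the device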
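The switchover steps can also be wrapped in two small shell functions, one per node. This is a sketch under assumptions: the device, mount point and `all` resource selector follow this article, `demote` also stops NFS first (as the demonstration further down does) and `promote` starts it again, and `DRY_RUN=1` merely prints the commands. `demote` must have finished on the old primary before `promote` is run on the other node.

```shell
#!/bin/bash
# Minimal sketch of a manual DRBD switchover. demote, promote and run are
# hypothetical helpers; DEV and MNT match the values used in this article.

DEV=/dev/drbd1
MNT=/data

# Execute a command, or only print it when DRY_RUN=1.
run() {
    if [ "${DRY_RUN:-0}" = 1 ]; then echo "$*"; else "$@"; fi
}

# On the current primary: stop NFS, unmount, demote to Secondary.
demote() {
    run systemctl stop nfs || return 1
    run umount "$MNT" || return 1
    run drbdadm secondary all
}

# On the current secondary, after demote has finished on the peer.
promote() {
    run drbdadm primary all || return 1
    run mount "$DEV" "$MNT" || return 1
    run systemctl start nfs
}
```

Preview with `DRY_RUN=1 demote`, then run `demote` on controller1 followed by `promote` on controller2.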

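Notes:

1. After the device is mounted on the primary, check that the standby can also mount and use it. Do not format the device again on the standby: DRBD already replicates the file system, and running mkfs on the new Primary would wipe the data on both nodes. The standby can mount the disk only after it has been switched to Primary (see the role-switch notes below), because only the Primary can mount the disk.

2. Switching DRBD device roles

Before switching roles, unmount the disk on the current primary with umount and demote it with drbdadm secondary; then promote the other host to Primary, and finally mount the disk on that node.

# Switchover demonstration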

Node name    IP address
controller1  10.0.0.21
controller2  10.0.0.22
VIP          10.0.0.20

# On controller1

# Stop NFS on controller1 first, then unmount /data

[root@controller1]# systemctl stop nfs

[root@controller1]# umount -f /data

[root@controller1]# df -h

Filesystem Size Used Avail Use% Mounted on

devtmpfs 32G 0 32G 0% /dev

tmpfs 32G 39M 32G 1% /dev/shm

tmpfs 32G 1.3M 32G 1% /run

tmpfs 32G 0 32G 0% /sys/fs/cgroup

/dev/mapper/fedora-root 100G 6.3G 94G 7% /

tmpfs 32G 0 32G 0% /tmp

/dev/sdb1 120G 1.2G 118G 1% /var/lib/ceph/osd/ceph-2

/dev/sda1 976M 141M 769M 16% /boot

/dev/sda6 300G 1.4G 299G 1% /var/lib/ceph/osd/ceph-1

/dev/sda5 300G 1.5G 299G 1% /var/lib/ceph/osd/ceph-0

/dev/sda7 150G 379M 150G 1% /mnt

tmpfs 6.3G 0 6.3G 0% /run/user/0

[root@controller1]# drbdadm secondary all

# On controller2

# Unmount /data (on controller2 this is the NFS mount of the share)

[root@controller2 keepalived]# umount -f /data

[root@controller2 keepalived]# df -h

Filesystem Size Used Avail Use% Mounted on

devtmpfs 28G 0 28G 0% /dev

tmpfs 28G 39M 28G 1% /dev/shm

tmpfs 28G 1.3M 28G 1% /run

tmpfs 28G 0 28G 0% /sys/fs/cgroup

/dev/mapper/fedora-root 100G 9.4G 91G 10% /

tmpfs 28G 0 28G 0% /tmp

/dev/sdb1 120G 1.2G 118G 1% /var/lib/ceph/osd/ceph-5

/dev/sda1 976M 141M 769M 16% /boot

/dev/sda5 300G 1.5G 299G 1% /var/lib/ceph/osd/ceph-3

/dev/sda6 300G 1.4G 299G 1% /var/lib/ceph/osd/ceph-4

/dev/sda7 150G 186M 150G 1% /mnt

tmpfs 5.6G 0 5.6G 0% /run/user/0

# Promote controller2 to primary

[root@controller2 keepalived]# drbdadm primary all

[root@controller2 keepalived]# drbd-overview

1:r0/0 Connected Primary/Secondary UpToDate/UpToDate

# Mount the DRBD device on /data

[root@controller2 keepalived]# mount /dev/drbd1 /data

[root@controller2 keepalived]# df -h

Filesystem Size Used Avail Use% Mounted on

devtmpfs 28G 0 28G 0% /dev

tmpfs 28G 39M 28G 1% /dev/shm

tmpfs 28G 1.3M 28G 1% /run

tmpfs 28G 0 28G 0% /sys/fs/cgroup

/dev/mapper/fedora-root 100G 9.4G 91G 10% /

tmpfs 28G 0 28G 0% /tmp

/dev/sdb1 120G 1.2G 118G 1% /var/lib/ceph/osd/ceph-5

/dev/sda1 976M 141M 769M 16% /boot

/dev/sda5 300G 1.5G 299G 1% /var/lib/ceph/osd/ceph-3

/dev/sda6 300G 1.4G 299G 1% /var/lib/ceph/osd/ceph-4

/dev/sda7 150G 186M 150G 1% /mnt

tmpfs 5.6G 0 5.6G 0% /run/user/0

/dev/drbd1 10G 43M 10G 1% /data

# List the contents of the directory

[root@controller2 keepalived]# cd /data/

[root@controller2 data]# ll

total 0

-rw-r--r-- 1 root root 0 Apr 25 11:01 1.txt

-rw-r--r-- 1 root root 0 Apr 25 11:20 2.txt

-rw-r--r-- 1 root root 0 Apr 25 13:48 3.txt

IV. Install NFS (on both nodes; start with controller1)

1. Check whether the repository provides the NFS packages

[root@controller1 ~]# yum list|grep nfs

2. nfs-utils is installed by default on this system, so simply start the service.

[root@controller1 ~]# systemctl start rpcbind

3. Check that rpcbind is listening

[root@controller1 ~]# lsof -i :111

COMMAND PID USER FD TYPE DEVICE SIZE/OFF NODE NAME

systemd 1 root 88u IPv4 2175 0t0 TCP *:sunrpc (LISTEN)

systemd 1 root 89u IPv4 2176 0t0 UDP *:sunrpc

systemd 1 root 90u IPv6 2177 0t0 TCP *:sunrpc (LISTEN)

systemd 1 root 91u IPv6 2178 0t0 UDP *:sunrpc

rpcbind 31574 rpc 4u IPv4 2175 0t0 TCP *:sunrpc (LISTEN)

rpcbind 31574 rpc 5u IPv4 2176 0t0 UDP *:sunrpc

rpcbind 31574 rpc 6u IPv6 2177 0t0 TCP *:sunrpc (LISTEN)

rpcbind 31574 rpc 7u IPv6 2178 0t0 UDP *:sunrpc

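4. Check the ports that NFS services have registered with rpcbind (only portmapper is listed until NFS itself is started)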

[root@controller1 ~]# rpcinfo -p localhost

program vers proto port service

100000 4 tcp 111 portmapper

100000 3 tcp 111 portmapper

100000 2 tcp 111 portmapper

100000 4 udp 111 portmapper

100000 3 udp 111 portmapper

100000 2 udp 111 portmapper

5. Start NFS

[root@controller1 ~]# systemctl start nfs

[root@controller1 ~]# systemctl status nfs

● nfs-server.service - NFS server and services

Loaded: loaded (/usr/lib/systemd/system/nfs-server.service; disabled; vendor preset: disabled)

Active: active (exited) since Wed 2018-04-25 10:44:23 CST; 4s ago

Process: 32023 ExecStart=/usr/sbin/rpc.nfsd $RPCNFSDARGS (code=exited, status=0/SUCCESS)

Process: 32019 ExecStartPre=/usr/sbin/exportfs -r (code=exited, status=0/SUCCESS)

Main PID: 32023 (code=exited, status=0/SUCCESS)

Tasks: 0 (limit: 4915)

CGroup: /system.slice/nfs-server.service

Apr 25 10:44:23 controller1 systemd[1]: Starting NFS server and services...

Apr 25 10:44:23 controller1 systemd[1]: Started NFS server and services.

6. Create the mount point (note: on controller1 only)

[root@controller1 ~]# mkdir -p /data

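7. Format the device (skip this if /dev/drbd1 was already formatted earlier; running mkfs again erases its contents)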

[root@controller1 drbd.d]# mkfs.xfs /dev/drbd1

meta-data=/dev/drbd1 isize=512 agcount=4, agsize=655338 blks

= sectsz=4096 attr=2, projid32bit=1

= crc=1 finobt=1, sparse=0, rmapbt=0, reflink=0

data = bsize=4096 blocks=2621351, imaxpct=25

= sunit=0 swidth=0 blks

naming =version 2 bsize=4096 ascii-ci=0 ftype=1

log =internal log bsize=4096 blocks=2560, version=2

= sectsz=4096 sunit=1 blks, lazy-count=1

realtime =none extsz=4096 blocks=0, rtextents=0

8. Identify the device to mount

[root@controller1 ~]# cd /etc/drbd.d/

[root@controller1 drbd.d]# ll

total 12

-rw-r--r-- 1 root root 2385 Apr 25 09:39 global_common.conf

-rw-r--r-- 1 root root 2061 Apr 24 19:17 global_common.conf.bak

-rw-r--r-- 1 root root 272 Apr 25 09:36 r0.res

[root@controller1 drbd.d]# cat r0.res

resource r0 {

on controller1 {

device /dev/drbd1;   # the device to mount

disk /dev/sda8;

address 10.0.0.21:7789;

meta-disk internal;

}

on controller2 {

device /dev/drbd1;

disk /dev/sda8;

address 10.0.0.22:7789;

meta-disk internal;

}

}

9. Inspect the disks

[root@controller1 drbd.d]# fdisk -l

Disk /dev/sda: 931.5 GiB, 1000148590592 bytes, 1953415216 sectors

Units: sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 4096 bytes

I/O size (minimum/optimal): 4096 bytes / 4096 bytes

Disklabel type: dos

Disk identifier: 0xffee9ca9

Disk /dev/drbd1: 10 GiB, 10737053696 bytes, 20970808 sectors

Units: sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 4096 bytes

I/O size (minimum/optimal): 4096 bytes / 4096 bytes

10. Mount the device

[root@controller1 drbd.d]# mount /dev/drbd1 /data/

[root@controller1 drbd.d]# ll

total 12

-rw-r--r-- 1 root root 2385 Apr 25 09:39 global_common.conf

-rw-r--r-- 1 root root 2061 Apr 24 19:17 global_common.conf.bak

-rw-r--r-- 1 root root 272 Apr 25 09:36 r0.res

11. Verify the mount

[root@controller1 drbd.d]# df -h

Filesystem Size Used Avail Use% Mounted on

devtmpfs 32G 0 32G 0% /dev

tmpfs 32G 39M 32G 1% /dev/shm

tmpfs 32G 1.3M 32G 1% /run

tmpfs 32G 0 32G 0% /sys/fs/cgroup

/dev/mapper/fedora-root 100G 6.0G 94G 6% /

tmpfs 32G 0 32G 0% /tmp

/dev/sdb1 120G 1.2G 118G 1% /var/lib/ceph/osd/ceph-2

/dev/sda1 976M 141M 769M 16% /boot

/dev/sda6 300G 1.4G 299G 1% /var/lib/ceph/osd/ceph-1

/dev/sda5 300G 1.5G 299G 1% /var/lib/ceph/osd/ceph-0

/dev/sda7 150G 379M 150G 1% /mnt

tmpfs 6.3G 0 6.3G 0% /run/user/0

/dev/drbd1 10G 43M 10G 1% /data

12. Create a test file on the mounted device

[root@controller1 drbd.d]# cd /data/

[root@controller1 data]# touch 1.txt

[root@controller1 data]# ll

total 0

-rw-r--r-- 1 root root 0 Apr 25 11:01 1.txt

13. Configure the NFS export

[root@controller1 exports.d]# vi /etc/exports

/data 10.0.0.0/24(rw,no_root_squash,no_all_squash,sync)

# Set ownership of the exported directory

chown nfsnobody:nfsnobody /data
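Editing /etc/exports by hand is easy to repeat by mistake on re-runs. A minimal sketch of an idempotent alternative (`add_export` is a hypothetical helper): it appends the export line only if the identical line is not already present. After changing the exports file, `exportfs -ra` re-exports everything without a full NFS restart.

```shell
#!/bin/bash
# Minimal sketch: append an export line only if the exact same line is not
# already in the exports file. add_export is a hypothetical helper.

# add_export LINE [FILE]   (FILE defaults to /etc/exports)
add_export() {
    local line=$1 file=${2:-/etc/exports}
    touch "$file"
    grep -qxF -- "$line" "$file" || echo "$line" >> "$file"
}
```

For example: `add_export '/data 10.0.0.0/24(rw,no_root_squash,no_all_squash,sync)' && exportfs -ra`.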

14. Restart the service

systemctl restart nfs

15. List the exports

[root@controller1 exports.d]# showmount -e localhost
Export list for localhost:
/data 10.0.0.0/24

16. Add a firewall rule for NFS

[root@backup keepalived]# iptables -A INPUT -s 10.0.0.0/24 -j ACCEPT

[root@backup keepalived]# service iptables reload

Redirecting to /bin/systemctl reload iptables.service

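Failed to reload iptables.service: Unit iptables.service not found.

(Note: iptables.service is provided by the iptables-services package. Without it the rule above still takes effect immediately, but it is not saved and is lost at reboot.)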

Client side (note: controller2 mounts the NFS share)

1. Start the NFS client services (nfs-utils must already be installed)

[root@controller2 ~]# systemctl restart nfs-utils

[root@controller2 ~]# systemctl status nfs-utils

2. List the exports

[root@controller2 ~]# showmount -e 10.0.0.20   # VIP address

Export list for 10.0.0.20:

/data 10.0.0.0/24

3. Mount the share

# Create the mount point

mkdir -p /data

[root@controller2 ~]# mount -t nfs 10.0.0.20:/data /data

# Check the mount

[root@controller2 ~]# df -h

10.0.0.21:/data 10G 42M 10G 1% /data

# Enter the mount point and list its contents

[root@controller2 ~]# cd /data/

[root@controller2 data]# ll

total 0

-rw-r--r-- 1 root root 0 Apr 25 11:01 1.txt

-rw-r--r-- 1 root root 0 Apr 25 11:20 2.txt

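FAQ:

I. Environment

1. Two servers: controller1 and controller2

# Problem: after systemctl stop nfs is run on controller1, the shared disk mounted on controller2 hangs and cannot be used; df -h blocks and never returns.

Fix:

# On controller1

[root@controller1 ~]# fuser -mk /data   # kill every process using /data (side effect: afterwards controller2 could no longer be reached over SSH until sshd was restarted on the server itself with systemctl restart sshd)

# On controller2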

umount -f /data
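The hang described above is how NFS behaves by default: mounts are hard, so a process that touches /data while the server is gone blocks until the server comes back. To find out whether the mount is alive without hanging your own shell, the check can be run under a time limit. A minimal sketch (`mount_responsive` is a hypothetical helper); it relies on coreutils `timeout` and sends SIGKILL, which cannot be caught or ignored.

```shell
#!/bin/bash
# Minimal sketch: test whether a mount point answers within a time limit so
# the caller does not hang on a dead NFS server. mount_responsive is a
# hypothetical helper built on coreutils "timeout".

# mount_responsive DIR [SECONDS]
# Returns 0 if stat on DIR completes within SECONDS (default 5), non-zero otherwise.
mount_responsive() {
    local dir=$1 secs=${2:-5}
    timeout -s KILL "$secs" stat -t "$dir" >/dev/null 2>&1
}
```

On the client, for example: `mount_responsive /data 5 || umount -f /data`.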

V. Install keepalived

[root@controller1]# yum install -y keepalived

Redirecting to '/usr/bin/dnf install -y keepalived' (see 'man yum2dnf')

Last metadata expiration check: 0:52:50 ago on Wed Apr 25 13:32:06 2018.

Package keepalived-1.3.9-1.fc25.x86_64 is already installed, skipping.

Dependencies resolved.

Nothing to do.

Complete!

# Check that keepalived is installed (on both controller1 and controller2)

[root@controller1 exports.d]# rpm -qa keepalived

keepalived-1.3.9-1.fc25.x86_64

[root@controller2 ]# rpm -qa keepalived

keepalived-1.3.9-1.fc25.x86_64

# Configure keepalived

[root@controller1 ~]# cp /etc/keepalived/keepalived.conf /etc/keepalived/keepalived.conf.bak

# Primary node (controller1)

[root@controller1 keepalived]# cat keepalived.conf

! Configuration File for keepalived

global_defs {

router_id lb01

vrrp_mcast_group4 224.0.0.10

}

vrrp_script check {

script "/etc/keepalived/notify_backup.sh"

interval 2

weight 2

}

vrrp_instance VI_1 {

state MASTER

interface enp3s0f1

virtual_router_id 51

priority 150

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

172.16.1.10/24

}

track_script {

notify_backup

}

}
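keepalived tracks a script only when a name listed under `track_script` matches the name of a `vrrp_script` block (a block declared as `vrrp_script check` has to be tracked as `check`). The sketch below (`check_track_scripts` is a hypothetical helper) flags `track_script` entries that have no matching `vrrp_script`; it assumes the simple one-entry-per-line layout used in these files and is not a full keepalived parser.

```shell
#!/bin/bash
# Minimal sketch: report track_script entries in a keepalived.conf that do
# not name any vrrp_script block. Assumes one entry per line inside
# track_script { ... }. check_track_scripts is a hypothetical helper.

# check_track_scripts FILE
# Prints each undefined name and returns 1 if there is at least one.
check_track_scripts() {
    awk '
        $1 == "vrrp_script"  { defined[$2] = 1 }
        $1 == "track_script" { in_track = 1; next }
        in_track && $1 == "}" { in_track = 0; next }
        in_track && NF       { used[$1] = 1 }
        END {
            bad = 0
            for (name in used)
                if (!(name in defined)) { print "undefined track_script: " name; bad = 1 }
            exit bad
        }' "$1"
}
```

Run it on each node: `check_track_scripts /etc/keepalived/keepalived.conf`.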

# Automatic switchover scripts

# Demote the primary node to backup

[root@controller1 keepalived]# cat notify_backup.sh

#!/bin/bash
# Check the NFS port; if nothing is listening on it, run the commands below to demote this node to backup
port=`netstat -lnt | grep ':2049 '`
if [ "$port" == "" ];then
/usr/bin/systemctl stop keepalived
/usr/bin/umount -lf /data
/usr/sbin/drbdadm secondary all
fi
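Grepping `netstat -anp` output for 2049 also matches established or TIME_WAIT connections and any other field containing those digits (the sh -x log further down shows such a TIME_WAIT line). A stricter test asks only whether some socket is in LISTEN state on local port 2049. A minimal sketch (`port_listening` is a hypothetical helper) that parses `ss -ltn` output from standard input.

```shell
#!/bin/bash
# Minimal sketch: decide whether a TCP port has a LISTEN socket by parsing
# "ss -ltn" output on stdin (columns: State Recv-Q Send-Q
# Local-Address:Port Peer-Address:Port). port_listening is hypothetical.

# port_listening PORT < ss-output
# Returns 0 if a LISTEN socket has local port PORT, 1 otherwise.
port_listening() {
    awk -v p="$1" '
        $1 == "LISTEN" {
            n = split($4, a, ":")      # last piece of the local address is the port
            if (a[n] == p) found = 1
        }
        END { exit !found }'
}
```

In the demote script the check would read: `if ! ss -ltn | port_listening 2049; then ...; fi`.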

# Promote the backup node to primary

[root@controller1 keepalived]# cat notify_master.sh

#!/bin/bash

#Explain:switch nfs

# Query the exports on the VIP; if that fails, run the commands below to take over as primary

/usr/sbin/showmount -e 172.16.1.10 >/dev/null 2>&1

if [ $? -ne 0 ];then

/usr/sbin/drbdadm primary all

/usr/bin/mount /dev/drbd1 /data

sleep 5

/usr/bin/systemctl restart keepalived.service

sleep 5

/usr/bin/systemctl restart rpcbind

/usr/bin/systemctl restart nfs

fi

# Make the scripts executable

[root@controller1 keepalived]# cd /etc/keepalived
chmod a+x notify_master.sh
chmod a+x notify_backup.sh

# Add a cron job

[root@controller1 keepalived]# crontab -l
* * * * * /etc/keepalived/notify_backup.sh

# controller2 (backup node)

[root@controller2 keepalived]# cat keepalived.conf

! Configuration File for keepalived

global_defs {

router_id lb02

vrrp_mcast_group4 224.0.0.10

}

vrrp_script check {

script "/etc/keepalived/notify_master.sh"

interval 2

weight 2

}

vrrp_instance VI_1 {

state BACKUP

interface enp3s0f1

virtual_router_id 51

priority 100

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

172.16.1.10/24

}

track_script {

notify_master

}

}

# Promote the backup node to primary

[root@controller2 keepalived]# cat notify_master.sh

#!/bin/bash

#Explain:switch nfs

# Query the exports on the VIP; if that fails, run the commands below to take over as primary

/usr/sbin/showmount -e 172.16.1.10 >/dev/null 2>&1

if [ $? -ne 0 ];then

/usr/sbin/drbdadm primary all

/usr/bin/mount /dev/drbd1 /data

sleep 5

/usr/bin/systemctl restart keepalived.service

sleep 5

/usr/bin/systemctl restart rpcbind

/usr/bin/systemctl restart nfs

fi

# Demote the primary node to backup

[root@controller2 keepalived]# cat notify_backup.sh

#!/bin/bash
# Check the NFS port; if nothing is listening on it, run the commands below to demote this node to backup
port=`netstat -lnt | grep ':2049 '`
if [ "$port" == "" ];then
/usr/bin/systemctl stop keepalived
/usr/bin/umount -lf /data
/usr/sbin/drbdadm secondary all
fi

# Make the scripts executable

[root@controller2 keepalived]# cd /etc/keepalived
chmod a+x notify_master.sh
chmod a+x notify_backup.sh

# Add a cron job

[root@controller2 ~]# crontab -l
* * * * * /etc/keepalived/notify_master.sh

# Testing the scripts

# While controller1 is primary, stopping NFS with systemctl stop nfs is enough: the next cron run of notify_backup.sh demotes the primary to backup. Below, the script is run by hand with sh -x to show each step.

[root@controller1 ~]# cd /etc/keepalived/
[root@controller1 keepalived]# ll
total 16
-rw-r--r-- 1 root root 493 Apr 27 14:27 keepalived.conf
-rw-r--r-- 1 root root 3550 Apr 25 14:29 keepalived.conf.bak
-rwxr-xr-x 1 root root 236 Apr 27 14:05 notify_backup.sh
-rwxr-xr-x 1 root root 387 Apr 27 14:06 notify_master.sh

[root@controller1 keepalived]# systemctl stop nfs

[root@controller1 keepalived]# sh -x notify_backup.sh
++ netstat -anp
++ grep 2049
+ prot=
+ '[' '' == '' ']'
+ /usr/bin/systemctl stop keepalived
+ /usr/bin/umount -lf /data
+ /usr/sbin/drbdadm secondary all

[root@controller1 keepalived]# df -h
Filesystem Size Used Avail Use% Mounted on
devtmpfs 32G 0 32G 0% /dev
tmpfs 32G 154M 32G 1% /dev/shm
tmpfs 32G 1.3M 32G 1% /run
tmpfs 32G 0 32G 0% /sys/fs/cgroup
/dev/mapper/fedora-root 100G 6.4G 94G 7% /
tmpfs 32G 16K 32G 1% /tmp
/dev/sdb1 120G 1.2G 118G 1% /var/lib/ceph/osd/ceph-2
/dev/sda1 976M 141M 769M 16% /boot
/dev/sda6 300G 1.4G 299G 1% /var/lib/ceph/osd/ceph-1
/dev/sda5 300G 1.5G 299G 1% /var/lib/ceph/osd/ceph-0
/dev/sda7 150G 379M 150G 1% /mnt
tmpfs 6.3G 0 6.3G 0% /run/user/0

# On controller2, run notify_master.sh to promote the backup node to primary.

[root@controller2 keepalived]# sh -x notify_master.sh
+ /usr/sbin/showmount -e 172.16.1.10
+ '[' 1 -ne 0 ']'
+ /usr/sbin/drbdadm primary all
+ /usr/bin/mount /dev/drbd1 /data
+ /usr/bin/systemctl restart keepalived.service
+ /usr/bin/systemctl restart rpcbind
+ /usr/bin/systemctl restart nfs
[root@controller2 keepalived]# df -h
Filesystem Size Used Avail Use% Mounted on
devtmpfs 28G 0 28G 0% /dev
tmpfs 28G 39M 28G 1% /dev/shm
tmpfs 28G 1.3M 28G 1% /run
tmpfs 28G 0 28G 0% /sys/fs/cgroup
/dev/mapper/fedora-root 100G 9.8G 91G 10% /
tmpfs 28G 12K 28G 1% /tmp
/dev/sdb1 120G 1.2G 118G 1% /var/lib/ceph/osd/ceph-5
/dev/sda1 976M 141M 769M 16% /boot
/dev/sda5 300G 1.5G 299G 1% /var/lib/ceph/osd/ceph-3
/dev/sda7 150G 186M 150G 1% /mnt
/dev/sda6 300G 1.4G 299G 1% /var/lib/ceph/osd/ceph-4
tmpfs 5.6G 0 5.6G 0% /run/user/0
/dev/drbd1 10G 43M 10G 1% /data

# While controller2 is primary, stopping NFS with systemctl stop nfs is enough for notify_backup.sh to demote it to backup.

[root@controller2 keepalived]# ll
total 16
-rw-r--r-- 1 root root 491 Apr 27 14:28 keepalived.conf
-rw-r--r-- 1 root root 3550 Apr 25 14:42 keepalived.conf.bak
-rwxr-xr-x 1 root root 248 Apr 27 14:05 notify_backup.sh
-rwxr-xr-x 1 root root 411 Apr 27 16:12 notify_master.sh
[root@controller2 keepalived]# systemctl stop nfs
[root@controller2 keepalived]# sh -x notify_backup.sh
++ netstat -anp
++ grep 2049
+ prot='tcp 0 0 172.16.1.10:2049 172.16.1.23:799 TIME_WAIT - '
+ '[' '' == '' ']'
+ /usr/bin/systemctl stop keepalived
+ /usr/bin/umount -lf /data
+ /usr/sbin/drbdadm secondary all
[root@controller2 keepalived]# df -h
Filesystem Size Used Avail Use% Mounted on
devtmpfs 28G 0 28G 0% /dev
tmpfs 28G 39M 28G 1% /dev/shm
tmpfs 28G 1.3M 28G 1% /run
tmpfs 28G 0 28G 0% /sys/fs/cgroup
/dev/mapper/fedora-root 100G 9.8G 91G 10% /
tmpfs 28G 12K 28G 1% /tmp
/dev/sdb1 120G 1.2G 118G 1% /var/lib/ceph/osd/ceph-5
/dev/sda1 976M 141M 769M 16% /boot
/dev/sda5 300G 1.5G 299G 1% /var/lib/ceph/osd/ceph-3
/dev/sda7 150G 186M 150G 1% /mnt
/dev/sda6 300G 1.4G 299G 1% /var/lib/ceph/osd/ceph-4
tmpfs 5.6G 0 5.6G 0% /run/user/0

# On controller1, run notify_master.sh to promote the backup node to primary.

[root@controller1 keepalived]# ll
total 16
-rw-r--r-- 1 root root 493 Apr 27 14:27 keepalived.conf
-rw-r--r-- 1 root root 3550 Apr 25 14:29 keepalived.conf.bak
-rwxr-xr-x 1 root root 236 Apr 27 14:05 notify_backup.sh
-rwxr-xr-x 1 root root 412 Apr 27 16:15 notify_master.sh
[root@controller1 keepalived]# sh -x notify_master.sh
+ /usr/sbin/showmount -e 172.16.1.10
+ '[' 1 -ne 0 ']'
+ /usr/sbin/drbdadm primary all
+ /usr/bin/mount /dev/drbd1 /data
+ sleep 5
+ /usr/bin/systemctl restart keepalived.service
+ sleep 5
+ /usr/bin/systemctl restart rpcbind
+ /usr/bin/systemctl restart nfs
[root@controller1 keepalived]# df -h
Filesystem Size Used Avail Use% Mounted on
devtmpfs 32G 0 32G 0% /dev
tmpfs 32G 154M 32G 1% /dev/shm
tmpfs 32G 1.3M 32G 1% /run
tmpfs 32G 0 32G 0% /sys/fs/cgroup
/dev/mapper/fedora-root 100G 6.4G 94G 7% /
tmpfs 32G 16K 32G 1% /tmp
/dev/sdb1 120G 1.2G 118G 1% /var/lib/ceph/osd/ceph-2
/dev/sda1 976M 141M 769M 16% /boot
/dev/sda6 300G 1.4G 299G 1% /var/lib/ceph/osd/ceph-1
/dev/sda5 300G 1.5G 299G 1% /var/lib/ceph/osd/ceph-0
/dev/sda7 150G 379M 150G 1% /mnt
tmpfs 6.3G 0 6.3G 0% /run/user/0
/dev/drbd1 10G 43M 10G 1% /data

# Add a firewall rule (it drops the VRRP advertisements that controller2 sends)

[root@controller2 keepalived]# iptables -I OUTPUT -p vrrp -j DROP

[root@controller2 keepalived]# service iptables save

iptables: Saving firewall rules to /etc/sysconfig/iptables:

[ OK ]

References:

Keepalived+NFS+drbd实现NFS-HA高可用 (NFS HA with Keepalived, NFS and DRBD): https://cloud.tencent.com/developer/article/1026286

http://blog.51cto.com/chy940405/2052028

keepalived: http://blog.51cto.com/chy940405/2052014

Keepalived+NFS+SHELL脚本实现NFS-HA高可用 (NFS HA with Keepalived, NFS and shell scripts)

Keepalived: https://www.cnblogs.com/clsn/p/8052649.html

浙公网安备 33010602011771号