Kubeadm single master (CRI: containerd)

1. Initialize the environment

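Note: as of v1.24, dockershim has been removed from the Kubernetes project. Kubernetes releases before v1.24 directly integrated a component of Docker Engine named dockershim. That special direct integration is no longer part of Kubernetes (the removal was announced as part of the v1.20 release).

Kubernetes 1.26 requires a runtime that conforms to the Container Runtime Interface (CRI). For details, see "CRI version support" in the Kubernetes documentation. The sections below briefly cover how several common container runtimes are used with Kubernetes.

Deploying a K8S cluster with kubeadm and using containerd as the container runtime - Tencent Cloud Developer Community

A one-stop guide to using Containerd - Tencent Cloud Developer Community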

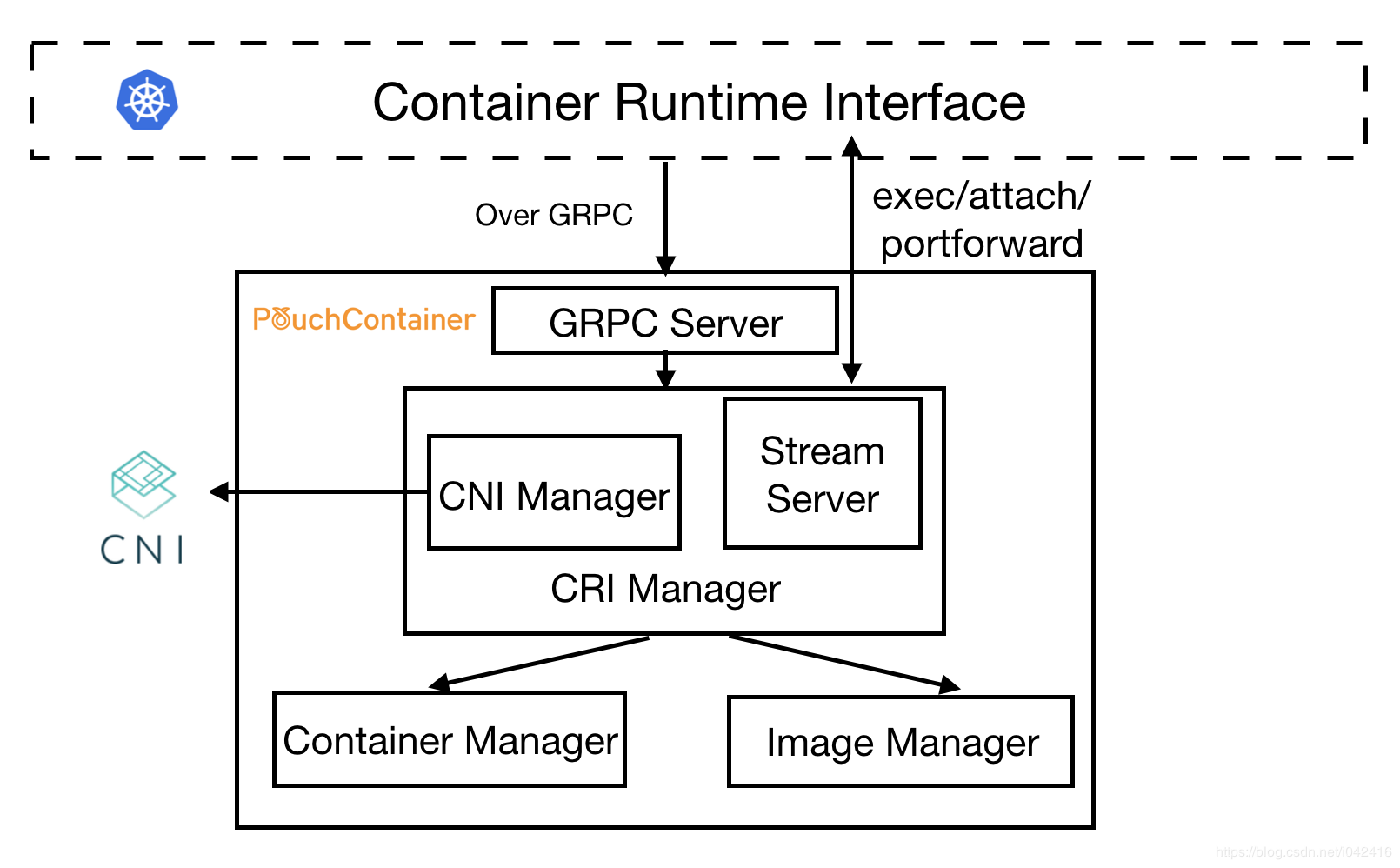

2. CRI (container runtime)

- containerd

# Install containerd

[root@anyu967node1 Package]# yum install containerd -y

# Configure containerd

[root@anyu967node1 Package]# containerd config default > /etc/containerd/config.toml

[root@anyu967node1 Package]# vim /etc/containerd/config.toml

# Change SystemdCgroup = false to SystemdCgroup = true

# Change sandbox_image = "k8s.gcr.io/pause:3.6" to sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.7"

cat > /etc/crictl.yaml <<EOF

runtime-endpoint: unix:///run/containerd/containerd.sock

image-endpoint: unix:///run/containerd/containerd.sock

timeout: 10

debug: false

EOF

# Configure registry mirrors for containerd

[root@anyu967node1 Package]# vim /etc/containerd/config.toml

# Under [plugins."io.containerd.grpc.v1.cri".registry], set config_path = "/etc/containerd/certs.d"

[root@anyu967node1 Package]# mkdir -p /etc/containerd/certs.d/docker.io/

[root@anyu967node1 Package]# vim /etc/containerd/certs.d/docker.io/hosts.toml

# Each mirror needs its own [host."..."] table; a single header cannot list two hosts

[host."https://vh3bm52y.mirror.aliyuncs.com"]

capabilities = ["pull"]

[host."https://registry.docker-cn.com"]

capabilities = ["pull"]

[root@anyu967node1 Package]# crictl config runtime-endpoint unix:///run/containerd/containerd.sock
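The two manual vim edits to config.toml above can also be applied non-interactively. The following is only a sketch: the function name `patch_containerd_config` is hypothetical, it assumes GNU sed (for `-i`), and it assumes the file still contains the default `SystemdCgroup = false` and a quoted `sandbox_image` line as generated by `containerd config default`.

```shell
# Hypothetical helper (not part of containerd): patch a config.toml in place.
# Usage: patch_containerd_config /etc/containerd/config.toml [pause-image]
patch_containerd_config() {
  cfg="$1"
  pause_image="${2:-registry.aliyuncs.com/google_containers/pause:3.7}"

  # Switch the runc cgroup driver from cgroupfs to systemd.
  sed -i 's/SystemdCgroup = false/SystemdCgroup = true/' "$cfg"

  # Point the sandbox (pause) image at the mirror registry.
  # '#' is used as the sed delimiter because the image name contains '/'.
  sed -i "s#sandbox_image = \".*\"#sandbox_image = \"${pause_image}\"#" "$cfg"
}
```

After patching the real file, containerd would still need to be restarted (for example with `systemctl restart containerd`) before the change takes effect.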

- CRI

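- CRI stands for Container Runtime Interface. It is the interface that defines how Kubernetes (the kubelet) communicates with a container runtime, and the runtime behind it manages the container lifecycle. Several runtimes can be used through CRI, including containerd and CRI-O; Docker Engine is supported through the cri-dockerd adapter.

- Kubernetes exposes the following interfaces, each of which can be connected to a different backend to implement your own logic:

CRI (Container Runtime Interface): provides compute resources; CNI (Container Network Interface): provides network resources; CSI (Container Storage Interface): provides storage resources.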

- cri-docker (see: Configuring cri-docker so that Kubernetes 1.24 uses Docker as the runtime - 萌褚 - 博客园 (cnblogs.com))

# https://github.com/Mirantis/cri-dockerd/tags

[root@anyu967node1 Package]# cp cri-dockerd/cri-dockerd /usr/bin/

cat <<EOF > /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

EOF

[root@anyu967node1 Package]# sysctl -p /etc/sysctl.d/k8s.conf

# cri-docker.service

[root@vms41 ~]# cat /usr/lib/systemd/system/cri-docker.service

[Unit]

Description=CRI Interface for Docker Application Container Engine

Documentation=https://docs.mirantis.com

After=network-online.target firewalld.service docker.service

Wants=network-online.target

Requires=cri-docker.socket

[Service]

Type=notify

ExecStart=/usr/bin/cri-dockerd --network-plugin=cni --pod-infra-container-image=registry.aliyuncs.com/google_containers/pause:3.7

ExecReload=/bin/kill -s HUP $MAINPID

TimeoutSec=0

RestartSec=2

Restart=always

StartLimitBurst=3

StartLimitInterval=60s

LimitNOFILE=infinity

LimitNPROC=infinity

LimitCORE=infinity

TasksMax=infinity

Delegate=yes

KillMode=process

[Install]

WantedBy=multi-user.target

# cri-docker.socket

[root@anyu967node1 Package]# cat /usr/lib/systemd/system/cri-docker.socket

[Unit]

Description=CRI Docker Socket for the API

PartOf=cri-docker.service

[Socket]

ListenStream=%t/cri-dockerd.sock

SocketMode=0660

SocketUser=root

SocketGroup=docker

[Install]

WantedBy=sockets.target

# The CRI socket is given as a URL, so the path carries the unix:// scheme

[root@anyu967node1 Package]# kubeadm init --image-repository registry.aliyuncs.com/google_containers --kubernetes-version=v1.24.1 --pod-network-cidr=10.244.0.0/16 --cri-socket unix:///var/run/cri-dockerd.sock
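Because the value passed to `--cri-socket` depends on which runtime is installed, a small check can make the choice explicit. This is a sketch under assumptions: `detect_cri_socket` is a hypothetical helper, the containerd and cri-dockerd paths are the ones used in this post, the CRI-O path is the commonly documented default and does not appear above, and the optional root prefix argument exists only so the function can be tried against a scratch directory.

```shell
# Hypothetical helper: print the first CRI endpoint whose socket path exists.
# Usage: detect_cri_socket [root-prefix]
detect_cri_socket() {
  prefix="${1:-}"
  for sock in \
    /run/containerd/containerd.sock \
    /var/run/cri-dockerd.sock \
    /var/run/crio/crio.sock
  do
    # -e only checks that the path exists; it does not verify that a
    # runtime is actually listening on it.
    if [ -e "${prefix}${sock}" ]; then
      echo "unix://${sock}"
      return 0
    fi
  done
  echo "no known CRI socket found" >&2
  return 1
}
```

One possible use is `kubeadm init --cri-socket "$(detect_cri_socket)" ...`. If more than one runtime is installed, the first match in the list wins, so the order may need adjusting.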

3. Install the k8s cluster

3.1. Install the master node

# master

[root@master1 ~]# yum install -y kubelet-1.26.0 kubeadm-1.26.0 kubectl-1.26.0

[root@master1 ~]# systemctl enable kubelet

# node

[root@node1 ~]# yum install -y kubelet-1.26.0 kubeadm-1.26.0 kubectl-1.26.0

[root@node1 ~]# systemctl enable kubelet

[root@node2 ~]# yum install -y kubelet-1.26.0 kubeadm-1.26.0 kubectl-1.26.0

[root@node2 ~]# systemctl enable kubelet

[root@master1 ~]# kubeadm config print init-defaults > kubeadm.yaml

# Edit kubeadm.yaml and set the following fields:

# advertiseAddress: 192.168.56.13

# criSocket: unix:///run/containerd/containerd.sock

# imagePullPolicy: IfNotPresent

# name: master1

# imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers (replaces the default registry.k8s.io)

# serviceSubnet: 10.96.0.0/12

# podSubnet: 10.244.0.0/16 (added under networking, next to serviceSubnet)

---

apiVersion: kubeproxy.config.k8s.io/v1alpha1

kind: KubeProxyConfiguration

mode: ipvs

---

apiVersion: kubelet.config.k8s.io/v1beta1

kind: KubeletConfiguration

cgroupDriver: systemd
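The edits to kubeadm.yaml listed above can be scripted instead of made by hand. The sketch below is an assumption-laden illustration: `customize_kubeadm_config` is a hypothetical name, it relies on GNU sed (for `-i` and `\n` in the replacement), it assumes each key appears once in the generated file, and it is not idempotent (a second run would add `podSubnet` and the two extra documents again).

```shell
# Hypothetical helper: apply this post's settings to a generated kubeadm.yaml.
# Usage: customize_kubeadm_config kubeadm.yaml
customize_kubeadm_config() {
  cfg="$1"

  # Rewrite values by key so the default values do not need to be known.
  # The last expression appends podSubnet right after serviceSubnet,
  # reusing the same indentation (captured in \1).
  sed -i \
    -e 's#^\( *advertiseAddress:\).*#\1 192.168.56.13#' \
    -e 's#^\( *criSocket:\).*#\1 unix:///run/containerd/containerd.sock#' \
    -e 's#^\( *name:\).*#\1 master1#' \
    -e 's#^\( *imageRepository:\).*#\1 registry.cn-hangzhou.aliyuncs.com/google_containers#' \
    -e 's#^\( *\)serviceSubnet:.*#&\n\1podSubnet: 10.244.0.0/16#' \
    "$cfg"

  # Append the kube-proxy (ipvs) and kubelet (systemd cgroup driver) documents.
  cat >> "$cfg" <<'EOF'
---
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
mode: ipvs
---
apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
cgroupDriver: systemd
EOF
}
```

It is worth reviewing the resulting file before passing it to `kubeadm init --config`.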

[root@master1 ~]# kubeadm init --config=kubeadm.yaml --ignore-preflight-errors=SystemVerification

# Package the images for offline installation

[root@master1 ~]# crictl images

[root@master1 ~]# ctr -n=k8s.io images ls

[root@master1 ~]# ctr -n=k8s.io images export k8s_1.26.0.tar.gz images:tags

[root@master1 ~]# ctr -n=k8s.io images import k8s_1.26.0.tar.gz
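The `images:tags` placeholder in the export command above stands for the image references to pack. As a sketch, the references can be taken from `ctr images ls -q` so that every image in the `k8s.io` namespace ends up in one archive. The function name `export_k8s_images` is hypothetical, and the default output file name is simply the one used above.

```shell
# Hypothetical helper: export all images from the k8s.io namespace.
# Usage: export_k8s_images [output-archive]
export_k8s_images() {
  out="${1:-k8s_1.26.0.tar.gz}"

  # `images ls -q` prints only the image references, one per line.
  refs="$(ctr -n=k8s.io images ls -q)" || return 1
  if [ -z "$refs" ]; then
    echo "no images found in namespace k8s.io" >&2
    return 1
  fi

  # Word splitting on $refs is intentional: each reference becomes
  # one argument to `images export`.
  # shellcheck disable=SC2086
  ctr -n=k8s.io images export "$out" $refs
}
```

On the target machine the archive would then be loaded with `ctr -n=k8s.io images import`.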

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join xxx.xxx.xxx.xxx:6443 --token abcdef.xxxxxx --discovery-token-ca-cert-hash sha256:xxxxxx

3.2. Install the worker nodes

[root@master1 ~]# kubeadm token create --print-join-command
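The join command printed above has to be run as root on every worker. A minimal sketch of doing that from the master follows. It assumes passwordless SSH as root to the workers, which this post does not set up, and `join_workers` is a hypothetical helper name.

```shell
# Hypothetical helper: generate a join command once and run it on each worker.
# Usage: join_workers node1 node2
join_workers() {
  # Creates a fresh bootstrap token and prints the full `kubeadm join ...` line.
  join_cmd="$(kubeadm token create --print-join-command)" || return 1

  for node in "$@"; do
    echo "joining ${node}" >&2
    # Assumption: root SSH access to the worker is already configured.
    ssh "root@${node}" "$join_cmd" || return 1
  done
}
```

Afterwards, `kubectl get nodes` on the master should list the new workers, which typically stay NotReady until a network plugin is installed.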

[root@master1 ~]# kubectl label nodes node1 node-role.kubernetes.io/work=work

# Install the Calico network plugin

[root@master1 ~]# ctr -n=k8s.io images import calico.tar.gz

[root@master1 ~]# kubectl apply -f calico.yaml

This article is from cnblogs (博客园). Author: anyu967. When reposting, please cite the original link: https://www.cnblogs.com/anyu967/articles/17335129.html
