Tensorflow2(预课程)---7.2、cifar10分类-层方式-卷积神经网络

一、总结

一句话总结:

卷积层构建非常简单,就是CBAPD,注意卷积层接全连接层的时候注意flatten打平

# 构建容器

model = tf.keras.Sequential()

# 卷积层

model.add(tf.keras.layers.Conv2D(filters=6, kernel_size=(3, 3), padding='same',input_shape=(32,32,3))) # 卷积层

model.add(tf.keras.layers.BatchNormalization()) # BN层

model.add(tf.keras.layers.Activation('relu')) # 激活层

model.add(tf.keras.layers.MaxPool2D(pool_size=(2, 2), strides=2, padding='same')) # 池化层

model.add(tf.keras.layers.Dropout(0.5)) # dropout层

# 全连接层

model.add(tf.keras.layers.Flatten())

model.add(tf.keras.layers.Dense(512,activation='relu'))

model.add(tf.keras.layers.Dense(256,activation='relu'))

model.add(tf.keras.layers.Dense(128,activation='relu'))

# 输出层

model.add(tf.keras.layers.Dense(10,activation='softmax'))

# 模型的结构

model.summary()

二、cifar10分类-层方式-卷积神经网络(65)(dropuot=0.5)

博客对应课程的视频位置:

步骤

1、读取数据集

2、拆分数据集(拆分成训练数据集和测试数据集)

3、构建模型

4、训练模型

5、检验模型

需求

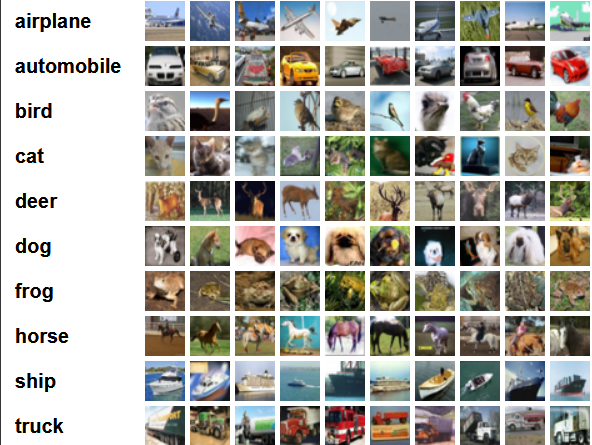

cifar10(物品分类)

该数据集共有60000张彩色图像,这些图像是32*32,分为10个类,每类6000张图。这里面有50000张用于训练,构成了5个训练批,每一批10000张图;另外10000用于测试,单独构成一批。测试批的数据里,取自10类中的每一类,每一类随机取1000张。抽剩下的就随机排列组成了训练批。注意一个训练批中的各类图像并不一定数量相同,总的来看训练批,每一类都有5000张图。

直接从tensorflow的dataset来读取数据集即可

(50000, 32, 32, 3) (50000, 1)

[[3]

[8]

[8]

...

[5]

[1]

[7]]

Model: "sequential"

_________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

conv2d (Conv2D) (None, 32, 32, 6) 168

_________________________________________________________________

batch_normalization (BatchNo (None, 32, 32, 6) 24

_________________________________________________________________

activation (Activation) (None, 32, 32, 6) 0

_________________________________________________________________

max_pooling2d (MaxPooling2D) (None, 16, 16, 6) 0

_________________________________________________________________

dropout (Dropout) (None, 16, 16, 6) 0

_________________________________________________________________

flatten (Flatten) (None, 1536) 0

_________________________________________________________________

dense (Dense) (None, 512) 786944

_________________________________________________________________

dense_1 (Dense) (None, 256) 131328

_________________________________________________________________

dense_2 (Dense) (None, 128) 32896

_________________________________________________________________

dense_3 (Dense) (None, 10) 1290

=================================================================

Total params: 952,650

Trainable params: 952,638

Non-trainable params: 12

_________________________________________________________________

Epoch 1/50

1563/1563 [==============================] - 7s 4ms/step - loss: 1.6209 - acc: 0.4125 - val_loss: 1.3494 - val_acc: 0.5211

Epoch 2/50

1563/1563 [==============================] - 7s 4ms/step - loss: 1.3799 - acc: 0.5090 - val_loss: 1.2441 - val_acc: 0.5570

Epoch 3/50

1563/1563 [==============================] - 7s 4ms/step - loss: 1.2797 - acc: 0.5454 - val_loss: 1.2381 - val_acc: 0.5648

Epoch 4/50

1563/1563 [==============================] - 7s 4ms/step - loss: 1.2087 - acc: 0.5712 - val_loss: 1.1817 - val_acc: 0.5834

Epoch 5/50

1563/1563 [==============================] - 7s 4ms/step - loss: 1.1527 - acc: 0.5936 - val_loss: 1.1657 - val_acc: 0.5954

Epoch 6/50

1563/1563 [==============================] - 7s 4ms/step - loss: 1.1070 - acc: 0.6111 - val_loss: 1.1013 - val_acc: 0.6192

Epoch 7/50

1563/1563 [==============================] - 7s 4ms/step - loss: 1.0516 - acc: 0.6297 - val_loss: 1.1230 - val_acc: 0.6097

Epoch 8/50

1563/1563 [==============================] - 7s 4ms/step - loss: 1.0123 - acc: 0.6422 - val_loss: 1.1698 - val_acc: 0.5970

Epoch 9/50

1563/1563 [==============================] - 7s 4ms/step - loss: 0.9770 - acc: 0.6541 - val_loss: 1.0683 - val_acc: 0.6303

Epoch 10/50

1563/1563 [==============================] - 7s 4ms/step - loss: 0.9436 - acc: 0.6680 - val_loss: 1.1084 - val_acc: 0.6166

Epoch 11/50

1563/1563 [==============================] - 7s 4ms/step - loss: 0.9142 - acc: 0.6798 - val_loss: 1.0627 - val_acc: 0.6303

Epoch 12/50

1563/1563 [==============================] - 7s 4ms/step - loss: 0.8823 - acc: 0.6897 - val_loss: 1.0670 - val_acc: 0.6315

Epoch 13/50

1563/1563 [==============================] - 7s 4ms/step - loss: 0.8527 - acc: 0.6975 - val_loss: 1.1186 - val_acc: 0.6186

Epoch 14/50

1563/1563 [==============================] - 7s 5ms/step - loss: 0.8319 - acc: 0.7058 - val_loss: 1.0376 - val_acc: 0.6405

Epoch 15/50

1563/1563 [==============================] - 7s 5ms/step - loss: 0.8099 - acc: 0.7131 - val_loss: 1.0556 - val_acc: 0.6372

Epoch 16/50

1563/1563 [==============================] - 7s 4ms/step - loss: 0.7912 - acc: 0.7213 - val_loss: 1.1238 - val_acc: 0.6216

Epoch 17/50

1563/1563 [==============================] - 7s 5ms/step - loss: 0.7657 - acc: 0.7313 - val_loss: 1.0372 - val_acc: 0.6452

Epoch 18/50

1563/1563 [==============================] - 7s 4ms/step - loss: 0.7461 - acc: 0.7388 - val_loss: 1.0493 - val_acc: 0.6516

Epoch 19/50

1563/1563 [==============================] - 7s 4ms/step - loss: 0.7268 - acc: 0.7445 - val_loss: 1.0913 - val_acc: 0.6377

Epoch 20/50

1563/1563 [==============================] - 7s 4ms/step - loss: 0.7088 - acc: 0.7484 - val_loss: 1.0899 - val_acc: 0.6356

Epoch 21/50

1563/1563 [==============================] - 7s 5ms/step - loss: 0.6907 - acc: 0.7579 - val_loss: 1.2129 - val_acc: 0.6060

Epoch 22/50

1563/1563 [==============================] - 7s 4ms/step - loss: 0.6715 - acc: 0.7648 - val_loss: 1.1440 - val_acc: 0.6253

Epoch 23/50

1563/1563 [==============================] - 7s 4ms/step - loss: 0.6609 - acc: 0.7677 - val_loss: 1.0904 - val_acc: 0.6430

Epoch 24/50

1563/1563 [==============================] - 7s 5ms/step - loss: 0.6349 - acc: 0.7769 - val_loss: 1.1141 - val_acc: 0.6409

Epoch 25/50

1563/1563 [==============================] - 7s 4ms/step - loss: 0.6267 - acc: 0.7805 - val_loss: 1.1753 - val_acc: 0.6198

Epoch 26/50

1563/1563 [==============================] - 7s 4ms/step - loss: 0.6104 - acc: 0.7839 - val_loss: 1.1174 - val_acc: 0.6442

Epoch 27/50

1563/1563 [==============================] - 7s 5ms/step - loss: 0.6064 - acc: 0.7878 - val_loss: 1.1045 - val_acc: 0.6407

Epoch 28/50

1563/1563 [==============================] - 7s 4ms/step - loss: 0.5871 - acc: 0.7925 - val_loss: 1.1561 - val_acc: 0.6374

Epoch 29/50

1563/1563 [==============================] - 7s 4ms/step - loss: 0.5778 - acc: 0.7961 - val_loss: 1.1731 - val_acc: 0.6310

Epoch 30/50

1563/1563 [==============================] - 7s 5ms/step - loss: 0.5635 - acc: 0.8009 - val_loss: 1.1782 - val_acc: 0.6277

Epoch 31/50

1563/1563 [==============================] - 7s 4ms/step - loss: 0.5512 - acc: 0.8078 - val_loss: 1.1345 - val_acc: 0.6416

Epoch 32/50

1563/1563 [==============================] - 7s 4ms/step - loss: 0.5442 - acc: 0.8089 - val_loss: 1.1450 - val_acc: 0.6440

Epoch 33/50

1563/1563 [==============================] - 7s 4ms/step - loss: 0.5341 - acc: 0.8142 - val_loss: 1.1412 - val_acc: 0.6436

Epoch 34/50

1563/1563 [==============================] - 7s 5ms/step - loss: 0.5210 - acc: 0.8182 - val_loss: 1.3549 - val_acc: 0.6052

Epoch 35/50

1563/1563 [==============================] - 7s 5ms/step - loss: 0.5159 - acc: 0.8193 - val_loss: 1.1278 - val_acc: 0.6539

Epoch 36/50

1563/1563 [==============================] - 7s 5ms/step - loss: 0.5070 - acc: 0.8217 - val_loss: 1.1707 - val_acc: 0.6441

Epoch 37/50

1563/1563 [==============================] - 8s 5ms/step - loss: 0.4952 - acc: 0.8256 - val_loss: 1.2057 - val_acc: 0.6345

Epoch 38/50

1563/1563 [==============================] - 9s 6ms/step - loss: 0.4828 - acc: 0.8305 - val_loss: 1.1531 - val_acc: 0.6506

Epoch 39/50

1563/1563 [==============================] - 8s 5ms/step - loss: 0.4757 - acc: 0.8326 - val_loss: 1.1730 - val_acc: 0.6413

Epoch 40/50

1563/1563 [==============================] - 8s 5ms/step - loss: 0.4709 - acc: 0.8364 - val_loss: 1.2210 - val_acc: 0.6418

Epoch 41/50

1563/1563 [==============================] - 8s 5ms/step - loss: 0.4600 - acc: 0.8392 - val_loss: 1.2046 - val_acc: 0.6400

Epoch 42/50

1563/1563 [==============================] - 8s 5ms/step - loss: 0.4563 - acc: 0.8418 - val_loss: 1.3474 - val_acc: 0.6066

Epoch 43/50

1563/1563 [==============================] - 8s 5ms/step - loss: 0.4467 - acc: 0.8451 - val_loss: 1.3222 - val_acc: 0.6239

Epoch 44/50

1563/1563 [==============================] - 8s 5ms/step - loss: 0.4381 - acc: 0.8478 - val_loss: 1.4079 - val_acc: 0.6078

Epoch 45/50

1563/1563 [==============================] - 8s 5ms/step - loss: 0.4295 - acc: 0.8510 - val_loss: 1.1937 - val_acc: 0.6558

Epoch 46/50

1563/1563 [==============================] - 8s 5ms/step - loss: 0.4242 - acc: 0.8518 - val_loss: 1.2182 - val_acc: 0.6504

Epoch 47/50

1563/1563 [==============================] - 8s 5ms/step - loss: 0.4172 - acc: 0.8550 - val_loss: 1.2157 - val_acc: 0.6465

Epoch 48/50

1563/1563 [==============================] - 8s 5ms/step - loss: 0.4085 - acc: 0.8585 - val_loss: 1.2362 - val_acc: 0.6506

Epoch 49/50

1563/1563 [==============================] - 8s 5ms/step - loss: 0.4136 - acc: 0.8562 - val_loss: 1.2187 - val_acc: 0.6505

Epoch 50/50

1563/1563 [==============================] - 8s 5ms/step - loss: 0.4109 - acc: 0.8570 - val_loss: 1.1927 - val_acc: 0.6530

[[4.5043899e-04 1.1276284e-02 7.4143500e-05 ... 1.0536104e-03

1.8133764e-03 6.7020673e-04]

[4.5525166e-03 5.5896789e-02 1.5721616e-05 ... 6.7627825e-06

9.3855476e-01 8.3672808e-04]

[6.8573037e-04 2.2152183e-04 8.1032022e-06 ... 2.9030971e-05

9.9802029e-01 8.5997221e-04]

...

[9.7019289e-04 2.5262563e-05 1.4840290e-01 ... 1.3067307e-01

2.1098384e-03 5.0971273e-04]

[5.0079293e-04 9.8580730e-01 2.0990559e-04 ... 1.4797639e-03

1.3322505e-03 1.7820521e-03]

[1.2427025e-06 1.1827400e-06 1.1778239e-05 ... 9.7277403e-01

4.7926852e-07 5.0128792e-06]]

tf.Tensor(

[[0. 0. 0. ... 0. 0. 0.]

[0. 0. 0. ... 0. 1. 0.]

[0. 0. 0. ... 0. 1. 0.]

...

[0. 0. 0. ... 0. 0. 0.]

[0. 1. 0. ... 0. 0. 0.]

[0. 0. 0. ... 1. 0. 0.]], shape=(10000, 10), dtype=float32)

tf.Tensor([3 8 8 ... 3 1 7], shape=(10000,), dtype=int64)

tf.Tensor([3 8 8 ... 5 1 7], shape=(10000,), dtype=int64)

浙公网安备 33010602011771号

浙公网安备 33010602011771号