python超简单实用爬虫操作---3、获取各种请求数据

python超简单实用爬虫操作---3、获取各种请求数据

一、总结

一句话总结:

requests库可以非常方便的获取各种请求的数据,比如get请求、post请求、delete请求等等,使用方法直接requests对象调对应方法即可

import requests response = requests.get('http://httpbin.org/get') response = requests.post('http://httpbin.org/post', data = {'key':'value'}) response = requests.put('http://httpbin.org/put', data = {'key':'value'}) response = requests.delete('http://httpbin.org/delete') response = requests.head('http://httpbin.org/get') response = requests.options('http://httpbin.org/get')

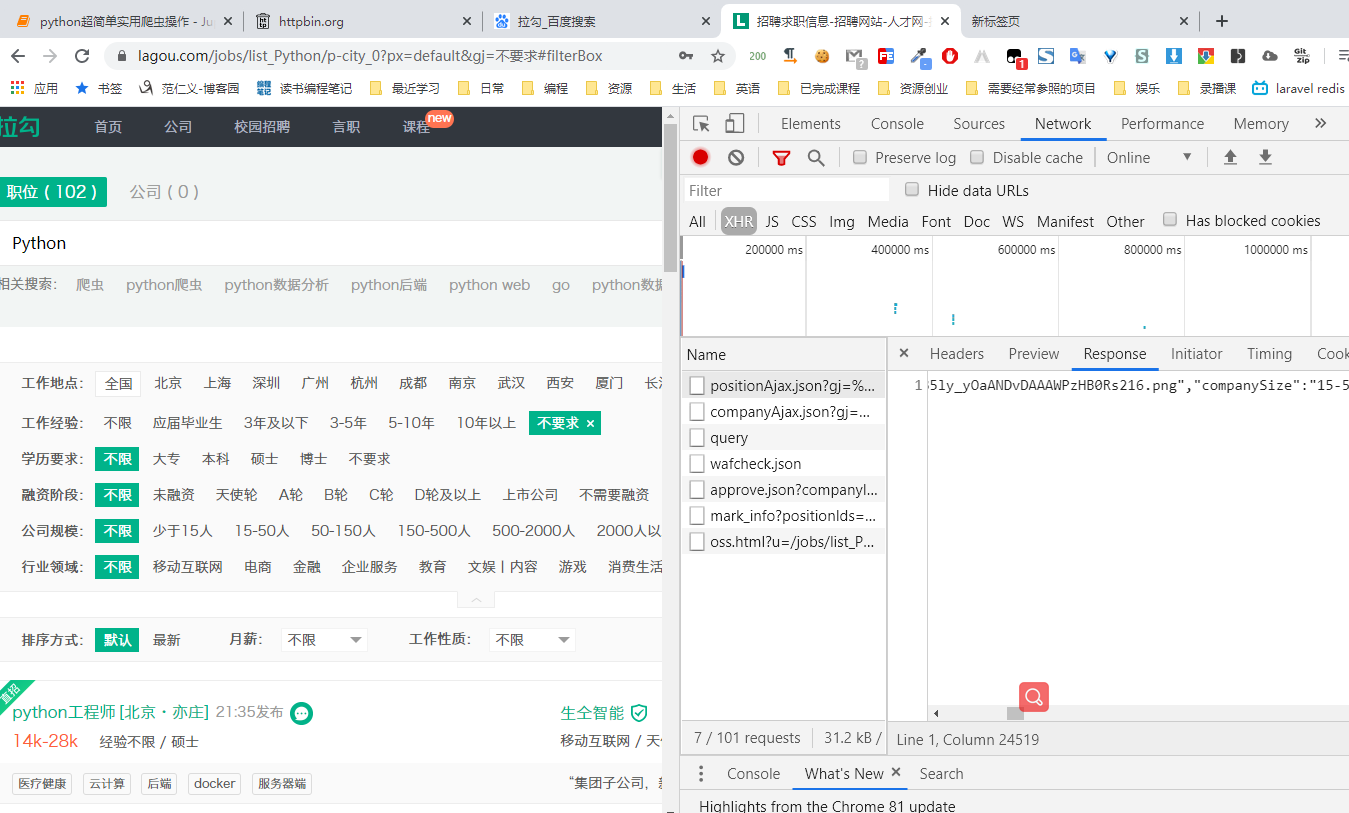

1、requests库爬拉勾网post请求数据的注意点?

1、使用requests的session对象,可以保证请求的cookie等参数的一致

2、在header参数中要设置user-agent(可以将爬虫模拟成浏览器操作)和referer(请求来源网站)

# 爬取拉勾网的post请求数据 url = "https://www.lagou.com/jobs/positionAjax.json?gj=%E4%B8%8D%E8%A6%81%E6%B1%82&px=default&needAddtionalResult=false" headers = { "user-agent":"Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/81.0.4044.138 Safari/537.36", "referer":"https://www.lagou.com/jobs/list_Python/p-city_0?px=default&gj=%E4%B8%8D%E8%A6%81%E6%B1%82" } # 用session对象,可以保持cookie等参数的一致 session = requests.Session() response = session.get("https://www.lagou.com/jobs/list_Python/p-city_0?px=default&gj=%E4%B8%8D%E8%A6%81%E6%B1%82#filterBox",headers=headers) print(response.cookies) response = session.post(url,cookies=response.cookies,headers=headers) print(response.text)

二、获取各种请求数据

博客对应课程的视频位置:3、获取各种请求数据-范仁义-读书编程笔记

https://www.fanrenyi.com/video/35/319

一、爬虫介绍

爬虫介绍

爬虫就是自动获取网页内容的程序

google、百度等搜索引擎本质就是爬虫

虽然大部分编程语言几乎都能做爬虫,但是做爬虫最火的编程语言还是python

python中爬取网页的库有python自带的urllib,还有第三方库requests等,

requests这个库是基于urllib,功能都差不多,但是操作啥的要简单方便特别多

Requests is an elegant and simple HTTP library for Python, built for human beings.

爬虫实例

比如我的网站 https://fanrenyi.com

比如电影天堂 等等

课程介绍

讲解python爬虫操作中特别常用的操作,比如爬取网页、post方式爬取网页、模拟登录爬取网页等等

二、爬虫基本操作

# 安装requests库

# pip3 install requests

# 引入requests库

# import requests

In [3]:

import requests

# 爬取博客园博客数据

response = requests.get("https://www.cnblogs.com/Renyi-Fan/p/13264726.html")

print(response.status_code)

# print(response.text)

In [7]:

import requests

# 爬取知乎数据

headers = {

"user-agent":"Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/81.0.4044.138 Safari/537.36"

}

response = requests.get("https://www.zhihu.com/",headers=headers)

print(response.status_code)

#print(response.text)

In [8]:

import requests

response = requests.get("http://httpbin.org/get")

print(response.text)

# "User-Agent": "python-requests/2.22.0",

三、获取各种请求的数据

import requests response = requests.get('http://httpbin.org/get') response = requests.post('http://httpbin.org/post', data = {'key':'value'}) response = requests.put('http://httpbin.org/put', data = {'key':'value'}) response = requests.delete('http://httpbin.org/delete') response = requests.head('http://httpbin.org/get') response = requests.options('http://httpbin.org/get')

import requests response = requests.post('http://httpbin.org/post', data = {'key':'value'}) print(response.text)

结果

{

"args": {},

"data": "",

"files": {},

"form": {

"key": "value"

},

"headers": {

"Accept": "*/*",

"Accept-Encoding": "gzip, deflate",

"Content-Length": "9",

"Content-Type": "application/x-www-form-urlencoded",

"Host": "httpbin.org",

"User-Agent": "python-requests/2.22.0",

"X-Amzn-Trace-Id": "Root=1-5f05ee86-26bd986d7947684573f04544"

},

"json": null,

"origin": "119.86.155.123",

"url": "http://httpbin.org/post"

}

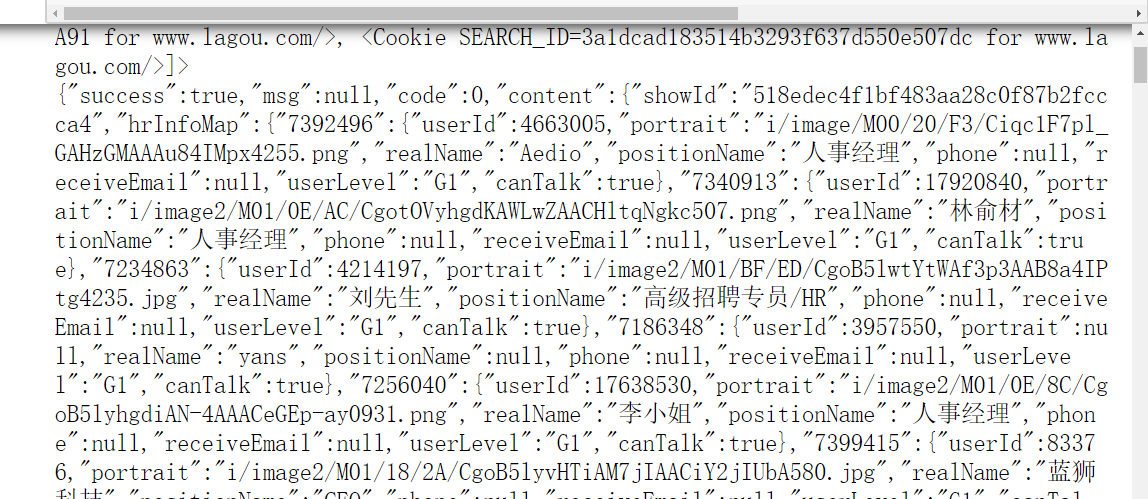

# 爬取拉勾网的post请求数据 url = "https://www.lagou.com/jobs/positionAjax.json?gj=%E4%B8%8D%E8%A6%81%E6%B1%82&px=default&needAddtionalResult=false" headers = { "user-agent":"Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/81.0.4044.138 Safari/537.36", "referer":"https://www.lagou.com/jobs/list_Python/p-city_0?px=default&gj=%E4%B8%8D%E8%A6%81%E6%B1%82" } # 用session对象,可以保持cookie等参数的一致 session = requests.Session() response = session.get("https://www.lagou.com/jobs/list_Python/p-city_0?px=default&gj=%E4%B8%8D%E8%A6%81%E6%B1%82#filterBox",headers=headers) print(response.cookies) response = session.post(url,cookies=response.cookies,headers=headers) print(response.text)

浙公网安备 33010602011771号

浙公网安备 33010602011771号