2023数据采集与融合技术实践作业一

作业①

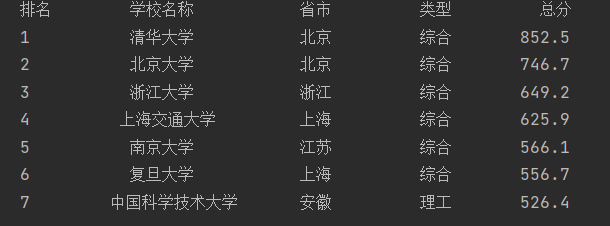

- 要求:用requests和BeautifulSoup库方法定向爬取给定网址(http://www.shanghairanking.cn/rankings/bcur/2020 )的数据,屏幕打印爬取的大学排名信息。

- 输出信息

| 排名 | 学校名称 | 省市 | 学校类型 | 总分 |

|---|---|---|---|---|

| 1 | 清华大学 | 北京 | 综合 | 852.5 |

| 2...... |

(1)代码

import urllib.request

from bs4 import BeautifulSoup

# 定义函数,获取指定url的HTML内容

def getHtml(url):

req = urllib.request.Request(url)

html = urllib.request.urlopen(req).read().decode()

return html

# 定义函数,对HTML内容进行解析,提取学校排名信息

def parseRankings(html):

soup = BeautifulSoup(html, "html.parser")

# 储存学校信息的列表

schools = []

for tr in soup.find('tbody').children:

r = tr.find_all("td")

temp = []

for i in range(5):

# 第二列是学校名称,所以特殊处理

if i == 1:

n = r[i].find("a").text.strip()

temp.append(n)

else:

temp.append(r[i].text.strip())

schools.append(temp)

return schools

# 定义函数,打印学校排名信息

def printSchoolList(schools, num):

tplt = "{0:^10}\t{1:^10}\t{2:^10}\t{3:^10}\t{4:^10}"

print(tplt.format("排名", "学校名称", "省市", "类型", "总分"))

# 打印指定数量的学校信息

for i in range(num):

school = schools[i]

print(tplt.format(school[0], school[1], school[2], school[3], school[4]))

# 主程序入口

if __name__ == '__main__':

url = "http://www.shanghairanking.cn/rankings/bcur/2020"

html = getHtml(url)

schools = parseRankings(html)

printSchoolList(schools, 7)

- 运行结果

(2)心得体会

该实验总体上不算很有难度,是通过F12查看需要爬取的元素信息,后较为中规中矩。反而在输出格式上花费了比较久的时间,因为学校名称长度差别较大,一开始的格式就较为长短不一,后对其进行居中处理,输出格式较为美观。通过本次实验,对request和bs4有了一定了解,自我有一定提升。

作业②

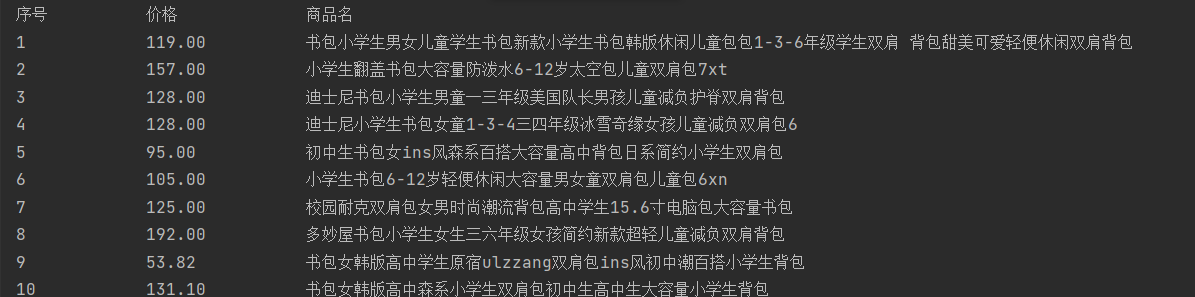

- 要求:用requests和re库方法设计某个商城(自已选择)商品比价定向爬虫,爬取该商城,以关键词“书包”搜索页面的数据,爬取商品名称和价格。

- 输出信息

| 序号 | 价格 | 商品名 |

|---|---|---|

| 1 | 65.00 | xxx |

| 2…… |

(1)代码:

import urllib.request

from bs4 import BeautifulSoup

import re

BASE_URL = "http://search.dangdang.com/?key=%E4%B9%A6%E5%8C%85&act=input"

def get_html(url):

#获取指定url的HTML内容

head = {

"User-Agent": "Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.25 Safari/537.36 Core/1.70.3775.400 QQBrowser/10.6.4209.400"

}

request = urllib.request.Request(url, headers=head)

try:

response = urllib.request.urlopen(request)

return response.read().decode('gbk')

except urllib.error.URLError as e:

if hasattr(e, "code"):

print(e.code)

if hasattr(e, "reason"):

print(e.reason)

return None

def get_data_from_html(html):

#从HTML内容中提取商品名称和价格

soup = BeautifulSoup(html, "html.parser")

names = []

prices = []

for items in soup.find_all('p', attrs={"class": "name", "name": "title"}):

for name in items.find_all('a'):

title = name['title']

names.append(title)

for item in soup.find_all('span', attrs={"class": "price_n"}):

price = item.string

prices.append(price)

return names, prices

def print_goods_info(names, prices):

#打印商品名称和价格信息

print("序号\t\t\t", "价格\t\t\t", "商品名\t\t")

for i, (name, price) in enumerate(zip(names, prices)):

# 去除多余的空白字符

name = re.sub(r'\s+', ' ', name)

# 提取数字和小数点

price = re.findall(r'[\d.]+', price)

if price:

print(f"{i + 1}\t\t\t {price[0]}\t\t\t{name}")

def main():

names = []

prices = []

for i in range(1, 3): # 爬取1-2页的内容

url = BASE_URL + "&page_index=" + str(i)

html = get_html(url)

if html:

page_names, page_prices = get_data_from_html(html)

names.extend(page_names)

prices.extend(page_prices)

print_goods_info(names, prices)

if __name__ == "__main__":

main()

- 运行结果

(2)心得体会

本实验花费时间会相对多一些,一开始选定的是淘宝,但淘宝反爬机制较强,那时也没有想到转化为静态页面。故最后选择了当当网进行爬取。通过该实验,对F12的使用有了进一步的了解,通过F12来找到所需的元素。因为涉及一定翻页,故对其也进行了观察,发现了变化的元素,从而对代码进行修改。

作业③

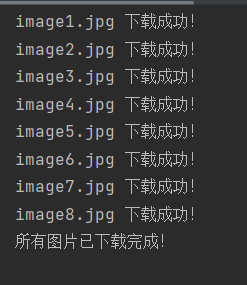

- 要求:爬取一个给定网页( https://xcb.fzu.edu.cn/info/1071/4481.htm)或者自选网页的所有JPEG和JPG格式文件

- 输出信息:将自选网页内的所有JPEG和JPG文件保存在一个文件夹中

(1)代码:

import requests

import os

import re

def download_images():

# 创建存储图片的文件夹

image_dir = 'images'

if not os.path.exists(image_dir):

os.mkdir(image_dir)

url = 'https://xcb.fzu.edu.cn/info/1071/4481.htm'

headers = {

"User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/117.0.0.0 Safari/537.36"

}

response = requests.get(url=url, headers=headers).text

# 使用正则表达式匹配图片链接

regex = r'src="(.*?\.(?:jpg|jpeg))"'

img_urls = re.findall(regex, response)

# 下载图片并保存到文件夹中

for index, img_url in enumerate(img_urls):

img_url_ = 'https://xcb.fzu.edu.cn{}'.format(img_url)

filename = f'image{index+1}.jpg' # 图片文件名

img_path = os.path.join(image_dir, filename) # 图片保存路径

response = requests.get(url=img_url_, headers=headers)

with open(img_path, 'wb') as f:

f.write(response.content)

print(f"{filename} 下载成功!")

print("所有图片已下载完成!")

if __name__ == "__main__":

download_images()

- 运行结果

(2)心得体会

该实验和前面的较为类似啦,即使用F12对所需元素进行查看,后使用正则表达式进行提取,本题将jpeg和jpg全都保存为jpg啦,处理较为简单,没有过多考虑如果是png等其他形态如何处理。

浙公网安备 33010602011771号

浙公网安备 33010602011771号