MapReduce Programming: Average Score

Problem description

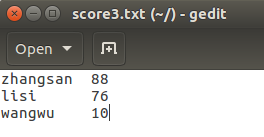

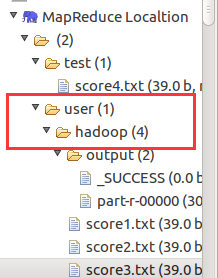

There are three files, each holding the students' scores for one subject. The task is to compute each student's average score.

Approach

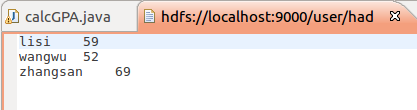

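The map function emits the student's name as the key and the score as the value. All values that share a key arrive at the same reduce call, so reduce can add up a student's three scores and divide by their count to get the average.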
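The idea can be tried without a cluster. Below is a minimal plain-Java sketch of the same map, group, and reduce steps; it is not Hadoop code. The class name `AverageSketch`, its method names, and the sample lines are made up for illustration, and the "name score" line layout is assumed from how the mapper tokenizes its input.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

public class AverageSketch {

    // "Map" step: split one "name score" line into a (name, score) pair and
    // append the score to that name's group (standing in for the shuffle).
    public static void mapLine(String line, Map<String, List<Integer>> groups) {
        String[] parts = line.trim().split("\\s+");
        if (parts.length < 2) {
            return; // ignore blank or incomplete lines
        }
        groups.computeIfAbsent(parts[0], k -> new ArrayList<>())
              .add(Integer.parseInt(parts[1]));
    }

    // "Reduce" step: sum one student's scores and divide by how many there are.
    public static int reduce(List<Integer> scores) {
        int sum = 0;
        for (int s : scores) {
            sum += s;
        }
        return sum / scores.size(); // integer division, like an int result
    }

    // Runs map over every line, then reduce over every group.
    public static Map<String, Integer> averages(List<String> lines) {
        Map<String, List<Integer>> groups = new LinkedHashMap<>();
        for (String line : lines) {
            mapLine(line, groups);
        }
        Map<String, Integer> result = new LinkedHashMap<>();
        for (Map.Entry<String, List<Integer>> e : groups.entrySet()) {
            result.put(e.getKey(), reduce(e.getValue()));
        }
        return result;
    }

    public static void main(String[] args) {
        // Made-up lines standing in for the contents of the three score files.
        List<String> lines = Arrays.asList(
                "alice 90", "bob 70",
                "alice 80", "bob 75",
                "alice 85", "bob 80");
        System.out.println(averages(lines));
    }
}
```

Grouping by name before averaging is the part Hadoop does for you between map and reduce; the sketch does it with a map of lists.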

Code

package org.apache.hadoop.examples;

import java.io.IOException;
import java.util.StringTokenizer;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class calcGPA {

    public static void main(String[] args) throws Exception {
        Configuration conf = new Configuration();
        // Input and output paths are hard-coded here instead of being read from args.
        String fileAddress = "hdfs://localhost:9000/user/hadoop/";
        //String[] otherArgs = (new GenericOptionsParser(conf, args)).getRemainingArgs();
        String[] otherArgs = new String[]{fileAddress + "score1.txt", fileAddress + "score2.txt", fileAddress + "score3.txt", fileAddress + "output"};
        if (otherArgs.length < 2) {
            System.err.println("Usage: calcGPA <in> [<in>...] <out>");
            System.exit(2);
        }

        Job job = Job.getInstance(conf, "calc GPA");
        job.setJarByClass(calcGPA.class);
        job.setMapperClass(calcGPA.TokenizerMapper.class);
        // No combiner is set: IntSumReducer emits an average, and an average of
        // partial averages is not the overall average in general.
        job.setReducerClass(calcGPA.IntSumReducer.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);

        // Every argument except the last is an input file; the last is the output directory.
        for (int i = 0; i < otherArgs.length - 1; ++i) {
            FileInputFormat.addInputPath(job, new Path(otherArgs[i]));
        }
        FileOutputFormat.setOutputPath(job, new Path(otherArgs[otherArgs.length - 1]));
        System.exit(job.waitForCompletion(true) ? 0 : 1);
    }

    // Receives all scores of one student and writes their average.
    public static class IntSumReducer extends Reducer<Text, IntWritable, Text, IntWritable> {
        @Override
        public void reduce(Text key, Iterable<IntWritable> values, Context context) throws IOException, InterruptedException {
            int sum = 0;
            int count = 0;
            for (IntWritable val : values) {
                sum += val.get();
                count++;
            }
            // Integer division: the fractional part of the average is dropped.
            int average = sum / count;
            context.write(key, new IntWritable(average));
        }
    }

    // Parses whitespace-separated "name score" lines and emits (name, score).
    public static class TokenizerMapper extends Mapper<Object, Text, Text, IntWritable> {
        @Override
        public void map(Object key, Text value, Context context) throws IOException, InterruptedException {
            StringTokenizer itr = new StringTokenizer(value.toString(), "\n");
            while (itr.hasMoreTokens()) {
                StringTokenizer iitr = new StringTokenizer(itr.nextToken());
                if (iitr.countTokens() < 2) {
                    continue; // skip blank or incomplete lines
                }
                String name = iitr.nextToken();
                String score = iitr.nextToken();
                context.write(new Text(name), new IntWritable(Integer.parseInt(score)));
            }
        }
    }
}
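One point worth checking in a job like this is whether an averaging reducer may also serve as a combiner. Hadoop can run a combiner on any subset of a key's values, so a combiner that emits averages only gives the right result when every partial group has the same size. The plain-Java sketch below shows the difference with made-up numbers, and one common alternative: carrying the sum and the count separately until the final step. `CombinerSketch` and `SumCount` are hypothetical names; in a real job the pair would need its own Writable type or a text encoding.

```java
public class CombinerSketch {

    // Integer average, matching a reducer that writes an int result.
    public static int average(int... scores) {
        int sum = 0;
        for (int s : scores) {
            sum += s;
        }
        return sum / scores.length;
    }

    // A partial result that is safe to merge: sum and count are kept apart.
    public static final class SumCount {
        final int sum;
        final int count;

        SumCount(int sum, int count) {
            this.sum = sum;
            this.count = count;
        }

        public static SumCount of(int... scores) {
            int sum = 0;
            for (int s : scores) {
                sum += s;
            }
            return new SumCount(sum, scores.length);
        }

        public SumCount merge(SumCount other) {
            return new SumCount(sum + other.sum, count + other.count);
        }

        public int average() {
            return sum / count;
        }
    }

    public static void main(String[] args) {
        // Hypothetical split: scores 60 and 70 are combined in one place, 100 in another.
        int averageOfAverages = average(average(60, 70), average(100));      // (65 + 100) / 2 = 82
        int trueAverage = average(60, 70, 100);                              // 230 / 3 = 76
        int merged = SumCount.of(60, 70).merge(SumCount.of(100)).average();  // 230 / 3 = 76
        System.out.println(averageOfAverages + " " + trueAverage + " " + merged);
    }
}
```

With three input files that each contribute exactly one score per student, the partial groups all have size one, which is why an averaging combiner can appear to work on this particular data set.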

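Question

While writing this I ran into a question: why is the default location for input and output files user/hadoop/? I looked through the configuration files and could not find this path anywhere. I would be very grateful if someone could explain.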
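A likely explanation, offered as a sketch and not a definitive answer: HDFS gives each user a home directory at /user/<username>, and a relative path is resolved against it. A job or shell command run as the user hadoop therefore finds score1.txt at /user/hadoop/score1.txt. This is a built-in default (the /user prefix corresponds to the `dfs.user.home.dir.prefix` setting in hdfs-default.xml), so it does not have to appear in the site configuration files. The class below is plain Java that imitates the rule; `HomeDirSketch`, `resolve`, and `HOME_PREFIX` are hypothetical names, not Hadoop APIs.

```java
public class HomeDirSketch {

    // Assumed default prefix under which HDFS places user home directories.
    public static final String HOME_PREFIX = "/user";

    // Imitates default resolution: absolute paths are kept as they are,
    // relative paths are placed under /user/<userName>.
    public static String resolve(String userName, String path) {
        if (path.startsWith("/")) {
            return path;
        }
        String home = HOME_PREFIX + "/" + userName;
        return path.isEmpty() ? home : home + "/" + path;
    }

    public static void main(String[] args) {
        System.out.println(resolve("hadoop", "score1.txt"));
        System.out.println(resolve("hadoop", "/tmp/score1.txt"));
    }
}
```

On a real installation, `FileSystem.getHomeDirectory()` and `FileSystem.getWorkingDirectory()` report the directory that relative paths are resolved against.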

Zhejiang Public Network Security Filing No. 33010602011771
