TensorFlow Study Notes (7): TensorBoard

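To make neural network training easier to manage, debug, and optimize, TensorFlow provides a visualization tool called TensorBoard. TensorBoard can display the computation graph of a running TensorFlow program, the trends of various metrics over time, and information such as the images used during training.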

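I. Introduction to TensorBoard

II. Visualizing the Computation Graph with TensorBoard

1. Name scopes and nodes in the TensorBoard graph

2. Node information

3. Visualizing monitoring metrics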

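I. Introduction to TensorBoard

TensorBoard is TensorFlow's visualization tool. It visualizes the state of a TensorFlow program from the log files that the program writes while it runs. TensorBoard and TensorFlow run in separate processes: TensorBoard automatically reads the latest TensorFlow log files and shows the most recent state of the program.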

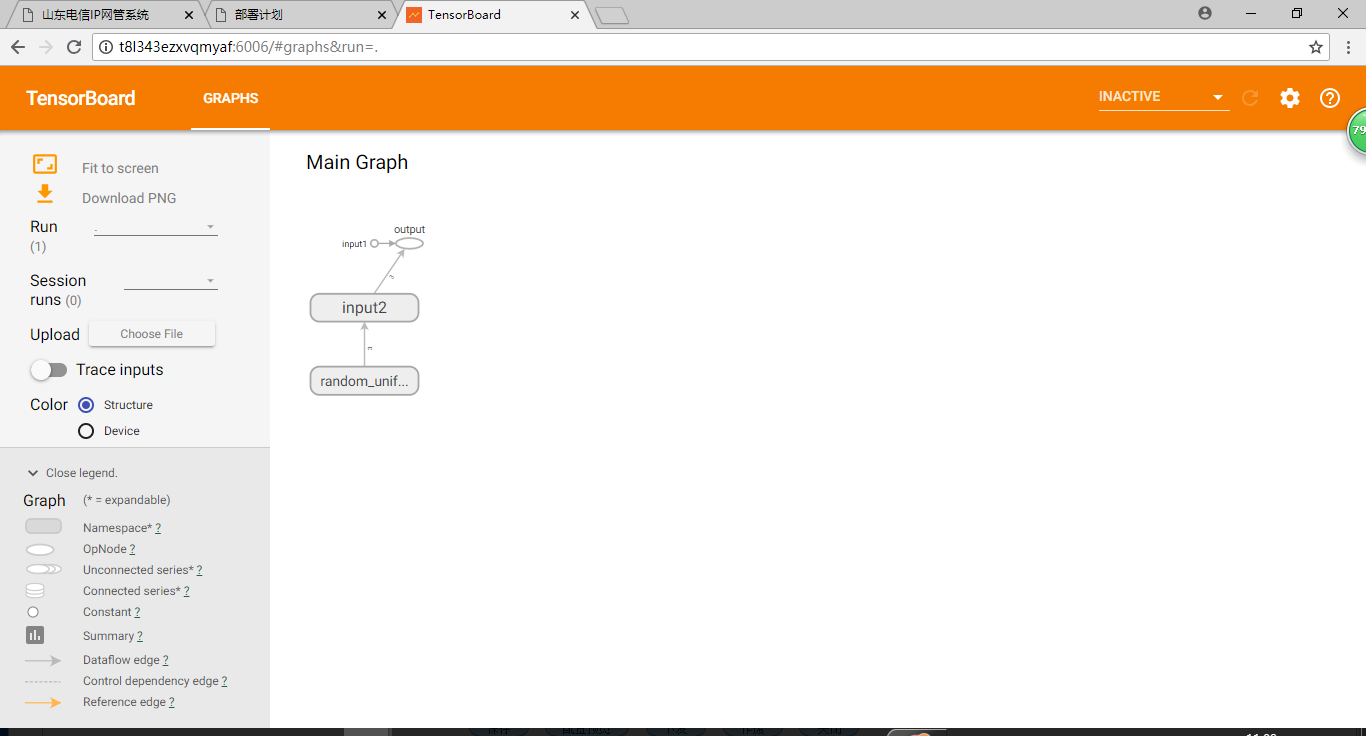

    import tensorflow as tf

    # Define a simple computation graph that adds two vectors.
    input1 = tf.constant([1.0, 2.0, 3.0], name="input1")
    input2 = tf.Variable(tf.random_uniform([3]), name="input2")
    output = tf.add_n([input1, input2], name="output")

    # Create a log writer and write the current TensorFlow graph to the log.
    writer = tf.summary.FileWriter("path/to/log", graph=tf.get_default_graph())
    writer.close()

Start TensorBoard with the command tensorboard --logdir=path/to/log, then open the address it prints in a browser (port 6006 by default).
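If TensorBoard starts but shows no graph, a quick first check is whether the log directory actually contains an event file. The sketch below is only an illustration of that check, not how TensorBoard itself loads logs; the helper name latest_event_file is hypothetical. It relies on the fact that tf.summary.FileWriter names its files events.out.tfevents.<timestamp>.<hostname>.

```python
import glob
import os


def latest_event_file(logdir):
    """Return the most recently modified event file in logdir, or None.

    Hypothetical helper for illustration; it only inspects file names and
    modification times and does not parse the event files.
    """
    # FileWriter writes files named "events.out.tfevents.<timestamp>.<hostname>".
    pattern = os.path.join(logdir, "events.out.tfevents.*")
    candidates = glob.glob(pattern)
    if not candidates:
        return None
    return max(candidates, key=os.path.getmtime)


if __name__ == "__main__":
    # Prints None when the directory is missing or holds no event files.
    print(latest_event_file("path/to/log"))
```

A result of None usually means the writer pointed at a different directory than the one passed to --logdir.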

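II. Visualizing the Computation Graph with TensorBoard

1. Name scopes and nodes in the TensorBoard graph

To organize the nodes of the visualized graph, TensorBoard groups them according to TensorFlow name scopes. TensorFlow provides two scoping functions, tf.variable_scope and tf.name_scope. For this purpose the two are largely equivalent; the only difference is how they interact with tf.get_variable.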

    import tensorflow as tf

    with tf.variable_scope("foo"):
        # Get the variable "bar" inside variable scope "foo".
        # Its full name is "foo/bar:0".
        a = tf.get_variable("bar", [1])
        print(a.name)

    with tf.variable_scope("bar"):
        # Get the variable "bar" inside variable scope "bar".
        # Its full name is "bar/bar:0", which does not clash with "foo/bar:0".
        b = tf.get_variable("bar", [1])
        print(b.name)

    with tf.name_scope("a"):
        # tf.Variable is affected by tf.name_scope: the name is "a/Variable:0".
        a = tf.Variable([1])
        print(a.name)
        # tf.get_variable is not affected by tf.name_scope: the name is "b:0",
        # without the name_scope prefix.
        b = tf.get_variable("b", [1])
        print(b.name)

    with tf.name_scope("b"):
        # tf.Variable is affected by tf.name_scope: the name is "b/Variable:0".
        a = tf.Variable([1])
        print(a.name)
        # tf.get_variable ignores tf.name_scope, so the name would again be "b:0".
        # That variable already exists, so the call below raises a
        # "variable already exists" error; it is left commented out.
        # b = tf.get_variable("b", [1])
        # print(b.name)
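The naming rules above can be mimicked with a small pure-Python toy model. This is a sketch for intuition only and not TensorFlow's implementation: the class ToyNamer and its methods are hypothetical, and it deliberately ignores details such as the automatic _1, _2 suffixes TensorFlow adds to repeated names.

```python
from contextlib import contextmanager


class ToyNamer:
    """Toy model of how scopes prefix names (not TensorFlow's real logic)."""

    def __init__(self):
        self._name_stack = []   # pushed by name_scope AND variable_scope
        self._var_stack = []    # pushed by variable_scope only
        self._created = set()   # full names already handed out by get_variable

    @contextmanager
    def name_scope(self, name):
        self._name_stack.append(name)
        try:
            yield
        finally:
            self._name_stack.pop()

    @contextmanager
    def variable_scope(self, name):
        # A variable scope also acts as a name scope for ordinary nodes.
        self._name_stack.append(name)
        self._var_stack.append(name)
        try:
            yield
        finally:
            self._var_stack.pop()
            self._name_stack.pop()

    def variable(self, name="Variable"):
        """Like tf.Variable: prefixed by every enclosing scope."""
        return "/".join(self._name_stack + [name]) + ":0"

    def get_variable(self, name):
        """Like tf.get_variable: only variable scopes contribute a prefix."""
        full = "/".join(self._var_stack + [name])
        if full in self._created:
            raise ValueError("Variable %s already exists" % full)
        self._created.add(full)
        return full + ":0"


if __name__ == "__main__":
    namer = ToyNamer()
    with namer.variable_scope("foo"):
        print(namer.get_variable("bar"))
    with namer.name_scope("a"):
        print(namer.variable())
        print(namer.get_variable("b"))
```

Keeping two separate stacks is the whole point of the toy: it shows why a second get_variable("b") under a different name scope still collides with the first one.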

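The sample code from the previous section can be improved by placing each input in its own name scope:

    import tensorflow as tf

    # Put each input under its own name scope so TensorBoard groups the nodes.
    with tf.name_scope("input1"):
        input1 = tf.constant([1.0, 2.0, 3.0], name="input1")
    with tf.name_scope("input2"):
        input2 = tf.Variable(tf.random_uniform([3]), name="input2")
    output = tf.add_n([input1, input2], name="add")

    writer = tf.summary.FileWriter("path/to/log", tf.get_default_graph())
    writer.close()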

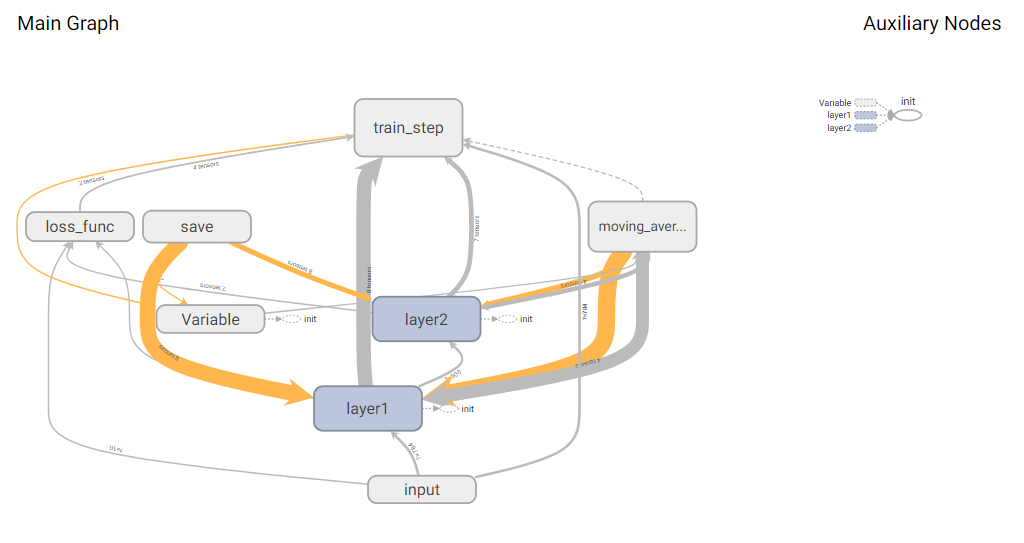

Visualizing the sample program from TensorFlow Study Notes (5):

    # -*- coding:utf-8 -*-
    import os

    import tensorflow as tf
    from tensorflow.examples.tutorials.mnist import input_data
    # Load the variables and functions defined in mnsit_inference1.py.
    from integerad_mnist import mnsit_inference1
    import numpy as np

    # Neural network hyperparameters.
    BATCH_SIZE = 100
    LR_BASE = 0.8
    LR_DECAY = 0.99
    REGULARAZTION_RATE = 0.0001
    TRANING_STEPS = 30000
    MOVING_AVERAGE_DECAY = 0.99

    # Path and file name used when saving the model.
    MODEL_SAVE_PATH = "path/to/model/"
    MODEL_SAVE_NAME = "model.ckpt"

    INPUT_NODE = 784
    OUTPUT_NODE = 10
    LAYER_NODE = 500


    def train(mnsit):
        # Define the input and output placeholders; everything that handles
        # input data lives under the "input" name scope.
        with tf.name_scope("input"):
            x = tf.placeholder(tf.float32, shape=[None, mnsit_inference1.INPUT_NODE], name="x_input")
            y_ = tf.placeholder(tf.float32, shape=[None, mnsit_inference1.OUTPUT_NODE], name="y_input")

        regularizer = tf.contrib.layers.l2_regularizer(REGULARAZTION_RATE)
        # Reuse the forward pass defined in mnsit_inference1.
        y = mnsit_inference1.inference(x, regularizer)
        global_step = tf.Variable(0, trainable=False)

        # Everything related to moving averages lives under "moving_average".
        with tf.name_scope("moving_average"):
            variable_average = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY, global_step)
            variable_average_op = variable_average.apply(tf.trainable_variables())

        # Everything related to the loss lives under "loss_func".
        with tf.name_scope("loss_func"):
            cross_entropy = tf.nn.sparse_softmax_cross_entropy_with_logits(labels=tf.argmax(y_, 1), logits=y)
            cross_entropy_mean = tf.reduce_mean(cross_entropy)
            loss = cross_entropy_mean + tf.add_n(tf.get_collection("losses"))

        # The learning rate, optimizer, and training op live under "train_step".
        with tf.name_scope("train_step"):
            learning_rate = tf.train.exponential_decay(
                LR_BASE, global_step, mnsit.train.num_examples / BATCH_SIZE, LR_DECAY)
            train_step = tf.train.GradientDescentOptimizer(learning_rate).minimize(loss, global_step=global_step)
            with tf.control_dependencies([train_step, variable_average_op]):
                train_op = tf.no_op(name="train")

        # Create the saver used to persist the model.
        saver = tf.train.Saver()
        with tf.Session() as sess:
            # tf.initialize_all_variables() is deprecated in favor of this call.
            tf.global_variables_initializer().run()
            for i in range(TRANING_STEPS):
                xs, ys = mnsit.train.next_batch(BATCH_SIZE)
                _, loss_value, step = sess.run([train_op, loss, global_step], feed_dict={x: xs, y_: ys})
                # Save the model every 1000 steps.
                if i % 1000 == 0:
                    print("After {0} training steps, loss on training batch is {1}".format(step, loss_value))
                    saver.save(sess, os.path.join(MODEL_SAVE_PATH, MODEL_SAVE_NAME), global_step=global_step)

        # Write the computation graph to the TensorBoard log directory.
        writer = tf.summary.FileWriter("path/to/log", tf.get_default_graph())
        writer.close()


    def main(argv=None):
        mnsit = input_data.read_data_sets("mnist_set", one_hot=True)
        train(mnsit)


    if __name__ == '__main__':
        tf.app.run()
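The learning rate node under "train_step" follows the formula documented for tf.train.exponential_decay: learning_rate * decay_rate ^ (global_step / decay_steps). As a rough sketch, the same schedule can be computed in plain Python; the function below is an illustration rather than TensorFlow's code, and the value 550 for decay_steps assumes 55,000 training examples with a batch size of 100.

```python
def exponential_decay(base_lr, global_step, decay_steps, decay_rate, staircase=False):
    """Plain-Python version of the exponential decay schedule (illustrative)."""
    if staircase:
        # Decay in discrete jumps, once every decay_steps steps.
        exponent = global_step // decay_steps
    else:
        # Decay smoothly at every step.
        exponent = global_step / decay_steps
    return base_lr * decay_rate ** exponent


if __name__ == "__main__":
    # Assumed values: LR_BASE = 0.8, LR_DECAY = 0.99, decay_steps = 55000 / 100.
    for step in (0, 550, 5500, 30000):
        print(step, exponential_decay(0.8, step, 550, 0.99))
```

With these values the rate drops by one percent for each pass over the training set, which is the curve one would expect to see if the learning rate were logged as a scalar.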

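The resulting TensorBoard visualization

Besides the manual layout obtained through TensorFlow name scopes, TensorBoard also adjusts the nodes of the visualized graph automatically. It splits the TensorFlow computation graph into a main graph and auxiliary nodes: the Graph area on the left is the main graph, and the Auxiliary Nodes area on the right holds the auxiliary nodes. TensorBoard automatically moves nodes that have many connections out of the main graph and into the auxiliary area.

In addition to this automatic placement, TensorBoard also lets you adjust the layout manually.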
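The idea of pulling heavily connected nodes out of the main graph can be illustrated with a toy degree count. This is only a sketch of the general idea: the function split_by_degree, the threshold, and the example edges are all hypothetical, and TensorBoard's real heuristics are not reproduced here.

```python
from collections import Counter


def split_by_degree(edges, threshold):
    """Split nodes into (main, auxiliary) by their number of connections.

    Toy illustration only: a node goes to the auxiliary list when it takes
    part in more than `threshold` edges.
    """
    degree = Counter()
    for src, dst in edges:
        degree[src] += 1
        degree[dst] += 1
    main = sorted(node for node, d in degree.items() if d <= threshold)
    auxiliary = sorted(node for node, d in degree.items() if d > threshold)
    return main, auxiliary


if __name__ == "__main__":
    # Hypothetical edges loosely modeled on the scopes used in the training script.
    edges = [
        ("global_step", "train_step"),
        ("global_step", "moving_average"),
        ("global_step", "save"),
        ("input", "layer1"),
        ("layer1", "loss_func"),
    ]
    main, auxiliary = split_by_degree(edges, threshold=2)
    print("main:", main)
    print("auxiliary:", auxiliary)
```

Nodes such as a global step counter tend to connect to many parts of a training graph, which is why drawing them inline would clutter the main view.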

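2. Node information

Besides the structure of the TensorFlow computation graph, TensorBoard can also show basic information about each node in the graph, along with the time and memory it consumes at run time.

The training loop from the code above can be adjusted as follows to record the running time and memory usage of the computation nodes at selected iterations.

    with tf.Session() as sess:
        tf.global_variables_initializer().run()
        writer = tf.summary.FileWriter("path/to/log", tf.get_default_graph())
        for i in range(TRANING_STEPS):
            xs, ys = mnsit.train.next_batch(BATCH_SIZE)
            # Record the runtime state every 1000 steps.
            if i % 1000 == 0:
                # Configure what should be recorded at run time.
                run_options = tf.RunOptions(trace_level=tf.RunOptions.FULL_TRACE)
                # Holds the metadata collected during the run.
                run_metadata = tf.RunMetadata()
                # Pass the options and the metadata container into the run.
                _, loss_value, step = sess.run(
                    [train_op, loss, global_step], feed_dict={x: xs, y_: ys},
                    options=run_options, run_metadata=run_metadata)
                # Write the per-node runtime information to the log.
                writer.add_run_metadata(run_metadata, "step-%s" % i)
                print("After {0} training steps, loss on training batch is {1}".format(step, loss_value))
            else:
                # Each batch is run exactly once; the original loop also ran it
                # before the if statement, which trained on every batch twice.
                _, loss_value, step = sess.run([train_op, loss, global_step], feed_dict={x: xs, y_: ys})
        writer.close()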
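The control flow of that loop, detailed measurement on every Nth step and a plain run otherwise, can be sketched without TensorFlow. The helper below is hypothetical and only measures wall-clock time with time.perf_counter; it stands in for the FULL_TRACE run, not for what TensorFlow actually records.

```python
import time


def run_with_periodic_timing(step_fn, num_steps, every=1000):
    """Run step_fn(i) once per step and time only every `every`-th step."""
    timings = {}
    for i in range(num_steps):
        if i % every == 0:
            # Instrumented path: measure this step and tag it like the log entries.
            start = time.perf_counter()
            step_fn(i)
            timings["step-%s" % i] = time.perf_counter() - start
        else:
            # Fast path: no measurement overhead.
            step_fn(i)
    return timings


if __name__ == "__main__":
    executed = []
    timings = run_with_periodic_timing(executed.append, 2500, every=1000)
    print(sorted(timings))   # tags of the instrumented steps
    print(len(executed))     # every step ran exactly once
```

Putting the step call in both branches, and nowhere else, keeps each batch from being processed twice.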

3. Visualizing monitoring metrics

Besides the computation graph, TensorBoard can also visualize the monitoring metrics that help you understand the state of a running TensorFlow program.

    import tensorflow as tf
    from tensorflow.examples.tutorials.mnist import input_data

    SUMMARY_DIR = "path/to/log"
    BATCH_SIZE = 100
    TRAIN_STEPS = 30000


    def variable_summaries(var, name):
        # Record a histogram plus the mean and standard deviation of a tensor.
        with tf.name_scope("summaries"):
            tf.summary.histogram(name, var)
            mean = tf.reduce_mean(var)
            tf.summary.scalar("mean/" + name, mean)
            stddev = tf.sqrt(tf.reduce_mean(tf.square(var - mean)))
            tf.summary.scalar("stddev/" + name, stddev)


    # Build one fully connected layer.
    def nn_layer(input_tensor, input_dim, output_dim, layer_name, act=tf.nn.relu):
        # Keep all nodes of the same layer under one name scope.
        with tf.name_scope(layer_name):
            with tf.name_scope("weights"):
                weights = tf.Variable(tf.truncated_normal([input_dim, output_dim], stddev=0.1))
                variable_summaries(weights, layer_name + '/weights')
            with tf.name_scope("biases"):
                biases = tf.Variable(tf.constant(0.0, shape=[output_dim]))
                variable_summaries(biases, layer_name + '/biases')
            with tf.name_scope("Wx_plus_b"):
                preactivate = tf.matmul(input_tensor, weights) + biases
                tf.summary.histogram(layer_name + '/pre_activations', preactivate)
            activations = act(preactivate)
            tf.summary.histogram(layer_name + "/activations", activations)
            return activations


    def main(_):
        mnsit = input_data.read_data_sets('mnist_set', one_hot=True)
        with tf.name_scope('input'):
            x = tf.placeholder(tf.float32, shape=[None, 784], name='x_input')
            y_ = tf.placeholder(tf.float32, shape=[None, 10], name='y_input')

        # Log up to 10 input images so they show up in TensorBoard.
        with tf.name_scope('input_reshape'):
            image_shaped_input = tf.reshape(x, [-1, 28, 28, 1])
            tf.summary.image('input', image_shaped_input, 10)

        hidden1 = nn_layer(x, 784, 500, 'layer1')
        # Use the identity activation so the output layer emits raw logits
        # (the original call applied the default ReLU to the logits).
        y = nn_layer(hidden1, 500, 10, 'layer2', act=tf.identity)

        with tf.name_scope('cross_entropy'):
            cross_entropy = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=y_, logits=y))
            tf.summary.scalar('cross_entropy', cross_entropy)

        with tf.name_scope('train'):
            train_op = tf.train.AdamOptimizer(0.001).minimize(cross_entropy)

        with tf.name_scope('accuracy'):
            with tf.name_scope('correct_prediction'):
                # tf.arg_max is deprecated; tf.argmax is the current name.
                correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))
            with tf.name_scope('accuracy'):
                accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))
            tf.summary.scalar('accuracy', accuracy)

        # Merge all summaries so a single run produces every logged value.
        merged = tf.summary.merge_all()
        with tf.Session() as sess:
            summary_writer = tf.summary.FileWriter(SUMMARY_DIR, sess.graph)
            tf.global_variables_initializer().run()
            for i in range(TRAIN_STEPS):
                xs, ys = mnsit.train.next_batch(BATCH_SIZE)
                summary, _ = sess.run([merged, train_op], feed_dict={x: xs, y_: ys})
                # Write the summaries for step i to the log.
                summary_writer.add_summary(summary, i)
        summary_writer.close()


    if __name__ == '__main__':
        tf.app.run()
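The two scalar summaries written by variable_summaries are the mean and the population standard deviation, sqrt(mean((x - mean)^2)). As a small sanity check of that formula, here is the same computation in plain Python; the function name summarize and the sample values are made up for illustration.

```python
import math
import statistics


def summarize(values):
    """Return (mean, stddev) using the same formula as variable_summaries."""
    mean = sum(values) / len(values)
    # Population standard deviation: divide by N, not N - 1.
    stddev = math.sqrt(sum((v - mean) ** 2 for v in values) / len(values))
    return mean, stddev


if __name__ == "__main__":
    sample = [1.0, 2.0, 3.0, 4.0]  # made-up values standing in for weights
    mean, stddev = summarize(sample)
    print(mean, stddev)
    # statistics.pstdev implements the same population formula.
    print(statistics.pstdev(sample))
```

Because the divisor is N, the logged value matches statistics.pstdev rather than the sample standard deviation returned by statistics.stdev.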

