1. Handwritten digits dataset

- from sklearn.datasets import load_digits

- digits = load_digits()

from sklearn.datasets import load_digits

import numpy as np

digits = load_digits()

2. Image data preprocessing

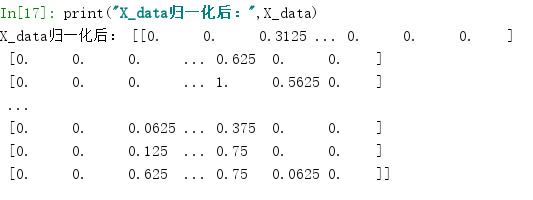

- x: normalize with MinMaxScaler()

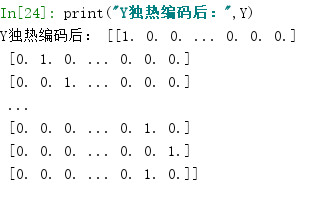

- y: one-hot encode with OneHotEncoder() or to_categorical

- Split into training and test sets

- Reshape into the tensor layout the model expects

# scikit-learn preprocessing: normalization

from sklearn.preprocessing import MinMaxScaler

X_data = digits.data.astype(np.float32)

scaler = MinMaxScaler()

X_data = scaler.fit_transform(X_data)

print("After normalization:",X_data)
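To make the scaling step concrete, here is a minimal pure-Python sketch of the per-column formula that `MinMaxScaler` applies with its default range, x' = (x - min) / (max - min). The helper name `min_max_scale` and the toy data are illustrative assumptions, not part of scikit-learn. Constant columns are mapped to 0 here, which is also what `MinMaxScaler` produces for zero-range features (relevant for digits, where some border pixels are always 0).

```python
def min_max_scale(rows):
    """Scale each column of a list of rows to [0, 1] (hypothetical helper)."""
    columns = list(zip(*rows))
    lows = [min(col) for col in columns]
    highs = [max(col) for col in columns]
    scaled = []
    for row in rows:
        scaled_row = []
        for value, low, high in zip(row, lows, highs):
            span = high - low
            # A constant column has span 0; map it to 0.0 instead of dividing by zero.
            scaled_row.append((value - low) / span if span else 0.0)
        scaled.append(scaled_row)
    return scaled


# Toy "pixel" data: 3 samples, 3 features; the last column is constant.
toy = [[0.0, 4.0, 0.0],
       [8.0, 12.0, 0.0],
       [16.0, 8.0, 0.0]]
scaled_toy = min_max_scale(toy)
print(scaled_toy)
```

Note that the minimum and maximum are taken per feature (per pixel position), not over the whole array, so two pixels with the same raw intensity can end up with different scaled values.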

# Reshape to image format (batch,height,width,channels)

X=X_data.reshape(-1,8,8,1)

# One-hot encoding with OneHotEncoder

from sklearn.preprocessing import OneHotEncoder

# y = digits.target.reshape(-1,1)

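y = digits.target.astype(np.float32).reshape(-1,1) # reshape the labels into a single column

Y = OneHotEncoder().fit_transform(y).toarray() # dense ndarray; todense() returns np.matrix, which newer scikit-learn versions reject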

print("One-hot encoded:",Y)
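As an illustration of what the encoding produces, the following pure-Python sketch builds the same kind of one-hot rows by hand. The function `one_hot` is a hypothetical helper written for this example. It assumes the labels are integers in `0..num_classes-1`, which holds for the digits targets; `OneHotEncoder` instead infers the categories from the data and orders them by sorted value.

```python
def one_hot(labels, num_classes):
    """Return one row per label with a single 1.0 at the label's index."""
    return [[1.0 if index == label else 0.0 for index in range(num_classes)]
            for label in labels]


# Three example digit labels encoded over the 10 digit classes.
encoded = one_hot([0, 3, 9], num_classes=10)
for row in encoded:
    print(row)
```

Each row sums to 1 and has length 10, matching the 10-unit softmax output layer the model uses.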

# Split into training and test sets

from sklearn.model_selection import train_test_split

X_train,X_test,y_train,y_test = train_test_split(X,Y,test_size=0.2,random_state=0,stratify=Y)

print(X_train,X_test,y_train,y_test)

print("X_data.shape:",X_data.shape)

print("X.shape",X.shape)


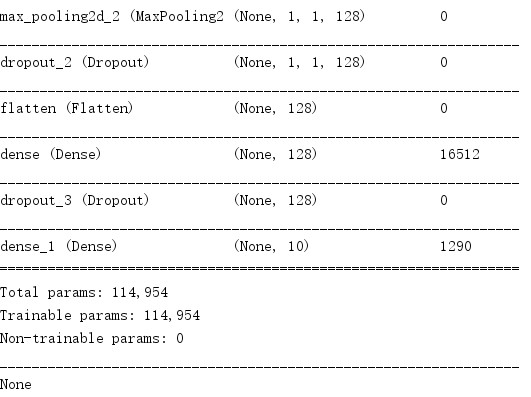

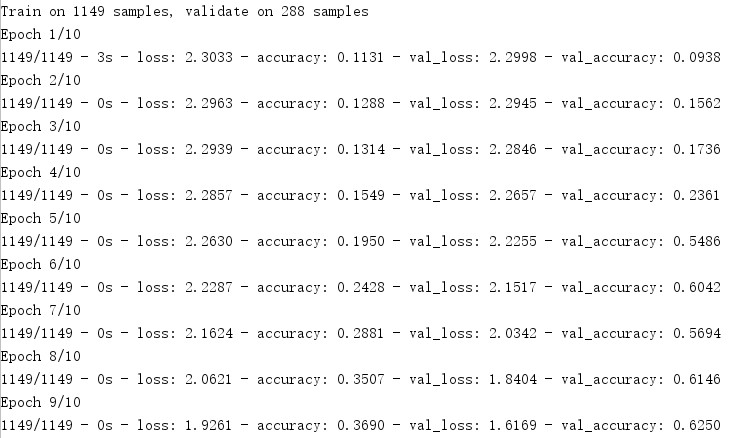

from tensorflow.keras.models import Sequential

from tensorflow.keras.layers import Dense,Dropout,Conv2D,MaxPool2D,Flatten

# 3. Build the model

model = Sequential()

ks = (3, 3) # convolution kernel size

input_shape = X_train.shape[1:]

# Convolution layer 1; with padding='same', TensorFlow zero-pads the input automatically

model.add(Conv2D(filters=16, kernel_size=ks, padding='same', input_shape=input_shape, activation='relu'))

# Pooling layer 1

model.add(MaxPool2D(pool_size=(2, 2)))

# Dropout to reduce overfitting by randomly zeroing a fraction of the activations

model.add(Dropout(0.25))

# Convolution layer 2

model.add(Conv2D(filters=32, kernel_size=ks, padding='same', activation='relu'))

# Pooling layer 2

model.add(MaxPool2D(pool_size=(2, 2)))

model.add(Dropout(0.25))

# Convolution layer 3

model.add(Conv2D(filters=64, kernel_size=ks, padding='same', activation='relu'))

# Convolution layer 4

model.add(Conv2D(filters=128, kernel_size=ks, padding='same', activation='relu'))

# Pooling layer 3

model.add(MaxPool2D(pool_size=(2, 2)))

model.add(Dropout(0.25))

# Flatten layer

model.add(Flatten())

# Fully connected layer

model.add(Dense(128, activation='relu'))

model.add(Dropout(0.25))

# Output layer with softmax activation

model.add(Dense(10, activation='softmax'))

model.summary() # summary() prints the table itself and returns None
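To see why three pooling layers are the most an 8x8 input can take, the sketch below traces the tensor shape and trainable parameter count through the layers listed above, without needing TensorFlow. The helper names (`conv_same`, `max_pool`, `dense_params`) are hypothetical and written for this example. It assumes the Keras defaults used in the model: stride-1 convolutions with `padding='same'`, and 2x2 max pooling with a stride equal to the pool size and no padding.

```python
def conv_same(shape, filters, kernel=3):
    """Shape and parameter count of a stride-1 convolution with 'same' padding."""
    height, width, channels = shape
    params = kernel * kernel * channels * filters + filters  # weights + biases
    return (height, width, filters), params


def max_pool(shape, pool=2):
    """Shape after non-overlapping max pooling (sizes are floored)."""
    height, width, channels = shape
    return (height // pool, width // pool, channels)


def dense_params(units_in, units_out):
    """Parameter count of a fully connected layer (weights + biases)."""
    return units_in * units_out + units_out


# Layer sequence from the model; Dropout changes neither shape nor parameters.
layers = [("conv", 16), ("pool",), ("conv", 32), ("pool",),
          ("conv", 64), ("conv", 128), ("pool",)]

shape = (8, 8, 1)  # one 8x8 grayscale digit
total_params = 0
trace = []
for layer in layers:
    if layer[0] == "conv":
        shape, params = conv_same(shape, layer[1])
        total_params += params
    else:
        shape = max_pool(shape)
    trace.append((layer[0], shape))

flat_units = shape[0] * shape[1] * shape[2]
total_params += dense_params(flat_units, 128) + dense_params(128, 10)

for name, out_shape in trace:
    print(name, out_shape)
print("flatten units:", flat_units)
print("trainable parameters:", total_params)
```

The spatial size halves at each pooling step (8, 4, 2, 1), so the final feature map is 1x1x128 and `Flatten` yields 128 values. The total from this sketch can be compared against the figure reported by `model.summary()`.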
