Python machine learning-Peceptron感知机模型

转自: https://github.com/xuman-Amy/Perceptron_python/blob/master/perceptron_python%20.py

|

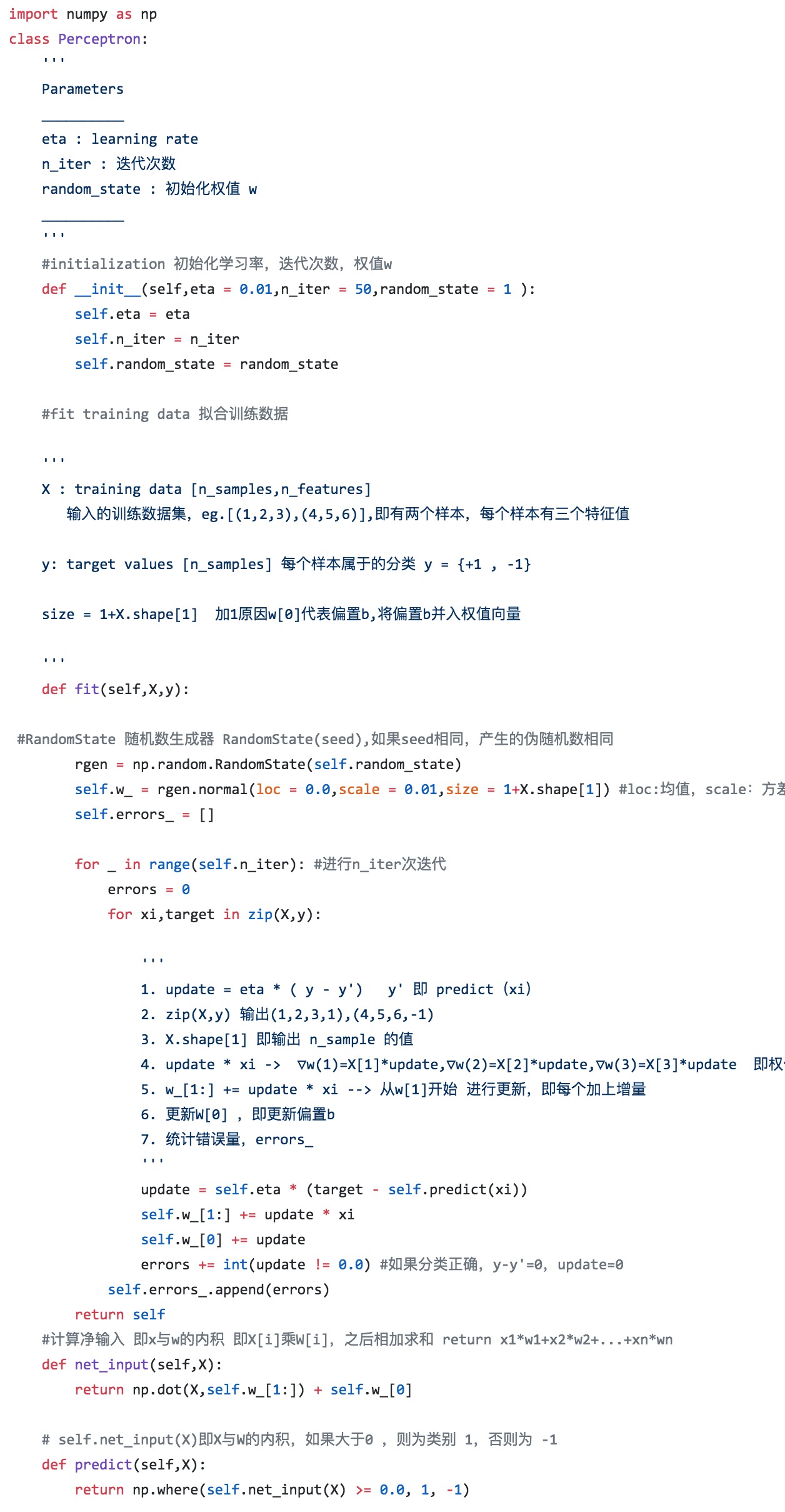

import numpy as np class Perceptron: |

|

| ''' | |

| Parameters | |

| __________ | |

| eta : learning rate | |

| n_iter : 迭代次数 | |

| random_state : 初始化权值 w | |

| __________ | |

| ''' | |

| #initialization 初始化学习率,迭代次数,权值w | |

| def __init__(self,eta = 0.01,n_iter = 50,random_state = 1 ): | |

| self.eta = eta | |

| self.n_iter = n_iter | |

| self.random_state = random_state | |

| #fit training data 拟合训练数据 | |

| ''' | |

| X : training data [n_samples,n_features] | |

| 输入的训练数据集,eg.[(1,2,3),(4,5,6)],即有两个样本,每个样本有三个特征值 | |

| y: target values [n_samples] 每个样本属于的分类 y = {+1 , -1} | |

| size = 1+X.shape[1] 加1原因w[0]代表偏置b,将偏置b并入权值向量 | |

| ''' | |

| def fit(self,X,y): | |

| #RandomState 随机数生成器 RandomState(seed),如果seed相同,产生的伪随机数相同 | |

| rgen = np.random.RandomState(self.random_state) | |

| self.w_ = rgen.normal(loc = 0.0,scale = 0.01,size = 1+X.shape[1]) #loc:均值,scale:方差 size:输出规格 | |

| self.errors_ = [] | |

| for _ in range(self.n_iter): #进行n_iter次迭代 | |

| errors = 0 | |

| for xi,target in zip(X,y): | |

| ''' | |

| 1. update = eta * ( y - y') y' 即 predict(xi) | |

| 2. zip(X,y) 输出(1,2,3,1),(4,5,6,-1) | |

| 3. X.shape[1] 即输出 n_sample 的值 | |

| 4. update * xi -> ▽w(1)=X[1]*update,▽w(2)=X[2]*update,▽w(3)=X[3]*update 即权值w的更新 | |

| 5. w_[1:] += update * xi --> 从w[1]开始 进行更新,即每个加上增量 | |

| 6. 更新W[0] ,即更新偏置b | |

| 7. 统计错误量,errors_ | |

| ''' | |

| update = self.eta * (target - self.predict(xi)) | |

| self.w_[1:] += update * xi | |

| self.w_[0] += update | |

| errors += int(update != 0.0) #如果分类正确,y-y'=0,update=0 | |

| self.errors_.append(errors) | |

| return self | |

| #计算净输入 即x与w的内积 即X[i]乘W[i],之后相加求和 return x1*w1+x2*w2+...+xn*wn | |

| def net_input(self,X): | |

| return np.dot(X,self.w_[1:]) + self.w_[0] | |

| # self.net_input(X)即X与W的内积,如果大于0 ,则为类别 1,否则为 -1 | |

| def predict(self,X): | |

| return np.where(self.net_input(X) >= 0.0, 1, -1) |

https://github.com/qixing810/leetcode_database

浙公网安备 33010602011771号

浙公网安备 33010602011771号