中文分词工具jieba的使用

中文分词工具jieba的使用

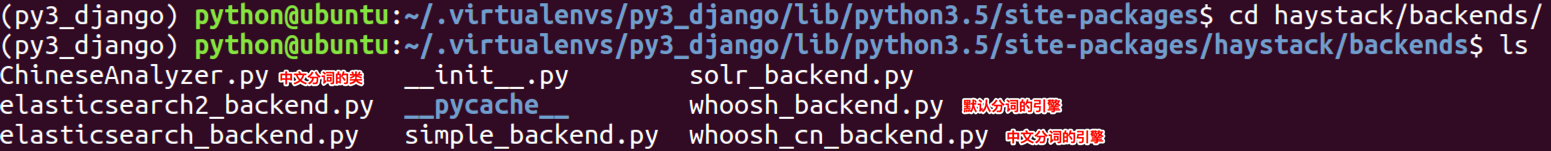

- 1.进入到安装了全文检索工具包的虚拟环境中

/home/python/.virtualenvs/py3_django/lib/python3.5/site-packages/- 进入到

haystack/backends/中

-

2.创建

ChineseAnalyzer.py文件 -

![]()

import jieba from whoosh.analysis import Tokenizer, Token class ChineseTokenizer(Tokenizer): def __call__(self, value, positions=False, chars=False, keeporiginal=False, removestops=True, start_pos=0, start_char=0, mode='', **kwargs): t = Token(positions, chars, removestops=removestops, mode=mode, **kwargs) seglist = jieba.cut(value, cut_all=True) for w in seglist: t.original = t.text = w t.boost = 1.0 if positions: t.pos = start_pos + value.find(w) if chars: t.startchar = start_char + value.find(w) t.endchar = start_char + value.find(w) + len(w) yield t def ChineseAnalyzer(): return ChineseTokenizer()-

3.拷贝

whoosh_backend.py为whoosh_cn_backend.pycp whoosh_backend.py whoosh_cn_backend.py -

4.更改分词的类为

ChineseAnalyzer- 打开并编辑

whoosh_cn_backend.py - 引入

from .ChineseAnalyzer import ChineseAnalyzer - 查找

analyzer=StemmingAnalyzer() 改为 analyzer=ChineseAnalyzer()

- 打开并编辑

-

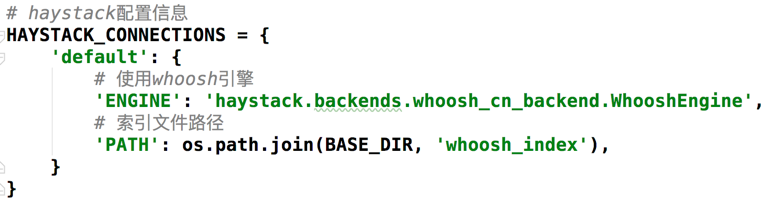

5.更改分词引擎

-

![]()

-

6.重新创建索引数据

python manage.py rebuild_index

-

- 自定义上下文

- haystack 官网 http://django-haystack.readthedocs.io/en/master/views_and_forms.html

定义视图view,需要定义在查询的模型类视图里

# views.py

from haystack.generic_views import SearchView

class MySearchView(SearchView):

"""My custom search view."""

def get_context_data(self, *args, **kwargs):

context = super(MySearchView, self).get_context_data(*args, **kwargs)

context[''] =

return context

# urls.py

urlpatterns = [

url(r'^/search/?$', MySearchView.as_view(), name='search_view'),

]

目标

奋斗,不一定能够成功,可有一定的几率是可以成功的.

但是,不奋斗是不会成功的.

浙公网安备 33010602011771号

浙公网安备 33010602011771号