【转载】 日内瓦大学 & NeurIPS 2020 | 在强化学习中动态分配有限的内存资源

原文地址:

https://hub.baai.ac.cn/view/4029

========================================================

【论文标题】Dynamic allocation of limited memory resources in reinforcement learning 【作者团队】Nisheet Patel, Luigi Acerbi, Alexandre Pouget 【发表时间】2020/11/12 【论文链接】https://proceedings.neurips.cc//paper/2020/file/c4fac8fb3c9e17a2f4553a001f631975-Paper.pdf 【论文代码】https://github.com/nisheetpatel/DynamicResourceAllocator 【推荐理由】本文收录于NeurIPS 2020,来自日内瓦大学的研究人员研究强化学习与神经科学两个方面,提出了动态框架来对资源进行分配。

生物大脑固有的处理和存储信息的能力受到限制,但是仍然能够轻松地解决复杂的任务。

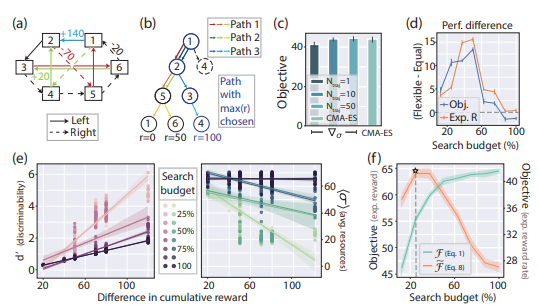

在本文中,研究人员提出了动态资源分配器(DRA),将其应用于强化学习中的两个标准任务和一个基于模型的计划任务,

发现它将更多资源分配给对内存有更高影响的项目。

此外,DRA从更高的资源预算开始学习时比为更好地完成任务而分配的学习速度要快,

这可以解释为什么生物大脑的额叶皮层区域在适应较低的渐近活动水平之前似乎更多地参与了学习的早期阶段。

本文的工作为学习如何将昂贵的资源分配给不确定的内存集合以适应环境变化的方式提供了一个规范性的解决方案。

代码地址:

https://github.com/nisheetpatel/DynamicResourceAllocator

======================================================

论文官方地址:

https://archive-ouverte.unige.ch/unige:149081

============================================

论文评审意见:

https://proceedings.neurips.cc/paper/2020/file/c4fac8fb3c9e17a2f4553a001f631975-MetaReview.html

Dynamic allocation of limited memory resources in reinforcement learning

Meta Review

This paper nicely bridges between neuroscience and RL, and considers the important topic of limited memory resources in RL agents. The topic is well-suited for NeurIPS (R2) as it has broader applicability toward e.g. model-based RL and planning, although this is not extensively discussed or shown in the paper itself. All reviewers agreed that it is well-motivated and written (R1, R2, R3, R4), although R3 did ask for a bit more explanation on some methodological details. It is also appropriately situated with respect to related work (R1, R2, R3) although R2 suggests a separate related works section, and R4 wanted to see more discussion of work outside of neuroscience, focused on optimizing RL with limited capacity. R1 pointed out that perhaps there’s a bit of confusion between memory precision and use of memory resources, as the former is more accurate for agents, the latter perhaps for real brains - ie more precise representations require more resources to encode in the brain, but this seems to be a minor point. R1 also asked to include standard baseline implementations to test for issues such how their model scales compared to other methods. R4 was the least positive, expressing that the contribution to AI is unclear, that the tasks are too easy and wouldn’t be expected to challenge memory resources. Also the connection to neuroscience is a bit tenuous as the implementation doesn’t seem particularly biologically plausible. In the rebuttal, authors argue that this approach will allow them to generate testable predictions regarding neural representations during learning, some of which are already included in the discussion. I find this adequate, but these predictions should maybe be foregrounded more so as to make clearer the neuroscientific contribution. I’m overall quite impressed with how responsive the authors were in their response, including almost all of the requested analyses. I think the final paper, with all of these changes incorporated, is likely to be much stronger, and so I recommend accept.

======================================

论文的视频讲解:(外网)

https://www.youtube.com/watch?v=1MJJkJd_umA

===================================

posted on 2021-04-22 19:33 Angry_Panda 阅读(334) 评论(2) 收藏 举报

浙公网安备 33010602011771号

浙公网安备 33010602011771号