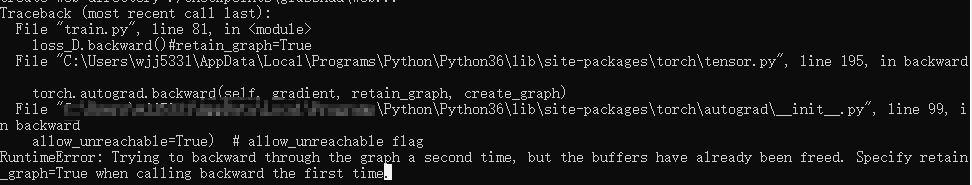

Error:

RuntimeError: Trying to backward through the graph a second time, but the buffers have already been freed. Specify retain_graph=True when calling backward the first time.

Fix: change

model.module.optimizer_G.zero_grad()

loss_G.backward()

model.module.optimizer_G.step()

to:

model.module.optimizer_G.zero_grad()

loss_G.backward(retain_graph=True)

model.module.optimizer_G.step()

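Problem solved. With `retain_graph=True`, `loss_G.backward()` keeps the graph's saved intermediate buffers instead of freeing them, so a later backward pass through the same graph no longer fails. The trade-off is that those buffers stay in memory until the graph is released.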
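The post does not show the model or where the second backward pass comes from, so the sketch below rests on an assumption: two losses share one intermediate tensor of the same autograd graph. The function `train_step`, the tensors `shared`, `loss_a`, `loss_b` and the single weight `w` are hypothetical stand-ins for the real generator. It reproduces the RuntimeError, applies the `retain_graph=True` fix used above and, for comparison, sums the losses so that a single `backward()` call suffices.

```python
import torch


def train_step(mode: str):
    """Hypothetical stand-in for the generator update (the real model is not shown).

    mode="separate": two backward() calls, no retain_graph  -> RuntimeError
    mode="retain":   first backward() uses retain_graph=True -> works, as in the fix
    mode="combined": sum the losses, call backward() once   -> works without retaining
    """
    torch.manual_seed(0)
    x = torch.randn(4, 3)
    w = torch.randn(3, 1, requires_grad=True)  # hypothetical "generator" weight
    optimizer_G = torch.optim.SGD([w], lr=0.1)

    # Non-leaf intermediate shared by both losses; tanh saves its output for backward.
    shared = torch.tanh(x @ w)
    loss_a = shared.mean()
    loss_b = (shared ** 2).mean()

    optimizer_G.zero_grad()
    try:
        if mode == "combined":
            (loss_a + loss_b).backward()  # a single pass over the graph
        else:
            # Without retain_graph, this call frees the saved tensors behind `shared`.
            loss_a.backward(retain_graph=(mode == "retain"))
            loss_b.backward()  # second pass through the same tanh/matmul nodes
    except RuntimeError as err:
        return "error", str(err)
    grad = w.grad.clone()  # accumulated gradient, captured before the update
    optimizer_G.step()
    return "ok", grad


if __name__ == "__main__":
    for mode in ("separate", "retain", "combined"):
        status, _ = train_step(mode)
        print(mode, status)
```

Run as a script, it should print `separate error`, `retain ok` and `combined ok`. Both working variants accumulate the same gradient in `w.grad`; when the losses can be added like this, one `backward()` on their sum avoids keeping the graph alive between two passes.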

浙公网安备 33010602011771号
