Data Cleaning

Fields in the Result file:

Ip: 106.39.41.166 (city)

Date: 10/Nov/2016:00:01:02 +0800 (date)

Day: 10 (day of the month)

Traffic: 54 (traffic)

Type: video (type: video or article)

Id: 8701 (ID of the video or article)
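A minimal sketch of reading one line of the Result file, assuming the six fields appear comma-separated in the order listed above (the `split(",")` call and the `arr[3]` traffic lookup in `Result_1` below point to this layout). The class name `ResultRecord` is hypothetical. Every field is trimmed because values such as traffic may carry stray spaces.

```java
/** Hypothetical holder for one Result line: Ip,Date,Day,Traffic,Type,Id (ASSUMED order). */
class ResultRecord {
    final String ip;
    final String date;
    final int day;
    final long traffic;
    final String type;
    final String id;

    ResultRecord(String ip, String date, int day, long traffic, String type, String id) {
        this.ip = ip;
        this.date = date;
        this.day = day;
        this.traffic = traffic;
        this.type = type;
        this.id = id;
    }

    /** Splits on commas and trims each field; rejects lines without exactly six fields. */
    static ResultRecord parse(String line) {
        String[] f = line.split(",");
        if (f.length != 6) {
            throw new IllegalArgumentException("expected 6 fields, got " + f.length + ": " + line);
        }
        for (int i = 0; i < f.length; i++) {
            f[i] = f[i].trim();
        }
        return new ResultRecord(f[0], f[1], Integer.parseInt(f[2]), Long.parseLong(f[3]), f[4], f[5]);
    }

    public static void main(String[] args) {
        ResultRecord r = parse("106.39.41.166,10/Nov/2016:00:01:02 +0800,10, 54 ,video,8701");
        System.out.println(r.ip + " " + r.traffic + " " + r.type + "/" + r.id);
    }
}
```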

Test requirements:

1. Data cleaning: clean the data as specified below and import the cleaned data into the Hive database.

Two-stage data cleaning:

(1) Stage 1: extract the required information from the raw logs

ip: 199.30.25.88

time: 10/Nov/2016:00:01:03 +0800

traffic: 62

article: article/11325

video: video/3235
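The raw log format is not shown here, so the sketch below assumes a common-log-style line such as `199.30.25.88 - - [10/Nov/2016:00:01:03 +0800] "GET /article/11325 HTTP/1.1" 200 62`, with the response size taken as the traffic value. Both regular expressions and the `LogExtractor` name are illustrative assumptions; adjust them to the real log layout.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Hypothetical stage-1 extractor for an ASSUMED common-log-style line. */
class LogExtractor {
    // ip, two ignored fields, [time], "METHOD path PROTOCOL", status, bytes (taken as traffic)
    private static final Pattern LINE = Pattern.compile(
            "^(\\S+) \\S+ \\S+ \\[([^\\]]+)\\] \"\\S+ (\\S+)[^\"]*\" \\d{3} (\\d+)");
    // The resource inside the request path, e.g. "article/11325" or "video/3235".
    private static final Pattern RESOURCE = Pattern.compile("(article|video)/(\\d+)");

    /** Returns {ip, time, traffic, "type/id"}, or null if the line is not an article/video hit. */
    static String[] extract(String line) {
        Matcher m = LINE.matcher(line);
        if (!m.find()) {
            return null; // not in the assumed format
        }
        Matcher r = RESOURCE.matcher(m.group(3));
        if (!r.find()) {
            return null; // some other resource (images, stylesheets, ...)
        }
        return new String[] {m.group(1), m.group(2), m.group(4), r.group(1) + "/" + r.group(2)};
    }

    public static void main(String[] args) {
        String line = "199.30.25.88 - - [10/Nov/2016:00:01:03 +0800] "
                + "\"GET /article/11325 HTTP/1.1\" 200 62 \"-\" \"Mozilla/5.0\"";
        String[] f = extract(line);
        System.out.println("ip:" + f[0] + " time:" + f[1] + " traffic:" + f[2] + " " + f[3]);
    }
}
```

Lines that do not match, or that request something other than an article or video, return `null` so the caller can drop them.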

(2) Stage 2: refine the extracted information

ip ---> city (IP)

date ---> time: 2016-11-10 00:01:03

day:10

traffic:62

type:article/video

id:11325
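A sketch of the stage-2 conversions using `java.time`: the log timestamp is reformatted to `yyyy-MM-dd HH:mm:ss`, the day is taken from it, and `article/11325` is split into type and id. Mapping an IP to a city needs an IP geolocation source that the exercise does not describe, so it is passed in as a function; the demo uses a stub returning `"UNKNOWN"`. The `Refiner` name is hypothetical.

```java
import java.time.OffsetDateTime;
import java.time.format.DateTimeFormatter;
import java.util.Locale;
import java.util.function.Function;

/** Hypothetical stage-2 helper: turns stage-1 fields into the columns of the Hive table. */
class Refiner {
    // Log timestamp, e.g. "10/Nov/2016:00:01:03 +0800"; month names are English abbreviations.
    private static final DateTimeFormatter LOG_TIME =
            DateTimeFormatter.ofPattern("dd/MMM/yyyy:HH:mm:ss Z", Locale.ENGLISH);
    // Target format, e.g. "2016-11-10 00:01:03" (local time in the log's own offset).
    private static final DateTimeFormatter HIVE_TIME =
            DateTimeFormatter.ofPattern("yyyy-MM-dd HH:mm:ss");

    /**
     * Returns {city, time, day, traffic, type, id} in the column order of the Hive table.
     * ipToCity is an ASSUMED lookup, e.g. backed by an IP geolocation database.
     */
    static String[] refine(String ip, String rawTime, String traffic, String resource,
                           Function<String, String> ipToCity) {
        OffsetDateTime t = OffsetDateTime.parse(rawTime.trim(), LOG_TIME);
        String[] typeAndId = resource.split("/", 2); // "article/11325" -> {"article", "11325"}
        if (typeAndId.length != 2) {
            throw new IllegalArgumentException("expected type/id, got: " + resource);
        }
        return new String[] {
                ipToCity.apply(ip),
                t.format(HIVE_TIME),
                String.valueOf(t.getDayOfMonth()),
                traffic.trim(),
                typeAndId[0],
                typeAndId[1]
        };
    }

    public static void main(String[] args) {
        String[] row = refine("199.30.25.88", "10/Nov/2016:00:01:03 +0800", "62",
                "article/11325", ip -> "UNKNOWN"); // stub lookup for the demo
        System.out.println(String.join(" | ", row));
    }
}
```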

(3) Hive table schema:

```sql
create table data(ip string, time string, day string, traffic bigint, type string, id string);
```
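Because this `create table` statement has no `ROW FORMAT` clause, Hive reads its text data files with the default field separator, Ctrl-A (`\u0001`). A sketch of writing one cleaned record in the table's column order follows; the `HiveRow` name is hypothetical. Alternatively, the table could be declared with `ROW FORMAT DELIMITED FIELDS TERMINATED BY '\t'` and fed tab-separated lines.

```java
/** Hypothetical writer for one line of the Hive table's text data file. */
class HiveRow {
    // Hive's default field separator for a text table created without ROW FORMAT (Ctrl-A).
    static final String FIELD_DELIM = "\u0001";

    /** Joins the values in the table's column order: ip, time, day, traffic, type, id. */
    static String toRow(String ip, String time, String day, long traffic, String type, String id) {
        return String.join(FIELD_DELIM, ip, time, day, Long.toString(traffic), type, id);
    }

    public static void main(String[] args) {
        String row = toRow("UNKNOWN", "2016-11-10 00:01:03", "10", 62L, "article", "11325");
        // Show the invisible separator as '|' for readability.
        System.out.println(row.replace(FIELD_DELIM, "|"));
    }
}
```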

2. Data processing:

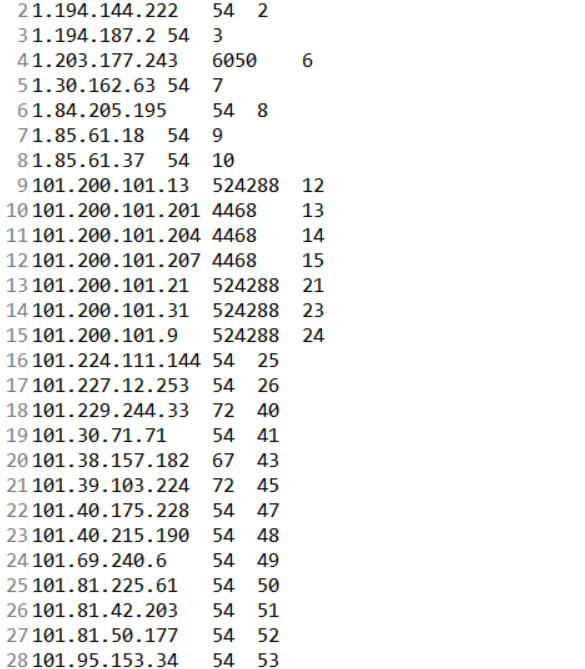

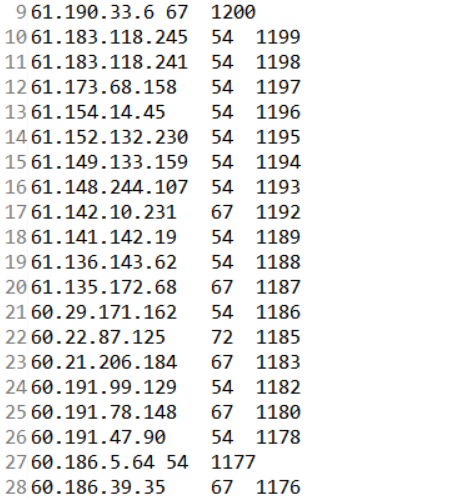

· Top 10 most popular videos/articles by number of visits (video/article)

· Top 10 most popular courses by city (ip)

· Top 10 most popular courses by traffic (traffic)
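Each of these statistics reduces to "count per key, sort descending, keep 10", which is what `Result_1` (count) and `Result_2` (sort and cut) do on HDFS. The in-memory sketch below is only meant for checking small samples; `TopN` is a hypothetical name, and for the traffic ranking the counting step would sum traffic values instead (for example with `Collectors.summingLong`).

```java
import java.util.Arrays;
import java.util.Comparator;
import java.util.List;
import java.util.Map;
import java.util.stream.Collectors;

/** Hypothetical in-memory version of "count per key, sort descending, keep the top n". */
class TopN {
    static List<Map.Entry<String, Long>> topN(List<String> keys, int n) {
        // Same role as Result_1: count the records for each key.
        Map<String, Long> counts = keys.stream()
                .collect(Collectors.groupingBy(k -> k, Collectors.counting()));
        // Same role as Result_2: sort by count, highest first (ties broken by key), keep n.
        return counts.entrySet().stream()
                .sorted(Map.Entry.<String, Long>comparingByValue(Comparator.reverseOrder())
                        .thenComparing(Map.Entry.<String, Long>comparingByKey()))
                .limit(n)
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        List<String> hits = Arrays.asList(
                "video/8701", "article/11325", "video/8701",
                "video/3235", "article/11325", "video/8701");
        System.out.println(topN(hits, 10)); // [video/8701=3, article/11325=2, video/3235=1]
    }
}
```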

3. Data visualization: import the statistics into a MySQL database and present them as charts.

```java
package mapreduce;

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

/** Job 1: count the records per key (first column), keeping the last value of the fourth column. */
public class Result_1 {

    public static class Map extends Mapper<Object, Text, Text, Text> {
        private final Text name = new Text();
        private final Text num = new Text();

        @Override
        public void map(Object key, Text value, Context context)
                throws IOException, InterruptedException {
            // Each line of result.txt is comma-separated: ip,date,day,traffic,type,id
            String[] arr = value.toString().split(",");
            if (arr.length < 4) {
                return; // skip malformed lines instead of failing the task
            }
            name.set(arr[0]);        // key: first column
            num.set(arr[3].trim());  // value: fourth column (traffic)
            context.write(name, num);
        }
    }

    public static class Reduce extends Reducer<Text, Text, Text, Text> {
        private final Text out = new Text();

        @Override
        public void reduce(Text key, Iterable<Text> values, Context context)
                throws IOException, InterruptedException {
            // The counter must be local to each key; a static field that is never reset
            // would accumulate across all keys.
            int count = 0;
            String last = "";
            for (Text val : values) {
                last = val.toString(); // Hadoop reuses the Text instance, so copy its contents
                count++;
            }
            out.set(last + "\t" + count);
            context.write(key, out);
        }
    }

    public static int run() throws IOException, ClassNotFoundException, InterruptedException {
        Configuration conf = new Configuration();
        conf.set("fs.defaultFS", "hdfs://192.168.1.100:9000");
        FileSystem fs = FileSystem.get(conf);

        Job job = Job.getInstance(conf, "Result_1"); // new Job(conf, name) is deprecated
        job.setJarByClass(Result_1.class);
        job.setMapperClass(Map.class);
        job.setReducerClass(Reduce.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(Text.class);
        job.setInputFormatClass(TextInputFormat.class);
        job.setOutputFormatClass(TextOutputFormat.class);

        Path in = new Path("hdfs://192.168.1.100:9000/mymapreduce1/in/result.txt");
        Path out = new Path("hdfs://192.168.1.100:9000/mymapreduce1/out_result");
        FileInputFormat.addInputPath(job, in);
        fs.delete(out, true); // the job fails if the output directory already exists
        FileOutputFormat.setOutputPath(job, out);
        return job.waitForCompletion(true) ? 0 : 1;
    }

    public static void main(String[] args)
            throws IOException, ClassNotFoundException, InterruptedException {
        System.exit(run());
    }
}
```

```java
package mapreduce;

import java.io.IOException;
import java.util.ArrayList;
import java.util.List;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.io.WritableComparable;
import org.apache.hadoop.io.WritableComparator;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

/** Job 2: sort the output of Result_1 by count in descending order and keep the Top 10. */
public class Result_2 {
    // Filled by the reducer. They are only visible from main() when the job runs in local
    // mode (same JVM); on a real cluster the reducer runs in a separate JVM.
    public static List<String> Names = new ArrayList<String>();
    public static List<String> Values = new ArrayList<String>();
    public static List<String> Texts = new ArrayList<String>();

    /** Sorts IntWritable keys in descending order. */
    public static class Sort extends WritableComparator {
        public Sort() {
            // The class passed here must match the map output key type.
            super(IntWritable.class, true);
        }

        @Override
        public int compare(WritableComparable a, WritableComparable b) {
            return b.compareTo(a); // arguments swapped for descending order; use a.compareTo(b) for ascending
        }
    }

    public static class Map extends Mapper<Object, Text, IntWritable, Text> {
        private final Text name = new Text();
        private final IntWritable num = new IntWritable();

        @Override
        public void map(Object key, Text value, Context context)
                throws IOException, InterruptedException {
            // Input line from Result_1: key \t value \t count
            String[] arr = value.toString().split("\t");
            if (arr.length < 3 || arr[0].startsWith(" ")) {
                return; // skip malformed lines
            }
            num.set(Integer.parseInt(arr[2].trim()));
            name.set(arr[0] + "\t" + arr[1]);
            context.write(num, name); // the count becomes the key, so the shuffle sorts by it
        }
    }

    public static class Reduce extends Reducer<IntWritable, Text, Text, IntWritable> {
        private int i = 0; // records emitted so far; a global Top 10 needs a single reducer

        @Override
        public void reduce(IntWritable key, Iterable<Text> values, Context context)
                throws IOException, InterruptedException {
            for (Text val : values) {
                if (i >= 10) {
                    return; // Top 10 reached
                }
                i++;
                String[] arr = val.toString().split("\t");
                Names.add(arr[0]);
                Texts.add(arr[1]);
                Values.add(key.toString());
                context.write(val, key);
            }
        }
    }

    public static int run() throws IOException, ClassNotFoundException, InterruptedException {
        Configuration conf = new Configuration();
        conf.set("fs.defaultFS", "hdfs://192.168.1.100:9000");
        FileSystem fs = FileSystem.get(conf);

        Job job = Job.getInstance(conf, "Result_2");
        job.setJarByClass(Result_2.class);
        job.setMapperClass(Map.class);
        job.setReducerClass(Reduce.class);
        job.setSortComparatorClass(Sort.class);
        job.setNumReduceTasks(1);
        // Map output (count, name) differs from the final output (name, count).
        job.setMapOutputKeyClass(IntWritable.class);
        job.setMapOutputValueClass(Text.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);
        job.setInputFormatClass(TextInputFormat.class);
        job.setOutputFormatClass(TextOutputFormat.class);

        Path in = new Path("hdfs://192.168.1.100:9000/mymapreduce1/out_result/part-r-00000");
        Path out = new Path("hdfs://192.168.1.100:9000/mymapreduce1/out_result1");
        FileInputFormat.addInputPath(job, in);
        fs.delete(out, true);
        FileOutputFormat.setOutputPath(job, out);
        return job.waitForCompletion(true) ? 0 : 1;
    }

    public static void main(String[] args)
            throws IOException, ClassNotFoundException, InterruptedException {
        int status = run();
        for (String n : Names) {
            System.out.println(n); // empty unless the job ran in local mode
        }
        System.exit(status);
    }
}
```

浙公网安备 33010602011771号
