Kubernetes notes

Deploy your first Kubernetes application on a single-node cluster

yum install -y yum-utils device-mapper-persistent-data lvm2

yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

yum -y install docker-ce-20.10.10-3.el7

systemctl start docker

docker -v

systemctl status docker

cat > /etc/yum.repos.d/kubernetes.repo << EOF

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

yum install -y kubectl-1.18.0

curl -LO https://storage.googleapis.com/minikube/releases/v1.18.1/minikube-linux-amd64 && sudo install minikube-linux-amd64 /usr/local/bin/minikube

minikube version

minikube start --image-mirror-country='cn' --driver=docker --force --kubernetes-version=1.18.1 --registry-mirror=https://registry.docker-cn.com

kubectl cluster-info

kubectl get node

NAME STATUS ROLES AGE VERSION

minikube Ready master 11m v1.18.1

kubectl get pod -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system coredns-546565776c-dhdwr 1/1 Running 0 11m

kube-system etcd-minikube 1/1 Running 0 11m

kube-system kube-apiserver-minikube 1/1 Running 0 11m

kube-system kube-controller-manager-minikube 1/1 Running 0 11m

kube-system kube-proxy-tkp96 1/1 Running 0 11m

kube-system kube-scheduler-minikube 1/1 Running 0 11m

kube-system storage-provisioner 0/1 ErrImagePull 0 11m
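In the listing above every Pod is Running except storage-provisioner, which is stuck in ErrImagePull. On a longer listing such rows are easy to miss. The sketch below filters them out; `not_running` is a hypothetical helper name, and it assumes the six-column layout printed by `kubectl get pod -A` (NAMESPACE, NAME, READY, STATUS, RESTARTS, AGE).

```shell
# Hypothetical helper: reads `kubectl get pod -A` output on stdin and prints
# "namespace/name status" for every Pod whose STATUS is neither Running
# nor Completed. The header row and short or blank lines are skipped.
not_running() {
  awk 'NR > 1 && NF >= 4 && $4 != "Running" && $4 != "Completed" { print $1 "/" $2, $4 }'
}

# Usage against a live cluster (requires kubectl):
#   kubectl get pod -A | not_running
```

For a Pod reported this way, `kubectl describe pod <name> -n <namespace>` shows the events that explain the failed image pull.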

kubectl create deployment xdclass-nginx --image=nginx:1.23.0

deployment.apps/xdclass-nginx created

kubectl get pod

NAME READY STATUS RESTARTS AGE

xdclass-nginx-859bdb9994-fkw9n 0/1 ContainerCreating 0 38s

pod/xdclass-nginx-859bdb9994-fkw9n 0/1 ImagePullBackOff 0 2m11s

kubectl get deployment,pod,svc

NAME READY UP-TO-DATE AVAILABLE AGE

deployment.apps/xdclass-nginx 1/1 1 1 2m33s

NAME READY STATUS RESTARTS AGE

pod/xdclass-nginx-859bdb9994-fkw9n 1/1 Running 0 2m33s

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/kubernetes ClusterIP 10.96.0.1
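The listings above show the Pod moving from ContainerCreating (READY 0/1) to Running (READY 1/1). The READY column is a ready/desired pair, and the Deployment is usable once both numbers match. A minimal sketch of that check follows; `is_fully_ready` is a hypothetical helper, not part of kubectl.

```shell
# Hypothetical helper: succeeds when a READY value such as "1/1" reports
# as many ready replicas as desired ones (and at least one desired).
is_fully_ready() {
  ready=${1%/*}     # text before the slash
  desired=${1#*/}   # text after the slash
  [ "$desired" -gt 0 ] && [ "$ready" -eq "$desired" ]
}

if is_fully_ready "1/1"; then
  echo "deployment is ready"
fi
```

On a live cluster, `kubectl rollout status deployment/xdclass-nginx` waits for the same condition without any parsing.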

Expose port 80 by creating a Service

kubectl expose deployment xdclass-nginx --port=80 --type=NodePort

service/xdclass-nginx exposed

kubectl get deployment,pod,svc

NAME READY UP-TO-DATE AVAILABLE AGE

deployment.apps/xdclass-nginx 1/1 1 1 5m20s

NAME READY STATUS RESTARTS AGE

pod/xdclass-nginx-859bdb9994-fkw9n 1/1 Running 0 5m20s

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/kubernetes ClusterIP 10.96.0.1

service/xdclass-nginx NodePort 10.101.181.10

Forward the port (temporary, for minikube)

kubectl port-forward --address 0.0.0.0 service/xdclass-nginx 80:80

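Explanation:

kubectl port-forward forwards a local port to a port on the Pod (here through the Service). The command does not return; it keeps running in the foreground, so open another terminal to continue this exercise.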

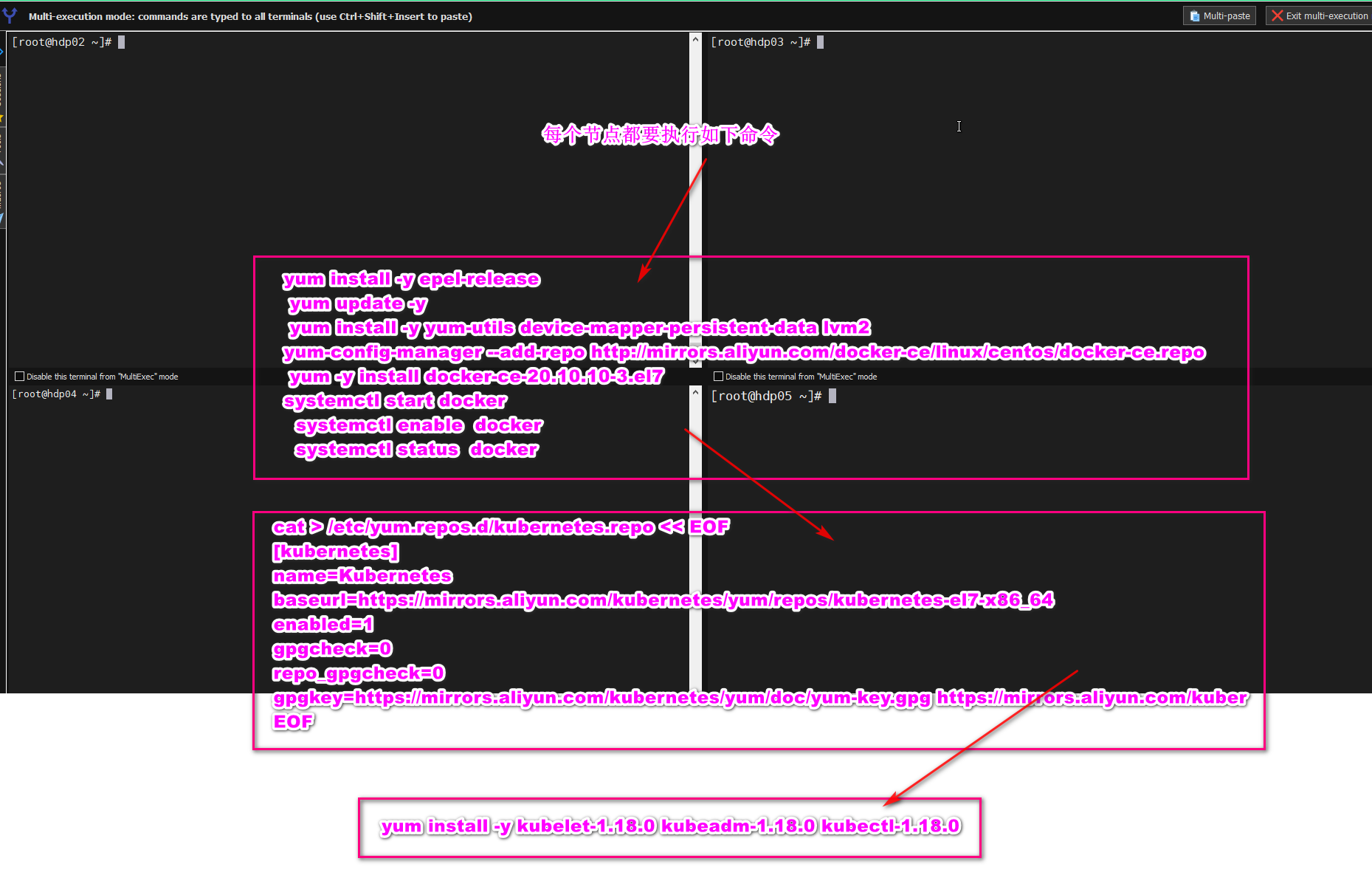

Build a multi-node Kubernetes cluster with kubeadm

Steps required on every node

# 1. Install the yum utilities first

yum install -y yum-utils device-mapper-persistent-data lvm2

# 2. Add the Aliyun mirror repository for Docker

sudo yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

# 3. List the Docker versions available for installation

yum list docker-ce --showduplicates | sort -r

# 4. Install Docker

yum -y install docker-ce-20.10.10-3.el7

# 5. Check the Docker version

docker -v

# Enable Docker to start at boot

systemctl enable docker.service

# 6. Start Docker

systemctl start docker

# 7. Check that Docker is running

systemctl status docker

Configure the Aliyun Kubernetes yum repository (master and worker nodes)

cat > /etc/yum.repos.d/kubernetes.repo << EOF

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

Install kubelet, kubeadm, and kubectl (master and worker nodes)

yum install -y kubelet-1.18.0 kubeadm-1.18.0 kubectl-1.18.0

Initialize the control plane (master node only)

kubeadm init \
  --apiserver-advertise-address=<master-node-IP> \
  --image-repository registry.aliyuncs.com/google_containers \
  --kubernetes-version v1.18.0 \
  --service-cidr=10.96.0.0/12 \
  --pod-network-cidr=10.244.0.0/16
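The value of --pod-network-cidr (10.244.0.0/16) is the same range as the "Network" entry in the flannel ConfigMap used later in these notes, and it should not overlap with --service-cidr. The sketch below checks two IPv4 CIDR ranges for overlap with plain shell arithmetic. The helper names (`ip_to_int`, `cidr_range`, `cidrs_overlap`) are hypothetical and only illustrate the idea; they are not part of kubeadm.

```shell
# Convert a dotted-quad IPv4 address to an integer.
ip_to_int() {
  old_ifs=$IFS
  IFS=.
  set -- $1          # split "a.b.c.d" into four positional parameters
  IFS=$old_ifs
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# Print "first last" (as integers) for a CIDR such as 10.96.0.0/12.
cidr_range() {
  base=$(ip_to_int "${1%/*}")
  bits=${1#*/}
  size=$(( 1 << (32 - bits) ))
  first=$(( base / size * size ))   # round down to the network address
  echo "$first $(( first + size - 1 ))"
}

# Succeed if the two CIDR ranges share at least one address.
cidrs_overlap() {
  set -- $(cidr_range "$1") $(cidr_range "$2")
  [ "$1" -le "$4" ] && [ "$3" -le "$2" ]
}

service_cidr="10.96.0.0/12"
pod_cidr="10.244.0.0/16"
if cidrs_overlap "$service_cidr" "$pod_cidr"; then
  echo "overlap: choose different ranges" >&2
else
  echo "ok: $service_cidr and $pod_cidr do not overlap"
fi
```

The same check is worth running against the node network (for example the subnet of the master IP) before choosing custom ranges.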

After initialization completes, run the following commands, then join the worker nodes

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Join the worker nodes to the master (the values differ on every machine; do not copy the command below, use the one printed in your own terminal)

Example

kubeadm join 192.168.85.131:6443 --token 7qpecz.7oa0qxrq75aa4zep --discovery-token-ca-cert-hash sha256:af2ea5723171e5e3610a06e6fdec828cd9b1f7ad5b416a1cffc0cffa91ccb1ee
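A join command is easy to damage when it is copied by hand, and the token printed by kubeadm init expires after 24 hours by default. The sketch below checks the hash format before printing a join command. `is_valid_ca_hash` and `build_join_command` are hypothetical helpers written for this note, not kubeadm features.

```shell
# Hypothetical helper: succeeds if the value looks like "sha256:<64 hex digits>".
is_valid_ca_hash() {
  printf '%s\n' "$1" | grep -Eq '^sha256:[0-9a-f]{64}$'
}

# Hypothetical helper: assembles a join command from endpoint, token and hash,
# refusing to print anything when the hash is malformed.
build_join_command() {
  if ! is_valid_ca_hash "$3"; then
    echo "invalid CA cert hash: $3" >&2
    return 1
  fi
  printf 'kubeadm join %s --token %s --discovery-token-ca-cert-hash %s\n' "$1" "$2" "$3"
}

# Usage with the values printed by your own kubeadm init:
#   build_join_command <master-ip>:6443 <token> sha256:<hash>
```

If the original output is lost or the token has expired, running `kubeadm token create --print-join-command` on the master prints a fresh, complete join command.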

Install the network plugin

kubectl apply -f flannel.yaml

# Contents of flannel.yaml

---
apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: psp.flannel.unprivileged
  annotations:
    seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default
    seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default
    apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default
    apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default
spec:
  privileged: false
  volumes:
    - configMap
    - secret
    - emptyDir
    - hostPath
  allowedHostPaths:
    - pathPrefix: "/etc/cni/net.d"
    - pathPrefix: "/etc/kube-flannel"
    - pathPrefix: "/run/flannel"
  readOnlyRootFilesystem: false
  # Users and groups
  runAsUser:
    rule: RunAsAny
  supplementalGroups:
    rule: RunAsAny
  fsGroup:
    rule: RunAsAny
  # Privilege Escalation
  allowPrivilegeEscalation: false
  defaultAllowPrivilegeEscalation: false
  # Capabilities
  allowedCapabilities: ['NET_ADMIN']
  defaultAddCapabilities: []
  requiredDropCapabilities: []
  # Host namespaces
  hostPID: false
  hostIPC: false
  hostNetwork: true
  hostPorts:
    - min: 0
      max: 65535
  # SELinux
  seLinux:
    # SELinux is unused in CaaSP
    rule: 'RunAsAny'
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: flannel
rules:
  - apiGroups: ['extensions']
    resources: ['podsecuritypolicies']
    verbs: ['use']
    resourceNames: ['psp.flannel.unprivileged']
  - apiGroups:
      - ""
    resources:
      - pods
    verbs:
      - get
  - apiGroups:
      - ""
    resources:
      - nodes
    verbs:
      - list
      - watch
  - apiGroups:
      - ""
    resources:
      - nodes/status
    verbs:
      - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
  - kind: ServiceAccount
    name: flannel
    namespace: kube-system
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-system
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-system
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "cniVersion": "0.2.0",
      "name": "cbr0",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds-amd64
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: beta.kubernetes.io/os
                    operator: In
                    values:
                      - linux
                  - key: beta.kubernetes.io/arch
                    operator: In
                    values:
                      - amd64
      hostNetwork: true
      tolerations:
        - operator: Exists
          effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
        - name: install-cni
          image: lizhenliang/flannel:v0.11.0-amd64
          command:
            - cp
          args:
            - -f
            - /etc/kube-flannel/cni-conf.json
            - /etc/cni/net.d/10-flannel.conflist
          volumeMounts:
            - name: cni
              mountPath: /etc/cni/net.d
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      containers:
        - name: kube-flannel
          image: lizhenliang/flannel:v0.11.0-amd64
          command:
            - /opt/bin/flanneld
          args:
            - --ip-masq
            - --kube-subnet-mgr
          resources:
            requests:
              cpu: "100m"
              memory: "50Mi"
            limits:
              cpu: "100m"
              memory: "50Mi"
          securityContext:
            privileged: false
            capabilities:
              add: ["NET_ADMIN"]
          env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: POD_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
          volumeMounts:
            - name: run
              mountPath: /run/flannel
            - name: flannel-cfg
              mountPath: /etc/kube-flannel/
      volumes:
        - name: run
          hostPath:
            path: /run/flannel
        - name: cni
          hostPath:
            path: /etc/cni/net.d
        - name: flannel-cfg
          configMap:
            name: kube-flannel-cfg
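The "Network" value in net-conf.json above (10.244.0.0/16) has to match the --pod-network-cidr passed to kubeadm init, otherwise Pods on different nodes generally cannot reach each other. A quick way to compare the two is to pull the value out of the manifest. The sketch below does this with sed; `flannel_network` is a hypothetical helper, and it assumes the manifest is saved as flannel.yaml as in these notes.

```shell
# Hypothetical helper: reads a flannel manifest on stdin and prints the
# first "Network" CIDR it finds in net-conf.json.
flannel_network() {
  sed -n 's/.*"Network"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p' | head -n 1
}

# Value passed to kubeadm init --pod-network-cidr in these notes.
pod_cidr="10.244.0.0/16"

# Usage, once flannel.yaml exists in the current directory:
#   [ "$(flannel_network < flannel.yaml)" = "$pod_cidr" ] || echo "CIDR mismatch" >&2
```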

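Check the node status

kubectl get node

Check the status of the system Pods

kubectl get pods -n kube-system

Deploy the first Kubernetes application (Nginx) and access it through the public IP

Create a Deployment (one kind of Pod controller)

kubectl create deployment xdclass-nginx --image=nginx:1.23.0

View the Deployment and Pods

kubectl get deployment,pod,svc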


Expose port 80 by creating a Service

kubectl expose deployment xdclass-nginx --port=80 --type=NodePort

View the port mapping

kubectl get pod,svc
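For a NodePort Service, the PORT(S) column shows a pair such as 80:31234/TCP: the Service port, then the port opened on every node (by default taken from the range 30000-32767). The application is then reachable at the node's public IP on that second port. The sketch below extracts it and builds the URL. `node_port` and `service_url` are hypothetical helpers, and 31234 and 203.0.113.10 are placeholder values, not taken from a real cluster.

```shell
# Hypothetical helper: extracts the node port from a PORT(S) value
# such as "80:31234/TCP".
node_port() {
  value=${1#*:}          # drop the service port and the colon
  echo "${value%%/*}"    # drop the protocol suffix
}

# Hypothetical helper: builds the URL for a node IP and a PORT(S) value.
service_url() {
  echo "http://$1:$(node_port "$2")"
}

service_url 203.0.113.10 "80:31234/TCP"

# On a live cluster the node port can also be read directly:
#   kubectl get svc xdclass-nginx -o jsonpath='{.spec.ports[0].nodePort}'
```

On a cloud host, the node port usually also has to be allowed in the security group or firewall before the public IP responds.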

posted on 2023-03-14 09:34 Indian_Mysore
