6. MapReduce Example: Reduce-Side Join


Procedure

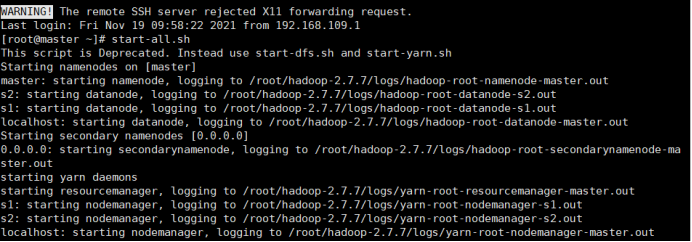

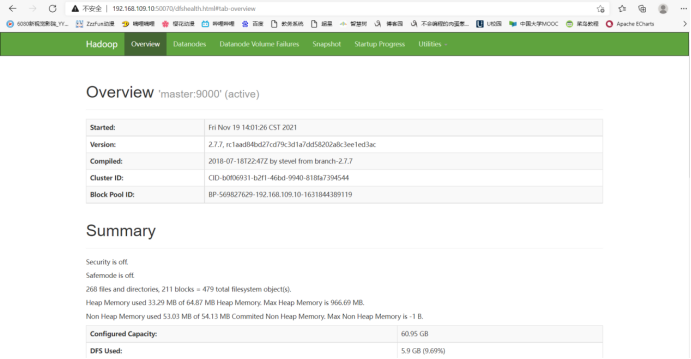

1. Start Hadoop

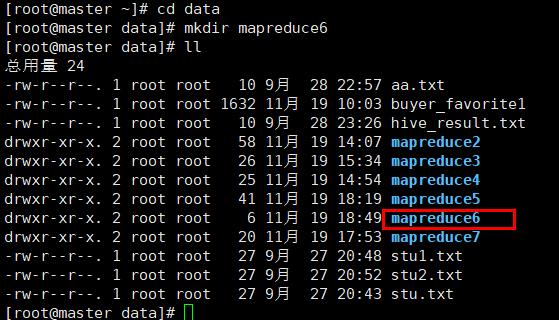

2. Create the mapreduce6 directory

Create the /data/mapreduce6 directory on the local Linux file system.

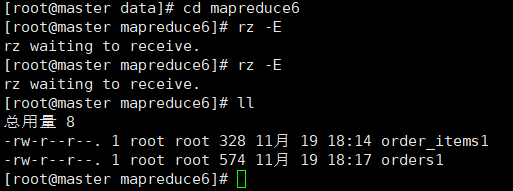

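3. Upload the files to Linux

(Create the text files yourself and place them in a folder of your choice. Separate the fields with tabs, because the program in step 7 splits each line on "\t".)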

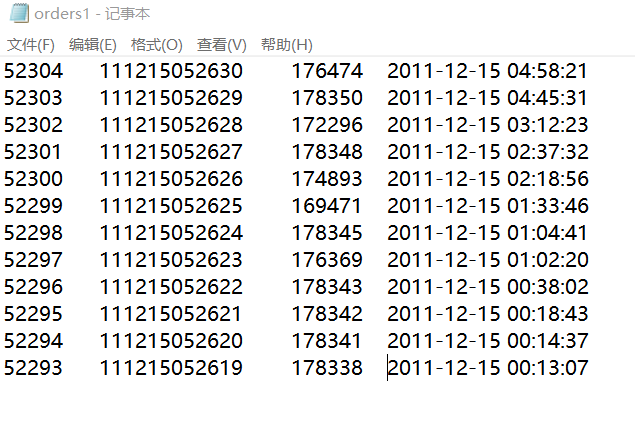

orders1

52304 111215052630 176474 2011-12-15 04:58:21

52303 111215052629 178350 2011-12-15 04:45:31

52302 111215052628 172296 2011-12-15 03:12:23

52301 111215052627 178348 2011-12-15 02:37:32

52300 111215052626 174893 2011-12-15 02:18:56

52299 111215052625 169471 2011-12-15 01:33:46

52298 111215052624 178345 2011-12-15 01:04:41

52297 111215052623 176369 2011-12-15 01:02:20

52296 111215052622 178343 2011-12-15 00:38:02

52295 111215052621 178342 2011-12-15 00:18:43

52294 111215052620 178341 2011-12-15 00:14:37

52293 111215052619 178338 2011-12-15 00:13:07

order_items1

252578 52293 1016840

252579 52293 1014040

252580 52294 1014200

252581 52294 1001012

252582 52294 1022245

252583 52294 1014724

252584 52294 1010731

252586 52295 1023399

252587 52295 1016840

252592 52296 1021134

252593 52296 1021133

252585 52295 1021840

252588 52295 1014040

252589 52296 1014040

252590 52296 1019043

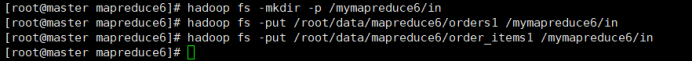
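Because ReduceJoin splits every line on a tab and then reads arr[2] or arr[3], a line whose fields are separated by spaces would fail with an ArrayIndexOutOfBoundsException. The following is a minimal sketch of a pre-upload check, not part of the original lab; the class name InputCheck and the method malformedLines are hypothetical names chosen for illustration.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

/** Hypothetical helper (not part of the lab): finds lines with the wrong number of tab-separated fields. */
final class InputCheck {

    /** Returns the 1-based numbers of non-blank lines whose field count differs from expectedFields. */
    static List<Integer> malformedLines(List<String> lines, int expectedFields) {
        List<Integer> bad = new ArrayList<>();
        for (int i = 0; i < lines.size(); i++) {
            String line = lines.get(i);
            if (line.trim().isEmpty()) {
                continue; // blank lines are ignored
            }
            // limit -1 keeps trailing empty fields so they are counted as well
            if (line.split("\t", -1).length != expectedFields) {
                bad.add(i + 1);
            }
        }
        return bad;
    }

    public static void main(String[] args) {
        List<String> orders = Arrays.asList(
            "52304\t111215052630\t176474\t2011-12-15 04:58:21", // tab-separated
            "52303 111215052629 178350 2011-12-15 04:45:31");   // space-separated
        // orders1 is expected to have 4 fields per line, order_items1 has 3.
        System.out.println("Malformed lines: " + malformedLines(orders, 4));
    }
}
```

In this sketch the second sample line is reported, since it contains no tab at all. For real files, the lines could be loaded with java.nio.file.Files.readAllLines before calling the check.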

4. Create a directory in HDFS

First create the /mymapreduce6/in directory in HDFS, then upload the orders1 and order_items1 files from the local /data/mapreduce6 directory into /mymapreduce6/in.

hadoop fs -mkdir -p /mymapreduce6/in

hadoop fs -put /root/data/mapreduce6/orders1 /mymapreduce6/in

hadoop fs -put /root/data/mapreduce6/order_items1 /mymapreduce6/in

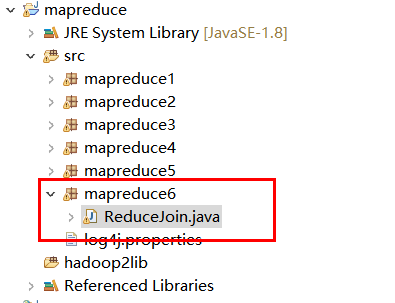

5. Create a Java Project

Create a new Java Project named mapreduce.

In the mapreduce project, create a package named mapreduce6.

In the mapreduce6 package, create a class named ReduceJoin.

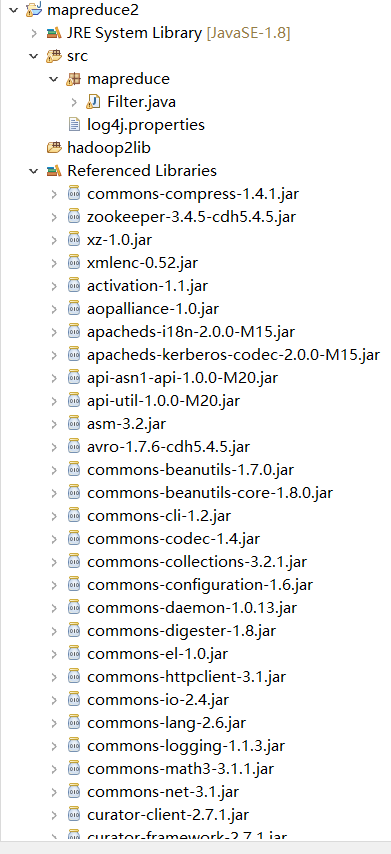

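6. Add the jar files the project depends on

Right-click the project and create a new folder named hadoop2lib to hold the jar files the project needs.

Copy the jar files from the hadoop2lib directory under /data/mapreduce2 into the project's hadoop2lib directory in Eclipse.

In my case the hadoop2lib jars were downloaded from the internet by hand, not with the command given in the lab tutorial.

Select all the jar files in the project's hadoop2lib directory and add them to the Build Path.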

7. Write the program code

ReduceJoin.java

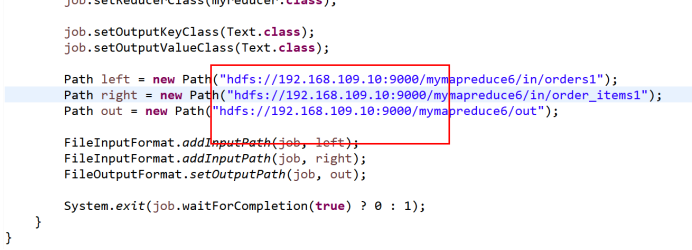

    package mapreduce6;

    import java.io.IOException;
    import java.util.Vector;

    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.input.FileSplit;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

    public class ReduceJoin {

        // Mapper: emits the order id as key and tags each value with its source file.
        public static class mymapper extends Mapper<Object, Text, Text, Text> {
            @Override
            protected void map(Object key, Text value, Context context)
                    throws IOException, InterruptedException {
                // The path of the current input split tells us which table the line belongs to.
                String filePath = ((FileSplit) context.getInputSplit()).getPath().toString();
                if (filePath.contains("orders1")) {
                    String line = value.toString();
                    String[] arr = line.split("\t");
                    // orders1: key = order id (column 0); tag "1+" marks the left table.
                    context.write(new Text(arr[0]), new Text("1+" + arr[2] + "\t" + arr[3]));
                    //System.out.println(arr[0] + "_1+" + arr[2]+"\t"+arr[3]);
                } else if (filePath.contains("order_items1")) {
                    String line = value.toString();
                    String[] arr = line.split("\t");
                    // order_items1: key = order id (column 1); tag "2+" marks the right table.
                    context.write(new Text(arr[1]), new Text("2+" + arr[2]));
                    //System.out.println(arr[1] + "_2+" + arr[2]);
                }
            }
        }

        // Reducer: separates the values of one order id by tag and emits their cross product.
        public static class myreducer extends Reducer<Text, Text, Text, Text> {
            @Override
            protected void reduce(Text key, Iterable<Text> values, Context context)
                    throws IOException, InterruptedException {
                Vector<String> left = new Vector<String>();
                Vector<String> right = new Vector<String>();
                for (Text val : values) {
                    String str = val.toString();
                    if (str.startsWith("1+")) {
                        left.add(str.substring(2));
                    } else if (str.startsWith("2+")) {
                        right.add(str.substring(2));
                    }
                }
                int sizeL = left.size();
                int sizeR = right.size();
                //System.out.println(key + "left:"+left);
                //System.out.println(key + "right:"+right);
                for (int i = 0; i < sizeL; i++) {
                    for (int j = 0; j < sizeR; j++) {
                        context.write(key, new Text(left.get(i) + "\t" + right.get(j)));
                        //System.out.println(key + " \t" + left.get(i) + "\t" + right.get(j));
                    }
                }
            }
        }

        public static void main(String[] args)
                throws IOException, ClassNotFoundException, InterruptedException {
            Job job = Job.getInstance();
            job.setJobName("reducejoin");
            job.setJarByClass(ReduceJoin.class);
            job.setMapperClass(mymapper.class);
            job.setReducerClass(myreducer.class);
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(Text.class);
            Path left = new Path("hdfs://192.168.109.10:9000/mymapreduce6/in/orders1");
            Path right = new Path("hdfs://192.168.109.10:9000/mymapreduce6/in/order_items1");
            Path out = new Path("hdfs://192.168.109.10:9000/mymapreduce6/out");
            FileInputFormat.addInputPath(job, left);
            FileInputFormat.addInputPath(job, right);
            FileOutputFormat.setOutputPath(job, out);
            System.exit(job.waitForCompletion(true) ? 0 : 1);
        }
    }

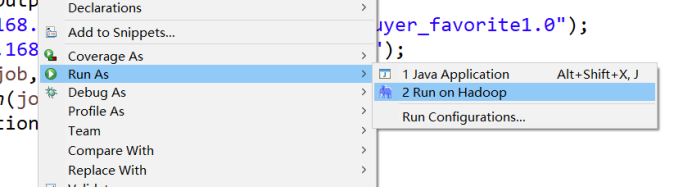
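To make the tag-and-join idea in ReduceJoin easier to follow, here is a minimal in-memory sketch that mimics the three phases (map, shuffle, reduce) with plain Java collections. It uses no Hadoop classes, and the class name JoinSketch and the method join are hypothetical names introduced only for illustration; a TreeMap stands in for the shuffle, which is an assumption of this sketch rather than how Hadoop groups keys internally.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** In-memory illustration of a reduce-side join; not a Hadoop program. */
final class JoinSketch {

    static List<String> join(List<String> orders, List<String> items) {
        // "Map" + "shuffle": tag each record and group it under its order id.
        Map<String, List<String>> grouped = new TreeMap<>();
        for (String line : orders) {
            String[] arr = line.split("\t");
            // key = order id (column 0); "1+" marks a record from orders1
            grouped.computeIfAbsent(arr[0], k -> new ArrayList<>())
                   .add("1+" + arr[2] + "\t" + arr[3]);
        }
        for (String line : items) {
            String[] arr = line.split("\t");
            // key = order id (column 1); "2+" marks a record from order_items1
            grouped.computeIfAbsent(arr[1], k -> new ArrayList<>())
                   .add("2+" + arr[2]);
        }

        // "Reduce": per key, separate the values by tag and emit the cross product.
        List<String> out = new ArrayList<>();
        for (Map.Entry<String, List<String>> e : grouped.entrySet()) {
            List<String> left = new ArrayList<>();
            List<String> right = new ArrayList<>();
            for (String v : e.getValue()) {
                if (v.startsWith("1+")) {
                    left.add(v.substring(2));
                } else if (v.startsWith("2+")) {
                    right.add(v.substring(2));
                }
            }
            for (String l : left) {
                for (String r : right) {
                    out.add(e.getKey() + "\t" + l + "\t" + r);
                }
            }
        }
        return out;
    }

    public static void main(String[] args) {
        List<String> orders = Arrays.asList(
            "52293\t111215052619\t178338\t2011-12-15 00:13:07",
            "52304\t111215052630\t176474\t2011-12-15 04:58:21");
        List<String> items = Arrays.asList(
            "252578\t52293\t1016840",
            "252579\t52293\t1014040");
        for (String row : join(orders, items)) {
            System.out.println(row);
        }
    }
}
```

Note that the cross product makes this an inner join: an order with no matching item, such as 52304 in the sample above, produces no output row because its right-hand list is empty.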

8. Run the code

In the ReduceJoin class file, right-click and choose Run As => Run on Hadoop to submit the MapReduce job to Hadoop.

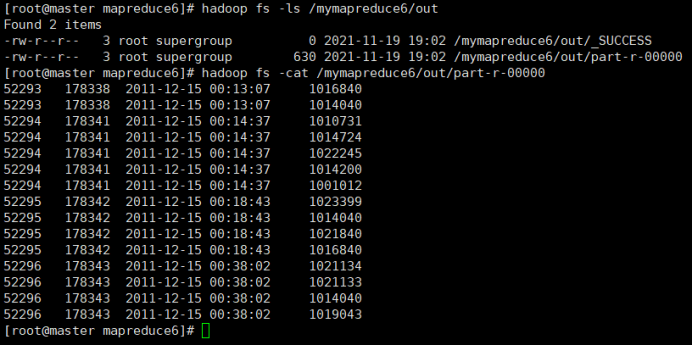

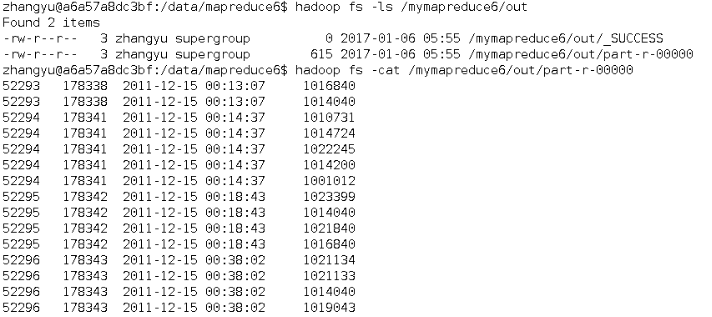

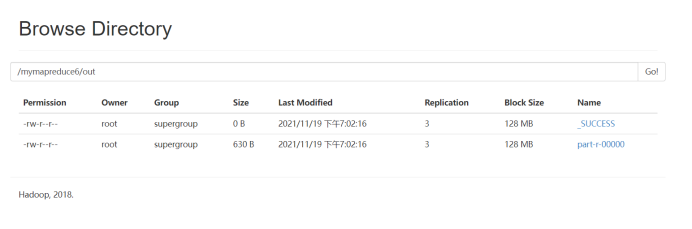

9. View the results

After the job finishes, switch to the command line and view the results under /mymapreduce6/out in HDFS.

hadoop fs -ls /mymapreduce6/out

hadoop fs -cat /mymapreduce6/out/part-r-00000

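Figure 1 shows my own run; Figure 2 shows the reference result from the lab tutorial.

A comparison shows that the two results are identical.

(Browser screenshot here.)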
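When comparing two outputs, keep in mind that MapReduce does not guarantee the order in which the values of one key reach the reducer, so rows for the same order id may appear in a different order from run to run. The following sketch compares two outputs as unordered collections of lines; the class name OutputCompare and the method sameLines are hypothetical, and the sample rows are illustrative only.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;

/** Hypothetical helper: compares two job outputs while ignoring line order. */
final class OutputCompare {

    /** Returns true when both lists contain the same lines the same number of times. */
    static boolean sameLines(List<String> a, List<String> b) {
        List<String> x = new ArrayList<>(a);
        List<String> y = new ArrayList<>(b);
        Collections.sort(x); // sort copies so the callers' lists are left untouched
        Collections.sort(y);
        return x.equals(y);
    }

    public static void main(String[] args) {
        List<String> mine = Arrays.asList(
            "52293\t178338\t2011-12-15 00:13:07\t1014040",
            "52293\t178338\t2011-12-15 00:13:07\t1016840");
        List<String> reference = Arrays.asList(
            "52293\t178338\t2011-12-15 00:13:07\t1016840",
            "52293\t178338\t2011-12-15 00:13:07\t1014040");
        System.out.println("Same content: " + sameLines(mine, reference));
    }
}
```

To apply this to real output, the content of part-r-00000 could first be saved to a local file and read with java.nio.file.Files.readAllLines.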

