用python爬取动态网页时,普通的requests,urllib2无法实现。例如有些网站点击下一页时,会加载新的内容,但是网页的URL却没有改变(没有传入页码相关的参数),requests、urllib2无法抓取这些动态加载的内容,此时就需要使用Selenium了。

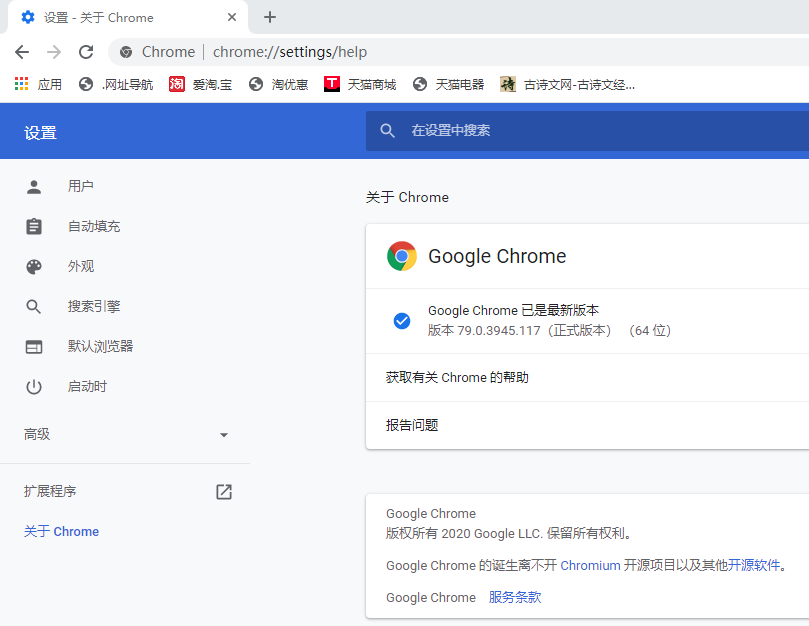

使用Selenium需要选择一个调用的浏览器并下载好对应的驱动,我使用的是Chrome浏览器。

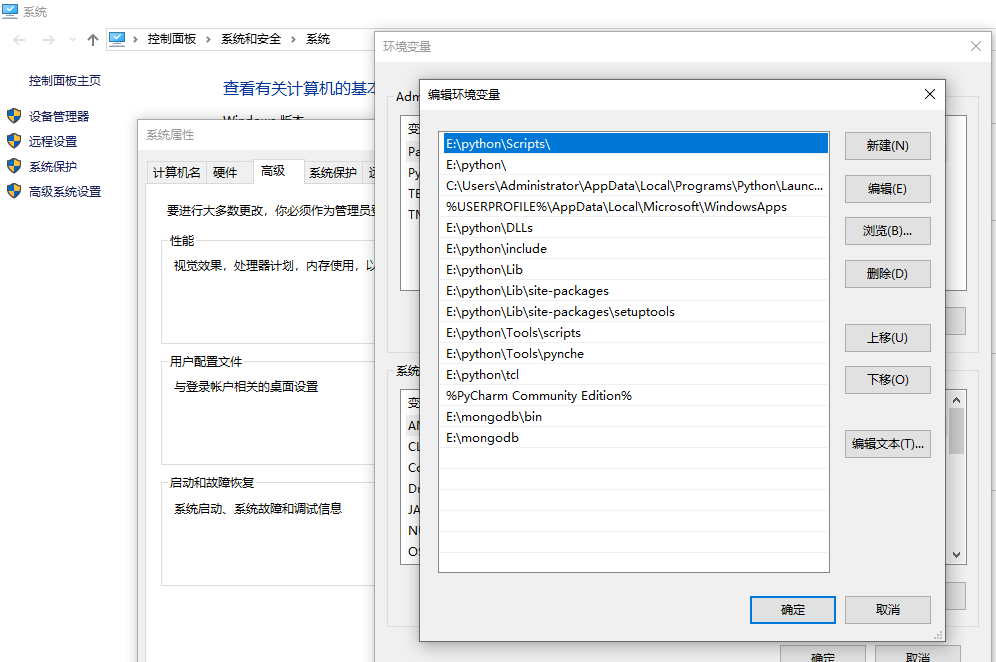

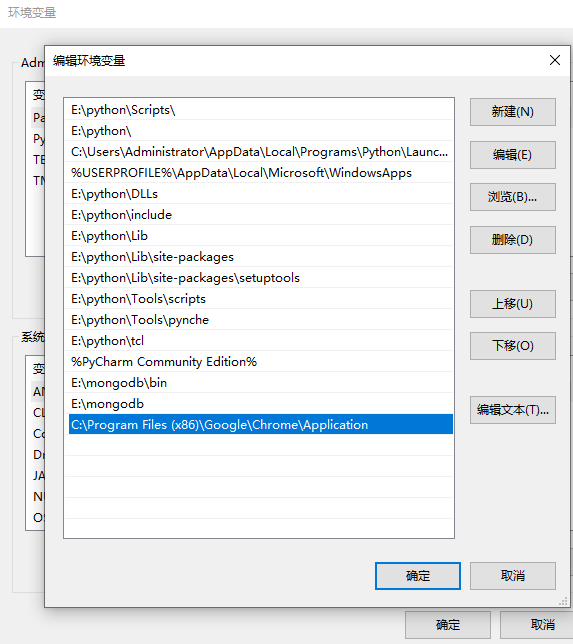

将下载好的chromedrive.exe文件复制到系统路径:E:\python\Scripts下,如果安装python的时候打path勾的话这个目录就会配置到系统path里了,如果没有的话,请手动把这个路径添加到path路径下。

下载的浏览器驱动也要看清楚对应自己浏览器版本的,如果驱动与浏览器版本不对是会报错了。

chromedriver与chrome浏览器对照表参考:

https://blog.csdn.net/huilan_same/article/details/51896672

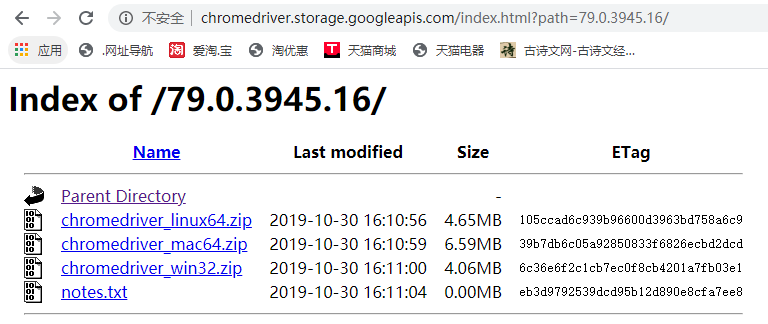

国内不能直接访问Chrome官网,可以在ChromeDriver仓库中下载:http://chromedriver.storage.googleapis.com/index.html

我的浏览器需要下载的是倒数第三个,请读者根据自己的电脑和浏览器的版本实际情况下载;

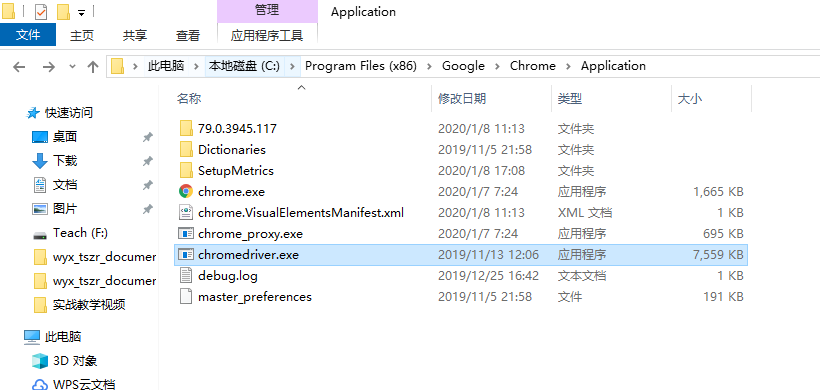

放到上面的python那个安装文件夹后,我记得也是需要放到chrome浏览器安装目录下的

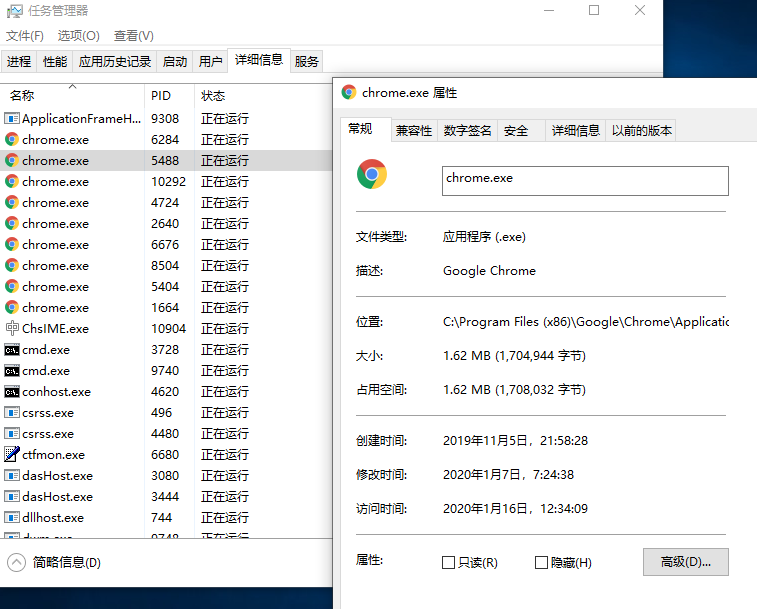

查找chrome安装路径

把下载的驱动放到这个路径下

然后也把chrome浏览器的安装路径添加到path路径中。

配置好之后,实现爬虫的代码如下:

import time import random import requests import urllib.request from selenium import webdriver from selenium.webdriver.common.by import By from selenium.webdriver.support.ui import WebDriverWait from selenium.webdriver.support import expected_conditions as EC def get_url(url): time.sleep(3) return(requests.get(url)) if __name__ == "__main__": driver = webdriver.Chrome() # 初始化一个浏览器对象 dep_cities = ["北京","上海","广州","深圳","天津","杭州","南京","济南","重庆","青岛","大连","宁波","厦门","成都","武汉","哈尔滨","沈阳","西安","长春","长沙","福州","郑州","石家庄","苏州","佛山","烟台","合肥","昆明","唐山","乌鲁木齐","兰州","呼和浩特","南通","潍坊","绍兴","邯郸","东营","嘉兴","泰州","江阴","金华","鞍山","襄阳","南阳","岳阳","漳州","淮安","湛江","柳州","绵阳"] for dep in dep_cities: strhtml = get_url('https://m.dujia.qunar.com/golfz/sight/arriveRecommend?dep=' + urllib.request.quote(dep) + '&exclude=&extensionImg=255,175') arrive_dict = strhtml.json() for arr_item in arrive_dict['data']: for arr_item_1 in arr_item['subModules']: for query in arr_item_1['items']: driver.get("https://fh.dujia.qunar.com/?tf=package") # 等待出发地输入框加载完毕,最多等待10s WebDriverWait(driver, 10).until(EC.presence_of_element_located((By.ID, "depCity"))) # 清空出发地文本框,输入出发地和目的地,点击“开始定制”按钮 driver.find_element_by_xpath("//*[@id='depCity']").clear() driver.find_element_by_xpath("//*[@id='depCity']").send_keys(dep) driver.find_element_by_xpath("//*[@id='arrCity']").send_keys(query["query"]) driver.find_element_by_xpath("/html/body/div[2]/div[1]/div[2]/div[3]/div/div[2]/div/a").click() print("dep:%s arr:%s" % (dep, query["query"])) for i in range(10): time.sleep(random.uniform(5, 6)) # 如果定位不到页码按钮,说明搜索结果为空 pageBtns = driver.find_elements_by_xpath("html/body/div[2]/div[2]/div[8]") if pageBtns == []: break # 找出所有的路线信息DOM元素 routes = driver.find_elements_by_xpath("html/body/div[2]/div[2]/div[7]/div[2]/div") for route in routes: result = { 'date': time.strftime('%Y-%m-%d', time.localtime(time.time())), 'dep': dep, 'arrive': query['query'], 'result': route.text } print(result) if i < 9: # 找到“下一页"按钮并点击翻页 btns = driver.find_elements_by_xpath("html/body/div[2]/div[2]/div[8]/div/div/a") for a in btns: if a.text == u"下一页": a.click() break driver.close()

可能是网络慢运行的时间有点长才有输出。爬虫的话建议网速带宽要大些这样就会避免一些很乱的错误了。