Hadoop 3.x: Commissioning and Decommissioning DataNodes

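Before commissioning a node, set up the new host's environment variables, SSH access, and related configuration. My environment is as follows: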

hadoop002 (NameNode), hadoop003 (ResourceManager), hadoop004 (SecondaryNameNode); hadoop005 is the host to be added.

I. Commissioning a DataNode

1. On the NameNode host, create a dfs.hosts file under ${HADOOP_HOME}/etc/hadoop/. List every host that is permitted to connect, including the one you are adding:

hadoop002

hadoop003

hadoop004

hadoop005
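Editing the list by hand works, but repeated runs can leave duplicate entries. As a minimal sketch (add_host is a hypothetical helper, not a Hadoop command, and the usage path assumes the layout described in this post), an idempotent append could look like this:

```shell
#!/bin/sh
# add_host FILE HOST: append HOST to FILE unless an identical line already exists.
# Hypothetical helper, not part of Hadoop. Assumes FILE, if non-empty,
# already ends with a newline.
add_host() {
    file=$1
    host=$2
    touch "$file"                      # create the file on first use
    # -F fixed string, -x whole-line match, -q quiet
    if ! grep -Fxq -- "$host" "$file"; then
        printf '%s\n' "$host" >> "$file"
    fi
}
```

A call such as `add_host "${HADOOP_HOME}/etc/hadoop/dfs.hosts" hadoop005` would then be safe to repeat.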

2. Open hdfs-site.xml and add the following configuration

<property>
  <name>dfs.hosts</name>
  <value>/opt/module/hadoop-3.1.1/etc/hadoop/dfs.hosts</value>
  <description>Names a file that contains a list of hosts that are
  permitted to connect to the namenode. The full pathname of the file
  must be specified. If the value is empty, all hosts are
  permitted.</description>
</property>

3. Refresh the NameNode and YARN

hdfs dfsadmin -refreshNodes

yarn rmadmin -refreshNodes

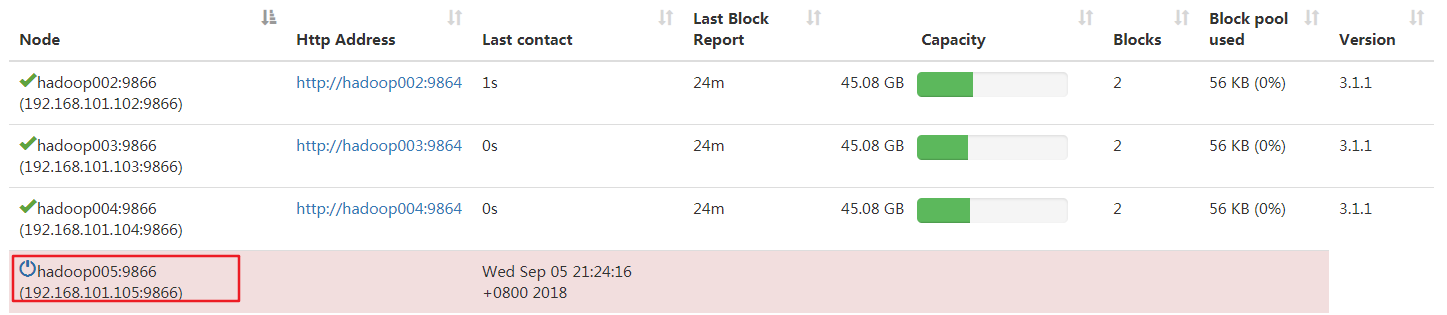

Checking hadoop005 in the web UI at this point shows its state as dead.

4. Start the DataNode and NodeManager on hadoop005

hdfs --daemon start datanode

yarn --daemon start nodemanager

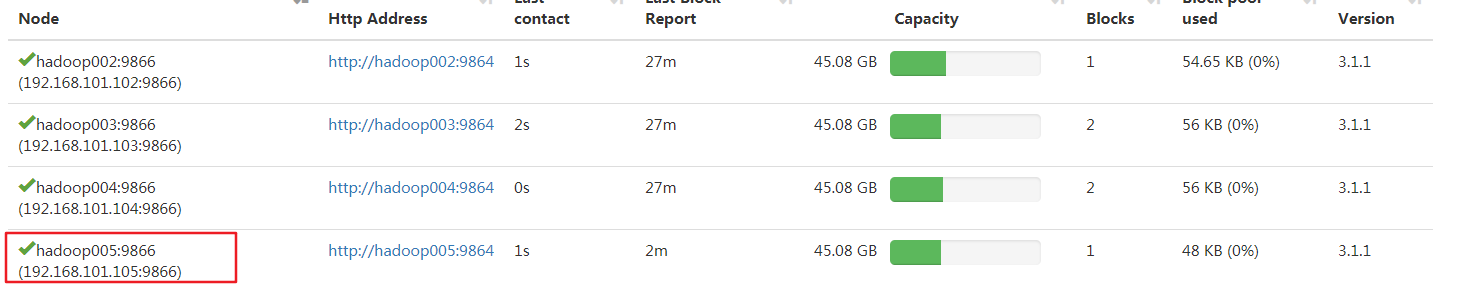

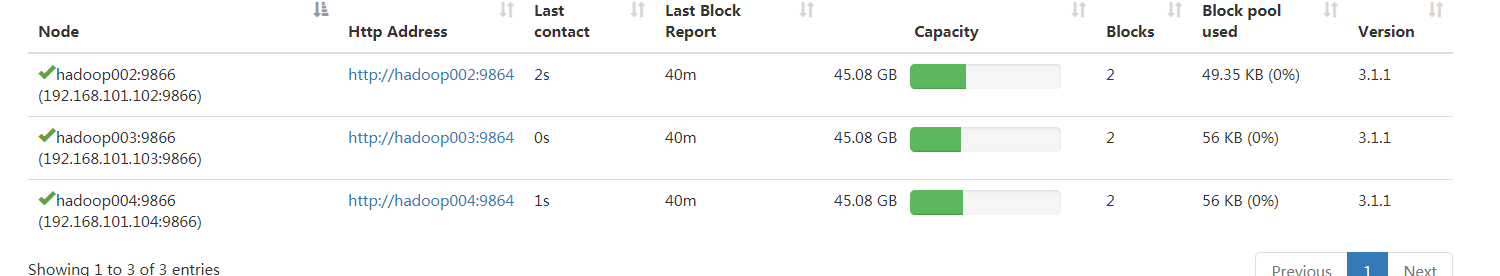

Check the status again.

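Commissioning is essentially done at this point, but you should also add the hostname to the workers file so that the next run of start-dfs starts the newly added hadoop005 node directly: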

hadoop002

hadoop003

hadoop004

hadoop005
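Since dfs.hosts acts as an allow list, a host that appears in workers but not in dfs.hosts would presumably be started by start-dfs and then refused by the NameNode. The following is a rough sketch of a consistency check; missing_from_allowlist is a hypothetical helper name, and both files are assumed to hold one hostname per line:

```shell
#!/bin/sh
# missing_from_allowlist WORKERS ALLOWLIST
# Print every non-blank line of WORKERS that has no identical line in ALLOWLIST.
# Hypothetical helper, not part of Hadoop.
missing_from_allowlist() {
    workers=$1
    allowlist=$2
    # Drop blank lines, then keep only lines that match no whole line of the allow list.
    grep -v '^[[:space:]]*$' "$workers" | grep -Fxv -f "$allowlist"
}
```

Running it against workers and dfs.hosts in ${HADOOP_HOME}/etc/hadoop/ should print nothing when the two files agree.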

If the data needs to be rebalanced, run start-balancer.sh afterwards (and remember to update your distribution script).

II. Decommissioning an Old Node

1. On the NameNode host, create a dfs.hosts.exclude file under ${HADOOP_HOME}/etc/hadoop/ and add the hostname of the node you want to decommission:

hadoop005

2. Open hdfs-site.xml and add the following configuration

<property>
  <name>dfs.hosts.exclude</name>
  <value>/opt/module/hadoop-3.1.1/etc/hadoop/dfs.hosts.exclude</value>
  <description>Names a file that contains a list of hosts that are
  not permitted to connect to the namenode. The full pathname of the
  file must be specified. If the value is empty, no hosts are
  excluded.</description>
</property>

3. Refresh the NameNode and YARN

hdfs dfsadmin -refreshNodes

yarn rmadmin -refreshNodes

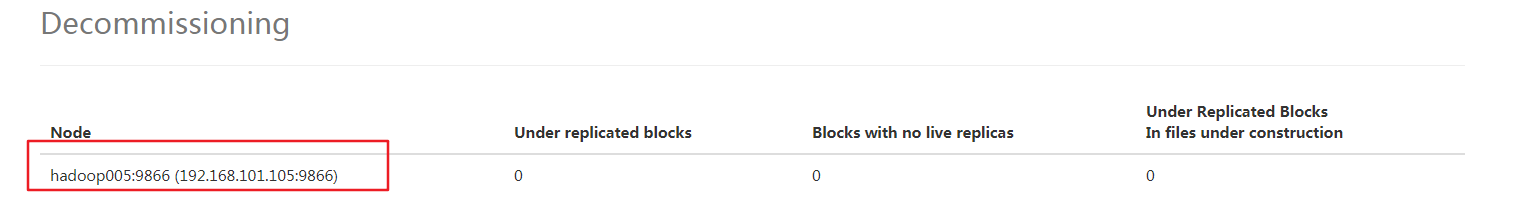

Checking hadoop005 in the web UI at this point shows that decommissioning is in progress.

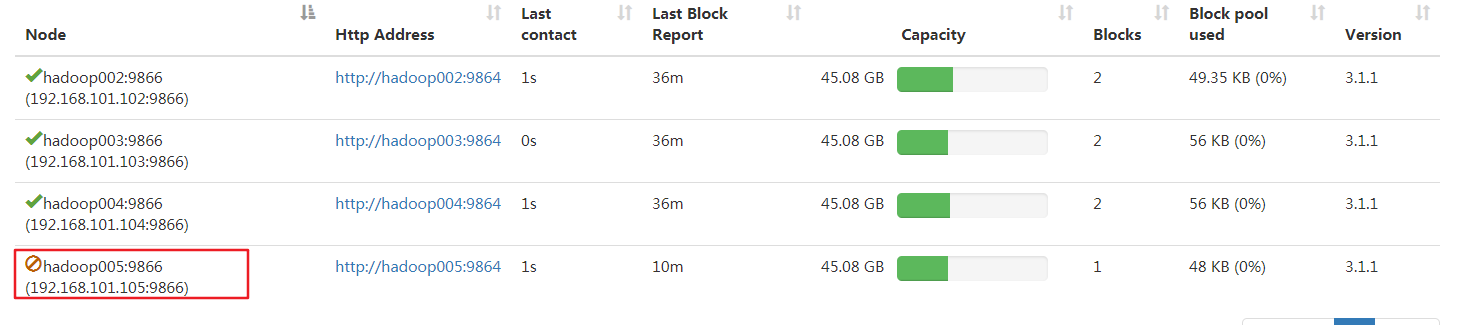

Keep waiting until hadoop005 reaches the decommissioned state.
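Instead of watching the web UI, the wait can be scripted around `hdfs dfsadmin -report`. This is only a sketch: decommission_status and wait_decommissioned are hypothetical helper names, and the "Hostname:" / "Decommission Status :" line layout is an assumption about the report format that should be checked against your Hadoop version.

```shell
#!/bin/sh
# decommission_status HOST: read `hdfs dfsadmin -report` text on stdin and print
# the "Decommission Status" value of the datanode whose Hostname line is HOST.
# Hypothetical helper; the report layout it parses is an assumption.
decommission_status() {
    awk -v host="$1" '
        /^Hostname:/ { current = $2 }
        /^Decommission Status/ && current == host {
            sub(/^[^:]*:[ \t]*/, "")   # strip the label up to and including the colon
            print
            exit
        }
    '
}

# wait_decommissioned HOST: poll every 30 seconds until HOST reports "Decommissioned".
# Loops forever if HOST never reaches that state, so run it under supervision.
wait_decommissioned() {
    until [ "$(hdfs dfsadmin -report | decommission_status "$1")" = "Decommissioned" ]; do
        sleep 30
    done
}
```

With these defined, `wait_decommissioned hadoop005` would block until it is safe to continue with the next step.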

4. Stop the DataNode and NodeManager on hadoop005

hdfs --daemon stop datanode

yarn --daemon stop nodemanager

5. Remove hadoop005 from dfs.hosts

6. Refresh the NameNode and YARN again, then check the web UI

7. Remove hadoop005 from the workers file so that start-dfs no longer starts the DataNode on hadoop005

8. Rebalance the data with start-balancer.sh

9. Update the distribution script
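Steps 5 and 7 both come down to deleting one hostname from a one-host-per-line file. A minimal sketch of that edit follows; remove_host is a hypothetical helper, not a Hadoop command, and it matches whole lines only so that a name which is merely a prefix of another host is left alone.

```shell
#!/bin/sh
# remove_host FILE HOST: delete every line of FILE that is exactly HOST.
# Hypothetical helper, not part of Hadoop.
remove_host() {
    file=$1
    host=$2
    [ -f "$file" ] || return 1
    tmp=$(mktemp) || return 1
    # grep exits 1 when no lines remain to print; that is not an error here.
    grep -Fxv -- "$host" "$file" > "$tmp" || true
    cat "$tmp" > "$file"               # overwrite in place, keeping FILE's owner and mode
    rm -f "$tmp"
}
```

For example, `remove_host "${HADOOP_HOME}/etc/hadoop/workers" hadoop005` covers step 7, and the same call with dfs.hosts covers step 5.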
