003. Starting and running Spark on Windows with spark-shell.cmd

Extract the archive

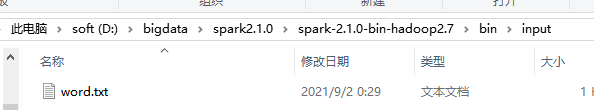

D:\bigdata\spark2.1.0\spark-2.1.0-bin-hadoop2.7\bin

Create a file, input\word.txt under the bin directory, with the following contents

spark scala

hadoop scala

scala spark

hive hadoop
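The file can be created in any text editor. As an alternative, here is a minimal Scala sketch that writes the four lines listed above. The helper name writeSampleFile is hypothetical (it is not part of Spark), and the file name word.txt is taken from the path used in the textFile call later in this post.

```scala
import java.nio.charset.StandardCharsets
import java.nio.file.{Files, Path, Paths}

// Hypothetical helper: writes the four sample lines to <dir>/word.txt,
// creating <dir> first if it does not exist. Returns the path of the file.
def writeSampleFile(dir: Path): Path = {
  val lines = Seq("spark scala", "hadoop scala", "scala spark", "hive hadoop")
  Files.createDirectories(dir)
  val target = dir.resolve("word.txt")
  // One word pair per line, UTF-8 encoded.
  Files.write(target, lines.mkString("\n").getBytes(StandardCharsets.UTF_8))
}
```

For the layout used in this post, the call would be writeSampleFile(Paths.get("D:/bigdata/spark2.1.0/spark-2.1.0-bin-hadoop2.7/bin/input")). It can be pasted into any Scala REPL, including spark-shell.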

In the bin directory, run

spark-shell.cmd

Read and process the file

scala> sc.textFile("file:///D:/bigdata/spark2.1.0/spark-2.1.0-bin-hadoop2.7/bin/input/word.txt").flatMap(_.split(" ")).map((_,1)).reduceByKey(_+_).collect

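res5: Array[(String, Int)] = Array((scala,3), (hive,1), (spark,1), (hadoop,2), (saprk,1))

The (saprk,1) entry indicates that one occurrence of spark in the file actually read was mistyped as saprk. With the four lines exactly as listed above, the count for spark would be 2.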
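To see what each stage of the one-liner does, the same word count can be sketched on an ordinary Scala collection, without Spark. This is an illustration only: wordCount is a hypothetical helper, and groupBy followed by a sum stands in for Spark's reduceByKey, which does not exist on plain collections.

```scala
// Plain-Scala word count that mirrors the flatMap / map / reduceByKey pipeline.
// Hypothetical helper, not Spark code: groupBy + sum replaces reduceByKey.
def wordCount(lines: Seq[String]): Map[String, Int] =
  lines
    .flatMap(_.split(" "))      // split every line into words
    .filter(_.nonEmpty)         // drop empty tokens left by repeated spaces
    .map(word => (word, 1))     // pair each word with an initial count of 1
    .groupBy(_._1)              // collect the pairs that share a word
    .map { case (word, pairs) => (word, pairs.map(_._2).sum) } // add up the 1s

// The four lines from the sample file, as listed earlier in this post.
val sample = Seq("spark scala", "hadoop scala", "scala spark", "hive hadoop")
val counts = wordCount(sample)
```

For these four lines, counts maps scala to 3, spark to 2, hadoop to 2 and hive to 1. In Spark the same steps run distributed across partitions, and collect brings the result back to the driver as an Array.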

