SCSI and iSCSI

计算机体系结构

SCSI的定义:

SCSI: Small Computer System Interface

SCSI是一种I/O技术

SCSI规范了一种并行的I/O总线和相关协议

SCSI的数据传输是以块的方式进行的

SCSI的特点:

设备无关性

多设备并行

高带宽

低系统开销

SCSI总线:

SCSI总线是SCSI设备之间传输数据的通路

SCSI总线又被称为SCSI通道

SCSI ID:

一个独立的SCSI总线按照规格不同可以支持8或16个SCSI设备,设备的编号需要通过SCSI ID来进行控制

系统中每个SCSI设备都必须有自己唯一的SCSI ID,SCSI ID实际上就是这些设备的地址

窄SCSI总线最多允许8个、宽SCSI总线最多允许16个不同的SCSI设备和它进行连接

LUN:

LUN(Logical Unit Number,逻辑单元号)是为了使用和描述更多设备及对象而引进的一个方法

每个SCSI ID上最多32个LUN,一个LUN对应一个逻辑设备

SCSI的标准:

SCSI-1

1976年ANSI标准

SCSI-2

SCSI-1的后续接口

SCSI-3

更高速度的接口类型:Ultra-2/Ultra-160/Ultra-320

SCSI & SAS

iscsi

SAN

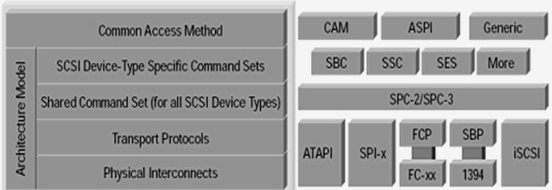

SCSI-3 SCSI model:

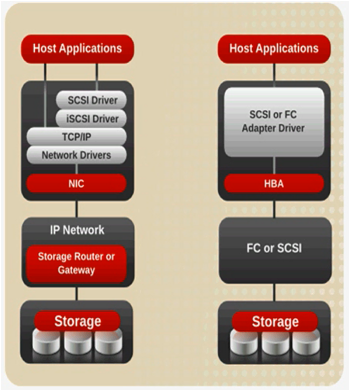

iSCSI versus SCSI/FC access to storage:

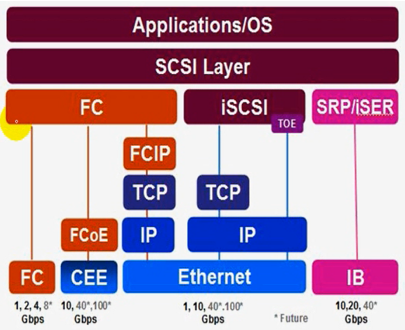

FCoE vs FC vs ISCSI vs IB

SAN vs NAS

iSCSI Protocol:

iSCSI HBA卡:

采用内建SCSI指令及TOE引擎的ASIC芯片的适配卡,在三种iSCSI Initiator中,价格最贵,但性能最佳

iSCSI TOE卡:

内建TOE引擎的ASIC芯片适配卡,由于SCSI指令仍以软件方式运作,所以仍会吃掉些许的CPU资源

ISCSI Initiator驱动程序:

目前不论Micriosoft Windows、IBM AIX、HP-UX、Linux、Novell Netware等各家操作系统,皆已陆续提供这方面的服务,其中以微软最为积极,也最全面。在价格上,比起前两种方案,远为低廉,甚至完全免费

但由于Initiator驱动程序工作时会耗费大量的CPU使用率及系统资源,所以性能最差

iscsi: 监听在tcp3260端口;

iSCSI Target: scsi-target-utils

3260

客户端认证方式:

1、基于IP

2、基于用户,CHAP

iSCSI Initiator: iscsi-initiator-utils

open-iscsi

tgtadm模式化命令:

--mode

常用模式:target、logicalunit、account

target --op

new、delete、show、update、bind、unbind

logicalunit --op

new、delete

account --op

new、delete、bind、unbind

--lld, -L:指定驱动;

--tid, -t:指定target的id号;

--lun, -l:指定逻辑单元号;

--backing-store <path>, -b:指定存储设备;

--initiator-address <address>, -I:指定绑定IP地址;

--targetname <targetname>, -T:指定target名称;

trgetname:

iqn.yyyy-mm.<reversed domain name>[:identifier]:全局唯一标识名,yyyy年,mm月,reversed domain name公司域名反过来写;

iqn.2013-05.com.magedu:tstore.disk1

iscsiadm模式化命令:

-m {discovery|node|session|iface}

discovery: 发现某服务器是否有target输出,以及输出了那些target;

node: 管理跟某target的关联关系;

session: 会话管理

iface: 接口管理

iscsiadm -m discovery [ -d debug_level ] [ -P printlevel ] [ -I iface -t type -p ip:port [ -l ] ] | [ [ -p

ip:port ] [ -l | -D ] ]

-d: 指定调试级别,0-8;

-I: 指定那个接口;

-t type: 指定类型,SendTargets(st), SLP, and iSNS;

-p: IP:port:指定IP和端口;

iscsiadm -m discovery -d 2 -t st -p 172.16.100.100

iiscsiadm -m node [ -d debug_level ] [ -P printlevel ] | [ -L all,manual,automatic ] [ -U all,manual,automatic ] [ -S ] [ [ -T targetname -p ip:port -I ifaceN ] [ -l | -u | -R | -s] ] [ [ -o operation ] [ -n name ] [ -v value ] ]

-L all,manual,automatic:登录,all登录所有target,manual手动登录,automatic自动登录;

-U all,manual,automatic:登出;

-S:显示

-T targetname :登录到那个target名字;

-p ip:port:那个服务器的target;

-I ifaceN:指定那个网卡登录;

-l:登录;

-u:登出;

-R:重新登录;

-o operation:操作

-n name:名字

-v value:值

iscsi服务端:

[root@steppingstone ~]# fdisk -l(查看分区情况)

Disk /dev/sda: 53.6 GB, 53687091200 bytes

255 heads, 63 sectors/track, 6527 cylinders

Units = cylinders of 16065 * 512 = 8225280 bytes

Device Boot Start End Blocks Id System

/dev/sda1 * 1 13 104391 83 Linux

/dev/sda2 14 2624 20972857+ 83 Linux

/dev/sda3 2625 2755 1052257+ 82 Linux swap / Solaris

/dev/sda4 2756 6527 30298590 5 Extended

/dev/sda5 2756 5188 19543041 8e Linux LVM

[root@steppingstone ~]# fdisk /dev/sda(磁盘管理,进入交互模式)

The number of cylinders for this disk is set to 6527.

There is nothing wrong with that, but this is larger than 1024,

and could in certain setups cause problems with:

1) software that runs at boot time (e.g., old versions of LILO)

2) booting and partitioning software from other OSs

(e.g., DOS FDISK, OS/2 FDISK)

Command (m for help): n(创建分区)

Command action

e extended

p primary partition (1-4)

e(扩展分区)

Selected partition 4(指定分区号)

First cylinder (2756-6527, default 2756):

Using default value 2756

Last cylinder or +size or +sizeM or +sizeK (2756-6527, default 6527):

Using default value 6527

Command (m for help): n

First cylinder (2756-6527, default 2756):

Using default value 2756

Last cylinder or +size or +sizeM or +sizeK (2756-6527, default 6527): +10G(新建10G分区)

Command (m for help): p(显示分区情况)

Disk /dev/sda: 53.6 GB, 53687091200 bytes

255 heads, 63 sectors/track, 6527 cylinders

Units = cylinders of 16065 * 512 = 8225280 bytes

Device Boot Start End Blocks Id System

/dev/sda1 * 1 13 104391 83 Linux

/dev/sda2 14 2624 20972857+ 83 Linux

/dev/sda3 2625 2755 1052257+ 82 Linux swap / Solaris

/dev/sda4 2756 6527 30298590 5 Extended

/dev/sda5 2756 3972 9775521 83 Linux

Command (m for help): n(新建分区)

First cylinder (3973-6527, default 3973):

Using default value 3973

Last cylinder or +size or +sizeM or +sizeK (3973-6527, default 6527): +10G(创建10G分区)

Command (m for help): p(显示分区情况)

Disk /dev/sda: 53.6 GB, 53687091200 bytes

255 heads, 63 sectors/track, 6527 cylinders

Units = cylinders of 16065 * 512 = 8225280 bytes

Device Boot Start End Blocks Id System

/dev/sda1 * 1 13 104391 83 Linux

/dev/sda2 14 2624 20972857+ 83 Linux

/dev/sda3 2625 2755 1052257+ 82 Linux swap / Solaris

/dev/sda4 2756 6527 30298590 5 Extended

/dev/sda5 2756 3972 9775521 83 Linux

/dev/sda6 3973 5189 9775521 83 Linux

Command (m for help): w(保存退出)

The partition table has been altered!

Calling ioctl() to re-read partition table.

WARNING: Re-reading the partition table failed with error 16: 设备或资源忙.

The kernel still uses the old table.

The new table will be used at the next reboot.

Syncing disks.

[root@steppingstone ~]# partprobe /dev/sda(让内核重新加载/dev/sda分区表)

[root@steppingstone ~]# yum -y install scsi-target-utils(通过yum源安装scsi-target-utils软件,-y所有询问回答yes)

[root@steppingstone ~]# rpm -ql scsi-target-utils(查看scsi-target-utils安装生成那些文件)

/etc/rc.d/init.d/tgtd(服务器端脚本)

/etc/sysconfig/tgtd

/etc/tgt/targets.conf(配置文件)

/usr/sbin/tgt-admin

/usr/sbin/tgt-setup-lun

/usr/sbin/tgtadm(在命令行创建target和lun工具)

/usr/sbin/tgtd

/usr/sbin/tgtimg

/usr/share/doc/scsi-target-utils-1.0.14

/usr/share/doc/scsi-target-utils-1.0.14/README

/usr/share/doc/scsi-target-utils-1.0.14/README.iscsi

/usr/share/doc/scsi-target-utils-1.0.14/README.iser

/usr/share/doc/scsi-target-utils-1.0.14/README.lu_configuration

/usr/share/doc/scsi-target-utils-1.0.14/README.mmc

/usr/share/man/man8/tgt-admin.8.gz

/usr/share/man/man8/tgt-setup-lun.8.gz

/usr/share/man/man8/tgtadm.8.gz

[root@steppingstone ~]# service tgtd start(启动tgtd服务)

Starting SCSI target daemon: Starting target framework daemon

[root@steppingstone ~]# netstat -tnlp(查看系统服务,-t代表tcp,-n以数字显示,-l监听端口,-p显示服务名称)

Active Internet connections (only servers)

Proto Recv-Q Send-Q Local Address Foreign Address State PID/Program name

tcp 0 0 127.0.0.1:2208 0.0.0.0:* LISTEN 3586/./hpiod

tcp 0 0 0.0.0.0:929 0.0.0.0:* LISTEN 3718/rpc.mountd

tcp 0 0 0.0.0.0:2049 0.0.0.0:* LISTEN -

tcp 0 0 0.0.0.0:34243 0.0.0.0:* LISTEN -

tcp 0 0 0.0.0.0:901 0.0.0.0:* LISTEN 3689/rpc.rquotad

tcp 0 0 0.0.0.0:911 0.0.0.0:* LISTEN 3273/rpc.statd

tcp 0 0 0.0.0.0:111 0.0.0.0:* LISTEN 3232/portmap

tcp 0 0 0.0.0.0:22 0.0.0.0:* LISTEN 3609/sshd

tcp 0 0 127.0.0.1:631 0.0.0.0:* LISTEN 3623/cupsd

tcp 0 0 127.0.0.1:25 0.0.0.0:* LISTEN 3768/sendmail

tcp 0 0 127.0.0.1:6010 0.0.0.0:* LISTEN 3976/sshd

tcp 0 0 0.0.0.0:3260 0.0.0.0:* LISTEN 4071/tgtd

tcp 0 0 127.0.0.1:2207 0.0.0.0:* LISTEN 3591/python

tcp 0 0 :::22 :::* LISTEN 3609/sshd

tcp 0 0 ::1:6010 :::* LISTEN 3976/sshd

tcp 0 0 :::3260 :::* LISTEN 4071/tgtd 提示:tgtd监听tcp的3260端口;

[root@steppingstone ~]# chkconfig tgtd on(让tgtd服务开机自动启动)

[root@steppingstone ~]# tgtadm -h(查看tgtadm命令的帮助)

Usage: tgtadm [OPTION]

Linux SCSI Target Framework Administration Utility, version

--lld <driver> --mode(模式) target(操作target) --op(操作) new(新建target) --tid <id> --targetname <name>

add a new target with <id> and <name>. <id> must not be zero.

--lld <driver> --mode target --op delete(删除target) --tid <id>

delete the specific target with <id>. The target must

have no activity.

--lld <driver> --mode target --op show(显示target)

show all the targets.

--lld <driver> --mode target --op show --tid <id>

show the specific target's parameters.

--lld <driver> --mode target --op update(更新target) --tid <id> --name <param> --value <value>

change the target parameters of the specific

target with <id>.

--lld <driver> --mode target --op bind(绑定target) --tid <id> --initiator-address <src>(将某个initiator的IP地址和target绑定,做基于IP授权)

enable the target to accept the specific initiators.

--lld <driver> --mode target --op unbind(解绑target) --tid <id> --initiator-address <src>

disable the specific permitted initiators.

--lld <driver> --mode logicalunit(操作逻辑单元) --op new(创建logicalunit) --tid <id>(target) --lun <lun> (逻辑单元号)\

--backing-store <path> --bstype <type> --bsoflags <options>

add a new logical unit with <lun> to the specific

target with <id>. The logical unit is offered

to the initiators. <path> must be block device files

(including LVM and RAID devices) or regular files.

bstype option is optional.

bsoflags supported options are sync and direct

(sync:direct for both).

--lld <driver> --mode logicalunit --op delete(删除logicalunit) --tid <id> --lun <lun>

delete the specific logical unit with <lun> that

the target with <id> has.

--lld <driver> --mode account(绑定用户) --op new(创建帐号) --user <name> --password <pass>

add a new account with <name> and <pass>.

--lld <driver> --mode account --op delete --user <name>

delete the specific account having <name>.

--lld <driver> --mode account --op bind(绑定帐号) --tid <id> --user <name> [--outgoing]

add the specific account having <name> to

the specific target with <id>.

<user> could be <IncomingUser> or <OutgoingUser>.

If you use --outgoing option, the account will

be added as an outgoing account.

--lld <driver> --mode account --op unbind(解除绑定) --tid <id> --user <name>

delete the specific account having <name> from specific

target.

--control-port <port> use control port <port>

--help display this help and exit

Report bugs to <stgt@vger.kernel.org>.

[root@steppingstone ~]# man tgtadm(查看tgtadm的man手册)

tgtadm - Linux SCSI Target Administration Utility

tgtadm [OPTIONS]... [-C --control-port <port>] [-L --lld <driver>] [-o --op <operation>] [-m --mode <mode>]

[-t --tid <id>] [-T --targetname <targetname>] [-Y --device-type <type>] [-l --lun <lun>]

[-b --backing-store <path>] [-E --bstype <type>] [-I --initiator-address <address>]

[-n --name <parameter>] [-v --value <value>] [-P --params <param=value[,param=value...]>]

[-h --help]

[root@steppingstone ~]# fdisk -l(查看磁盘分区情况)

Disk /dev/sda: 53.6 GB, 53687091200 bytes

255 heads, 63 sectors/track, 6527 cylinders

Units = cylinders of 16065 * 512 = 8225280 bytes

Device Boot Start End Blocks Id System

/dev/sda1 * 1 13 104391 83 Linux

/dev/sda2 14 2624 20972857+ 83 Linux

/dev/sda3 2625 2755 1052257+ 82 Linux swap / Solaris

/dev/sda4 2756 6527 30298590 5 Extended

/dev/sda5 2756 3972 9775521 83 Linux

/dev/sda6 3973 5189 9775521 83 Linux

[root@steppingstone ~]# tgtadm --lld iscsi --mode target --op new --targetname iqn.2013-05.com.magedu:teststore.disk1 --tid 1(新建target,

--lld指定驱动为iscsi,--mode指定模式为target,--op操作为new新建,--targetname名字,--tid指定target的id号,千万不能指定为0,0为保留为当前主机,)

[root@steppingstone ~]# tgtadm --lld iscsi --mode target --op show(查看target,--lld指定驱动,--mode指定模式,--op操作)

Target 1: iqn.2013-05.com.magedu:teststore.disk1

System information:

Driver: iscsi

State: ready

I_T nexus information:

LUN information:

LUN: 0(逻辑单元号为0,一个target最多有32个LUN)

Type: controller

SCSI ID: IET 00010000

SCSI SN: beaf10

Size: 0 MB, Block size: 1

Online: Yes

Removable media: No

Readonly: No

Backing store type: null

Backing store path: None

Backing store flags:

Account information:(没有绑定任何账户)

ACL information:(没有绑定任何initiator的IP地址)

提示:建立target没有关联到某个设备,target只是模拟的是个控制芯片,这个target要能被别人使用要关联到某个存储设备上去;

[root@steppingstone ~]# tgtadm --lld iscsi --mode logicalunit --op new --tid 1 --lun 1 --backing-store /dev/sda5(创建逻辑单元,--lld指定驱动,

--mode指定模式,--op操作,--tid指定target的id号,--lun指定逻辑单元号,自己的逻辑单元号,0已经被占用了,--backing-store指定存储设备,)

[root@steppingstone ~]# tgtadm --lld iscsi --mode target --op show(查看target,--lld指定驱动,--mode指定模式,--op操作)

Target 1: iqn.2013-05.com.magedu:teststore.disk1

System information:

Driver: iscsi

State: ready

I_T nexus information:

LUN information:

LUN: 0

Type: controller(控制器)

SCSI ID: IET 00010000

SCSI SN: beaf10

Size: 0 MB, Block size: 1

Online: Yes

Removable media: No

Readonly: No

Backing store type: null

Backing store path: None

Backing store flags:

LUN: 1

Type: disk(磁盘)

SCSI ID: IET 00010001

SCSI SN: beaf11

Size: 10010 MB, Block size: 512(磁盘大小10G,块大小512)

Online: Yes(在线)

Removable media: No(是否可以直接拔掉的设备)

Readonly: No(是否只读)

Backing store type: rdwr(存储设备类型读写都可以)

Backing store path: /dev/sda5(真正存储设备)

Backing store flags:

Account information:

ACL information:

[root@steppingstone ~]# tgtadm --lld iscsi --mode target --op bind --tid 1 --initiator-address 172.16.0.0/16(绑定target让172.16.0.0/16网段使用,

--lld指定驱动,--mode指定模式,--op操作,--tid指定target的id号)

[root@steppingstone ~]# tgtadm --lld iscsi --mode target --op show(查看target,--lld指定驱动,--mode指定模式,--op操作)

Target 1: iqn.2013-05.com.magedu:teststore.disk1

System information:

Driver: iscsi

State: ready

I_T nexus information:

LUN information:

LUN: 0

Type: controller

SCSI ID: IET 00010000

SCSI SN: beaf10

Size: 0 MB, Block size: 1

Online: Yes

Removable media: No

Readonly: No

Backing store type: null

Backing store path: None

Backing store flags:

LUN: 1

Type: disk

SCSI ID: IET 00010001

SCSI SN: beaf11

Size: 10010 MB, Block size: 512

Online: Yes

Removable media: No

Readonly: No

Backing store type: rdwr

Backing store path: /dev/sda5

Backing store flags:

Account information:

ACL information:

172.16.0.0/16

iscsi client_1:

[root@node1 ~]# yum -y install iscsi-initiator-utils(安装iscsi-initator-utils软件,-y所有询问回答yes) [root@node1 ~]# rpm -ql iscsi-initiator-utils(查看iscsi-initiator-utils安装生成那些文件) /etc/iscsi(配置文件) /etc/iscsi/iscsid.conf /etc/logrotate.d/iscsiuiolog /etc/rc.d/init.d/iscsi(脚本,只要启动iscsi,iscsid会自动启动) /etc/rc.d/init.d/iscsid(脚本) /sbin/iscsi-iname /sbin/iscsiadm(客户端管理工具) /sbin/iscsid /sbin/iscsistart(启动iscsi功能) /sbin/iscsiuio /usr/include/fw_context.h(头文件) /usr/include/iscsi_list.h /usr/include/libiscsi.h /usr/lib/libfwparam.a(库文件) /usr/lib/libiscsi.so /usr/lib/libiscsi.so.0 /usr/lib/python2.4/site-packages/libiscsimodule.so /usr/share/doc/iscsi-initiator-utils-6.2.0.872 /usr/share/doc/iscsi-initiator-utils-6.2.0.872/README /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/annotated.html /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/doxygen.css /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/doxygen.png /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/files.html /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/functions.html /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/functions_vars.html /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/globals.html /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/globals_defs.html /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/globals_enum.html /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/globals_eval.html /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/globals_func.html /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/globals_vars.html /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/index.html /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/libiscsi_8c.html /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/libiscsi_8h-source.html /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/libiscsi_8h.html /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/namespaces.html /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/namespacesetup.html /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/pylibiscsi_8c.html /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/setup_8py.html /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/structPyIscsiChapAuthInfo.html /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/structPyIscsiNode.html /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/structlibiscsi__auth__info.html /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/structlibiscsi__chap__auth__info.html /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/structlibiscsi__context.html /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/structlibiscsi__network__config.html /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/structlibiscsi__node.html /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/tab_b.gif /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/tab_l.gif /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/tab_r.gif /usr/share/doc/iscsi-initiator-utils-6.2.0.872/html/tabs.css /usr/share/man/man8/iscsi-iname.8.gz /usr/share/man/man8/iscsiadm.8.gz /usr/share/man/man8/iscsid.8.gz /usr/share/man/man8/iscsistart.8.gz /usr/share/man/man8/iscsiuio.8.gz /var/lib/iscsi /var/lib/iscsi/ifaces(各网卡接口,要跟target建立联系设置使用那块网卡) /var/lib/iscsi/isns(统一命名方式) /var/lib/iscsi/nodes(节点) /var/lib/iscsi/send_targets(向target发送指令的) /var/lib/iscsi/slp /var/lib/iscsi/static /var/lock/iscsi [root@node1 ~]# iscsi-iname(查看initiator名称) iqn.1994-05.com.redhat:9ca447ee550 [root@node1 ~]# iscsi-iname -p iqn.2013-05.com.magedu(修改initiator名称) iqn.2013-05.com.magedu:13a41a8692c [root@node1 ~]# vim /etc/iscsi/initiatorname.iscsi(编辑initiator名称配置文件) InitiatorName=iqn.1994-05.com.redhat:82c8d9bdfb56 [root@node1 ~]# echo "InitiatorName=`iscsi-iname -p iqn.2013-05.com.magedu`" > /etc/iscsi/initiatorname.iscsi(命令替换,将输出结果输出到initiato rname.iscsi文件) [root@node1 ~]# cat /etc/iscsi/initiatorname.iscsi(查看initiatorname.iscsi文件内容) InitiatorName=iqn.2013-05.com.magedu:983c58ae72f2

iscsi client_2:

[root@node2 ~]# cd /etc/iscsi/(切换到/etc/iscsi目录) [root@node2 iscsi]# ls(查看当前目录文件及子目录) initiatorname.iscsi iscsid.conf [root@node2 iscsi]# cat initiatorname.iscsi(查看initiatorname.iscsi文件内容) InitiatorName=iqn.1994-05.com.redhat:5ad427f6d47

iscsi client_1:

[root@node1 ~]# vim /etc/iscsi/initiatorname.iscsi(编辑initiatorname.iscsi文件内容)

InitiatorName=iqn.2013-05.com.magedu:983c58ae72f2

InitiatorAlias=node1.magedu.com(别名)

[root@node1 ~]# iscsiadm -h(查看iscsiadm的命令帮助)

iscsiadm -m(模式化命令) discoverydb [ -hV ] [ -d debug_level ] [-P printlevel] [ -t type -p ip:port -I ifaceN ... [ -Dl ] ] | [ [ -p ip:port

-t type] [ -o operation ] [ -n name ] [ -v value ] [ -lD ] ]

iscsiadm -m discovery [ -hV ] [ -d debug_level ] [-P printlevel] [ -t type -p ip:port -I ifaceN ... [ -l ] ] | [ [ -p ip:port ] [ -l | -D ] ]

iiscsiadm -m node [ -hV ] [ -d debug_level ] [ -P printlevel ] [ -L all,manual,automatic ] [ -U all,manual,automatic ] [ -S ] [ [ -T targetname

-p ip:port -I ifaceN ] [ -l | -u | -R | -s] ] [ [ -o operation ] [ -n name ] [ -v value ] ]

iscsiadm -m session [ -hV ] [ -d debug_level ] [ -P printlevel] [ -r sessionid | sysfsdir [ -R | -u | -s ] [ -o operation ] [ -n name ] [ -v

value ] ]

iscsiadm -m iface [ -hV ] [ -d debug_level ] [ -P printlevel ] [ -I ifacename | -H hostno|MAC ] [ [ -o operation ] [ -n name ] [ -v value ] ]

iscsiadm -m fw [ -l ]

iscsiadm -m host [ -P printlevel ] [ -H hostno|MAC ]

iscsiadm -k priority

[root@node1 ~]# man iscsiadm(查看iscsiadm的man帮助)

iscsiadm - open-iscsi administration utility

iscsiadm -m discovery [ -hV ] [ -d debug_level ] [ -P printlevel ] [ -I iface -t type -p ip:port [ -l ] ] | [ [ -p

ip:port ] [ -l | -D ] ]

-P, --print=printlevel

If in node mode print nodes in tree format. If in session mode print sessions in tree format. If in discovery

mode print the nodes in tree format.

DISCOVERY TYPES

iSCSI defines 3 discovery types: SendTargets, SLP, and iSNS.

[root@node1 ~]# service iscsi start(启动iscsi服务)

iscsid (pid 2548) is running...

Setting up iSCSI targets: iscsiadm: No records found

[ OK ]

[root@node1 ~]# iscsiadm -m discovery -t sendtargets -p 172.16.100.100(发现172.16.100.100服务器是否有target输出,-m指定模式,-t指定类型,-p指定服务器

地址)

172.16.100.100:3260,1 iqn.2013-05.com.magedu:teststore.disk1

[root@node1 ~]# ls /var/lib/iscsi/(查看/var/lib/iscsi目录文件及子目录)

ifaces isns nodes send_targets slp static

[root@node1 ~]# ls /var/lib/iscsi/send_targets/(查看/var/lib/iscsi/send_targets目录文件及子目录)

172.16.100.100,3260

提示:在/var/lib/iscsi/send_targets/目录已经有数据了;

[root@node1 ~]# ls /var/lib/iscsi/send_targets/172.16.100.100,3260/(查看/var/lib/iscsi/send_targets/172.16.100.100,3260目录文件及子目录)

iqn.2013-05.com.magedu:teststore.disk1,172.16.100.100,3260,1,default st_config

提示:一旦发现这些数据就会记录下来的,将来不想用了想重新发现需要将这些数据清空;

[root@node1 ~]# iscsiadm -h

iscsiadm -m discoverydb [ -hV ] [ -d debug_level ] [-P printlevel] [ -t type -p ip:port -I ifaceN ... [ -Dl ] ] | [ [ -p ip:port -t type]

[ -o operation ] [ -n name ] [ -v value ] [ -lD ] ]

iscsiadm -m discovery [ -hV ] [ -d debug_level ] [-P printlevel] [ -t type -p ip:port -I ifaceN ... [ -l ] ] | [ [ -p ip:port ] [ -l | -D ] ]

iiscsiadm -m node [ -hV ] [ -d debug_level ] [ -P printlevel ] [ -L all,manual,automatic ] [ -U all,manual,automatic ] [ -S ] [ [ -T

targetname -p ip:port -I ifaceN ] [ -l | -u | -R | -s] ] [ [ -o operation ] [ -n name ] [ -v value ] ]

iscsiadm -m session [ -hV ] [ -d debug_level ] [ -P printlevel] [ -r sessionid | sysfsdir [ -R | -u | -s ] [ -o operation ] [ -n name ]

[ -v value ] ]

iscsiadm -m iface [ -hV ] [ -d debug_level ] [ -P printlevel ] [ -I ifacename | -H hostno|MAC ] [ [ -o operation ] [ -n name ] [ -v value

] ]

iscsiadm -m fw [ -l ]

iscsiadm -m host [ -P printlevel ] [ -H hostno|MAC ]

iscsiadm -k priority

[root@node1 ~]# man iscsiadm(查看iscsiadm的man帮助)

-L, --loginall==[all|manual|automatic](登录到所有target,把远程服务器target的LUN关联到本机,以后它就会识别成为本机的存储设备)

For node mode, login all sessions with the node or conn startup values passed in or all running sesssion, except ones marked

onboot, if all is passed in.

This option is only valid for node mode (it is valid but not functional for session mode).

-U, --logoutall==[all,manual,automatic](登出所有,)

logout all sessions with the node or conn startup values passed in or all running sesssion, except ones marked onboot, if

all is passed in.

This option is only valid for node mode (it is valid but not functional for session mode).

-S, --show(显示)

When displaying records, do not hide masked values, such as the CHAP secret (password).

This option is only valid for node and session mode.

-R, --rescan

In session mode, if sid is also passed in rescan the session. If no sid has been passed in rescan all running sessions.

In node mode, rescan a session running through the target, portal, iface tuple passed in.

-s, --stats(显示session的统计数据)

Display session statistics.

-o, --op=op

Specifies a database operator op. op must be one of new(自己为某个库某个条目创建条目), delete(删除此前发现的数据库), update(更新),

show(显示) or nonpersistent.

For iface mode, apply and applyall are also applicable.

This option is valid for all modes except fw. Delete should not be used on a running session. If it is iscsiadm will stop

the session and then delete the record.

new creates a new database record for a given object. In node mode, the recid is the target name and portal (IP:port). In

iface mode, the recid is the iface name. In discovery mode, the recid is the portal and discovery type.

In session mode, the new operation logs in a new session using the same node database and iface information as the specified

session.

In discovery mode, if the recid and new operation is passed in, but the --discover argument is not, then iscsiadm will only

create a discovery record (it will not perform discovery). If the --discover argument is passed in with the portal and dis-

covery type, then iscsiadm will create the discovery record if needed, and it will create records for portals returned by

the target that do not yet have a node DB record.

delete deletes a specified recid. In discovery node, if iscsiadm is performing discovery it will delete records for portals

that are no longer returned.

update will update the recid with name to the specified value. In discovery node, if iscsiadm is performing discovery the

recid, name and value arguments are not needed. The update operation will operate on the portals returned by the target,

and will update the node records with info from the config file and command line.

show is the default behaviour for node, discovery and iface mode. It is also used when there are no commands passed into

session mode and a running sid is passed in. name and value are currently ignored when used with show.

nonpersistent instructs iscsiadm to not manipulate the node DB.

apply will cause the network settings to take effect on the specified iface.

applyall will cause the network settings to take effect on all the ifaces whose MAC address or host number matches that of

the specific host.

-n, --name=name

Specify a field name in a record. For use with the update operator.

-v, --value=value

Specify a value for use with the update operator.

This option is only valid for node mode.

[root@node1 ~]# iscsiadm -m node -T iqn.2013-05.com.magedu:teststore.disk1 -p 172.16.100.100 -l(登录172.16.100.100服务器的target,-T指定登录那个

target名字,-p指定服务器地址,-l登录)

Logging in to [iface: default, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] (multiple)

Login to [iface: default, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] successful.

[root@node1 ~]# fdisk -l(查看磁盘分区信息)

Disk /dev/sda: 53.6 GB, 53687091200 bytes

255 heads, 63 sectors/track, 6527 cylinders

Units = cylinders of 16065 * 512 = 8225280 bytes

Device Boot Start End Blocks Id System

/dev/sda1 * 1 13 104391 83 Linux

/dev/sda2 14 2624 20972857+ 83 Linux

/dev/sda3 2625 2755 1052257+ 82 Linux swap / Solaris

Disk /dev/sdb: 10.0 GB, 10010133504 bytes

64 heads, 32 sectors/track, 9546 cylinders

Units = cylinders of 2048 * 512 = 1048576 bytes

Disk /dev/sdb doesn't contain a valid partition table

[root@node1 ~]# fdisk /dev/sdb(管理sdb磁盘设备,进入交互模式)

Device contains neither a valid DOS partition table, nor Sun, SGI or OSF disklabel

Building a new DOS disklabel. Changes will remain in memory only,

until you decide to write them. After that, of course, the previous

content won't be recoverable.

The number of cylinders for this disk is set to 9546.

There is nothing wrong with that, but this is larger than 1024,

and could in certain setups cause problems with:

1) software that runs at boot time (e.g., old versions of LILO)

2) booting and partitioning software from other OSs

(e.g., DOS FDISK, OS/2 FDISK)

Warning: invalid flag 0x0000 of partition table 4 will be corrected by w(rite)

Command (m for help): n(创建分区)

Command action

e extended

p primary partition (1-4)

p(主分区)

Partition number (1-4): 1(分区号)

First cylinder (1-9546, default 1):

Using default value 1

Last cylinder or +size or +sizeM or +sizeK (1-9546, default 9546): +2G(创建2G分区)

Command (m for help): w(保存退出)

The partition table has been altered!

Calling ioctl() to re-read partition table.

WARNING: Re-reading the partition table failed with error 16: Device or resource busy.

The kernel still uses the old table.

The new table will be used at the next reboot.

Syncing disks.

[root@node1 ~]# partprobe /dev/sdb(让内核重新加载/dev/sdb分区表)

[root@node1 ~]# fdisk -l(查看磁盘分区情况)

Disk /dev/sda: 53.6 GB, 53687091200 bytes

255 heads, 63 sectors/track, 6527 cylinders

Units = cylinders of 16065 * 512 = 8225280 bytes

Device Boot Start End Blocks Id System

/dev/sda1 * 1 13 104391 83 Linux

/dev/sda2 14 2624 20972857+ 83 Linux

/dev/sda3 2625 2755 1052257+ 82 Linux swap / Solaris

Disk /dev/sdb: 10.0 GB, 10010133504 bytes

64 heads, 32 sectors/track, 9546 cylinders

Units = cylinders of 2048 * 512 = 1048576 bytes

Device Boot Start End Blocks Id System

/dev/sdb1 1 1908 1953776 83 Linux

[root@node1 ~]# mkfs.ext3 /dev/sdb1(将/dev/sdb1格式化为ext3类型文件系统)

mke2fs 1.39 (29-May-2006)

Filesystem label=

OS type: Linux

Block size=4096 (log=2)

Fragment size=4096 (log=2)

244320 inodes, 488444 blocks

24422 blocks (5.00%) reserved for the super user

First data block=0

Maximum filesystem blocks=503316480

15 block groups

32768 blocks per group, 32768 fragments per group

16288 inodes per group

Superblock backups stored on blocks:

32768, 98304, 163840, 229376, 294912

Writing inode tables: done

Creating journal (8192 blocks): done

Writing superblocks and filesystem accounting information: done

This filesystem will be automatically checked every 35 mounts or

180 days, whichever comes first. Use tune2fs -c or -i to override.

[root@node1 ~]# mount /dev/sdb1 /mnt/(挂载/dev/sdb1到/mnt目录)

[root@node1 ~]# cp /etc/issue /mnt/(复制issue文件/mnt目录)

[root@node1 ~]# ls /mnt/(查看/mnt/目录文件及子目录)

issue lost+found

[root@node1 ~]# umount /mnt/(卸载/mnt挂载的文件系统)

iscsi client_2:

[root@node2 iscsi]# vim initiatorname.iscsi(编辑initiator名称配置文件)

InitiatorName=iqn.2013-05.com.magedu:node2

[root@node2 iscsi]# service iscsi start(启动iscsi服务)

iscsid (pid 23071) 正在运行...

设置 iSCSI 目标:iscsiadm: No records found

[确定]

[root@node2 iscsi]# iscsiadm -m discovery -t st -p 172.16.100.100(发现172.16.100.100服务器是否有target输出,-m指定模式,-t指定类型,-p指定服务器地址)

172.16.100.100:3260,1 iqn.2013-05.com.magedu:teststore.disk1

[root@node2 iscsi]# iscsiadm -m node -T iqn.2013-05.com.magedu:teststore.disk1 -p 172.16.100.100 -l(登录172.16.100.100服务器的target,-T指定登录

那个target名字,-p指定服务器地址,-l登录)

Logging in to [iface: default, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] (multiple)

Login to [iface: default, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] successful.

[root@node2 iscsi]# fdisk -l(查看磁盘分区情况)

Disk /dev/sda: 53.6 GB, 53687091200 bytes

255 heads, 63 sectors/track, 6527 cylinders

Units = cylinders of 16065 * 512 = 8225280 bytes

Device Boot Start End Blocks Id System

/dev/sda1 * 1 13 104391 83 Linux

/dev/sda2 14 2624 20972857+ 83 Linux

/dev/sda3 2625 2755 1052257+ 82 Linux swap / Solaris

Disk /dev/sdb: 10.0 GB, 10010133504 bytes

64 heads, 32 sectors/track, 9546 cylinders

Units = cylinders of 2048 * 512 = 1048576 bytes

Device Boot Start End Blocks Id System

/dev/sdb1 1 1908 1953776 83 Linux

[root@node2 iscsi]# mount /dev/sdb1 /mnt/(挂载/dev/sdb1到/mnt目录)

[root@node2 iscsi]# ls /mnt/(查看/mnt目录文件及子目录)

issue lost+found

[root@node2 iscsi]# cd /mnt/(切换到/mnt目录)

[root@node2 mnt]# ls(查看当前目录文件及子目录)

issue lost+found

[root@node2 mnt]# cp /etc/inittab ./(复制/etc/inittab到当前目录)

[root@node2 mnt]# ls(查看当前目录文件及子目录)

inittab issue lost+found

iscsi client_1:

[root@node1 ~]# mount /dev/sdb1 /mnt/(挂载/dev/sdb1到/mnt目录) [root@node1 ~]# ls /mnt/(查看当前目录文件及子目录) inittab issue lost+found [root@node1 ~]# cp /etc/fstab /mnt/(复制/etc/fstab文件到/mnt目录) [root@node1 ~]# ls /mnt/(查看当前目录文件及子目录) fstab inittab issue lost+found

iscsi client_2:

[root@node2 mnt]# ls(查看当前目录文件及子目录) inittab issue lost+found 提示:没有fstab文件; [root@node2 mnt]# cd(切换到用户家目录) [root@node2 ~]# umount /mnt/(卸载/mnt目录挂载的文件系统) [root@node2 ~]# mount /dev/sdb1 /mnt/(挂载/dev/sdb1到/mnt目录) [root@node2 ~]# ls /mnt/(查看/mnt目录文件及子目录) inittab issue lost+found 提示:没有fstab文件,说明刚才的操作是在内存中完成,它有可能还没有同步到磁盘,压根就看不到,这就是单击文件系统坏处,所以两个节点编辑同一个文件会崩溃,如果使用集群文件系统, 任何时候在这里创建文件会立即通知给其他节点,所以其它节点会立即看到的;

iscsi-initiator-utils:

不支持discovery认证:

如果使用基于用户的认证,必须首先开放基于IP的认证;

cman, rgmanger, gfs2-utils

mkfs.gfs2

-j #: 指定日志区域个数,有几个就能够被几个节点所挂载,gfs2文件系统有可能被多个节点所挂载,每个节点都需要一个日志区域来实现文件的管理,包括各种修改操作都要使用日志区域的,如果指定两个,只能被两个节点挂载,默认情况下每个日志区大小为128M;

-J #: 指定日志区域大小,默认128M;

-p {lock_dlm|lock_nolock}: 指定锁协议名称,lock_dlm分布式文件锁,lock_nolock不使用锁,如果gfs2文件系统被一个节点使用不用使用分布式文件锁;

-t <name>: 指定锁表名称,格式clustername:locktablename,clustername集群名字要跟当前节点集群名称保持一致,而locktablename锁表名称可以自己取,但是不能跟其他节点的锁表名称相同,所以必须在集群内部唯一的,标识某个文件系统锁的持有情况;

iscsi client_1:

[root@node1 ~]# cd /var/lib/iscsi/(切换到/var/lib/iscsi目录) [root@node1 iscsi]# ls(查看当前目录文件及子目录) ifaces isns nodes send_targets slp static [root@node1 iscsi]# ls send_targets/(查看send_targets目录文件及子目录) 172.16.100.100,3260 [root@node1 iscsi]# ls send_targets/172.16.100.100,3260/(查看send_targets/172.16.100.100,3260目录文件及子目录) iqn.2013-05.com.magedu:teststore.disk1,172.16.100.100,3260,1,default st_config [root@node1 iscsi]# ls ifaces/(查看ifaces目录文件及子目录) 提示:如果没有指定接口没有绑定到某个接口上; [root@node1 iscsi]# cd(切换到用户家目录) [root@node1 ~]# cd /etc/iscsi/(切换到/etc/iscsi目录文件及子目录) [root@node1 iscsi]# ls(查看当前目录文件及子目录) initiatorname.iscsi iscsid.conf [root@node1 iscsi]# vim iscsid.conf(编辑iscsid.conf文件) #***************** # Startup settings(服务刚启动设置,在登录每个target要定义的参数) #***************** node.startup = automatic(是不是自动启动节点,并登录进去) node.leading_login = No # ************* # CHAP Settings(如果要基于chap方式做用户认证) # ************* #node.session.auth.authmethod = CHAP(基于那种方式认证) #node.session.auth.username = username(登录服务器时认证用户名) #node.session.auth.password = password(登陆服务器时认证密码) #node.session.auth.username_in = username_in(客户端认证服务器端用户名) #node.session.auth.password_in = password_in(客户端认证服务器端密码) # ******** # Timeouts(会话超时时间) # ******** node.session.timeo.replacement_timeout = 120(连接超时时间) node.conn[0].timeo.login_timeout = 15(登录超时时间) node.conn[0].timeo.logout_timeout = 15(登出超时时间) node.conn[0].timeo.noop_out_interval = 5(检查间隔时间) node.conn[0].timeo.noop_out_timeout = 5(重复检查间隔时间)

如何基于用户名的认证:

[root@node1 iscsi]# cd(切换到用户家目录) [root@node1 ~]# iscsiadm -m node -T iqn.2013-05.com.magedu:teststore.disk1 -p 172.16.100.100 -u(登出172.16.100.100的target,-m模式,-T指定那个targ et名字,-p指定服务器地址,-u登出) Logging out of session [sid: 1, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] Logout of [sid: 1, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] successful. [root@node1 ~]# fdisk -l(查看磁盘分区情况) Disk /dev/sda: 53.6 GB, 53687091200 bytes 255 heads, 63 sectors/track, 6527 cylinders Units = cylinders of 16065 * 512 = 8225280 bytes Device Boot Start End Blocks Id System /dev/sda1 * 1 13 104391 83 Linux /dev/sda2 14 2624 20972857+ 83 Linux /dev/sda3 2625 2755 1052257+ 82 Linux swap / Solaris [root@node1 ~]# chkconfig iscsi on(让iscsi服务在相应系统级别开机自动启动) 提示:只要数据库在,只要iscsi服务能够自动启动,它会自动登录到target上的,为了避免自动登录,可以将数据库删除; [root@node1 ~]# iscsiadm -m node -T iqn.2013-05.com.magedu:teststore.disk1 -p 172.16.100.100 -o delete(向172.16.100.100相关的target数据库信息删除, -m指定模式,-T指定target名字,-p指定服务器地址,-o指定操作) [root@node1 ~]# ls /var/lib/iscsi/send_targets/(查看/var/lib/iscsi/send_targets目录文件及子目录) 172.16.100.100,3260 [root@node1 ~]# ls /var/lib/iscsi/send_targets/172.16.100.100,3260/(查看/var/lib/iscsi/send_targets/172.16.100.100,3260目录文件及子目录) st_config 提示:iqn已经没有了; [root@node1 ~]# rm -rf /var/lib/iscsi/send_targets/172.16.100.100,3260/(删除172.16.100.100,3260目录,-r目录,-f强制删除)

iscsi client_2:

[root@node2 ~]# iscsiadm -m node -T iqn.2013-05.com.magedu:teststore.disk1 -p 172.16.100.100 -u(登出172.16.100.100的target,-m模式,-T指定登出那 个target名字,-p指定服务器地址,-u登出) Logging out of session [sid: 1, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] Logout of [sid: 1, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] successful. [root@node2 ~]# iscsiadm -m node -T iqn.2013-05.com.magedu:teststore.disk1 -p 172.16.100.100 -o delete(向172.16.100.100相关的target数据库删除, -m指定模式,-T指定target名字,-p指定服务器地址,-o指定操作) [root@node2 ~]# rm -rf /var/lib/iscsi/send_targets/172.16.100.100,3260/(删除172.16.100.100,3260目录,-r删除目录,-f强制删除)

steppingstone:

解除IP地址绑定只使用用户认证:

[root@steppingstone ~]# tgtadm --lld iscsi -m target --op unbind --tid 1 --initiator-address 172.16.0.0/16(解除绑定172.16.0.0/16网段使用,--lld

指定驱动,-m指定模式,--op操作,--tid指定target的id号)

[root@steppingstone ~]# tgtadm --lld iscsi --mode target --op show(查看target,--lld指定驱动,--mode指定模式,--op操作)

Target 1: iqn.2013-05.com.magedu:teststore.disk1

System information:

Driver: iscsi

State: ready

I_T nexus information:

LUN information:

LUN: 0

Type: controller

SCSI ID: IET 00010000

SCSI SN: beaf10

Size: 0 MB, Block size: 1

Online: Yes

Removable media: No

Readonly: No

Backing store type: null

Backing store path: None

Backing store flags:

LUN: 1

Type: disk

SCSI ID: IET 00010001

SCSI SN: beaf11

Size: 10010 MB, Block size: 512

Online: Yes

Removable media: No

Readonly: No

Backing store type: rdwr

Backing store path: /dev/sda5

Backing store flags:

Account information:

ACL information:(information已经没有了)

[root@steppingstone ~]# tgtadm -h(查看tgtadm的帮助)

Usage: tgtadm [OPTION]

Linux SCSI Target Framework Administration Utility, version

--lld <driver> --mode target --op new --tid <id> --targetname <name>

add a new target with <id> and <name>. <id> must not be zero.

--lld <driver> --mode target --op delete --tid <id>

delete the specific target with <id>. The target must

have no activity.

--lld <driver> --mode target --op show

show all the targets.

--lld <driver> --mode target --op show --tid <id>

show the specific target's parameters.

--lld <driver> --mode target --op update --tid <id> --name <param> --value <value>

change the target parameters of the specific

target with <id>.

--lld <driver> --mode target --op bind --tid <id> --initiator-address <src>

enable the target to accept the specific initiators.

--lld <driver> --mode target --op unbind --tid <id> --initiator-address <src>

disable the specific permitted initiators.

--lld <driver> --mode logicalunit --op new --tid <id> --lun <lun> \

--backing-store <path> --bstype <type> --bsoflags <options>

add a new logical unit with <lun> to the specific

target with <id>. The logical unit is offered

to the initiators. <path> must be block device files

(including LVM and RAID devices) or regular files.

bstype option is optional.

bsoflags supported options are sync and direct

(sync:direct for both).

--lld <driver> --mode logicalunit --op delete --tid <id> --lun <lun>

delete the specific logical unit with <lun> that

the target with <id> has.

--lld <driver> --mode account --op new --user <name> --password <pass>

add a new account with <name> and <pass>.(创建帐号)

--lld <driver> --mode account --op delete --user <name>

delete the specific account having <name>.(删除帐号)

--lld <driver> --mode account --op bind --tid <id> --user <name> [--outgoing](出去的,绑定用来让客户端认证服务器端的帐号密码)

add the specific account having <name> to

the specific target with <id>.

<user> could be <IncomingUser> or <OutgoingUser>.

If you use --outgoing option, the account will

be added as an outgoing account.(往某个tid上将某个用户名绑定)

--lld <driver> --mode account --op unbind --tid <id> --user <name>

delete the specific account having <name> from specific

target.

--control-port <port> use control port <port>

--help display this help and exit

Report bugs to <stgt@vger.kernel.org>.

[root@steppingstone ~]# tgtadm --lld iscsi --mode account --op new --user iscsiuser --password iscsiuser(创建帐号,--lld指定驱动,--mode指定模式

,--op操作,--user指定用户,--password指定密码)

[root@steppingstone ~]# tgtadm --lld iscsi --mode account --op bind --tid 1 --user iscsiuser(绑定帐号,--lld指定驱动,--mode指定模式,--op操作,

--tid指定target的id号,--user指定用户)

[root@steppingstone ~]# tgtadm --lld iscsi --mode target --op show(查看target,--lld指定驱动,--mode指定模式,--op操作)

Target 1: iqn.2013-05.com.magedu:teststore.disk1

System information:

Driver: iscsi

State: ready

I_T nexus information:

LUN information:

LUN: 0

Type: controller

SCSI ID: IET 00010000

SCSI SN: beaf10

Size: 0 MB, Block size: 1

Online: Yes

Removable media: No

Readonly: No

Backing store type: null

Backing store path: None

Backing store flags:

LUN: 1

Type: disk

SCSI ID: IET 00010001

SCSI SN: beaf11

Size: 10010 MB, Block size: 512

Online: Yes

Removable media: No

Readonly: No

Backing store type: rdwr

Backing store path: /dev/sda5

Backing store flags:

Account information:(绑定user)

iscsiuser

ACL information:

iscsi client_1:

[root@node1 ~]# iscsiadm -m discovery -t st -p 172.16.100.100(发现172.16.100.100服务器是否有target输出,-m指定模式,-t指定类型,-p指定服务器地址)

iscsiadm: No portals found(没有发现)

[root@node1 ~]# iscsiadm -m discovery -d 2-t st -p 172.16.100.100(发现172.16.100.100服务器是否有target输出,-m指定模式,-d 调试,-t指定类型,-p指定服

务器地址)

iscsiadm: Max file limits 1024 1024

iscsiadm: starting sendtargets discovery, address 172.16.100.100:3260,

iscsiadm: connecting to 172.16.100.100:3260

iscsiadm: connected local port 37324 to 172.16.100.100:3260

iscsiadm: connected to discovery address 172.16.100.100

iscsiadm: login response status 0000(不让登录,没提供帐号密码)

iscsiadm: discovery process to 172.16.100.100:3260 exiting

iscsiadm: disconnecting conn 0x9b3dc30, fd 3

iscsiadm: No portals found

[root@node1 ~]# cd /etc/iscsi/(切换到/etc/iscsi目录)

[root@node1 iscsi]# ls(查看当前目录文件及子目录)

initiatorname.iscsi iscsid.conf

[root@node1 iscsi]# vim iscsid.conf(编辑iscsid.conf配置文件)

discovery.sendtargets.auth.authmethod = CHAP

discovery.sendtargets.auth.username = iscsiuser

discovery.sendtargets.auth.password = iscsiuser

[root@node1 iscsi]# service iscsi restart(重启iscsi服务)

iscsiadm: No matching sessions found

Stopping iSCSI daemon:

iscsid is stopped [ OK ]

Starting iSCSI daemon: [ OK ]

[ OK ]

Setting up iSCSI targets: iscsiadm: No records found

[ OK ]

[root@node1 iscsi]# iscsiadm -m discovery -d 2 -t st -p 172.16.100.100(发现172.16.100.100服务器是否有target输出,-m指定模式,-d调试,-t指定类型,-p

指定服务器地址)

iscsiadm: Max file limits 1024 1024

iscsiadm: starting sendtargets discovery, address 172.16.100.100:3260,

iscsiadm: connecting to 172.16.100.100:3260

iscsiadm: connected local port 37325 to 172.16.100.100:3260

iscsiadm: connected to discovery address 172.16.100.100

iscsiadm: login response status 0000

iscsiadm: login response status 0000

iscsiadm: discovery process to 172.16.100.100:3260 exiting

iscsiadm: disconnecting conn 0x8568c30, fd 3

iscsiadm: No portals found

[root@node1 iscsi]# vim iscsid.conf(编辑iscsid.conf配置文件)

node.session.auth.authmethod = CHAP

node.session.auth.username = iscsiuser

node.session.auth.password = iscsiuser

[root@node1 iscsi]# service iscsi restart(重启iscsi服务)

iscsiadm: No matching sessions found

Stopping iSCSI daemon:

iscsid is stopped [ OK ]

Starting iSCSI daemon: [ OK ]

[ OK ]

Setting up iSCSI targets: iscsiadm: No records found

[ OK ]

[root@node1 iscsi]# iscsiadm -m discovery -d 2 -t st -p 172.16.100.100(发现172.16.100.100服务器是否有target输出,-m指定模式,-d调试,-t指定类型,-p

指定服务器地址)

iscsiadm: Max file limits 1024 1024

iscsiadm: starting sendtargets discovery, address 172.16.100.100:3260,

iscsiadm: connecting to 172.16.100.100:3260

iscsiadm: connected local port 37325 to 172.16.100.100:3260

iscsiadm: connected to discovery address 172.16.100.100

iscsiadm: login response status 0000

iscsiadm: login response status 0000

iscsiadm: discovery process to 172.16.100.100:3260 exiting

iscsiadm: disconnecting conn 0x8568c30, fd 3

iscsiadm: No portals found

[root@node1 iscsi]# vim iscsid.conf(编辑iscsid.conf配置文件)

[root@node1 iscsi]# iscsiadm -m discovery -d 5 -t st -p 172.16.100.100(发现172.16.100.100服务器是否有target输出,-m指定模式,-d调试,-t指定类型,-p

指定服务器地址)

iscsiadm: ip 172.16.100.100, port -1, tgpt -1

iscsiadm: Max file limits 1024 1024

iscsiadm: starting sendtargets discovery, address 172.16.100.100:3260,

iscsiadm: Matched transport be2iscsi

iscsiadm: sysfs_attr_get_value: open '/class/iscsi_transport/be2iscsi'/'handle'

iscsiadm: sysfs_attr_get_value: new uncached attribute '/sys/class/iscsi_transport/be2iscsi/handle'

iscsiadm: sysfs_attr_get_value: add to cache '/sys/class/iscsi_transport/be2iscsi/handle'

iscsiadm: sysfs_attr_get_value: cache '/sys/class/iscsi_transport/be2iscsi/handle' with attribute value '4176057344'

iscsiadm: sysfs_attr_get_value: open '/class/iscsi_transport/be2iscsi'/'caps'

iscsiadm: sysfs_attr_get_value: new uncached attribute '/sys/class/iscsi_transport/be2iscsi/caps'

iscsiadm: sysfs_attr_get_value: add to cache '/sys/class/iscsi_transport/be2iscsi/caps'

iscsiadm: sysfs_attr_get_value: cache '/sys/class/iscsi_transport/be2iscsi/caps' with attribute value '0x8b9'

iscsiadm: Matched transport bnx2i

iscsiadm: sysfs_attr_get_value: open '/class/iscsi_transport/bnx2i'/'handle'

iscsiadm: sysfs_attr_get_value: new uncached attribute '/sys/class/iscsi_transport/bnx2i/handle'

iscsiadm: sysfs_attr_get_value: add to cache '/sys/class/iscsi_transport/bnx2i/handle'

iscsiadm: sysfs_attr_get_value: cache '/sys/class/iscsi_transport/bnx2i/handle' with attribute value '4174119360'

iscsiadm: sysfs_attr_get_value: open '/class/iscsi_transport/bnx2i'/'caps'

iscsiadm: sysfs_attr_get_value: new uncached attribute '/sys/class/iscsi_transport/bnx2i/caps'

iscsiadm: sysfs_attr_get_value: add to cache '/sys/class/iscsi_transport/bnx2i/caps'

iscsiadm: sysfs_attr_get_value: cache '/sys/class/iscsi_transport/bnx2i/caps' with attribute value '0x8b9'

iscsiadm: Matched transport cxgb3i

iscsiadm: sysfs_attr_get_value: open '/class/iscsi_transport/cxgb3i'/'handle'

iscsiadm: sysfs_attr_get_value: new uncached attribute '/sys/class/iscsi_transport/cxgb3i/handle'

iscsiadm: sysfs_attr_get_value: add to cache '/sys/class/iscsi_transport/cxgb3i/handle'

iscsiadm: sysfs_attr_get_value: cache '/sys/class/iscsi_transport/cxgb3i/handle' with attribute value '4172473600'

iscsiadm: sysfs_attr_get_value: open '/class/iscsi_transport/cxgb3i'/'caps'

iscsiadm: sysfs_attr_get_value: new uncached attribute '/sys/class/iscsi_transport/cxgb3i/caps'

iscsiadm: sysfs_attr_get_value: add to cache '/sys/class/iscsi_transport/cxgb3i/caps'

iscsiadm: sysfs_attr_get_value: cache '/sys/class/iscsi_transport/cxgb3i/caps' with attribute value '0x3039'

iscsiadm: Matched transport iser

iscsiadm: sysfs_attr_get_value: open '/class/iscsi_transport/iser'/'handle'

iscsiadm: sysfs_attr_get_value: new uncached attribute '/sys/class/iscsi_transport/iser/handle'

iscsiadm: sysfs_attr_get_value: add to cache '/sys/class/iscsi_transport/iser/handle'

iscsiadm: sysfs_attr_get_value: cache '/sys/class/iscsi_transport/iser/handle' with attribute value '4174915296'

iscsiadm: sysfs_attr_get_value: open '/class/iscsi_transport/iser'/'caps'

iscsiadm: sysfs_attr_get_value: new uncached attribute '/sys/class/iscsi_transport/iser/caps'

iscsiadm: sysfs_attr_get_value: add to cache '/sys/class/iscsi_transport/iser/caps'

iscsiadm: sysfs_attr_get_value: cache '/sys/class/iscsi_transport/iser/caps' with attribute value '0x9'

iscsiadm: Matched transport tcp

iscsiadm: sysfs_attr_get_value: open '/class/iscsi_transport/tcp'/'handle'

iscsiadm: sysfs_attr_get_value: new uncached attribute '/sys/class/iscsi_transport/tcp/handle'

iscsiadm: sysfs_attr_get_value: add to cache '/sys/class/iscsi_transport/tcp/handle'

iscsiadm: sysfs_attr_get_value: cache '/sys/class/iscsi_transport/tcp/handle' with attribute value '4173924992'

iscsiadm: sysfs_attr_get_value: open '/class/iscsi_transport/tcp'/'caps'

iscsiadm: sysfs_attr_get_value: new uncached attribute '/sys/class/iscsi_transport/tcp/caps'

iscsiadm: sysfs_attr_get_value: add to cache '/sys/class/iscsi_transport/tcp/caps'

iscsiadm: sysfs_attr_get_value: cache '/sys/class/iscsi_transport/tcp/caps' with attribute value '0x39'

iscsiadm: sendtargets discovery to 172.16.100.100:3260 using isid 0x00023d000000

iscsiadm: resolved 172.16.100.100 to 172.16.100.100

iscsiadm: discovery timeouts: login 15, reopen_cnt 6, auth 45.

iscsiadm: connecting to 172.16.100.100:3260

iscsiadm: connected local port 37367 to 172.16.100.100:3260

iscsiadm: connected to discovery address 172.16.100.100

iscsiadm: discovery session to 172.16.100.100:3260 starting iSCSI login

iscsiadm: sending login PDU with current stage 0, next stage 1, transit 0x80, isid 0x00023d000000 exp_statsn 0

iscsiadm: > InitiatorName=iqn.2013-05.com.magedu:983c58ae72f2

iscsiadm: > InitiatorAlias=node1.magedu.com

iscsiadm: > SessionType=Discovery

iscsiadm: > AuthMethod=CHAP,None(认证方式为CHAP)

iscsiadm: wrote 48 bytes of PDU header

iscsiadm: wrote 128 bytes of PDU data

iscsiadm: read 48 bytes of PDU header

iscsiadm: read 48 PDU header bytes, opcode 0x23, dlength 39, data 0x8cfcde8, max 32768

iscsiadm: read 39 bytes of PDU data

iscsiadm: read 1 pad bytes

iscsiadm: finished reading login PDU, 48 hdr, 0 ah, 39 data, 1 pad

iscsiadm: login current stage 0, next stage 1, transit 0x80

iscsiadm: > TargetPortalGroupTag=1

iscsiadm: > AuthMethod=None

iscsiadm: login response status 0000

iscsiadm: sending login PDU with current stage 1, next stage 3, transit 0x80, isid 0x00023d000000 exp_statsn 1

iscsiadm: > HeaderDigest=None

iscsiadm: > DataDigest=None

iscsiadm: > DefaultTime2Wait=2

iscsiadm: > DefaultTime2Retain=0

iscsiadm: > IFMarker=No

iscsiadm: > OFMarker=No

iscsiadm: > ErrorRecoveryLevel=0

iscsiadm: > MaxRecvDataSegmentLength=32768

iscsiadm: wrote 48 bytes of PDU header

iscsiadm: wrote 152 bytes of PDU data

iscsiadm: read 48 bytes of PDU header

iscsiadm: read 48 PDU header bytes, opcode 0x23, dlength 119, data 0x8cfcde8, max 32768

iscsiadm: read 119 bytes of PDU data

iscsiadm: read 1 pad bytes

iscsiadm: finished reading login PDU, 48 hdr, 0 ah, 119 data, 1 pad

iscsiadm: login current stage 1, next stage 3, transit 0x80

iscsiadm: > HeaderDigest=None

iscsiadm: > DataDigest=None

iscsiadm: > DefaultTime2Wait=2

iscsiadm: > DefaultTime2Retain=0

iscsiadm: > IFMarker=No

iscsiadm: > OFMarker=No

iscsiadm: > ErrorRecoveryLevel=0

iscsiadm: login response status 0000

iscsiadm: discovery login success to 172.16.100.100

iscsiadm: sending text pdu with CmdSN 1, exp_statsn 1

iscsiadm: > SendTargets=All

iscsiadm: wrote 48 bytes of PDU header

iscsiadm: wrote 16 bytes of PDU data

iscsiadm: discovery process 172.16.100.100:3260 polling fd 3, timeout in 30.000000 seconds

iscsiadm: read 48 bytes of PDU header

iscsiadm: read 48 PDU header bytes, opcode 0x24, dlength 0, data 0x8cfcde8, max 32768

iscsiadm: discovery session to 172.16.100.100:3260 received text response, 0 data bytes, ttt 0xffffffff, final 0x80

iscsiadm: discovery process to 172.16.100.100:3260 exiting

iscsiadm: disconnecting conn 0x8cf4c30, fd 3

iscsiadm: No portals found

steppingstone:

[root@steppingstone ~]# tgtadm --lld iscsi --mode target --op bind --tid 1 --initiator-address 172.16.0.0/16(绑定172.16.0.0/16网络使用,--lld

指定驱动,--mode指定模式,--op操作,--tid指定target的id号,--initiator-address指定绑定地址)

[root@steppingstone ~]# tgtadm --lld iscsi --mode target --op show(显示targer,--lld指定驱动,--mode指定模式,--op指定操作)

Target 1: iqn.2013-05.com.magedu:teststore.disk1

System information:

Driver: iscsi

State: ready

I_T nexus information:

LUN information:

LUN: 0

Type: controller

SCSI ID: IET 00010000

SCSI SN: beaf10

Size: 0 MB, Block size: 1

Online: Yes

Removable media: No

Readonly: No

Backing store type: null

Backing store path: None

Backing store flags:

LUN: 1

Type: disk

SCSI ID: IET 00010001

SCSI SN: beaf11

Size: 10010 MB, Block size: 512

Online: Yes

Removable media: No

Readonly: No

Backing store type: rdwr

Backing store path: /dev/sda5

Backing store flags:

Account information:

iscsiuser

ACL information:

172.16.0.0/16

iscsi client_2:

[root@node2 ~]# cd /etc/iscsi/(切换到/etc/iscsi目录)

[root@node2 iscsi]# ls(查看当前目录文件及子目录)

initiatorname.iscsi iscsid.conf

[root@node2 iscsi]# vim iscsid.conf(编辑iscsid.conf配置文件)

[root@node2 iscsi]# iscsiadm -m discovery -t st -p 172.16.100.100(发现172.16.100.100服务器是否有target输出,-m指定模式,t指定类型,-p指定服务器地址)

172.16.100.100:3260,1 iqn.2013-05.com.magedu:teststore.disk1

[root@node2 iscsi]# iscsiadm -m node -T iqn.2013-05.com.magedu:teststore.disk1 -p 172.16.100.100 -l(登录172.16.100.100服务器的target,-T指定登录

那个target名字,-p指定服务器地址,-l登录)

Logging in to [iface: default, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] (multiple)

iscsiadm: Could not login to [iface: default, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260].

iscsiadm: initiator reported error (24 - iSCSI login failed due to authorization failure)

iscsiadm: Could not log into all portals(认证失败)

[root@node2 iscsi]# ls(查看当前目录文件及子目录)

initiatorname.iscsi iscsid.conf

[root@node2 iscsi]# vim iscsid.conf(编辑iscsid.conf配置文件)

node.session.auth.authmethod = CHAP

node.session.auth.username = iscsiuser

node.session.auth.password = iscsiuser

[root@node2 iscsi]# iscsiadm -m node -T iqn.2013-05.com.magedu:teststore.disk1 -p 172.16.100.100 -l(登录172.16.100.100服务器的target,-T指定登录

那个target名字,-p指定服务器地址,-l登录)

Logging in to [iface: default, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] (multiple)

iscsiadm: Could not login to [iface: default, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260].

iscsiadm: initiator reported error (24 - iSCSI login failed due to authorization failure)

iscsiadm: Could not log into all portals(认证失败)

[root@node2 iscsi]# service iscsi restart(重启iscsi服务)

iscsiadm: No matching sessions found

Stopping iSCSI daemon:

iscsid 已停 [确定]

Starting iSCSI daemon: [确定]

[确定]

设置 iSCSI 目标:Logging in to [iface: default, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] (multiple)

iscsiadm: Could not login to [iface: default, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260].

iscsiadm: initiator reported error (24 - iSCSI login failed due to authorization failure)

iscsiadm: Could not log into all portals

[确定]

[root@node2 iscsi]# vim iscsid.conf(编辑iscsid.conf配置文件)

[root@node2 iscsi]# iscsiadm -m node -T iqn.2013-05.com.magedu:teststore.disk1 -p 172.16.100.100 -l(登录172.16.100.100服务器的target,-T指定登录

那个target名字,-p指定服务器地址,-l登录)

Logging in to [iface: default, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] (multiple)

iscsiadm: Could not login to [iface: default, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260].

iscsiadm: initiator reported error (24 - iSCSI login failed due to authorization failure)

iscsiadm: Could not log into all portals(认证失败)

[root@node2 iscsi]# vim iscsid.conf(编辑iscsid.conf配置文件)

[root@node2 iscsi]# rm -rf /var/lib/iscsi/send_targets/172.16.100.100,3260/

[root@node2 iscsi]# iscsiadm -m discovery -t st -p 172.16.100.100(发现172.16.100.100服务器是否有target输出,-m指定模式,t指定类型,-p指定服务器地址)

172.16.100.100:3260,1 iqn.2013-05.com.magedu:teststore.disk1

[root@node2 iscsi]# iscsiadm -m node -T iqn.2013-05.com.magedu:teststore.disk1 -p 172.16.100.100 -l(登录172.16.100.100服务器target,-m指定模式,

-T指定target名称,-p指定服务器地址,-l登录)

Logging in to [iface: default, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] (multiple)

Login to [iface: default, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] successful.

iscsi client_1:

[root@node1 iscsi]# ls /var/lib/iscsi/send_targets/(查看/var/lib/iscsi/send_targets目录文件及子目录)

172.16.100.100,3260

[root@node1 iscsi]# ls /var/lib/iscsi/send_targets/172.16.100.100,3260/(查看/var/lib/iscsi/send_targets/172.16.100.100,3260目录文件及子目录)

st_config

[root@node1 iscsi]# rm -rf /var/lib/iscsi/send_targets/172.16.100.100,3260/(删除/var/lib/iscsi/send_targets/172.16.100.100,3260目录文件及子目录,

-r递归删除,-f强制删除)

[root@node1 iscsi]# vim iscsid.conf(编辑iscsid.conf配置文件)

#discovery.sendtargets.auth.authmethod = CHAP

#discovery.sendtargets.auth.username = iscsiuser

#discovery.sendtargets.auth.password = iscsiuser

[root@node1 iscsi]# service iscsi restart(重启iscsi服务)

iscsiadm: No matching sessions found

Stopping iSCSI daemon:

iscsid is stopped [ OK ]

Starting iSCSI daemon: [ OK ]

[ OK ]

Setting up iSCSI targets: iscsiadm: No records found

[ OK ]

steppingstone:

[root@steppingstone ~]# tgtadm --lld iscsi --mode target --op show(显示targer,--lld指定驱动,--mode指定模式,--op指定操作)

Target 1: iqn.2013-05.com.magedu:teststore.disk1

System information:

Driver: iscsi

State: ready

I_T nexus information:

LUN information:

LUN: 0

Type: controller

SCSI ID: IET 00010000

SCSI SN: beaf10

Size: 0 MB, Block size: 1

Online: Yes

Removable media: No

Readonly: No

Backing store type: null

Backing store path: None

Backing store flags:

LUN: 1

Type: disk

SCSI ID: IET 00010001

SCSI SN: beaf11

Size: 10010 MB, Block size: 512

Online: Yes

Removable media: No

Readonly: No

Backing store type: rdwr

Backing store path: /dev/sda5

Backing store flags:

Account information:

iscsiuser

ACL information:

172.16.0.0/16

提示;包含了IP的认证,其次帐号也是相对应的;

iscsi client_1:

[root@node1 iscsi]#iscsiadm -m discovery -t st -p 172.16.100.100(发现172.16.100.100主机的target,-m指定模式,-t指定类型,-p指定服务器地址) 172.16.100.100:3260,1 iqn.2013-05.com.magedu:teststore.disk1 [root@node1 iscsi]# iscsiadm -m node -T iqn.2013-05.com.magedu:teststore.disk1 -p 172.16.100.100 -l(登录172.16.100.1000主机的target,-m指定模式, -T指定登录那个target名字,-p指定服务器地址,-l登录) Logging in to [iface: default, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] (multiple) Login to [iface: default, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] successful. [root@node1 iscsi]# cd(切换到用户家目录) [root@node1 ~]# fdisk -l(查看磁盘分区情况) Disk /dev/sda: 53.6 GB, 53687091200 bytes 255 heads, 63 sectors/track, 6527 cylinders Units = cylinders of 16065 * 512 = 8225280 bytes Device Boot Start End Blocks Id System /dev/sda1 * 1 13 104391 83 Linux /dev/sda2 14 2624 20972857+ 83 Linux /dev/sda3 2625 2755 1052257+ 82 Linux swap / Solaris Disk /dev/sdc: 10.0 GB, 10010133504 bytes 64 heads, 32 sectors/track, 9546 cylinders Units = cylinders of 2048 * 512 = 1048576 bytes Device Boot Start End Blocks Id System /dev/sdc1 1 1908 1953776 83 Linux 提示:显示为/dev/sdc1,虽然刚才登出了,但是刚才的信息库还有;

iscsi client_2:

[root@node2 iscsi]# fdisk -l(查看磁盘分区情况) Disk /dev/sda: 53.6 GB, 53687091200 bytes 255 heads, 63 sectors/track, 6527 cylinders Units = cylinders of 16065 * 512 = 8225280 bytes Device Boot Start End Blocks Id System /dev/sda1 * 1 13 104391 83 Linux /dev/sda2 14 2624 20972857+ 83 Linux /dev/sda3 2625 2755 1052257+ 82 Linux swap / Solaris Disk /dev/sdc: 10.0 GB, 10010133504 bytes 64 heads, 32 sectors/track, 9546 cylinders Units = cylinders of 2048 * 512 = 1048576 bytes Device Boot Start End Blocks Id System /dev/sdc1 1 1908 1953776 83 Linux 提示:再登录再登录可能就是/dev/sdd1了,如果要给一个固定名称可能要依赖其他机制;

iscsi client_1:

[root@node1 ~]# service iscsi restart(重启iscsi服务)

Logging out of session [sid: 2, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260]

Logout of [sid: 2, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] successful.

Stopping iSCSI daemon:

iscsid is stopped [ OK ]

Starting iSCSI daemon: [ OK ]

[ OK ]

Setting up iSCSI targets: Logging in to [iface: default, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] (multiple)

Login to [iface: default, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] successful.

提示:重启iscsi服务会自动登录;

steppingstone:

[root@steppingstone ~]# vim /etc/rc.local

#!/bin/sh

#

# This script will be executed *after* all the other init scripts.

# You can put your own initialization stuff in here if you don't

# want to do the full Sys V style init stuff.

touch /var/lock/subsys/local

提示:刚才建立的内容都是在内核中工作的,内核中的所有内容都在内存中,这跟iptables、ipvs所建立的规则都是一样的道理,所以要想让它永久生效得额外写到/etc/rc.local当中,它会

每次开机都会创建一次,早起都是这么用的,现在不这么使用;

[root@steppingstone ~]# rpm -ql scsi-target-utils(查看安装scsi-target-utils软件生成那些文件)

/etc/rc.d/init.d/tgtd

/etc/sysconfig/tgtd

/etc/tgt/targets.conf(读取这个配置文件,创建target,如果target没有了,只要把内容定义到这里,下次开机也会生效的)

/usr/sbin/tgt-admin

/usr/sbin/tgt-setup-lun

/usr/sbin/tgtadm

/usr/sbin/tgtd

/usr/sbin/tgtimg

/usr/share/doc/scsi-target-utils-1.0.14

/usr/share/doc/scsi-target-utils-1.0.14/README

/usr/share/doc/scsi-target-utils-1.0.14/README.iscsi

/usr/share/doc/scsi-target-utils-1.0.14/README.iser

/usr/share/doc/scsi-target-utils-1.0.14/README.lu_configuration

/usr/share/doc/scsi-target-utils-1.0.14/README.mmc

/usr/share/man/man8/tgt-admin.8.gz

/usr/share/man/man8/tgt-setup-lun.8.gz

/usr/share/man/man8/tgtadm.8.gz

[root@steppingstone ~]# cd /etc/tgt/(切换到/etc/tgt目录)

[root@steppingstone tgt]# ls(查看当前目录文件及子目录)

targets.conf

[root@steppingstone tgt]# cp targets.conf targets.conf.backup(复制targets.conf为targets.conf.backup)

[root@steppingstone tgt]# vim targets.conf(编辑targets.conf配置文件)

default-driver iscsi(默认设备)

#ignore-errors yes(是不是忽略错误)

#<target iqn.2008-09.com.example:server.target1>(使用一个target作为容器来封装一个target)

# backing-store /dev/LVM/somedevice

#</target>

<target iqn.2013-05.com.magedu:teststore.disk1>(iqn名字)

backing-store /dev/sda5

incominguser iscsiuser iscsiuser(进来认证用户和密码,如果有多个用户帐号写多份incominguser)

initiator-address 172.16.0.0/16(指定允许发现的网段)

</target>

#<target iqn.2008-09.com.example:server.target2>

# direct-store /dev/sdd

# incominguser someuser secretpass12(进来的用户和它的密码)

#</target>

# initiator-address 192.168.100.1(指定允许发现的网段)

# initiator-address 192.168.200.5

[root@steppingstone tgt]# service tgtd restart(重启tgtd服务)

Stopping SCSI target daemon: Stopping target framework daemon

Some initiators are still connected - could not stop tgtd

提示:仍然有客户端登录,不允许重启;

iscsi client_1:

[root@node1 ~]# iscsiadm -m node -T iqn.2013-05.com.magedu:teststore.disk1 -p 172.16.100.100 -u(登出172.16.100.100的target,-m指定模式,-T指定 target名称,-p指定服务器地址,-u登出) Logging out of session [sid: 3, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] Logout of [sid: 3, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] successful.

iscsi client_2:

[root@node2 iscsi]# iscsiadm -m node -T iqn.2013-05.com.magedu:teststore.disk1 -p 172.16.100.100 -u(登出172.16.100.100的target,-m指定模式,-T 指定target名称,-p指定服务器地址,-u登出) Logging out of session [sid: 9, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] Logout of [sid: 9, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] successful.

steppingstone:

[root@steppingstone tgt]# service tgtd restart(重启tgtd服务)

Stopping SCSI target daemon: Stopping target framework daemon

[确定]

Starting SCSI target daemon: Starting target framework daemon

[root@steppingstone tgt]# tgtadm --lld iscsi --mode target --op show(显示target,--lld指定驱动,--mode指定模式,--ope操作)

Target 1: iqn.2013-05.com.magedu:teststore.disk1

System information:

Driver: iscsi

State: ready

I_T nexus information:

LUN information:

LUN: 0

Type: controller

SCSI ID: IET 00010000

SCSI SN: beaf10

Size: 0 MB, Block size: 1

Online: Yes

Removable media: No

Readonly: No

Backing store type: null

Backing store path: None

Backing store flags:

LUN: 1

Type: disk

SCSI ID: IET 00010001

SCSI SN: beaf11

Size: 10010 MB, Block size: 512

Online: Yes

Removable media: No

Readonly: No

Backing store type: rdwr

Backing store path: /dev/sda5

Backing store flags:

Account information:

iscsiuser

ACL information:

172.16.0.0/16

[root@steppingstone tgt]# vim targets.conf(编辑targets.conf配置文件)

# Outgoing user

# outgoinguser userA secretpassA(指定出去的帐号密码,让客户端验证服务器端,服务器端所提供的密码)

<target iqn.2013-05.com.magedu:teststore.disk1>

backing-store /dev/sda5

backing-store /dev/sda6

incominguser iscsiuser iscsiuser

initiator-address 172.16.0.0/16

</target>

[root@steppingstone tgt]# service tgtd restart(重启tgtd服务)

Stopping SCSI target daemon: Stopping target framework daemon

[确定]

Starting SCSI target daemon: Starting target framework daemon

[root@steppingstone tgt]# tgtadm --lld iscsi --mode target --op show(显示target信息,--lld指定驱动,--mode指定模式,--op操作)

Target 1: iqn.2013-05.com.magedu:teststore.disk1

System information:

Driver: iscsi

State: ready

I_T nexus information:

LUN information:

LUN: 0

Type: controller

SCSI ID: IET 00010000

SCSI SN: beaf10

Size: 0 MB, Block size: 1

Online: Yes

Removable media: No

Readonly: No

Backing store type: null

Backing store path: None

Backing store flags:

LUN: 1

Type: disk

SCSI ID: IET 00010001

SCSI SN: beaf11

Size: 10010 MB, Block size: 512

Online: Yes

Removable media: No

Readonly: No

Backing store type: rdwr

Backing store path: /dev/sda5

Backing store flags:

LUN: 2

Type: disk

SCSI ID: IET 00010002

SCSI SN: beaf12

Size: 10010 MB, Block size: 512

Online: Yes

Removable media: No

Readonly: No

Backing store type: rdwr

Backing store path: /dev/sda6

Backing store flags:

Account information:

iscsiuser

ACL information:

172.16.0.0/16

提示:如果想让/dev/sd5为LUN2,想让/dev/sda6对应LUN1,调换次序,但是还可以使用另外一种办法;

[root@steppingstone tgt]# vim targets.conf(编辑targets.conf配置文件)

# <direct-store /dev/sdd>(比backing-store优先之处,能让tgtd直接去读取这个硬件设备,这通常是个硬盘,不应该像演示那样是个分区,既然是硬盘这个硬盘有生产商,应

该有自己内部的序列号,当使用direct-store的时候它会把那个号码都读取出来,而且会输出出去的,而backing-store只会输出设备)

# vendor_id VENDOR1

# removable 1

# device-type cd

# lun 1(指定LUN号)

# </direct-store>

<target iqn.2013-05.com.magedu:teststore.disk1>

direct-store /dev/sda5

backing-store /dev/sda6

incominguser iscsiuser iscsiuser

initiator-address 172.16.0.0/16

</target>

[root@steppingstone tgt]# service tgtd restart(重启tgtd服务)

Stopping SCSI target daemon: Stopping target framework daemon

[确定]

Starting SCSI target daemon: Starting target framework daemon

Command 'sg_inq' (needed by 'option direct-store') is not in your path - can't continue!(需要给它一个sg_inq号码)

[root@steppingstone tgt]# vim targets.conf(编辑targets.conf配置文件)

<target iqn.2013-05.com.magedu:teststore.disk1>

<backing-store /dev/sda5>

lun 6

</backing-store>

backing-store /dev/sda6

incominguser iscsiuser iscsiuser

initiator-address 172.16.0.0/16

</target>

[root@steppingstone tgt]# service tgtd restart(重启tgtd服务)

Stopping SCSI target daemon: Stopping target framework daemon

[确定]

Starting SCSI target daemon: Starting target framework daemon

Your config file is not supported. See targets.conf.example for details.

[root@steppingstone tgt]# vim targets.conf(编辑targets.conf配置文件)

<target iqn.2013-05.com.magedu:teststore.disk1>

<backing-store /dev/sda5>

vendor_id magedu

lun 6

</backing-store>

backing-store /dev/sda6

incominguser iscsiuser iscsiuser

initiator-address 172.16.0.0/16

</target>

[root@steppingstone tgt]# service tgtd restart(重启tgtd服务)

Stopping SCSI target daemon: Stopping target framework daemon

[确定]

Starting SCSI target daemon: Starting target framework daemon

Your config file is not supported. See targets.conf.example for details.

[root@steppingstone tgt]# vim targets.conf(编辑targets.conf配置文件)

<target iqn.2013-05.com.magedu:teststore.disk1>

<backing-store /dev/sda5>

vendor_id magedu

lun 6

</backing-store>

<backing-store /dev/sda6>

vendor_id magedu

lun 7

</backing-store>

incominguser iscsiuser iscsiuser

initiator-address 172.16.0.0/16

</target>

[root@steppingstone tgt]# service tgtd restart(重启tgtd服务)

Stopping SCSI target daemon: Stopping target framework daemon

[确定]

Starting SCSI target daemon: Starting target framework daemon

提示:要封装,看来每一个都需要封装;

[root@steppingstone tgt]# tgtadm --lld iscsi --mode target --op show(显示target,--lld指定驱动,--mode指定模式,--op操作)

Target 1: iqn.2013-05.com.magedu:teststore.disk1

System information:

Driver: iscsi

State: ready

I_T nexus information:

LUN information:

LUN: 0

Type: controller

SCSI ID: IET 00010000

SCSI SN: beaf10

Size: 0 MB, Block size: 1

Online: Yes

Removable media: No

Readonly: No

Backing store type: null

Backing store path: None

Backing store flags:

LUN: 6

Type: disk

SCSI ID: IET 00010006

SCSI SN: beaf16

Size: 10010 MB, Block size: 512

Online: Yes

Removable media: No

Readonly: No

Backing store type: rdwr

Backing store path: /dev/sda5

Backing store flags:

LUN: 7

Type: disk

SCSI ID: IET 00010007

SCSI SN: beaf17

Size: 10010 MB, Block size: 512

Online: Yes

Removable media: No

Readonly: No

Backing store type: rdwr

Backing store path: /dev/sda6

Backing store flags:

Account information:

iscsiuser

ACL information:

172.16.0.0/16

提示:LUN一个6一个7,可以自己定义了,而且还有vendor_id生产商信息,这里没有显示;

[root@steppingstone tgt]# vim targets.conf(编辑targets.conf配置文件)

iscsi client_1:

[root@node1 ~]# iscsiadm -m node -T iqn.2013-05.com.magedu:teststore.disk1 -p 172.16.100.100 -l(登录172.16.100.100的target,-m指定模式,-T指定名称 ,-p指定主机,-l登录) Logging in to [iface: default, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] (multiple) Login to [iface: default, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] successful. [root@node1 ~]# fdisk -l(查看磁盘分区情况) Disk /dev/sda: 53.6 GB, 53687091200 bytes 255 heads, 63 sectors/track, 6527 cylinders Units = cylinders of 16065 * 512 = 8225280 bytes Device Boot Start End Blocks Id System /dev/sda1 * 1 13 104391 83 Linux /dev/sda2 14 2624 20972857+ 83 Linux /dev/sda3 2625 2755 1052257+ 82 Linux swap / Solaris Disk /dev/sdc: 10.0 GB, 10010133504 bytes 64 heads, 32 sectors/track, 9546 cylinders Units = cylinders of 2048 * 512 = 1048576 bytes Device Boot Start End Blocks Id System /dev/sdc1 1 1908 1953776 83 Linux Disk /dev/sdd: 10.0 GB, 10010133504 bytes 64 heads, 32 sectors/track, 9546 cylinders Units = cylinders of 2048 * 512 = 1048576 bytes Disk /dev/sdd doesn't contain a valid partition table 提示:有两个LUN,它有两个设备硬盘,而提供的新硬盘没有做任何分区;

iscsi client_2:

[root@node2 iscsi]# iscsiadm -m node -T iqn.2013-05.com.magedu:teststore.disk1 -p 172.16.100.100 -l(登录172.16.100.100的target,-m指定模式,-T 指定名称,-p指定主机,-l登录) Logging in to [iface: default, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] (multiple) Login to [iface: default, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] successful. [root@node2 iscsi]# fdisk -l(查看磁盘分区情况) Disk /dev/sda: 53.6 GB, 53687091200 bytes 255 heads, 63 sectors/track, 6527 cylinders Units = cylinders of 16065 * 512 = 8225280 bytes Device Boot Start End Blocks Id System /dev/sda1 * 1 13 104391 83 Linux /dev/sda2 14 2624 20972857+ 83 Linux /dev/sda3 2625 2755 1052257+ 82 Linux swap / Solaris Disk /dev/sdc: 10.0 GB, 10010133504 bytes 64 heads, 32 sectors/track, 9546 cylinders Units = cylinders of 2048 * 512 = 1048576 bytes Device Boot Start End Blocks Id System /dev/sdc1 1 1908 1953776 83 Linux Disk /dev/sdd: 10.0 GB, 10010133504 bytes 64 heads, 32 sectors/track, 9546 cylinders Units = cylinders of 2048 * 512 = 1048576 bytes Disk /dev/sdd doesn't contain a valid partition table 提示:每一个LUN在你发现并登录的时候,target上的每一个LUN都能被客户端所使用的;

在三个节点发现iscsi设备之后将iscsi设备做成gfs文件系统,因为要使用RHCS集群至少三个节点:

iscsi client_3:

[root@node3 ~]# cd /etc/iscsi/(切换到/etc/iscsi目录)

[root@node3 iscsi]# ls(查看当前目录文件及子目录)

initiatorname.iscsi iscsid.conf

[root@node3 iscsi]# vim initiatorname.iscsi(编辑initiatorname.iscsi)

InitiatorName=iqn.2013-05.com.magedu:node3

[root@node3 iscsi]# vim iscsid.conf(编辑iscsid.conf配置文件)

node.session.auth.authmethod = CHAP

node.session.auth.username = iscsiuser

node.session.auth.password = iscsiuser

[root@node3 iscsi]# service iscsi start(启动iscsi服务)

iscsid (pid 2515) is running...

Setting up iSCSI targets: iscsiadm: No records found

[ OK ]

[root@node3 iscsi]# chkconfig iscsi on(让iscsi开机自动启动)

[root@node3 iscsi]# iscsiadm -m discovery -t st -p 172.16.100.100(发现172.16.100.100的target,-m指定模式,-t指定类型,-p指定主机地址)

172.16.100.100:3260,1 iqn.2013-05.com.magedu:teststore.disk1

[root@node3 iscsi]# iscsiadm -m node -T iqn.2013-05.com.magedu:teststore.disk1 -p 172.16.100.100 -l(登录172.16.100.100的target,-m指定模式,-T指

定target名称,-p指定主机,-l登录)

Logging in to [iface: default, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] (multiple)

Login to [iface: default, target: iqn.2013-05.com.magedu:teststore.disk1, portal: 172.16.100.100,3260] successful.

让三个节点都配置为高可用集群:

iscsi client_1:

[root@node1 ~]# fdisk -l(查看磁盘分区情况) Disk /dev/sda: 53.6 GB, 53687091200 bytes 255 heads, 63 sectors/track, 6527 cylinders Units = cylinders of 16065 * 512 = 8225280 bytes Device Boot Start End Blocks Id System /dev/sda1 * 1 13 104391 83 Linux /dev/sda2 14 2624 20972857+ 83 Linux /dev/sda3 2625 2755 1052257+ 82 Linux swap / Solaris Disk /dev/sdc: 10.0 GB, 10010133504 bytes 64 heads, 32 sectors/track, 9546 cylinders Units = cylinders of 2048 * 512 = 1048576 bytes Device Boot Start End Blocks Id System /dev/sdc1 1 1908 1953776 83 Linux Disk /dev/sdd: 10.0 GB, 10010133504 bytes 64 heads, 32 sectors/track, 9546 cylinders Units = cylinders of 2048 * 512 = 1048576 bytes Disk /dev/sdd doesn't contain a valid partition table

iscsi client_2:

[root@node2 iscsi]# fdisk -l(查看磁盘分区情况) Disk /dev/sda: 53.6 GB, 53687091200 bytes 255 heads, 63 sectors/track, 6527 cylinders Units = cylinders of 16065 * 512 = 8225280 bytes Device Boot Start End Blocks Id System /dev/sda1 * 1 13 104391 83 Linux /dev/sda2 14 2624 20972857+ 83 Linux /dev/sda3 2625 2755 1052257+ 82 Linux swap / Solaris Disk /dev/sdc: 10.0 GB, 10010133504 bytes 64 heads, 32 sectors/track, 9546 cylinders Units = cylinders of 2048 * 512 = 1048576 bytes Device Boot Start End Blocks Id System /dev/sdc1 1 1908 1953776 83 Linux Disk /dev/sdd: 10.0 GB, 10010133504 bytes 64 heads, 32 sectors/track, 9546 cylinders Units = cylinders of 2048 * 512 = 1048576 bytes Disk /dev/sdd doesn't contain a valid partition table

iscsi client_3:

[root@node3 iscsi]# fdisk -l(查看磁盘分区情况) Disk /dev/sda: 53.6 GB, 53687091200 bytes 255 heads, 63 sectors/track, 6527 cylinders Units = cylinders of 16065 * 512 = 8225280 bytes Device Boot Start End Blocks Id System /dev/sda1 * 1 13 104391 83 Linux /dev/sda2 14 2624 20972857+ 83 Linux /dev/sda3 2625 2755 1052257+ 82 Linux swap / Solaris Disk /dev/sdb: 10.0 GB, 10010133504 bytes 64 heads, 32 sectors/track, 9546 cylinders Units = cylinders of 2048 * 512 = 1048576 bytes Device Boot Start End Blocks Id System /dev/sdb1 1 1908 1953776 83 Linux Disk /dev/sdc: 10.0 GB, 10010133504 bytes 64 heads, 32 sectors/track, 9546 cylinders Units = cylinders of 2048 * 512 = 1048576 bytes Disk /dev/sdc doesn't contain a valid partition table