1. Backing up and restoring etcd data with Velero and MinIO

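1.1 Introduction to Velero:

Velero is an open-source, cloud-native disaster recovery and migration tool from VMware. It is written in Go and can safely back up, restore, and migrate Kubernetes cluster resources and data. See https://velero.io/.

Velero is Spanish for sailboat, which fits the naming style of the Kubernetes community. Heptio, the company that originally developed Velero, has since been acquired by VMware.

Velero supports standard Kubernetes clusters on both private and public clouds. Besides disaster recovery it can also migrate resources, moving containerized applications from one cluster to another.

Velero works by backing up Kubernetes data to object storage for high availability and persistence. Backups are kept for 720 hours by default and can be downloaded and restored when needed.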

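How Velero differs from an etcd snapshot backup:

An etcd snapshot is a global backup (comparable to a full MySQL backup). Even when only one resource object needs to be restored (comparable to restoring a single MySQL database), the whole cluster has to be rolled back to the snapshot state (comparable to a full MySQL restore), which affects Pods and services running in other namespaces (just as a full restore affects the data of other MySQL databases).

Velero can back up selectively, for example a single namespace or individual resource objects. A restore can likewise target a single namespace or resource object without affecting Pods and services in other namespaces.

Velero stores backups in object storage such as Ceph or OSS, whereas an etcd snapshot is a local file.

Velero supports scheduled jobs for periodic backups; etcd snapshots can also be taken periodically with a CronJob.

Velero can create and restore snapshots of AWS EBS volumes:

https://www.qloudx.com/velero-for-kubernetes-backup-restore-stateful-workloads-with-aws-ebs-snapshots/

https://github.com/vmware-tanzu/velero-plugin-for-aws #Elastic Block Store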
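The periodic backups mentioned in the comparison above could be set up with `velero schedule create`. The sketch below only prints the command instead of running it, so it can be tried without a cluster. The `-daily` name suffix, the cron expression, and the `build_schedule_cmd` helper are illustrative assumptions, not part of the original setup.

```shell
#!/bin/sh
# Print (not run) a `velero schedule create` command for one namespace.
# The schedule name suffix and the cron expression are illustrative assumptions.
build_schedule_cmd() {
  ns="$1"           # namespace to back up
  cron="$2"         # standard five-field cron expression
  ttl="${3:-720h}"  # retention; 720h matches the default mentioned above
  printf 'velero schedule create %s-daily --schedule="%s" --include-namespaces %s --ttl %s --namespace velero-system\n' \
    "$ns" "$cron" "$ns" "$ttl"
}

# Example: a backup of the myserver namespace every day at 01:00.
build_schedule_cmd myserver "0 1 * * *"
```

Printing the command first makes it easy to review the flags before piping the line to a shell on a host where the Velero CLI is installed.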

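Velero overall architecture

1.2 Backup workflow

~# velero backup create myserver-ns-backup-${DATE} --include-namespaces myserver --kubeconfig=./skyliu.kubeconfig --namespace velero-system

The Velero client calls the Kubernetes API server to create a Backup task.

The Backup controller picks up the task from the API server through the watch mechanism.

The Backup controller starts the backup and requests the data to be backed up from the API server.

The Backup controller writes the collected data to the configured object storage server.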

1.3 Deploying MinIO

root@k8s-master2:/usr/local/src# nerdctl pull minio/minio:RELEASE.2022-04-12T06-55-35Z

root@k8s-master2:/usr/local/src# mkdir -p /data/minio

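#Create the MinIO container. If nothing is specified, the default username and password are minioadmin/minioadmin; they can be customized through environment variables, as follows: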

root@k8s-master2:/usr/local/src# nerdctl run --name minio \

-p 9000:9000 \

-p 9999:9999 \

--restart=always \

-e "MINIO_ROOT_USER=admin" \

-e "MINIO_ROOT_PASSWORD=12345678" \

-v /data/minio/data:/data \

-d minio/minio:RELEASE.2022-04-12T06-55-35Z server /data \

--console-address '0.0.0.0:9999'

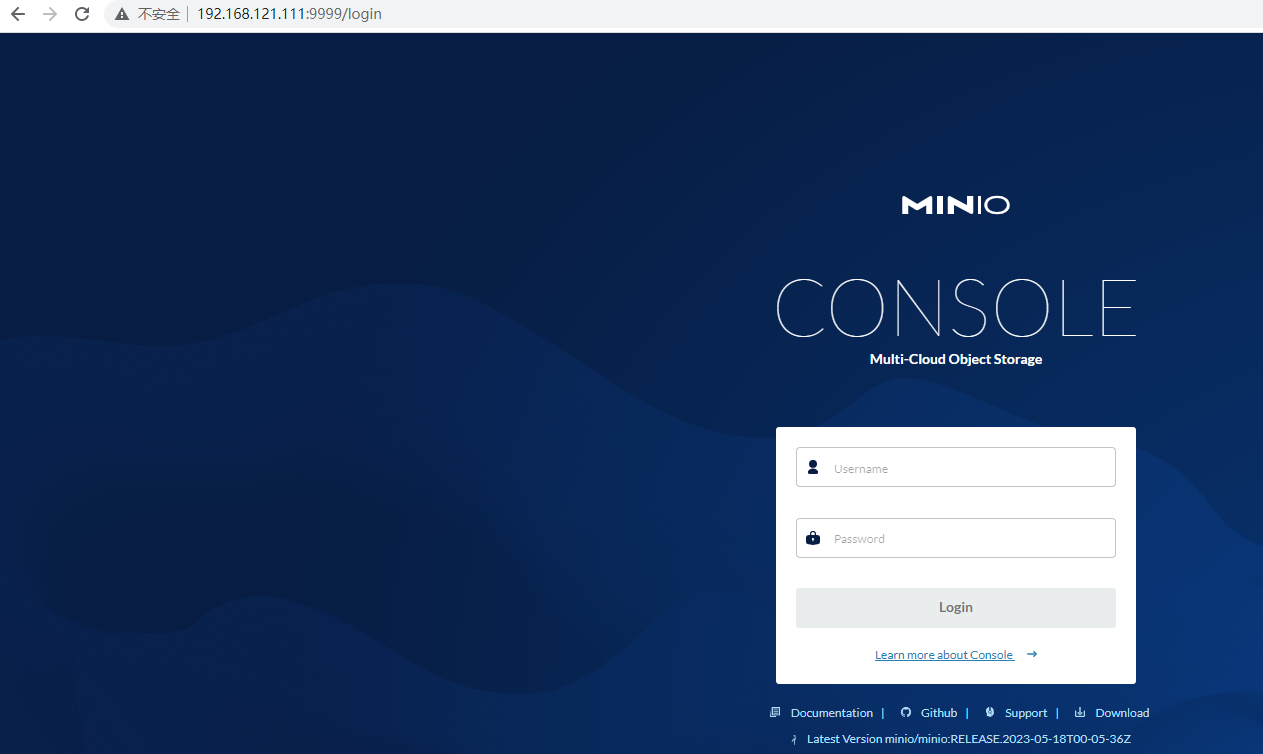

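MinIO web login page

Bucket name: velerodata (used later)

Verifying the bucket in MinIO

1.4 Deploying Velero

Deploy Velero on the master node:

https://github.com/vmware-tanzu/velero #version compatibility

Download and install the Velero CLI: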

root@k8s-master2:/usr/local/src# wget https://github.com/vmware-tanzu/velero/releases/download/v1.11.0/velero-v1.11.0-linux-amd64.tar.gz

root@k8s-master2:/usr/local/src# tar xvf velero-v1.11.0-linux-amd64.tar.gz

root@k8s-master2:/usr/local/src# cp velero-v1.11.0-linux-amd64/velero /usr/local/bin/

root@k8s-master2:/usr/local/src# velero --help

Set up the Velero authentication environment:

#Working directory:

root@k8s-master2:~# mkdir /data/velero -p

root@k8s-master2:~# cd /data/velero

root@k8s-master2:/data/velero#

#Credentials file for accessing MinIO:

root@k8s-master2:/data/velero# vim velero-auth.txt

[default]

aws_access_key_id = admin

aws_secret_access_key = 12345678
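As an alternative to editing the file in vim, the credentials file can be generated non-interactively. This is a minimal sketch: the `write_velero_auth` helper is hypothetical, and it writes into a temporary directory so it is safe to run anywhere; on the real host the target would be /data/velero.

```shell
#!/bin/sh
# Write the S3-style credentials file that `velero install --secret-file` reads.
# write_velero_auth is a hypothetical helper, not part of Velero.
write_velero_auth() {
  dir="$1"; key_id="$2"; secret="$3"
  (
    umask 077   # the file holds a secret, so create it owner-readable only
    cat > "$dir/velero-auth.txt" <<EOF
[default]
aws_access_key_id = $key_id
aws_secret_access_key = $secret
EOF
  )
}

# Temporary directory used for the demonstration only.
workdir=$(mktemp -d)
write_velero_auth "$workdir" admin 12345678
cat "$workdir/velero-auth.txt"
```

The key ID and secret must match the MINIO_ROOT_USER and MINIO_ROOT_PASSWORD values passed to the MinIO container.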

#Prepare the user CSR file:

root@k8s-master2:/data/velero# vim skyliu-csr.json

{

"CN": "awsuser",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

#Prepare the certificate signing tools:

root@k8s-master2:/data/velero# apt install golang-cfssl

wget https://github.com/cloudflare/cfssl/releases/download/v1.6.1/cfssl_1.6.1_linux_amd64

wget https://github.com/cloudflare/cfssl/releases/download/v1.6.1/cfssljson_1.6.1_linux_amd64

wget https://github.com/cloudflare/cfssl/releases/download/v1.6.1/cfssl-certinfo_1.6.1_linux_amd64

root@k8s-master2:/data/velero# mv cfssl-certinfo_1.6.1_linux_amd64 cfssl-certinfo

root@k8s-master2:/data/velero# mv cfssl_1.6.1_linux_amd64 cfssl

root@k8s-master2:/data/velero# mv cfssljson_1.6.1_linux_amd64 cfssljson

root@k8s-master2:/data/velero# cp cfssl-certinfo cfssl cfssljson /usr/local/bin/

root@k8s-master2:/data/velero# chmod a+x /usr/local/bin/cfssl*

#Sign the certificate:

Kubernetes 1.24.x and later:

root@k8s-deploy:~# scp /etc/kubeasz/clusters/k8s-cluster1/ssl/ca-config.json 192.168.121.111:/data/velero

root@k8s-master2:/data/velero# /usr/local/bin/cfssl gencert -ca=/etc/kubernetes/ssl/ca.pem -ca-key=/etc/kubernetes/ssl/ca-key.pem -config=./ca-config.json -profile=kubernetes ./skyliu-csr.json | cfssljson -bare skyliu

Kubernetes 1.23 and earlier:

root@k8s-master2:/data/velero# /usr/local/bin/cfssl gencert -ca=/etc/kubernetes/ssl/ca.pem -ca-key=/etc/kubernetes/ssl/ca-key.pem -config=/etc/kubeasz/clusters/k8s-cluster1/ssl/ca-config.json -profile=kubernetes ./skyliu-csr.json | cfssljson -bare skyliu

#Verify the certificate files:

root@k8s-master2:/data/velero# ll skyliu*

-rw-r--r-- 1 root root 997 May 22 08:44 skyliu.csr

-rw-r--r-- 1 root root 219 May 22 08:43 skyliu-csr.json

-rw------- 1 root root 1675 May 22 08:44 skyliu-key.pem

-rw-r--r-- 1 root root 1387 May 22 08:44 skyliu.pem

#Copy the certificate to the API server certificate directory:

root@k8s-master2:/data/velero# cp skyliu-key.pem /etc/kubernetes/ssl/

root@k8s-master2:/data/velero# cp skyliu.pem /etc/kubernetes/ssl/

#Generate the cluster kubeconfig file:

# export KUBE_APISERVER="https://192.168.121.110:6443"

# kubectl config set-cluster kubernetes \

--certificate-authority=/etc/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=./skyliu.kubeconfig

#Set the client certificate credentials:

# kubectl config set-credentials skyliu \

--client-certificate=/etc/kubernetes/ssl/skyliu.pem \

--client-key=/etc/kubernetes/ssl/skyliu-key.pem \

--embed-certs=true \

--kubeconfig=./skyliu.kubeconfig

#Set the context parameters:

# kubectl config set-context kubernetes \

--cluster=kubernetes \

--user=skyliu \

--namespace=velero-system \

--kubeconfig=./skyliu.kubeconfig

#Set the default context:

# kubectl config use-context kubernetes --kubeconfig=skyliu.kubeconfig

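#Grant cluster-admin to the certificate user. The API server takes the username from the certificate CN, which is awsuser in skyliu-csr.json, so --user has to match it:

# kubectl create clusterrolebinding skyliu --clusterrole=cluster-admin --user=awsuser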

#Create the namespace:

# kubectl create ns velero-system

# Run the installation:

velero --kubeconfig ./skyliu.kubeconfig \

install \

--provider aws \

--plugins velero/velero-plugin-for-aws:v1.5.5 \

--bucket velerodata \

--secret-file ./velero-auth.txt \

--use-volume-snapshots=false \

--namespace velero-system \

--backup-location-config region=minio,s3ForcePathStyle="true",s3Url=http://192.168.121.111:9000

#Verify the installation:

root@k8s-master2:/data/velero# kubectl get pod -n velero-system

root@k8s-master2:/data/velero# kubectl get pod -n velero-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

velero-98bc8c975-pvbc6 0/1 PodInitializing 0 2m51s 10.200.107.241 192.168.121.115 <none> <none>

root@k8s-node2:~# nerdctl pull velero/velero-plugin-for-aws:v1.5.5

root@k8s-node2:~# nerdctl pull velero/velero:v1.11.0

root@k8s-master2:/data/velero# kubectl get pod -n velero-system

NAME READY STATUS RESTARTS AGE

velero-98bc8c975-q6c5d 1/1 Running 0 2m36s

1.5 Backing up the default namespace

root@k8s-master2:/data/velero# DATE=`date +%Y%m%d%H%M%S`

root@k8s-master2:/data/velero# velero backup create default-backup-${DATE} \

--include-cluster-resources=true \

--include-namespaces default \

--kubeconfig=./skyliu.kubeconfig \

--namespace velero-system

#Verify the backup:

velero backup describe default-backup-20230523094751 -n velero-system

Name: default-backup-20230523094751

Namespace: velero-system

Labels: velero.io/storage-location=default

Annotations: velero.io/source-cluster-k8s-gitversion=v1.26.4

velero.io/source-cluster-k8s-major-version=1

velero.io/source-cluster-k8s-minor-version=26

Phase: Completed

Namespaces:

Included: default

Excluded: <none>

Resources:

Included: *

Excluded: <none>

Cluster-scoped: included

Label selector: <none>

Storage Location: default

Velero-Native Snapshot PVs: auto

TTL: 720h0m0s

CSISnapshotTimeout: 10m0s

ItemOperationTimeout: 1h0m0s

Hooks: <none>

Backup Format Version: 1.1.0

Started: 2023-05-23 09:48:06 +0000 UTC

Completed: 2023-05-23 09:48:09 +0000 UTC

Expiration: 2023-06-22 09:48:06 +0000 UTC

Velero-Native Snapshots: <none included>
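The Expiration field in the output above follows from Started plus TTL. A small sketch that reproduces the arithmetic, assuming GNU `date` (for `-d`) and a TTL given in whole hours; `backup_expiration` is a hypothetical helper:

```shell
#!/bin/sh
# Compute a backup's expiration from its start time and TTL (GNU date assumed).
backup_expiration() {
  started="$1"    # UTC timestamp, e.g. "2023-05-23 09:48:06"
  ttl_hours="$2"  # TTL in whole hours
  # Convert to epoch seconds first to avoid ambiguous relative-date parsing.
  start_epoch=$(date -u -d "$started" +%s)
  date -u -d "@$((start_epoch + ttl_hours * 3600))" '+%Y-%m-%d %H:%M:%S'
}

# Values taken from the describe output above: 720h is 30 days.
backup_expiration "2023-05-23 09:48:06" 720
```

For the backup shown here this yields 2023-06-22 09:48:06, which matches the Expiration line.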

Verifying the backup in MinIO

1.6 Deleting a Pod and verifying the restore

#List the Pods

root@k8s-master2:/data/velero# kubectl get pod

NAME READY STATUS RESTARTS AGE

net-test1 1/1 Running 1 (17m ago) 3h28m

Delete the Pod

root@k8s-master2:/data/velero# kubectl delete pod net-test1

pod "net-test1" deleted

Verify

root@k8s-master2:/data/velero# kubectl get pod

No resources found in default namespace.

Restore the Pod

velero restore create --from-backup default-backup-20230523094751 --wait --kubeconfig=./skyliu.kubeconfig --namespace velero-system

Verify the Pod

root@k8s-master2:/data/velero# kubectl get pod

NAME READY STATUS RESTARTS AGE

net-test1 1/1 Running 0 41s

1.7 Backing up specific resource objects

root@k8s-master2:/data/velero# kubectl run net-test2 --image=alpine sleep 10000000000 -n myserver

pod/net-test2 created

root@k8s-master2:/data/velero# kubectl get pod -n myserver

NAME READY STATUS RESTARTS AGE

net-test2 1/1 Running 0 2m19s

root@k8s-master1:~/20230507/k8s-Resource-N76/case3-controller# kubectl get deploy -n myserver

NAME READY UP-TO-DATE AVAILABLE AGE

nginx-deployment 2/2 2 2 64s

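Back up. Note that --ordered-resources only controls the order in which the listed objects are backed up; the scope of this backup is still the whole myserver namespace selected by --include-namespaces, which is why the Deployment deleted below can be restored from it.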

root@k8s-master2:/data/velero# DATE=`date +%Y%m%d%H%M%S`

root@k8s-master2:/data/velero# velero backup create pod-backup-$DATE --include-cluster-resources=true --ordered-resources 'pods=myserver/net-test1' --namespace velero-system --include-namespaces=myserver

Backup request "pod-backup-20230523142250" submitted successfully.

Run `velero backup describe pod-backup-20230523142250` or `velero backup logs pod-backup-20230523142250` for more details.

Delete the Deployment

root@k8s-master1:~/20230507/k8s-Resource-N76/case3-controller# kubectl delete -f 3-deployment.yml

deployment.apps "nginx-deployment" deleted

root@k8s-master1:~/20230507/k8s-Resource-N76/case3-controller# kubectl get deploy -n myserver

No resources found in myserver namespace.

root@k8s-master2:/data/velero# velero restore create --from-backup pod-backup-20230523142250 --wait --kubeconfig=./skyliu.kubeconfig --namespace velero-system

Restore request "pod-backup-20230523142250-20230523143401" submitted successfully.

Waiting for restore to complete. You may safely press ctrl-c to stop waiting - your restore will continue in the background.

..................

Restore completed with status: Completed. You may check for more information using the commands `velero restore describe pod-backup-20230523142250-20230523143401` and `velero restore logs pod-backup-20230523142250-20230523143401`.

Verify the restore

root@k8s-master2:/data/velero# kubectl get deploy -n myserver

NAME READY UP-TO-DATE AVAILABLE AGE

nginx-deployment 2/2 2 2 3m23s

1.8 Backing up all namespaces in a batch

root@k8s-master2:~# cat /data/velero/ns-back.sh

#!/bin/bash

NS_NAME=`kubectl get ns | awk '{if (NR>2){print}}' | awk '{print $1}'`

DATE=`date +%Y%m%d%H%M%S`

cd /data/velero/

for i in $NS_NAME;do

velero backup create ${i}-ns-backup-${DATE} \

--include-cluster-resources=true \

--include-namespaces ${i} \

--kubeconfig=/root/.kube/config \

--namespace velero-system

done

root@k8s-master2:~# bash /data/velero/ns-back.sh
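One detail of ns-back.sh is worth checking: `kubectl get ns` prints a single header row, so the `NR>2` filter drops the header and also the first namespace listed. The sketch below runs both filters over a sample table. The table is illustrative data standing in for real kubectl output, and `ns_names` is a hypothetical helper.

```shell
#!/bin/sh
# Extract namespace names from `kubectl get ns` table output read on stdin.
# Only the header row (line 1) is skipped, so every namespace is kept.
ns_names() {
  awk 'NR > 1 { print $1 }'
}

# Sample table standing in for real `kubectl get ns` output (illustrative data).
sample='NAME            STATUS   AGE
default         Active   33d
kube-system     Active   33d
myserver        Active   18d'

echo "NR > 1 (header skipped):"
printf '%s\n' "$sample" | ns_names

echo "NR > 2 (as in ns-back.sh):"
printf '%s\n' "$sample" | awk 'NR > 2 { print $1 }'
```

If skipping the first namespace is not intended, changing the filter to `NR>1`, or asking kubectl for `--no-headers` output, would include it.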

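Verifying the backups in MinIO:

2. Multi-account authorization with RBAC

2.1 Kubernetes API authorization flow:

Authorization modes: https://kubernetes.io/zh/docs/reference/access-authn-authz/authorization

Node (node authorization): authorizes API requests made by kubelets.

It allows the kubelet on each node to read objects such as services, endpoints, secrets, and configmaps, and to update Pod and node status on the API server.

Webhook: an HTTP callback, that is, an HTTP request sent to a remote service when a certain event occurs.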

# Kubernetes API version
apiVersion: v1
# Kind of the API object
kind: Config
# clusters refers to the remote service.
clusters:
  - name: name-of-remote-authz-service
    cluster:
      # CA used to authenticate the remote service.
      certificate-authority: /path/to/ca.pem
      # Query URL of the remote service. Must use 'https'.
      server: https://authz.example.com/authorize

ABAC (Attribute-Based Access Control): used before Kubernetes 1.6; it binds attributes directly to accounts.

User user1 has full access to all resources in all API groups and all namespaces ("readonly": true is not set):

{"apiVersion": "abac.authorization.kubernetes.io/v1beta1", "kind": "Policy", "spec": {"user": "user1", "namespace": "*", "resource": "*", "apiGroup": "*"}}

User user2 has read-only access to Pods in the myserver namespace:

{"apiVersion": "abac.authorization.kubernetes.io/v1beta1", "kind": "Policy", "spec": {"user": "user2", "namespace": "myserver", "resource": "pods", "readonly": true}}

API server flags that enable ABAC:

--authorization-mode=...,RBAC,ABAC --authorization-policy-file=mypolicy.json

RBAC (Role-Based Access Control): permissions are first attached to a role, and the role is then bound to users (a binding), who inherit the permissions of that role.

role

rolebinding

2.2 Overview

The RBAC API declares four kinds of Kubernetes objects: Role, ClusterRole, RoleBinding, and ClusterRoleBinding.

Role: defines a set of rules for accessing Kubernetes resources within a namespace.

RoleBinding: binds users to a Role.

ClusterRole: defines a set of rules for accessing Kubernetes resources across the cluster (including all namespaces).

ClusterRoleBinding: binds users to a ClusterRole.

https://kubernetes.io/zh/docs/reference/access-authn-authz/rbac/ #Using RBAC authorization (Role-Based Access Control)

https://kubernetes.io/zh/docs/reference/access-authn-authz/authorization/ #Authorization overview

Configuring a Role

2.2.1: Create a ServiceAccount in the target namespace:

root@k8s-master2:/data/velero# kubectl create sa sky -n sky

serviceaccount/sky created

2.2.2: Create the Role rules:

root@k8s-master2:/data/sky# cat sky-role.yaml

kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  namespace: sky
  name: sky-role
rules:
- apiGroups: ["*"]
  resources: ["pods"]
  #verbs: ["*"]
  ##RO-Role
  verbs: ["get", "watch", "list"]
- apiGroups: ["*"]
  resources: ["pods/exec"]
  #verbs: ["*"]
  ##RO-Role
  verbs: ["get", "watch", "list","put","create"]
# API group names carry no version, so the group is "apps" rather than "apps/v1".
- apiGroups: ["extensions", "apps"]
  resources: ["deployments"]
  #verbs: ["get", "list", "watch", "create", "update", "patch", "delete"]
  ##RO-Role
  verbs: ["get", "watch", "list"]
- apiGroups: ["*"]
  resources: ["*"]
  #verbs: ["get", "list", "watch", "create", "update", "patch", "delete"]
  ##RO-Role
  verbs: ["get", "watch", "list"]

root@k8s-master2:/data/sky# kubectl apply -f sky-role.yaml

role.rbac.authorization.k8s.io/sky-role created

2.2.3: Bind the rules to the account:

root@k8s-master2:/data/sky# cat sky-role-bind.yaml

kind: RoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: role-bind-sky
  namespace: sky
subjects:
- kind: ServiceAccount
  name: sky
  namespace: sky
roleRef:
  kind: Role
  name: sky-role
  apiGroup: rbac.authorization.k8s.io

root@k8s-master2:/data/sky# kubectl apply -f sky-role-bind.yaml

rolebinding.rbac.authorization.k8s.io/role-bind-sky created
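Before logging in with the token, the binding can be checked from an admin session with `kubectl auth can-i` and impersonation. The sketch below only prints the commands, so it runs without a cluster; `sa_username` and `can_i_cmd` are hypothetical helpers. A ServiceAccount is seen by the API server as `system:serviceaccount:<namespace>:<name>`.

```shell
#!/bin/sh
# Build the username the API server assigns to a ServiceAccount.
sa_username() {
  printf 'system:serviceaccount:%s:%s\n' "$1" "$2"   # $1 = namespace, $2 = name
}

# Print (not run) a permission check that impersonates that ServiceAccount.
can_i_cmd() {
  ns="$1"; sa="$2"; verb="$3"; resource="$4"
  printf 'kubectl auth can-i %s %s -n %s --as=%s\n' \
    "$verb" "$resource" "$ns" "$(sa_username "$ns" "$sa")"
}

can_i_cmd sky sky list pods
can_i_cmd sky sky delete pods
```

With the read-only Role above, the first check would be expected to answer yes and the second no when the printed commands are run against the cluster.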

2.2.4 Create the token

root@k8s-master2:/data/sky# cat sky-token.yaml

apiVersion: v1
kind: Secret
type: kubernetes.io/service-account-token
metadata:
  name: sky-user-token
  namespace: sky
  annotations:
    kubernetes.io/service-account.name: "sky"

root@k8s-master2:/data/sky# kubectl apply -f sky-token.yaml

secret/sky-user-token created

2.2.5: Find the token Secret name:

root@k8s-master1:/usr/local/src# kubectl get secrets -A

NAMESPACE NAME TYPE DATA AGE

kube-system calico-etcd-secrets Opaque 3 33d

kubernetes-dashboard dashboard-admin-user kubernetes.io/service-account-token 3 5m21s

kubernetes-dashboard kubernetes-dashboard-certs Opaque 0 17m

kubernetes-dashboard kubernetes-dashboard-csrf Opaque 1 17m

kubernetes-dashboard kubernetes-dashboard-key-holder Opaque 2 17m

sky sky-user-token kubernetes.io/service-account-token 3 24h

velero-system cloud-credentials Opaque 1 30h

velero-system velero-repo-credentials Opaque 1 30h

2.2.6 Get the token value

root@k8s-master1:/usr/local/src# kubectl describe secrets -n sky sky-user-token

Name: sky-user-token

Namespace: sky

Labels: <none>

Annotations: kubernetes.io/service-account.name: sky

kubernetes.io/service-account.uid: 19df0a38-8a4e-4148-a482-8a0afe405a41

Type: kubernetes.io/service-account-token

Data

====

ca.crt: 1310 bytes

namespace: 3 bytes

token: eyJhbGciOiJSUzI1NiIsImtpZCI6IkVxTXUxaGxkeEk3SU1yZlJnNFViVzhBVnFNc3hRbnZFa1VMdDZaNkd3QlEifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJza3kiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlY3JldC5uYW1lIjoic2t5LXVzZXItdG9rZW4iLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC5uYW1lIjoic2t5Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQudWlkIjoiMTlkZjBhMzgtOGE0ZS00MTQ4LWE0ODItOGEwYWZlNDA1YTQxIiwic3ViIjoic3lzdGVtOnNlcnZpY2VhY2NvdW50OnNreTpza3kifQ.aXC3o6ERVq8-Fd4KiI_EKY4Km8_ChbfEpKfiPecCK3TtvQaPmXEd-KOjWRoHv6ajaEM5tseIYa2vbE8vE7KUDedPYR0lf9jN-OBFO-Oa96J5Hsg8_BSvkB3D7DYafzU6g_jAfhi5yVzBGHvreoIksjLoGeRIUs5gh53hdY2Sh3nHykPfQpVPRqsxZMiX4wYzyKOPENs6zq34i8bhCi8LMGB-lyoq1wx694ajDjH7UalJpIGFAlVqd7WO2oF01_JAnSM5VWeNAU8YpL6NqdW2St1zIOdsdb3A_8e1eOtHhgb_T6oMVsuFzp5IL1VX_SoPaObh2QUVIyY1R2_P4iObtA
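A ServiceAccount token like the one above is a JWT: three base64url fields separated by dots, the second of which holds the claims (namespace, account name, `sub`). A minimal sketch for inspecting that field, assuming a `base64` that supports `-d`; `jwt_payload` is a hypothetical helper, and the demo token is built locally with a dummy header and signature rather than copied from the cluster.

```shell
#!/bin/sh
# Decode the payload (second dot-separated field) of a JWT such as a ServiceAccount token.
jwt_payload() {
  # base64url uses '-' and '_' where standard base64 uses '+' and '/'.
  payload=$(printf '%s' "$1" | cut -d. -f2 | tr '_-' '/+')
  # base64url drops '=' padding; restore it so `base64 -d` accepts the input.
  while [ $(( ${#payload} % 4 )) -ne 0 ]; do
    payload="${payload}="
  done
  printf '%s' "$payload" | base64 -d
}

# Build a throwaway token locally (header and signature are dummies) to try the function.
claims='{"sub":"system:serviceaccount:sky:sky"}'
body=$(printf '%s' "$claims" | base64 | tr -d '=\n' | tr '/+' '_-')
jwt_payload "header.$body.signature"
```

Decoding only reads the claims; it does not verify the signature, so it is useful for checking which account a token belongs to, not for trusting it.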

2.2.7: Log in to the dashboard to test:

Verify whether delete operations are permitted

Configuring a ClusterRole

root@k8s-master2:/data/sky# kubectl create sa sky510 -n sky

root@k8s-master2:/data/sky# cat sky-clusterrole.yaml

kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: CR
rules:
# Allow Metrics Scraper to get metrics from the Metrics server
- apiGroups: [""]
  resources: ["pods", "nodes"]
  verbs: ["get", "list", "watch"]

root@k8s-master2:/data/sky# cat sky-clusterrole-bind.yaml

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: CR-bind
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: CR
subjects:
- kind: ServiceAccount
  name: sky510
  namespace: sky

root@k8s-master2:/data/sky# cat sky510-token.yaml

apiVersion: v1
kind: Secret
type: kubernetes.io/service-account-token
metadata:
  name: sky510-user-token
  namespace: sky
  annotations:
    kubernetes.io/service-account.name: "sky510"

root@k8s-master2:/data/sky# kubectl get secrets -n sky

NAME TYPE DATA AGE

sky-user-token kubernetes.io/service-account-token 3 24h

sky510-user-token kubernetes.io/service-account-token 3 11m

root@k8s-master2:/data/sky# kubectl describe secrets -n sky sky510-user-token

Name: sky510-user-token

Namespace: sky

Labels: <none>

Annotations: kubernetes.io/service-account.name: sky510

kubernetes.io/service-account.uid: 171a2619-286e-4db8-b831-3d12b9a596c8

Type: kubernetes.io/service-account-token

Data

====

ca.crt: 1310 bytes

namespace: 3 bytes

token: eyJhbGciOiJSUzI1NiIsImtpZCI6IkVxTXUxaGxkeEk3SU1yZlJnNFViVzhBVnFNc3hRbnZFa1VMdDZaNkd3QlEifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJza3kiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlY3JldC5uYW1lIjoic2t5NTEwLXVzZXItdG9rZW4iLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC5uYW1lIjoic2t5NTEwIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQudWlkIjoiMTcxYTI2MTktMjg2ZS00ZGI4LWI4MzEtM2QxMmI5YTU5NmM4Iiwic3ViIjoic3lzdGVtOnNlcnZpY2VhY2NvdW50OnNreTpza3k1MTAifQ.Ptqaz4N8qUz_b3CIkFT150SIaUIABJPwsRdos2fIHdbBqQmY65YVPOU3b0i9oPpljpfrApNeQ7mrb9jch5ZRcARx12ysbn3yDIOis_o-3DH0MvV5mjCvVfd8ZQT4FKqj1pxPwRwQoqshiny34izW3PcmbKvn1Ah5-rgts0WmUJyB-kgFGLSG_EOX9bgXk0HkrrLY0FfccRNqHqohIDFcVZ3xdTazuYnFs3rZfY2coGWNG-tQLH4M6ku27iRGieqfUlmNvULJbQ7j1EWzYtGps1oWaj094PFwMT3_ws0XLhgELUOOIVkglS-Sjb-GCn9MvlJj8jNXwLcUQeYmDs3kGw

Verify

Part 2: Logging in with a kubeconfig file:

2.1: Create the CSR file:

root@k8s-master2:~/test# cat sky-csr.json

{

"CN": "China",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

2.2: Sign the certificate:

root@k8s-master2:~/test# apt install golang-cfssl

wget https://github.com/cloudflare/cfssl/releases/download/v1.6.1/cfssl_1.6.1_linux_amd64

wget https://github.com/cloudflare/cfssl/releases/download/v1.6.1/cfssljson_1.6.1_linux_amd64

wget https://github.com/cloudflare/cfssl/releases/download/v1.6.1/cfssl-certinfo_1.6.1_linux_amd64

root@k8s-master2:~/test# mv cfssl-certinfo_1.6.1_linux_amd64 cfssl-certinfo

root@k8s-master2:~/test# mv cfssl_1.6.1_linux_amd64 cfssl

root@k8s-master2:~/test# mv cfssljson_1.6.1_linux_amd64 cfssljson

root@k8s-master2:~/test# cp cfssl-certinfo cfssl cfssljson /usr/local/bin/

root@k8s-master2:~/test# chmod a+x /usr/local/bin/cfssl*

root@k8s-master2:~/test# cfssl gencert -ca=/etc/kubernetes/ssl/ca.pem -ca-key=/etc/kubernetes/ssl/ca-key.pem -config=/data/velero/ca-config.json -profile=kubernetes sky-csr.json | cfssljson -bare hui

root@k8s-master2:~/test# ls

hui.csr hui-key.pem hui.pem sky-csr.json

2.3: Generate the kubeconfig file for the regular user:

root@k8s-master2:~/test# kubectl config set-cluster hui --certificate-authority=/etc/kubernetes/ssl/ca.pem --embed-certs=true --server=https://192.168.121.188:6443 --kubeconfig=hui.kubeconfig #--embed-certs=true embeds the certificate data in the kubeconfig

2.4: Set the client authentication parameters:

# cp *.pem /etc/kubernetes/ssl/

# kubectl config set-credentials hui \

--client-certificate=/etc/kubernetes/ssl/hui.pem \

--client-key=/etc/kubernetes/ssl/hui-key.pem \

--embed-certs=true \

--kubeconfig=hui.kubeconfig

2.5: Set the context parameters (contexts distinguish multiple clusters)

https://kubernetes.io/zh/docs/concepts/configuration/organize-cluster-access-kubeconfig/

# kubectl config set-context hui \

--cluster=hui \

--user=hui \

--namespace=sky \

--kubeconfig=hui.kubeconfig

2.5: Set the default context

# kubectl config use-context hui --kubeconfig=hui.kubeconfig

2.6 Create the account

root@k8s-master2:~/test# kubectl create sa hui -n sky

serviceaccount/hui created

root@k8s-master2:~/test# kubectl get sa -n sky

NAME SECRETS AGE

default 0 47h

hui 0 17m

sky 0 47h

sky510 0 22h

#Create the ClusterRole

root@k8s-master2:~/test# cat hui-clusterrole.yaml

kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: love
rules:
# Allow Metrics Scraper to get metrics from the Metrics server
- apiGroups: [""]
  resources: ["pods", "nodes"]
  verbs: ["get", "list", "watch"]

root@k8s-master2:~/test# kubectl apply -f hui-clusterrole.yaml

clusterrole.rbac.authorization.k8s.io/love created

root@k8s-master2:~/test# kubectl get clusterrole

NAME CREATED AT

love 2023-05-25T15:14:08Z

#Create the ClusterRoleBinding

root@k8s-master2:~/test# cat hui-clusterrole-bind.yaml

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: love-bind
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: love
subjects:
- kind: ServiceAccount
  name: hui
  namespace: sky

root@k8s-master2:~/test# kubectl apply -f hui-clusterrole-bind.yaml

clusterrolebinding.rbac.authorization.k8s.io/love-bind created

root@k8s-master2:~/test# kubectl get clusterrolebinding

NAME ROLE AGE

love-bind ClusterRole/love 118s

#Create the token

root@k8s-master2:~/test# cat hui-token.yaml

apiVersion: v1
kind: Secret
type: kubernetes.io/service-account-token
metadata:
  name: hui-user-token
  namespace: sky
  annotations:
    kubernetes.io/service-account.name: "hui"

root@k8s-master2:~/test# kubectl apply -f hui-token.yaml

secret/hui-user-token created

root@k8s-master2:~/test# kubectl get secrets -n sky hui-user-token

NAME TYPE DATA AGE

hui-user-token kubernetes.io/service-account-token 3 51s

2.7: Get the token:

root@k8s-master2:~/test# kubectl describe secrets -n sky hui-user-token

Name: hui-user-token

Namespace: sky

Labels: <none>

Annotations: kubernetes.io/service-account.name: hui

kubernetes.io/service-account.uid: ee40508e-b9c6-4771-8e1e-017f264a0fa5

Type: kubernetes.io/service-account-token

Data

====

ca.crt: 1310 bytes

namespace: 3 bytes

token: eyJhbGciOiJSUzI1NiIsImtpZCI6IkVxTXUxaGxkeEk3SU1yZlJnNFViVzhBVnFNc3hRbnZFa1VMdDZaNkd3QlEifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJza3kiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlY3JldC5uYW1lIjoiaHVpLXVzZXItdG9rZW4iLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC5uYW1lIjoiaHVpIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQudWlkIjoiZWU0MDUwOGUtYjljNi00NzcxLThlMWUtMDE3ZjI2NGEwZmE1Iiwic3ViIjoic3lzdGVtOnNlcnZpY2VhY2NvdW50OnNreTpodWkifQ.gRXgpn3wn3O5Bs6JMYW_TxRebLVwiNZFQZyj7NunHKIIR8__uQr1ISbaYNaIDSdwHVuKZ0ZUZNUGhJ5YHQX5JjIttl8WrOknWLLkCzLf2_uBcpOQo_5HsfpedfNFfmm9o1O1gLVu2uN9JQfbMALI6jwV0lAmnSc120G_xwpIY0f9oXNnVa59vzCpKGy5k5UKU3sTG7xrbJty0HiGZRrBRBSer1AMPe4TLG8OOg7sRd_AwRQ9WtnM16U5p7Q5A2MaVX8dhM6NbIPIurbkeynJsyn_bI-qOLixq2Kb8YABejqAuWXECfyMhSv3KfnYuzEdc-Yxx38V5KS-_ajquIP-bQ

2.8: Write the token into the user's kubeconfig file:

root@k8s-master2:~/test# vim hui.kubeconfig

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSURtakNDQW9LZ0F3SUJBZ0lVWmc5Si9YeFlndUFjSDh1dVdrcjVOQkpkZkxvd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1pERUxNQWtHQTFVRUJoTUNRMDR4RVRBUEJnTlZCQWdUQ0VoaGJtZGFhRzkxTVFzd0NRWURWUVFIRXdKWQpVekVNTUFvR0ExVUVDaE1EYXpoek1ROHdEUVlEVlFRTEV3WlRlWE4wWlcweEZqQVVCZ05WQkFNVERXdDFZbVZ5CmJtVjBaWE10WTJFd0lCY05Nak13TkRJd01USTFNREF3V2hnUE1qRXlNekF6TWpjeE1qVXdNREJhTUdReEN6QUoKQmdOVkJBWVRBa05PTVJFd0R3WURWUVFJRXdoSVlXNW5XbWh2ZFRFTE1Ba0dBMVVFQnhNQ1dGTXhEREFLQmdOVgpCQW9UQTJzNGN6RVBNQTBHQTFVRUN4TUdVM2x6ZEdWdE1SWXdGQVlEVlFRREV3MXJkV0psY201bGRHVnpMV05oCk1JSUJJakFOQmdrcWhraUc5dzBCQVFFRkFBT0NBUThBTUlJQkNnS0NBUUVBclVzakptRlQyK3NSL3BUVHEvRUEKdnh5Ly9QKzZkdWNGV1RtcG4xN1BBK1FKNVVvUHRwODVlekNYRitrSU5mOEFFYmp2RWxlZnEwK3pnOC9NbFpTMAo3dUlHSFBmUmxjTGdzdGdJNHBEODlKRW0zRkNxS3VIWE5pUURnZW1uc3VKeHRFWTNlclRpNUtNUWxXQkFyK2EvCjRUNk5xWmdwOVZEUjArWDVGSEo5Q2ZiaHdROFI3SUxDaFluaXltanlwR1Q5aEYyQjhoVUF2MHhLL1QrRk1ZN0YKTFEreHVaV1RYSS9mN0V0OTVBd3hOYnAyS1pvOTFUSTR4aXhRSGhsWEwyS0duaGE1YThvbVQrZjdhVWtWR2c1dQoydUVVelkxaGE5R2swVS92VjkzdXR2Z1R1cHJZWXlPaEsvcHY0MW4xYVRBMTN5Mmw0ZE1LV3RVQXdEM214WHd6CnJ3SURBUUFCbzBJd1FEQU9CZ05WSFE4QkFmOEVCQU1DQVFZd0R3WURWUjBUQVFIL0JBVXdBd0VCL3pBZEJnTlYKSFE0RUZnUVUxWVZBTkpnVWFnbk4xUHY1KyswWG5yQWh5T2N3RFFZSktvWklodmNOQVFFTEJRQURnZ0VCQUlkZQpNN2xvM0duaGI3REU0VjlKM3RuNGFUaS9lWVh4bENJcVVtR1RwMW9QRTJNc2dMcjZvcXFnd3dIcEJ3bnFrc3FMCmYvQ3FSazB1S1NTVXFzVzduK2lPMDZXMFZlUUhaNHdicm9LUXNNd1Z0d2tObDY0UGVheHp1K3NSd2FHVXVBZmoKaTJDSHJyRFFUVlgzTnpyZzQ5RE9obXJ4QTFORFRYeXB1NG55bkVFN1VjZDlaMzhLbjhnM1hCN1FzS1Q2QjVKLwpNNVF5SE9QcHBJbHFVeWhaTDdLTkE5QkF5bTRGeU4xUGt2bUxTeCthdkZhRnp6Qk1jQ1B6SWpPMHBwOTU0blZXClBQS24vQkhWUWhmbmNsN1FEQmRCN2ErYzAyUFJCbXN0VnJIN2lPVHB3V3A2Rjk3UmRjSnl1QkJoZWVaR1ZYQTcKUEE5ajRYV1JUOWhPdDlaNUxIST0KLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQo=

server: https://192.168.121.188:6443

name: hui

contexts:

- context:

cluster: hui

namespace: sky

user: hui

name: hui

current-context: hui

kind: Config

preferences: {}

users:

- name: hui

user:

client-certificate-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUQwekNDQXJ1Z0F3SUJBZ0lVQlo2VnNOaXZWWm16ZUhwSGhBQ3VwSlVkVXYwd0RRWUpLb1pJaHZjTkFRRUwKQlFBd1pERUxNQWtHQTFVRUJoTUNRMDR4RVRBUEJnTlZCQWdUQ0VoaGJtZGFhRzkxTVFzd0NRWURWUVFIRXdKWQpVekVNTUFvR0ExVUVDaE1EYXpoek1ROHdEUVlEVlFRTEV3WlRlWE4wWlcweEZqQVVCZ05WQkFNVERXdDFZbVZ5CmJtVjBaWE10WTJFd0lCY05Nak13TlRJMU1UUXpPREF3V2hnUE1qQTNNekExTVRJeE5ETTRNREJhTUdBeEN6QUoKQmdOVkJBWVRBa05PTVJBd0RnWURWUVFJRXdkQ1pXbEthVzVuTVJBd0RnWURWUVFIRXdkQ1pXbEthVzVuTVF3dwpDZ1lEVlFRS0V3TnJPSE14RHpBTkJnTlZCQXNUQmxONWMzUmxiVEVPTUF3R0ExVUVBeE1GUTJocGJtRXdnZ0VpCk1BMEdDU3FHU0liM0RRRUJBUVVBQTRJQkR3QXdnZ0VLQW9JQkFRRFBJbnRTZGZVeEdZZzBGQ2JCZU10VVVBdFAKWXRCQkQ1VFc0STQ4TDRWZUFmV0hyZFNzQ20wamg2OXVNTEZ6MUZCeFlCbmpmZGRWZEdFUVY2aGx3UW85TzExbgpHOUFCWTdVUnltdmxoSnJZWHVsZnY2UUFOSlZkVFFaZytkNTZ4bTFXMjVGUVl5NGFmT2d2blA3NWgyY2hZOVpVCkhlaytjcTh4ZHlEeUgyVHN1alhHb215bzBEL3lWblBpMG5tTjlLajNEOXQ3TFNJM0s2cmRrdEt1ckhXU1o1WHAKV0wxWWxpdDNxYzV5aDNpT0grSittMzZTdllkMUthMGphZ3hTdlFvMy9wN24rOUtMSXFvMFliajh4UldUbFp6VwpoMUt2RWtwVE1zK0FFK0Y0THFRZXZOVUlPanUvWWNDYTZmcWl2UXIwMHg4aExqZDNUcE9rYVV0RCt1dHRBZ01CCkFBR2pmekI5TUE0R0ExVWREd0VCL3dRRUF3SUZvREFkQmdOVkhTVUVGakFVQmdnckJnRUZCUWNEQVFZSUt3WUIKQlFVSEF3SXdEQVlEVlIwVEFRSC9CQUl3QURBZEJnTlZIUTRFRmdRVXNoQzl3T3lsVWw5MXl6cm8xMERmQUIwVgpTZEl3SHdZRFZSMGpCQmd3Rm9BVTFZVkFOSmdVYWduTjFQdjUrKzBYbnJBaHlPY3dEUVlKS29aSWh2Y05BUUVMCkJRQURnZ0VCQUgzQk5IYXVzajZlQUV5RWw5SWNVS0Q5MGdFZHJhTkZaQUpXdlVUcG10aFV5cG1QTkhsUk9pQloKcTZwM0tmU1kyVHVxdm1wNzJHTzNCcHFaZzNjamdkdHBEbjl4SjZKNjNjTjZXYmc1WmM4NTlMMk9ETWlyc3UxcwpHNXMxNXhobmphYW8vSHRzSkIvTVQ3VW1HVnFmbTVNdVVGd2Ztd1d2T3lpK0FZTmxFL1pOTjZWbVVnQTBCSUVtClhhOXpTdUN4VitmdHBvTWljNFJKQjE2emFrVGg4SVFyMU9YNlJMTVBpOTg5S1NoL01JVjNqTmNjK2NlZm02dVYKTFAwVitCdlUvZVZZZC94bW9veVM0Z1pIeWpiSEhYeHdjdGhYN0NzeEthOWphNGJnNk1WcGx0SHBadVE5VWFuSQpYRmpnVDBXbGF2bDlBMWdJSks2aTdiaERaT2xsY3VFPQotLS0tLUVORCBDRVJUSUZJQ0FURS0tLS0tCg==

client-key-data: LS0tLS1CRUdJTiBSU0EgUFJJVkFURSBLRVktLS0tLQpNSUlFb3dJQkFBS0NBUUVBenlKN1VuWDFNUm1JTkJRbXdYakxWRkFMVDJMUVFRK1UxdUNPUEMrRlhnSDFoNjNVCnJBcHRJNGV2YmpDeGM5UlFjV0FaNDMzWFZYUmhFRmVvWmNFS1BUdGRaeHZRQVdPMUVjcHI1WVNhMkY3cFg3K2sKQURTVlhVMEdZUG5lZXNadFZ0dVJVR011R256b0w1eisrWWRuSVdQV1ZCM3BQbkt2TVhjZzhoOWs3TG8xeHFKcwpxTkEvOGxaejR0SjVqZlNvOXcvYmV5MGlOeXVxM1pMU3JxeDFrbWVWNlZpOVdKWXJkNm5PY29kNGpoL2lmcHQrCmtyMkhkU210STJvTVVyMEtOLzZlNS92U2l5S3FOR0c0L01VVms1V2Mxb2RTcnhKS1V6TFBnQlBoZUM2a0hyelYKQ0RvN3YySEFtdW42b3IwSzlOTWZJUzQzZDA2VHBHbExRL3JyYlFJREFRQUJBb0lCQVFDdS9EMWNrMlFaSDYydAorVndvVStqS0NIa1ZqcS9LVnVSeGh2RUNMVThvOU5TODA0QjMrckxxc2lUbEhPTzhxNTl0dURjR3RYZmxyRlNYCm5zWVhlRFl6Tm1TWXg2azRrMGdUaUlNUU9hOHFuVHZnZEtDU3Y5bHpJYkFDMnZRMW1rNGljNGxXZFFNc3cxclAKWm4wTXhuTzhoSUE3UGEyZTRQblordjd0TE5KeEhQVWdZUjJZNE92eUM2R3ZDaHJia2pxYlRvNy8vQm9zRGJHdApMd0xPazl6REJ5eHJQQmcrc0o2TnZOaVFqNmlyUjJBeVFEQWRNTElwcFM1SUlVYWFwaGNmUTJHZjhyZVM0dmdwCjNaRkduK1dNV3VKdW5ZTUVrNDBaZzY1WTdMUHk3aC94QW9UV1QvSCtRaEdoZXc2NnZsMCtHNHRJYUZsd0xYdFcKRFpIYVVUOUJBb0dCQU40c0NSanpyd0wxTWJYTGpnK05Lb1RZaXVqRVZEQ1FQVlBQbDZPQnNsSHRhYUFaV3FCWAprcDJnM2djQ0YxaW84YXpHV1pRLzJTT3V6QVdDRDNPeFB6UEo3UEo1dHUxelNyWFJQRnh0Um1oaldTd29EL0xYCjdGd1BDU3EzWEtkaDJqOGplNEFCVUhaNzJoS2pMZlBjam0yb1U2WjUxRUE4d1FYTVNlaDNUNHR4QW9HQkFPNnMKVDlPQzFEOUY3eTBuSkZXTzBORXU1WEpXNm01QUlSMnNUdWRuOGx3KzVKUG8yM3pVYjVXMUMydWp6SDFnU0FTZAp1Q2pjR01rak5odDJEdUNLbkFjaHBlNzYvWGFOWnhjeVM4MitFeVhaL0RocFZLTVRWL1FCRVIxZ1NrMmY3a2J3CmkxRzR3djVUYm1EWk15L2VDQkhzbS93ZW1ReTl1UlNxRFFlVytnbTlBb0dBYlhsYlhqMHRIcEw5Vkt3aHF3NFAKUm5pQk1pTVRyUDVXQ2NjLzNDU2JYbjFTejczT2h6Vy9uQVpaZ1RDSm1ubGM1SnEwSnpXeTVEOU1idVpnZ014MAo3U3J4bzZWUCt2OFZjRFBTdjJSbERpanVGckVDOHRGc3VRdjdvMTNJdlAyZGtnRUU2TlU4OWJVZmhwRjdvaThxCnkyUG5IQi9wODJFOFo0UDdZeDN2UnpFQ2dZQTc1M0hOczV1VUdmaHpDOHo1MEhPbTNTOW5xRnNFdXdIVTBjZW8KR3hYZ2cwU1p2eXMveEk0Uk5EU2VtcWtibXN2WXBNRnhOL1RjbndMWWw2UWFSWS90MWtzd2xUeUN3ZkRyQ0l1dwpJeEhwUVRJbDhvSDB3RWttREJLQW5nZG9Qa2p1OHpiMGx2d1NHMXlyNERnUnZwZWw4QTRpbElkemhEYnM4ZFY5Clh5NTR2UUtCZ0FWbktUajM2dTdib0o1Ui9
hbTJ5UGpsOElRRmVqbGhSUUpXdlRDZERBWUVPRkQwbTZZUEwvR2oKc3ZCaGFmL2xwZy9tYk12U0lxYWppTlVpL2owNEFhTjF5SS9HV0J0N0xFMVRWTkdEVlprQkNKQVl3aE1oQjRDcAozWVhldkMvUEkrVlc5bkt0VFptMUxURkVMOGNaM1I4aUthTjhaNWtXM21OdjNwL3NpYzdmCi0tLS0tRU5EIFJTQSBQUklWQVRFIEtFWS0tLS0tCg==

token: eyJhbGciOiJSUzI1NiIsImtpZCI6IkVxTXUxaGxkeEk3SU1yZlJnNFViVzhBVnFNc3hRbnZFa1VMdDZaNkd3QlEifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJza3kiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlY3JldC5uYW1lIjoiaHVpLXVzZXItdG9rZW4iLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC5uYW1lIjoiaHVpIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQudWlkIjoiZWU0MDUwOGUtYjljNi00NzcxLThlMWUtMDE3ZjI2NGEwZmE1Iiwic3ViIjoic3lzdGVtOnNlcnZpY2VhY2NvdW50OnNreTpodWkifQ.gRXgpn3wn3O5Bs6JMYW_TxRebLVwiNZFQZyj7NunHKIIR8__uQr1ISbaYNaIDSdwHVuKZ0ZUZNUGhJ5YHQX5JjIttl8WrOknWLLkCzLf2_uBcpOQo_5HsfpedfNFfmm9o1O1gLVu2uN9JQfbMALI6jwV0lAmnSc120G_xwpIY0f9oXNnVa59vzCpKGy5k5UKU3sTG7xrbJty0HiGZRrBRBSer1AMPe4TLG8OOg7sRd_AwRQ9WtnM16U5p7Q5A2MaVX8dhM6NbIPIurbkeynJsyn_bI-qOLixq2Kb8YABejqAuWXECfyMhSv3KfnYuzEdc-Yxx38V5KS-_ajquIP-bQ

2.9: Dashboard login test:

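3. Elastic scaling of Pod replicas based on Pod CPU utilization with the HPA controller and metrics-server

3.1 Introduction to Pod scaling

The number of Pod replicas is adjusted dynamically according to the current Pod load: during business peaks the replicas are scaled out automatically so that requests are served promptly.

During off-peak periods the Pods are scaled in to reduce cost and improve efficiency.

Public clouds also support elastic scaling at the node level.

3.2 Adjusting the Pod replica count manually:

root@k8s-master1:~# kubectl get pod -n myserver #current Pod replicas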

NAME READY STATUS RESTARTS AGE

myserver-myapp-deployment-name-fb44b4447-pwwxq 1/1 Running 1 (5h15m ago) 11d

myserver-myapp-frontend-deployment-855c89f977-th64z 1/1 Running 2 (5h15m ago) 11d

myserver-tomcat-app1-deployment-86bc8cbcb5-k9vc7 1/1 Running 7 (5h15m ago) 18d

root@k8s-master1:~# kubectl --help | grep scale #command help

scale Set a new size for a deployment, replica set, or replication controller

autoscale Auto-scale a deployment, replica set, stateful set, or replication controller

root@k8s-master1:~# kubectl scale --help

root@k8s-master1:~# kubectl scale deployment myserver-tomcat-app1-deployment --replicas=2 -n myserver

deployment.apps/myserver-tomcat-app1-deployment scaled

Verify the Pod replica count:

root@k8s-master1:~# kubectl get pod -n myserver

NAME READY STATUS RESTARTS AGE

myserver-myapp-deployment-name-fb44b4447-pwwxq 1/1 Running 1 (5h53m ago) 11d

myserver-myapp-frontend-deployment-855c89f977-th64z 1/1 Running 2 (5h53m ago) 11d

myserver-tomcat-app1-deployment-86bc8cbcb5-gdczd 1/1 Running 0 42s

myserver-tomcat-app1-deployment-86bc8cbcb5-k9vc7 1/1 Running 7 (5h53m ago) 18d

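3.3 Types of autoscalers:

Horizontal Pod Autoscaler (HPA): adjusts the number of Pod replicas horizontally based on Pod resource utilization.

Vertical Pod Autoscaler (VPA): adjusts the resource requests and limits of individual Pods based on their resource utilization; it should not be combined with an HPA that scales on the same CPU or memory metrics.

Cluster Autoscaler (CA): adds or removes nodes based on node resource usage in the cluster, so that CPU and memory are available for creating Pods.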

3.4 PA控制器简介:

Horizontal Pod Autoscaling (HPA)控制器,根据预定义好的阈值及pod当前的资源利用率,自动控制在k8s集群中运行的pod数量(自动弹性水平自动伸缩).

-horizontal-pod-autoscaler-sync-period #默认每隔15s(可以通过–horizontal-pod-autoscaler-sync-period修改)查询metrics的资源使用情况。

--horizontal-pod-autoscaler-downscale-stabilization #缩容间隔周期,默认5分钟。

--horizontal-pod-autoscaler-sync-period #HPA控制器同步pod副本数的间隔周期

--horizontal-pod-autoscaler-cpu-initialization-period #初始化延迟时间,在此时间内pod的CPU资源指标将不会生效,默认为5分钟。

--horizontal-pod-autoscaler-initial-readiness-delay #用于设置pod准备时间, 在此时间内的 pod 统统被认为未就绪及不采集数据,默认为30秒。

--horizontal-pod-autoscaler-tolerance #HPA控制器能容忍的数据差异(浮点数,默认为0.1),即新的指标要与当前的阈值差异在0.1或以上, 即要大于1+0.1=1.1,或小于1-0.1=0.9,比如阈值为CPU利用率50%,当前为80%,那么80/50=1.6 > 1.1则会触发扩容,分之会缩容。即触发条件:avg(CurrentPodsConsumption) / Target >1.1 或 <0.9=把N个pod的数据相加后根据pod的数量计算出平均数除以阈值,大于1.1就扩容,小于0.9就缩容。

计算公式:TargetNumOfPods = ceil(sum(CurrentPodsCPUUtilization) / Target) #ceil是一个向上取整的目的pod整数。
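The tolerance check and the formula above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `desired_replicas` and its parameters are hypothetical, utilization is given as one percentage per pod, and the real controller additionally applies stabilization windows and special handling for unready pods.

```python
import math

def desired_replicas(pod_utilizations, target, tolerance=0.1,
                     min_replicas=1, max_replicas=10):
    """Simplified model of the HPA decision (hypothetical helper, not the controller code)."""
    current = len(pod_utilizations)
    ratio = (sum(pod_utilizations) / current) / target  # avg(CurrentPodsConsumption) / Target
    if abs(ratio - 1.0) <= tolerance:
        return current  # within tolerance: keep the current replica count
    # TargetNumOfPods = ceil(sum(CurrentPodsCPUUtilization) / Target)
    wanted = math.ceil(sum(pod_utilizations) / target)
    return max(min_replicas, min(max_replicas, wanted))  # clamp to minReplicas..maxReplicas

print(desired_replicas([80, 80, 80], target=50, min_replicas=3))  # ratio 1.6, scale out
print(desired_replicas([52, 50, 48], target=50, min_replicas=3))  # ratio 1.0, no change
print(desired_replicas([10, 10, 10], target=60, min_replicas=3))  # scale-in clamped to the minimum
```

With three pods at 80% and a 50% target the sketch asks for ceil(240/50) = 5 replicas, matching the 80/50 = 1.6 example in the text.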

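Metric data requires metrics-server to be deployed; HPA uses metrics-server as its data source.

https://github.com/kubernetes-sigs/metrics-server

The HPA controller was introduced in k8s 1.1. Early versions used the Heapster component to collect pod metrics; starting with k8s 1.11, Metrics Server collects the data and exposes it through the aggregated API (metrics.k8s.io; custom.metrics.k8s.io and external.metrics.k8s.io are the corresponding aggregated APIs for custom and external metrics). The HPA controller queries these APIs in order to scale pods in or out based on the utilization of a given resource.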

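3.5 Deploying metrics-server:

Metrics Server is the source of container resource metrics for the autoscaling pipelines built into Kubernetes; it is not part of the cluster by default and has to be deployed.

Metrics Server collects resource metrics from the kubelet on each node and exposes them through the Metrics API in the Kubernetes apiserver, for use by the Horizontal Pod Autoscaler and the Vertical Pod Autoscaler; the metrics can also be viewed with kubectl top node/pod.

Download: https://github.com/kubernetes-sigs/metrics-server/releases/tag/v0.6.3

# Deploy metrics-server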

root@k8s-master2:~/test# cat metrics-server-v0.6.1.yaml

apiVersion: v1

kind: ServiceAccount

metadata:

labels:

k8s-app: metrics-server

name: metrics-server

namespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

labels:

k8s-app: metrics-server

rbac.authorization.k8s.io/aggregate-to-admin: "true"

rbac.authorization.k8s.io/aggregate-to-edit: "true"

rbac.authorization.k8s.io/aggregate-to-view: "true"

name: system:aggregated-metrics-reader

rules:

- apiGroups:

- metrics.k8s.io

resources:

- pods

- nodes

verbs:

- get

- list

- watch

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

labels:

k8s-app: metrics-server

name: system:metrics-server

rules:

- apiGroups:

- ""

resources:

- nodes/metrics

verbs:

- get

- apiGroups:

- ""

resources:

- pods

- nodes

verbs:

- get

- list

- watch

---

apiVersion: rbac.authorization.k8s.io/v1

kind: RoleBinding

metadata:

labels:

k8s-app: metrics-server

name: metrics-server-auth-reader

namespace: kube-system

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: Role

name: extension-apiserver-authentication-reader

subjects:

- kind: ServiceAccount

name: metrics-server

namespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

labels:

k8s-app: metrics-server

name: metrics-server:system:auth-delegator

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: system:auth-delegator

subjects:

- kind: ServiceAccount

name: metrics-server

namespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

labels:

k8s-app: metrics-server

name: system:metrics-server

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: system:metrics-server

subjects:

- kind: ServiceAccount

name: metrics-server

namespace: kube-system

---

apiVersion: v1

kind: Service

metadata:

labels:

k8s-app: metrics-server

name: metrics-server

namespace: kube-system

spec:

ports:

- name: https

port: 443

protocol: TCP

targetPort: https

selector:

k8s-app: metrics-server

---

apiVersion: apps/v1

kind: Deployment

metadata:

labels:

k8s-app: metrics-server

name: metrics-server

namespace: kube-system

spec:

selector:

matchLabels:

k8s-app: metrics-server

strategy:

rollingUpdate:

maxUnavailable: 0

template:

metadata:

labels:

k8s-app: metrics-server

spec:

containers:

- args:

- --cert-dir=/tmp

- --secure-port=4443

- --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname

- --kubelet-use-node-status-port

- --metric-resolution=15s

image: bitnami/metrics-server:0.6.1 # image switched to one that can be pulled from inside China

imagePullPolicy: IfNotPresent

livenessProbe:

failureThreshold: 3

httpGet:

path: /livez

port: https

scheme: HTTPS

periodSeconds: 10

name: metrics-server

ports:

- containerPort: 4443

name: https

protocol: TCP

readinessProbe:

failureThreshold: 3

httpGet:

path: /readyz

port: https

scheme: HTTPS

initialDelaySeconds: 20

periodSeconds: 10

resources:

requests:

cpu: 100m

memory: 200Mi

securityContext:

allowPrivilegeEscalation: false

readOnlyRootFilesystem: true

runAsNonRoot: true

runAsUser: 1000

volumeMounts:

- mountPath: /tmp

name: tmp-dir

nodeSelector:

kubernetes.io/os: linux

priorityClassName: system-cluster-critical

serviceAccountName: metrics-server

volumes:

- emptyDir: {}

name: tmp-dir

---

apiVersion: apiregistration.k8s.io/v1

kind: APIService

metadata:

labels:

k8s-app: metrics-server

name: v1beta1.metrics.k8s.io

spec:

group: metrics.k8s.io

groupPriorityMinimum: 100

insecureSkipTLSVerify: true

service:

name: metrics-server

namespace: kube-system

version: v1beta1

versionPriority: 100

root@k8s-master2:~/test# kubectl apply -f metrics-server-v0.6.1.yaml

apiservice.apiregistration.k8s.io/v1beta1.metrics.k8s.io created

# Verify pod metrics

root@k8s-master2:~/test# kubectl top pod

NAME CPU(cores) MEMORY(bytes)

net-test1 0m 0Mi

net-test2 0m 1Mi

net-test3 0m 2Mi

# Verify node metrics

root@k8s-master2:~/test# kubectl top node

NAME CPU(cores) CPU% MEMORY(bytes) MEMORY%

192.168.121.111 221m 11% 1556Mi 58%

192.168.121.112 291m 7% 1724Mi 47%

192.168.121.113 347m 8% 1676Mi 46%

3.6 Deploying the web service and the HPA controller

Creating the HPA controller manually with kubectl (not recommended):

root@k8s-master2:~/test# kubectl autoscale deployment/myserver-tomcat-deployment --min=2 --max=10 --cpu-percent=80 -n myserver

horizontalpodautoscaler.autoscaling/myserver-tomcat-deployment autoscaled

root@k8s-master2:~/test# cat tomcat-app1.yaml

kind: Deployment

#apiVersion: extensions/v1beta1

apiVersion: apps/v1

metadata:

labels:

app: magedu-tomcat-app1-deployment-label

name: magedu-tomcat-app1-deployment

namespace: magedu

spec:

replicas: 5

selector:

matchLabels:

app: magedu-tomcat-app1-selector

template:

metadata:

labels:

app: magedu-tomcat-app1-selector

spec:

containers:

- name: magedu-tomcat-app1-container

image: tomcat:7.0.93-alpine

#image: lorel/docker-stress-ng

#args: ["--vm", "2", "--vm-bytes", "256M"]

##command: ["/apps/tomcat/bin/run_tomcat.sh"]

imagePullPolicy: IfNotPresent

##imagePullPolicy: Always

ports:

- containerPort: 8080

protocol: TCP

name: http

env:

- name: "password"

value: "123456"

- name: "age"

value: "18"

resources:

limits:

cpu: 1

memory: "512Mi"

requests:

cpu: 500m

memory: "512Mi"

---

kind: Service

apiVersion: v1

metadata:

labels:

app: magedu-tomcat-app1-service-label

name: magedu-tomcat-app1-service

namespace: magedu

spec:

type: NodePort

ports:

- name: http

port: 80

protocol: TCP

targetPort: 8080

#nodePort: 40003

selector:

app: magedu-tomcat-app1-selector

# Deploy the web service

root@k8s-master2:~/test# kubectl apply -f tomcat-app1.yaml

3.7 Deploying the HPA

root@k8s-master2:~/test# cat hpa.yaml

apiVersion: autoscaling/v1 # API version
kind: HorizontalPodAutoscaler # object type
metadata:
  namespace: magedu
  name: magedu-tomcat-app1-deployment
  labels:
    app: magedu-tomcat-app1
    version: v2beta1
spec:
  scaleTargetRef: # target object to scale horizontally: Deployment, ReplicationController or ReplicaSet
    apiVersion: apps/v1
    kind: Deployment
    name: magedu-tomcat-app1-deployment
  minReplicas: 3
  maxReplicas: 10
  targetCPUUtilizationPercentage: 60
  #metrics: # metrics definition (autoscaling/v2beta1 syntax)
  #- type: Resource
  #  resource:
  #    name: cpu
  #    targetAverageUtilization: 60
  #- type: Resource
  #  resource:
  #    name: memory

# Deploy the HPA controller from the YAML file

root@k8s-master2:~/test# kubectl apply -f hpa.yaml

root@k8s-master2:~/test# kubectl get hpa -n magedu

NAME REFERENCE TARGETS MINPODS MAXPODS REPLICAS AGE

magedu-tomcat-app1-podautoscaler Deployment/magedu-tomcat-app1-deployment 0%/60% 3 20 3 26m

root@k8s-master2:~/test# kubectl describe hpa magedu-tomcat-app1-podautoscaler -n magedu

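4. Resource limits on memory, CPU, etc. for containers, Pods and Namespaces in kubernetes

• 1. Overview of resource limits in kubernetes

• 2. CPU and memory limits for a single container

• 3. CPU and memory limits for a single pod

• 4. CPU and memory limits for an entire namespace

4.1 Overview of resource limits in kubernetes

1. If a running container defines no resource limits (memory, CPU) but its namespace defines a LimitRange, the container inherits the default limits from that LimitRange.

2. If the namespace defines no LimitRange either, the container can use up to all resources available on the host, until none are left and the host's OOM Killer is triggered.

https://kubernetes.io/zh/docs/tasks/configure-pod-container/assign-cpu-resource/

CPU is limited in units of cores; a value can be whole cores, a fractional number of cores, or millicores (m/milli):

2 = 2 cores = 200%    0.5 = 500m = 50%    1.2 = 1200m = 120%

https://kubernetes.io/zh/docs/tasks/configure-pod-container/assign-memory-resource/

Memory is measured in bytes; the supported suffixes are E, P, T, G, M, k (powers of 10) and Ei, Pi, Ti, Gi, Mi, Ki (powers of 2):

1536Mi = 1.5Gi

requests: the resources a node must at least have available for the kubernetes scheduler to place the pod on it.

limits: the upper bound on the resources the pod may use once it is running.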
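The unit equivalences listed above (0.5 = 500m, 1536Mi = 1.5Gi) can be checked with a small parser. This is a minimal sketch: `parse_cpu` and `parse_memory` are hypothetical helper names, and only the suffixes mentioned in this section are handled.

```python
from decimal import Decimal

# Power-of-two and power-of-ten suffixes, as listed in the text above.
BINARY = {"Ki": 2**10, "Mi": 2**20, "Gi": 2**30, "Ti": 2**40, "Pi": 2**50, "Ei": 2**60}
DECIMAL = {"k": 10**3, "M": 10**6, "G": 10**9, "T": 10**12, "P": 10**15, "E": 10**18}

def parse_cpu(value: str) -> int:
    """Return the CPU quantity in millicores."""
    if value.endswith("m"):
        return int(value[:-1])
    return int(Decimal(value) * 1000)

def parse_memory(value: str) -> int:
    """Return the memory quantity in bytes."""
    for suffix, factor in {**BINARY, **DECIMAL}.items():  # two-letter suffixes are tried first
        if value.endswith(suffix):
            return int(Decimal(value[: -len(suffix)]) * factor)
    return int(Decimal(value))  # plain number of bytes

print(parse_cpu("0.5"), parse_cpu("500m"), parse_cpu("1.2"))
print(parse_memory("1536Mi") == parse_memory("1.5Gi"))
```

Using `Decimal` instead of `float` avoids rounding surprises for values such as 1.2 cores.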

4.2 CPU and memory limits for a single container

root@k8s-master1:/apps/magedu# cat case2-pod-memory-and-cpu-limit.yml

#apiVersion: extensions/v1beta1

apiVersion: apps/v1

kind: Deployment

metadata:

name: limit-test-deployment

namespace: magedu

spec:

replicas: 1

selector:

matchLabels: #rs or deployment

app: limit-test-pod

# matchExpressions:

# - {key: app, operator: In, values: [ng-deploy-80,ng-rs-81]}

template:

metadata:

labels:

app: limit-test-pod

spec:

containers:

- name: limit-test-container

image: lorel/docker-stress-ng

resources:

limits:

cpu: "1.2"

memory: "512Mi"

requests:

memory: "100Mi"

cpu: "500m"

#command: ["stress"]

args: ["--vm", "2", "--vm-bytes", "256M"]

#nodeSelector:

# env: group1

root@k8s-master1:/apps/magedu# kubectl apply -f case2-pod-memory-and-cpu-limit.yml

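4.3 CPU and memory limits for a single pod

A LimitRange constrains the resource usage of individual Pods or containers:

https://kubernetes.io/zh/docs/concepts/policy/limit-range/

It limits the minimum and maximum compute resources of each Pod or container in the namespace.

It limits the ratio between the limit and the request of compute resources for each Pod or container in the namespace.

It limits the minimum and maximum storage that each PersistentVolumeClaim in the namespace may request.

It sets default requests and limits for containers in the namespace and injects them into containers automatically.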

root@k8s-master1:/apps/magedu# cat case3-LimitRange.yaml

apiVersion: v1
kind: LimitRange
metadata:
  name: limitrange-magedu
  namespace: magedu
spec:
  limits:
  - type: Container # resource type being limited
    max:
      cpu: "2" # maximum CPU of a single container
      memory: "2Gi" # maximum memory of a single container
    min:
      cpu: "500m" # minimum CPU of a single container
      memory: "512Mi" # minimum memory of a single container
    default:
      cpu: "500m" # default CPU limit of a single container
      memory: "512Mi" # default memory limit of a single container
    defaultRequest:
      cpu: "500m" # default CPU request of a single container
      memory: "512Mi" # default memory request of a single container
    maxLimitRequestRatio:
      cpu: 2 # CPU limit/request ratio may be at most 2
      memory: 2 # memory limit/request ratio may be at most 2
  - type: Pod
    max:
      cpu: "4" # maximum CPU of a single Pod
      memory: "4Gi" # maximum memory of a single Pod
  - type: PersistentVolumeClaim
    max:
      storage: 50Gi # maximum requests.storage of a PVC
    min:
      storage: 30Gi # minimum requests.storage of a PVC

root@k8s-master1:/apps/magedu# kubectl apply -f case3-LimitRange.yaml

Limit case 1: CPU and memory limit/request ratio (RequestRatio) limits

root@k8s-master1:/apps/magedu# cat case4-pod-RequestRatio-limit.yaml

kind: Deployment

apiVersion: apps/v1

metadata:

labels:

app: magedu-wordpress-deployment-label

name: magedu-wordpress-deployment

namespace: magedu

spec:

replicas: 1

selector:

matchLabels:

app: magedu-wordpress-selector

template:

metadata:

labels:

app: magedu-wordpress-selector

spec:

containers:

- name: magedu-wordpress-nginx-container

image: nginx:1.16.1

imagePullPolicy: Always

ports:

- containerPort: 80

protocol: TCP

name: http

env:

- name: "password"

value: "123456"

- name: "age"

value: "18"

resources:

limits:

cpu: 0.5

memory: 0.5Gi

requests:

cpu: 0.5

memory: 0.5Gi

- name: magedu-wordpress-php-container

image: php:5.6-fpm-alpine

imagePullPolicy: Always

ports:

- containerPort: 80

protocol: TCP

name: http

env:

- name: "password"

value: "123456"

- name: "age"

value: "18"

resources:

limits:

cpu: 0.5

memory: 0.5Gi

requests:

cpu: 0.5

memory: 0.5Gi

- name: magedu-wordpress-redis-container

image: redis:4.0.14-alpine

imagePullPolicy: Always

ports:

- containerPort: 80

protocol: TCP

name: http

env:

- name: "password"

value: "123456"

- name: "age"

value: "18"

resources:

limits:

cpu: 1

memory: 1Gi

requests:

cpu: 1

memory: 1Gi

---

kind: Service

apiVersion: v1

metadata:

labels:

app: magedu-wordpress-service-label

name: magedu-wordpress-service

namespace: magedu

spec:

type: NodePort

ports:

- name: http

port: 80

protocol: TCP

targetPort: 8080

nodePort: 30033

selector:

app: magedu-wordpress-selector

root@k8s-master1:/apps/magedu# kubectl apply -f case4-pod-RequestRatio-limit.yaml

Limit case 2: CPU and memory overcommit limits:

root@k8s-master1:/apps/magedu# cat case5-pod-cpu-limit.yaml

kind: Deployment

apiVersion: apps/v1

metadata:

labels:

app: magedu-wordpress-deployment-label

name: magedu-wordpress-deployment

namespace: magedu

spec:

replicas: 1

selector:

matchLabels:

app: magedu-wordpress-selector

template:

metadata:

labels:

app: magedu-wordpress-selector

spec:

containers:

- name: magedu-wordpress-nginx-container

image: nginx:1.16.1

imagePullPolicy: Always

ports:

- containerPort: 80

protocol: TCP

name: http

env:

- name: "password"

value: "123456"

- name: "age"

value: "18"

resources:

limits:

cpu: 1

memory: 1Gi

requests:

cpu: 500m

memory: 512Mi

- name: magedu-wordpress-php-container

image: php:5.6-fpm-alpine

imagePullPolicy: Always

ports:

- containerPort: 80

protocol: TCP

name: http

env:

- name: "password"

value: "123456"

- name: "age"

value: "18"

resources:

limits:

#cpu: 2.8

cpu: 2

memory: 1Gi

requests:

cpu: 1.5

memory: 512Mi

---

kind: Service

apiVersion: v1

metadata:

labels:

app: magedu-wordpress-service-label

name: magedu-wordpress-service

namespace: magedu

spec:

type: NodePort

ports:

- name: http

port: 80

protocol: TCP

targetPort: 8080

nodePort: 30033

selector:

app: magedu-wordpress-selector

root@k8s-master1:/apps/magedu# kubectl apply -f case5-pod-cpu-limit.yaml
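To see why the commented-out `cpu: 2.8` in case5 would be rejected while `cpu: 2` is accepted, the Container rules of limitrange-magedu can be modelled roughly as follows. This is a simplified sketch, not the admission controller's code: `limitrange_violations` is a hypothetical name, CPU is expressed in millicores and memory in MiB, and the Pod-level and PVC rules are left out.

```python
# Container rules copied from limitrange-magedu (CPU in millicores, memory in MiB).
CONTAINER_RULES = {
    "cpu": {"min": 500, "max": 2000, "max_ratio": 2},
    "memory": {"min": 512, "max": 2048, "max_ratio": 2},
}

def limitrange_violations(requests, limits):
    """Return a list of human-readable violations for one container."""
    problems = []
    for resource, rule in CONTAINER_RULES.items():
        req, lim = requests[resource], limits[resource]
        if req < rule["min"]:
            problems.append(f"{resource}: request {req} is below min {rule['min']}")
        if lim > rule["max"]:
            problems.append(f"{resource}: limit {lim} is above max {rule['max']}")
        if lim / req > rule["max_ratio"]:
            problems.append(f"{resource}: limit/request ratio exceeds {rule['max_ratio']}")
    return problems

# nginx container of case5: limits 1 CPU / 1Gi, requests 500m / 512Mi
nginx = limitrange_violations({"cpu": 500, "memory": 512}, {"cpu": 1000, "memory": 1024})
# php container of case5 with cpu limit 2, and with the commented-out 2.8
php_ok = limitrange_violations({"cpu": 1500, "memory": 512}, {"cpu": 2000, "memory": 1024})
php_bad = limitrange_violations({"cpu": 1500, "memory": 512}, {"cpu": 2800, "memory": 1024})
print(nginx, php_ok, php_bad)
```

In this model only the 2.8-core limit produces a violation, because it exceeds the per-container maximum of 2 cores.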

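4.4 CPU and memory limits for an entire namespace

https://kubernetes.io/zh/docs/concepts/policy/resource-quotas/

A ResourceQuota limits the total number of objects of a given type (such as Pods or Services) that can be created in the namespace;

it also limits the total compute resources (CPU, memory) and storage resources (PersistentVolumeClaims) that objects in the namespace can consume.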

root@k8s-master1:/apps/magedu# cat case6-ResourceQuota-magedu.yaml

apiVersion: v1
kind: ResourceQuota
metadata:
  name: quota-magedu
  namespace: magedu
spec:
  hard:
    requests.cpu: "8"
    limits.cpu: "8"
    requests.memory: 4Gi
    limits.memory: 4Gi
    requests.nvidia.com/gpu: 4
    pods: "100"
    services: "100"

root@k8s-master1:/apps/magedu# kubectl apply -f case6-ResourceQuota-magedu.yaml

Limit case 1: verify the pod replica limit of the namespace:

root@k8s-master1:/apps/magedu# cat case7-namespace-pod-limit-test.yaml

kind: Deployment

apiVersion: apps/v1

metadata:

labels:

app: magedu-nginx-deployment-label

name: magedu-nginx-deployment

namespace: magedu

spec:

replicas: 5

selector:

matchLabels:

app: magedu-nginx-selector

template:

metadata:

labels:

app: magedu-nginx-selector

spec:

containers:

- name: magedu-nginx-container

image: nginx:1.16.1

imagePullPolicy: Always

ports:

- containerPort: 80

protocol: TCP

name: http

env:

- name: "password"

value: "123456"

- name: "age"

value: "18"

resources:

limits:

cpu: 1

memory: 1Gi

requests:

cpu: 1

memory: 1Gi

---

kind: Service

apiVersion: v1

metadata:

labels:

app: magedu-nginx-service-label

name: magedu-nginx-service

namespace: magedu

spec:

type: NodePort

ports:

- name: http

port: 80

protocol: TCP

targetPort: 8080

nodePort: 30033

selector:

app: magedu-nginx-selector

root@k8s-master1:/apps/magedu# kubectl apply -f case7-namespace-pod-limit-test.yaml

Limit case 2: limit on the total number of CPU cores:

root@k8s-master1:/apps/magedu# cat case8-namespace-cpu-limit-test.yaml

kind: Deployment

apiVersion: apps/v1

metadata:

labels:

app: magedu-nginx-deployment-label

name: magedu-nginx-deployment

namespace: magedu

spec:

replicas: 6

selector:

matchLabels:

app: magedu-nginx-selector

template:

metadata:

labels:

app: magedu-nginx-selector

spec:

containers:

- name: magedu-nginx-container

image: nginx:1.16.1

imagePullPolicy: Always

ports:

- containerPort: 80

protocol: TCP

name: http

env:

- name: "password"

value: "123456"

- name: "age"

value: "18"

resources:

limits:

cpu: 2

memory: 100Mi

requests:

cpu: 2

memory: 100Mi

---

kind: Service

apiVersion: v1

metadata:

labels:

app: magedu-nginx-service-label

name: magedu-nginx-service

namespace: magedu

spec:

type: NodePort

ports:

- name: http

port: 80

protocol: TCP

targetPort: 8080

nodePort: 30033

selector:

app: magedu-nginx-selector

root@k8s-master1:/apps/magedu# kubectl apply -f case8-namespace-cpu-limit-test.yaml
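How many replicas of case7 and case8 fit into quota-magedu can be estimated with simple integer division. The sketch below assumes the namespace is otherwise empty and that only the ResourceQuota applies; `pods_admitted` is a hypothetical helper, and because both cases set requests equal to limits, a single CPU and memory value per pod is enough.

```python
# Hard limits copied from quota-magedu (CPU in millicores, memory in MiB).
QUOTA = {
    "requests.cpu": 8000,
    "limits.cpu": 8000,
    "requests.memory": 4096,
    "limits.memory": 4096,
    "pods": 100,
}

def pods_admitted(replicas, cpu_m, memory_mi):
    """Number of replicas that fit into the quota when requests == limits."""
    per_pod = {
        "requests.cpu": cpu_m,
        "limits.cpu": cpu_m,
        "requests.memory": memory_mi,
        "limits.memory": memory_mi,
        "pods": 1,
    }
    fit = min(QUOTA[key] // per_pod[key] for key in QUOTA)  # tightest constraint wins
    return min(replicas, fit)

print(pods_admitted(5, cpu_m=1000, memory_mi=1024))  # case7: memory is the bottleneck
print(pods_admitted(6, cpu_m=2000, memory_mi=100))   # case8: CPU is the bottleneck
```

Under these assumptions both Deployments end up with four running pods: case7 is capped by the 4Gi memory quota and case8 by the 8-core CPU quota.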

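5. Node and pod affinity and anti-affinity

Pod scheduling flow

5.1 nodeSelector example:

nodeSelector uses a node label selector to schedule pods onto the specified target nodes.

https://kubernetes.io/zh/docs/concepts/scheduling-eviction/assign-pod-node/

It can be used to steer pod placement by service type, for example scheduling pods with high disk I/O requirements onto SSD nodes and memory-hungry pods onto nodes with more memory. It can also separate the pods of different projects, by labeling nodes per project and scheduling accordingly.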

root@k8s-node1:~# kubectl describe node 192.168.121.113 # default labels

Name: 192.168.121.113

Roles: node

Labels: beta.kubernetes.io/arch=amd64

beta.kubernetes.io/os=linux

kubernetes.io/arch=amd64

kubernetes.io/hostname=192.168.121.113

kubernetes.io/os=linux

kubernetes.io/role=node

Label the node:

root@k8s-node1:~# kubectl label node 192.168.121.113 project="magedu"

root@k8s-node1:~# kubectl label node 192.168.121.113 disktype="ssd"

Schedule the pods onto the target node; the key and value given in the YAML file must match the node labels exactly:

root@k8s-master1:~# cat case1-nodeSelector.yaml

kind: Deployment

#apiVersion: extensions/v1beta1

apiVersion: apps/v1

metadata:

labels:

app: magedu-tomcat-app2-deployment-label

name: magedu-tomcat-app2-deployment

namespace: magedu

spec:

replicas: 4

selector:

matchLabels:

app: magedu-tomcat-app2-selector

template:

metadata:

labels:

app: magedu-tomcat-app2-selector

spec:

containers:

- name: magedu-tomcat-app2-container

image: tomcat:7.0.94-alpine

imagePullPolicy: IfNotPresent

#imagePullPolicy: Always

ports:

- containerPort: 8080

protocol: TCP

name: http

env:

- name: "password"

value: "123456"

- name: "age"

value: "18"

resources:

limits:

cpu: 1

memory: "512Mi"

requests:

cpu: 500m

memory: "512Mi"

nodeSelector:

project: magedu

disktype: ssd

root@k8s-master1:~# kubectl apply -f case1-nodeSelector.yaml

deployment.apps/magedu-tomcat-app2-deployment created

5.2 nodeName example:

Label the nodes:

root@k8s-node1:~# kubectl label node 192.168.121.113 disktype=ssd

root@k8s-node2:~# kubectl label node 192.168.121.114 disktype=ssd

Create the pod:

root@k8s-master1:~# cat case2-nodename.yml

kind: Deployment

#apiVersion: extensions/v1beta1

apiVersion: apps/v1

metadata:

labels:

app: magedu-tomcat-app2-deployment-label

name: magedu-tomcat-app2-deployment

namespace: magedu

spec:

replicas: 1

selector:

matchLabels:

app: magedu-tomcat-app2-selector

template:

metadata:

labels:

app: magedu-tomcat-app2-selector

spec:

nodeName: 192.168.121.113

containers:

- name: magedu-tomcat-app2-container

image: tomcat:7.0.94-alpine

imagePullPolicy: IfNotPresent

#imagePullPolicy: Always

ports:

- containerPort: 8080

protocol: TCP

name: http

env:

- name: "password"

value: "123456"

- name: "age"

value: "18"

resources:

limits:

cpu: 1

memory: "512Mi"

requests:

cpu: 500m

memory: "512Mi"

root@k8s-master1:~# kubectl apply -f case2-nodename.yml

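5.3 node affinity

Affinity was introduced after Kubernetes 1.2. Like nodeSelector, it lets users constrain which nodes pods are scheduled to. Two forms are currently supported:

requiredDuringSchedulingIgnoredDuringExecution # the scheduling conditions must be met; if they are not, the pod is not scheduled

preferredDuringSchedulingIgnoredDuringExecution # the scheduling conditions are preferred; if they cannot be met, the pod is still scheduled onto a node that does not match

IgnoredDuringExecution means that if node labels change while the pod is running, so that the affinity rule is no longer satisfied, the pod keeps running.

Affinity and anti-affinity also control where pods are scheduled, but they are more powerful than nodeSelector.

Affinity compared with nodeSelector:

1. Label matching is not limited to an exact-match AND; the operators In, NotIn, Exists, DoesNotExist, Gt and Lt are supported.

In: the label value is in the given list (on a match the pod is scheduled to that node, i.e. node affinity)

NotIn: the label value is not in the given list (the pod is not scheduled to nodes whose value is in the list, i.e. anti-affinity)

Gt: the label value is greater than the given value (written as a string, compared as an integer)

Lt: the label value is less than the given value (written as a string, compared as an integer)

Exists: the specified label exists

DoesNotExist: the specified label does not exist

2. Soft and hard matching can be configured; with soft matching, if the scheduler finds no matching node, the pod is still scheduled onto a node that does not meet the conditions.

3. Affinity rules can also be defined between pods, for example which pods may or may not be scheduled onto the same node.

Notes:

If nodeSelectorTerms contains several matchExpressions entries (several terms), the pod can be scheduled to any node that satisfies at least one of them, i.e. the terms are ORed; see case 3.1.1.

If a single matchExpressions entry lists several key conditions, all of them must be satisfied for the pod to be scheduled to the node, i.e. the conditions are ANDed; see case 3.1.2.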
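The OR/AND semantics described in the notes can be expressed compactly in Python. This is a simplified model of the matching rules, not the scheduler's implementation; `expr_matches` and `node_matches` are hypothetical names, and the data mirrors the labels and expressions used in cases 3.1.1 and 3.1.2.

```python
def expr_matches(labels, expr):
    """Evaluate one matchExpressions item against a node's labels."""
    key, op, values = expr["key"], expr["operator"], expr.get("values", [])
    if op == "In":
        return labels.get(key) in values
    if op == "NotIn":
        return labels.get(key) not in values  # also true when the key is absent
    if op == "Exists":
        return key in labels
    if op == "DoesNotExist":
        return key not in labels
    if op in ("Gt", "Lt"):
        try:
            node_value, ref = int(labels[key]), int(values[0])  # compared as integers
        except (KeyError, ValueError):
            return False
        return node_value > ref if op == "Gt" else node_value < ref
    raise ValueError(f"unknown operator {op}")

def node_matches(labels, node_selector_terms):
    """Terms are ORed; the expressions inside one term are ANDed."""
    return any(
        all(expr_matches(labels, e) for e in term["matchExpressions"])
        for term in node_selector_terms
    )

node113 = {"disktype": "ssd", "project": "magedu"}
ssd_only = {"disktype": "ssd"}

case_3_1_1 = [  # two terms: either one is enough
    {"matchExpressions": [{"key": "disktype", "operator": "In", "values": ["ssd", "xxx"]}]},
    {"matchExpressions": [{"key": "project", "operator": "In", "values": ["xxx", "nnn"]}]},
]
case_3_1_2 = [  # one term with two expressions: both must match
    {"matchExpressions": [
        {"key": "disktype", "operator": "In", "values": ["ssd", "hdd"]},
        {"key": "project", "operator": "In", "values": ["magedu"]},
    ]},
]

print(node_matches(node113, case_3_1_1), node_matches(ssd_only, case_3_1_1))
print(node_matches(node113, case_3_1_2), node_matches(ssd_only, case_3_1_2))
```

A node carrying only disktype=ssd satisfies case 3.1.1 through its first term, but fails case 3.1.2 because the project condition is not met.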

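5.3.1.1: Hard affinity - nodeAffinity-requiredDuring-matchExpressions

With multiple matchExpressions entries, satisfying any one of them is enough for the pod to be scheduled to the node, i.e. they are ORed.

Label the node: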

root@k8s-node1:~# kubectl label node 192.168.121.113 project="magedu"

root@k8s-node1:~# kubectl label node 192.168.121.113 disktype="ssd"

root@k8s-master1:~# cat case3-1.1-nodeAffinity-requiredDuring-matchExpressions.yaml

kind: Deployment

#apiVersion: extensions/v1beta1

apiVersion: apps/v1

metadata:

labels:

app: magedu-tomcat-app2-deployment-label

name: magedu-tomcat-app2-deployment

namespace: magedu

spec:

replicas: 1

selector:

matchLabels:

app: magedu-tomcat-app2-selector

template:

metadata:

labels:

app: magedu-tomcat-app2-selector

spec:

containers:

- name: magedu-tomcat-app2-container

image: tomcat:7.0.94-alpine

imagePullPolicy: IfNotPresent

#imagePullPolicy: Always

ports:

- containerPort: 8080

protocol: TCP

name: http

      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions: # two matchExpressions entries, ORed
              - key: disktype # condition 1: one key with several values; matching any one value is enough
                operator: In
                values:
                - ssd # a single matching value is enough for scheduling
                - xxx
            - matchExpressions:
              - key: project # condition 2: one key with several values; matching any one value is enough
                operator: In
                values:
                - xxx # neither value of this entry matches, yet the pod is still scheduled: one matching matchExpressions entry is sufficient
                - nnn

Create the pod:

root@k8s-master1:~# kubectl apply -f case3-1.1-nodeAffinity-requiredDuring-matchExpressions.yaml

Verify the pod scheduling result:

root@k8s-master1:~# kubectl get pod -n magedu -o wide

NAME READY STATUS RESTARTS AGE IP NODE

magedu-tomcat-app2-deployment-69886c7c85-2ttx7 1/1 Running 0 4s 10.200.218.30 192.168.121.113

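With several conditions inside a single matchExpressions entry, all of them must be satisfied for the pod to be scheduled to the node, i.e. they are ANDed.

Label the node: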

root@k8s-node1:~# kubectl label node 192.168.121.113 project="magedu"

root@k8s-node1:~# kubectl label node 192.168.121.113 disktype="ssd"

root@k8s-master1:~# cat case3-1.2-nodeAffinity-requiredDuring-matchExpressions.yaml

kind: Deployment

#apiVersion: extensions/v1beta1

apiVersion: apps/v1

metadata:

labels:

app: magedu-tomcat-app2-deployment-label

name: magedu-tomcat-app2-deployment

namespace: magedu

spec:

replicas: 1

selector:

matchLabels:

app: magedu-tomcat-app2-selector

template:

metadata:

labels:

app: magedu-tomcat-app2-selector

spec:

containers:

- name: magedu-tomcat-app2-container

image: tomcat:7.0.94-alpine

imagePullPolicy: IfNotPresent

#imagePullPolicy: Always

ports:

- containerPort: 8080

protocol: TCP

name: http

      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions: # a single matchExpressions entry
              - key: disktype # condition 1: among several values of the same key, one match is enough for the key to match
                operator: In
                values:
                - ssd
                - hdd
              - key: project # condition 2: this key must also match one value; conditions 1 and 2 must both match, otherwise the pod is not scheduled
                operator: In
                values:
                - magedu

root@k8s-master1:~# kubectl apply -f case3-1.2-nodeAffinity-requiredDuring-matchExpressions.yaml

deployment.apps/magedu-tomcat-app2-deployment created

Verify the pod scheduling result:

root@k8s-master1:~# kubectl get pod -n magedu -o wide

NAME READY STATUS RESTARTS AGE IP NODE

magedu-tomcat-app2-deployment-7f4b7457db-rgljj 1/1 Running 0 3s 10.200.151.216 192.168.121.113

case5.3-2.1: Soft affinity - nodeAffinity-preferredDuring

root@k8s-master1:~# cat case3-2.1-nodeAffinity-preferredDuring.yaml

kind: Deployment

#apiVersion: extensions/v1beta1

apiVersion: apps/v1

metadata:

labels:

app: magedu-tomcat-app2-deployment-label

name: magedu-tomcat-app2-deployment

namespace: magedu

spec:

replicas: 1

selector:

matchLabels:

app: magedu-tomcat-app2-selector

template:

metadata:

labels:

app: magedu-tomcat-app2-selector

spec:

containers:

- name: magedu-tomcat-app2-container

image: tomcat:7.0.94-alpine

imagePullPolicy: IfNotPresent

#imagePullPolicy: Always

ports:

- containerPort: 8080

protocol: TCP

name: http

      affinity:
        nodeAffinity:
          preferredDuringSchedulingIgnoredDuringExecution:
          - weight: 80 # soft rule 1: the larger the weight, the higher the priority during scheduling
            preference:
              matchExpressions:
              - key: project
                operator: In
                values:
                - magedu
          - weight: 60 # soft rule 2: lower weight; preferred when rule 1 cannot be satisfied
            preference:
              matchExpressions:
              - key: disktype
                operator: In
                values:
                - ssd

Change the node labels and test whether the pod is scheduled to the node favored by the higher-weight soft rule:

root@k8s-master1:~# kubectl label node 192.168.121.113 disktype=ssd && kubectl label node 192.168.121.114 project=magedu

root@k8s-master1:~# kubectl apply -f case3-2.1-nodeAffinity-preferredDuring.yaml

root@k8s-master1:~# kubectl get pod -n magedu -o wide

NAME READY STATUS RESTARTS AGE IP NODE

magedu-tomcat-app2-deployment-68b779c448-hk2w2 1/1 Running 0 11s 10.200.151.207 192.168.121.114
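The result above is consistent with a simple scoring model in which each node collects the weights of the preferences it satisfies. The sketch below is an assumption-laden illustration: it considers only the two labels set in the previous command and only `In` expressions, whereas the real scheduler combines this score with its other scoring plugins; `affinity_score` is a hypothetical helper.

```python
def affinity_score(labels, preferences):
    """Sum the weights of all preferences whose expressions match the node labels."""
    score = 0
    for pref in preferences:
        exprs = pref["preference"]["matchExpressions"]
        # Only the In operator is modelled here, which is all case3-2.1 uses.
        if all(labels.get(e["key"]) in e["values"] for e in exprs):
            score += pref["weight"]
    return score

preferences = [
    {"weight": 80, "preference": {"matchExpressions": [
        {"key": "project", "operator": "In", "values": ["magedu"]}]}},
    {"weight": 60, "preference": {"matchExpressions": [
        {"key": "disktype", "operator": "In", "values": ["ssd"]}]}},
]

# Labels as set by the kubectl label commands in this case (assumed to be the only relevant ones).
nodes = {
    "192.168.121.113": {"disktype": "ssd"},
    "192.168.121.114": {"project": "magedu"},
}

scores = {name: affinity_score(labels, preferences) for name, labels in nodes.items()}
best = max(scores, key=scores.get)
print(scores, best)
```

Node 192.168.121.114 matches the weight-80 rule and node 192.168.121.113 only the weight-60 rule, so the former is preferred in this model.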

case5.3-2.2: Combining hard and soft affinity - nodeAffinity-requiredDuring-preferredDuring

root@k8s-master1:~# cat case3-2.2-nodeAffinity-requiredDuring-preferredDuring.yaml

kind: Deployment

#apiVersion: extensions/v1beta1

apiVersion: apps/v1

metadata:

labels:

app: magedu-tomcat-app2-deployment-label

name: magedu-tomcat-app2-deployment

namespace: magedu

spec:

replicas: 1

selector:

matchLabels:

app: magedu-tomcat-app2-selector

template:

metadata:

labels:

app: magedu-tomcat-app2-selector

spec:

containers:

- name: magedu-tomcat-app2-container

image: tomcat:7.0.94-alpine

imagePullPolicy: IfNotPresent

#imagePullPolicy: Always

ports:

- containerPort: 8080

protocol: TCP

name: http

      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution: # hard affinity
            nodeSelectorTerms:
            - matchExpressions: # hard condition 1
              - key: "kubernetes.io/role"
                operator: NotIn
                values:
                - "master" # only nodes whose kubernetes.io/role is not master qualify, so the pod is never scheduled to a master node (node anti-affinity)
          preferredDuringSchedulingIgnoredDuringExecution: # soft affinity
          - weight: 80
            preference:
              matchExpressions:
              - key: project
                operator: In
                values:
                - magedu
          - weight: 60
            preference:
              matchExpressions:
              - key: disktype
                operator: In
                values:
                - ssd

Create the pod and verify:

root@k8s-master1:~# kubectl apply -f case3-2.2-nodeAffinity-requiredDuring-preferredDuring.yaml

root@k8s-master1:~# kubectl get pod -n magedu -o wide

NAME READY STATUS RESTARTS AGE IP NODE

magedu-tomcat-app2-deployment-d4df9556b-s898k 1/1 Running 0 5s 10.200.151.221 192.168.121.114

5.3-3.1 Node anti-affinity - nodeantiaffinity

root@k8s-master1:~# cat case3-3.1-nodeantiaffinity.yaml

kind: Deployment

#apiVersion: extensions/v1beta1

apiVersion: apps/v1

metadata:

labels:

app: magedu-tomcat-app2-deployment-label

name: magedu-tomcat-app2-deployment

namespace: magedu

spec:

replicas: 1

selector:

matchLabels:

app: magedu-tomcat-app2-selector

template:

metadata:

labels:

app: magedu-tomcat-app2-selector

spec:

containers:

- name: magedu-tomcat-app2-container

image: tomcat:7.0.94-alpine

imagePullPolicy: IfNotPresent

#imagePullPolicy: Always

ports:

- containerPort: 8080

protocol: TCP

name: http

      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions: # condition 1
              - key: disktype
                operator: NotIn # the target node must not carry the label disktype=hdd
                values:
                - hdd # never schedule to nodes labeled disktype=hdd; only nodes without that label value are eligible

Label a node, then test that the pod is not scheduled to it:

root@k8s-master1:~# kubectl label node 192.168.121.115 disktype="hdd"

Create the pod:

root@k8s-master1:~# kubectl apply -f case3-3.1-nodeantiaffinity.yaml

deployment.apps/magedu-tomcat-app2-deployment created

Verify the pod scheduling result:

root@k8s-master1:~# kubectl get pod -n magedu -o wide

NAME READY STATUS RESTARTS AGE IP NODE

magedu-tomcat-app2-deployment-6895d96bb-pwkbx 1/1 Running 0 2s 10.200.218.33 192.168.121.113

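5.4 Pod affinity and anti-affinity overview

Pod affinity and anti-affinity constrain which nodes a newly created Pod can be scheduled to, based on the labels of Pods already running on those nodes. Note that matching uses the labels of the pods already running on a node, not the labels of the node itself.

The rules take the following form: if node A already runs one or more pods that satisfy the rule of the newly created Pod B, then Pod B is scheduled onto node A under affinity, and is not scheduled onto node A under anti-affinity.

The rule is expressed as a LabelSelector with an optional list of associated namespaces. Pod affinity and anti-affinity can select namespaces because Pods are namespaced, whereas nodes do not belong to any namespace, so node affinity and anti-affinity need no namespace; a label selector that operates on Pod labels must therefore specify which namespaces it applies to.

Conceptually, a node belongs to a topology domain (a region with a topological structure, i.e. the failure domain in an outage), such as a single node in the k8s cluster, a rack, a cloud provider availability zone or a cloud provider region. topologyKey defines whether affinity or anti-affinity is applied at node granularity or, for example, at availability-zone granularity, so that the kubernetes scheduler can identify and select the correct target topology domain.

The valid operators for Pod affinity and anti-affinity are In, NotIn, Exists and DoesNotExist.

For Pod affinity, topologyKey must not be empty in either requiredDuringSchedulingIgnoredDuringExecution or preferredDuringSchedulingIgnoredDuringExecution (Empty topologyKey is not allowed.).

For Pod anti-affinity, topologyKey must likewise not be empty in either requiredDuringSchedulingIgnoredDuringExecution or preferredDuringSchedulingIgnoredDuringExecution (Empty topologyKey is not allowed.).

For Pod anti-affinity with requiredDuringSchedulingIgnoredDuringExecution, the admission controller LimitPodHardAntiAffinityTopology was introduced to ensure that topologyKey can only be kubernetes.io/hostname; to allow topologyKey to be used with other custom topologies, modify or disable that admission controller.

Apart from the cases above, topologyKey can be any legal label key.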
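The role of topologyKey can be illustrated with a small model: collect the topology domains that already host a matching pod, then keep (affinity) or exclude (anti-affinity) the nodes in those domains. This is a sketch under simplifying assumptions, covering only the required (hard) form and a plain equality selector; `eligible_nodes`, the node names and the `zone` label are hypothetical.

```python
def eligible_nodes(nodes, running_pods, selector, topology_key, anti=False):
    """Return the nodes a new pod may use under hard pod (anti-)affinity."""
    # Topology domains (values of topology_key) that already run a matching pod.
    domains = {
        nodes[pod["node"]].get(topology_key)
        for pod in running_pods
        if all(pod["labels"].get(k) == v for k, v in selector.items())
    }
    domains.discard(None)
    result = []
    for name, labels in nodes.items():
        in_domain = labels.get(topology_key) in domains
        if in_domain != anti:  # affinity keeps matching domains, anti-affinity excludes them
            result.append(name)
    return sorted(result)

nodes = {
    "node-a": {"kubernetes.io/hostname": "node-a", "zone": "z1"},
    "node-b": {"kubernetes.io/hostname": "node-b", "zone": "z1"},
    "node-c": {"kubernetes.io/hostname": "node-c", "zone": "z2"},
}
running_pods = [{"node": "node-a", "labels": {"project": "python"}}]
selector = {"project": "python"}

print(eligible_nodes(nodes, running_pods, selector, "kubernetes.io/hostname"))
print(eligible_nodes(nodes, running_pods, selector, "zone"))
print(eligible_nodes(nodes, running_pods, selector, "kubernetes.io/hostname", anti=True))
```

With kubernetes.io/hostname as the topology key only node-a qualifies for affinity, while the coarser zone key also admits node-b because it shares the zone of the matching pod.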

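case5.4-4.1: Deploy the web service

Write a YAML file that deploys an nginx service in the magedu namespace. The nginx pod is used in the following pod affinity and anti-affinity tests, and carries these labels: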

app: python-nginx-selector

project: python

root@k8s-master1:~# cat case4-4.1-nginx.yaml

kind: Deployment

#apiVersion: extensions/v1beta1

apiVersion: apps/v1

metadata:

labels:

app: python-nginx-deployment-label

name: python-nginx-deployment

namespace: magedu

spec:

replicas: 1

selector:

matchLabels:

app: python-nginx-selector

template:

metadata:

labels:

app: python-nginx-selector

project: python

spec:

containers:

- name: python-nginx-container

image: nginx:1.20.2-alpine

#command: ["/apps/tomcat/bin/run_tomcat.sh"]

#imagePullPolicy: IfNotPresent

imagePullPolicy: Always

ports:

- containerPort: 80

protocol: TCP

name: http

- containerPort: 443

protocol: TCP

name: https

env:

- name: "password"

value: "123456"

- name: "age"

value: "18"

# resources:

# limits:

# cpu: 2

# memory: 2Gi

# requests:

# cpu: 500m

# memory: 1Gi

---

kind: Service

apiVersion: v1

metadata:

labels:

app: python-nginx-service-label

name: python-nginx-service

namespace: magedu

spec:

type: NodePort

ports:

- name: http

port: 80

protocol: TCP

targetPort: 80

nodePort: 30014

- name: https

port: 443

protocol: TCP

targetPort: 443

nodePort: 30453

selector:

app: python-nginx-selector

project: python # one or more selectors; a target pod must carry all of the listed labels to be selected

root@k8s-master1:~# kubectl apply -f case4-4.1-nginx.yaml

deployment.apps/python-nginx-deployment created

service/python-nginx-service created

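case5.4-4.2: Pod affinity - soft affinity

Use soft affinity to place several pods on one node:

Create the nginx service first; the service deployed afterwards then runs on the same node as nginx.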

root@k8s-master1:~# cat case4-4.2-podaffinity-preferredDuring.yaml

kind: Deployment

#apiVersion: extensions/v1beta1

apiVersion: apps/v1

metadata:

labels:

app: magedu-tomcat-app2-deployment-label

name: magedu-tomcat-app2-deployment

namespace: magedu

spec:

replicas: 1

selector:

matchLabels:

app: magedu-tomcat-app2-selector

template:

metadata:

labels:

app: magedu-tomcat-app2-selector

spec:

containers:

- name: magedu-tomcat-app2-container

image: tomcat:7.0.94-alpine

imagePullPolicy: IfNotPresent

#imagePullPolicy: Always

ports:

- containerPort: 8080

protocol: TCP

name: http

      affinity:
        podAffinity: # Pod affinity
          #requiredDuringSchedulingIgnoredDuringExecution: # hard affinity: the pod is scheduled only on a match; if matching fails, scheduling is refused
          preferredDuringSchedulingIgnoredDuringExecution: # soft affinity: on a match the pod is scheduled into the same topology; otherwise kubernetes picks a node itself
          - weight: 100
            podAffinityTerm:
              labelSelector: # label selector
                matchExpressions: # expression-based matching
                - key: project
                  operator: In
                  values:
                  - python
              topologyKey: kubernetes.io/hostname
              namespaces:
              - magedu

root@k8s-master1:~# kubectl apply -f case4-4.2-podaffinity-preferredDuring.yaml

deployment.apps/magedu-tomcat-app2-deployment created

Verify the pod scheduling result:

root@k8s-master1:~# kubectl get pod -n magedu -o wide

NAME READY STATUS RESTARTS AGE IP NODE

magedu-tomcat-app2-deployment-78b64f4b55-sqhxq 1/1 Running 0 3s 10.200.151.220 192.168.121.113

python-nginx-deployment-7bbc6bf578-sxrmm 1/1 Running 0 91s 10.200.151.217 192.168.121.113

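case5.4-4.3: Pod affinity - hard affinity:

Use hard affinity to schedule several pods onto one node:

Create the nginx service first; the service deployed afterwards then runs on the same node as nginx.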

root@k8s-master1:~# cat case4-4.3-podaffinity-requiredDuring.yaml

kind: Deployment
#apiVersion: extensions/v1beta1
apiVersion: apps/v1
metadata:
  labels:
    app: magedu-tomcat-app2-deployment-label
  name: magedu-tomcat-app2-deployment
  namespace: magedu
spec:
  replicas: 1
  selector:
    matchLabels:
      app: magedu-tomcat-app2-selector
  template:
    metadata:
      labels:
        app: magedu-tomcat-app2-selector
    spec:
      containers:
      - name: magedu-tomcat-app2-container
        image: tomcat:7.0.94-alpine
        imagePullPolicy: IfNotPresent
        #imagePullPolicy: Always
        ports:
        - containerPort: 8080
          protocol: TCP
          name: http
      affinity:
        podAffinity:
          requiredDuringSchedulingIgnoredDuringExecution: # hard affinity
          - labelSelector:
              matchExpressions:
              - key: project
                operator: In
                values:
                - python
            topologyKey: "kubernetes.io/hostname"
            namespaces:
            - magedu

Apply the hard-affinity deployment:

root@k8s-master1:~# kubectl apply -f case4-4.3-podaffinity-requiredDuring.yaml

deployment.apps/magedu-tomcat-app2-deployment created

Verify the pod scheduling result:

root@k8s-master1:~# kubectl get pod -n magedu -o wide

NAME READY STATUS RESTARTS AGE IP NODE

magedu-tomcat-app2-deployment-5b8cfcfc8c-2jxdc 1/1 Running 0 24s 10.200.218.26 192.168.121.113

python-nginx-deployment-7bbc6bf578-hpx99 1/1 Running 0 27s 10.200.218.31 192.168.121.113
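In the hard-affinity case above, `topologyKey: kubernetes.io/hostname` makes "same place" mean "same node". Choosing a different node label as the topology key widens that domain. The fragment below is a sketch under one assumption: that the nodes carry the well-known `topology.kubernetes.io/zone` label, which cloud providers usually set but which may be missing on a self-built cluster like the one in these notes.

```yaml
# Fragment of spec.template.spec; assumes nodes are labelled with topology.kubernetes.io/zone.
affinity:
  podAffinity:
    requiredDuringSchedulingIgnoredDuringExecution:
    - labelSelector:
        matchExpressions:
        - key: project
          operator: In
          values:
          - python
      topologyKey: topology.kubernetes.io/zone   # same zone as a project=python pod, any node in it
      namespaces:
      - magedu
```

With a required rule, a pod that finds no matching pod in any topology domain is not scheduled and stays Pending, so the target service has to exist first.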

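case5.4-4.4: podAntiAffinity - hard anti-affinity

Use hard anti-affinity to keep multiple pods off the same node:

First create an nginx service; the later service must then be scheduled onto a different node from nginx.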

root@k8s-master1:~# cat case4-4.4-podAntiAffinity-requiredDuring.yaml

kind: Deployment
#apiVersion: extensions/v1beta1
apiVersion: apps/v1
metadata:
  labels:
    app: magedu-tomcat-app2-deployment-label
  name: magedu-tomcat-app2-deployment
  namespace: magedu
spec:
  replicas: 1
  selector:
    matchLabels:
      app: magedu-tomcat-app2-selector
  template:
    metadata:
      labels:
        app: magedu-tomcat-app2-selector
    spec:
      containers:
      - name: magedu-tomcat-app2-container
        image: tomcat:7.0.94-alpine
        imagePullPolicy: IfNotPresent
        #imagePullPolicy: Always
        ports:
        - containerPort: 8080
          protocol: TCP
          name: http
      affinity:
        podAntiAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
          - labelSelector:
              matchExpressions:
              - key: project
                operator: In
                values:
                - python
            topologyKey: "kubernetes.io/hostname"
            namespaces:
            - magedu

Apply the hard anti-affinity deployment:

root@k8s-master1:~/case/case# kubectl apply -f case4-4.4-podAntiAffinity-requiredDuring.yaml

deployment.apps/magedu-tomcat-app2-deployment created

Verify the pod scheduling result:

root@k8s-master1:~# kubectl get pod -n magedu -o wide

NAME READY STATUS RESTARTS AGE IP NODE

magedu-tomcat-app2-deployment-6d56968789-lk4zj 1/1 Running 0 9s 10.200.151.226 192.168.121.115

python-nginx-deployment-7bbc6bf578-hpx99 1/1 Running 0 10m 10.200.218.31 192.168.121.113

case5.4-4.5: podAntiAffinity - soft anti-affinity

Use soft anti-affinity to keep multiple pods off the same node where possible:

root@k8s-master1:~# cat case4-4.5-podAntiAffinity-preferredDuring.yaml

kind: Deployment
#apiVersion: extensions/v1beta1
apiVersion: apps/v1
metadata:
  labels:
    app: magedu-tomcat-app2-deployment-label
  name: magedu-tomcat-app2-deployment
  namespace: magedu
spec:
  replicas: 20
  selector:
    matchLabels:
      app: magedu-tomcat-app2-selector
  template:
    metadata:
      labels:
        app: magedu-tomcat-app2-selector
    spec:
      containers:
      - name: magedu-tomcat-app2-container
        image: tomcat:7.0.94-alpine
        imagePullPolicy: IfNotPresent
        #imagePullPolicy: Always
        ports:
        - containerPort: 8080
          protocol: TCP
          name: http
      affinity:
        podAntiAffinity:
          preferredDuringSchedulingIgnoredDuringExecution:
          - weight: 100
            podAffinityTerm:
              labelSelector:
                matchExpressions:
                - key: project
                  operator: In
                  values:
                  - python
              topologyKey: kubernetes.io/hostname
              namespaces:
              - magedu

Apply the soft anti-affinity deployment:

root@k8s-master1:~# kubectl apply -f case4-4.5-podAntiAffinity-preferredDuring.yaml

deployment.apps/magedu-tomcat-app2-deployment created

Verify the pod scheduling result:

root@k8s-master1:~# kubectl get pod -n magedu -o wide

NAME READY STATUS RESTARTS AGE IP NODE

magedu-tomcat-app2-deployment-64dff7b7f7-5rzx4 1/1 Running 0 18s 10.200.218.35 192.168.121.115

python-nginx-deployment-7bbc6bf578-j4ctx 1/1 Running 0 24s 10.200.151.210 192.168.121.113
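A common variation on the soft anti-affinity case above is to point the selector at the deployment's own pod label, so that its replicas prefer to spread across nodes instead of avoiding another service. The fragment below is a sketch of that variation and is not part of the original case; it reuses the `app: magedu-tomcat-app2-selector` label from the manifest.

```yaml
# Fragment of spec.template.spec; selects the deployment's own pods to spread replicas.
affinity:
  podAntiAffinity:
    preferredDuringSchedulingIgnoredDuringExecution:
    - weight: 100
      podAffinityTerm:
        labelSelector:
          matchLabels:
            app: magedu-tomcat-app2-selector   # the pod's own label
        topologyKey: kubernetes.io/hostname    # prefer nodes without another replica
```

With 20 replicas and fewer nodes, a required rule of this shape would leave the surplus pods Pending, which is why the preferred form tends to fit replica spreading better.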

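6 Taints and tolerations

6.1 Introduction to taints

Taints are set on a node to repel pods. This is the opposite of affinity: a tainted node rejects pods that do not tolerate the taint.

Tolerations are set on a pod so that it tolerates a node's taints, which allows new pods to be scheduled onto a node even though it carries matching taints.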

https://kubernetes.io/zh/docs/concepts/scheduling-eviction/taint-and-toleration/

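The three taint effects:

NoSchedule: Kubernetes will not schedule pods onto a node with this taint unless they tolerate it; pods already running on the node are not affected.

root@k8s-master1:~# kubectl taint nodes 192.168.121.113 key1=value1:NoSchedule # set the taint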

node/192.168.121.113 tainted

root@k8s-master1:~# kubectl describe node 192.168.121.113 # view the taints

Taints: key1=value1:NoSchedule

root@k8s-master1:~# kubectl taint node 192.168.121.113 key1:NoSchedule- # remove the taint

node/192.168.121.113 untainted

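PreferNoSchedule: Kubernetes tries to avoid scheduling pods onto a node with this taint, but may still do so if no better node is available.

NoExecute: Kubernetes will not schedule pods onto a node with this taint, and pods already running on the node that do not tolerate it are evicted.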

root@k8s-master1:~# kubectl taint nodes 192.168.121.113 key1=value1:NoExecute

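6.2 Introduction to tolerations

tolerations: define which node taints a pod can accept. A pod with a matching toleration can be scheduled onto a node that carries that taint.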
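As an illustration, the fragment below sketches tolerations for the `key1=value1` taints set in section 6.1. It is a minimal example, not taken from the original notes, and the `tolerationSeconds` value is an assumed number chosen only for demonstration.

```yaml
# Fragment of spec.template.spec for pods that should tolerate the taints from section 6.1.
tolerations:
- key: "key1"
  operator: "Equal"          # key, value and effect must all match the taint
  value: "value1"
  effect: "NoSchedule"
- key: "key1"
  operator: "Exists"         # matches any value of key1, so no value field is given
  effect: "NoExecute"
  tolerationSeconds: 3600    # assumed value: stay at most one hour after the taint is added, then be evicted
```

A toleration only permits placement on the tainted node. It does not force the pod onto it, so it is often combined with node affinity or a node selector when a pod must land on that node.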

Taint matching based on operator: