Face recognition in an H5 web page (Vue 3 + TypeScript)

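Background: a colleague built the Android version first and has since left the company. The web version now needs face scanning as well, and H5 is the only option. Most of the H5 face-scanning code is borrowed from this author's post (https://blog.csdn.net/m0_54885949/article/details/154010014). I also ran into some other problems, so this post does two things: it records those problems, and it records the overall process.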

1. Install the face library

npm install face-api.js

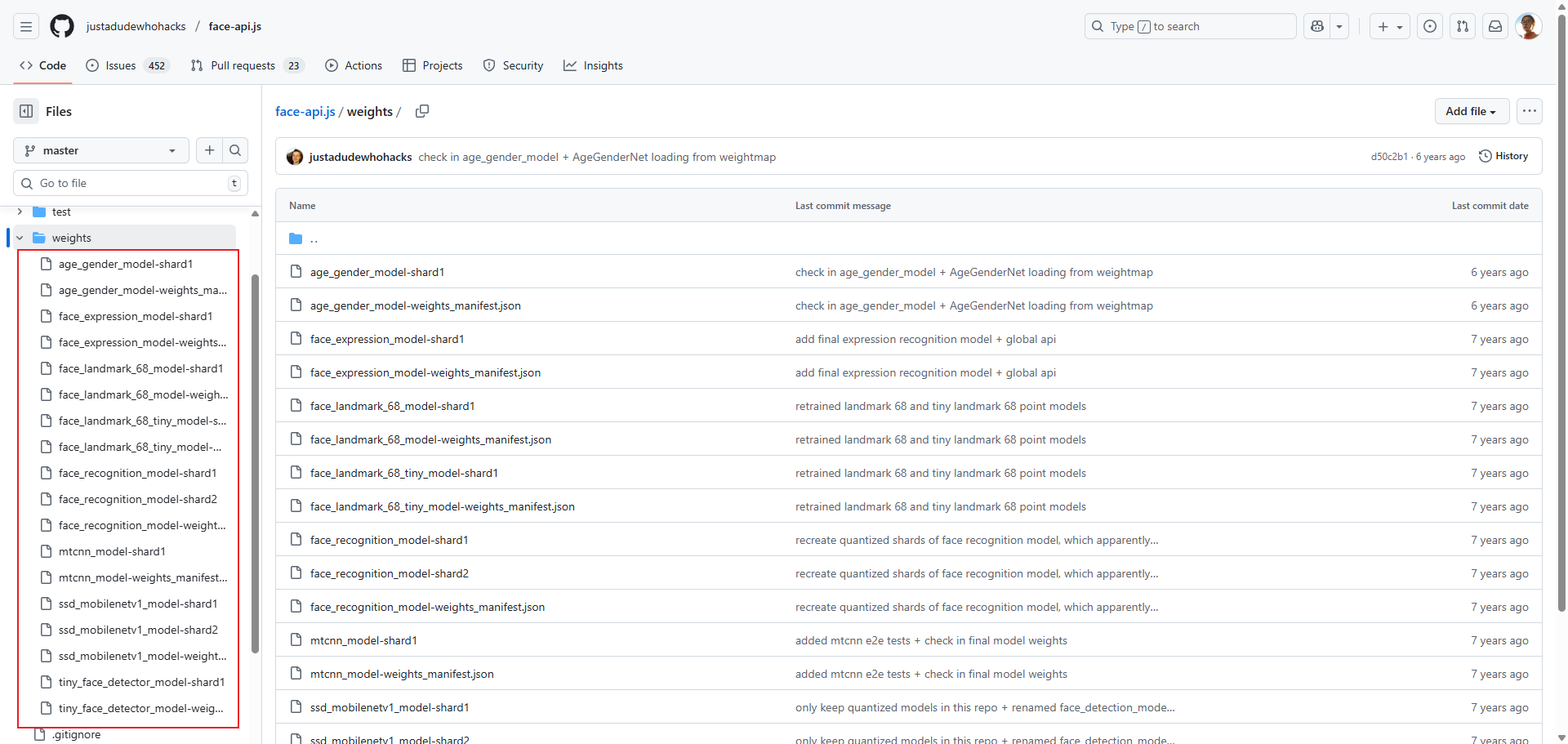

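2. Download the face recognition models

Next, download the models from GitHub:

https://github.com/justadudewhohacks/face-api.js/tree/master/weights

The page lists the files in the weights directory. Download all of them. Some files are fairly large and slow to fetch, so be patient.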

3. Wrap the face capture API

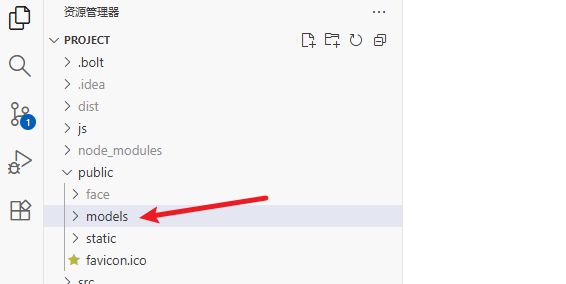

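Create a folder named models to hold those files, then put the folder under the public directory in the project root. The end of this post says more about where the model files can live.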
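The composable in this post only loads two networks (tinyFaceDetector and faceLandmark68Net), so a minimal setup may only need their manifest and shard files rather than the whole weights directory. The sketch below lists those files and builds the URLs the browser would request. The helper name `modelFileUrls` and the default base `/models` are assumptions for illustration, and the file names are the ones found in the upstream weights folder at the time of writing.

```typescript
// File names for the two networks used in this post, as they appear in the
// face-api.js weights directory. Adjust them if the upstream folder changes.
const REQUIRED_MODEL_FILES = [
  "tiny_face_detector_model-weights_manifest.json",
  "tiny_face_detector_model-shard1",
  "face_landmark_68_model-weights_manifest.json",
  "face_landmark_68_model-shard1",
] as const;

// Hypothetical helper: builds the URLs requested when the folder sits at
// public/models (files under public/ are served from the site root).
function modelFileUrls(base: string = "/models"): string[] {
  const trimmed = base.replace(/\/+$/, ""); // drop trailing slashes
  return REQUIRED_MODEL_FILES.map((name) => `${trimmed}/${name}`);
}

console.log(modelFileUrls());
```

Opening one of these URLs in the browser is a quick way to confirm the folder is being served before debugging the loading code.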

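Now straight to the code. I use TypeScript, so the types have to be declared. If you use JavaScript, skip the type declarations and strip the type annotations out of the wrapped API as well.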

// src/types/face-api.d.ts
declare module "face-api.js" {
  export interface Box {
    x: number;
    y: number;
    width: number;
    height: number;
  }

  export interface FaceDetection {
    score: number;
    box: Box;
  }

  export interface FaceLandmarks {
    positions: { x: number; y: number }[];
  }

  export class TinyFaceDetectorOptions {
    /** Builds TinyFaceDetectorOptions; optional parameters: inputSize and scoreThreshold */
    constructor(options?: { inputSize?: number; scoreThreshold?: number });
  }

  // I have only used loadFromUri, which loads a model from a URL
  // (http://, https://, file://, blob:, data:). I use http.
  export const nets: {
    /** TinyFaceDetector model */
    tinyFaceDetector: {
      /** Load the model from the default path or a given path */
      load: (modelPath?: string) => Promise<void>;
      /** Load the model from a URI */
      loadFromUri: (uri: string) => Promise<void>;
      /** Load the model from local disk */
      loadFromDisk: (path: string) => Promise<void>;
    };
    /** FaceLandmark68 model */
    faceLandmark68Net: {
      load: (modelPath?: string) => Promise<void>;
      loadFromUri: (uri: string) => Promise<void>;
      loadFromDisk: (path: string) => Promise<void>;
    };
  };

  /**
   * Detect a single face.
   * Returns an object whose withFaceLandmarks method fetches the face landmarks.
   */
  export const detectSingleFace: (
    input: HTMLVideoElement | HTMLImageElement | HTMLCanvasElement,
    options: TinyFaceDetectorOptions
  ) =>
    | {
        /** Get the face landmarks */
        withFaceLandmarks: () => Promise<
          { detection: FaceDetection; landmarks: FaceLandmarks } | undefined
        >;
      }
    | undefined;

  /**
   * Detect all faces in the image.
   * Returns an array with every detected face.
   */
  export const detectAllFaces: (
    input: HTMLVideoElement | HTMLImageElement | HTMLCanvasElement,
    options: TinyFaceDetectorOptions
  ) => Promise<FaceDetection[]>;
}

The wrapped face recognition composable:

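// src/compositionAPI/useFaceCapture
import { onBeforeUnmount, ref } from "vue";
import * as faceapi from "face-api.js";

interface FaceCaptureOptions {
  /** Video frame width (px), used to limit or set the capture resolution */
  videoWidth?: number;
  /** Video frame height (px), used to limit or set the capture resolution */
  videoHeight?: number;
  /**
   * Number of consecutive stable face frames required before the capture succeeds.
   * For example, with 3, the capture only succeeds after 3 consecutive frames
   * contain a face in a stable position.
   */
  requiredStableFrames?: number;
}

/**
 * Callback fired when face capture completes. It hands the face image to the
 * caller so the caller can run its own logic on it.
 */
type FaceCaptureSuccessCallback = (facialInfo: string | null) => void;

/**
 * Face capture module
 */
export const useFaceCapture = (
  options: FaceCaptureOptions = {},
  onFaceCaptureSuccess?: FaceCaptureSuccessCallback
) => {
  // Bound to the <video> element
  const videoElement = ref<HTMLVideoElement | null>(null);

  /**
   * Face capture status (makes it easy to drive the flow from the UI and the logic)
   */
  const faceStatus = ref<
    | "waiting" // waiting to start; camera not started or not detecting yet
    | "detecting" // detecting faces, analysing the video stream in real time
    | "preparing" // conditions met, user is getting ready; there may be a short delay
    | "capturing" // taking the photo / collecting face data
    | "success" // capture finished, data obtained
    | "valid" // capture finished and valid; passed authentication/checks
    | "invalid" // capture finished but invalid; failed authentication/checks
  >("waiting");

  // Base64 data URL of the current face
  const facialInfo = ref<string | null>(null);

  // Holds the camera media stream
  let stream: MediaStream | null = null;

  // Whether detection is currently running
  let detecting = false;

  // Current number of consecutive qualifying frames
  let stableFrames = 0;

  // Requested camera stream width (px)
  const VIDEO_WIDTH = options.videoWidth || 300;

  // Requested camera stream height (px)
  const VIDEO_HEIGHT = options.videoHeight || 300;

  // Stable frames to wait for (lower → capture succeeds despite wobble or brief instability → more lenient)
  const REQUIRED_STABLE_FRAMES = options.requiredStableFrames || 15;

  // Max tilt of the line between the eyes, in degrees (higher → allows more head tilt)
  const MAX_EYE_ANGLE = 20;

  // Max horizontal nose offset from the frame center, in px (higher → nose may be further off center)
  const MAX_NOSE_OFFSET = 60;

  // Max tilt of the line between the mouth corners, in degrees (higher → allows more deviation)
  const MAX_MOUTH_ANGLE = 20;

  // Face height must be at least 20% of the frame (lower → a smaller face still passes)
  const MIN_FACE_HEIGHT_RATIO = 0.2;

  // Face width must be at least 15% of the frame (lower → a smaller or more distant face still passes)
  const MIN_FACE_WIDTH_RATIO = 0.15;

  // Minimum number of face landmarks
  const MIN_LANDMARKS_COUNT = 30;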

  const loadModels = async () => {
    const env = detectEnv();
    if (env === "browser") {
      // Browser debugging / web build. Only H5 is handled here; Android was done separately.
      await faceapi.nets.tinyFaceDetector.loadFromUri("models");
      await faceapi.nets.faceLandmark68Net.loadFromUri("models");
      console.log("▶️ Face models loaded");
    }
    // else if (env === "android") {
    //   // Android APK WebView (inside the APK I could not load the models over the
    //   // file protocol, so the backend serves them and they are loaded over http)
    //   await faceapi.nets.tinyFaceDetector.load(
    //     `${import.meta.env.VITE_FACE_MODEL_URL}`
    //   );
    //   await faceapi.nets.faceLandmark68Net.load(
    //     `${import.meta.env.VITE_FACE_MODEL_URL}`
    //   );
    // }
    // else if (env === "electron") {
    //   // Electron / Node.js desktop
    //   await faceapi.nets.tinyFaceDetector.load("models");
    //   await faceapi.nets.faceLandmark68Net.load("models");
    // }
    else {
      throw new Error("Unknown runtime environment, cannot load models");
    }
  };

  /**
   * Detect the runtime environment
   * @returns "browser" or "unknown"
   */
  const detectEnv = () => {
    // Checks for each environment
    // if (typeof plus !== "undefined") {
    //   return "android";
    // } else if (typeof process !== "undefined" && process.versions?.electron) {
    //   return "electron";
    // } else if (typeof window !== "undefined") {
    //   return "browser";
    // } else {
    //   return "unknown";
    // }

    // Only H5 is handled here; the Android branch is not needed.
    if (typeof window !== "undefined") {
      return "browser";
    } else {
      return "unknown";
    }
  };

  // ======================
  // Start the camera and load the models
  // ======================
  const startFaceCapture = async () => {
    console.log("▶️ Starting face capture...");
    faceStatus.value = "detecting";
    stableFrames = 0;

    // 1. Make sure the page runs in a secure context (HTTPS or localhost).
    // My test environment uses plain http, so this check is commented out and
    // Chrome is configured instead (see the notes at the end of this post).
    // if (location.protocol !== "https:" && location.hostname !== "localhost") {
    //   alert("Please open this page over HTTPS or localhost");
    //   return;
    // }

    try {
      stream = await navigator.mediaDevices.getUserMedia({
        video: {
          width: { ideal: VIDEO_WIDTH },
          height: { ideal: VIDEO_HEIGHT },
          facingMode: "user", // prefer the front camera
        },
        audio: false, // no audio needed
      });
      console.log("📹 Camera started, stream", stream);

      if (videoElement.value) {
        videoElement.value.srcObject = stream;
        videoElement.value.play();
        console.log(
          "📹 Video stream bound, videoElement.value.srcObject",
          videoElement.value.srcObject
        );
      }
    } catch (error) {
      alert("Camera access failed");
      faceStatus.value = "waiting";
      return;
    }

    try {
      await loadModels();
      detecting = true;
    } catch (error) {
      faceStatus.value = "waiting";
      alert("Failed to load the models: " + error);
      return; // without models there is nothing to detect with
    }

    // iOS needs a 2-second delay here, otherwise detection does not run.
    // No try/catch around setTimeout: it could not catch errors thrown later
    // inside the callback, and detectFaceLoop handles its own errors.
    setTimeout(() => {
      detectFaceLoop();
    }, 2000);
  };

  // ======================
  // Real-time detection
  // ======================
  const detectFaceLoop = async () => {
    console.log("🔍 Starting face detection");
    if (!videoElement.value || !detecting) return;
    if (faceStatus.value === "success") return;

    // Named detectorOptions so it does not shadow the composable's options argument
    const detectorOptions = new faceapi.TinyFaceDetectorOptions();

    try {
      const detectionWithLandmarks = await faceapi
        .detectSingleFace(videoElement.value, detectorOptions)
        ?.withFaceLandmarks();

      if (detectionWithLandmarks) {
        console.log("😀 Face detected");
        const positions = detectionWithLandmarks.landmarks.positions;
        const landmarksCount = positions.length;

        if (landmarksCount >= MIN_LANDMARKS_COUNT) {
          const faceBox = detectionWithLandmarks.detection.box;
          const faceHeightRatio =
            faceBox.height / videoElement.value.videoHeight;
          const faceWidthRatio = faceBox.width / videoElement.value.videoWidth;
          console.log(
            `📏 Face ratio: height=${(faceHeightRatio * 100).toFixed(1)}% width=${(
              faceWidthRatio * 100
            ).toFixed(1)}%`
          );

          if (
            faceHeightRatio < MIN_FACE_HEIGHT_RATIO ||
            faceWidthRatio < MIN_FACE_WIDTH_RATIO
          ) {
            console.log("⚠️ Face too small, does not meet the requirement");
            stableFrames = 0;
            faceStatus.value = "detecting";
            requestAnimationFrame(detectFaceLoop);
            return;
          }

          // Left and right eyes: landmarks 36-41 and 42-47, averaged to a center point
          const leftEyePoints = positions.slice(36, 42);
          const leftEyeCenter = {
            x:
              leftEyePoints.reduce((sum, p) => sum + p.x, 0) /
              leftEyePoints.length,
            y:
              leftEyePoints.reduce((sum, p) => sum + p.y, 0) /
              leftEyePoints.length,
          };
          const rightEyePoints = positions.slice(42, 48);
          const rightEyeCenter = {
            x:
              rightEyePoints.reduce((sum, p) => sum + p.x, 0) /
              rightEyePoints.length,
            y:
              rightEyePoints.reduce((sum, p) => sum + p.y, 0) /
              rightEyePoints.length,
          };

          const noseTip = positions[30];
          const mouthLeft = positions[48];
          const mouthRight = positions[54];

          // Tilt of the eye line relative to horizontal, in degrees
          const eyeDx = rightEyeCenter.x - leftEyeCenter.x;
          const eyeDy = rightEyeCenter.y - leftEyeCenter.y;
          const eyeAngle = Math.atan2(eyeDy, eyeDx) * (180 / Math.PI);

          // Horizontal distance of the nose tip from the frame center, in px
          const videoCenterX = videoElement.value.videoWidth / 2;
          const noseOffsetX = Math.abs(noseTip.x - videoCenterX);

          // Tilt of the mouth line relative to horizontal, in degrees
          const mouthDx = mouthRight.x - mouthLeft.x;
          const mouthDy = mouthRight.y - mouthLeft.y;
          const mouthAngle = Math.atan2(mouthDy, mouthDx) * (180 / Math.PI);

          console.log(
            `📐 Eye angle=${eyeAngle.toFixed(2)}° nose offset=${noseOffsetX.toFixed(
              1
            )}px mouth angle=${mouthAngle.toFixed(2)}°`
          );

          if (
            Math.abs(eyeAngle) < MAX_EYE_ANGLE &&
            noseOffsetX < MAX_NOSE_OFFSET &&
            Math.abs(mouthAngle) < MAX_MOUTH_ANGLE
          ) {
            stableFrames++;
            console.log(
              `✅ Pose OK, stable frames=${stableFrames}/${REQUIRED_STABLE_FRAMES}`
            );

            if (
              faceStatus.value !== "preparing" &&
              stableFrames >= REQUIRED_STABLE_FRAMES
            ) {
              console.log("⌛ Preparing to capture, please hold still...");
              faceStatus.value = "preparing";

              // Give the user 1 second to get ready
              setTimeout(() => {
                if (faceStatus.value === "preparing") {
                  console.log("📸 Conditions met, taking the photo...");
                  faceStatus.value = "capturing";
                  capturePhoto();
                  stableFrames = 0;
                }
              }, 1000);
            }
          } else {
            if (faceStatus.value === "preparing") {
              console.log("❌ User movement detected, cancelling preparation");
            }
            stableFrames = 0;
            //faceStatus.value = "detecting";
          }
        } else {
          console.log("⚠️ Face detected, but not enough landmarks");
          stableFrames = 0;
          faceStatus.value = "detecting";
        }
      } else {
        console.log("🔍 No face detected");
        stableFrames = 0;
        if (faceStatus.value !== "preparing") {
          faceStatus.value = "detecting";
        }
      }
    } catch (error) {
      console.log("🔍 Detection error", error);
    }

    requestAnimationFrame(detectFaceLoop);
  };

  // ======================
  // Take the photo
  // ======================
  const capturePhoto = () => {
    const video = videoElement.value;
    // Guard instead of non-null assertions: the element may already be unmounted
    if (!video) return;

    const canvas = document.createElement("canvas");
    // Use the camera's actual resolution
    canvas.width = video.videoWidth;
    canvas.height = video.videoHeight;

    const ctx = canvas.getContext("2d");
    if (!ctx) return;
    ctx.drawImage(video, 0, 0, canvas.width, canvas.height);

    // Export a maximum-quality JPEG as a base64 data URL
    facialInfo.value = canvas.toDataURL("image/jpeg", 1.0);
    faceStatus.value = "success";
    stopFaceCapture();

    // Invoke the callback if one was provided
    if (onFaceCaptureSuccess) {
      onFaceCaptureSuccess(facialInfo.value);
    }
  };

  // ======================
  // Stop the camera
  // ======================
  const stopFaceCapture = () => {
    if (stream) {
      console.log("🛑 Stopping face capture");
      stream.getTracks().forEach((t) => t.stop());
      stream = null;
    }
    if (videoElement.value) videoElement.value.srcObject = null;
    detecting = false;
    stableFrames = 0;
  };

  // ======================
  // Capture again
  // ======================
  const retryFaceCapture = async () => {
    console.log("🔄 Restarting face capture");
    stopFaceCapture();
    stableFrames = 0;
    await startFaceCapture();
  };

  // ======================
  // Reset everything
  // ======================
  const resetFaceCapture = () => {
    stopFaceCapture(); // stop the camera
    // Clear the data
    facialInfo.value = null;
    faceStatus.value = "waiting";
    stableFrames = 0;
    detecting = false;
  };

  onBeforeUnmount(() => {
    stopFaceCapture();
  });

  return {
    videoElement,
    faceStatus,
    facialInfo,
    startFaceCapture,
    retryFaceCapture,
    stopFaceCapture,
    resetFaceCapture,
  };
};
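The pose check buried inside detectFaceLoop can also be read as a pure function over the 68 landmark points, which makes the thresholds easier to reason about and to unit test. The following is a minimal sketch under that assumption, not the composable's actual structure. The names `Point`, `PoseLimits`, `centroid`, `tiltDegrees` and `isFrontalPose` are hypothetical; the landmark indices (36-41 left eye, 42-47 right eye, 30 nose tip, 48 and 54 mouth corners) are the ones the composable already uses.

```typescript
interface Point {
  x: number;
  y: number;
}

interface PoseLimits {
  maxEyeAngle: number; // degrees
  maxNoseOffset: number; // pixels from the horizontal frame center
  maxMouthAngle: number; // degrees
}

// Average position of a group of landmark points.
function centroid(points: Point[]): Point {
  const sum = points.reduce(
    (acc, p) => ({ x: acc.x + p.x, y: acc.y + p.y }),
    { x: 0, y: 0 }
  );
  return { x: sum.x / points.length, y: sum.y / points.length };
}

// Tilt of the line from a to b relative to horizontal, in degrees.
function tiltDegrees(a: Point, b: Point): number {
  return Math.atan2(b.y - a.y, b.x - a.x) * (180 / Math.PI);
}

// True when the eye line and mouth line are roughly level and the nose tip
// is close to the horizontal center of the frame.
function isFrontalPose(
  positions: Point[],
  frameWidth: number,
  limits: PoseLimits
): boolean {
  if (positions.length <= 54) return false; // indices up to 54 are read below
  const leftEye = centroid(positions.slice(36, 42));
  const rightEye = centroid(positions.slice(42, 48));
  const eyeAngle = tiltDegrees(leftEye, rightEye);
  const mouthAngle = tiltDegrees(positions[48], positions[54]);
  const noseOffset = Math.abs(positions[30].x - frameWidth / 2);
  return (
    Math.abs(eyeAngle) < limits.maxEyeAngle &&
    noseOffset < limits.maxNoseOffset &&
    Math.abs(mouthAngle) < limits.maxMouthAngle
  );
}

// Same thresholds as the constants in the composable.
const defaultLimits: PoseLimits = {
  maxEyeAngle: 20,
  maxNoseOffset: 60,
  maxMouthAngle: 20,
};
```

Inside the loop this would be called as `isFrontalPose(positions, videoElement.value.videoWidth, defaultLimits)`. Note that the nose offset is measured in pixels, so the same limit is stricter on a small frame than on a large one.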

5. Show the video stream during detection and the captured image on the page

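// src/components/common/vantComs/CheckFacePopup.vue
<template>
  <div class="video-container">
    <div class="face-title">Face recognition scan</div>
    <div class="face-tips">Please dress neatly, face the phone, and make sure the lighting is good</div>
    <!-- Camera feed -->
    <video ref="videoElement" playsinline webkit-playsinline autoplay></video>
    <div class="face-tips">Please make sure the account owner is the one operating</div>
    <van-loading size="24px" class="loading-status" vertical
      >{{ time ? faceStatusTextObj[faceStatus] : "Face capture timed out" }}
    </van-loading>
    <div class="time">Countdown: {{ time }} s</div>
    <!-- Capture preview -->
    <!-- <img :src="faceImage" alt="Captured face photo" /> -->
  </div>
</template>

<script setup lang="ts">
import { onBeforeUnmount, onMounted, ref } from "vue";
import { useFaceCapture } from "@/compositionAPI/useFaceCapture";
// checkFace, checkIdCard and user come from this project's own API and store
// modules; the original post does not show their imports.

const emit = defineEmits(["on-close"]);

// Countdown in seconds; the popup closes when it reaches 0
const time = ref(8);
const timer = setInterval(() => {
  time.value--;
  if (time.value === 0) {
    clearInterval(timer);
    stopFaceCapture();
    setTimeout(() => {
      emit("on-close");
    }, 1000);
  }
}, 1000);

const faceStatusTextObj = ref({
  waiting: "Waiting to start capture",
  detecting: "Detecting face",
  preparing: "Get ready for the photo",
  capturing: "Taking the photo",
  success: "Face capture complete",
  valid: "Face capture complete and valid",
  invalid: "Face capture complete but invalid",
});

const faceImage = ref(""); // captured face photo

// 2. Declare the type of the face image callback (it receives the image as base64)
type FaceCaptureSuccessCallback = (facialInfo: string | null) => void;

// 3. Define the callback that runs once the face image is available;
// whatever needs to happen with the image goes in its body
const onFaceCaptureSuccess: FaceCaptureSuccessCallback = async (
  facialInfo: string | null
) => {
  // Stop the countdown so a timeout cannot close the popup while the requests are in flight
  clearInterval(timer);
  try {
    // facialInfo is the image as a base64 data URL; handle it here
    console.log("Got image data", facialInfo, user.userInfo);
    const res = await checkFace({
      base64Data: facialInfo?.split("base64,")[1],
      // eprId: user.userInfo?.eprId,
      eprId: "SHJT",
    });
    console.log("res", res);
    const res2 = await checkIdCard({
      idCard: res.data.data,
    });
    emit("on-close", res2);
    console.log("res2", res2);
  } catch (err) {
    console.log("err", err);
    emit("on-close");
  } finally {
    // 6. Reset the face capture state so that capture can start again
    resetFaceCapture();
  }
};

// 4. Destructure the face capture composable. The first argument is left empty
// because the composable already defines defaults.
// 5. Mind the order: declare the callback type, then the callback, then destructure.
const {
  videoElement,
  faceStatus,
  startFaceCapture,
  stopFaceCapture,
  resetFaceCapture,
} = useFaceCapture({}, onFaceCaptureSuccess);

onMounted(() => {
  startFaceCapture();
});

onBeforeUnmount(() => {
  clearInterval(timer); // do not leave the countdown running after unmount
  stopFaceCapture();
});
</script>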
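The callback above extracts the payload with `facialInfo?.split("base64,")[1]`, which silently yields `undefined` when the string is not a base64 data URL. One case where that can happen is a canvas with zero width or height, for which `toDataURL` returns the string `"data:,"`. A slightly stricter variant, sketched with a hypothetical helper name `dataUrlToBase64`, makes that case explicit:

```typescript
// Returns the base64 payload of a data URL such as the one produced by
// canvas.toDataURL("image/jpeg"), or null when there is no usable payload.
function dataUrlToBase64(dataUrl: string | null): string | null {
  if (!dataUrl) return null;
  const marker = ";base64,";
  const index = dataUrl.indexOf(marker);
  if (!dataUrl.startsWith("data:") || index === -1) return null;
  const payload = dataUrl.slice(index + marker.length);
  return payload.length > 0 ? payload : null;
}

console.log(dataUrlToBase64("data:image/jpeg;base64,/9j/4AAQ"));
```

With a helper like this, the component could skip the request and close the popup when the result is null, rather than sending an empty `base64Data` field to the backend.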

Triggering it from the parent component:

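// src/views/education/HomeTemp.vue
<template>
  <!-- window = browser, plus = Android. typeof plus == 'undefined' means the environment is not Android. We only have two environments, Android and H5 (window). -->
  <van-grid-item
    v-if="typeof plus == 'undefined'"
    :icon="image"
    text="Face recognition"
    @click="getH5FaceRec()"
  />

  <van-popup
    v-model:show="showoverlay"
    :style="{ width: '100%', height: '100%' }"
  >
    <CheckFacePopup
      v-if="showoverlay"
      @on-close="onClose"
      v-model:show="showoverlay"
    ></CheckFacePopup>
  </van-popup>
</template>

<script setup lang="ts">
import { ref } from "vue";
import CheckFacePopup from "@/components/common/vantComs/CheckFacePopup.vue";

// Not shown in the original post; declared here so the snippet is complete.
const showoverlay = ref(false);
// image and onClose are also defined in this component but are not shown in the original post.

const getH5FaceRec = async () => {
  showoverlay.value = true;
};
</script>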

6. Extras

Whether it runs on the web, in Electron, or inside an Android APK, the app has to be granted permission to start the camera.

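After packaging the app as an APK with HBuilderX's 5+ App template, I could not get the face recognition models to load. In the end the only workaround was to have the backend start a service on a port mapped to the model directory and load the models over HTTP. On the public internet you could simply proxy that directory with nginx; I will not go into that here.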
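The environment split described here (a relative models folder in the browser, an HTTP URL served by the backend inside the APK) can be expressed as one small lookup, following the commented-out branches in loadModels. This is only a sketch: the function name `resolveModelBase` and the `RuntimeEnv` type are hypothetical, and the remote URL would come from something like the `VITE_FACE_MODEL_URL` variable that appears in the commented-out code.

```typescript
type RuntimeEnv = "browser" | "android" | "electron" | "unknown";

// Picks the base location to pass to the model loaders for each environment.
function resolveModelBase(env: RuntimeEnv, remoteModelUrl?: string): string {
  switch (env) {
    case "browser":
    case "electron":
      // Models are served from the app's own public/models folder.
      return "models";
    case "android":
      // Inside the APK the models are loaded over HTTP from the backend.
      if (!remoteModelUrl) {
        throw new Error("Android needs an HTTP URL for the model directory");
      }
      return remoteModelUrl.replace(/\/+$/, ""); // drop trailing slashes
    default:
      throw new Error("Unknown runtime environment, cannot load models");
  }
}

// The address below is a made-up placeholder, not a real server.
console.log(resolveModelBase("android", "http://192.168.0.10:8080/models/"));
```

Keeping the decision in one place means loadModels only has to call the loaders with the returned base, and a missing URL fails with a clear message instead of a network error.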

————————————————

Original article: https://blog.csdn.net/m0_54885949/article/details/154010014

Note 1: on iOS, a delay is needed before detection starts

setTimeout(() => {
  detectFaceLoop();
}, 2000);
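A fixed 2-second delay works but is a guess about how long the video needs before it delivers frames. An alternative worth trying is to poll the video element until it reports a decoded frame and non-zero dimensions. The sketch below has not been verified on iOS and is offered only as an idea. `VideoLike`, `isVideoReady` and `waitForVideoReady` are hypothetical names; `readyState >= 2` corresponds to `HTMLMediaElement.HAVE_CURRENT_DATA`.

```typescript
// Structural subset of HTMLVideoElement, so the sketch type-checks outside a browser too.
interface VideoLike {
  readyState: number;
  videoWidth: number;
  videoHeight: number;
}

const HAVE_CURRENT_DATA = 2; // same value as HTMLMediaElement.HAVE_CURRENT_DATA

// True once the element has at least one frame and knows its dimensions.
function isVideoReady(video: VideoLike): boolean {
  return (
    video.readyState >= HAVE_CURRENT_DATA &&
    video.videoWidth > 0 &&
    video.videoHeight > 0
  );
}

// Polls until the video is ready; resolves false if the timeout passes first.
function waitForVideoReady(
  video: VideoLike,
  timeoutMs = 5000,
  intervalMs = 100
): Promise<boolean> {
  return new Promise((resolve) => {
    const startedAt = Date.now();
    const check = () => {
      if (isVideoReady(video)) {
        resolve(true);
        return;
      }
      if (Date.now() - startedAt >= timeoutMs) {
        resolve(false);
        return;
      }
      setTimeout(check, intervalMs);
    };
    check();
  });
}
```

In startFaceCapture this could replace the timer with something like `if (videoElement.value && (await waitForVideoReady(videoElement.value))) detectFaceLoop();`, which starts detection as soon as frames arrive and gives up cleanly if they never do.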

Note 2: testing over plain HTTP requires configuring Chrome

https://jimolvxing.blog.csdn.net/article/details/154951967

Note 3: how to configure the major browsers for testing over HTTP

https://blog.csdn.net/qq_50906507/article/details/145910206?utm_medium=distribute.pc_relevant.none-task-blog-2defaultbaidujs_baidulandingword~default-0-145910206-blog-154951967.235v43pc_blog_bottom_relevance_base2&spm=1001.2101.3001.4242.1&utm_relevant_index=3
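The browser configuration in Notes 2 and 3 is needed because camera access through getUserMedia is only available in secure contexts, which is also what the commented-out check at the top of startFaceCapture is about. A rough version of that check as a pure function might look like the sketch below. The name `canUseCamera` is hypothetical and the host list is a simplification; in a real page, `window.isSecureContext` is the browser's own answer and also reflects any override configured in the browser.

```typescript
// Approximates the secure-context rule: HTTPS pages and local loopback hosts
// may use the camera, plain-HTTP pages on other hosts may not.
function canUseCamera(protocol: string, hostname: string): boolean {
  const isLocal =
    hostname === "localhost" || hostname === "127.0.0.1" || hostname === "[::1]";
  return protocol === "https:" || isLocal;
}

console.log(canUseCamera("http:", "192.168.1.10")); // a LAN address over plain HTTP
```

In the page itself the call would be `canUseCamera(location.protocol, location.hostname)`. When it returns false, showing a message is friendlier than letting the camera request fail, since `navigator.mediaDevices` is undefined in an insecure context.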
