PyTorch Study Notes (1)

PyTorch modules

Official PyTorch documentation: https://pytorch.org/docs/stable/

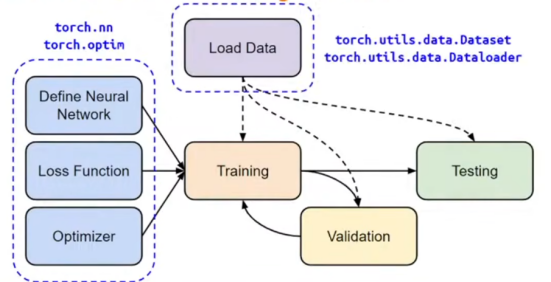

torch.nn: neural-network APIs

torch.optim: optimization algorithms

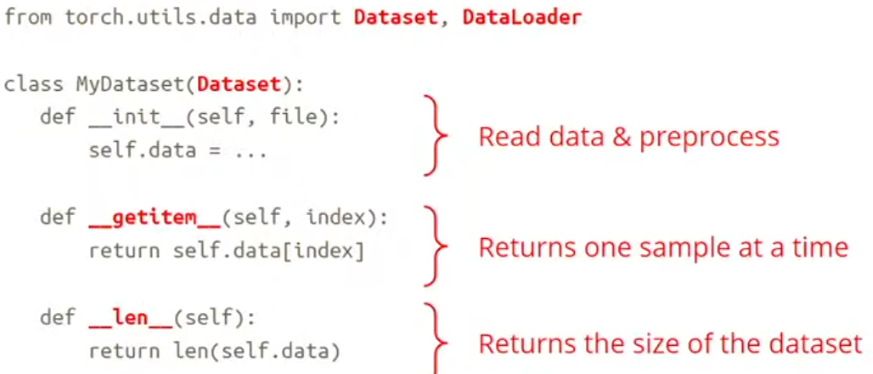

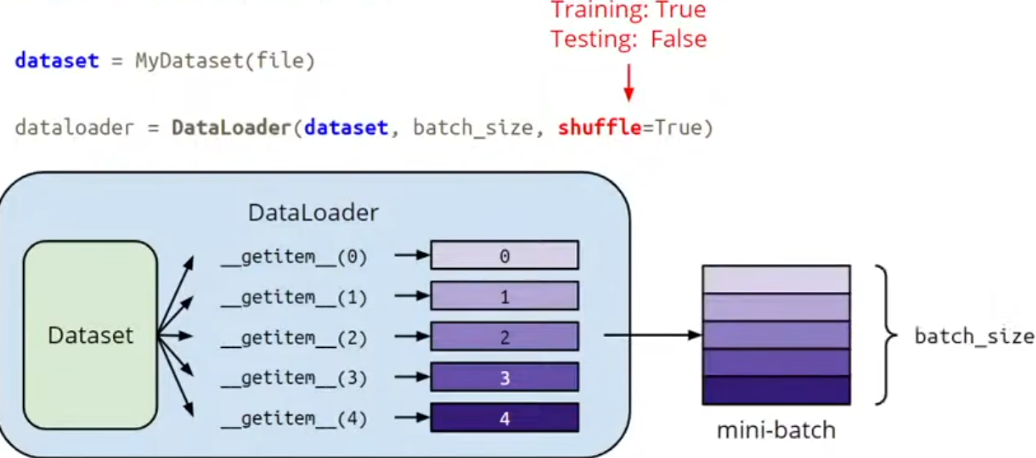
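Every optimizer in torch.optim follows the same zero_grad / backward / step cycle. Below is a minimal sketch of one SGD step on a toy loss w², assuming plain SGD with a learning rate of 0.1 (both the loss and the learning rate are arbitrary choices for illustration):

```python
import torch

# A single trainable parameter w, initialised to 1.0 (toy example)
w = torch.tensor(1.0, requires_grad=True)

# Plain SGD: w <- w - lr * dL/dw
optimizer = torch.optim.SGD([w], lr=0.1)

loss = w ** 2          # L(w) = w^2, so dL/dw = 2w = 2.0 at w = 1.0
optimizer.zero_grad()  # clear gradients left over from any previous step
loss.backward()        # fill w.grad with dL/dw
optimizer.step()       # update: 1.0 - 0.1 * 2.0 = 0.8

print(w.item())
```

Swapping `torch.optim.SGD` for another optimizer such as `torch.optim.Adam` leaves the three-call cycle unchanged.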

torch.utils.data: Dataset, DataLoader

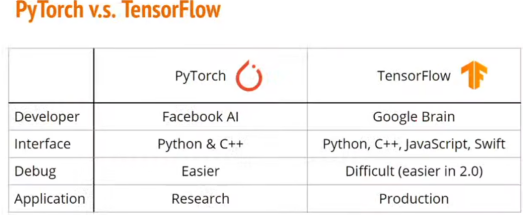
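A Dataset only needs `__len__` and `__getitem__`; DataLoader then handles batching (and optionally shuffling). This is a minimal sketch with a hypothetical `ToyDataset` whose target is simply y = 2x:

```python
import torch
from torch.utils.data import Dataset, DataLoader

class ToyDataset(Dataset):
    """Hypothetical dataset: x = 0..n-1, target y = 2x."""

    def __init__(self, n):
        self.x = torch.arange(n, dtype=torch.float32)
        self.y = 2 * self.x

    def __len__(self):
        # DataLoader uses this to know how many samples exist
        return len(self.x)

    def __getitem__(self, idx):
        # Return one (input, target) pair
        return self.x[idx], self.y[idx]

dataset = ToyDataset(10)
# batch_size=4 groups samples; shuffle=False keeps the order fixed
loader = DataLoader(dataset, batch_size=4, shuffle=False)

batch_sizes = []
for xb, yb in loader:
    batch_sizes.append(len(xb))
print(batch_sizes)  # 10 samples in batches of 4 -> [4, 4, 2]
```

The last batch is smaller because `drop_last` defaults to False.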

1. Differences between PyTorch and TensorFlow

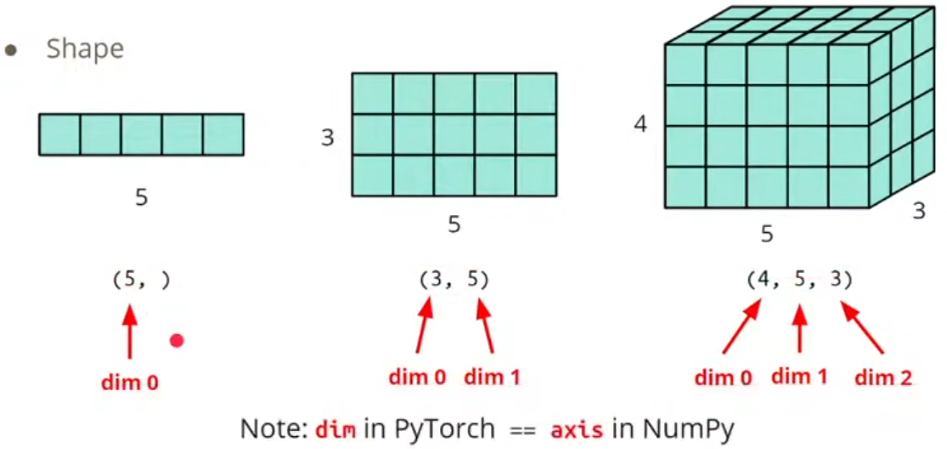

2. Tensor operations

- Shape:

- Declaring a tensor:

import torch

x = torch.ones([1,3,5])

print(x, x.shape)

x = x.squeeze(0) # drop dim 0 (size 1): [1, 3, 5] -> [3, 5]

print(x, x.shape)

y = torch.ones([3,1,5])

print(y, y.shape)

y = y.squeeze(1) # drop dim 1 (size 1): [3, 1, 5] -> [3, 5]

print(y, y.shape)

x2 = torch.ones([2,3])

print(x2, x2.shape)

x3 = torch.ones([3,4,6])

print(x3, x3.shape)

Output:

- squeeze: removes a dimension of size 1

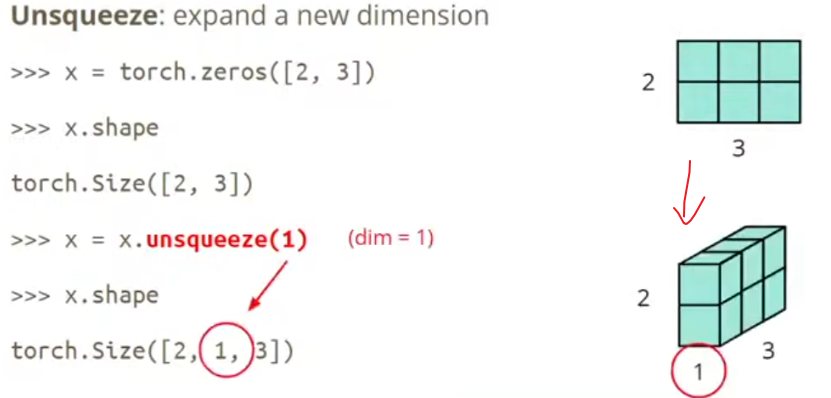
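A short sketch of how squeeze behaves on the shapes used above; note that asking it to squeeze a dimension whose size is not 1 is silently a no-op rather than an error:

```python
import torch

x = torch.ones([1, 3, 5])
a = x.squeeze(0)                   # dim 0 has size 1, so it is removed -> [3, 5]
b = x.squeeze(1)                   # dim 1 has size 3, so nothing changes -> [1, 3, 5]
c = torch.ones([1, 3, 1]).squeeze()  # no dim given: every size-1 dim is dropped -> [3]
print(a.shape, b.shape, c.shape)
```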

- unsqueeze: inserts a new dimension of size 1

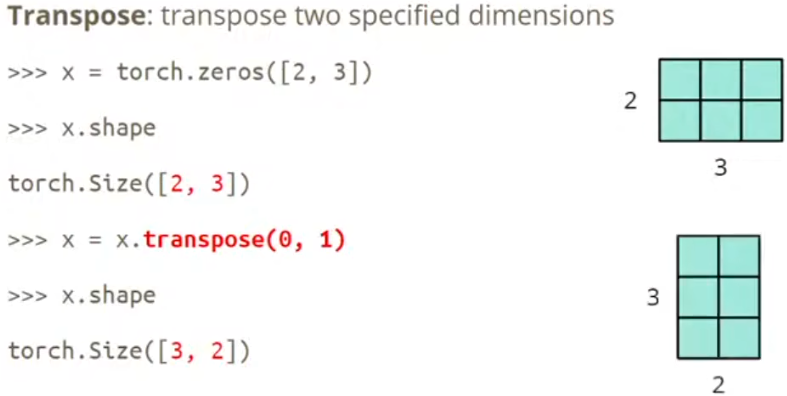
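unsqueeze is the inverse of squeeze: the argument says where the new size-1 dimension goes. A minimal sketch on a [2, 3] tensor:

```python
import torch

x = torch.ones([2, 3])
a = x.unsqueeze(0)   # new size-1 dim at position 0 -> [1, 2, 3]
b = x.unsqueeze(1)   # new size-1 dim at position 1 -> [2, 1, 3]
c = x.unsqueeze(-1)  # negative index counts from the end -> [2, 3, 1]
print(a.shape, b.shape, c.shape)
```

A common use is `unsqueeze(0)` to turn a single sample into a batch of one before feeding it to a model.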

- transpose: swaps two dimensions

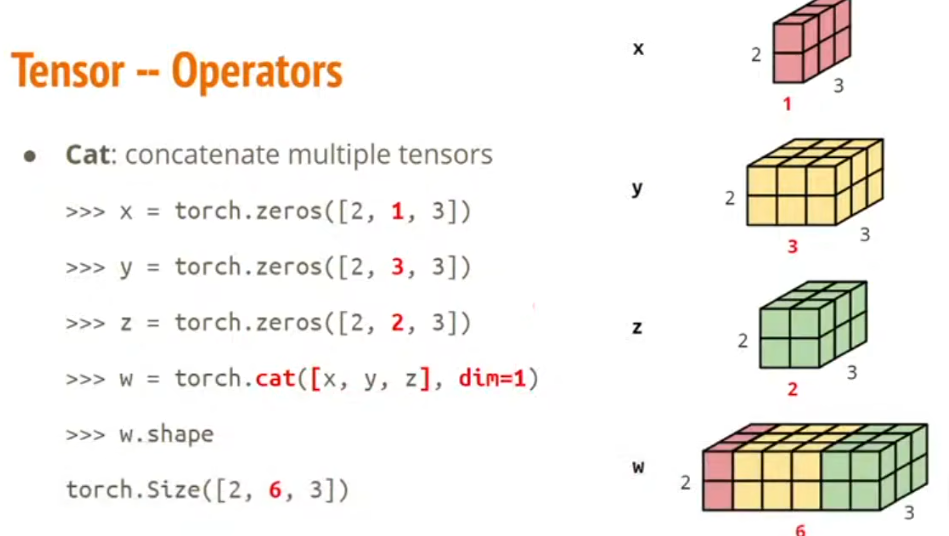
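transpose(dim0, dim1) swaps exactly two dimensions. A small sketch that also shows a practical consequence: the result is a view with changed strides, so it is usually no longer contiguous in memory:

```python
import torch

x = torch.arange(6).reshape(2, 3)   # [[0, 1, 2], [3, 4, 5]]
t = x.transpose(0, 1)               # swap dim 0 and dim 1 -> shape [3, 2]
print(t)
print(t.shape)

# transpose returns a view: no data is copied, only the strides change
print(t.is_contiguous())            # False, so t.view(...) may fail;
flat = t.reshape(-1)                # reshape (or t.contiguous().view) works
print(flat)
```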

- cat: concatenates several tensors along an existing dimension

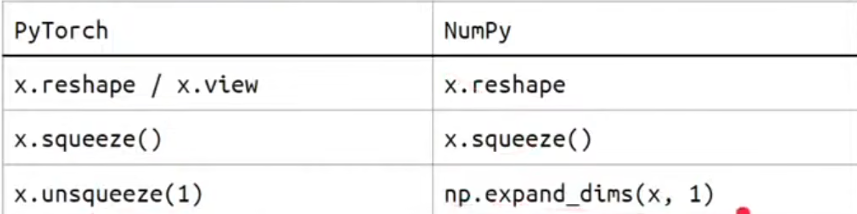
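For torch.cat, all sizes must match except along the dimension being joined. A minimal sketch with three tensors that differ only in dim 1:

```python
import torch

a = torch.zeros([2, 1, 3])
b = torch.zeros([2, 3, 3])
c = torch.zeros([2, 2, 3])
# Sizes agree on dims 0 and 2; dim 1 is concatenated: 1 + 3 + 2 = 6
w = torch.cat([a, b, c], dim=1)
print(w.shape)   # torch.Size([2, 6, 3])
```

If you instead want a new dimension (for example stacking equally shaped tensors into a batch), `torch.stack` is the related function.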

- Differences from NumPy:
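A few practical points where tensors and NumPy arrays meet, shown as a sketch (the array values are arbitrary): conversion in both directions shares memory for CPU tensors, the dtype is inherited, and reductions use the keyword `dim` where NumPy uses `axis`:

```python
import numpy as np
import torch

arr = np.array([[1., 2.], [3., 4.]])

t = torch.from_numpy(arr)   # shares memory with arr (no copy)
back = t.numpy()            # also shares memory (CPU tensors only)

arr[0, 0] = 100.            # changing the array is visible through the tensor
print(t[0, 0].item())       # 100.0

# Same reduction: NumPy's keyword is axis, PyTorch's is dim
print(arr.sum(axis=0), t.sum(dim=0))
print(t.dtype)              # torch.float64, inherited from the NumPy array
```

Use `torch.tensor(arr)` instead of `torch.from_numpy(arr)` when you want an independent copy.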

3. Device setup

PyTorch computes on the CPU by default; to run on a GPU you have to move the tensors there explicitly:

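x = x.to('cpu') # move the tensor to the CPU

x = x.to('cuda') # move the tensor to the GPU (needs a CUDA-capable device)

torch.cuda.is_available() # True if a CUDA GPU can be used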

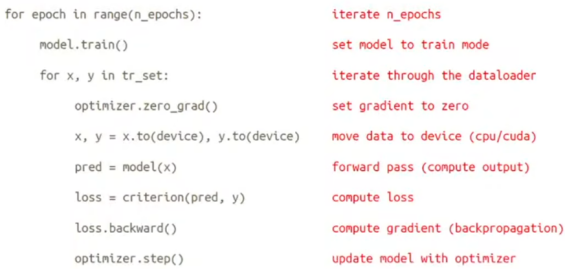
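Hard-coding 'cuda' fails on machines without a GPU, so a common pattern is to choose the device once and reuse it. A minimal sketch; it runs on either device:

```python
import torch

# Pick the GPU when one is available, otherwise fall back to the CPU
device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

x = torch.ones([2, 3])
x = x.to(device)          # .to() returns a tensor on that device; x itself is not moved in place
print(x.device)

# Tensors taking part in one operation must live on the same device
y = torch.ones([2, 3], device=device)
z = x + y
print(z.device)
```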

4. Computing gradients

import torch

import numpy as np

x = torch.tensor([[1.,0.],[-1.,1.]],requires_grad=True)

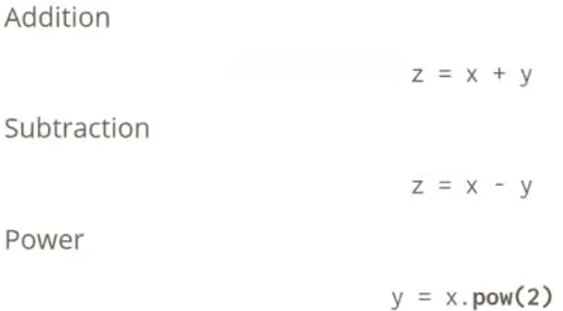

y = x.pow(2) # element-wise square

print(y)

z = x.pow(2).sum() # sum of the squares of all elements

print(z)

z.backward() # backpropagate: compute dz/dx for every element of x

g = x.grad

print(g)

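x_num = np.array([[4.,3.],[-1.,1.]]) # the same computation in NumPy, on a different matrix

y_num = x_num**2

print(y_num)

print(y_num.sum()) # total of all elements, same as sum(sum(y_num))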

Output:

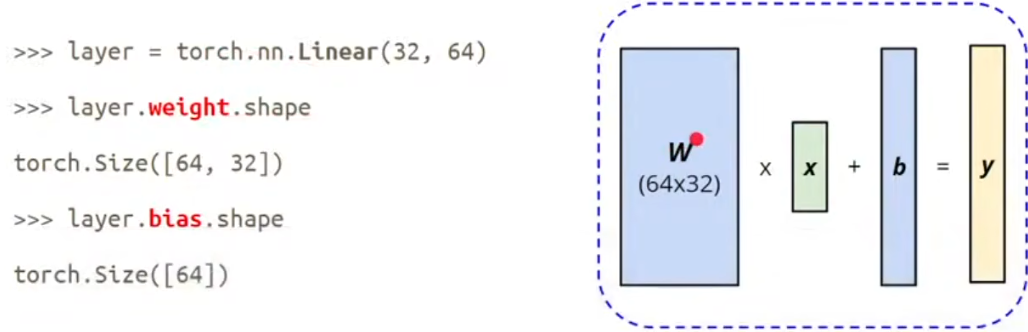
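The printed gradient can be checked by hand: since z = Σ x_ij², each partial derivative is ∂z/∂x_ij = 2·x_ij, so x.grad should equal 2x. A small sketch that verifies this with the same matrix:

```python
import torch

x = torch.tensor([[1., 0.], [-1., 1.]], requires_grad=True)
z = x.pow(2).sum()        # z = sum of x_ij^2
z.backward()

# Analytic gradient: dz/dx_ij = 2 * x_ij
expected = 2 * x.detach()
print(x.grad)
print(torch.equal(x.grad, expected))
```

Gradients accumulate in `x.grad` across `backward()` calls, so clear them (e.g. `x.grad.zero_()`, or `optimizer.zero_grad()` in training) before computing new ones.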

6. torch.nn: neural-network layers

- **Linear layer:**

nn.Linear(in_features, out_features) # fully connected layer: y = xW^T + b

- Activation functions:

nn.Sigmoid()

nn.ReLU()
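A sketch of what nn.Linear computes, assuming 4 input and 2 output features (arbitrary sizes): the layer stores a weight of shape [out_features, in_features] and a bias of shape [out_features], and the output matches x @ W.T + b. nn.Sigmoid and nn.ReLU are applied element-wise and keep the shape:

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
layer = nn.Linear(in_features=4, out_features=2)  # y = x W^T + b
print(layer.weight.shape, layer.bias.shape)      # W: [2, 4], b: [2]

x = torch.randn(8, 4)          # a batch of 8 samples, 4 features each
out = layer(x)                 # shape [8, 2]

# Manual computation of the same affine map
manual = x @ layer.weight.T + layer.bias
print(torch.allclose(out, manual, atol=1e-6))

# Activations work element-wise and preserve the shape
sig = nn.Sigmoid()(out)        # values squashed into (0, 1)
relu = nn.ReLU()(out)          # negative values set to 0
print(sig.shape, relu.shape)
```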

- Loss functions:

nn.MSELoss() # mean squared error loss

nn.CrossEntropyLoss() # cross-entropy loss

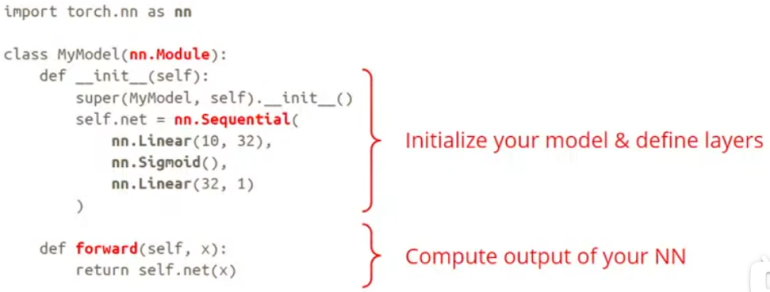
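A sketch of both losses on small hand-made inputs (the numbers are arbitrary). The main pitfall with nn.CrossEntropyLoss is its input format: it takes raw scores (logits) and integer class labels, and applies log-softmax internally, which the manual recomputation below confirms:

```python
import torch
import torch.nn as nn

mse = nn.MSELoss()
pred = torch.tensor([1.0, 2.0, 3.0])
target = torch.tensor([1.0, 2.0, 5.0])
mse_value = mse(pred, target)   # mean of squared errors (0, 0, 4) = 4/3
print(mse_value)

ce = nn.CrossEntropyLoss()
logits = torch.tensor([[2.0, 0.5, -1.0],
                       [0.1, 0.2, 3.0]])   # 2 samples, 3 classes, unnormalised
labels = torch.tensor([0, 2])              # correct class index of each sample
loss = ce(logits, labels)

# Same value by hand: mean over samples of -log softmax(logits)[i, label_i]
manual = -torch.log_softmax(logits, dim=1)[torch.arange(2), labels].mean()
print(loss, manual)
```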

- Building your own neural network:

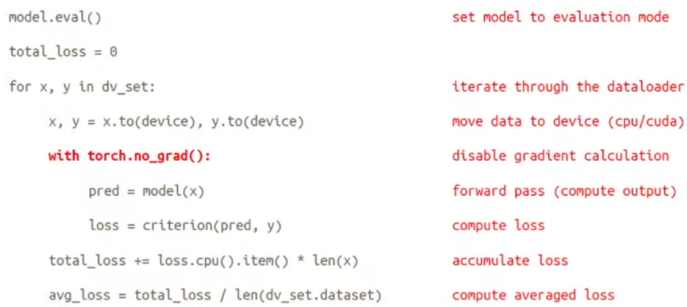

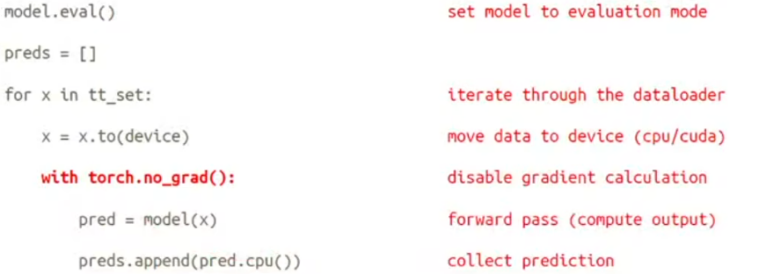
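A custom network subclasses nn.Module, creates its layers in `__init__`, and describes the computation in `forward`. A minimal sketch with a hypothetical `MyModel`; the layer sizes (10 → 32 → 1) are assumptions for illustration:

```python
import torch
import torch.nn as nn

class MyModel(nn.Module):
    """Hypothetical two-layer network; sizes are arbitrary."""

    def __init__(self, in_dim=10, hidden=32, out_dim=1):
        super().__init__()          # lets nn.Module register the submodules below
        self.net = nn.Sequential(
            nn.Linear(in_dim, hidden),
            nn.Sigmoid(),
            nn.Linear(hidden, out_dim),
        )

    def forward(self, x):
        # model(x) calls this method (plus any registered hooks)
        return self.net(x)

model = MyModel()
x = torch.randn(5, 10)
y = model(x)
print(y.shape)                      # torch.Size([5, 1])

# Parameters: 10*32 + 32 (first layer) + 32*1 + 1 (second layer) = 385
n_params = sum(p.numel() for p in model.parameters())
print(n_params)
```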

8. Validation set:

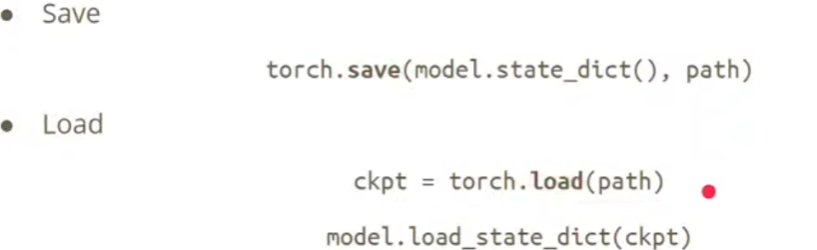
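The validation set is held out from training and only used to measure how well the model generalises. A sketch of one way to wire it up, assuming hypothetical toy regression data (y = 3x + noise), an 80/20 split, a single nn.Linear model, and SGD with lr=0.1; none of these choices come from the notes above:

```python
import torch
import torch.nn as nn
from torch.utils.data import TensorDataset, DataLoader, random_split

torch.manual_seed(0)
# Hypothetical toy data: y = 3x + small noise
x = torch.randn(100, 1)
y = 3 * x + 0.1 * torch.randn(100, 1)
train_set, val_set = random_split(TensorDataset(x, y), [80, 20])

train_loader = DataLoader(train_set, batch_size=16, shuffle=True)
val_loader = DataLoader(val_set, batch_size=16)

model = nn.Linear(1, 1)
criterion = nn.MSELoss()
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)

for epoch in range(20):
    model.train()                      # training mode
    for xb, yb in train_loader:
        optimizer.zero_grad()
        loss = criterion(model(xb), yb)
        loss.backward()
        optimizer.step()

    model.eval()                       # evaluation mode (matters for dropout/batchnorm)
    total, count = 0.0, 0
    with torch.no_grad():              # no gradients needed for validation
        for xb, yb in val_loader:
            total += criterion(model(xb), yb).item() * len(xb)
            count += len(xb)
    val_loss = total / count           # average loss over all validation samples

print(f'final validation loss: {val_loss:.4f}')
```

In practice you would typically also keep a copy of the parameters from the epoch with the lowest validation loss.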

10. Saving/loading a neural network
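A sketch of the commonly recommended approach: save the model's `state_dict` (its parameters) rather than the whole object, then rebuild the same architecture and load the parameters back. The file location here is a hypothetical temporary path:

```python
import os
import tempfile

import torch
import torch.nn as nn

model = nn.Linear(4, 2)

# Hypothetical file location in a temporary directory
path = os.path.join(tempfile.mkdtemp(), 'model.pt')
torch.save(model.state_dict(), path)          # save only the parameters

# Loading: recreate the same architecture, then copy the parameters in
restored = nn.Linear(4, 2)
restored.load_state_dict(torch.load(path))
restored.eval()                               # switch to evaluation mode for inference

x = torch.randn(3, 4)
print(torch.equal(model(x), restored(x)))     # identical outputs
```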

Zhejiang Public Security Filing No. 33010602011771
