Deploying a Kubernetes Cluster with kubeadm

I. Environment Architecture and Deployment Preparation

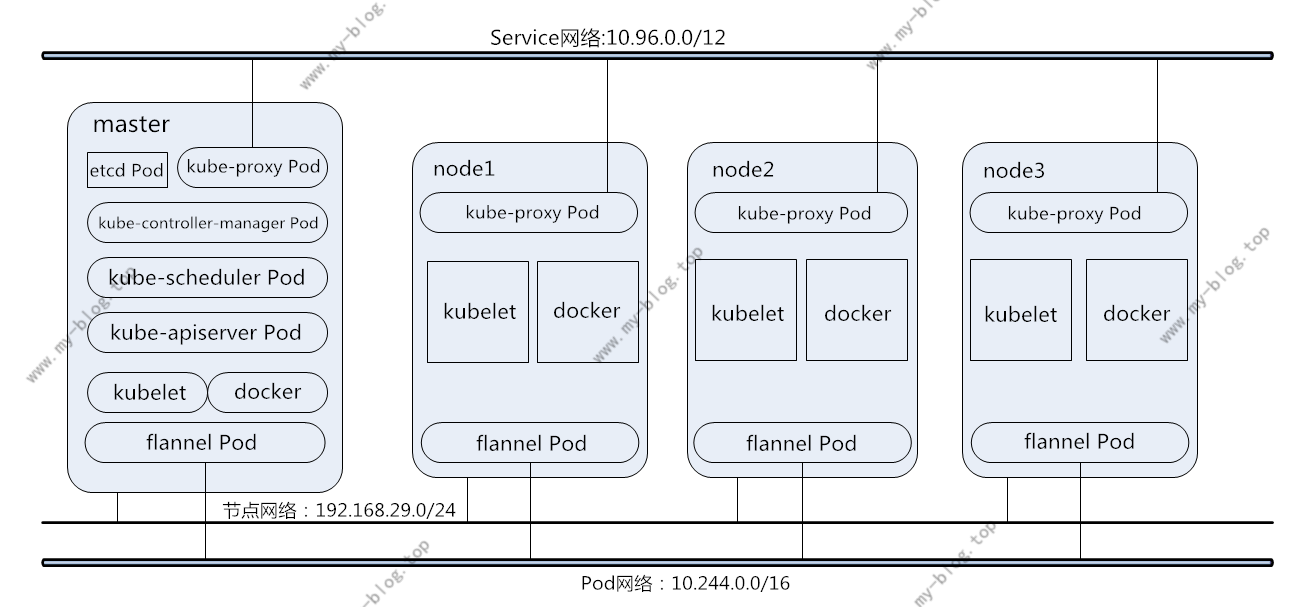

1.集群节点架构与各节点所需安装的服务如下图:

2.安装环境与软件版本:

Master:

Required software: docker-ce 17.03, kubelet 1.11.1, kubeadm 1.11.1, kubectl 1.11.1

Required images:

    mirrorgooglecontainers/kube-proxy-amd64:v1.11.1
    mirrorgooglecontainers/kube-scheduler-amd64:v1.11.1
    mirrorgooglecontainers/kube-controller-manager-amd64:v1.11.1
    mirrorgooglecontainers/kube-apiserver-amd64:v1.11.1
    coredns/coredns:1.1.3
    mirrorgooglecontainers/etcd-amd64:3.2.18
    mirrorgooglecontainers/pause:3.1
    registry.cn-hangzhou.aliyuncs.com/readygood/flannel:v0.10.0-amd64

Node:

Required software: docker-ce 17.03, kubelet 1.11.1, kubeadm 1.11.1

Required images:

    mirrorgooglecontainers/kube-proxy-amd64:v1.11.1
    mirrorgooglecontainers/pause:3.1
    registry.cn-hangzhou.aliyuncs.com/readygood/flannel:v0.10.0-amd64

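II. Deploying the Master

1. Disable the firewall and SELinux

kubeadm generates IPv4 iptables rules automatically during initialization, so it is best to disable the firewall before deploying.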
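The step above gives no commands, so here is a minimal sketch for CentOS 7, assuming firewalld is the active firewall. The helper name `selinux_set_mode` is hypothetical and introduced only for illustration; it takes the config file as an argument so it can be tried on a copy first.

```shell
#!/bin/sh
# Hypothetical helper: rewrite the SELINUX= line of an SELinux config file.
# Usage: selinux_set_mode <config-file> <mode>
selinux_set_mode() {
    # Only the SELINUX= line matches; SELINUXTYPE= is left untouched.
    sed -i "s/^SELINUX=.*/SELINUX=$2/" "$1"
}

# Run as root on the real host:
#   systemctl stop firewalld && systemctl disable firewalld
#   setenforce 0                                   # permissive until the next reboot
#   selinux_set_mode /etc/selinux/config disabled  # takes effect after a reboot
```

The function only edits the file; `setenforce 0` is what changes the running system.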

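2. Configure the Alibaba Cloud Kubernetes and Docker CE mirrors, then install

Kubernetes yum repository configuration (a file under /etc/yum.repos.d/, for example k8s.repo):

    [k8s]
    name=k8s
    baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
    gpgcheck=1
    gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
    enabled=1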

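Docker CE repository configuration (note the uppercase -O, which sets the output file; lowercase -o would write wget's log there instead):

    wget -O /etc/yum.repos.d/docker-ce.repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo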

Change Docker's default registry mirror:

    mkdir -p /etc/docker
    vim /etc/docker/daemon.json

    {
      "registry-mirrors": ["https://registry.docker-cn.com"]
    }
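For unattended setups, the same file can be written without an editor. This is a sketch only; `write_daemon_json` is a hypothetical helper name, and the mirror URL is the one used above.

```shell
#!/bin/sh
# Hypothetical helper: write a Docker daemon config with one registry mirror.
# Usage: write_daemon_json <output-file> <mirror-url>
write_daemon_json() {
    cat > "$1" <<EOF
{
  "registry-mirrors": ["$2"]
}
EOF
}

# Run as root on the real host:
#   mkdir -p /etc/docker
#   write_daemon_json /etc/docker/daemon.json https://registry.docker-cn.com
#   systemctl restart docker    # once Docker is installed, to pick up the change
```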

Install, taking care to pin the versions:

    yum install -y --setopt=obsoletes=0 docker-ce-17.03.2.ce-1.el7.centos.x86_64 docker-ce-selinux-17.03.2.ce-1.el7.centos.noarch
    yum install kubelet-1.11.1 kubeadm-1.11.1 kubectl-1.11.1 -y

3. Set the kernel parameter that makes iptables process bridged traffic. It defaults to 0.

    echo 1 > /proc/sys/net/bridge/bridge-nf-call-iptables
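Writing to /proc changes the value only until the next reboot. A minimal sketch of making it persistent through a sysctl drop-in file follows; the helper name `write_bridge_sysctl` and the file name k8s.conf are assumptions for illustration, and on some kernels the `br_netfilter` module may need to be loaded before the key exists.

```shell
#!/bin/sh
# Hypothetical helper: write a sysctl drop-in that keeps bridged traffic
# visible to iptables across reboots.
# Usage: write_bridge_sysctl <output-file>
write_bridge_sysctl() {
    cat > "$1" <<'EOF'
net.bridge.bridge-nf-call-iptables = 1
EOF
}

# Run as root on the real host:
#   modprobe br_netfilter                       # may be required on some kernels
#   write_bridge_sysctl /etc/sysctl.d/k8s.conf
#   sysctl --system                             # reload all sysctl configuration files
```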

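4. Disable or ignore swap. Avoid swap when deploying a Kubernetes cluster: by default the kubelet refuses to start while swap is enabled, and kubeadm's preflight checks report it. To ignore swap instead of disabling it, edit the kubelet's extra arguments:

    vim /etc/sysconfig/kubelet

    KUBELET_EXTRA_ARGS="--fail-swap-on=false"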
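The other option mentioned in this step, disabling swap outright, is not spelled out. One way to do it is sketched below; `comment_out_swap` is a hypothetical helper that takes the fstab path as an argument, so it can be tested on a copy before touching /etc/fstab.

```shell
#!/bin/sh
# Hypothetical helper: comment out every active swap entry in an fstab file.
# Usage: comment_out_swap <fstab-file>
comment_out_swap() {
    # Prefix '#' to non-comment lines that contain "swap" as a separate field.
    sed -i '/^[^#].*[[:space:]]swap[[:space:]]/ s/^/#/' "$1"
}

# Run as root on the real host:
#   swapoff -a                   # turn swap off for the running system
#   comment_out_swap /etc/fstab  # keep it off after a reboot
```

With swap disabled this way, neither `--fail-swap-on=false` nor `--ignore-preflight-errors=Swap` should be needed.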

5. Initialize Kubernetes

    kubeadm init --kubernetes-version=1.11.1 --pod-network-cidr=10.244.0.0/16 --service-cidr=10.96.0.0/12 --ignore-preflight-errors=Swap

If initialization fails, run kubeadm reset to undo it and then try again. If the error "[kubelet-check] It seems like the kubelet isn't running or healthy." appears, check the swap settings.

When initialization finishes, the message below appears. Follow its instructions to complete the setup, and be sure to save the kubeadm join command at the end: it carries the token and CA certificate hash that nodes must present to join the cluster.

    Your Kubernetes master has initialized successfully!

    To start using your cluster, you need to run the following as a regular user:

      mkdir -p $HOME/.kube
      sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
      sudo chown $(id -u):$(id -g) $HOME/.kube/config

    You should now deploy a pod network to the cluster.
    Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
      https://kubernetes.io/docs/concepts/cluster-administration/addons/

    You can now join any number of machines by running the following on each node
    as root:

      kubeadm join 192.168.29.111:6443 --token 4qswp9.rxgwhn0vqp4c9npl --discovery-token-ca-cert-hash sha256:2d9bc0bd6b1eb12dcb8695f17191b243ecf3ed169d4aafaacc5c5c1272a85f07
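One way to avoid losing the join command is to save the init output to a file and pull the command back out later. The sketch below assumes the output was captured to a log file; the file name kubeadm-init.log and the helper `extract_join_command` are hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: print the last "kubeadm join ..." line found in a
# saved copy of the kubeadm init output, with leading whitespace removed.
# Usage: extract_join_command <log-file>
extract_join_command() {
    grep -E '^[[:space:]]*kubeadm join ' "$1" | tail -n 1 | sed 's/^[[:space:]]*//'
}

# On the master (run as root):
#   kubeadm init ... 2>&1 | tee kubeadm-init.log
#   extract_join_command kubeadm-init.log
```

If the output was not saved, running `kubeadm token create --print-join-command` on the master prints a fresh join command.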

6. Downloading the images Kubernetes needs

The official images may not be downloadable from some networks, yet kubeadm only recognizes images that carry the official names and tags. The workaround is to pull equivalent images from Docker Hub or the Alibaba Cloud registry and retag them with the official names. The Docker Hub repositories are:

    mirrorgooglecontainers/kube-proxy-amd64
    mirrorgooglecontainers/kube-apiserver-amd64
    mirrorgooglecontainers/kube-scheduler-amd64
    mirrorgooglecontainers/kube-controller-manager-amd64
    coredns/coredns
    mirrorgooglecontainers/etcd-amd64
    mirrorgooglecontainers/pause

After retagging, the local image list (repository, tag, image ID, age, size) looks like this:

    k8s.gcr.io/kube-proxy-amd64               v1.11.1        d5c25579d0ff  8 weeks ago   97.8MB
    k8s.gcr.io/kube-scheduler-amd64           v1.11.1        272b3a60cd68  8 weeks ago   56.8MB
    k8s.gcr.io/kube-controller-manager-amd64  v1.11.1        52096ee87d0e  8 weeks ago   155MB
    k8s.gcr.io/kube-apiserver-amd64           v1.11.1        816332bd9d11  8 weeks ago   187MB
    k8s.gcr.io/coredns                        1.1.3          b3b94275d97c  3 months ago  45.6MB
    k8s.gcr.io/etcd-amd64                     3.2.18         b8df3b177be2  5 months ago  219MB
    quay.io/coreos/flannel                    v0.10.0-amd64  f0fad859c909  7 months ago  44.6MB
    k8s.gcr.io/pause                          3.1            da86e6ba6ca1  8 months ago  742kB
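The pull-and-retag procedure is described but not shown, so here is a rough sketch of it. The function names are hypothetical; the functions only print the `docker pull` and `docker tag` commands, which lets the output be reviewed before it is piped to `sh`.

```shell
#!/bin/sh
# Sketch: print the docker commands that map mirror images to the names
# kubeadm expects. Nothing is executed until the output is piped to sh.
MIRROR=mirrorgooglecontainers
TARGET=k8s.gcr.io

# Hypothetical helper. Each argument is "name:tag" as published under $MIRROR.
retag_commands() {
    for image in "$@"; do
        echo "docker pull ${MIRROR}/${image}"
        echo "docker tag ${MIRROR}/${image} ${TARGET}/${image}"
    done
}

# Hypothetical helper. coredns lives in its own Docker Hub namespace.
# Usage: coredns_commands <tag>
coredns_commands() {
    echo "docker pull coredns/coredns:$1"
    echo "docker tag coredns/coredns:$1 ${TARGET}/coredns:$1"
}

# On the master:
#   { retag_commands kube-proxy-amd64:v1.11.1 kube-apiserver-amd64:v1.11.1 \
#         kube-scheduler-amd64:v1.11.1 kube-controller-manager-amd64:v1.11.1 \
#         etcd-amd64:3.2.18 pause:3.1; coredns_commands 1.1.3; } | sh
```

The flannel image follows the same pattern with different names: pull registry.cn-hangzhou.aliyuncs.com/readygood/flannel:v0.10.0-amd64 and tag it as quay.io/coreos/flannel:v0.10.0-amd64.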

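7. Installing flannel

Once the quay.io/coreos/flannel image is present locally (skip this check if your network can reach Google's services directly), run the following command:

    kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml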

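III. Deploying the Nodes

1. Install the components a node needs

As on the master, install docker, kubeadm, and kubelet and enable them at boot. The steps are the same as for the master and are not repeated here. Download the images the node needs, shown below as pulled, and retag them with the official names (the k8s.gcr.io/ prefix for kube-proxy and pause, quay.io/coreos/flannel for flannel):

    mirrorgooglecontainers/kube-proxy-amd64              v1.11.1        d5c25579d0ff  6 months ago   97.8 MB
    registry.cn-hangzhou.aliyuncs.com/readygood/flannel  v0.10.0-amd64  50e7aa4dbbf8  9 months ago   44.6 MB
    registry.cn-hangzhou.aliyuncs.com/readygood/pause    3.1            da86e6ba6ca1  13 months ago  742 kB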

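After the Docker images are downloaded, initialize the flannel service. The command needs kubectl and a kubeconfig, neither of which is set up on a node in this guide, so run it on the master if it was not already applied in section II, step 7:

    kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml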

2. Join the node to the cluster

    kubeadm join 192.168.29.111:6443 --token 4qswp9.rxgwhn0vqp4c9npl --discovery-token-ca-cert-hash sha256:2d9bc0bd6b1eb12dcb8695f17191b243ecf3ed169d4aafaacc5c5c1272a85f07 --ignore-preflight-errors=Swap

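If swap is left enabled, the node needs the same kubelet swap setting as the master, along with the setting that makes iptables process bridged traffic. Apply both before running kubeadm join:

    vim /etc/sysconfig/kubelet

    KUBELET_EXTRA_ARGS="--fail-swap-on=false"

    echo 1 > /proc/sys/net/bridge/bridge-nf-call-iptables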

The following message indicates that the node has joined successfully:

    This node has joined the cluster:
    * Certificate signing request was sent to master and a response
      was received.
    * The Kubelet was informed of the new secure connection details.

    Run 'kubectl get nodes' on the master to see this node join the cluster.

Check the cluster status from the master:

    # kubectl get pods -n kube-system -o wide
    NAME                            READY  STATUS   RESTARTS  AGE  IP              NODE
    coredns-78fcdf6894-kpt2k        1/1    Running  1         18h  10.244.0.5      master
    coredns-78fcdf6894-nzdkz        1/1    Running  1         18h  10.244.0.4      master
    etcd-master                     1/1    Running  3         16h  192.168.29.111  master
    kube-apiserver-master           1/1    Running  3         16h  192.168.29.111  master
    kube-controller-manager-master  1/1    Running  3         16h  192.168.29.111  master
    kube-flannel-ds-amd64-5gnd8     1/1    Running  1         16h  192.168.29.111  master
    kube-flannel-ds-amd64-7rtb8     1/1    Running  0         2h   192.168.29.112  node1
    kube-flannel-ds-amd64-qqjdv     1/1    Running  0         2h   192.168.29.113  node2
    kube-proxy-kfsfj                1/1    Running  0         2h   192.168.29.113  node2
    kube-proxy-lnk67                1/1    Running  0         2h   192.168.29.112  node1
    kube-proxy-v8d2q                1/1    Running  2         18h  192.168.29.111  master
    kube-scheduler-master           1/1    Running  2         16h  192.168.29.111  master

    # kubectl get nodes -o wide
    NAME    STATUS  ROLES   AGE  VERSION  INTERNAL-IP     EXTERNAL-IP  OS-IMAGE               KERNEL-VERSION         CONTAINER-RUNTIME
    master  Ready   master  18h  v1.11.1  192.168.29.111  <none>       CentOS Linux 7 (Core)  3.10.0-957.el7.x86_64  docker://17.3.2
    node1   Ready   <none>  2h   v1.11.1  192.168.29.112  <none>       CentOS Linux 7 (Core)  3.10.0-957.el7.x86_64  docker://17.3.2
    node2   Ready   <none>  2h   v1.11.1  192.168.29.113  <none>       CentOS Linux 7 (Core)  3.10.0-957.el7.x86_64  docker://17.3.2

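One more point needs particular attention: every node must have a unique hostname. Change any duplicates before joining; otherwise kubeadm treats the machines as the same node and the later one cannot join.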
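A small sketch of checking a planned set of hostnames for clashes before joining. The helper name `duplicate_hostnames` is hypothetical, and the hostnames in the usage lines are the ones from the listing above.

```shell
#!/bin/sh
# Hypothetical helper: print each hostname that appears more than once.
# Usage: duplicate_hostnames name1 name2 ...
duplicate_hostnames() {
    printf '%s\n' "$@" | sort | uniq -d
}

# Example: prints nothing when all names are distinct.
#   duplicate_hostnames master node1 node2
#
# To rename a machine on CentOS 7 (run as root on that machine):
#   hostnamectl set-hostname node1
```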

Any additional nodes, such as node2, are deployed exactly like node1 by following the same steps.
