Highly Available OpenStack (Queens) Cluster - 11. Neutron Compute Node

References:

- Install-guide: https://docs.openstack.org/install-guide/

- OpenStack High Availability Guide: https://docs.openstack.org/ha-guide/index.html

- Understanding Pacemaker: http://www.cnblogs.com/sammyliu/p/5025362.html

XV. Neutron Compute Node

1. Install neutron-linuxbridge

```bash
# Install the neutron-linuxbridge service on all compute nodes; compute01 is shown as the example
[root@compute01 ~]# yum install openstack-neutron-linuxbridge ebtables ipset -y
```
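The step above has to be repeated on every compute node. Below is a minimal sketch for doing that from a single admin host. It assumes passwordless root SSH to the nodes. The node names compute01..compute03, the `COMPUTE_NODES` variable, and the `run_on_nodes` helper are hypothetical and are not part of the original setup.

```bash
#!/usr/bin/env bash
# Sketch: run one command on every compute node over SSH.
# Assumptions (hypothetical, not from the article): passwordless root SSH
# from the admin host and the node names compute01..compute03.
COMPUTE_NODES="${COMPUTE_NODES:-compute01 compute02 compute03}"

# run_on_nodes CMD
#   Runs CMD on each node in $COMPUTE_NODES and stops at the first failure.
#   With DRY_RUN=1 the ssh invocations are only printed, not executed.
run_on_nodes() {
    local cmd="$1" node
    # $COMPUTE_NODES is intentionally unquoted so it splits into node names
    for node in $COMPUTE_NODES; do
        if [ "${DRY_RUN:-0}" = "1" ]; then
            printf 'ssh root@%s %s\n' "$node" "$cmd"
        elif ! ssh "root@${node}" "$cmd"; then
            echo "command failed on ${node}" >&2
            return 1
        fi
    done
}
```

For example, `run_on_nodes "yum install openstack-neutron-linuxbridge ebtables ipset -y"`. Setting `DRY_RUN=1` first prints the commands without running them. The helper stops at the first failing node, so a broken node does not get silently skipped.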

2. Configure neutron.conf

```bash
# Perform on all compute nodes; compute01 is shown as the example.
# Note the "bind_host" parameter: change it to match each node.
# Note the ownership of neutron.conf: root:neutron
[root@compute01 ~]# cp /etc/neutron/neutron.conf /etc/neutron/neutron.conf.bak
[root@compute01 ~]# egrep -v "^$|^#" /etc/neutron/neutron.conf
[DEFAULT]
state_path = /var/lib/neutron
bind_host = 172.30.200.41
auth_strategy = keystone
# When HAProxy fronts RabbitMQ, services may hit connection timeouts and reconnect; check the logs of each service and of RabbitMQ.
# transport_url = rabbit://openstack:rabbitmq_pass@controller:5673
# RabbitMQ has its own clustering mechanism, and the official documentation recommends connecting to the RabbitMQ cluster directly.
# With this approach, services sometimes fail at startup for unknown reasons; if you do not see this,
# connecting directly to the RabbitMQ cluster rather than through the HAProxy front end is strongly recommended.
transport_url=rabbit://openstack:rabbitmq_pass@controller01:5672,controller02:5672,controller03:5672
[agent]
[cors]
[database]
[keystone_authtoken]
www_authenticate_uri = http://controller:5000
auth_url = http://controller:35357
memcached_servers = controller01:11211,controller02:11211,controller03:11211
auth_type = password
project_domain_name = default
user_domain_name = default
project_name = service
username = neutron
password = neutron_pass
[matchmaker_redis]
[nova]
[oslo_concurrency]
lock_path = $state_path/lock
[oslo_messaging_amqp]
[oslo_messaging_kafka]
[oslo_messaging_notifications]
[oslo_messaging_rabbit]
[oslo_messaging_zmq]
[oslo_middleware]
[oslo_policy]
[quotas]
[ssl]
```
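The neutron.conf shown above is the same on every compute node except for `bind_host`, so a single copy can be reused by patching that one line on each node. This is a sketch that assumes GNU sed and the iproute2 `ip` command. `node_ipv4`, `set_bind_host`, and `fix_owner` are hypothetical helpers, and the interface name `eth0` in the usage line is an assumption.

```bash
#!/usr/bin/env bash
# Sketch: adapt the bind_host line of a copied neutron.conf to the local node.

# node_ipv4 IFACE -> prints the first IPv4 address configured on IFACE
node_ipv4() {
    ip -4 -o addr show dev "$1" | awk '{split($4, a, "/"); print a[1]; exit}'
}

# set_bind_host FILE IP -> rewrites the bind_host line of FILE in place
set_bind_host() {
    local file="$1" ip="$2"
    if ! grep -q '^bind_host[[:space:]]*=' "$file"; then
        echo "no bind_host line in ${file}" >&2
        return 1
    fi
    sed -i "s/^bind_host[[:space:]]*=.*/bind_host = ${ip}/" "$file"
}

# fix_owner FILE -> restores the root:neutron ownership the article requires
fix_owner() {
    chown root:neutron "$1"
}
```

On a node whose management address is (hypothetically) on eth0, run: `set_bind_host /etc/neutron/neutron.conf "$(node_ipv4 eth0)" && fix_owner /etc/neutron/neutron.conf`.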

3. Configure linuxbridge_agent.ini

1) Configure linuxbridge_agent.ini

```bash
# Perform on all compute nodes; compute01 is shown as the example.
# Ownership of linuxbridge_agent.ini: root:neutron
[root@compute01 ~]# cp /etc/neutron/plugins/ml2/linuxbridge_agent.ini /etc/neutron/plugins/ml2/linuxbridge_agent.ini.bak
[root@compute01 ~]# egrep -v "^$|^#" /etc/neutron/plugins/ml2/linuxbridge_agent.ini
[DEFAULT]
[agent]
[linux_bridge]
# Maps a physical network name to a physical NIC; here "vlan" (the vlan tenant network) maps to eth3 as planned.
# Physical NIC names are local to each host: set them according to the NIC names the host actually uses.
# Alternatively, the "bridge_mappings" parameter maps a physical network to an existing bridge.
physical_interface_mappings = vlan:eth3
[network_log]
[securitygroup]
firewall_driver = neutron.agent.linux.iptables_firewall.IptablesFirewallDriver
enable_security_group = true
[vxlan]
enable_vxlan = true
# VTEP endpoint of the tunnel (vxlan) tenant network: the address on eth2 as planned; change it per node.
local_ip = 10.0.0.41
l2_population = true
```
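Interface names in `physical_interface_mappings` only have meaning on the local host, so a quick check before starting the agent can catch a wrong NIC name. This sketch assumes network interfaces show up under `/sys/class/net`. `check_mappings` and the `SYS_NET_DIR` override are hypothetical names, and the override exists only to make the check testable.

```bash
#!/usr/bin/env bash
# Sketch: confirm that every NIC named in physical_interface_mappings exists.
# SYS_NET_DIR is a hypothetical override; by default the real sysfs is used.
SYS_NET_DIR="${SYS_NET_DIR:-/sys/class/net}"

# check_mappings FILE -> returns 1 if the key is unset or any NIC is missing
check_mappings() {
    local file="$1" mappings pair iface rc=0
    local -a pairs
    # Take the value after '=' and strip blanks: "vlan:eth3[,net2:eth4]"
    mappings=$(awk -F'=' '/^[ \t]*physical_interface_mappings[ \t]*=/ {
                   gsub(/[ \t]/, "", $2); print $2; exit }' "$file")
    if [ -z "$mappings" ]; then
        echo "physical_interface_mappings not set in ${file}" >&2
        return 1
    fi
    IFS=',' read -r -a pairs <<< "$mappings"
    for pair in "${pairs[@]}"; do
        iface="${pair#*:}"              # "vlan:eth3" -> "eth3"
        if [ ! -e "${SYS_NET_DIR}/${iface}" ]; then
            echo "interface ${iface} (from ${pair}) not found" >&2
            rc=1
        fi
    done
    return $rc
}
```

On a node where eth3 exists, `check_mappings /etc/neutron/plugins/ml2/linuxbridge_agent.ini` should return 0. The same idea applies to `local_ip`, which must be an address that is actually configured on that node.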

2) Configure kernel parameters

```bash
# bridge: pass bridged traffic through iptables/ip6tables, which the iptables security-group driver relies on.
# If "sysctl -p" fails with "No such file or directory", the br_netfilter kernel module must be loaded:
# "modinfo br_netfilter" shows the module information;
# "modprobe br_netfilter" loads the module.
[root@compute01 ~]# echo "# bridge" >> /etc/sysctl.conf
[root@compute01 ~]# echo "net.bridge.bridge-nf-call-iptables = 1" >> /etc/sysctl.conf
[root@compute01 ~]# echo "net.bridge.bridge-nf-call-ip6tables = 1" >> /etc/sysctl.conf
[root@compute01 ~]# sysctl -p
```
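If the step above is run twice, appending with `echo ... >>` duplicates the lines. Also, a module loaded with `modprobe` alone is not guaranteed to be loaded again after a reboot. This sketch avoids both problems. It assumes a systemd host (CentOS 7 here) that reads `/etc/modules-load.d/*.conf` at boot. `ensure_line`, `setup_bridge_nf`, and the `SYSCTL_FILE`/`MODULES_FILE` overrides are hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: idempotent, reboot-safe bridge-netfilter settings.

# ensure_line FILE LINE -> appends LINE to FILE only if it is not already there
ensure_line() {
    local file="$1" line="$2"
    touch "$file"
    grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# setup_bridge_nf -> run as root on each compute node
setup_bridge_nf() {
    local sysctl_file="${SYSCTL_FILE:-/etc/sysctl.conf}"
    local modules_file="${MODULES_FILE:-/etc/modules-load.d/br_netfilter.conf}"
    modprobe br_netfilter || return 1
    # systemd-modules-load reads this file at boot, so the module (and with it
    # the net.bridge.* keys) is normally present before sysctl is applied
    ensure_line "$modules_file" "br_netfilter"
    ensure_line "$sysctl_file" "# bridge"
    ensure_line "$sysctl_file" "net.bridge.bridge-nf-call-iptables = 1"
    ensure_line "$sysctl_file" "net.bridge.bridge-nf-call-ip6tables = 1"
    sysctl -p "$sysctl_file"
}
```

Run `setup_bridge_nf` as root on each compute node. Running it again leaves both files unchanged.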

4. Configure nova.conf

```bash
# Perform on all compute nodes; compute01 is shown as the example.
# Only the "[neutron]" section of nova.conf is involved.
[root@compute01 ~]# vim /etc/nova/nova.conf
[neutron]
url=http://controller:9696
auth_type=password
auth_url=http://controller:35357
project_name=service
project_domain_name=default
username=neutron
user_domain_name=default
password=neutron_pass
region_name=RegionTest
```
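A misspelled key in the `[neutron]` section is easy to overlook. This sketch lists the keys that are actually present in that section and reports any that are missing. The required-key list is copied from the article's example, and `section_keys` and `check_required` are hypothetical helpers. The check covers only whether each key is present and does not validate values.

```bash
#!/usr/bin/env bash
# Sketch: verify that required keys exist in one section of an INI file.

# section_keys FILE SECTION -> prints the option names defined in [SECTION]
section_keys() {
    awk -v want="[$2]" '
        /^[ \t]*\[/ { gsub(/[ \t]/, ""); in_sec = ($0 == want); next }
        in_sec && /^[ \t]*[^#; \t][^=]*=/ {
            key = $0
            sub(/[ \t]*=.*/, "", key)   # drop "= value"
            sub(/^[ \t]+/, "", key)     # drop leading blanks
            print key
        }' "$1"
}

# check_required FILE SECTION KEY... -> returns 1 and lists missing keys
check_required() {
    local file="$1" section="$2" key present rc=0
    shift 2
    present=$(section_keys "$file" "$section")
    for key in "$@"; do
        if ! grep -qxF -- "$key" <<< "$present"; then
            echo "[${section}] is missing ${key}" >&2
            rc=1
        fi
    done
    return $rc
}
```

Usage with the article's keys: `check_required /etc/nova/nova.conf neutron url auth_type auth_url project_name project_domain_name username user_domain_name password region_name`. Keys with the same name in other sections, such as `password` under `[keystone_authtoken]`, do not count.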

5. Start the services

```bash
# nova.conf has changed, so first restart the nova service on all compute nodes
[root@compute01 ~]# systemctl restart openstack-nova-compute.service
# Enable at boot
[root@compute01 ~]# systemctl enable neutron-linuxbridge-agent.service
# Start
[root@compute01 ~]# systemctl restart neutron-linuxbridge-agent.service
```
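`systemctl restart` may return before a service is fully running, so checking its state immediately afterwards can be misleading. Below is a small polling sketch. `retry` is a hypothetical helper, while `systemctl is-active --quiet` is the standard systemd status check.

```bash
#!/usr/bin/env bash
# Sketch: poll a command until it succeeds or the attempts run out.

# retry ATTEMPTS DELAY CMD... -> returns 0 as soon as CMD succeeds, else 1
retry() {
    local attempts="$1" delay="$2" i
    shift 2
    for ((i = 1; i <= attempts; i++)); do
        "$@" && return 0
        # sleep only between attempts, not after the last one
        [ "$i" -lt "$attempts" ] && sleep "$delay"
    done
    return 1
}
```

For example, `retry 10 3 systemctl is-active --quiet neutron-linuxbridge-agent.service` tries up to 10 times, 3 seconds apart. A unit that is active locally has not necessarily registered with neutron-server yet. The verification step below checks that.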

6. Verify

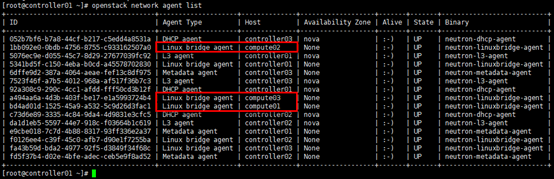

```bash
# Run on any controller node (or any node with the client installed)
[root@controller01 ~]# . admin-openrc
# List the neutron agents;
# or: openstack network agent list --agent-type linux-bridge
[root@controller01 ~]# openstack network agent list
```
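When there are several compute nodes, reading the agent table by eye becomes error-prone. This sketch turns the agent list into a pass/fail check. It assumes that the client's `-f value -c Host -c Alive` output prints one `HOST ALIVE` pair per line. Depending on the client version, the Alive column may appear as `:-)`/`XXX` or as `True`/`False`, so the check accepts both forms. `check_agents` and the host names in the usage line are hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: check that every expected host reports a live agent.
# Reads "HOST ALIVE" pairs on stdin; ":-)" or True counts as alive.

# check_agents HOST... -> returns 1 if any listed host is missing or not alive
check_agents() {
    local host alive expected rc=0
    local -A state=()
    while read -r host alive; do
        [ -n "$host" ] || continue
        case "$alive" in
            ':-)' | True | true) state[$host]=up ;;
            *) state[$host]=down ;;
        esac
    done
    for expected in "$@"; do
        case "${state[$expected]:-missing}" in
            up) ;;
            down) echo "agent on ${expected} is not alive" >&2; rc=1 ;;
            *) echo "no agent reported for ${expected}" >&2; rc=1 ;;
        esac
    done
    return $rc
}
```

Usage: `openstack network agent list --agent-type linux-bridge -f value -c Host -c Alive | check_agents compute01 compute02 compute03`. The pipeline's exit status is that of `check_agents`, so the check can run unattended after each deployment.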

浙公网安备 33010602011771号
