4. Querying with cookie information in Python

#!/usr/bin/env python
# -*- coding:utf-8 -*-
# Python 2 script: urllib2 and the print statement are not available in Python 3
import urllib2
import csv
import json

# Fetch the response body of an HTTP request
def get_http_content(query_url=''):
    # Build the server list: 1.1.1.43 through 1.1.1.58
    servers = []
    for i in range(43, 59):
        servers.append('1.1.1.%s' % i)

    # User login information
    username = 'xxx'
    loginToken = '2de89edc-387e-4349-b821-00c0ef97343e1504494303676'
    # In the request's Cookie listing, Name is the key and Value is the value
    cookie = 'key=value'

    # Query parameters (note: the query_url argument is overwritten here)
    query_url = 'http://xxx.xx.x/asset/query-assets?' \
        '{"username":"%s","loginToken":"%s","asset_id":"%s","ip":"%s","type":"eq"}' % (
            username, loginToken, ','.join(servers), ','.join(servers))

    # Send the query with the Cookie header attached
    opener = urllib2.build_opener()
    opener.addheaders.append(('Cookie', cookie))
    f = opener.open(query_url)
    data = f.read()
    # print data.decode('utf8').encode('gbk')
    return data

# Write the results to a CSV file
def write_to_csv(final_result=None):
    # Open the file in 'wb' mode; otherwise a blank line is added after every row written
    with open('result.csv', 'wb') as csvfile:
        # spamwriter = csv.writer(csvfile, dialect='excel')
        spamwriter = csv.writer(csvfile)
        spamwriter.writerow(['propno', 'dcloc', 'dcname', 'rack', 'cabinet', 'internalip'])
        for tmp in final_result or []:
            spamwriter.writerow(tmp)
    print 'success!'

if __name__ == '__main__':
    # Process the returned data
    in_json = json.loads(get_http_content(''))
    # print in_json.keys()
    results = in_json['info']

    # Fields to collect from each record
    items_server = ['assets_sn', 'location_place', 'location_room_name', 'location_col_name',
                    'location_tank', 'location_in_ip']

    # Extract the field values
    final_result = []
    for record in results:
        tmp = []
        for item_server in items_server:
            tmp.append(record[item_server].encode('gbk'))
        if tmp:
            final_result.append(tmp)

    # Write to the CSV file
    write_to_csv(final_result)
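The script above targets Python 2. As a rough sketch of how the same steps might look in Python 3, the block below uses `urllib.request` in place of `urllib2` and opens the CSV file with `newline=''` in place of `'wb'` mode. The helper names (`build_query_url`, `build_cookie_request`, `fetch`, `records_to_rows`, `write_rows_to_csv`) are hypothetical, and percent-encoding the JSON query string is an assumption about what the server accepts; the original sends the JSON unencoded.

```python
import csv
import json
import urllib.parse
import urllib.request

HEADER = ['propno', 'dcloc', 'dcname', 'rack', 'cabinet', 'internalip']
FIELDS = ['assets_sn', 'location_place', 'location_room_name',
          'location_col_name', 'location_tank', 'location_in_ip']


def build_query_url(base_url, params):
    """Append the JSON-encoded params to base_url.

    Percent-encoding the JSON is an assumption; the original script
    places the raw JSON after the '?'.
    """
    return base_url + '?' + urllib.parse.quote(json.dumps(params))


def build_cookie_request(url, cookie):
    """Return a Request object that carries the given Cookie header."""
    request = urllib.request.Request(url)
    request.add_header('Cookie', cookie)
    return request


def fetch(url, cookie, timeout=10):
    """Send the request and return the response body decoded as UTF-8."""
    request = build_cookie_request(url, cookie)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read().decode('utf-8')


def records_to_rows(records, fields=FIELDS):
    """Pick the listed fields out of each record, in order."""
    return [[record[field] for field in fields] for record in records]


def write_rows_to_csv(path, rows, encoding='gbk'):
    """Write the header and rows; newline='' avoids extra blank lines."""
    with open(path, 'w', newline='', encoding=encoding) as csvfile:
        writer = csv.writer(csvfile)
        writer.writerow(HEADER)
        writer.writerows(rows)
```

Under these assumptions, a run would call `fetch(build_query_url(...), 'key=value')`, pass `json.loads(...)['info']` to `records_to_rows`, and hand the result to `write_rows_to_csv('result.csv', rows)`. Passing `encoding='gbk'` to `open` plays the role of the per-field `.encode('gbk')` calls in the Python 2 version.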

Also attached:

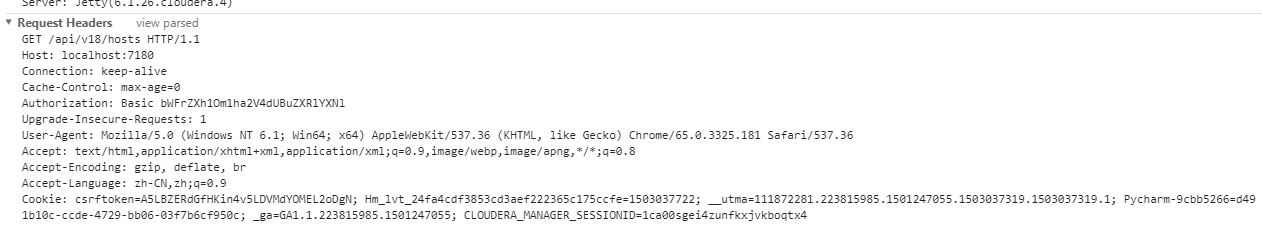

def get_ips():
    url_get = 'http://localhost:7180/api/v18/hosts'
    opener = urllib2.build_opener()
    opener.addheaders.append(('Cookie', 'csrftoken=A5LBZERdGfHKin4v5LDVMdYOMEL2oDgN; Hm_lvt_24fa4cdf3853cd3aef222365c175ccfe=1503037722; __utma=111872281.223815985.1501247055.1503037319.1503037319.1; Pycharm-9cbb5266=d491b10c-ccde-4729-bb06-03f7b6cf950c; _ga=GA1.1.223815985.1501247055; CLOUDERA_MANAGER_SESSIONID=1ca00sgei4zunfkxjvkboqtx4'))
    f = opener.open(url_get)
    data = f.read()
    # print data
    # Write one IP address per line
    with open('data.txt', 'w') as f_ip:
        for item in json.loads(data)['items']:
            # print item['ipAddress']
            f_ip.write('%s\n' % item['ipAddress'])
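The parsing step in `get_ips` can be tried without a live server. The following is a minimal Python 3 sketch; `extract_ips` and `write_lines` are hypothetical helper names, and the sample payload is made up to mirror the `items` / `ipAddress` shape that the function above reads.

```python
import json


def extract_ips(payload):
    """Return the ipAddress of every entry under 'items' in a JSON string."""
    return [item['ipAddress'] for item in json.loads(payload)['items']]


def write_lines(path, lines):
    """Write each value on its own line."""
    with open(path, 'w') as out:
        for line in lines:
            out.write('%s\n' % line)


# Hypothetical sample payload, not real server output
sample = '{"items": [{"ipAddress": "1.1.1.43"}, {"ipAddress": "1.1.1.44"}]}'
print(extract_ips(sample))
```

Keeping the parsing separate from the HTTP call makes it possible to check the extraction against a saved response before pointing the script at a real host.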

http://www.cnblogs.com/makexu/

Zhejiang Public Network Security Filing No. 33010602011771
