Common Optimization Methods in Deep Learning

The accompanying Python code is given below:

#!/usr/bin/env python3
# encoding: utf-8
"""
@version: 0.1
@author: lyrichu
@license: Apache Licence
@contact: 919987476@qq.com
@site: http://www.github.com/Lyrichu
@file: lyrichu_20180424_01.py
@time: 2018/04/24 16:02
@description:
Q1: Implement the GD, SGD, Momentum, AdaGrad, RMSProp and Adam optimization algorithms in Python.
The model fitted here is a linear function of two variables: y = w1*x1 + w2*x2 + b.
The data points are [(1,1),2],[(2,3),5],[(3,4),7],[(5,6),11]; the true parameters are w1 = w2 = 1, b = 0.
"""
import random
from math import sqrt

# Each entry is [(x1, x2), y]
data = [[(1,1),2],[(2,3),5],[(3,4),7],[(5,6),11]]

def GD(data,lr = 0.001,epoches = 10):
    """Batch gradient descent on the summed squared error over all data points."""
    random.seed(66) # fixed seed so every run starts from the same random parameters
    w1,w2,b = [random.random() for _ in range(3)]
    print("Using method GD,lr = %f,epoches = %d" % (lr,epoches))
    for i in range(epoches):
        # Gradients of sum((w1*x1 + w2*x2 + b - y)**2) with respect to w1, w2 and b
        w1_grads = sum([2*(w1*x1+w2*x2+b-y)*x1 for (x1,x2),y in data])
        w2_grads = sum([2*(w1*x1+w2*x2+b-y)*x2 for (x1,x2),y in data])
        b_grads = sum([2*(w1*x1+w2*x2+b-y) for (x1,x2),y in data])
        w1 -= lr*w1_grads
        w2 -= lr*w2_grads
        b -= lr*b_grads
        loss = sum([(w1*x1+w2*x2+b-y)**2 for (x1,x2),y in data])
        print("epoch %d,(w1,w2,b) = (%f,%f,%f),loss = %f" %(i+1,w1,w2,b,loss))

def SGD(data,lr = 0.001,epoches = 10):
    """Stochastic gradient descent: each step uses the gradient at one random data point."""
    random.seed(66) # fixed seed so every run starts from the same random parameters
    w1, w2, b = [random.random() for _ in range(3)]
    print("Using method SGD,lr = %f,epoches = %d" % (lr, epoches))
    for i in range(epoches):
        (x1,x2),y = random.choice(data) # pick one data point at random
        w1_grads = 2 * (w1 * x1 + w2 * x2 + b - y) * x1
        w2_grads = 2 * (w1 * x1 + w2 * x2 + b - y) * x2
        b_grads = 2 * (w1 * x1 + w2 * x2 + b - y)
        w1 -= lr * w1_grads
        w2 -= lr * w2_grads
        b -= lr * b_grads
        # The loss is still reported over the full data set
        loss = sum([(w1 * x1 + w2 * x2 + b - y) ** 2 for (x1, x2), y in data])
        print("epoch %d,(w1,w2,b) = (%f,%f,%f),loss = %f" % (i + 1, w1, w2, b, loss))

def Momentum(data,beta = 0.9,lr = 0.001,epoches = 10):
    """SGD with momentum: the update is an exponentially decaying sum of past gradient steps."""
    random.seed(66) # fixed seed so every run starts from the same random parameters
    w1, w2, b = [random.random() for _ in range(3)]
    m = [0,0,0] # initial momentum for w1, w2 and b
    print("Using method Momentum,lr = %f,beta = %f,epoches = %d" % (lr,beta,epoches))
    for i in range(epoches):
        (x1, x2), y = random.choice(data) # pick one data point at random
        w1_grads = 2 * (w1 * x1 + w2 * x2 + b - y) * x1
        w2_grads = 2 * (w1 * x1 + w2 * x2 + b - y) * x2
        b_grads = 2 * (w1 * x1 + w2 * x2 + b - y)
        # Decay the previous momentum by beta and add the current gradient step
        m[0] = beta*m[0] + lr*w1_grads
        m[1] = beta*m[1] + lr*w2_grads
        m[2] = beta*m[2] + lr*b_grads
        w1 -= m[0]
        w2 -= m[1]
        b -= m[2]
        loss = sum([(w1 * x1 + w2 * x2 + b - y) ** 2 for (x1, x2), y in data])
        print("epoch %d,(w1,w2,b) = (%f,%f,%f),loss = %f" % (i + 1, w1, w2, b, loss))

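def AdaGrad(data,eta = 1e-8,lr = 0.1,epoches = 10):
    # eta is a smoothing term (a very small positive number)
    # Note: a single accumulator s is shared by all three parameters here;
    # standard AdaGrad keeps a separate accumulator for each parameter.
    s = 0
    random.seed(66) # fixed seed so every run starts from the same random parameters
    w1, w2, b = [random.random() for _ in range(3)]
    # %g is used for eta because %f would print 1e-8 as 0.000000
    print("Using method AdaGrad,lr = %f,eta = %g,epoches = %d" % (lr,eta,epoches))
    for i in range(epoches):
        (x1, x2), y = random.choice(data) # pick one data point at random
        w1_grads = 2 * (w1 * x1 + w2 * x2 + b - y) * x1
        w2_grads = 2 * (w1 * x1 + w2 * x2 + b - y) * x2
        b_grads = 2 * (w1 * x1 + w2 * x2 + b - y)
        # Accumulate the squared gradients over all steps so far
        s += sum([g**2 for g in [w1_grads,w2_grads,b_grads]])
        s1 = sqrt(s+eta)
        w1 -= lr*w1_grads/s1
        w2 -= lr*w2_grads/s1
        b -= lr*b_grads/s1
        loss = sum([(w1 * x1 + w2 * x2 + b - y) ** 2 for (x1, x2), y in data])
        print("epoch %d,(w1,w2,b) = (%f,%f,%f),loss = %f" % (i + 1, w1, w2, b, loss))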

def RMSProp(data,eta = 1e-8,beta = 0.9,lr =0.1,epoches = 10):
    # eta is a smoothing term (a very small positive number)
    # Note: as in AdaGrad above, one accumulator s is shared by all three parameters.
    s = 0
    random.seed(66) # fixed seed so every run starts from the same random parameters
    w1, w2, b = [random.random() for _ in range(3)]
    # %g is used for eta because %f would print 1e-8 as 0.000000
    print("Using method RMSProp,lr = %f,beta = %f,eta = %g,epoches = %d" % (lr,beta,eta,epoches))
    for i in range(epoches):
        (x1, x2), y = random.choice(data) # pick one data point at random
        w1_grads = 2 * (w1 * x1 + w2 * x2 + b - y) * x1
        w2_grads = 2 * (w1 * x1 + w2 * x2 + b - y) * x2
        b_grads = 2 * (w1 * x1 + w2 * x2 + b - y)
        # Exponential moving average of the squared gradients
        s = beta*s + (1-beta)*sum([g ** 2 for g in [w1_grads, w2_grads, b_grads]])
        s1 = sqrt(s + eta)
        w1 -= lr*w1_grads / s1
        w2 -= lr*w2_grads / s1
        b -= lr*b_grads / s1
        loss = sum([(w1 * x1 + w2 * x2 + b - y) ** 2 for (x1, x2), y in data])
        print("epoch %d,(w1,w2,b) = (%f,%f,%f),loss = %f" % (i + 1, w1, w2, b, loss))

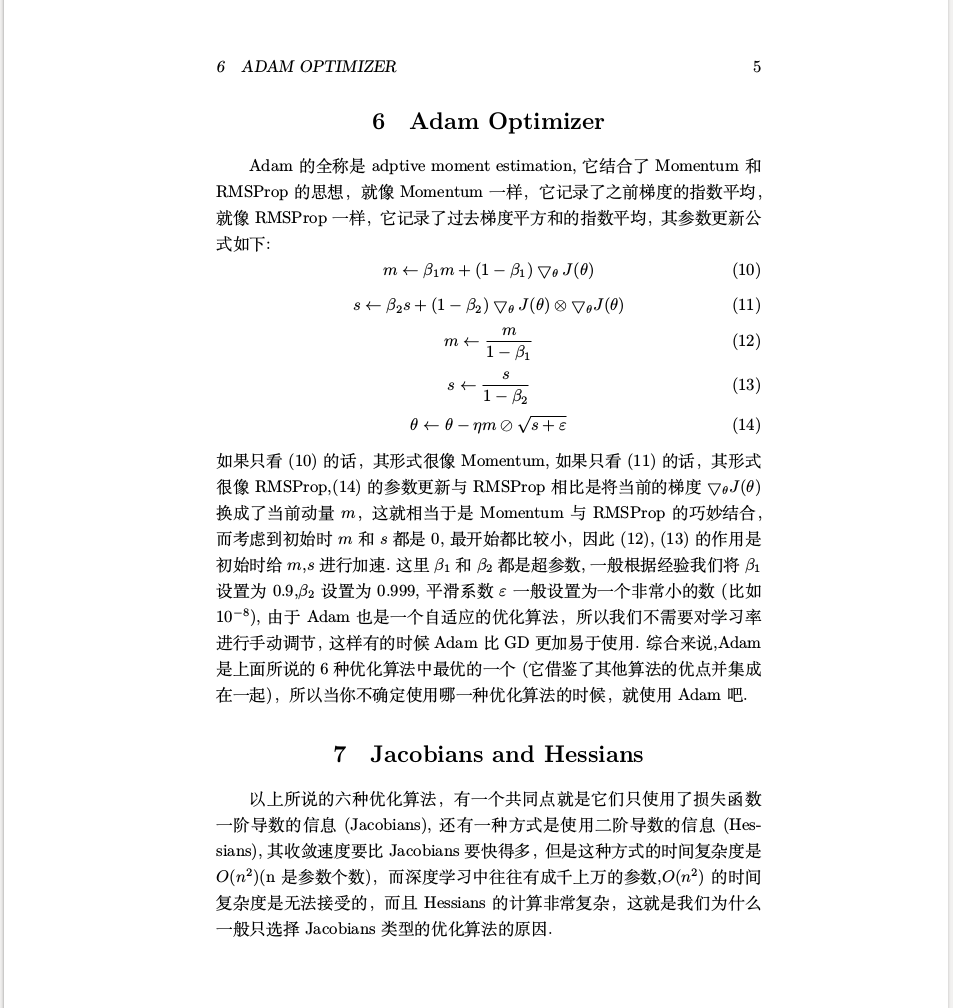

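def Adam(data,eta = 1e-8,beta1 = 0.9,beta2 = 0.99,lr = 0.1,epoches = 10):
    # eta is a smoothing term (a very small positive number)
    # Note: as in AdaGrad above, one second-moment accumulator s is shared by all three parameters.
    s = 0
    m = [0,0,0] # first-moment (momentum) estimates for w1, w2 and b
    random.seed(66) # fixed seed so every run starts from the same random parameters
    w1, w2, b = [random.random() for _ in range(3)]
    # %g is used for eta because %f would print 1e-8 as 0.000000
    print("Using method Adam,lr = %f,beta1 = %f,beta2 = %f,eta = %g,epoches = %d" % (lr, beta1,beta2,eta,epoches))
    for i in range(epoches):
        t = i + 1 # step counter used by the bias correction
        (x1, x2), y = random.choice(data) # pick one data point at random
        w1_grads = 2 * (w1 * x1 + w2 * x2 + b - y) * x1
        w2_grads = 2 * (w1 * x1 + w2 * x2 + b - y) * x2
        b_grads = 2 * (w1 * x1 + w2 * x2 + b - y)
        m[0] = beta1 * m[0] + (1-beta1)* w1_grads
        m[1] = beta1 * m[1] + (1-beta1)* w2_grads
        m[2] = beta1 * m[2] + (1-beta1)* b_grads
        s = beta2 * s + (1 - beta2) * sum([g ** 2 for g in [w1_grads, w2_grads, b_grads]])
        # Bias correction divides by (1 - beta**t) and must not overwrite m or s;
        # rescaling the stored averages by 1/(1-beta) every step makes them grow without bound.
        m_hat = [mk / (1 - beta1 ** t) for mk in m]
        s_hat = s / (1 - beta2 ** t)
        s1 = sqrt(s_hat + eta)
        w1 -= lr * m_hat[0] / s1
        w2 -= lr * m_hat[1] / s1
        b -= lr * m_hat[2] / s1
        loss = sum([(w1 * x1 + w2 * x2 + b - y) ** 2 for (x1, x2), y in data])
        print("epoch %d,(w1,w2,b) = (%f,%f,%f),loss = %f" % (i + 1, w1, w2, b, loss))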

if __name__ == '__main__':
    GD(data,lr = 0.001,epoches=100)
    SGD(data,lr = 0.001,epoches=100)
    Momentum(data,lr = 0.001,beta=0.9,epoches=100)
    AdaGrad(data,eta=1e-8,lr=1,epoches=100)
    RMSProp(data,lr=1,beta=0.99,eta=1e-8,epoches=100)
    Adam(data,lr=1,beta1=0.9,beta2=0.9999,eta=1e-8,epoches=100)
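
All six optimizers rely on the same hand-derived gradients of the squared-error loss, so it can be worth checking those expressions numerically before comparing the methods. The sketch below is only an illustration, not part of the original script: the helper names `loss`, `analytic_grads` and `numeric_grads`, the step size `h`, and the test point are all assumptions chosen for this example. It compares the gradient formulas used in GD with central finite differences.

```python
# Sanity check (illustrative): analytic gradients vs. central finite differences.
# Helper names, the step size h and the test point are assumptions for this sketch.

data = [[(1, 1), 2], [(2, 3), 5], [(3, 4), 7], [(5, 6), 11]]


def loss(w1, w2, b):
    """Summed squared error of y = w1*x1 + w2*x2 + b over all data points."""
    return sum((w1 * x1 + w2 * x2 + b - y) ** 2 for (x1, x2), y in data)


def analytic_grads(w1, w2, b):
    """Gradients of the loss with respect to (w1, w2, b), as written in GD."""
    errs = [(w1 * x1 + w2 * x2 + b - y, x1, x2) for (x1, x2), y in data]
    return (
        sum(2 * e * x1 for e, x1, _ in errs),
        sum(2 * e * x2 for e, _, x2 in errs),
        sum(2 * e for e, _, _ in errs),
    )


def numeric_grads(w1, w2, b, h=1e-6):
    """Central finite-difference approximation of the same gradients."""
    params = [w1, w2, b]
    grads = []
    for k in range(3):
        up, down = params[:], params[:]
        up[k] += h
        down[k] -= h
        grads.append((loss(*up) - loss(*down)) / (2 * h))
    return tuple(grads)


if __name__ == "__main__":
    point = (0.3, 0.7, 0.1)  # arbitrary test point
    print("analytic:", analytic_grads(*point))
    print("numeric: ", numeric_grads(*point))
```

Because the loss is quadratic in the parameters, the two results should agree up to floating-point rounding; a larger gap would suggest an error in the gradient expressions.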

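The original PDF document can be downloaded from https://pan.baidu.com/s/1GhGu2c_RVmKj4hb_bje0Eg if needed.

Passionate about programming and machine learning!

github: http://www.github.com/Lyrichu

github blog: http://Lyrichu.github.io

Personal blog site: http://www.movieb2b.com (no longer maintained)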

