效果

源代码

import time

from bs4 import BeautifulSoup

import requests

from selenium import webdriver

# 原作

# https://blog.csdn.net/qq_40050586/article/details/105729740

urlbase = "https://www.nowcoder.com"

# Android 面经

targetUrl = "https://www.nowcoder.com/discuss/experience?tagId=642"

def getIndexPage(url):

driver = webdriver.Chrome(executable_path='/Users/jiangjia/Downloads/chromedriver')

driver.get(targetUrl)

time.sleep(3)

js = "return action=document.body.scrollHeight"

height = driver.execute_script(js)

driver.execute_script('window.scrollTo(0, document.body.scrollHeight)')

time.sleep(5)

t1 = int(time.time())

status = True

num = 0

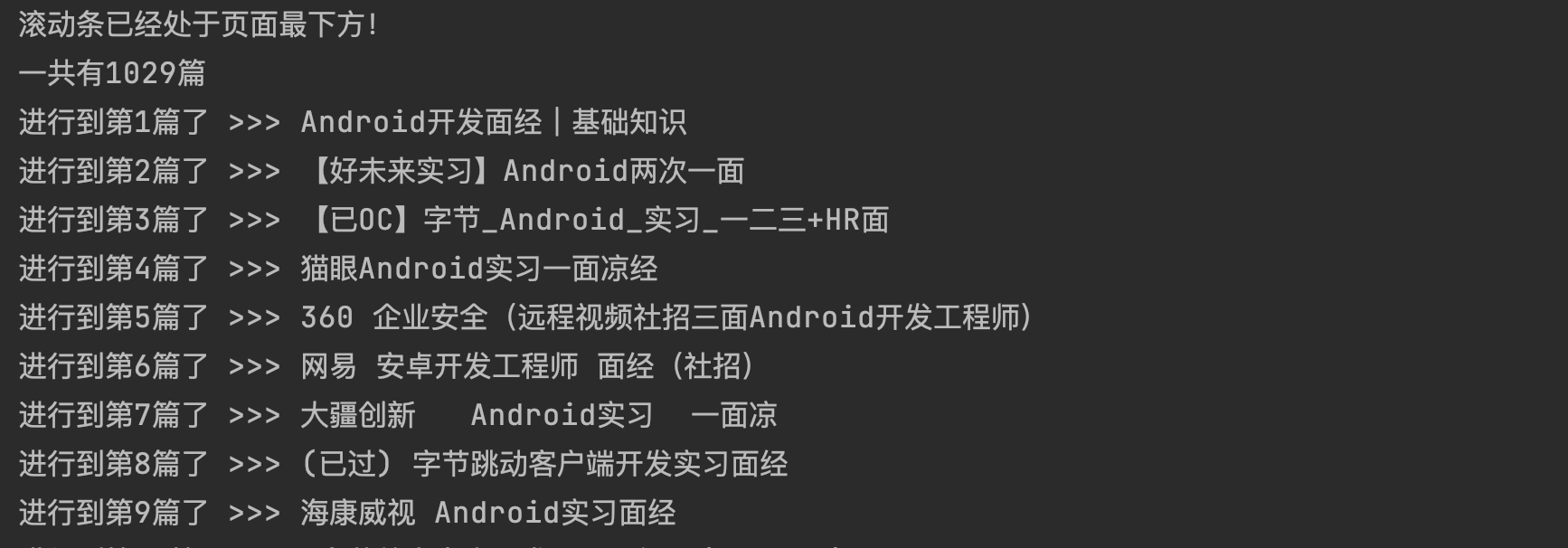

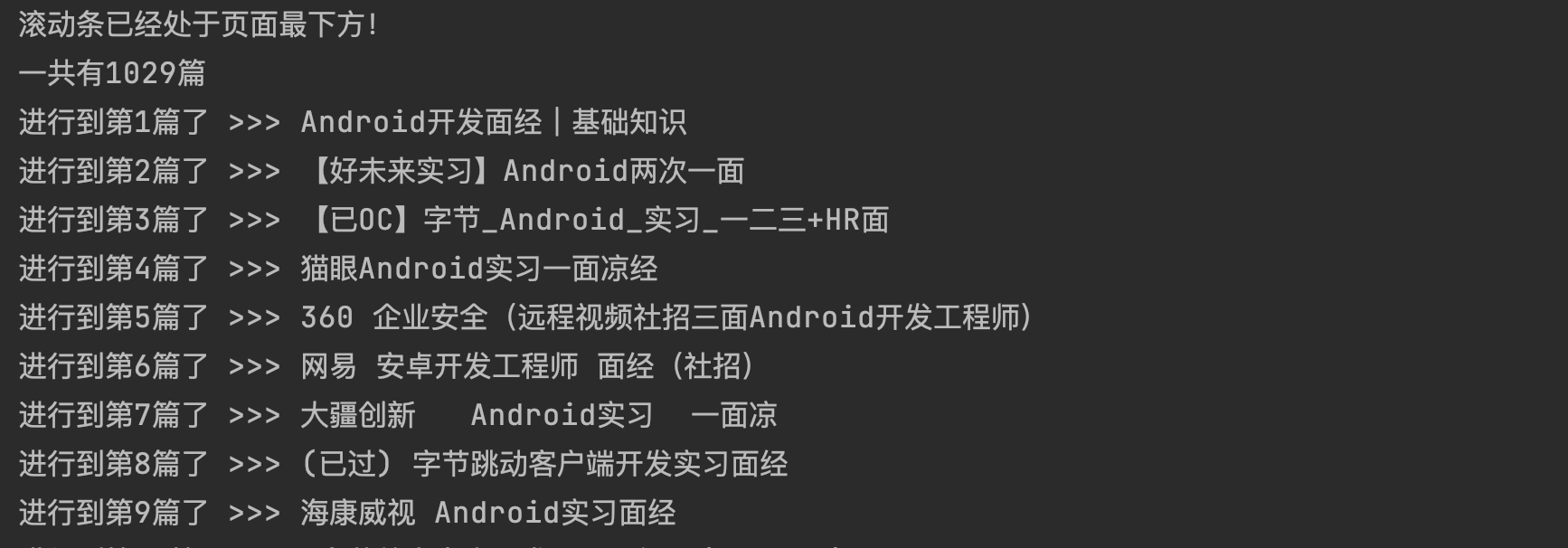

# 这里的一堆代码就是将滚动条拉到最下面,让资源加载完毕。

while status:

t2 = int(time.time())

if t2 - t1 < 30:

new_height = driver.execute_script(js)

if new_height > height:

time.sleep(1)

driver.execute_script('window.scrollTo(0, document.body.scrollHeight)')

height = new_height

t1 = int(time.time())

elif num < 3:

time.sleep(3)

num = num + 1

else:

print("滚动条已经处于页面最下方!")

status = False

driver.execute_script('window.scrollTo(0, 0)')

break

content = driver.page_source

return content

def getUrl(page):

soup = BeautifulSoup(page, 'lxml')

list = []

for ul in soup.select(".js-nc-wrap-link"):

list.append(ul.attrs['data-href'])

return list

def getPageDetail(urll):

try:

response = requests.get(urll)

if response.status_code == 200:

return response.text

return None

except ConnectionError:

print('Error occurred')

return None

def parseContentName(page):

soup = BeautifulSoup(page, 'lxml')

return soup.select(".post-title")[0].get_text()

def main():

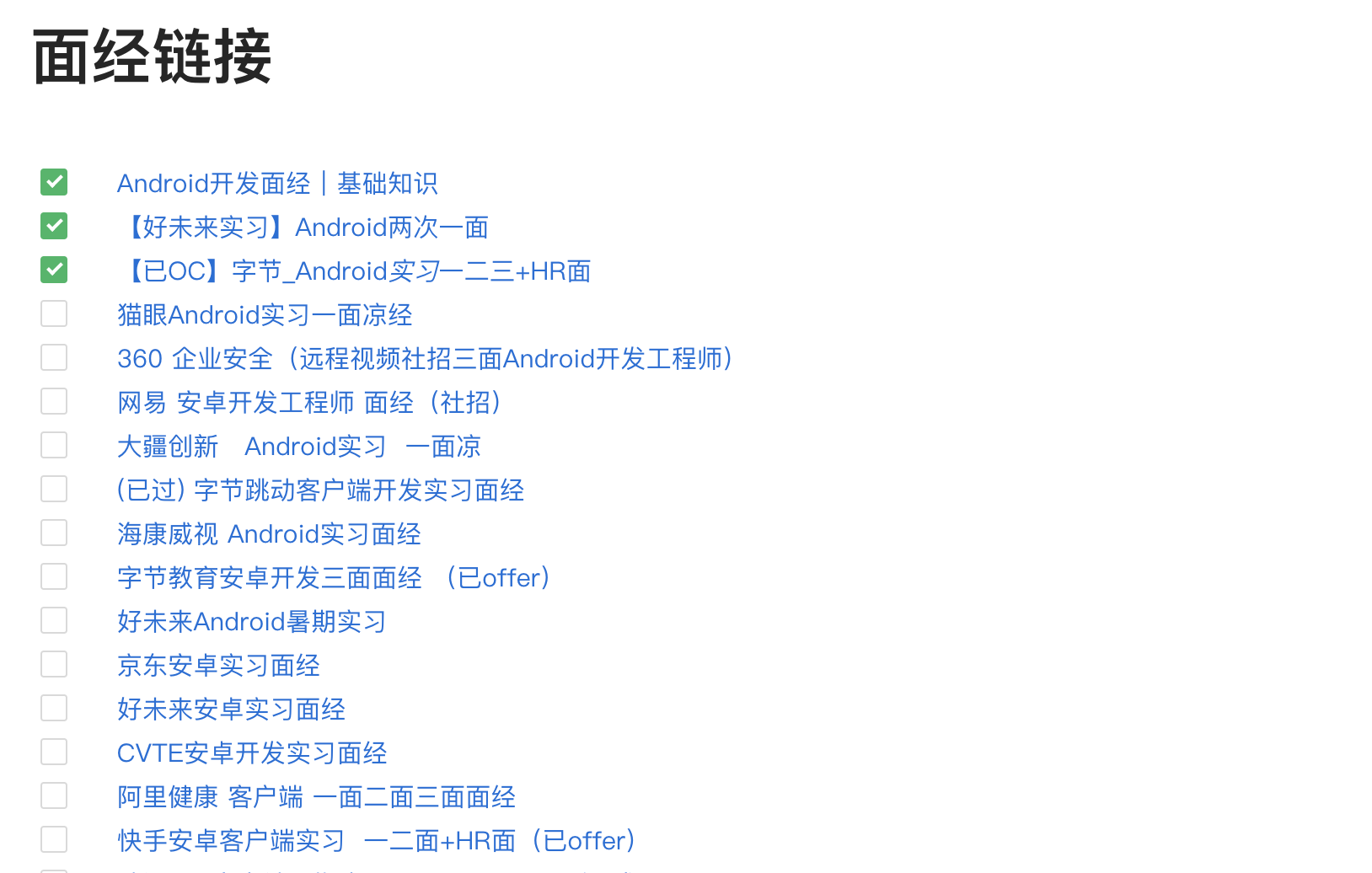

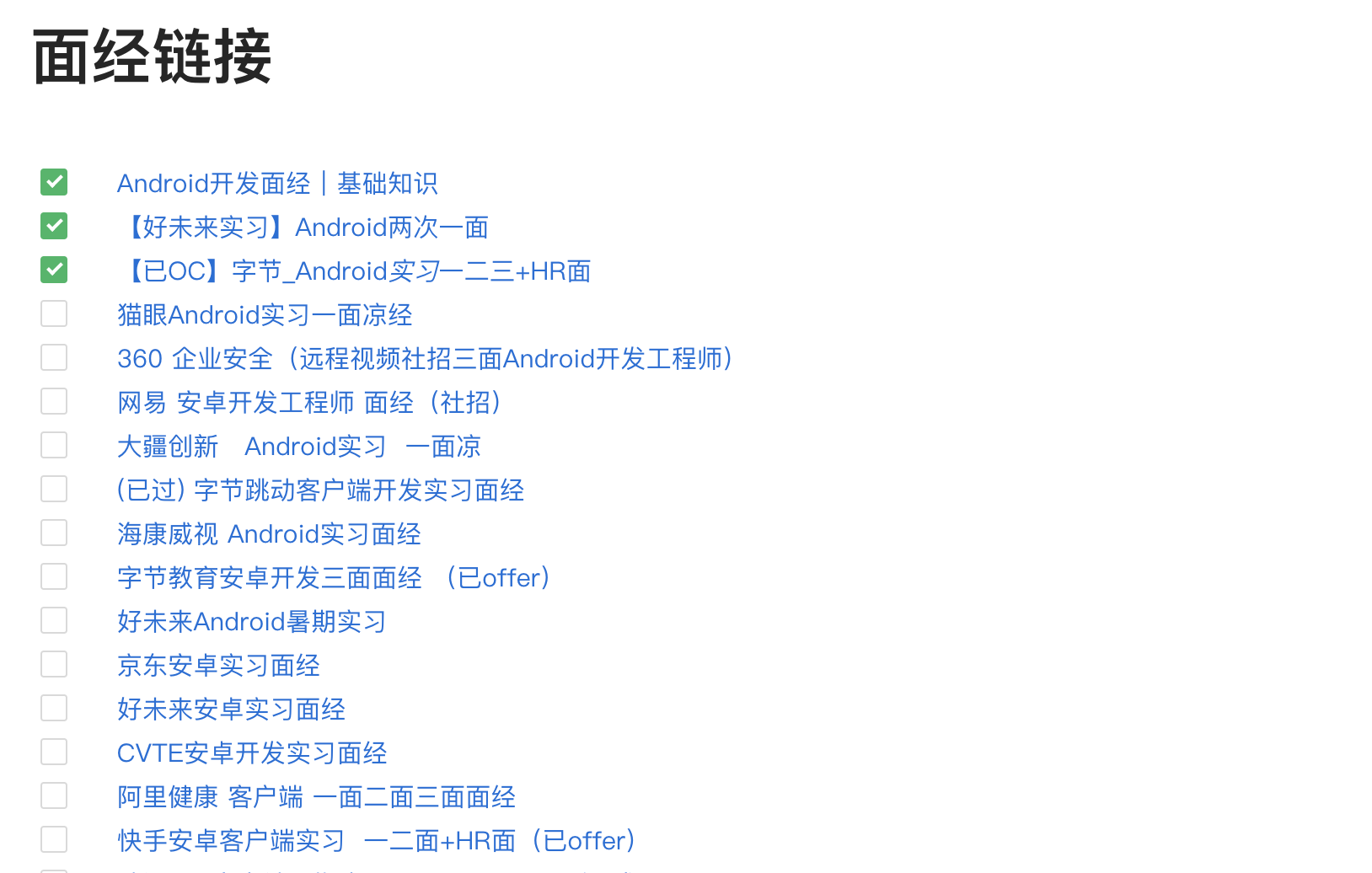

file = open("面经整理.md", "a+")

page = getIndexPage("https://www.nowcoder.com/discuss/experience?tagId=639&order=3&companyId=0&phaseId=2")

list = getUrl(page)

print("一共有%d篇" % len(list))

count = 0

for item in list:

content = getPageDetail(urlbase + item)

name = parseContentName(content)

file.write("- [ ]   [{0}]({1})\n\n".format(name, urlbase + item))

count = count + 1

print("进行到第{0}篇了 >>> {1}".format(count, name))

file.close()

if __name__ == '__main__':

main()

浙公网安备 33010602011771号

浙公网安备 33010602011771号