第二次作业

作业①

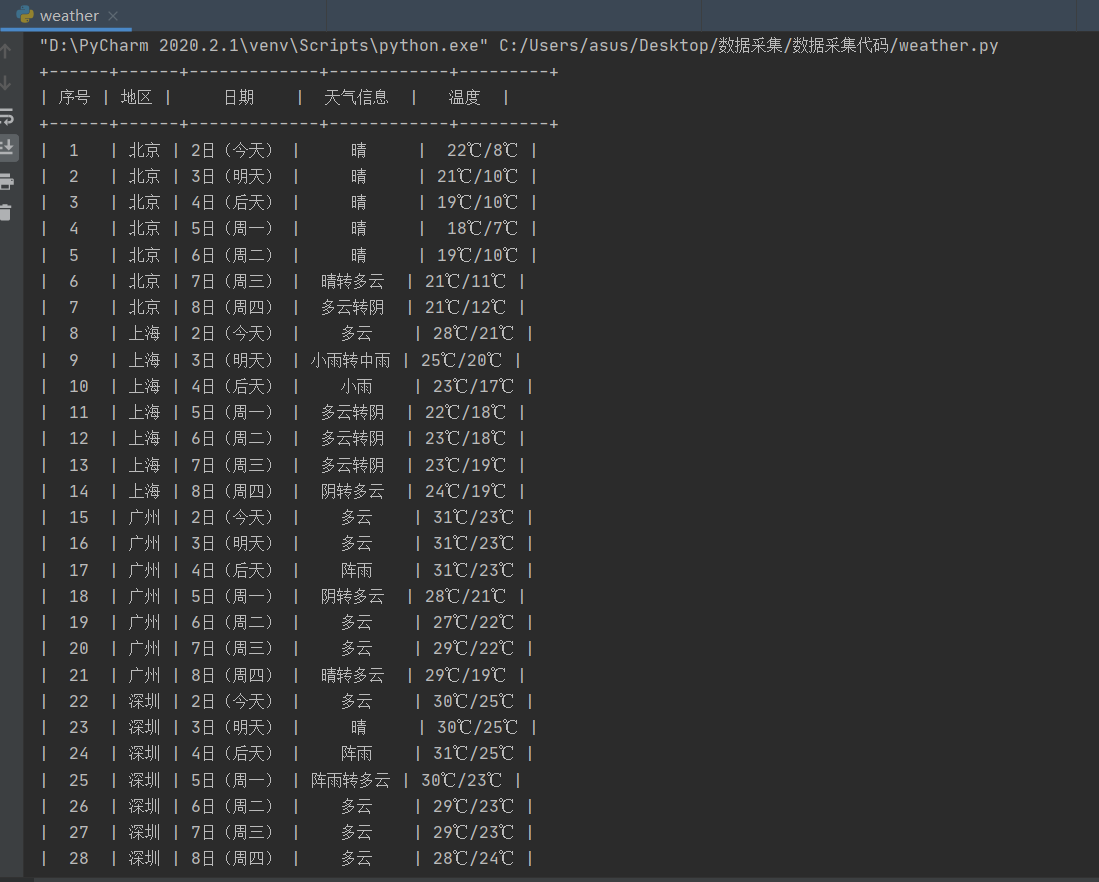

1)、爬取与储存天气预报数据实验

from bs4 import BeautifulSoup

from bs4 import UnicodeDammit

import urllib.request

import sqlite3

import prettytable as pt

x = pt.PrettyTable() # 制表

x.field_names = ["序号", "地区", "日期", "天气信息", "温度"] # 设置表头

class WeatherDB:

def openDB(self):

self.con = sqlite3.connect("weathers.db")

self.cursor = self.con.cursor()

try:

self.cursor.execute(

"create table weathers (wCity varchar(16),wDate varchar(16),wWeather varchar(64),wTemp varchar(32),constraint pk_weather primary key (wCity,wDate))")

except:

self.cursor.execute("delete from weathers")

def closeDB(self):

self.con.commit()

self.con.close()

def insert(self, city, date, weather, temp):

try:

self.cursor.execute("insert into weathers (wCity,wDate,wWeather,wTemp) values (?,?,?,?)",

(city, date, weather, temp))

except Exception as err:

print(err)

def show(self):

num = 1 # 设置序号

self.cursor.execute("select * from weathers")

rows = self.cursor.fetchall()

for row in rows:

x.add_row([num, row[0], row[1], row[2], row[3]])

num += 1

print(x)

class Weatherforecast():

def __init__(self):

self.headers = {

"User-Agent": "Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) "

"Chrome/70.0.3538.25 Safari/537.36 Core/1.70.3775.400 QQBrowser/10.6.4209.400"}

self.citycode = {"北京": "101010100", "上海": "101020100", "广州": "101280101", "深圳": "101280601"}

def forecastcity(self, city):

if city not in self.citycode.keys():

print(city + "code not found")

return

url = "http://www.weather.com.cn/weather/" + self.citycode[city] + ".shtml"

try:

req = urllib.request.Request(url, headers=self.headers)

data = urllib.request.urlopen(req)

data = data.read()

dammit = UnicodeDammit(data, ["utf-8", "gbk"])

data = dammit.unicode_markup

soup = BeautifulSoup(data, 'html.parser')

lis = soup.select("ul[class='t clearfix'] li")

for li in lis:

try:

date_ = li.select('h1')[0].text

weather_ = li.select('p[class="wea"]')[0].text

temp_ = li.select('p[class="tem"] span')[0].text + '℃/' + li.select("p[class='tem'] i")[0].text

self.db.insert(city, date_, weather_, temp_)

except Exception as err:

print(err)

except Exception as err:

print(err)

def process(self, cities):

self.db = WeatherDB()

self.db.openDB()

for city in cities:

self.forecastcity(city)

self.db.show()

self.db.closeDB()

ws = Weatherforecast()

ws.process(["北京", '上海', '广州', '深圳'])

2)、心得体会

这次实验初步学习了在Python中对于数据库的一些用法,和上学期学的大数据基础实践学习的知识有一部分重叠,所以几乎都能不费力地理解代码。之前不理解self的用法,在这次实验中我通过上网查找以及询问同学,弄懂了self的用法。

作业②

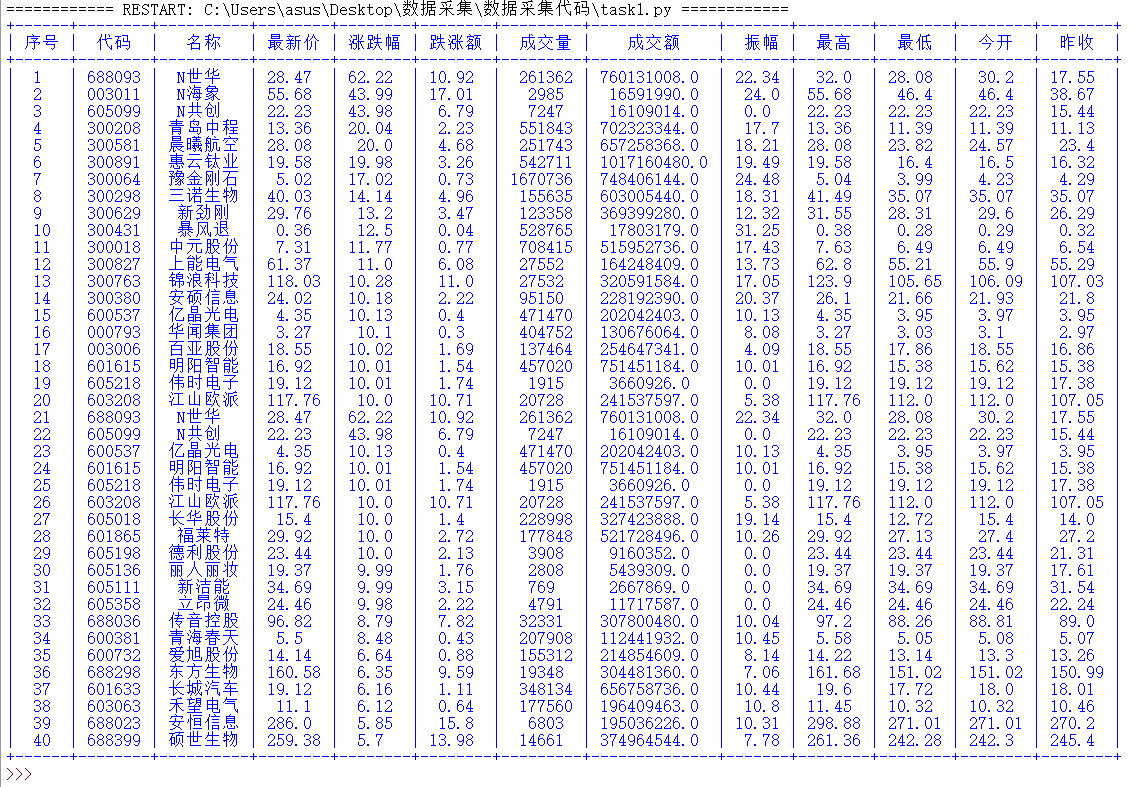

1)、爬取股票相关信息实验

import urllib

import urllib.request

import re

from bs4 import UnicodeDammit, BeautifulSoup

import prettytable as pt

x = pt.PrettyTable() # 制表

x.field_names = ["序号", "代码", "名称", "最新价", "涨跌幅", "跌涨额", "成交量", "成交额", "振幅","最高","最低","今开","昨收"] # 设置表头

def getHtml(fs, fields, page, pz):

headers = {

"User-Agent": "Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) ""Chrome/70.0.3538.25 Safari/537.36 Core/1.70.3775.400 QQBrowser/10.6.4209.400"}

# 需要把page和pz转换成字符,不然会出现can only concatenate str (not "int") to str的报错

url = "http://58.push2.eastmoney.com/api/qt/clist/get?cb=jQuery112409968248217612661_1601548126340&pn=" + str(

page) + "&pz=" + str(pz) + "&po=1&np=1&ut=bd1d9ddb04089700cf9c27f6f7426281&fltt=2&invt=2&fid=f3&fs=" + \

fs + "&fields=" + fields + "&_=1601548126345"

req = urllib.request.Request(url, headers=headers)

data = urllib.request.urlopen(req)

data = data.read()

dammit = UnicodeDammit(data, ["utf-8", "gbk"])

data = dammit.unicode_markup

soup = BeautifulSoup(data, 'html.parser')

data = re.findall(r'"diff":\[(.*?)]', soup.text)

return data

# 获取股票数据

def getOnePageStock(num, fields, fs, page, pz):

data = getHtml(fs, fields, page, pz)

datas = data[0].strip("{").strip("}").split('},{') # 去掉头尾的"{"和"}",再通过"},{"切片

for i in range(len(datas)):

stock = datas[i].replace('"', "").split(",") # 去掉双引号并通过","切片

x.add_row(

[num, stock[6][4:], stock[7][4:], stock[0][3:], stock[1][3:], stock[2][3:], stock[3][3:], stock[4][3:],

stock[5][3:], stock[8][4:], stock[9][4:], stock[10][4:], stock[11][4:]]) # 将数据按行存入表格

num = num + 1

return num # 更新序号

def main():

num = 1 # 序号

page = 1 # 设置所爬取的页数

pz = 20 # 设置一页有多少条信息

fields = "f12,f14,f2,f3,f4,f5,f6,f7,f15,f16,f17,f18" # 设置爬取信息(f12:代码,f14:名称,f2:最新价,f3:涨跌幅,f4:涨跌额,f5:成交量,f6:成交额,f7:涨幅, f15: 最高, f16: 最低, f17: 今开, f18: 昨收)

fs = {

"沪深A股": "m:0+t:6,m:0+t:13,m:0+t:80,m:1+t:2,m:1+t:23",

"上证A股": "m:1+t:2,m:1+t:23"

} # 设置爬取哪些市场

for i in fs.keys():

num = getOnePageStock(num, fields, fs[i], page, pz)

print(x) # 输出表格

main()

2)、心得体会

这次实验我爬取了沪深A股和上证A股第一页的数据(一页20条数据,共40条数据)。因为表格的表头是中文,字节数不同,所以在Pycharm中表格没办法对齐,但用IDLE运行可以做到对齐的效果。在这次实验中,我学会了浏览器F12中的json用法以及通过抓包获取数据集URL。对于得到"fxx:xxxxxx"数据后的处理方法,我只想到了两种方法,一种是通过循环来切片得到后半部分的数据,另一种是只能针对当前题目的自定义字符串来截取后半部分的数据,我用的是第二种方法。

作业③

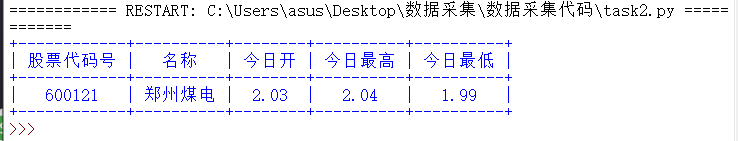

1)、爬取特定代码的股票的相关信息实验

import urllib

import urllib.request

import re

from bs4 import UnicodeDammit, BeautifulSoup

import prettytable as pt

x = pt.PrettyTable() # 制表

x.field_names = ["股票代码号", "名称", "今日开", "今日最高", "今日最低"] # 设置表头

def getHtml(number, fields):

headers = {

"User-Agent": "Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) ""Chrome/70.0.3538.25 Safari/537.36 Core/1.70.3775.400 QQBrowser/10.6.4209.400"}

url = "http://push2.eastmoney.com/api/qt/stock/get?ut=fa5fd1943c7b386f172d6893dbfba10b&invt=2&fltt=2&fields=" + fields + "&secid=1." + number + "&cb=jQuery1124028850151626570875_1601633354589&_=1601633354607"

req = urllib.request.Request(url, headers=headers)

data = urllib.request.urlopen(req)

data = data.read()

dammit = UnicodeDammit(data, ["utf-8", "gbk"])

data = dammit.unicode_markup

soup = BeautifulSoup(data, 'html.parser')

data = re.findall('{"f.*?}', soup.text)

return data

def getOnePageStock(number, fields):

data = getHtml(number, fields)

datas = data[0].strip("{").strip("}").split('},{') # 去掉头尾的"{"和"}",再通过"},{"切片

for i in range(len(datas)):

stock = datas[i].replace('"', "").split(",") # 去掉双引号并通过","切片

# print(stock)

x.add_row([stock[3][4:], stock[4][4:], stock[2][4:], stock[0][4:], stock[1][4:]]) # 将数据按行存入表格

def main():

number = "600" + "121" # 设置股票代码

fields = "f44,f45,f46,f57,f58" # 设置爬取信息(f44:今日最高,f45:今日最低,f46:今日开,f57:股票代码号,f58:名称)

try:

getOnePageStock(number, fields)

print(x)

except:

print("目标股票不存在") # 不是所有代码都存在股票信息,所以需要异常处理

main()

2)、心得体会

实验三和实验二差不多,就不赘述了。在这个实验中,"secid="后面的数字是1或者0需要注意一下。还有一点我没有理解,那就是有的股票代码在网站上搜得到,但是程序运行结果却是股票不存在,例如300121,只能猜测是自身的代码还存在一些的问题。

浙公网安备 33010602011771号

浙公网安备 33010602011771号