1. Get the title, link, date, source, content and click count of a single news item, and wrap this in a function.

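```python
import re
import requests
from bs4 import BeautifulSoup

mt = "http://news.gzcc.cn/html/xiaoyuanxinwen/"
COUNT_API = "http://oa.gzcc.cn/api.php?op=count&id={}&modelid=80"

def get_soup(url):
    """Download a page, decode it as UTF-8 and parse it."""
    res = requests.get(url)
    res.encoding = 'utf-8'
    return BeautifulSoup(res.text, "html.parser")

def get_click_count(url):
    """Query the click-count API for the article id contained in url."""
    news_id = re.search(r'_(.*)\.html', url).group(1).split('/')[-1]
    text = requests.get(COUNT_API.format(news_id)).text
    # The response ends with .html('<count>'); keep only the number
    return int(text.strip().split(".html('")[-1].rstrip("');"))

def get_news(news):
    """Collect title, link, date, source, content and clicks of one <li>."""
    url = news.select('a')[0]['href']
    info = news.select('.news-list-info')[0]
    return {
        'title': news.select('.news-list-title')[0].text,
        'url': url,
        'date': info.contents[0].text,
        'source': info.contents[1].text,
        'content': get_soup(url).select('.show-content')[0].text,
        'clicks': get_click_count(url),
    }

soup = get_soup(mt)
for news in soup.select('li'):
    if news.select('.news-list-title'):
        print(get_news(news))
        break  # this step only needs the first news item
```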
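A note on parsing the click count: `str.lstrip` and `str.rstrip` treat their argument as a set of characters, not as a prefix or suffix, so a call like `lstrip("html('")` only gives the right answer because digits happen not to be in that set. A regular expression states the intent more directly. The sketch below is an alternative, not the author's method; it assumes, as the original parsing implies, that the count sits inside a `.html('<number>')` call in the API response. The sample response string is invented for illustration, and `parse_click_count` is a hypothetical helper name.

```python
import re

# Matches a call such as .html('5234') and captures the digits inside it
COUNT_PATTERN = re.compile(r"\.html\('(\d+)'\)")


def parse_click_count(response_text):
    """Return the number in the last .html('N') call, or None if there is none."""
    matches = COUNT_PATTERN.findall(response_text)
    return int(matches[-1]) if matches else None


# Invented response text, shaped the way the original code expects
sample = "$('#todaydowns').html('3');$('#hits').html('5234');"
print(parse_click_count(sample))  # 5234

# lstrip removes any leading characters from the set {h, t, m, l, (, '}
print("html('lemon')".lstrip("html('"))  # emon')
```

Returning `None` instead of raising keeps a long crawl running when one response has an unexpected shape; raise instead if you prefer such failures to be loud.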

2. Get the same details for every news item on one news list page, and wrap this in a function.

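```python
import re
import requests
from bs4 import BeautifulSoup

COUNT_API = "http://oa.gzcc.cn/api.php?op=count&id={}&modelid=80"

def get_soup(url):
    res = requests.get(url)
    res.encoding = 'utf-8'  # the site serves UTF-8 pages
    return BeautifulSoup(res.text, "html.parser")

def get_click_count(url):
    news_id = re.search(r'_(.*)\.html', url).group(1).split('/')[-1]
    text = requests.get(COUNT_API.format(news_id)).text
    return int(text.strip().split(".html('")[-1].rstrip("');"))  # keep the digits

def get_list_page(list_url):
    """Return the details of every news item on one list page."""
    items = []
    for news in get_soup(list_url).select('li'):
        if not news.select('.news-list-title'):
            continue  # skip <li> elements that are not news entries
        url = news.select('a')[0]['href']
        info = news.select('.news-list-info')[0]
        items.append({
            'title': news.select('.news-list-title')[0].text,
            'url': url,
            'date': info.contents[0].text,
            'source': info.contents[1].text,
            'content': get_soup(url).select('.show-content')[0].text,
            'clicks': get_click_count(url),
        })
    return items

for item in get_list_page("http://news.gzcc.cn/html/xiaoyuanxinwen/"):
    print(item['date'], item['title'], item['source'], item['clicks'])
```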
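Every function in these steps downloads a page before parsing it, which makes the parsing hard to try out offline. One option, sketched here, is to move the parsing into a function that takes HTML text. The markup in `SAMPLE_HTML` is invented and only mirrors the selectors the original code relies on (`li`, `.news-list-title`, and `.news-list-info` with the date and source as its first two child elements); the real page may be structured differently. `parse_list_html` is a hypothetical name.

```python
from bs4 import BeautifulSoup

# Invented markup that only mimics the classes used by the crawler
SAMPLE_HTML = """
<ul>
  <li>
    <a href="http://example.com/news/1.html">
      <div class="news-list-title">Sample title</div>
      <div class="news-list-info"><span>2018-04-04</span> <span>Source A</span></div>
    </a>
  </li>
  <li>Navigation link, not a news item</li>
</ul>
"""


def parse_list_html(html):
    """Extract title, link, date and source from list-page HTML."""
    soup = BeautifulSoup(html, "html.parser")
    items = []
    for news in soup.select('li'):
        titles = news.select('.news-list-title')
        if not titles:
            continue  # not a news entry
        info = news.select('.news-list-info')[0]
        # Direct child tags only, so whitespace text nodes are ignored
        fields = info.find_all(True, recursive=False)
        items.append({
            'title': titles[0].get_text(strip=True),
            'url': news.select('a')[0]['href'],
            'date': fields[0].get_text(strip=True),
            'source': fields[1].get_text(strip=True),
        })
    return items


for item in parse_list_html(SAMPLE_HTML):
    print(item)
```

Using `find_all(True, recursive=False)` instead of `.contents` skips whitespace-only text nodes, so indentation in the HTML cannot shift the positions of the date and source.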

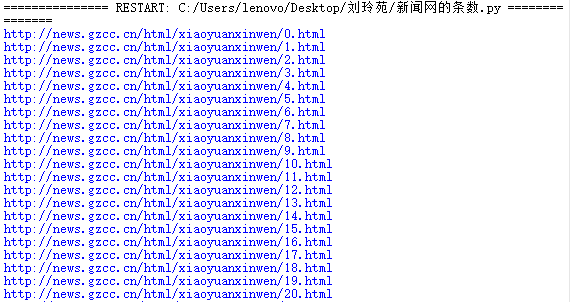

3. Get the URLs of all news list pages, and call the function above on each of them.

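```python
import requests
from bs4 import BeautifulSoup

gzccurl = 'http://news.gzcc.cn/html/xiaoyuanxinwen/'
res = requests.get(gzccurl)
res.encoding = 'utf-8'
soup = BeautifulSoup(res.text, "html.parser")

# The .a1 element holds the total number of news items followed by 条 ("items")
n = int(soup.select('.a1')[0].text.rstrip('条'))
# Assuming 10 items per list page; round up so a partly filled last page counts
page = (n + 9) // 10

# Page 1 is the index URL itself; later pages are 2.html, 3.html, ...
print(gzccurl)
for i in range(2, page + 1):
    pageurl = 'http://news.gzcc.cn/html/xiaoyuanxinwen/{}.html'.format(i)
    print(pageurl)
```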
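Rounding up matters here: a formula such as `n // 10 + 1` yields one page too many whenever `n` is an exact multiple of 10 (20 items give 3 pages instead of 2). A small pure function makes the page arithmetic easy to check without touching the network. This sketch keeps the assumption of 10 items per page and assumes that the first list page is the index URL while later pages are numbered from `2.html`; `list_page_urls` is a hypothetical helper name.

```python
BASE = 'http://news.gzcc.cn/html/xiaoyuanxinwen/'


def list_page_urls(total_news, per_page=10):
    """Return the URLs of all list pages needed for total_news items."""
    pages = (total_news + per_page - 1) // per_page  # ceiling division
    # Assumed layout: page 1 is BASE itself, page k >= 2 is BASE + 'k.html'
    return [BASE] + [BASE + '{}.html'.format(k) for k in range(2, pages + 1)]


for url in list_page_urls(25):
    print(url)
```

The index page is always included, so even a very small total still yields one URL to crawl.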

4. Crawl all of the campus news.

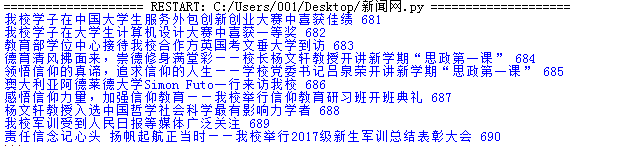

```python
import re
import requests
from bs4 import BeautifulSoup

gzccurl = 'http://news.gzcc.cn/html/xiaoyuanxinwen/'

def getsoup(url):
    """Download a page, decode it as UTF-8 and parse it."""
    res = requests.get(url)
    res.encoding = 'utf-8'
    return BeautifulSoup(res.text, "html.parser")

def getdiji(newurl):
    """Return the click count of the article at newurl."""
    news_id = re.search(r'_(.*)\.html', newurl).group(1).split('/')[-1]
    dijiurl = 'http://oa.gzcc.cn/api.php?op=count&id={}&modelid=80'.format(news_id)
    text = requests.get(dijiurl).text  # query the API for this article's id
    # The response ends with .html('<count>'); keep only the number
    return text.strip().split(".html('")[-1].rstrip("');")

def genonepage(listurl):
    """Print date, title, source and click count of every news item on a list page."""
    soup = getsoup(listurl)
    for news in soup.select('li'):
        if len(news.select('.news-list-title')) > 0:
            title = news.select('.news-list-title')[0].text  # title
            url = news.select('a')[0]['href']  # link
            info = news.select('.news-list-info')[0]
            day = info.contents[0].text  # date
            source = info.contents[1].text  # source
            textd = getsoup(url).select('.show-content')[0].text  # content
            diji = getdiji(url)  # click count
            print(day, title, source, diji)

soup = getsoup(gzccurl)
n = int(soup.select('.a1')[0].text.rstrip('条'))  # total number of news items
page = (n + 9) // 10  # assuming 10 items per list page, rounded up

genonepage(gzccurl)  # page 1 is the index page itself
for i in range(2, page + 1):
    pageurl = 'http://news.gzcc.cn/html/xiaoyuanxinwen/{}.html'.format(i)
    genonepage(pageurl)
```
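The id extraction in `getdiji` depends on the first `_` in the URL sitting directly before the id's directory and on the split landing on the right segment. Anchoring the pattern on the digits right before `.html` at the end of the URL is less sensitive to the rest of the path. This is a sketch under an assumption: that the article id is the numeric file name, which is what the original pattern also extracts. The example URL is made up to match that shape, and `news_id_from_url` and `count_api_url` are hypothetical helper names.

```python
import re

COUNT_API = 'http://oa.gzcc.cn/api.php?op=count&id={}&modelid=80'


def news_id_from_url(news_url):
    """Return the numeric id at the end of an article URL, or None."""
    match = re.search(r'/(\d+)\.html$', news_url)
    return match.group(1) if match else None


def count_api_url(news_url):
    """Build the click-count API URL for one article."""
    news_id = news_id_from_url(news_url)
    if news_id is None:
        raise ValueError('no numeric id in ' + news_url)
    return COUNT_API.format(news_id)


# Made-up article URL, shaped the way the '_(.*).html' pattern expects
url = 'http://news.gzcc.cn/html/2018/xiaoyuanxinwen_0404/9183.html'
print(news_id_from_url(url))  # 9183
print(count_api_url(url))
```

Raising `ValueError` for a URL without an id surfaces unexpected links early instead of silently querying the API with a wrong id.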

Zhejiang Public Security Internet Filing No. 33010602011771