Dynamics 365 F&O and firewalls - monitor Azure IP ranges


If you’re integrating Dynamics 365 Finance & Operations with third parties, and your organization or the third party uses a firewall, you’ve probably been asked: “What is the production/sandbox IP address?”

Well, we don’t know. We know which IP it has now, but not whether it will keep that IP in the future, so you will have to monitor this if you plan on opening single IPs. Dag Calafell wrote about this on his blog: Static IP not guaranteed for Dynamics 365 for Finance and Operations.

I monitor with my eye

So, what should I do if I have a firewall and need to allow access to/from Dynamics 365 F&O or any other Azure service? The network team usually doesn’t like the answer: if you can’t allow an FQDN, you should open all the address ranges for the datacenter and service you want to access. That’s a lot of addresses, and it makes the network team sad.

In today’s post, I’ll show you a way to keep an eye on the ranges provided by Microsoft, and hopefully make our life easier.

WARNING: prompted by this LinkedIn comment, I want to point out that the ranges you can find using this method are for INBOUND communication into Dynamics 365 or any other service. For outbound communication, check this on Learn: For my Microsoft-managed environments, I have external components that have dependencies on an explicit outbound IP safe list. How can I ensure my service is not impacted after the move to self-service deployment?

Azure IP Ranges: can we monitor them?

Microsoft offers a JSON file you can download with the ranges for all its public cloud datacenters and different services. Yes, a file, not an API.

But wait, don’t complain yet, there IS an API we can use: the Azure REST API, specifically the Service Tags section under Virtual Networks. The JSON you get from calling this API is structured a bit differently from the downloadable file, but both can serve our purpose.
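To give an idea of what the API returns, here is a minimal sketch that reads the address prefixes of one service tag out of a response. As far as I know, the Service Tags list call is a GET to `https://management.azure.com/subscriptions/{subscriptionId}/providers/Microsoft.Network/locations/{location}/serviceTags?api-version=...` and returns a root `changeNumber` plus a `values` array. The sample JSON below is trimmed and invented (the tag name is hypothetical and the prefixes are documentation-only ranges), and `ServiceTagSample` and `GetPrefixes` are names made up for this example. It uses System.Text.Json to stay dependency-free, whereas the function in this post uses Newtonsoft.

```csharp
using System;
using System.Linq;
using System.Text.Json;

public static class ServiceTagSample
{
    // Trimmed, invented response in the assumed shape of the Service Tags list call.
    // The tag name is hypothetical and the prefixes are documentation-only ranges.
    public const string SampleJson = @"{
      ""changeNumber"": ""1"",
      ""cloud"": ""Public"",
      ""values"": [
        {
          ""name"": ""ExampleService.WestEurope"",
          ""properties"": {
            ""changeNumber"": ""1"",
            ""region"": ""westeurope"",
            ""addressPrefixes"": [ ""192.0.2.0/24"", ""198.51.100.0/24"" ]
          }
        }
      ]
    }";

    // Returns the address prefixes of the tag with the given name,
    // or an empty array when no tag matches (case-insensitive).
    public static string[] GetPrefixes(string json, string tagName)
    {
        using JsonDocument doc = JsonDocument.Parse(json);

        foreach (JsonElement tag in doc.RootElement.GetProperty("values").EnumerateArray())
        {
            string name = tag.GetProperty("name").GetString();

            if (string.Equals(name, tagName, StringComparison.OrdinalIgnoreCase))
            {
                // ToArray() materializes the strings before the document is disposed.
                return tag.GetProperty("properties")
                          .GetProperty("addressPrefixes")
                          .EnumerateArray()
                          .Select(p => p.GetString())
                          .ToArray();
            }
        }

        return Array.Empty<string>();
    }
}
```

Calling `ServiceTagSample.GetPrefixes(ServiceTagSample.SampleJson, "ExampleService.WestEurope")` on the sample returns its two prefixes; an unknown tag name returns an empty array.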

My proposal: an Azure function

We will be querying a REST API, so we could perfectly well use a Power Automate flow to do this. Why am I overdoing things with an Azure function? Mainly because I hate parsing JSON in Power Automate. Also because I love Azure functions.

Authentication

To authenticate and access the Azure REST API we need to create an Azure Active Directory app registration. We’ve done this a million times, right? No need to repeat it.

We will need a secret for that app registration too. Keep both the application (client) ID and the secret. We will create a service principal from the app registration.

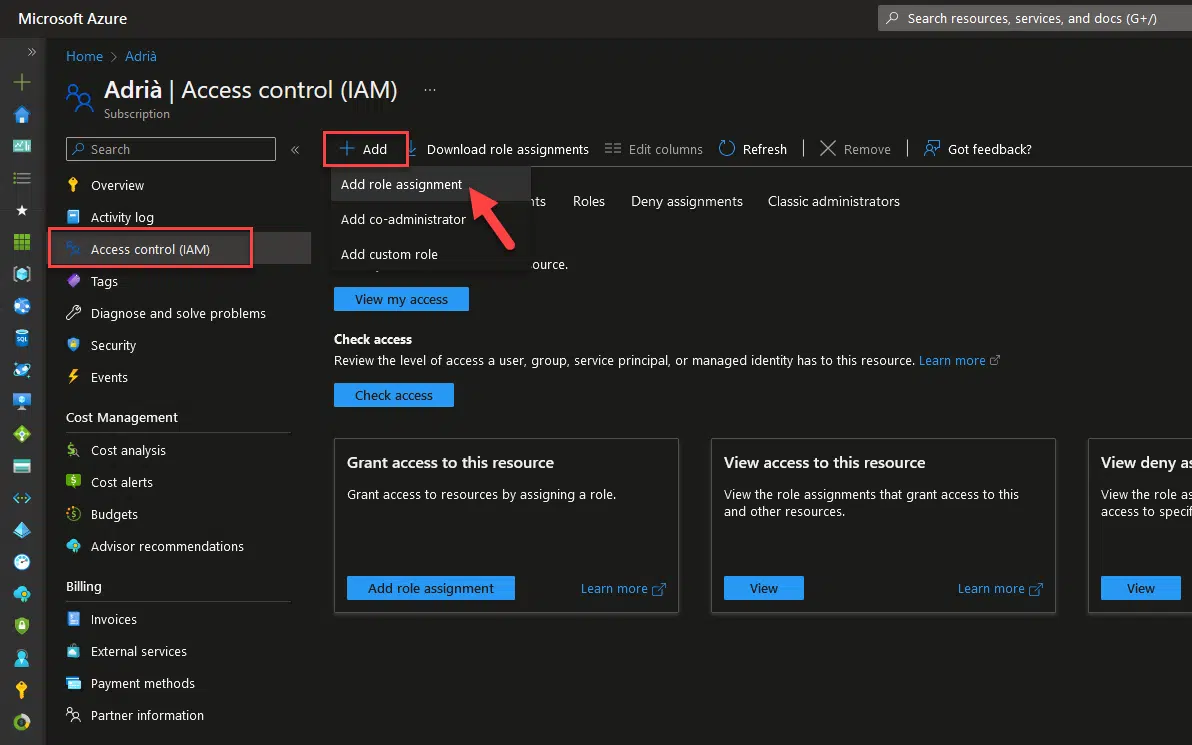

Go to your subscription, to the “Access control (IAM)” section and add a new role assignment:

Create a service principal


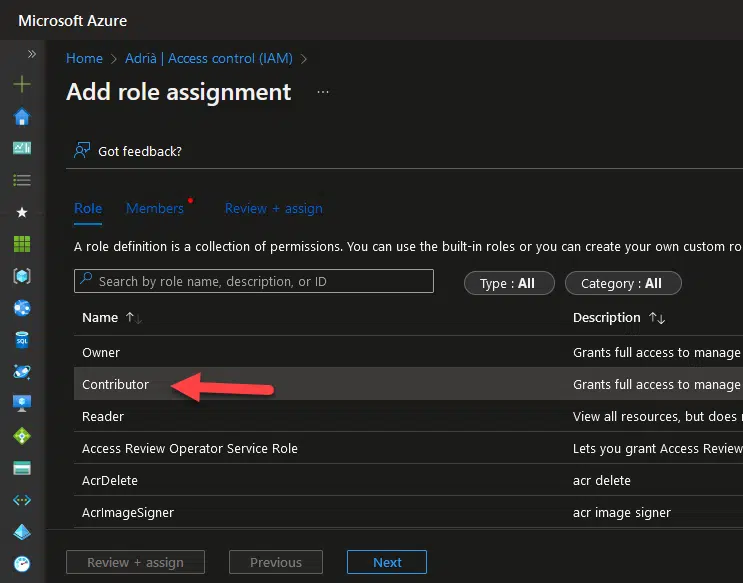

Select the contributor role and click next:

Contributor role

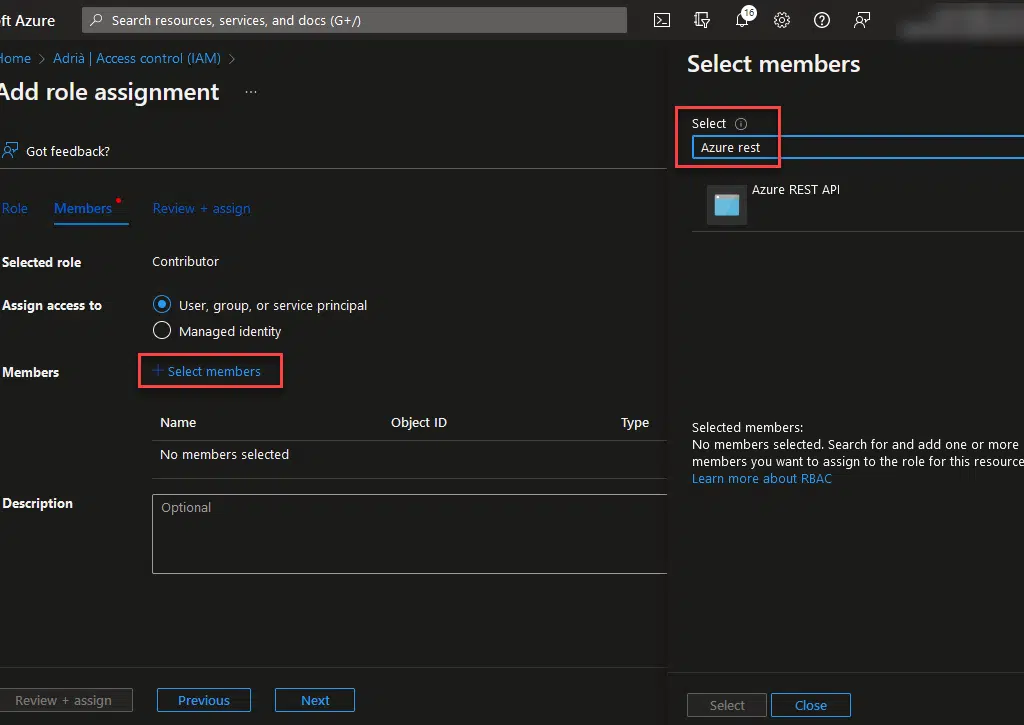

Click the “Select members” text and look for the name of the app registration you created before:

Add members

Finally, click the “Select” button, and then “Review + assign” to finish. Now we have a service principal with access to the subscription. We will use it to authenticate and access the Azure REST API.
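With the app registration and its secret in hand, getting a token for the Azure REST API is a standard OAuth 2.0 client credentials call. The following is only a sketch of how that could look, not necessarily what the function's own `GetToken` does: `ArmAuth`, `BuildTokenRequest` and `GetTokenAsync` are hypothetical names, and the tenant ID, client ID and secret are assumed to be passed in by the caller (for example, from the function's app settings).

```csharp
using System;
using System.Collections.Generic;
using System.Net.Http;
using System.Text.Json;
using System.Threading.Tasks;

public static class ArmAuth
{
    // A single shared HttpClient, reused across calls.
    private static readonly HttpClient Http = new HttpClient();

    // Builds the client credentials request against the Microsoft identity platform
    // v2.0 token endpoint, asking for the Azure Resource Manager scope.
    public static HttpRequestMessage BuildTokenRequest(string tenantId, string clientId, string clientSecret)
    {
        var form = new Dictionary<string, string>
        {
            ["grant_type"] = "client_credentials",
            ["client_id"] = clientId,
            ["client_secret"] = clientSecret,
            ["scope"] = "https://management.azure.com/.default"
        };

        return new HttpRequestMessage(
            HttpMethod.Post,
            $"https://login.microsoftonline.com/{tenantId}/oauth2/v2.0/token")
        {
            Content = new FormUrlEncodedContent(form)
        };
    }

    // Sends the request and returns the bearer token from the "access_token" field.
    public static async Task<string> GetTokenAsync(string tenantId, string clientId, string clientSecret)
    {
        using HttpRequestMessage request = BuildTokenRequest(tenantId, clientId, clientSecret);
        using HttpResponseMessage response = await Http.SendAsync(request);
        response.EnsureSuccessStatusCode();

        string json = await response.Content.ReadAsStringAsync();
        using JsonDocument doc = JsonDocument.Parse(json);
        return doc.RootElement.GetProperty("access_token").GetString();
    }
}
```

The returned token then goes into the `Authorization: Bearer` header of the calls to `https://management.azure.com`.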

The function

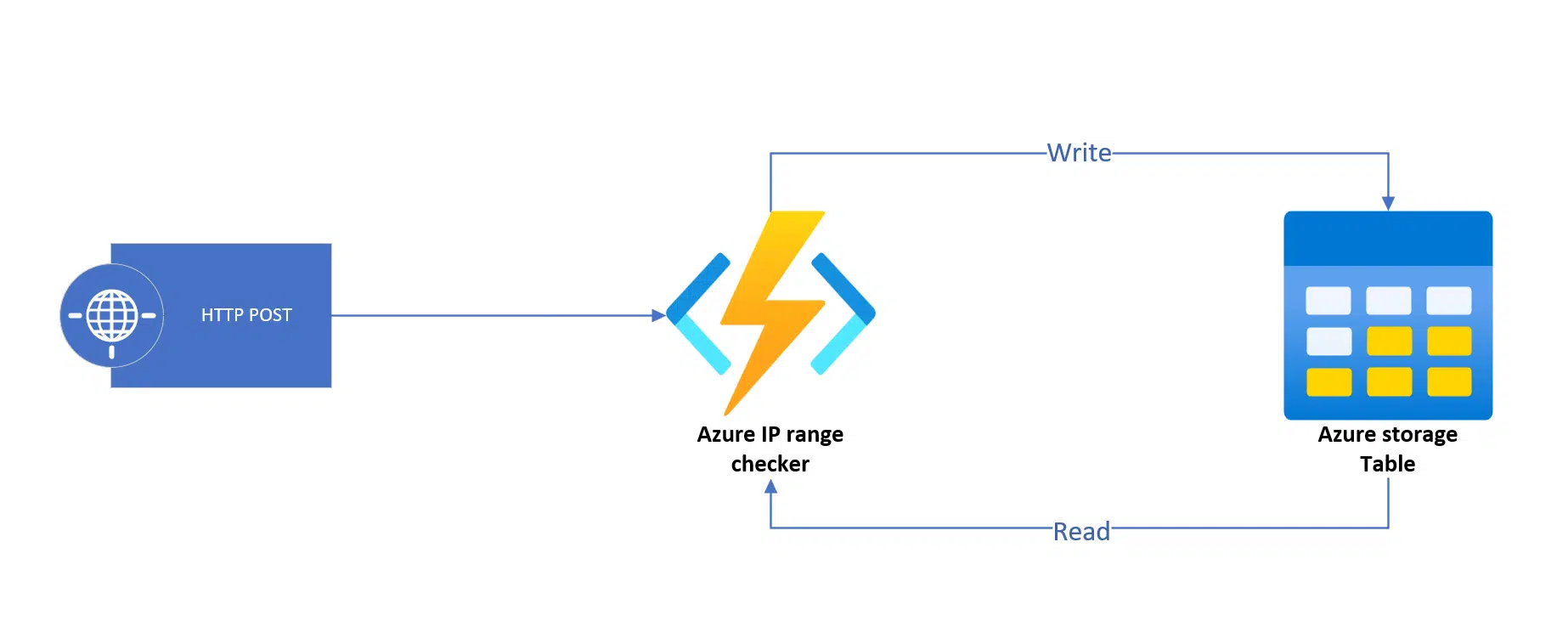

The function will have an HTTP trigger, and will read and write data in an Azure Table. We will do a POST call to the function’s endpoint, and that will trigger the process.

Nice diagram

Then the function will download the JSON content from the Azure REST API, look in the table to see if we stored a previous version of the addresses file, compare both, and save the latest version into the table. This is the code of the function:
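Before the full listing, here is the comparison step on its own, as a minimal sketch. The function returns an `AddressChanges` object with `addedAddresses` and `removedAddresses`; the class definition below is my guess at its shape, and `AddressDiff.Compare` is a hypothetical helper that takes the previously stored prefixes and the latest ones.

```csharp
using System;
using System.Linq;

// Assumed shape of the result object; only the two member names come from the function.
public class AddressChanges
{
    public string[] addedAddresses { get; set; } = Array.Empty<string>();
    public string[] removedAddresses { get; set; } = Array.Empty<string>();
}

public static class AddressDiff
{
    // Set difference in both directions: what is new in the latest list,
    // and what disappeared from the previous one.
    public static AddressChanges Compare(string[] previous, string[] latest)
    {
        return new AddressChanges
        {
            addedAddresses = latest.Except(previous).ToArray(),
            removedAddresses = previous.Except(latest).ToArray()
        };
    }
}
```

For example, comparing `{ "192.0.2.0/24", "198.51.100.0/24" }` with `{ "198.51.100.0/24", "203.0.113.0/24" }` reports `203.0.113.0/24` as added and `192.0.2.0/24` as removed. The full function follows.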

[FunctionName("CheckRanges")]
public static async Task<IActionResult> Run(
    [HttpTrigger(AuthorizationLevel.Function, "post", Route = null)] HttpRequest req,
    ILogger log)
{
    log.LogInformation("C# HTTP trigger function processed a request.");

    string ret = string.Empty;

    try
    {
        // Read the request body
        string body = string.Empty;

        using (StreamReader streamReader = new StreamReader(req.Body))
        {
            body = await streamReader.ReadToEndAsync();
        }

        if (string.IsNullOrEmpty(body))
        {
            throw new Exception("No request body found.");
        }

        dynamic data = JsonConvert.DeserializeObject(body);

        string serviceTagRegion = data.serviceTagRegion;
        string region = data.region;

        if (string.IsNullOrEmpty(serviceTagRegion) || string.IsNullOrEmpty(region))
        {
            throw new Exception("The values in the request body cannot be empty.");
        }

        // Get token and call the API (awaited instead of blocking on .Result)
        var token = await GetToken();
        var latestServiceTag = await GetFile(token, region);

        if (latestServiceTag is null)
        {
            throw new Exception("No tag file has been downloaded.");
        }

        // Read the previously stored version from the table, if it exists
        var existingServiceTagEntity = await ReadTableAsync();

        // If there's a stored version, compare the root changeNumber value. If it's the same, there are no changes in the file.
        if (existingServiceTagEntity is not null)
        {
            if (existingServiceTagEntity.ChangeNumber == latestServiceTag.changeNumber)
            {
                // Return empty containers in the JSON response
                AddressChanges diff = new AddressChanges();
                diff.addedAddresses = Array.Empty<string>();
                diff.removedAddresses = Array.Empty<string>();

                ret = JsonConvert.SerializeObject(diff);

                log.LogInformation("The downloaded file has the same changeNumber as the already existing one. No changes.");

                return new OkObjectResult(ret);
            }
        }

        // Process the new file

var serviceTagSelected = latestServiceTag.values.FirstOrDefault