Li Hang - Statistical Learning Methods - Notes 7: Support Vector Machines

Overview

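Support vector machine (SVM): a binary classification model whose basic form is the linear classifier with the maximum margin in feature space. Margin maximization is what distinguishes it from the perceptron.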

Kernel trick: the SVM also includes the kernel trick, which makes it an essentially nonlinear classifier.

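Margin maximization: the learning strategy of the SVM is margin maximization, which can be formalized as a convex quadratic programming problem and is also equivalent to minimizing a regularized hinge loss. The SVM learning algorithm is an optimization algorithm for solving a convex quadratic program.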

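Linearly separable SVM: when the training data are linearly separable, a linear classifier is learned by hard-margin maximization; this is the linearly separable SVM, also called the hard-margin SVM.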

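Linear SVM: when the training data are approximately linearly separable, a linear classifier is learned by soft-margin maximization; this is the linear SVM, also called the soft-margin SVM.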

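Nonlinear SVM: when the training data are not linearly separable, a nonlinear SVM is learned by using the kernel trick together with soft-margin maximization.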

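A kernel function gives the inner product between the feature vectors obtained by mapping inputs from the input space into a feature space. Using a kernel function makes it possible to learn a nonlinear SVM, which is equivalent to implicitly learning a linear SVM in a high-dimensional feature space. Such methods are called kernel methods; kernel methods are a more general class of machine learning methods than SVMs.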

Linearly separable SVM

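Linearly separable SVM: in general, when the training data are linearly separable, there are infinitely many separating hyperplanes that separate the two classes correctly. The perceptron finds a separating hyperplane by minimizing misclassification, and the resulting solutions are infinitely many. The linearly separable SVM finds the optimal separating hyperplane by margin maximization, and this solution is unique.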

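The separating hyperplane \(w^* \cdot x + b^* = 0\) learned by margin maximization (equivalently, by solving a convex quadratic program), together with the corresponding classification decision function \(f(x) = sign(w^* \cdot x + b^*)\), is called the linearly separable SVM.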

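Functional margin: in general, the distance of a point from the separating hyperplane indicates how confident the classification is (the farther, the more confident). With the hyperplane \(w \cdot x + b = 0\) fixed, \(|w \cdot x + b|\) gives a relative measure of the distance from the point \(x\) to the hyperplane, and whether the sign of \(w \cdot x + b\) agrees with the sign of the class label \(y\) tells whether the classification is correct. So \(y(w \cdot x + b)\) expresses both the correctness and the confidence of the classification; this is the functional margin.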

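The functional margin of the hyperplane \((w, b)\) with respect to the sample point \((x_i, y_i)\) is defined as

$$\hat{\gamma}_i = y_i (w \cdot x_i + b)$$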

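The functional margin of the hyperplane \((w, b)\) with respect to the training set \(T\) is defined as the minimum of the functional margins of \((w, b)\) over all sample points \((x_i, y_i)\) in \(T\):

$$\hat{\gamma} = \min_{i = 1, \dots, N} \hat{\gamma}_i$$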

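Geometric margin: the functional margin alone is not enough for choosing a separating hyperplane. If \(w\) and \(b\) are scaled proportionally, for example to \(2w\) and \(2b\), the hyperplane does not change but the functional margin doubles. This suggests placing a constraint on the normal vector \(w\) of the separating hyperplane, such as the normalization \(||w|| = 1\), so that the margin is well defined (the geometric margin does not change when \(w\) and \(b\) are scaled proportionally). The functional margin then becomes the geometric margin.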

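The geometric margin of the hyperplane \((w, b)\) with respect to the sample point \((x_i, y_i)\) is defined as

$$\gamma_i = y_i \left( \frac{w}{||w||} \cdot x_i + \frac{b}{||w||} \right)$$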

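The geometric margin of the hyperplane \((w, b)\) with respect to the training set \(T\) is defined as the minimum of the geometric margins of \((w, b)\) over all sample points \((x_i, y_i)\) in \(T\):

$$\gamma = \min_{i = 1, \dots, N} \gamma_i$$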
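A minimal sketch of the two margin definitions in plain Python (standard library only). The three-point toy set and the hyperplane \((w, b) = ((1/2, 1/2), -2)\) are chosen purely for illustration. The sketch also checks the scaling argument: doubling \((w, b)\) doubles the functional margin but leaves the geometric margin unchanged.

```python
import math


def dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def functional_margin(w, b, x, y):
    """Functional margin y * (w . x + b) of one sample."""
    return y * (dot(w, x) + b)


def geometric_margin(w, b, x, y):
    """Geometric margin: the functional margin divided by ||w||."""
    return functional_margin(w, b, x, y) / math.sqrt(dot(w, w))


def dataset_margins(w, b, data):
    """Margins of (w, b) with respect to a data set: minima over all samples."""
    f = min(functional_margin(w, b, x, y) for x, y in data)
    g = min(geometric_margin(w, b, x, y) for x, y in data)
    return f, g


# Hypothetical toy data: two positive points and one negative point.
data = [((3, 3), 1), ((4, 3), 1), ((1, 1), -1)]

f1, g1 = dataset_margins((0.5, 0.5), -2.0, data)
f2, g2 = dataset_margins((1.0, 1.0), -4.0, data)  # same hyperplane, scaled by 2
print(f1, f2)  # 1.0 2.0
print(g1, g2)  # equal up to rounding
```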

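Hard-margin maximization: the basic idea of SVM learning is to find the separating hyperplane that divides the training set correctly and has the largest geometric margin, so that the training data are classified with sufficiently high confidence. That is, it not only separates the positive and negative instances, but also separates the hardest instances (the points closest to the hyperplane) with sufficiently high confidence. Such a hyperplane should also predict well on new, unseen instances.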

Margin maximization here is also called hard-margin maximization.

For a linearly separable training set, the maximum-margin separating hyperplane exists and is unique.

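Maximum-margin separating hyperplane: the problem can be expressed as the following constrained optimization problem

$$\max_{w, b} \ \gamma$$

$$\text{s.t.} \quad y_i \left( \frac{w}{||w||} \cdot x_i + \frac{b}{||w||} \right) \geqslant \gamma, \quad i = 1, 2, \dots, N$$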

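We want to maximize the geometric margin \(\gamma\) of the hyperplane \((w, b)\) with respect to the training set; the constraints say that the geometric margin of \((w, b)\) with respect to every training sample is at least \(\gamma\).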

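Using the relation \(\gamma = \frac{\hat{\gamma}}{||w||}\) between the geometric and functional margins, the problem can be rewritten as

$$\max_{w, b} \ \frac{\hat{\gamma}}{||w||}$$

$$\text{s.t.} \quad y_i (w \cdot x_i + b) \geqslant \hat{\gamma}, \quad i = 1, 2, \dots, N$$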

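The value of the functional margin does not affect the solution of the optimization problem. If \(w\) and \(b\) are scaled to \(\lambda w\) and \(\lambda b\), the functional margin becomes \(\lambda \hat{\gamma}\); this change affects neither the inequality constraints of the problem above nor the optimization of the objective. We can therefore set \(\hat{\gamma} = 1\).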

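Noting further that maximizing \(\frac{1}{||w||}\) is equivalent to minimizing \(\frac{1}{2}||w||^2\), we obtain the following optimization problem

$$\min_{w, b} \ \frac{1}{2}||w||^2 \tag{7.13}$$

$$\text{s.t.} \quad y_i (w \cdot x_i + b) - 1 \geqslant 0, \quad i = 1, 2, \dots, N \tag{7.14}$$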

This is a convex quadratic program. Solving it gives \(w^*\) and \(b^*\), and hence the maximum-margin separating hyperplane

$$w^* \cdot x + b^* = 0$$

and the classification decision function \(f(x) = sign(w^* \cdot x + b^*)\), that is, the linearly separable SVM.

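Convex optimization: a convex optimization problem is a constrained optimization problem

$$\min_{w} \ f(w)$$

$$\text{s.t.} \quad g_i(w) \leqslant 0, \quad i = 1, 2, \dots, k$$

$$h_i(w) = 0, \quad i = 1, 2, \dots, l$$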

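where the objective \(f(w)\) and the constraint functions \(g_i(w)\) are continuously differentiable convex functions on \(R^n\), and the constraint functions \(h_i(w)\) are affine functions on \(R^n\).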

A function \(h(x)\) is called affine if it has the form \(h(x) = a \cdot x + b\), where \(a \in R^n, b \in R, x \in R^n\).

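Convex quadratic programming: when \(f(w)\) is a convex quadratic function and the \(g_i(w)\) are affine functions, the problem above is a convex quadratic program.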

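Support vectors: in the linearly separable case, the sample points closest to the separating hyperplane are called support vectors. Support vectors are the points for which constraint \((7.14)\) holds with equality, i.e.

$$y_i (w \cdot x_i + b) - 1 = 0$$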

For positive points with \(y_i = +1\), the support vectors lie on the hyperplane \(H_1: w \cdot x + b = +1\).

For negative points with \(y_i = -1\), the support vectors lie on the hyperplane \(H_2: w \cdot x + b = -1\).

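Margin boundaries: \(H_1\) and \(H_2\) are parallel, and no instance lies between them. They form a band, and the separating hyperplane is parallel to them and lies in the middle of the band. The width of the band, i.e. the distance between \(H_1\) and \(H_2\), is called the margin. It depends on the normal vector \(w\) of the separating hyperplane and equals \(\frac{2}{||w||}\). \(H_1\) and \(H_2\) are called the margin boundaries.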
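The next sketch takes a hypothetical three-point toy set and a hyperplane \((w, b) = ((1/2, 1/2), -2)\) as given (it satisfies every constraint \((7.14)\)), picks out the points lying on \(H_1\) or \(H_2\), and computes the margin width \(\frac{2}{||w||}\). If this \((w, b)\) is the maximum-margin hyperplane for the data, the points found are its support vectors.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def margin_width(w):
    """Distance 2 / ||w|| between H1: w.x + b = +1 and H2: w.x + b = -1."""
    return 2.0 / math.sqrt(dot(w, w))


def points_on_margin(w, b, data, tol=1e-9):
    """Points with y * (w.x + b) == 1 (within tol), i.e. lying on H1 or H2."""
    return [x for x, y in data if abs(y * (dot(w, x) + b) - 1.0) <= tol]


data = [((3, 3), 1), ((4, 3), 1), ((1, 1), -1)]
w, b = (0.5, 0.5), -2.0
assert all(y * (dot(w, x) + b) >= 1.0 for x, y in data)  # feasible for (7.14)
print(points_on_margin(w, b, data))  # [(3, 3), (1, 1)]
print(margin_width(w))               # about 2.83
```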

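Only the support vectors matter in determining the separating hyperplane. Moving a support vector changes the solution, but moving other instances outside the margin boundaries, or even removing them, leaves the solution unchanged.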

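Because the support vectors play the decisive role in determining the hyperplane, the method is called the support vector machine. There are usually few support vectors, so the SVM is determined by a small number of "important" training samples.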

Solving the linearly separable SVM

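To solve the optimization problem \((7.13)-(7.14)\) of the linearly separable SVM, we treat it as the primal problem, apply Lagrangian duality, and obtain the optimal solution of the primal by solving the dual problem. This has two advantages: the dual problem is often easier to solve, and it naturally introduces kernel functions, which extends the method to nonlinear classification.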

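Dual algorithm: first construct the Lagrangian. To do so, introduce a Lagrange multiplier \(\alpha_i \geqslant 0, \ \ i=1,2, ..., N\) for each inequality constraint \((7.14)\) and define the Lagrangian

$$L(w, b, \alpha) = \frac{1}{2}||w||^2 - \sum_{i=1}^{N} \alpha_i y_i (w \cdot x_i + b) + \sum_{i=1}^{N} \alpha_i \tag{7.18}$$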

The dual of the primal problem is the max-min problem \(\max_\alpha \min_{w,b} L(w, b, \alpha)\).

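(1) First compute \(\min_{w,b} L(w, b, \alpha)\): take the partial derivatives with respect to \(w\) and \(b\) and set them to 0:

$$\nabla_w L(w, b, \alpha) = w - \sum_{i=1}^{N} \alpha_i y_i x_i = 0$$

$$\nabla_b L(w, b, \alpha) = -\sum_{i=1}^{N} \alpha_i y_i = 0$$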

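which gives

$$w = \sum_{i=1}^{N} \alpha_i y_i x_i \tag{7.19}$$

$$\sum_{i=1}^{N} \alpha_i y_i = 0 \tag{7.20}$$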

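Substituting \((7.19)\) into \((7.18)\) and using \((7.20)\) gives

$$\min_{w, b} L(w, b, \alpha) = -\frac{1}{2} \sum_{i=1}^{N} \sum_{j=1}^{N} \alpha_i \alpha_j y_i y_j (x_i \cdot x_j) + \sum_{i=1}^{N} \alpha_i$$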

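(2) Maximize \(\min_{w, b} L(w, b, \alpha)\) over \(\alpha\); this is the dual problem

$$\max_{\alpha} \ -\frac{1}{2} \sum_{i=1}^{N} \sum_{j=1}^{N} \alpha_i \alpha_j y_i y_j (x_i \cdot x_j) + \sum_{i=1}^{N} \alpha_i \tag{7.21}$$

$$\text{s.t.} \quad \sum_{i=1}^{N} \alpha_i y_i = 0$$

$$\alpha_i \geqslant 0, \quad i = 1, 2, \dots, N$$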

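Turning the maximization of the objective in \((7.21)\) into a minimization gives the following equivalent dual optimization problem:

$$\min_{\alpha} \ \frac{1}{2} \sum_{i=1}^{N} \sum_{j=1}^{N} \alpha_i \alpha_j y_i y_j (x_i \cdot x_j) - \sum_{i=1}^{N} \alpha_i \tag{7.22}$$

$$\text{s.t.} \quad \sum_{i=1}^{N} \alpha_i y_i = 0 \tag{7.23}$$

$$\alpha_i \geqslant 0, \quad i = 1, 2, \dots, N \tag{7.24}$$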

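The primal problem \((7.13)-(7.14)\) and the dual problem \((7.22)-(7.24)\) satisfy strong duality (convexity plus Slater's condition is enough to guarantee it), so there exist \(w^*, b^*, \alpha^*\) such that \((w^*, b^*)\) solves the primal problem and \(\alpha^*\) solves the dual problem. Solving the primal problem \((7.13)-(7.14)\) can therefore be converted into solving the dual problem \((7.22)-(7.24)\).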

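For a linearly separable training set, suppose the solution of the dual problem \((7.22)-(7.24)\) with respect to \(\alpha\) is \(\alpha^* = (\alpha_1^*, \alpha_2^*, ..., \alpha_N^*)^T\). Then the solution \(w^*, b^*\) of the primal problem \((7.13)-(7.14)\) with respect to \((w, b)\) can be obtained from \(\alpha^*\):

$$w^* = \sum_{i=1}^{N} \alpha_i^* y_i x_i \tag{7.25}$$

$$b^* = y_j - \sum_{i=1}^{N} \alpha_i^* y_i (x_i \cdot x_j) \tag{7.26}$$

where \(j\) is any index with \(\alpha_j^* > 0\).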

The derivation of these two formulas follows.

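By the KKT conditions,

$$\nabla_w L(w^*, b^*, \alpha^*) = w^* - \sum_{i=1}^{N} \alpha_i^* y_i x_i = 0$$

$$\nabla_b L(w^*, b^*, \alpha^*) = -\sum_{i=1}^{N} \alpha_i^* y_i = 0$$

$$\alpha_i^* \left( y_i (w^* \cdot x_i + b^*) - 1 \right) = 0, \quad i = 1, 2, \dots, N$$

$$y_i (w^* \cdot x_i + b^*) - 1 \geqslant 0, \quad i = 1, 2, \dots, N$$

$$\alpha_i^* \geqslant 0, \quad i = 1, 2, \dots, N$$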

It follows that

$$w^* = \sum_{i=1}^{N} \alpha_i^* y_i x_i \tag{7.27}$$

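At least one \(\alpha_j^* > 0\). (By contradiction: if \(\alpha^* = 0\), then \((7.27)\) gives \(w^* = 0\); but then the constraints become \(y_i b^* \geqslant 1\) for all \(i\), which cannot hold when both classes are present, so \(w^* = 0\) is not a solution of the primal problem \((7.13)-(7.14)\).) For such a \(j\), complementary slackness gives

$$y_j (w^* \cdot x_j + b^*) - 1 = 0 \tag{7.28}$$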

Substituting \((7.25)\) into \((7.28)\) and noting \(y_j^2 = 1\) gives

$$b^* = y_j - \sum_{i=1}^{N} \alpha_i^* y_i (x_i \cdot x_j)$$

The separating hyperplane can then be written as

$$\sum_{i=1}^{N} \alpha_i^* y_i (x \cdot x_i) + b^* = 0$$

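and the classification decision function as

$$f(x) = sign\left( \sum_{i=1}^{N} \alpha_i^* y_i (x \cdot x_i) + b^* \right)$$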

So the classification decision function depends only on the inner products between the input \(x\) and the training inputs.
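To make \((7.25)\), \((7.26)\) and the decision function concrete, here is a small sketch that recovers \(w^*\) and \(b^*\) from a dual solution and classifies new points using only inner products with the training inputs. The toy data and the multipliers \(\alpha^* = (1/4, 0, 1/4)\) are assumed for illustration; the checks at the end confirm dual feasibility, primal feasibility and complementary slackness for the recovered \((w^*, b^*)\), which for this convex problem means the assumed \(\alpha^*\) is indeed optimal.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def recover_primal(alpha, X, Y):
    """w* from (7.25) and b* from (7.26), using the first index j with alpha_j > 0."""
    d = len(X[0])
    w = [sum(a * y * x[k] for a, x, y in zip(alpha, X, Y)) for k in range(d)]
    j = next(i for i, a in enumerate(alpha) if a > 0)
    b = Y[j] - sum(a * y * dot(x, X[j]) for a, x, y in zip(alpha, X, Y))
    return w, b


def decide(x, alpha, X, Y, b):
    """sign(sum_i alpha_i y_i (x . x_i) + b); ties at 0 are sent to +1 here."""
    s = sum(a * y * dot(x, xi) for a, xi, y in zip(alpha, X, Y)) + b
    return 1 if s >= 0 else -1


X = [(3, 3), (4, 3), (1, 1)]
Y = [1, 1, -1]
alpha = [0.25, 0.0, 0.25]  # assumed dual solution for this toy set

w, b = recover_primal(alpha, X, Y)
print(w, b)  # [0.5, 0.5] -2.0
print(decide((5, 5), alpha, X, Y, b), decide((0, 0), alpha, X, Y, b))  # 1 -1
```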

Support vectors

By \((7.25)-(7.26)\), \(w^*\) and \(b^*\) depend only on the sample points \((x_i, y_i)\) with \(\alpha_i^* > 0\); the other sample points have no effect on \(w^*\) and \(b^*\). The instances \(x_i \in R^n\) with \(\alpha_i^* > 0\) are called support vectors.

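By the KKT complementary slackness condition,

$$\alpha_i^* \left( y_i (w^* \cdot x_i + b^*) - 1 \right) = 0, \quad i = 1, 2, \dots, N$$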

so for an instance \(x_i\) with \(\alpha_i^* > 0\) we have \(y_i ( w^* \cdot x_i + b^*) - 1 = 0\), i.e.

$$w^* \cdot x_i + b^* = \pm 1$$

Hence \(x_i\) must lie on a margin boundary, which agrees with the earlier definition of support vectors.

Going further

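For linearly separable problems, the learning algorithm of the linearly separable SVM above (hard-margin maximization) works perfectly. In real problems, however, training sets are often not linearly separable because the samples contain noise or outliers, and a more general learning algorithm is needed.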

Linear SVM and soft-margin maximization

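Soft margin: the learning method of the linearly separable SVM does not apply to data that are not linearly separable, because the inequality constraints above cannot all be satisfied. To extend it to such problems, hard-margin maximization must be modified into soft-margin maximization.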

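Typically, the training data contain some outliers; after these outliers are removed, the remaining majority of the sample points form a linearly separable set.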

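Slack variables: non-separability means that some sample points \((x_i, y_i)\) cannot satisfy the constraint \((7.14)\) that the functional margin be at least 1. To handle this, introduce a slack variable \(\xi_i \geqslant 0\) for each sample point \((x_i, y_i)\), so that the functional margin plus the slack variable is at least 1. The constraints become

$$y_i (w \cdot x_i + b) \geqslant 1 - \xi_i$$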

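At the same time, a cost is paid for each slack variable \(\xi_i\), and the objective becomes

$$\frac{1}{2}||w||^2 + C \sum_{i=1}^{N} \xi_i \tag{7.31}$$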

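Here \(C > 0\) is called the penalty parameter and is generally chosen according to the application: a larger \(C\) penalizes misclassification more heavily, a smaller \(C\) less heavily.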

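Minimizing the objective \((7.31)\) has two aims: keep \(\frac{1}{2}||w||^2\) small, i.e. the margin large, and keep the number of misclassified points (more precisely, the total slack \(\sum_i \xi_i\)) small. \(C\) balances the two.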

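Learning problem: the problem becomes the following convex quadratic program

$$\min_{w, b, \xi} \ \frac{1}{2}||w||^2 + C \sum_{i=1}^{N} \xi_i \tag{7.32}$$

$$\text{s.t.} \quad y_i (w \cdot x_i + b) \geqslant 1 - \xi_i, \quad i = 1, 2, \dots, N \tag{7.33}$$

$$\xi_i \geqslant 0, \quad i = 1, 2, \dots, N \tag{7.34}$$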

It can be shown that the solution for \(w\) is unique, but the solution for \(b\) may not be unique; it can lie anywhere in an interval.

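Let the solution of problem \((7.32)-(7.34)\) be \(w^*, b^*\). This gives the separating hyperplane \(w^* \cdot x + b^* = 0\) and the classification decision function \(f(x) = sign(w^* \cdot x + b^*)\). This model is called the linear support vector machine for training data that are not linearly separable, or linear SVM for short.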

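Clearly the linear SVM includes the linearly separable SVM. Since real training sets are often not linearly separable, the linear SVM is more widely applicable.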

Solving the linear SVM

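The Lagrangian of the primal problem \((7.32)-(7.34)\) is

$$L(w, b, \xi, \alpha, \mu) = \frac{1}{2}||w||^2 + C \sum_{i=1}^{N} \xi_i - \sum_{i=1}^{N} \alpha_i \left( y_i (w \cdot x_i + b) - 1 + \xi_i \right) - \sum_{i=1}^{N} \mu_i \xi_i \tag{7.40}$$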

where \(\alpha_i \geqslant 0, \mu_i \geqslant 0\).

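The dual problem is the max-min problem of the Lagrangian. First minimize over \(w, b, \xi\): setting the partial derivatives to 0 gives

$$w = \sum_{i=1}^{N} \alpha_i y_i x_i \tag{7.41}$$

$$\sum_{i=1}^{N} \alpha_i y_i = 0 \tag{7.42}$$

$$C - \alpha_i - \mu_i = 0 \tag{7.43}$$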

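Substituting \((7.41)-(7.43)\) into \((7.40)\) gives

$$\min_{w, b, \xi} L(w, b, \xi, \alpha, \mu) = -\frac{1}{2} \sum_{i=1}^{N} \sum_{j=1}^{N} \alpha_i \alpha_j y_i y_j (x_i \cdot x_j) + \sum_{i=1}^{N} \alpha_i$$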

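Then maximizing \(\min_{w, b, \xi} L(w, b, \xi, \alpha, \mu)\) over \(\alpha\) gives the dual problem:

$$\max_{\alpha} \ -\frac{1}{2} \sum_{i=1}^{N} \sum_{j=1}^{N} \alpha_i \alpha_j y_i y_j (x_i \cdot x_j) + \sum_{i=1}^{N} \alpha_i \tag{7.44}$$

$$\text{s.t.} \quad \sum_{i=1}^{N} \alpha_i y_i = 0 \tag{7.45}$$

$$C - \alpha_i - \mu_i = 0 \tag{7.46}$$

$$\alpha_i \geqslant 0 \tag{7.47}$$

$$\mu_i \geqslant 0, \quad i = 1, 2, \dots, N \tag{7.48}$$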

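Transform the dual problem \((7.44)-(7.48)\): use the equality constraint \((7.46)\) to eliminate \(\mu_i\), leaving only the variables \(\alpha_i\), and rewrite the constraints \((7.46)-(7.48)\) as

$$0 \leqslant \alpha_i \leqslant C$$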

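Finally, turning the maximization of the objective into a minimization gives the dual problem \((7.37)-(7.39)\):

$$\min_{\alpha} \ \frac{1}{2} \sum_{i=1}^{N} \sum_{j=1}^{N} \alpha_i \alpha_j y_i y_j (x_i \cdot x_j) - \sum_{i=1}^{N} \alpha_i \tag{7.37}$$

$$\text{s.t.} \quad \sum_{i=1}^{N} \alpha_i y_i = 0 \tag{7.38}$$

$$0 \leqslant \alpha_i \leqslant C, \quad i = 1, 2, \dots, N \tag{7.39}$$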

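Theorem 7.3: let \(\alpha^* = (\alpha_1^*, \alpha_2^*, ..., \alpha_N^*)^T\) be a solution of the dual problem \((7.37)-(7.39)\). If some component \(\alpha_j^*\) of \(\alpha^*\) satisfies \(0 < \alpha_j^* < C\), then the solution of the primal problem \((7.32)-(7.34)\) can be obtained as

$$w^* = \sum_{i=1}^{N} \alpha_i^* y_i x_i \tag{7.50}$$

$$b^* = y_j - \sum_{i=1}^{N} y_i \alpha_i^* (x_i \cdot x_j) \tag{7.51}$$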

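Proof: the primal problem is a convex quadratic program, so its solution satisfies the KKT conditions:

$$\nabla_w L(w^*, b^*, \xi^*, \alpha^*, \mu^*) = w^* - \sum_{i=1}^{N} \alpha_i^* y_i x_i = 0 \tag{7.52}$$

$$\nabla_b L(w^*, b^*, \xi^*, \alpha^*, \mu^*) = -\sum_{i=1}^{N} \alpha_i^* y_i = 0$$

$$\nabla_{\xi_i} L(w^*, b^*, \xi^*, \alpha^*, \mu^*) = C - \alpha_i^* - \mu_i^* = 0$$

$$\alpha_i^* \left( y_i (w^* \cdot x_i + b^*) - 1 + \xi_i^* \right) = 0 \tag{7.53}$$

$$\mu_i^* \xi_i^* = 0 \tag{7.54}$$

$$y_i (w^* \cdot x_i + b^*) - 1 + \xi_i^* \geqslant 0$$

$$\xi_i^* \geqslant 0, \quad \alpha_i^* \geqslant 0, \quad \mu_i^* \geqslant 0, \quad i = 1, 2, \dots, N$$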

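From \((7.52)\), \((7.50)\) follows immediately. Moreover, by \((7.53)-(7.54)\), if some \(\alpha_j^*\) satisfies \(0 < \alpha_j^* < C\), then \(y_j(w^* \cdot x_j + b^*) - 1 = 0\) (since \(\alpha_j^* < C\) gives \(\mu_j^* = C - \alpha_j^* > 0\) and hence \(\xi_j^* = 0\), while \(\alpha_j^* > 0\) makes the bracket in \((7.53)\) vanish), which yields \((7.51)\).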

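Separating hyperplane: the separating hyperplane can therefore be written as

$$\sum_{i=1}^{N} \alpha_i^* y_i (x \cdot x_i) + b^* = 0$$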

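and the classification decision function as

$$f(x) = sign\left( \sum_{i=1}^{N} \alpha_i^* y_i (x \cdot x_i) + b^* \right)$$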

Support vectors

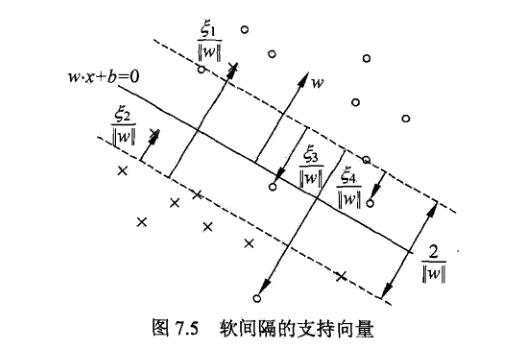

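In the non-separable case, the instances \(x_i\) of the sample points \((x_i, y_i)\) with \(\alpha_i^* > 0\) in the solution \(\alpha^* = (\alpha_1^*, \alpha_2^*, ..., \alpha_N^*)^T\) of the dual problem \((7.37)-(7.39)\) are called support vectors (soft-margin support vectors). As the figure shows, support vectors here are more complicated than in the linearly separable case. The figure also marks the distance \(\frac{\xi_i}{||w||}\) from an instance \(x_i\) to its margin boundary.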

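A soft-margin support vector \(x_i\) lies either on the margin boundary, between the margin boundary and the separating hyperplane, or on the misclassified side of the separating hyperplane.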

If \(0 < \alpha_i^* < C\), then \(\xi_i = 0\) and the support vector \(x_i\) lies exactly on the margin boundary.

If \(\alpha_i^* = C\) and \(0 < \xi_i < 1\), the classification is correct and \(x_i\) lies between the margin boundary and the separating hyperplane.

If \(\alpha_i^* = C\) and \(\xi_i = 1\), then \(x_i\) lies on the separating hyperplane.

If \(\alpha_i^* = C\) and \(\xi_i > 1\), then \(x_i\) lies on the misclassified side of the separating hyperplane.
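The case analysis above can be written as a small lookup. This is a sketch that assumes \(\alpha_i^*\) and \(\xi_i\) come from an already solved soft-margin problem; the function names, the tolerance `tol` and the model \((w, b) = ((1, 0), 0)\) with its \((\alpha, x)\) pairs are hypothetical. It uses the fact that, for fixed \((w, b)\), the optimal slack is \(\xi_i = \max(0, 1 - y_i(w \cdot x_i + b))\), and it also covers \(\alpha_i^* = C, \xi_i = 0\), which the list does not mention (the point then lies on the margin boundary).

```python
def slack(w, b, x, y):
    """Optimal slack for fixed (w, b): xi = max(0, 1 - y (w.x + b))."""
    return max(0.0, 1.0 - y * (sum(a * c for a, c in zip(w, x)) + b))


def soft_margin_position(alpha, xi, C, tol=1e-8):
    """Where a sample sits, from its multiplier alpha and slack xi."""
    if alpha <= tol:
        return "not a support vector"
    if alpha < C - tol or xi <= tol:
        # 0 < alpha < C forces xi = 0 (mu = C - alpha > 0 and mu * xi = 0).
        return "on the margin boundary"
    if xi < 1.0 - tol:
        return "between the margin boundary and the separating hyperplane"
    if xi <= 1.0 + tol:
        return "on the separating hyperplane"
    return "on the misclassified side of the separating hyperplane"


w, b, C = (1.0, 0.0), 0.0, 1.0  # hypothetical solved model
for x, y, a in [((2.0, 0.0), 1, 0.0), ((1.0, 0.0), 1, 0.4),
                ((0.5, 0.0), 1, C), ((0.0, 0.0), 1, C), ((-1.0, 0.0), 1, C)]:
    print(x, soft_margin_position(a, slack(w, b, x, y), C))
```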

Hinge loss

The loss function

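Linear SVM learning has another interpretation: minimizing the objective

$$\sum_{i=1}^{N} [1 - y_i (w \cdot x_i + b)]_+ + \lambda ||w||^2$$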

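The first term of the objective is the empirical risk. The function

$$L(y (w \cdot x + b)) = [1 - y (w \cdot x + b)]_+$$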

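is called the hinge loss. The subscript "+" denotes the positive-part function

$$[z]_+ = \begin{cases} z, & z > 0 \\ 0, & z \leqslant 0 \end{cases}$$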

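That is, when a sample point \((x_i, y_i)\) is classified correctly with a functional margin (confidence) \(y_i(w \cdot x_i + b)\) of at least 1, the loss is 0; otherwise the loss is \(1 - y_i(w \cdot x_i + b)\).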

The second term of the objective is the squared \(L_2\) norm of \(w\) with coefficient \(\lambda\); it is the regularization term.
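The hinge-loss view suggests training a linear SVM by minimizing the regularized hinge objective directly. The sketch below uses plain full-batch subgradient descent; this is a swapped-in technique for illustration, not the dual/SMO route these notes follow, and the step size `lr`, the number of steps, \(\lambda\) and the toy data are arbitrary choices. Because the hinge loss has a kink at a functional margin of exactly 1, the code uses a subgradient and keeps the best iterate seen.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def objective(w, b, X, Y, lam):
    """Sum of hinge losses plus lam * ||w||^2."""
    hinge = sum(max(0.0, 1.0 - y * (dot(w, x) + b)) for x, y in zip(X, Y))
    return hinge + lam * dot(w, w)


def train_subgradient(X, Y, lam=0.01, lr=0.01, steps=2000):
    """Full-batch subgradient descent on the hinge objective; returns the best (w, b) seen."""
    w, b = [0.0] * len(X[0]), 0.0
    best_obj, best_w, best_b = objective(w, b, X, Y, lam), list(w), b
    for _ in range(steps):
        gw = [2.0 * lam * wk for wk in w]  # gradient of lam * ||w||^2
        gb = 0.0
        for x, y in zip(X, Y):
            if y * (dot(w, x) + b) < 1.0:  # active hinge: subgradient is (-y x, -y)
                gw = [g - y * xk for g, xk in zip(gw, x)]
                gb -= y
        w = [wk - lr * g for wk, g in zip(w, gw)]
        b -= lr * gb
        obj = objective(w, b, X, Y, lam)
        if obj < best_obj:
            best_obj, best_w, best_b = obj, list(w), b
    return best_w, best_b


X = [(3, 3), (4, 3), (1, 1)]
Y = [1, 1, -1]
w, b = train_subgradient(X, Y)
print(w, b)
print([1 if dot(w, x) + b >= 0 else -1 for x in X])
```

With a constant step size the iterates keep oscillating near the optimum, which is why the best iterate is returned rather than the last one.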

Proof of equivalence

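The primal optimization problem of the linear SVM

$$\min_{w, b, \xi} \ \frac{1}{2}||w||^2 + C \sum_{i=1}^{N} \xi_i \tag{7.60}$$

$$\text{s.t.} \quad y_i (w \cdot x_i + b) \geqslant 1 - \xi_i, \quad i = 1, 2, \dots, N \tag{7.61}$$

$$\xi_i \geqslant 0, \quad i = 1, 2, \dots, N \tag{7.62}$$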

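is equivalent to

$$\min_{w, b} \ \sum_{i=1}^{N} [1 - y_i (w \cdot x_i + b)]_+ + \lambda ||w||^2 \tag{7.63}$$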

The optimization problem \((7.63)\) can be rewritten as problem \((7.60)-(7.62)\). Let

$$[1 - y_i (w \cdot x_i + b)]_+ = \xi_i \tag{7.64}$$

Then \(\xi_i \geqslant 0\), so \((7.62)\) holds. From \((7.64)\):

when \(1 - y_i (w \cdot x_i + b) > 0\), we have \(y_i (w \cdot x_i + b) = 1 - \xi_i\);

when \(1 - y_i (w \cdot x_i + b) \leqslant 0\), we have \(\xi_i = 0\) and \(y_i (w \cdot x_i + b) \geqslant 1 - \xi_i\).

Hence \((7.61)\) holds, and \((7.63)\) can be written as

$$\min_{w, b} \ \sum_{i=1}^{N} \xi_i + \lambda ||w||^2$$

Taking \(\lambda = \frac{1}{2C}\) gives

$$\min_{w, b} \ \frac{1}{C} \left( \frac{1}{2}||w||^2 + C \sum_{i=1}^{N} \xi_i \right)$$

which is equivalent to \((7.60)\).
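A quick numerical sanity check of the equivalence (not a proof). For any fixed \((w, b)\), setting \(\xi_i\) as in \((7.64)\) gives the smallest feasible slacks, and with \(\lambda = \frac{1}{2C}\) the objective \((7.63)\) should equal the objective \((7.60)\) divided by \(C\). The random data, the sampled \((w, b)\) and the helper names are hypothetical.

```python
import random


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def primal_objective(w, b, X, Y, C):
    """(7.60) evaluated at the smallest feasible slacks, which are exactly (7.64)."""
    xi = [max(0.0, 1.0 - y * (dot(w, x) + b)) for x, y in zip(X, Y)]
    return 0.5 * dot(w, w) + C * sum(xi)


def hinge_objective(w, b, X, Y, lam):
    """(7.63): sum of hinge losses plus lam * ||w||^2."""
    return sum(max(0.0, 1.0 - y * (dot(w, x) + b)) for x, y in zip(X, Y)) + lam * dot(w, w)


def max_gap(C=3.0, trials=100, seed=0):
    """Largest |(7.63) - (7.60) / C| over random (w, b), with lam = 1 / (2C)."""
    rng = random.Random(seed)
    X = [(rng.uniform(-2, 2), rng.uniform(-2, 2)) for _ in range(20)]
    Y = [1 if x1 + x2 > 0 else -1 for x1, x2 in X]
    gap = 0.0
    for _ in range(trials):
        w = (rng.uniform(-2, 2), rng.uniform(-2, 2))
        b = rng.uniform(-1, 1)
        lhs = hinge_objective(w, b, X, Y, lam=1.0 / (2.0 * C))
        rhs = primal_objective(w, b, X, Y, C) / C
        gap = max(gap, abs(lhs - rhs))
    return gap


print(max_gap())  # floating-point noise only
```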

Illustration

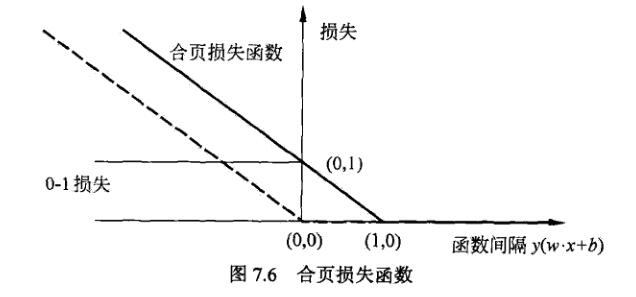

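The hinge loss is plotted in the figure: the horizontal axis is the functional margin \(y(w \cdot x + b)\) and the vertical axis is the loss. The function is shaped like a hinge, hence the name hinge loss.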

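The figure also shows the 0-1 loss, which can be regarded as the true loss of binary classification; the hinge loss is an upper bound of the 0-1 loss. Because the 0-1 loss is not continuously differentiable, optimizing it directly is difficult, so the linear SVM can be viewed as optimizing an objective built from an upper bound of the 0-1 loss (the hinge loss). Such an upper-bound loss is also called a surrogate loss.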

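The dashed line in the figure is the perceptron loss \([-y_i(w \cdot x_i + b)]_+\): the loss is 0 when the sample is classified correctly and \(-y_i(w \cdot x_i + b)\) otherwise. By contrast, the hinge loss is 0 only when the sample is classified correctly with sufficiently high confidence, which places a stricter requirement on learning.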
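The three losses as functions of the functional margin \(m = y(w \cdot x + b)\), in a short sketch. Counting \(m \leqslant 0\) as an error in the 0-1 loss is a convention assumed here; the grid check confirms numerically that the hinge loss bounds the 0-1 loss from above, and \(m = 0.5\) shows a case where the perceptron loss is already 0 but the hinge loss is not.

```python
def zero_one_loss(m):
    """0-1 loss of the functional margin m = y (w.x + b); m <= 0 counted as an error."""
    return 1.0 if m <= 0 else 0.0


def hinge_loss(m):
    """Hinge loss [1 - m]_+."""
    return max(0.0, 1.0 - m)


def perceptron_loss(m):
    """Perceptron loss [-m]_+."""
    return max(0.0, -m)


grid = [i / 10 for i in range(-30, 31)]
assert all(hinge_loss(m) >= zero_one_loss(m) for m in grid)  # hinge upper-bounds 0-1
for m in (-1.0, 0.0, 0.5, 1.0, 2.0):
    print(m, zero_one_loss(m), hinge_loss(m), perceptron_loss(m))
```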

Nonlinear SVM and kernel functions

The kernel trick

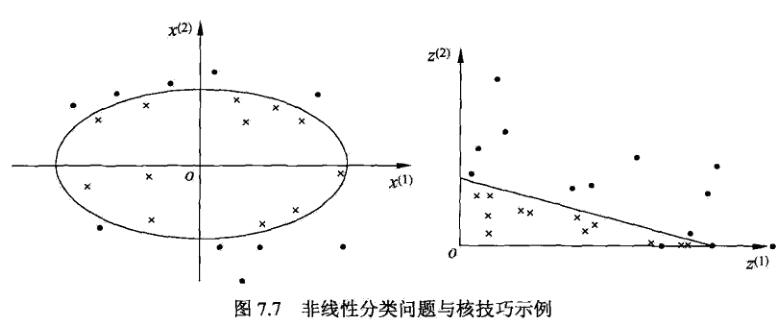

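In the classification problem in the left panel, the positive and negative instances cannot be separated correctly by a straight line (a linear model), but they can be separated correctly by an ellipse (a nonlinear model).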

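If positive and negative instances can be separated correctly by a hypersurface, the problem is called a nonlinearly separable problem. Nonlinear problems are often hard to solve, so we would like to solve them with methods for linear classification. The approach is to apply a nonlinear transformation that turns the nonlinear problem into a linear one, and then solve the original problem by solving the linear problem.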

Let the original space be \(X \subset R^2\), with \(x = (x^{(1)}, x^{(2)})^T \in X\),

and let the new space be \(Z \subset R^2\), with \(z = (z^{(1)}, z^{(2)})^T \in Z\).

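Define the mapping from the original space to the new space:

$$z = \phi(x) = \left( (x^{(1)})^2, (x^{(2)})^2 \right)^T$$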

Then the ellipse \(w_1(x^{(1)})^2 + w_2(x^{(2)})^2 + b = 0\) in the original space

becomes the straight line \(w_1 z^{(1)} + w_2 z^{(2)} + b = 0\) in the new space.
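A minimal check of this transformation, assuming a hypothetical ellipse \((x^{(1)})^2/4 + (x^{(2)})^2 - 1 = 0\), i.e. \(w_1 = 0.25, w_2 = 1, b = -1\). Points on the ellipse map onto the line in the new space, and points inside and outside the ellipse land on opposite sides of that line.

```python
import math


def phi(x):
    """The map z = ((x1)^2, (x2)^2) from the original space to the new space."""
    return (x[0] ** 2, x[1] ** 2)


# Hypothetical ellipse (x1)^2 / 4 + (x2)^2 - 1 = 0, i.e. w1 = 0.25, w2 = 1, b = -1.
w1, w2, b = 0.25, 1.0, -1.0


def line_value(x):
    """w1 z1 + w2 z2 + b evaluated at z = phi(x): linear in z."""
    z = phi(x)
    return w1 * z[0] + w2 * z[1] + b


on_ellipse = [(2 * math.cos(t), math.sin(t)) for t in (0.0, 0.7, 1.9, 3.1, 4.4)]
print([round(line_value(x), 12) for x in on_ellipse])  # all (numerically) zero
print(line_value((0.0, 0.0)), line_value((3.0, 0.0)))   # inside < 0 < outside
```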

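Applied to the SVM, the basic idea of the kernel trick is to use a nonlinear transformation that maps the input space to a feature space, so that a hypersurface in the input space \(R^n\) corresponds to a hyperplane in the feature space \(\mathcal{H}\). The classification task can then be accomplished by learning a linear SVM in the feature space.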

Kernel functions

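Let \(\mathcal{X}\) be the input space and \(\mathcal{H}\) the feature space. If there exists a mapping \(\phi(x): \mathcal{X} \to \mathcal{H}\) from \(\mathcal{X}\) to \(\mathcal{H}\) such that for all \(x, z \in \mathcal{X}\) the function \(K(x, z)\) satisfies

$$K(x, z) = \phi(x) \cdot \phi(z)$$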

then \(K(x, z)\) is called a kernel function and \(\phi(x)\) a mapping function, where \(\phi(x) \cdot \phi(z)\) denotes the inner product.

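The idea of the kernel trick is to define only the kernel function \(K(x, z)\) for learning and prediction, without explicitly defining the mapping \(\phi\). Usually, computing \(K(x, z)\) directly is easy, whereas computing it through \(\phi(x)\) and \(\phi(z)\) is not, because the feature space \(\mathcal{H}\) is generally high-dimensional, or even infinite-dimensional.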

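For a given kernel \(K(x, z)\), the feature space \(\mathcal{H}\) and the mapping \(\phi\) are not unique: different feature spaces can be chosen, and even within the same feature space different mappings can be chosen.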
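A small check of the kernel definition and of this non-uniqueness, using the kernel \(K(x, z) = (x \cdot z)^2\) on \(R^2\) (our choice for illustration). Two different feature maps, into \(R^3\) and into \(R^4\), give the same inner products, while \(K\) itself is computed directly in the input space without forming either map.

```python
import math


def kernel(x, z):
    """K(x, z) = (x . z)^2 on R^2, computed directly in the input space."""
    return (x[0] * z[0] + x[1] * z[1]) ** 2


def phi3(x):
    """A feature map into R^3: (x1^2, sqrt(2) x1 x2, x2^2)."""
    return (x[0] ** 2, math.sqrt(2) * x[0] * x[1], x[1] ** 2)


def phi4(x):
    """A different map into R^4 with the same inner products: (x1^2, x1 x2, x1 x2, x2^2)."""
    return (x[0] ** 2, x[0] * x[1], x[0] * x[1], x[1] ** 2)


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


pairs = [((1.0, 2.0), (3.0, -1.0)), ((0.5, -1.5), (2.0, 4.0))]
for x, z in pairs:
    print(kernel(x, z), dot(phi3(x), phi3(z)), dot(phi4(x), phi4(z)))
```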

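Learning a nonlinear SVM: note that in the dual problem of the linear SVM, both the objective and the decision function (separating hyperplane) involve only inner products between instances. The inner product \(x_i \cdot x_j\) in the dual objective \((7.37)\) can be replaced by \(K(x_i, x_j) = \phi(x_i) \cdot \phi(x_j)\). The dual objective then becomes

$$W(\alpha) = \frac{1}{2} \sum_{i=1}^{N} \sum_{j=1}^{N} \alpha_i \alpha_j y_i y_j K(x_i, x_j) - \sum_{i=1}^{N} \alpha_i$$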

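and the classification decision function becomes

$$f(x) = sign\left( \sum_{i=1}^{N} \alpha_i^* y_i \, \phi(x_i) \cdot \phi(x) + b^* \right) = sign\left( \sum_{i=1}^{N} \alpha_i^* y_i K(x_i, x) + b^* \right)$$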

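This amounts to learning a linear SVM from the training samples in the new feature space. Learning happens implicitly in the feature space, without explicitly defining the feature space or the mapping; this technique is called the kernel trick. When the mapping is nonlinear, the learned SVM with the kernel is a nonlinear classification model.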
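Because only \(K\) appears, the same code serves any kernel. The sketch below evaluates the kernelized dual objective \(W(\alpha)\) and the decision function for a kernel passed in as a Python function. The toy data, the multipliers \(\alpha^* = (1/4, 0, 1/4)\) and \(b^* = -2\) are assumed for illustration; they correspond to the linear kernel \(K(x, z) = x \cdot z\), where the kernelized formulas reduce to the linear SVM. With another kernel, \(\alpha^*\) and \(b^*\) would have to be re-solved.

```python
def linear_kernel(x, z):
    return sum(a * b for a, b in zip(x, z))


def dual_objective(alpha, X, Y, kernel):
    """W(alpha) = 1/2 sum_ij alpha_i alpha_j y_i y_j K(x_i, x_j) - sum_i alpha_i."""
    n = len(X)
    quad = sum(alpha[i] * alpha[j] * Y[i] * Y[j] * kernel(X[i], X[j])
               for i in range(n) for j in range(n))
    return 0.5 * quad - sum(alpha)


def kernel_decision(x, alpha, X, Y, b, kernel):
    """f(x) = sign(sum_i alpha_i y_i K(x_i, x) + b); ties at 0 go to +1 here."""
    s = sum(a * y * kernel(xi, x) for a, xi, y in zip(alpha, X, Y)) + b
    return 1 if s >= 0 else -1


X = [(3, 3), (4, 3), (1, 1)]
Y = [1, 1, -1]
alpha, b = [0.25, 0.0, 0.25], -2.0  # assumed solution for the linear kernel
print(dual_objective(alpha, X, Y, linear_kernel))              # -0.25
print(kernel_decision((5, 5), alpha, X, Y, b, linear_kernel))  # 1
```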

In practice, the kernel is often chosen directly based on domain knowledge, and its effectiveness has to be verified experimentally.

Positive definite kernels

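What conditions must a function \(K(x, z)\) satisfy to be a kernel? The kernels usually meant are positive definite kernels; the following theorem characterizes them.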

Theorem 7.5 (necessary and sufficient condition for a positive definite kernel)

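Let \(K: \mathcal{X} \times \mathcal{X} \to R\) be a symmetric function. Then \(K(x, z)\) is a positive definite kernel if and only if, for any \(x_i \in \mathcal{X}, \ \ i=1, 2, ...,m\), the Gram matrix of \(K(x, z)\)

$$K = [K(x_i, x_j)]_{m \times m}$$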

is positive semidefinite.

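For a concrete function \(K(x, z)\), however, checking whether it is a positive definite kernel is not easy, because the Gram matrix of \(K\) must be shown to be positive semidefinite for every finite input set \(\{x_1, ..., x_m\}\). In practice, existing kernels are usually used.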
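A finite check cannot prove that \(K\) is a kernel, but it can refute one. A minimal sketch: build the Gram matrix on one sample and attempt a Cholesky factorization of \(K + \epsilon I\), where \(\epsilon\) is a small, arbitrarily chosen jitter that absorbs rounding error. Failure means (up to rounding) that the Gram matrix has an eigenvalue below \(-\epsilon\), so the function is not a positive definite kernel; success only says that this one sample passed. The Gaussian kernel and the function \(-||x - z||^2\) serve as a passing and a failing example.

```python
import math


def gram(kernel, xs):
    """Gram matrix [K(x_i, x_j)] on a finite sample."""
    return [[kernel(a, b) for b in xs] for a in xs]


def passes_psd_check(M, jitter=1e-9):
    """Try Cholesky on M + jitter * I; False means M has an eigenvalue below -jitter."""
    n = len(M)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = M[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                s += jitter
                if s <= 0.0:
                    return False
                L[i][i] = math.sqrt(s)
            else:
                L[i][j] = s / L[j][j]
    return True


def gaussian(x, z, sigma=1.0):
    return math.exp(-sum((a - b) ** 2 for a, b in zip(x, z)) / (2 * sigma ** 2))


def neg_sq_dist(x, z):
    """-||x - z||^2: symmetric, but not a positive definite kernel."""
    return -sum((a - b) ** 2 for a, b in zip(x, z))


xs = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (2.0, 2.0)]
print(passes_psd_check(gram(gaussian, xs)))     # True
print(passes_psd_check(gram(neg_sq_dist, xs)))  # False
```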

Common kernel functions

(1) Polynomial kernel function

$$K(x, z) = (x \cdot z + 1)^p$$

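The corresponding SVM is a polynomial classifier of degree \(p\). In this case the classification decision function becomes

$$f(x) = sign\left( \sum_{i=1}^{N} \alpha_i^* y_i (x_i \cdot x + 1)^p + b^* \right)$$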

(2) Gaussian kernel function

$$K(x, z) = \exp\left( -\frac{||x - z||^2}{2\sigma^2} \right)$$

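The corresponding SVM is a Gaussian radial basis function classifier. In this case the classification decision function becomes

$$f(x) = sign\left( \sum_{i=1}^{N} \alpha_i^* y_i \exp\left( -\frac{||x - x_i||^2}{2\sigma^2} \right) + b^* \right)$$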
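The two kernels written out directly, as a minimal sketch (`p` and `sigma` are the hyperparameters \(p\) and \(\sigma\); the defaults are arbitrary). Either function can be dropped into any code that uses \(K(x_i, x)\) in place of \(x_i \cdot x\).

```python
import math


def polynomial_kernel(x, z, p=2):
    """K(x, z) = (x . z + 1)^p."""
    return (sum(a * b for a, b in zip(x, z)) + 1.0) ** p


def gaussian_kernel(x, z, sigma=1.0):
    """K(x, z) = exp(-||x - z||^2 / (2 sigma^2))."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, z))
    return math.exp(-sq_dist / (2.0 * sigma ** 2))


print(polynomial_kernel((1, 2), (3, -1), p=2))  # 4.0
print(gaussian_kernel((0, 0), (0, 0)))           # 1.0
```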

(3) String kernel functions

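Kernels can be defined not only on Euclidean spaces but also on sets of discrete data. For example, a string kernel is a kernel defined on a set of strings. String kernels are used in text classification, information retrieval, bioinformatics and other areas.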

Identifying kernel functions

(1) If \(K\) is a kernel, then \(aK\) is also a kernel for any \(a > 0\).

(2) If \(K'\) and \(K''\) are kernels, then their product \(K' K''\) is also a kernel.

(3) For any real-valued function \(f(x)\) on the input space, \(K(x_1, x_2) = f(x_1) f(x_2)\) is a kernel.

Sequential minimal optimization

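SVM learning can be formalized as a convex quadratic program. Such a program has a global optimum, and many optimization algorithms can solve it. When the training set is large, however, these algorithms often become so inefficient that they are unusable, so implementing SVMs efficiently is an important problem.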

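SMO (sequential minimal optimization) is one such fast learning algorithm. It solves the following dual convex quadratic program

$$\min_{\alpha} \ \frac{1}{2} \sum_{i=1}^{N} \sum_{j=1}^{N} \alpha_i \alpha_j y_i y_j K(x_i, x_j) - \sum_{i=1}^{N} \alpha_i \tag{7.98}$$

$$\text{s.t.} \quad \sum_{i=1}^{N} \alpha_i y_i = 0 \tag{7.99}$$

$$0 \leqslant \alpha_i \leqslant C, \quad i = 1, 2, \dots, N \tag{7.100}$$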

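The variables are the Lagrange multipliers; each variable \(\alpha_i\) corresponds to one sample point \((x_i, y_i)\), and the number of variables equals the training-set size \(N\).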

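SMO algorithm: SMO is a heuristic algorithm. Its basic idea is as follows. If the values of all variables satisfy the KKT conditions of the optimization problem, the solution has been found. Otherwise, choose two variables, fix all the others, and build a quadratic program in these two variables. The solution of this subproblem should be closer to the solution of the original quadratic program, because it makes the objective of the original problem smaller. Importantly, the subproblem can be solved analytically, which greatly speeds up the computation.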

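Of the two variables in the subproblem, one is the variable that violates the KKT conditions most severely, and the other is determined automatically by the constraints. SMO thus repeatedly decomposes the original problem into subproblems and solves them, thereby solving the original problem.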

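The SMO algorithm has two parts: an analytic method for solving the two-variable quadratic program, and a heuristic method for choosing the variables.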

Solving the two-variable quadratic program

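Suppose the two chosen variables are \(\alpha_1, \alpha_2\) and the other variables \(\alpha_i \ (i = 3, 4, ..., N)\) are fixed. The SMO subproblem of \((7.98)-(7.100)\) can then be written as

$$\min_{\alpha_1, \alpha_2} \ W(\alpha_1, \alpha_2) = \frac{1}{2} K_{11} \alpha_1^2 + \frac{1}{2} K_{22} \alpha_2^2 + y_1 y_2 K_{12} \alpha_1 \alpha_2 - (\alpha_1 + \alpha_2) + y_1 \alpha_1 \sum_{i=3}^{N} y_i \alpha_i K_{i1} + y_2 \alpha_2 \sum_{i=3}^{N} y_i \alpha_i K_{i2} \tag{7.101}$$

$$\text{s.t.} \quad \alpha_1 y_1 + \alpha_2 y_2 = -\sum_{i=3}^{N} y_i \alpha_i = \zeta \tag{7.102}$$

$$0 \leqslant \alpha_i \leqslant C, \quad i = 1, 2 \tag{7.103}$$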

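where \(K_{ij} = K(x_i, x_j)\), \(\zeta\) is a constant, and the constant terms that do not involve \(\alpha_1, \alpha_2\) are omitted from the objective \((7.101)\).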

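Since there are only two variables, the constraints can be drawn in the plane: by \((7.103)\) the pair \((\alpha_1, \alpha_2)\) lies in the square \([0, C] \times [0, C]\), and by \((7.102)\) it lies on a line parallel to one of the square's diagonals.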

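Suppose the initial feasible solution of problem \((7.101)-(7.103)\) is \(\alpha_1^{old}, \alpha_2^{old}\) and the optimal solution is \(\alpha_1^{new}, \alpha_2^{new}\), and let \(\alpha_2^{new, unc}\) denote the optimal \(\alpha_2\) along the constraint direction before clipping.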

Because \(\alpha_2^{new}\) must satisfy the inequality constraint \((7.103)\), its optimal value must satisfy

$$L \leqslant \alpha_2^{new} \leqslant H$$

where \(L\) and \(H\) are the bounds given by the endpoints of the diagonal segment on which \(\alpha_2^{new}\) lies.

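If \(y_1 \neq y_2\), then

$$L = \max(0, \alpha_2^{old} - \alpha_1^{old}), \quad H = \min(C, C + \alpha_2^{old} - \alpha_1^{old})$$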

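If \(y_1 = y_2\), then

$$L = \max(0, \alpha_2^{old} + \alpha_1^{old} - C), \quad H = \min(C, \alpha_2^{old} + \alpha_1^{old})$$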
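The bounds translate directly into code. A minimal sketch with a hypothetical function name; the test also checks that every \(\alpha_2 \in [L, H]\) yields, through the equality constraint \(\alpha_1 = (\zeta - y_2 \alpha_2) y_1\), an \(\alpha_1\) inside \([0, C]\).

```python
def alpha2_bounds(a1_old, a2_old, y1, y2, C):
    """Endpoints L, H of the feasible segment for alpha_2 in the square [0, C] x [0, C]."""
    if y1 != y2:
        return max(0.0, a2_old - a1_old), min(C, C + a2_old - a1_old)
    return max(0.0, a2_old + a1_old - C), min(C, a2_old + a1_old)


print(alpha2_bounds(0.25, 0.5, 1, -1, 1.0))  # (0.25, 1.0)
print(alpha2_bounds(0.25, 0.5, 1, 1, 1.0))   # (0.0, 0.75)
```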

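We first find the optimal \(\alpha_2^{new, unc}\) along the constraint direction without clipping (i.e. ignoring the inequality constraint \((7.103)\)), and then find the clipped solution \(\alpha_2^{new}\). We state this result as a theorem and then prove it.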

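For brevity, write

$$g(x) = \sum_{i=1}^{N} \alpha_i y_i K(x_i, x) + b$$

$$E_i = g(x_i) - y_i = \left( \sum_{j=1}^{N} \alpha_j y_j K(x_j, x_i) + b \right) - y_i, \quad i = 1, 2$$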

For \(i = 1, 2\), \(E_i\) is the difference between the prediction of \(g(x)\) for the input \(x_i\) and the true output \(y_i\).

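Theorem 7.6: along the constraint direction and without clipping, the solution of the optimization problem \((7.101)-(7.103)\) is

$$\alpha_2^{new, unc} = \alpha_2^{old} + \frac{y_2 (E_1 - E_2)}{\eta}$$

where

$$\eta = K_{11} + K_{22} - 2K_{12} = ||\phi(x_1) - \phi(x_2)||^2$$

and \(\phi(x)\) is the mapping from the input space to the feature space.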

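After clipping, the solution for \(\alpha_2\) is

$$\alpha_2^{new} = \begin{cases} H, & \alpha_2^{new, unc} > H \\ \alpha_2^{new, unc}, & L \leqslant \alpha_2^{new, unc} \leqslant H \\ L, & \alpha_2^{new, unc} < L \end{cases}$$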

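\(\alpha_1^{new}\) is then obtained from \(\alpha_2^{new}\):

$$\alpha_1^{new} = \alpha_1^{old} + y_1 y_2 (\alpha_2^{old} - \alpha_2^{new})$$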
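Theorem 7.6 as a minimal sketch. It assumes \(\eta > 0\) (which holds whenever \(\phi(x_1) \neq \phi(x_2)\)) and leaves the degenerate case \(\eta \leqslant 0\) out. The worked calls use a hypothetical pair, \(x_1 = (3, 3)\) with \(y_1 = +1\) and \(x_2 = (1, 1)\) with \(y_2 = -1\), a linear kernel, and a start with all multipliers and \(b\) equal to 0, so \(g \equiv 0\), \(E_1 = -1\) and \(E_2 = +1\).

```python
def smo_pair_update(a1, a2, y1, y2, E1, E2, K11, K22, K12, C):
    """One analytic SMO step on (alpha_1, alpha_2): unclipped optimum, clip, then alpha_1."""
    eta = K11 + K22 - 2.0 * K12
    if eta <= 0:
        raise ValueError("eta <= 0 is not handled in this sketch")
    if y1 != y2:
        L, H = max(0.0, a2 - a1), min(C, C + a2 - a1)
    else:
        L, H = max(0.0, a2 + a1 - C), min(C, a2 + a1)
    a2_unc = a2 + y2 * (E1 - E2) / eta   # optimum along the constraint line
    a2_new = min(H, max(L, a2_unc))      # clip into [L, H]
    a1_new = a1 + y1 * y2 * (a2 - a2_new)
    return a1_new, a2_new


# K11 = (3,3).(3,3) = 18, K22 = (1,1).(1,1) = 2, K12 = (3,3).(1,1) = 6
print(smo_pair_update(0.0, 0.0, 1, -1, -1.0, 1.0, 18.0, 2.0, 6.0, C=10.0))  # (0.25, 0.25)
print(smo_pair_update(0.0, 0.0, 1, -1, -1.0, 1.0, 18.0, 2.0, 6.0, C=0.1))   # (0.1, 0.1)
```

The second call shows clipping: with \(C = 0.1\) the unclipped value 0.25 is cut back to \(H = 0.1\), and \(\alpha_1\) follows so that \(\alpha_1 y_1 + \alpha_2 y_2\) stays at \(\zeta = 0\).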

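Proof: introduce the notation

$$v_i = \sum_{j=3}^{N} \alpha_j y_j K(x_i, x_j) = g(x_i) - \sum_{j=1}^{2} \alpha_j y_j K(x_i, x_j) - b, \quad i = 1, 2$$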

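The objective can be written as

$$W(\alpha_1, \alpha_2) = \frac{1}{2} K_{11} \alpha_1^2 + \frac{1}{2} K_{22} \alpha_2^2 + y_1 y_2 K_{12} \alpha_1 \alpha_2 - (\alpha_1 + \alpha_2) + y_1 v_1 \alpha_1 + y_2 v_2 \alpha_2 \tag{7.110}$$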

From \(\alpha_1 y_1 = \zeta - \alpha_2 y_2\) and \(y_j^2 = 1\), \(\alpha_1\) can be expressed as

$$\alpha_1 = (\zeta - y_2 \alpha_2) y_1$$

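Substituting this into \((7.110)\) gives an objective in \(\alpha_2\) alone:

$$W(\alpha_2) = \frac{1}{2} K_{11} (\zeta - \alpha_2 y_2)^2 + \frac{1}{2} K_{22} \alpha_2^2 + y_2 K_{12} (\zeta - \alpha_2 y_2) \alpha_2 - (\zeta - \alpha_2 y_2) y_1 - \alpha_2 + v_1 (\zeta - \alpha_2 y_2) + y_2 v_2 \alpha_2$$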

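Differentiating with respect to \(\alpha_2\):

$$\frac{\partial W}{\partial \alpha_2} = K_{11} \alpha_2 + K_{22} \alpha_2 - 2K_{12} \alpha_2 - K_{11} \zeta y_2 + K_{12} \zeta y_2 + y_1 y_2 - 1 - v_1 y_2 + y_2 v_2$$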

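Setting it to 0 gives

$$(K_{11} + K_{22} - 2K_{12}) \alpha_2 = y_2 (y_2 - y_1 + \zeta K_{11} - \zeta K_{12} + v_1 - v_2)$$

Substituting \(\zeta = \alpha_1^{old} y_1 + \alpha_2^{old} y_2\) and \(\eta = K_{11} + K_{22} - 2K_{12}\) yields

$$\eta \, \alpha_2^{new, unc} = \eta \, \alpha_2^{old} + y_2 (E_1 - E_2)$$

which is the unclipped formula of Theorem 7.6.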

This completes the proof.

Choosing the variables

In each subproblem, SMO optimizes two variables, at least one of which violates the KKT conditions.

(1) Choosing the first variable

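SMO calls the selection of the first variable the outer loop. The outer loop picks the training sample that violates the KKT conditions most severely and uses its variable as the first variable. Concretely, it checks whether each training sample satisfies the KKT conditions, i.e.

$$\alpha_i = 0 \Leftrightarrow y_i g(x_i) \geqslant 1$$

$$0 < \alpha_i < C \Leftrightarrow y_i g(x_i) = 1$$

$$\alpha_i = C \Leftrightarrow y_i g(x_i) \leqslant 1$$

where \(g(x_i) = \sum_{j=1}^{N} \alpha_j y_j K(x_i, x_j) + b\).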

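During the check, the outer loop first iterates over all sample points with \(0 < \alpha_i < C\), i.e. the support vectors on the margin boundaries, and checks whether they satisfy the KKT conditions. If all of them do, it then iterates over the whole training set and checks those points.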
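A sketch of the outer loop. It measures how far sample \(i\) is from its KKT condition (\(\alpha_i = 0\) needs \(y_i g(x_i) \geqslant 1\), \(0 < \alpha_i < C\) needs \(y_i g(x_i) = 1\), \(\alpha_i = C\) needs \(y_i g(x_i) \leqslant 1\)) and scans the free multipliers before the full set. In floating point the conditions can only be checked up to a tolerance; the value of `tol`, the function names and the example values of \(g(x_i)\) are assumptions for illustration.

```python
def kkt_violation(alpha_i, y_i, g_i, C, tol=1e-3):
    """How far sample i is from its KKT condition, given g_i = g(x_i); 0.0 if within tol."""
    m = y_i * g_i
    if alpha_i <= 0.0:          # alpha_i = 0      needs y_i g(x_i) >= 1
        v = max(0.0, 1.0 - m)
    elif alpha_i >= C:          # alpha_i = C      needs y_i g(x_i) <= 1
        v = max(0.0, m - 1.0)
    else:                       # 0 < alpha_i < C  needs y_i g(x_i) == 1
        v = abs(m - 1.0)
    return v if v > tol else 0.0


def pick_first_variable(alpha, Y, G, C, tol=1e-3):
    """Outer loop: scan 0 < alpha_i < C first, then all samples; worst violator or None."""
    free = [i for i, a in enumerate(alpha) if 0.0 < a < C]
    for candidates in (free, range(len(alpha))):
        worst, worst_v = None, 0.0
        for i in candidates:
            v = kkt_violation(alpha[i], Y[i], G[i], C, tol)
            if v > worst_v:
                worst, worst_v = i, v
        if worst is not None:
            return worst
    return None  # every KKT condition holds within tol: stop


Y, C = [1, 1, -1], 10.0
print(pick_first_variable([0.25, 0.0, 0.25], Y, [1.0, 1.5, -1.0], C))  # None
print(pick_first_variable([0.0, 0.0, 0.0], Y, [0.0, 0.0, 0.0], C))     # 0
```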

(2) Choosing the second variable

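SMO calls the selection of the second variable the inner loop. The criterion is that \(\alpha_2\) should change by a sufficiently large amount. Since \(\alpha_2^{new}\) depends on \(|E_1 - E_2|\), a simple way to speed up the computation is to choose \(\alpha_2\) so that \(|E_1 - E_2|\) is as large as possible. Because \(\alpha_1\) has already been chosen, \(E_1\) is fixed. If \(E_1\) is positive, choose the smallest \(E_i\) as \(E_2\); if \(E_1\) is negative, choose the largest \(E_i\) as \(E_2\). To save computation time, all \(E_i\) values are kept in a list.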
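The sign rule above, as a sketch over a cached list of \(E_i\) values (the list and the function name are hypothetical). It is a cheap approximation: when \(E_1 > 0\) it maximizes \(E_1 - E_2\) rather than \(|E_1 - E_2|\), so with \(E = [1.0, -0.5, 5.0]\) and \(i_1 = 0\) it picks \(E_2 = -0.5\) even though \(|1 - 5|\) is larger. Treating \(E_1 = 0\) like the negative case is an arbitrary choice here.

```python
def pick_second_variable(i1, E):
    """Inner-loop rule: if E1 > 0 take the smallest E_i, otherwise the largest (i != i1)."""
    others = [i for i in range(len(E)) if i != i1]
    if E[i1] > 0:
        return min(others, key=lambda i: E[i])
    return max(others, key=lambda i: E[i])


E = [1.0, -0.5, 5.0]  # cached errors E_i (hypothetical values)
print(pick_second_variable(0, E))  # 1: E1 = 1.0 > 0, so the smallest E_i
print(pick_second_variable(1, E))  # 2: E1 = -0.5 < 0, so the largest E_i
```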

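In special cases, if the \(\alpha_2\) chosen by the inner loop in this way does not decrease the objective sufficiently, the following heuristic is used to continue choosing \(\alpha_2\):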

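iterate over the support vectors on the margin boundaries, trying each corresponding variable as \(\alpha_2\) in turn until the objective decreases sufficiently. If no suitable \(\alpha_2\) is found, iterate over the whole training set; if there is still no suitable \(\alpha_2\), discard the current \(\alpha_1\) and let the outer loop find another \(\alpha_1\).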

(3) Computing the threshold \(b\) and the differences \(E_i\)

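After each two-variable optimization, the threshold \(b\) must be recomputed. If \(0 < \alpha_1^{new} < C\), the KKT condition \(y_1 g(x_1) = 1\) gives, after rewriting in terms of \(E_1\),

$$b_1^{new} = -E_1 - y_1 K_{11} (\alpha_1^{new} - \alpha_1^{old}) - y_2 K_{21} (\alpha_2^{new} - \alpha_2^{old}) + b^{old}$$

Similarly, if \(0 < \alpha_2^{new} < C\),

$$b_2^{new} = -E_2 - y_1 K_{12} (\alpha_1^{new} - \alpha_1^{old}) - y_2 K_{22} (\alpha_2^{new} - \alpha_2^{old}) + b^{old}$$

If both \(\alpha_1^{new}\) and \(\alpha_2^{new}\) lie strictly between 0 and \(C\), then \(b_1^{new} = b_2^{new}\). If both are 0 or \(C\), then \(b_1^{new}\), \(b_2^{new}\) and every number between them satisfy the KKT conditions, and their midpoint is taken as \(b^{new}\).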

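After each two-variable optimization, the corresponding \(E_i\) values must also be updated and saved in the list:

$$E_i^{new} = \sum_{S} y_j \alpha_j K(x_i, x_j) + b^{new} - y_i$$

where \(S\) is the set of all support vectors \(x_j\).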
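A minimal sketch of step (3), assuming the standard SMO update formulas. With \(K_{ij} = K(x_i, x_j)\), \(b_1^{new} = -E_1 - y_1 K_{11} (\alpha_1^{new} - \alpha_1^{old}) - y_2 K_{21} (\alpha_2^{new} - \alpha_2^{old}) + b^{old}\) is used when \(0 < \alpha_1^{new} < C\), the analogous \(b_2^{new}\) when \(0 < \alpha_2^{new} < C\), and their midpoint otherwise. Each \(E_i\) is then recomputed from its definition \(E_i = g(x_i) - y_i\); summing over all \(j\) gives the same result as summing over the support vectors, since terms with \(\alpha_j = 0\) vanish. The numbers continue a hypothetical step on the pair \(x_1 = (3, 3), y_1 = +1\) and \(x_2 = (1, 1), y_2 = -1\) with a linear kernel, moved from \(\alpha = (0, 0)\), \(b = 0\), \(E = (-1, +1)\) to \(\alpha = (0.25, 0.25)\); a third point \((4, 3)\) with \(y = +1\) and \(\alpha = 0\) is included when refreshing the errors.

```python
def update_threshold(b_old, E1, E2, y1, y2, a1_old, a2_old, a1_new, a2_new,
                     K11, K12, K22, C):
    """New threshold b after optimizing (alpha_1, alpha_2); K21 = K12 by symmetry."""
    b1 = -E1 - y1 * K11 * (a1_new - a1_old) - y2 * K12 * (a2_new - a2_old) + b_old
    b2 = -E2 - y1 * K12 * (a1_new - a1_old) - y2 * K22 * (a2_new - a2_old) + b_old
    if 0.0 < a1_new < C:
        return b1
    if 0.0 < a2_new < C:
        return b2
    return (b1 + b2) / 2.0  # both at a bound: take the midpoint


def refresh_errors(alpha, X, Y, b, kernel):
    """E_i = sum_j alpha_j y_j K(x_j, x_i) + b - y_i (terms with alpha_j = 0 vanish)."""
    return [sum(a * y * kernel(xj, xi) for a, xj, y in zip(alpha, X, Y)) + b - yi
            for xi, yi in zip(X, Y)]


def linear_kernel(x, z):
    return sum(p * q for p, q in zip(x, z))


b_new = update_threshold(0.0, -1.0, 1.0, 1, -1, 0.0, 0.0, 0.25, 0.25,
                         18.0, 6.0, 2.0, C=10.0)
print(b_new)  # -2.0
X, Y = [(3, 3), (4, 3), (1, 1)], [1, 1, -1]
print(refresh_errors([0.25, 0.0, 0.25], X, Y, b_new, linear_kernel))  # [0.0, 0.5, 0.0]
```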

Zhejiang Public Network Security Registration No. 33010602011771
