[Pytorch]PyTorch Dataloader自定义数据读取

整理一下看到的自定义数据读取的方法,较好的有一下三篇文章, 其实自定义的方法就是把现有数据集的train和test分别用 含有图像路径与label的list返回就好了,所以需要根据数据集随机应变。

所有图片都在一个文件夹1

之前刚开始用的时候,写Dataloader遇到不少坑。网上有一些教程 分为all images in one folder 和 each class one folder。后面的那种写的人比较多,我写一下前面的这种,程式化的东西,每次不同的任务改几个参数就好。

等训练的时候写一篇文章把2333

一.已有的东西

举例子:用kaggle上的一个dog breed的数据集为例。数据文件夹里面有三个子目录

test: 几千张图片,没有标签,测试集

train: 10222张狗的图片,全是jpg,大小不一,有长有宽,基本都在400×300以上

labels.csv : excel表格, 图片名称+品种名称

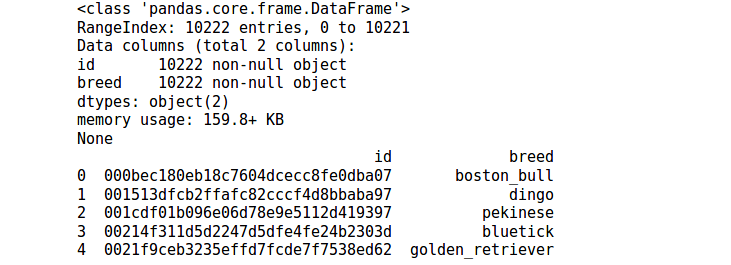

我喜欢先用pandas把表格信息读出来看一看

import pandas as pd

import numpy as np

df = pd.read_csv('./dog_breed/labels.csv')

print(df.info())

print(df.head())

看到,一共有10222个数据,id对应的是图片的名字,但是没有后缀 .jpg。 breed对应的是犬种。

二.预处理

我们要做的事情是:

1)得到一个长 list1 : 里面是每张图片的路径

2)另外一个长list2: 里面是每张图片对应的标签(整数),顺序要和list1对应。

3)把这两个list切分出来一部分作为验证集

1)看看一共多少个breed,把每种breed名称和一个数字编号对应起来:

from pandas import Series,DataFrame

breed = df['breed']

breed_np = Series.as_matrix(breed)

print(type(breed_np) )

print(breed_np.shape) #(10222,)

看一下一共多少不同种类

breed_set = set(breed_np)

print(len(breed_set)) #120

构建一个编号与名称对应的字典,以后输出的数字要变成名字的时候用:

breed_120_list = list(breed_set)

dic = {}

for i in range(120):

dic[ breed_120_list[i] ] = i

2)处理id那一列,分割成两段:

file = Series.as_matrix(df["id"])

print(file.shape)

import os

file = [i+".jpg" for i in file]

file = [os.path.join("./dog_breed/train",i) for i in file ]

file_train = file[:8000]

file_test = file[8000:]

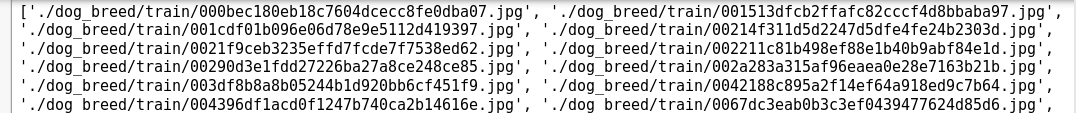

print(file_train)

np.save( "file_train.npy" ,file_train )

np.save( "file_test.npy" ,file_test )

里面就是图片的路径了

3)处理breed那一列,分成两段:

breed = Series.as_matrix(df["breed"])

print(breed.shape)

number = []

for i in range(10222):

number.append( dic[ breed[i] ] )

number = np.array(number)

number_train = number[:8000]

number_test = number[8000:]

np.save( "number_train.npy" ,number_train )

np.save( "number_test.npy" ,number_test )

三.Dataloader

我们已经有了图片路径的list,target编号的list。填到Dataset类里面就行了。

from torch.utils.data import Dataset, DataLoader

from torchvision import transforms, utils

normalize = transforms.Normalize(

mean=[0.485, 0.456, 0.406],

std=[0.229, 0.224, 0.225]

)

preprocess = transforms.Compose([

#transforms.Scale(256),

#transforms.CenterCrop(224),

transforms.ToTensor(),

normalize

])

def default_loader(path):

img_pil = Image.open(path)

img_pil = img_pil.resize((224,224))

img_tensor = preprocess(img_pil)

return img_tensor

当然出来的时候已经全都变成了tensor

class trainset(Dataset):

def init(self, loader=default_loader):

#定义好 image 的路径

self.images = file_train

self.target = number_train

self.loader = loader

def __getitem__(self, index):

fn = self.images[index]

img = self.loader(fn)

target = self.target[index]

return img,target

def __len__(self):

return len(self.images)

我们看一下代码,自定义Dataset只需要最下面一个class,继承自Dataset类。有三个私有函数

def init(self, loader=default_loader):

这个里面一般要初始化一个loader(代码见上面),一个images_path的列表,一个target的列表

def getitem(self, index):

这里吗就是在给你一个index的时候,你返回一个图片的tensor和target的tensor,使用了loader方法,经过 归一化,剪裁,类型转化,从图像变成tensor

def len(self):

return你所有数据的个数

这三个综合起来看呢,其实就是你告诉它你所有数据的长度,它每次给你返回一个shuffle过的index,以这个方式遍历数据集,通过 getitem(self, index)返回一组你要的(input,target)

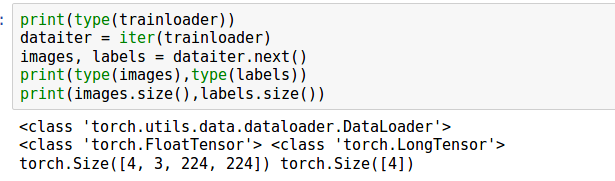

四.使用

实例化一个dataset,然后用Dataloader 包起来

train_data = trainset()

trainloader = DataLoader(train_data, batch_size=4,shuffle=True)

所有图片都在一个文件夹2

在上一篇博客PyTorch学习之路(level1)——训练一个图像分类模型中介绍了如何用PyTorch训练一个图像分类模型,建议先看懂那篇博客后再看这篇博客。在那份代码中,采用torchvision.datasets.ImageFolder这个接口来读取图像数据,该接口默认你的训练数据是按照一个类别存放在一个文件夹下。但是有些情况下你的图像数据不是这样维护的,比如一个文件夹下面各个类别的图像数据都有,同时用一个对应的标签文件,比如txt文件来维护图像和标签的对应关系,在这种情况下就不能用torchvision.datasets.ImageFolder来读取数据了,需要自定义一个数据读取接口。另外这篇博客最后还顺带介绍如何保存模型和多GPU训练。

怎么做呢?

先来看看torchvision.datasets.ImageFolder这个类是怎么写的,主要代码如下,想详细了解的可以看:官方github代码。

看起来很复杂,其实非常简单。继承的类是torch.utils.data.Dataset,主要包含三个方法:初始化__init__,获取图像__getitem__,数据集数量 __len__。__init__方法中先通过find_classes函数得到分类的类别名(classes)和类别名与数字类别的映射关系字典(class_to_idx)。然后通过make_dataset函数得到imags,这个imags是一个列表,其中每个值是一个tuple,每个tuple包含两个元素:图像路径和标签。剩下的就是一些赋值操作了。在__getitem__方法中最重要的就是 img = self.loader(path)这行,表示数据读取,可以从__init__方法中看出self.loader采用的是default_loader,这个default_loader的核心就是用python的PIL库的Image模块来读取图像数据。

class ImageFolder(data.Dataset):

"""A generic data loader where the images are arranged in this way: ::

root/dog/xxx.png

root/dog/xxy.png

root/dog/xxz.png

root/cat/123.png

root/cat/nsdf3.png

root/cat/asd932_.png

Args:

root (string): Root directory path.

transform (callable, optional): A function/transform that takes in an PIL image

and returns a transformed version. E.g, ``transforms.RandomCrop``

target_transform (callable, optional): A function/transform that takes in the

target and transforms it.

loader (callable, optional): A function to load an image given its path.

Attributes:

classes (list): List of the class names.

class_to_idx (dict): Dict with items (class_name, class_index).

imgs (list): List of (image path, class_index) tuples

"""

def __init__(self, root, transform=None, target_transform=None,

loader=default_loader):

classes, class_to_idx = find_classes(root)

imgs = make_dataset(root, class_to_idx)

if len(imgs) == 0:

raise(RuntimeError("Found 0 images in subfolders of: " + root + "\n"

"Supported image extensions are: " + ",".join(IMG_EXTENSIONS)))

self.root = root

self.imgs = imgs

self.classes = classes

self.class_to_idx = class_to_idx

self.transform = transform

self.target_transform = target_transform

self.loader = loader

def __getitem__(self, index):

"""

Args:

index (int): Index

Returns:

tuple: (image, target) where target is class_index of the target class.

"""

path, target = self.imgs[index]

img = self.loader(path)

if self.transform is not None:

img = self.transform(img)

if self.target_transform is not None:

target = self.target_transform(target)

return img, target

def __len__(self):

return len(self.imgs)稍微看下default_loader函数,该函数主要分两种情况调用两个函数,一般采用pil_loader函数。

def pil_loader(path):

with open(path, 'rb') as f:

with Image.open(f) as img:

return img.convert('RGB')

def accimage_loader(path):

import accimage

try:

return accimage.Image(path)

except IOError:

# Potentially a decoding problem, fall back to PIL.Image

return pil_loader(path)

def default_loader(path):

from torchvision import get_image_backend

if get_image_backend() == 'accimage':

return accimage_loader(path)

else:

return pil_loader(path)看懂了ImageFolder这个类,就可以自定义一个你自己的数据读取接口了。

首先在PyTorch中和数据读取相关的类基本都要继承一个基类:torch.utils.data.Dataset。然后再改写其中的__init__、__len__、__getitem__等方法即可。

下面假设img_path是你的图像文件夹,该文件夹下面放了所有图像数据(包括训练和测试),然后txt_path下面放了train.txt和val.txt两个文件,txt文件中每行都是图像路径,tab键,标签。所以下面代码的__init__方法中self.img_name和self.img_label的读取方式就跟你数据的存放方式有关,你可以根据你实际数据的维护方式做调整。__getitem__方法没有做太大改动,依然采用default_loader方法来读取图像。最后在Transform中将每张图像都封装成Tensor。

class customData(Dataset):

def __init__(self, img_path, txt_path, dataset = '', data_transforms=None, loader = default_loader):

with open(txt_path) as input_file:

lines = input_file.readlines()

self.img_name = [os.path.join(img_path, line.strip().split('\t')[0]) for line in lines]

self.img_label = [int(line.strip().split('\t')[-1]) for line in lines]

self.data_transforms = data_transforms

self.dataset = dataset

self.loader = loader

def __len__(self):

return len(self.img_name)

def __getitem__(self, item):

img_name = self.img_name[item]

label = self.img_label[item]

img = self.loader(img_name)

if self.data_transforms is not None:

try:

img = self.data_transforms[self.dataset](img)

except:

print("Cannot transform image: {}".format(img_name))

return img, label定义好了数据读取接口后,怎么用呢?

在代码中可以这样调用。

image_datasets = {x: customData(img_path='/ImagePath',

txt_path=('/TxtFile/' + x + '.txt'),

data_transforms=data_transforms,

dataset=x) for x in ['train', 'val']}这样返回的image_datasets就和用torchvision.datasets.ImageFolder类返回的数据类型一样,有点狸猫换太子的感觉,这就是在第一篇博客中说的写代码类似搭积木的感觉。

有了image_datasets,然后依然用torch.utils.data.DataLoader类来做进一步封装,将这个batch的图像数据和标签都分别封装成Tensor。

dataloders = {x: torch.utils.data.DataLoader(image_datasets[x],

batch_size=batch_size,

shuffle=True) for x in ['train', 'val']}另外,每次迭代生成的模型要怎么保存呢?非常简单,那就是用torch.save。输入就是你的模型和要保存的路径及模型名称,如果这个output文件夹没有,可以手动新建一个或者在代码里面新建。

torch.save(model, 'output/resnet_epoch{}.pkl'.format(epoch))最后,关于多GPU的使用,PyTorch支持多GPU训练模型,假设你的网络是model,那么只需要下面一行代码(调用 torch.nn.DataParallel接口)就可以让后续的模型训练在0和1两块GPU上训练,加快训练速度。

model = torch.nn.DataParallel(model, device_ids=[0,1])完整代码请移步:Github

每个类的图片放在一个文件夹

这是一个适合PyTorch入门者看的博客。PyTorch的文档质量比较高,入门较为容易,这篇博客选取官方链接里面的例子,介绍如何用PyTorch训练一个ResNet模型用于图像分类,代码逻辑非常清晰,基本上和许多深度学习框架的代码思路类似,非常适合初学者想上手PyTorch训练模型(不必每次都跑mnist的demo了)。接下来从个人使用角度加以解释。解释的思路是从数据导入开始到模型训练结束,基本上就是搭积木的方式来写代码。

首先是数据导入部分,这里采用官方写好的torchvision.datasets.ImageFolder接口实现数据导入。这个接口需要你提供图像所在的文件夹,就是下面的data_dir=‘/data’这句,然后对于一个分类问题,这里data_dir目录下一般包括两个文件夹:train和val,每个文件件下面包含N个子文件夹,N是你的分类类别数,且每个子文件夹里存放的就是这个类别的图像。这样torchvision.datasets.ImageFolder就会返回一个列表(比如下面代码中的image_datasets[‘train’]或者image_datasets[‘val]),列表中的每个值都是一个tuple,每个tuple包含图像和标签信息。

data_dir = '/data'

image_datasets = {x: datasets.ImageFolder(

os.path.join(data_dir, x),

data_transforms[x]),

for x in ['train', 'val']}另外这里的data_transforms是一个字典,如下。主要是进行一些图像预处理,比如resize、crop等。实现的时候采用的是torchvision.transforms模块,比如torchvision.transforms.Compose是用来管理所有transforms操作的,torchvision.transforms.RandomSizedCrop是做crop的。需要注意的是对于torchvision.transforms.RandomSizedCrop和transforms.RandomHorizontalFlip()等,输入对象都是PIL Image,也就是用python的PIL库读进来的图像内容,而transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])的作用对象需要是一个Tensor,因此在transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])之前有一个 transforms.ToTensor()就是用来生成Tensor的。另外transforms.Scale(256)其实就是resize操作,目前已经被transforms.Resize类取代了。

data_transforms = {

'train': transforms.Compose([

transforms.RandomSizedCrop(224),

transforms.RandomHorizontalFlip(),

transforms.ToTensor(),

transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])

]),

'val': transforms.Compose([

transforms.Scale(256),

transforms.CenterCrop(224),

transforms.ToTensor(),

transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])

]),

}前面torchvision.datasets.ImageFolder只是返回list,list是不能作为模型输入的,因此在PyTorch中需要用另一个类来封装list,那就是:torch.utils.data.DataLoader。torch.utils.data.DataLoader类可以将list类型的输入数据封装成Tensor数据格式,以备模型使用。注意,这里是对图像和标签分别封装成一个Tensor。这里要提到另一个很重要的类:torch.utils.data.Dataset,这是一个抽象类,在pytorch中所有和数据相关的类都要继承这个类来实现。比如前面说的torchvision.datasets.ImageFolder类是这样的,以及这里的torch.util.data.DataLoader类也是这样的。所以当你的数据不是按照一个类别一个文件夹这种方式存储时,你就要自定义一个类来读取数据,自定义的这个类必须继承自torch.utils.data.Dataset这个基类,最后同样用torch.utils.data.DataLoader封装成Tensor。

dataloders = {x: torch.utils.data.DataLoader(image_datasets[x],

batch_size=4,

shuffle=True,

num_workers=4)

for x in ['train', 'val']}生成dataloaders后再有一步就可以作为模型的输入了,那就是将Tensor数据类型封装成Variable数据类型,来看下面这段代码。dataloaders是一个字典,dataloders[‘train’]存的就是训练的数据,这个for循环就是从dataloders[‘train’]中读取batch_size个数据,batch_size在前面生成dataloaders的时候就设置了。因此这个data里面包含图像数据(inputs)这个Tensor和标签(labels)这个Tensor。然后用torch.autograd.Variable将Tensor封装成模型真正可以用的Variable数据类型。

为什么要封装成Variable呢?在pytorch中,torch.tensor和torch.autograd.Variable是两种比较重要的数据结构,Variable可以看成是tensor的一种包装,其不仅包含了tensor的内容,还包含了梯度等信息,因此在神经网络中常常用Variable数据结构。那么怎么从一个Variable类型中取出tensor呢?也很简单,比如下面封装后的inputs是一个Variable,那么inputs.data就是对应的tensor。

for data in dataloders['train']:

inputs, labels = data

if use_gpu:

inputs = Variable(inputs.cuda())

labels = Variable(labels.cuda())

else:

inputs, labels = Variable(inputs), Variable(labels)封装好了数据后,就可以作为模型的输入了。所以要先导入你的模型。在PyTorch中已经默认为大家准备了一些常用的网络结构,比如分类中的VGG,ResNet,DenseNet等等,可以用torchvision.models模块来导入。比如用torchvision.models.resnet18(pretrained=True)来导入ResNet18网络,同时指明导入的是已经预训练过的网络。因为预训练网络一般是在1000类的ImageNet数据集上进行的,所以要迁移到你自己数据集的2分类,需要替换最后的全连接层为你所需要的输出。因此下面这三行代码进行的就是用models模块导入resnet18网络,然后获取全连接层的输入channel个数,用这个channel个数和你要做的分类类别数(这里是2)替换原来模型中的全连接层。这样网络结果也准备好。

model = models.resnet18(pretrained=True)

num_ftrs = model.fc.in_features

model.fc = nn.Linear(num_ftrs, 2)但是只有网络结构和数据还不足以让代码运行起来,还需要定义损失函数。在PyTorch中采用torch.nn模块来定义网络的所有层,比如卷积、降采样、损失层等等,这里采用交叉熵函数,因此可以这样定义:

criterion = nn.CrossEntropyLoss()然后你还需要定义优化函数,比如最常见的随机梯度下降,在PyTorch中是通过torch.optim模块来实现的。另外这里虽然写的是SGD,但是因为有momentum,所以是Adam的优化方式。这个类的输入包括需要优化的参数:model.parameters(),学习率,还有Adam相关的momentum参数。现在很多优化方式的默认定义形式就是这样的。

optimizer = optim.SGD(model.parameters(), lr=0.001, momentum=0.9)然后一般还会定义学习率的变化策略,这里采用的是torch.optim.lr_scheduler模块的StepLR类,表示每隔step_size个epoch就将学习率降为原来的gamma倍。

scheduler = lr_scheduler.StepLR(optimizer, step_size=7, gamma=0.1)准备工作终于做完了,要开始训练了。

首先训练开始的时候需要先更新下学习率,这是因为我们前面制定了学习率的变化策略,所以在每个epoch开始时都要更新下:

scheduler.step()然后设置模型状态为训练状态:

model.train(True)然后先将网络中的所有梯度置0:

optimizer.zero_grad()然后就是网络的前向传播了:

outputs = model(inputs)然后将输出的outputs和原来导入的labels作为loss函数的输入就可以得到损失了:

loss = criterion(outputs, labels)输出的outputs也是torch.autograd.Variable格式,得到输出后(网络的全连接层的输出)还希望能到到模型预测该样本属于哪个类别的信息,这里采用torch.max。torch.max()的第一个输入是tensor格式,所以用outputs.data而不是outputs作为输入;第二个参数1是代表dim的意思,也就是取每一行的最大值,其实就是我们常见的取概率最大的那个index;第三个参数loss也是torch.autograd.Variable格式。

_, preds = torch.max(outputs.data, 1)计算得到loss后就要回传损失。要注意的是这是在训练的时候才会有的操作,测试时候只有forward过程。

loss.backward()回传损失过程中会计算梯度,然后需要根据这些梯度更新参数,optimizer.step()就是用来更新参数的。optimizer.step()后,你就可以从optimizer.param_groups[0][‘params’]里面看到各个层的梯度和权值信息。

optimizer.step()这样一个batch数据的训练就结束了!当你不断重复这样的训练过程,最终就可以达到你想要的结果了。

另外如果你有gpu可用,那么包括你的数据和模型都可以在gpu上操作,这在PyTorch中也非常简单。判断你是否有gpu可以用可以通过下面这行代码,如果有,则use_gpu是true。

use_gpu = torch.cuda.is_available()完整代码请移步:Github

浙公网安备 33010602011771号

浙公网安备 33010602011771号