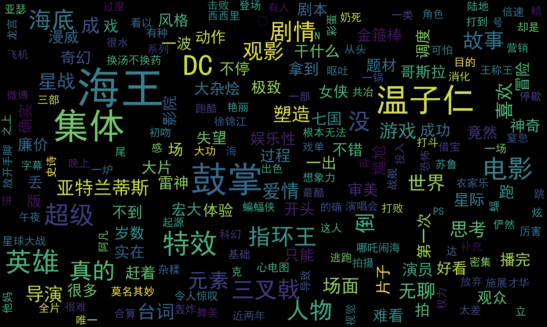

scrapy-redis爬取豆瓣电影短评,使用词云wordcloud展示

1、数据是使用scrapy-redis爬取的,存放在redis里面,爬取的是最近大热电影《海王》

2、使用了jieba中文分词解析库

3、使用了停用词stopwords,过滤掉一些无意义的词

4、使用matplotlib+wordcloud绘图展示

from redis import Redis

import json

import jieba

from wordcloud import WordCloud

import matplotlib.pyplot as plt

# 加载停用词

# stopwords = set(map(lambda x: x.rstrip('\n'), open('chineseStopWords.txt').readlines()))

stopwords = set()

with open('chineseStopWords.txt') as f:

for line in f.readlines():

stopwords.add(line.rstrip('\n'))

stopwords.add(' ')

# print(stopwords)

# print(len(stopwords))

# 读取影评

db = Redis(host='localhost')

items = db.lrange('review:items', 0, -1)

# print(items)

# print(len(items))

# 统计每个word出现的次数

# 过滤掉停用词

# 记录总数,用于计算词频

words = {}

total = 0

for item in items:

data = json.loads(item)['review']

# print(data)

# print('------------')

for word in jieba.cut(data):

if word not in stopwords:

words[word] = words.get(word, 0) + 1

total += 1

print(sorted(words.items(), key=lambda x: x[1], reverse=True))

# print(len(words))

# print(total)

# 词频

freq = {k: v / total for k, v in words.items()}

print(sorted(freq.items(), key=lambda x: x[1], reverse=True))

# 词云

wordcloud = WordCloud(font_path='simhei.ttf',

width=500,

height=300,

scale=10,

max_words=200,

max_font_size=40).fit_words(frequencies=freq) # Create a word_cloud from words and frequencies

plt.imshow(wordcloud, interpolation="bilinear")

plt.axis('off')

plt.show()

绘图结果:

参考:

https://github.com/amueller/word_cloud

http://amueller.github.io/word_cloud/

浙公网安备 33010602011771号

浙公网安备 33010602011771号