Flink SQL on versions below 1.15.0: error when reading from Kafka with group-offsets

    Caused by: org.apache.kafka.clients.consumer.NoOffsetForPartitionException: Undefined offset with no reset policy for partitions: [test_topic-11]
        at org.apache.kafka.clients.consumer.internals.SubscriptionState.resetInitializingPositions(SubscriptionState.java:683)
        at org.apache.kafka.clients.consumer.KafkaConsumer.updateFetchPositions(KafkaConsumer.java:2420)
        at org.apache.kafka.clients.consumer.KafkaConsumer.position(KafkaConsumer.java:1750)
        at org.apache.kafka.clients.consumer.KafkaConsumer.position(KafkaConsumer.java:1709)
        at org.apache.flink.connector.kafka.source.reader.KafkaPartitionSplitReader.removeEmptySplits(KafkaPartitionSplitReader.java:375)
        at org.apache.flink.connector.kafka.source.reader.KafkaPartitionSplitReader.handleSplitsChanges(KafkaPartitionSplitReader.java:260)
        at org.apache.flink.connector.base.source.reader.fetcher.AddSplitsTask.run(AddSplitsTask.java:51)
        at org.apache.flink.connector.base.source.reader.fetcher.SplitFetcher.runOnce(SplitFetcher.java:142)
        ... 6 more

I am using Flink 1.14.5, and the Flink SQL script being executed is:

    create table inputTable (
        CreatTime string COMMENT '',
        collectionTime string COMMENT ''
    ) with (
        'connector' = 'kafka',
        'scan.startup.mode' = 'group-offsets',
        'topic' = 'demo_topic',
        'properties.bootstrap.servers' = '192.168.151.115:9092;192.168.151.116:9092;192.168.151.117:9092',
        'properties.group.id' = 'demo_topic$group',
        'properties.auto.offset.reset' = 'earliest',
        'format' = 'json'
    );

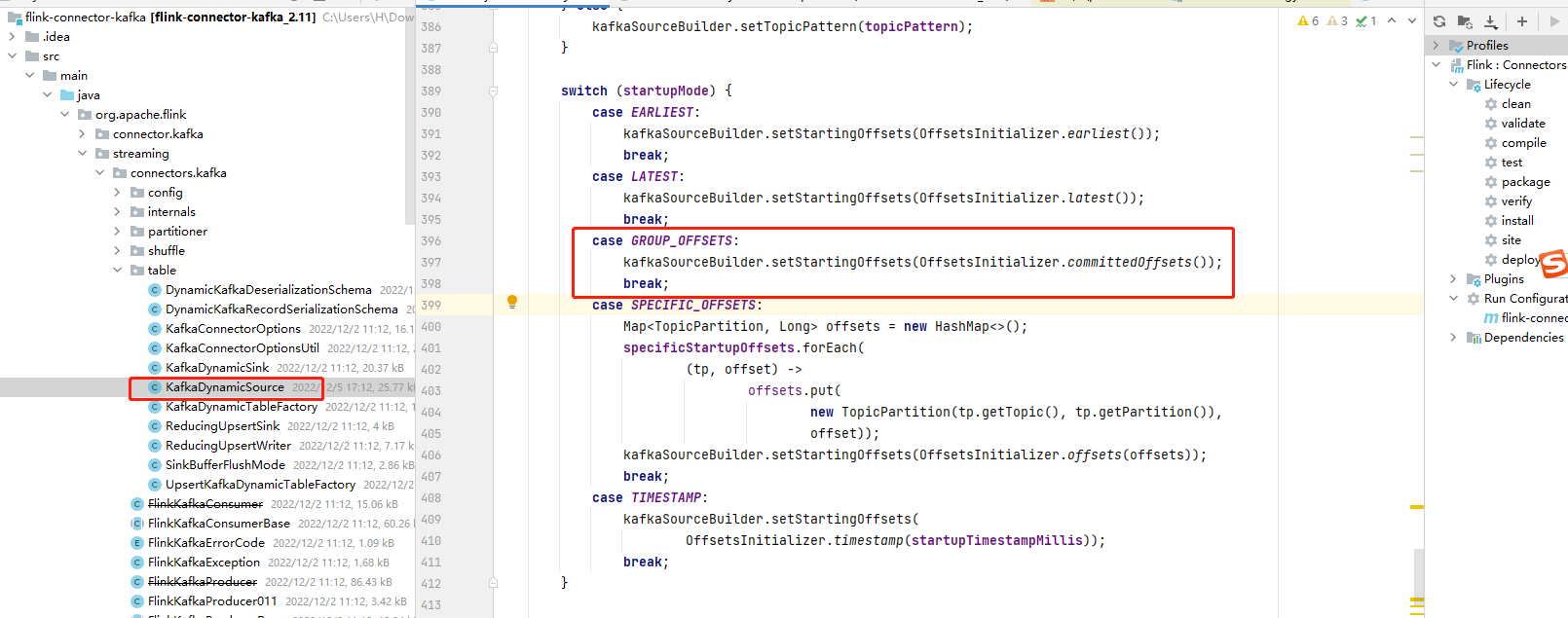
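For context, the Flink documentation describes options prefixed with `properties.` as being passed to the Kafka client with that prefix removed, so `'properties.auto.offset.reset'` should reach the consumer as `auto.offset.reset`. The sketch below only illustrates that mapping with plain JDK classes. `KafkaOptionSketch` and `toKafkaProperties` are hypothetical names made up for this example, not the connector's actual code.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.Properties;

/** Hypothetical illustration of the "properties." prefix mapping; not Flink's real class. */
final class KafkaOptionSketch {
    static final String PREFIX = "properties.";

    /** Copies every option that starts with "properties." into Properties, dropping the prefix. */
    static Properties toKafkaProperties(Map<String, String> tableOptions) {
        Properties props = new Properties();
        for (Map.Entry<String, String> entry : tableOptions.entrySet()) {
            String key = entry.getKey();
            if (key.startsWith(PREFIX)) {
                props.setProperty(key.substring(PREFIX.length()), entry.getValue());
            }
        }
        return props;
    }

    public static void main(String[] args) {
        // A subset of the WITH options from the script above.
        Map<String, String> options = new LinkedHashMap<>();
        options.put("connector", "kafka");
        options.put("scan.startup.mode", "group-offsets");
        options.put("properties.group.id", "demo_topic$group");
        options.put("properties.auto.offset.reset", "earliest");

        Properties props = toKafkaProperties(options);
        System.out.println("auto.offset.reset = " + props.getProperty("auto.offset.reset"));
        System.out.println("group.id = " + props.getProperty("group.id"));
    }
}
```

So the option itself is spelled correctly in the script; the question is what the connector does with the resulting `auto.offset.reset` value afterwards.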

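'properties.auto.offset.reset'='earliest' was already set, but it did not take effect. I suspected a bug in the Kafka connector I depend on, so I pulled the 1.14.5 source code from GitHub (apache/flink at release-1.14.5) and read through the source of this dependency: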

    <dependency>
        <groupId>org.apache.flink</groupId>
        <artifactId>flink-sql-connector-kafka_2.12</artifactId>
        <version>1.14.5</version>
        <scope>provided</scope>
    </dependency>

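It turns out that in group-offsets mode the connector does not read the user-configured option, so the reset strategy always defaults to 'none'. I therefore changed the code at that point and added: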

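    // Requires: import org.apache.kafka.clients.consumer.OffsetResetStrategy;
    // Read the user's auto.offset.reset (falling back to EARLIEST if unset).
    String offsetResetConfig =
            this.properties.getProperty(
                    "auto.offset.reset", OffsetResetStrategy.EARLIEST.name());
    // committedOffsets(strategy) still starts from the group's committed offsets
    // and only uses the strategy for partitions that have no committed offset.
    if ("EARLIEST".equalsIgnoreCase(offsetResetConfig)) {
        kafkaSourceBuilder.setStartingOffsets(
                OffsetsInitializer.committedOffsets(OffsetResetStrategy.EARLIEST));
    } else if ("LATEST".equalsIgnoreCase(offsetResetConfig)) {
        kafkaSourceBuilder.setStartingOffsets(
                OffsetsInitializer.committedOffsets(OffsetResetStrategy.LATEST));
    } else {
        // Any other value keeps the original behavior (reset strategy 'none').
        kafkaSourceBuilder.setStartingOffsets(OffsetsInitializer.committedOffsets());
    }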
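To make the failure mode easier to see, here is a minimal, self-contained sketch of how a starting offset is resolved in group-offsets mode: use the committed offset if one exists, otherwise fall back to the reset strategy, and fail when the strategy is 'none'. It is a simplified model built on plain JDK types. `StartOffsetSketch`, `ResetStrategy`, `parse` and `resolve` are hypothetical names for this illustration, not Flink or Kafka APIs, and treating a missing value as NONE is an assumption that mirrors the connector behavior described above.

```java
import java.util.Locale;
import java.util.OptionalLong;

/** Hypothetical model of group-offsets start position resolution; not real connector code. */
final class StartOffsetSketch {
    enum ResetStrategy { EARLIEST, LATEST, NONE }

    /** Parses auto.offset.reset case-insensitively; missing or unknown values map to NONE. */
    static ResetStrategy parse(String value) {
        if (value == null) {
            return ResetStrategy.NONE;
        }
        switch (value.trim().toUpperCase(Locale.ROOT)) {
            case "EARLIEST":
                return ResetStrategy.EARLIEST;
            case "LATEST":
                return ResetStrategy.LATEST;
            default:
                return ResetStrategy.NONE;
        }
    }

    /**
     * Returns the offset to start reading from for one partition.
     * A committed offset always wins; the strategy is only a fallback.
     */
    static long resolve(OptionalLong committed, ResetStrategy strategy, long beginning, long end) {
        if (committed.isPresent()) {
            return committed.getAsLong();
        }
        switch (strategy) {
            case EARLIEST:
                return beginning;
            case LATEST:
                return end;
            default:
                // Corresponds to the NoOffsetForPartitionException in the stack trace above.
                throw new IllegalStateException("Undefined offset with no reset policy");
        }
    }

    public static void main(String[] args) {
        // New consumer group: nothing committed yet, partition holds offsets 0..100.
        OptionalLong nothingCommitted = OptionalLong.empty();
        System.out.println(resolve(nothingCommitted, parse("earliest"), 0L, 100L));
        System.out.println(resolve(OptionalLong.of(42L), parse("earliest"), 0L, 100L));
        try {
            resolve(nothingCommitted, parse(null), 0L, 100L);
        } catch (IllegalStateException e) {
            System.out.println("failed: " + e.getMessage());
        }
    }
}
```

In this model, a brand-new consumer group with the strategy stuck at NONE fails on the first partition without a committed offset, which matches the symptom in the stack trace.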

Then, in the project root directory, format the code:

mvn spotless:apply

Next, build the package from inside the flink-sql-connector-kafka module:

mvn clean package -DskipTests

Finally, replace the corresponding jar under the lib directory of the production Flink installation with the newly built jar, and that's it.
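When swapping the jar it is worth keeping a copy of the original so the change can be rolled back. The following is a small sketch of that step using only `java.nio.file`. `JarSwapSketch` and `replaceWithBackup` are hypothetical names, and the paths are passed in as arguments because the actual locations depend on your installation.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.nio.file.StandardCopyOption;

/** Hypothetical helper: back up the existing connector jar, then overwrite it with the patched one. */
final class JarSwapSketch {

    /**
     * Copies targetJar into backupDir (if it exists), then copies patchedJar over targetJar.
     * Returns the path where the backup is (or would have been) stored.
     */
    static Path replaceWithBackup(Path patchedJar, Path targetJar, Path backupDir) throws IOException {
        Files.createDirectories(backupDir);
        Path backup = backupDir.resolve(targetJar.getFileName().toString());
        if (Files.exists(targetJar)) {
            Files.copy(targetJar, backup, StandardCopyOption.REPLACE_EXISTING);
        }
        Files.copy(patchedJar, targetJar, StandardCopyOption.REPLACE_EXISTING);
        return backup;
    }

    public static void main(String[] args) throws IOException {
        if (args.length != 3) {
            System.err.println("usage: JarSwapSketch <patched-jar> <jar-in-flink-lib> <backup-dir>");
            return;
        }
        Path backup = replaceWithBackup(Paths.get(args[0]), Paths.get(args[1]), Paths.get(args[2]));
        System.out.println("Original jar backed up to " + backup);
    }
}
```

The backup directory is kept outside lib so that only one copy of the connector sits next to the other Flink jars. The cluster will most likely need a restart before the replaced jar is picked up.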


You can also download the jar I have already patched: learn/flink-sql-connector-kafka_2.11-1.14.5.jar at master · nothingMoreL/learn (github.com)

