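| Course blog: https://edu.cnblogs.com/campus/ahgc/machinelearning |

| Assignment requirements: https://edu.cnblogs.com/campus/ahgc/machinelearning/homework/12085 |

| Assignment goal: become proficient with the common Gaussian, multinomial, and Bernoulli models |

| Student ID: 3180701235 |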

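| Part 2: Objectives |

| 1. Understand the principle of the naive Bayes algorithm and master its overall framework. |

| 2. Become proficient with the common Gaussian, multinomial, and Bernoulli models. |

| 3. Choose a suitable probability model for a given data type when implementing naive Bayes. |

| 4. Apply naive Bayes to solve practical problems for a specific application scenario and dataset. |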

|

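| Part 3: Tasks |

| 1. Implement the Gaussian naive Bayes algorithm. |

| 2. Get familiar with the naive Bayes implementations in the sklearn library. |

| 3. Use sklearn's naive Bayes to predict classes on the iris dataset. |

| 4. Use the hand-written naive Bayes implementation to predict classes on the iris dataset. |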

|

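| Part 4: Report requirements |

| 1. Following the tasks above, describe the experimental procedure, the algorithm, and the test results. |

| 2. Write clean code: consistent naming conventions and comments. |

| 3. Analyze the complexity of the core algorithm. |

| 4. Consult the literature and discuss the application scenarios of the different naive Bayes variants. |

| 5. Discuss the strengths and weaknesses of naive Bayes. |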

|

| Part 5: Experimental procedure |

| In [1]: |

|

| import numpy as np |

| import pandas as pd |

| import matplotlib.pyplot as plt |

| %matplotlib inline |

|

| from sklearn.datasets import load_iris |

| from sklearn.model_selection import train_test_split |

|

| from collections import Counter |

| import math |

| In [2]: |

|

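| def create_data(): |

|     # Load iris into a DataFrame and append the class label as the last column |

|     iris = load_iris() |

|     df = pd.DataFrame(iris.data, columns=iris.feature_names) |

|     df['label'] = iris.target |

|     df.columns = [ |

|         'sepal length', 'sepal width', 'petal length', 'petal width', 'label' |

|     ] |

|     # The first 100 rows contain only classes 0 and 1 (50 samples each) |

|     data = np.array(df.iloc[:100, :]) |

|

|     # Features are all columns but the last; labels are the last column |

|     return data[:, :-1], data[:, -1] |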

| In [3]: |

|

| X, y = create_data() |

| X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3) |
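The split above is random, so the scores reported later change from run to run. A minimal sketch of a reproducible, class-balanced split follows; the seed value `42` and the use of `stratify` are assumptions added here for illustration and are not part of the original notebook.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split

iris = load_iris()
# Keep only the first 100 samples (classes 0 and 1), mirroring create_data()
X, y = iris.data[:100], iris.target[:100]

# random_state fixes the shuffle; stratify keeps the class ratio in both parts
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=42, stratify=y
)

print(X_train.shape, X_test.shape)
```

With 100 samples and `test_size=0.3`, 70 samples go to training and 30 to testing.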

| In [4]: |

|

| X_test[0], y_test[0] |

| In [5]: |

|

| class NaiveBayes: |

|     def __init__(self): |

|         # Filled in by fit(): {label: [(mean, stdev), ...]}, one pair per feature |

|         self.model = None |

|

|     # Mean (expected value) of one feature column |

|     @staticmethod |

|     def mean(X): |

|         return sum(X) / float(len(X)) |

|

|     # Population standard deviation (square root of the variance) |

|     def stdev(self, X): |

|         avg = self.mean(X) |

|         return math.sqrt(sum([pow(x - avg, 2) for x in X]) / float(len(X))) |

|

|     # Gaussian probability density function |

|     def gaussian_probability(self, x, mean, stdev): |

|         exponent = math.exp(-(math.pow(x - mean, 2) / |

|                               (2 * math.pow(stdev, 2)))) |

|         return (1 / (math.sqrt(2 * math.pi) * stdev)) * exponent |

|

|     # Summarize training samples: (mean, stdev) for every feature column |

|     def summarize(self, train_data): |

|         summaries = [(self.mean(i), self.stdev(i)) for i in zip(*train_data)] |

|         return summaries |

|

|     # Compute the mean and standard deviation of every feature, per class |

|     def fit(self, X, y): |

|         labels = list(set(y)) |

|         # Group the training samples by label |

|         data = {label: [] for label in labels} |

|         for f, label in zip(X, y): |

|             data[label].append(f) |

|         self.model = { |

|             label: self.summarize(value) |

|             for label, value in data.items() |

|         } |

|         return 'gaussianNB train done!' |

|

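|     # Compute the class-conditional likelihood of one sample for every label |

|     def calculate_probabilities(self, input_data): |

|         # self.model: {label: [(mean, stdev), ...]} |

|         # input_data: one feature vector, e.g. [1.1, 2.2] |

|         probabilities = {} |

|         for label, value in self.model.items(): |

|             # Product of per-feature densities (class priors are not included) |

|             probabilities[label] = 1 |

|             for i in range(len(value)): |

|                 mean, stdev = value[i] |

|                 probabilities[label] *= self.gaussian_probability( |

|                     input_data[i], mean, stdev) |

|         return probabilities |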

|

|     # Predict the class of a single sample |

|     def predict(self, X_test): |

|         # Sort labels by likelihood and take the label with the largest value |

|         label = sorted( |

|             self.calculate_probabilities(X_test).items(), |

|             key=lambda x: x[-1])[-1][0] |

|         return label |

|

|     # Accuracy: fraction of test samples whose predicted label is correct |

|     def score(self, X_test, y_test): |

|         right = 0 |

|         for X, y in zip(X_test, y_test): |

|             label = self.predict(X) |

|             if label == y: |

|                 right += 1 |

|         return right / float(len(X_test)) |
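The `NaiveBayes` class multiplies per-feature densities directly and leaves out the class prior. That works for four features and balanced classes, but products of many small densities can underflow. A common alternative is to sum log-densities and add the log prior. The sketch below is one possible way to do this, not part of the original class: `gaussian_log_pdf`, `log_posterior_scores`, the `priors` argument, and the toy parameters are all hypothetical names and values chosen for illustration.

```python
import math


def gaussian_log_pdf(x, mean, stdev):
    """Log of the normal density with the given mean and standard deviation."""
    var = stdev ** 2
    return -0.5 * math.log(2 * math.pi * var) - (x - mean) ** 2 / (2 * var)


def log_posterior_scores(sample, model, priors):
    """Unnormalized log posterior for every label.

    model:  {label: [(mean, stdev), ...]}, the layout built by NaiveBayes.fit
    priors: {label: P(label)}, a hypothetical extra input
    """
    scores = {}
    for label, params in model.items():
        score = math.log(priors[label])
        for x, (mean, stdev) in zip(sample, params):
            score += gaussian_log_pdf(x, mean, stdev)
        scores[label] = score
    return scores


# Toy parameters, made up for illustration only (two features, two classes)
toy_model = {0.0: [(5.0, 0.35), (1.5, 0.2)], 1.0: [(5.9, 0.5), (4.3, 0.45)]}
toy_priors = {0.0: 0.5, 1.0: 0.5}

scores = log_posterior_scores([5.1, 1.4], toy_model, toy_priors)
predicted = max(scores, key=scores.get)
print(predicted)
```

Because the logarithm is monotonic, the label with the largest log score is the same label that the product form would select whenever the product does not underflow.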

| In [6]: |

|

| model = NaiveBayes() |

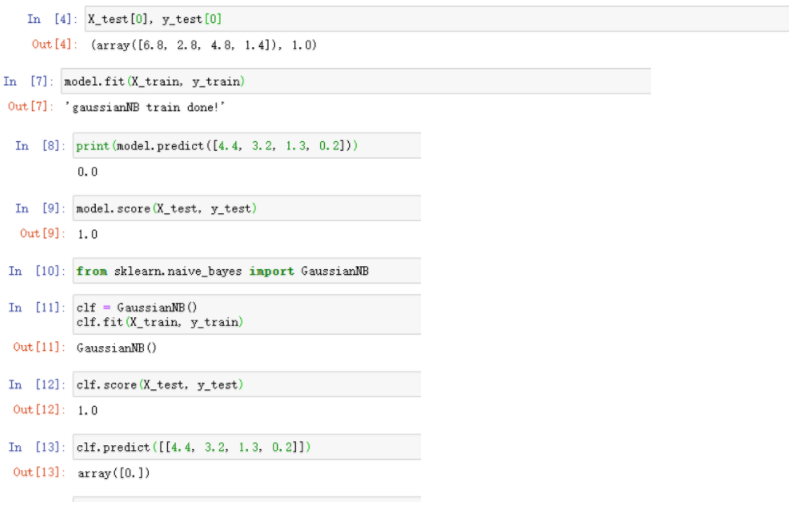

| In [7]: |

|

| model.fit(X_train, y_train) |

| In [8]: |

|

| print(model.predict([4.4, 3.2, 1.3, 0.2])) |

| In [9]: |

|

| model.score(X_test, y_test) |

| In [10]: |

|

| from sklearn.naive_bayes import GaussianNB |

| In [11]: |

|

| clf = GaussianNB() |

| clf.fit(X_train, y_train) |

| In [12]: |

|

| clf.score(X_test, y_test) |

| In [13]: |

|

| clf.predict([[4.4, 3.2, 1.3, 0.2]]) |
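To check that sklearn's `GaussianNB` learns the same kind of per-class statistics as the hand-written class, the fitted means in `clf.theta_` can be compared with means computed directly from the data. This is a small sanity-check sketch that refits on all 100 samples rather than on the notebook's random split, which is an assumption made here to keep the example deterministic.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.naive_bayes import GaussianNB

iris = load_iris()
X, y = iris.data[:100], iris.target[:100]

clf = GaussianNB().fit(X, y)

# Per-class feature means computed by hand, in the order of clf.classes_
manual_means = np.array([X[y == c].mean(axis=0) for c in clf.classes_])

# theta_ stores the per-class feature means learned by GaussianNB
print(np.allclose(clf.theta_, manual_means))

# Posterior probabilities for the sample used in the notebook
print(clf.predict_proba([[4.4, 3.2, 1.3, 0.2]]))
```

The fitted variances are expected to differ very slightly from a plain population variance, because `GaussianNB` adds a small smoothing term controlled by `var_smoothing`.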

| In [14]: |

|

| from sklearn.naive_bayes import BernoulliNB, MultinomialNB # Bernoulli and multinomial models |
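The notebook imports the two models without using them. As a rough illustration of how the choice of model follows the data type, the sketch below fits `MultinomialNB` on count features and `BernoulliNB` on the same features reduced to presence/absence. The tiny word-count matrix and its labels are made up for this example and do not come from the assignment.

```python
import numpy as np
from sklearn.naive_bayes import BernoulliNB, MultinomialNB

# Toy data: rows are documents, columns are counts of three hypothetical words
X_counts = np.array([
    [3, 0, 1],
    [2, 0, 0],
    [4, 1, 0],
    [0, 3, 2],
    [0, 2, 3],
    [1, 4, 2],
])
y = np.array([0, 0, 0, 1, 1, 1])

# Multinomial model: suited to non-negative counts such as word frequencies
mnb = MultinomialNB().fit(X_counts, y)

# Bernoulli model: suited to binary features (word present or absent)
X_binary = (X_counts > 0).astype(int)
bnb = BernoulliNB().fit(X_binary, y)

print(mnb.predict([[5, 0, 0]]))
print(bnb.predict([[1, 0, 0]]))
```

Continuous measurements such as the iris features are the case where the Gaussian model used earlier is the natural choice.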

| Part 6: Results |

