Learning K8s, Part 8: Collecting Application Logs in a Kubernetes Cluster with the ELK Stack

The traditional ELK model:

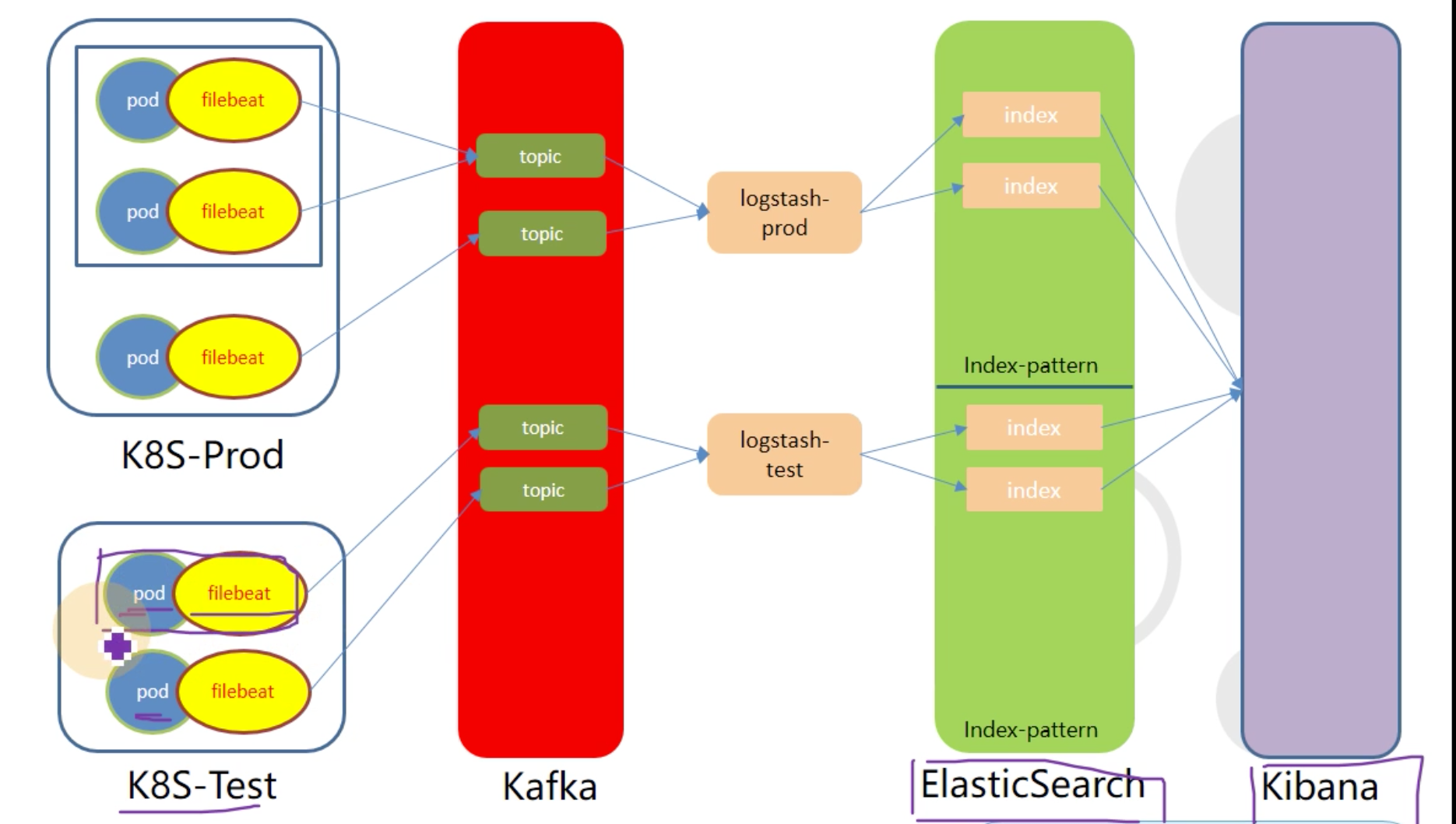

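This model is hard to apply in a container environment, so it needs to be adapted as follows.

Brief overview:

In this ELK setup the logs flow in the following order. Filebeat collects the logs and sends them to Kafka.

Logstash consumes the logs from Kafka and forwards them to ES.

Kibana connects to ES through its configuration file, reads the data, and displays it in a web UI.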

Prerequisites:

ELK requires a JDK, so install one in advance:

    [root@k8s-6-92 ~]# tar zxf jdk1.8.0_72.tar.gz
    [root@k8s-6-92 ~]# mv jdk1.8.0_72 /usr/local/java
    [root@k8s-6-92 ~]# vi /etc/profile
    export JAVA_HOME=/usr/local/java
    export CLASSPATH=.:$JAVA_HOME/jre/lib/rt.jar:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar
    export PATH=$JAVA_HOME/bin:$PATH
    [root@k8s-6-92 ~]# source /etc/profile

1: Install ES

Official site: https://www.elastic.co/

Download page: https://www.elastic.co/cn/downloads/elasticsearch

1.1: Install ES on 192.168.6.92

    [root@k8s-6-92 opt]# tar zxf elasticsearch-7.8.0-linux-x86_64.tar.gz
    [root@k8s-6-92 opt]# ln -s /opt/elasticsearch-7.8.0 /opt/elasticsearch

1.2: Configure ES

    [root@k8s-6-92 ~]# mkdir /data/elasticsearch/{data,logs} -p
    [root@k8s-6-92 ~]# cd /opt/elasticsearch/config/
    [root@k8s-6-92 config]# vi elasticsearch.yml
    cluster.name: es.auth.com
    node.name: k8s-6-92.host.com
    path.data: /data/elasticsearch/data
    path.logs: /data/elasticsearch/logs
    bootstrap.memory_lock: true
    network.host: 192.168.6.92
    http.port: 9200
    [root@k8s-6-92 config]# vi jvm.options
    -Xms1g
    -Xmx1g

Note: the heap size in jvm.options defaults to 1 GB and can be adjusted to your environment. Elastic recommends not going above 32 GB.
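The heap advice above can be turned into a small helper. This is only a sketch under an assumption that this article does not state: the common rule of thumb of giving the heap half of the host's RAM, capped here at 31 GB so it stays under the 32 GB ceiling. The function names `suggest_heap_mb` and `jvm_heap_lines` are made up for illustration.

```bash
#!/usr/bin/env bash
# Suggest an Elasticsearch heap size (in MB) for a host with the given RAM (in MB).
# Assumption: half of RAM, capped at 31 GB to stay below the 32 GB ceiling.
suggest_heap_mb() {
  local total_mb="$1"
  local cap_mb=$((31 * 1024))
  local half_mb=$((total_mb / 2))
  if [ "$half_mb" -gt "$cap_mb" ]; then
    echo "$cap_mb"
  else
    echo "$half_mb"
  fi
}

# Print the two jvm.options lines for a host with the given RAM (in MB).
jvm_heap_lines() {
  local heap_mb
  heap_mb=$(suggest_heap_mb "$1")
  printf -- '-Xms%sm\n-Xmx%sm\n' "$heap_mb" "$heap_mb"
}

# Example: an 8 GB host.
jvm_heap_lines 8192
```

Setting `-Xms` and `-Xmx` to the same value, as the article does, avoids heap resizing at runtime.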

1.3: Create an unprivileged user

    [root@k8s-6-92 config]# useradd -s /bin/bash es
    [root@k8s-6-92 config]# chown es:es /opt/elasticsearch -R
    [root@k8s-6-92 config]# chown es:es /data/elasticsearch/ -R

1.4: Raise the file descriptor limits

    [root@k8s-6-92 ~]# vi /etc/security/limits.conf
    es soft nofile 65536
    es hard nofile 65536
    es soft nproc 65536
    es hard nproc 65536
    es soft memlock unlimited
    es hard memlock unlimited

1.5: Adjust the kernel parameter

    [root@k8s-6-92 ~]# echo "vm.max_map_count=262144" >> /etc/sysctl.conf
    [root@k8s-6-92 ~]# sysctl -p
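Before starting ES it can help to confirm that the limits from 1.4 and the kernel parameter from 1.5 actually took effect. The helper below is a minimal sketch; `check_at_least` is a hypothetical name, and it only compares a value you pass in against a required minimum.

```bash
#!/usr/bin/env bash
# check_at_least NAME ACTUAL REQUIRED
# Prints OK or FAIL and returns non-zero when ACTUAL is below REQUIRED.
# The literal value "unlimited" (as reported by ulimit) always passes.
check_at_least() {
  local name="$1" actual="$2" required="$3"
  if [ "$actual" = "unlimited" ] || [ "$actual" -ge "$required" ]; then
    echo "OK   $name=$actual"
  else
    echo "FAIL $name=$actual (need >= $required)"
    return 1
  fi
}
```

Run as the `es` user, it could be called like `check_at_least nofile "$(ulimit -n)" 65536` and `check_at_least max_map_count "$(sysctl -n vm.max_map_count)" 262144`.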

1.6: Start ES

    [root@k8s-6-92 ~]# su - es
    [es@k8s-6-92 ~]$ cd /opt/elasticsearch/bin/
    [es@k8s-6-92 bin]$ ./elasticsearch -d

Note: if startup fails with the error below, the following fix can be used as a reference.

Error message:

    ERROR: [1] bootstrap checks failed
    [1]: the default discovery settings are unsuitable for production use; at least one of [discovery.seed_hosts, discovery.seed_providers, cluster.initial_master_nodes] must be configured

Fix:

    vim config/elasticsearch.yml
    # Uncomment this line and keep a single node.
    # The entry has to match node.name, which is k8s-6-92.host.com in this setup
    # (the stock config file ships with the placeholder "node-1").
    cluster.initial_master_nodes: ["k8s-6-92.host.com"]

1.7: Verify that ES is running

    [root@k8s-6-92 ~]# curl 'http://192.168.6.92:9200/?pretty'
    {
      "name" : "k8s-6-92.host.com",
      "cluster_name" : "es.auth.com",
      "cluster_uuid" : "Zf5Q5n2tScuz8f7UEI7hSQ",
      "version" : {
        "number" : "7.8.0",
        "build_flavor" : "default",
        "build_type" : "tar",
        "build_hash" : "757314695644ea9a1dc2fecd26d1a43856725e65",
        "build_date" : "2020-06-14T19:35:50.234439Z",
        "build_snapshot" : false,
        "lucene_version" : "8.5.1",
        "minimum_wire_compatibility_version" : "6.8.0",
        "minimum_index_compatibility_version" : "6.0.0-beta1"
      },
      "tagline" : "You Know, for Search"
    }
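If this check is scripted, the version number can be pulled out of the response without extra tools. The sketch below assumes the pretty-printed layout shown above, where the `"number"` key sits on its own line; `es_version_from_json` is a hypothetical helper name, and a real JSON parser would be more robust.

```bash
#!/usr/bin/env bash
# Read an Elasticsearch root-endpoint response on stdin and print version.number.
# Assumption: the response contains a key written as "number" : "<version>".
es_version_from_json() {
  sed -n 's/.*"number"[[:space:]]*:[[:space:]]*"\([^"]*\)".*/\1/p' | head -n 1
}
```

For example, `curl -s 'http://192.168.6.92:9200/?pretty' | es_version_from_json` would print the version reported by the node.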

1.8: Adjust the ES index template for the logs

    [root@k8s-6-92 ~]# curl -H "Content-Type:application/json" -XPUT http://192.168.6.92:9200/_template/k8s -d '{
      "template" : "k8s*",
      "index_patterns": ["k8s*"],
      "settings": {
        "number_of_shards": 5,
        "number_of_replicas": 0
      }
    }'
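To reuse the same settings for another index prefix, the request body can be generated instead of typed by hand. This is a sketch, not the author's method: `template_body` is a hypothetical helper, and it emits only `index_patterns` and `settings`, leaving out the legacy `template` key used in the command above.

```bash
#!/usr/bin/env bash
# template_body PATTERN SHARDS REPLICAS
# Print a compact JSON body for PUT _template/<name>.
template_body() {
  local pattern="$1" shards="$2" replicas="$3"
  printf '{"index_patterns":["%s"],"settings":{"number_of_shards":%d,"number_of_replicas":%d}}\n' \
    "$pattern" "$shards" "$replicas"
}

# Example: the values used in this article.
template_body 'k8s*' 5 0
```

The output can be passed to curl with `-d "$(template_body 'k8s*' 5 0)"`. Zero replicas suits the single-node cluster built here; a multi-node cluster would normally keep at least one replica.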

2: Install Kafka

Install Kafka on 192.168.6.93.

2.1: Install the JDK and ZooKeeper

The JDK installation is omitted here.

Step 1: Install ZooKeeper

    [root@k8s-6-93 ~]# wget https://archive.apache.org/dist/zookeeper/zookeeper-3.4.14/zookeeper-3.4.14.tar.gz
    [root@k8s-6-93 ~]# tar zxf zookeeper-3.4.14.tar.gz -C /opt/
    [root@k8s-6-93 opt]# ln -s /opt/zookeeper-3.4.14 /opt/zookeeper
    [root@k8s-6-93 zookeeper]# mkdir -pv /data/zookeeper/data /data/zookeeper/logs

Step 2: Configure ZooKeeper

    [root@k8s-6-93 zookeeper]# vi /opt/zookeeper/conf/zoo.cfg
    tickTime=2000
    initLimit=10
    syncLimit=5
    dataDir=/data/zookeeper/data
    dataLogDir=/data/zookeeper/logs
    clientPort=2181
    [root@k8s-6-93 ~]# vi /data/zookeeper/data/myid
    1

Step 3: Start ZooKeeper

    [root@k8s-6-93 data]# /opt/zookeeper/bin/zkServer.sh start

2.2: Install Kafka

Kafka official site: http://kafka.apache.org/

Kafka download mirror: https://mirrors.tuna.tsinghua.edu.cn/apache/kafka/

Note: this guide uses Kafka 2.2.0. Versions newer than 2.2.0 are not recommended here, because the third-party kafka-manager tool is used later.

    [root@k8s-6-93 ~]# wget https://archive.apache.org/dist/kafka/2.2.0/kafka_2.12-2.2.0.tgz
    [root@k8s-6-93 ~]# tar zxf kafka_2.12-2.2.0.tgz -C /opt/
    [root@k8s-6-93 ~]# ln -s /opt/kafka_2.12-2.2.0 /opt/kafka

2.3: Configure Kafka

    [root@k8s-6-93 ~]# mkdir -p /data/kafka/logs
    [root@k8s-6-93 ~]# cd /opt/kafka/config
    [root@k8s-6-93 config]# vi server.properties
    log.dirs=/data/kafka/logs
    zookeeper.connect=127.0.0.1:2181
    log.flush.interval.messages=10000
    log.flush.interval.ms=1000
    # Add the following two lines
    delete.topic.enable=true
    host.name=k8s-6-93.host.com

2.4: Start Kafka

    [root@k8s-6-93 kafka]# ./bin/kafka-server-start.sh -daemon config/server.properties
    [root@k8s-6-93 kafka]# netstat -nlput | grep 9092

3: Install kafka-manager

3.1: Pull the Docker image on the ops host

    [root@k8s-6-96 ~]# docker pull sheepkiller/kafka-manager:stable
    [root@k8s-6-96 ~]# docker tag 34627743836f harbor.auth.com/public/kafka-manager:stable
    [root@k8s-6-96 ~]# docker push harbor.auth.com/public/kafka-manager:stable

3.2: Prepare the resource manifests

    [root@k8s-6-96 ~]# mkdir /data/k8s-yaml/kafka-manager/
    [root@k8s-6-96 ~]# cd /data/k8s-yaml/kafka-manager/
    [root@k8s-6-96 kafka-manager]# cat deployment.yaml
    kind: Deployment
    apiVersion: extensions/v1beta1
    metadata:
      name: kafka-manager
      namespace: infra
      labels:
        name: kafka-manager
    spec:
      replicas: 1
      selector:
        matchLabels:
          name: kafka-manager
      template:
        metadata:
          labels:
            app: kafka-manager
            name: kafka-manager
        spec:
          containers:
          - name: kafka-manager
            image: harbor.auth.com/public/kafka-manager:stable
            ports:
            - containerPort: 9000
              protocol: TCP
            env:
            - name: ZK_HOSTS
              value: 192.168.6.93:2181
            - name: APPLICATION_SECRET
              value: letmein
            imagePullPolicy: IfNotPresent
          imagePullSecrets:
          - name: harbor
          restartPolicy: Always
          terminationGracePeriodSeconds: 30
          securityContext:
            runAsUser: 0
          schedulerName: default-scheduler
      strategy:
        type: RollingUpdate
        rollingUpdate:
          maxUnavailable: 1
          maxSurge: 1
      revisionHistoryLimit: 7
      progressDeadlineSeconds: 600

    [root@k8s-6-96 kafka-manager]# cat svc.yaml
    kind: Service
    apiVersion: v1
    metadata:
      name: kafka-manager
      namespace: infra
    spec:
      ports:
      - protocol: TCP
        port: 9000
        targetPort: 9000
      selector:
        app: kafka-manager
      clusterIP: None
      type: ClusterIP
      sessionAffinity: None

    [root@k8s-6-96 kafka-manager]# cat ingress.yaml
    kind: Ingress
    apiVersion: extensions/v1beta1
    metadata:
      name: kafka-manager
      namespace: infra
    spec:
      rules:
      - host: km.auth.com
        http:
          paths:
          - path: /
            backend:
              serviceName: kafka-manager
              servicePort: 9000

3.3: Apply the resource manifests

Apply the manifests on any compute node:

    [root@k8s-6-94 ~]# kubectl apply -f http://k8s-yaml.auth.com/kafka-manager/deployment.yaml
    deployment.extensions/kafka-manager created
    [root@k8s-6-94 ~]# kubectl apply -f http://k8s-yaml.auth.com/kafka-manager/svc.yaml
    service/kafka-manager created
    [root@k8s-6-94 ~]# kubectl apply -f http://k8s-yaml.auth.com/kafka-manager/ingress.yaml
    ingress.extensions/kafka-manager created

3.4: Add the DNS record on the DNS server

    [root@k8s-6-92 ~]# vi /var/named/auth.com.zone
    km                 A    192.168.6.89

Note: increment the zone's serial number by 1.

    [root@k8s-6-92 ~]# systemctl restart named

3.5: Open it in a browser and configure it

http://km.auth.com

4: Install filebeat

4.1: Build the Docker image

Official filebeat download page: https://www.elastic.co/cn/downloads/beats/filebeat

The long hex string 636fbb5c9951a8caba74a85bc55ac4ef776ddbd063c4b8471c4a1eee079e2bec14804dcd931baf6261cbc3713a41773fd9ea5b1018e07a1761a3bcef59805b8b in the Dockerfile is the SHA-512 checksum of the release tarball (the variable is named FILEBEAT_SHA1, but it is verified with sha512sum). To obtain it, choose the version you need on the download page and click the sha link; the text file that is downloaded contains the checksum.

    [root@k8s-6-96 ~]# mkdir /data/dockerfile/filebeat
    [root@k8s-6-96 ~]# cd /data/dockerfile/filebeat
    [root@k8s-6-96 filebeat]# cat Dockerfile
    FROM debian:jessie

    ENV FILEBEAT_VERSION=7.8.0 \
        FILEBEAT_SHA1=636fbb5c9951a8caba74a85bc55ac4ef776ddbd063c4b8471c4a1eee079e2bec14804dcd931baf6261cbc3713a41773fd9ea5b1018e07a1761a3bcef59805b8b

    # sha512sum -c expects two spaces between the checksum and the file name
    RUN set -x && \
      apt-get update && \
      apt-get install -y wget && \
      wget https://artifacts.elastic.co/downloads/beats/filebeat/filebeat-${FILEBEAT_VERSION}-linux-x86_64.tar.gz -O /opt/filebeat.tar.gz && \
      cd /opt && \
      echo "${FILEBEAT_SHA1}  filebeat.tar.gz" | sha512sum -c - && \
      tar xzvf filebeat.tar.gz && \
      cd filebeat-* && \
      cp filebeat /bin && \
      cd /opt && \
      rm -rf filebeat* && \
      apt-get purge -y wget && \
      apt-get autoremove -y && \
      apt-get clean && rm -rf /var/lib/apt/lists/* /tmp/* /var/tmp/*

    COPY docker-entrypoint.sh /
    ENTRYPOINT ["/docker-entrypoint.sh"]

    [root@k8s-6-96 filebeat]# cat docker-entrypoint.sh
    #!/bin/bash

    ENV=${ENV:-"test"}
    PROJ_NAME=${PROJ_NAME:-"no-define"}
    MULTILINE=${MULTILINE:-"^\d{2}"}

    cat > /etc/filebeat.yaml << EOF
    filebeat.inputs:
    - type: log
      fields_under_root: true
      fields:
        topic: logm-${PROJ_NAME}
      paths:
        - /logm/*.log
        - /logm/*/*.log
        - /logm/*/*/*.log
        - /logm/*/*/*/*.log
        - /logm/*/*/*/*/*.log
      scan_frequency: 120s
      max_bytes: 10485760
      multiline.pattern: '$MULTILINE'
      multiline.negate: true
      multiline.match: after
      multiline.max_lines: 100
    - type: log
      fields_under_root: true
      fields:
        topic: logu-${PROJ_NAME}
      paths:
        - /logu/*.log
        - /logu/*/*.log
        - /logu/*/*/*.log
        - /logu/*/*/*/*.log
        - /logu/*/*/*/*/*.log
        - /logu/*/*/*/*/*/*.log
    output.kafka:
      hosts: ["192.168.6.93:9092"]
      topic: k8s-fb-$ENV-%{[topic]}
      version: 2.0.0
      required_acks: 0
      max_message_bytes: 10485760
    EOF

    set -xe

    # If the user doesn't provide any command, run filebeat
    if [[ "$1" == "" ]]; then
        exec filebeat -c /etc/filebeat.yaml
    else
        # Otherwise allow the user to run arbitrary commands like bash
        exec "$@"
    fi

[root@k8s-6-96 filebeat]# chmod +x docker-entrypoint.sh

[root@k8s-6-96 filebeat]# docker build . -t harbor.auth.com/public/filebeat:v7.8.0

[root@k8s-6-96 filebeat]# docker push harbor.auth.com/public/filebeat:v7.8.0
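The `multiline` settings in docker-entrypoint.sh decide which lines are merged into one log event: with `multiline.negate: true` and `multiline.match: after`, every line that does not match the pattern is appended to the preceding line that does. The sketch below imitates that grouping for the default pattern `^\d{2}` (a line starting with two digits). It is an illustration only, not filebeat's implementation: `join_multiline` is a hypothetical name, the pattern is hard-coded as a shell glob, `multiline.max_lines` is not modelled, and merged lines are joined with ` | ` just to make the result visible.

```bash
#!/usr/bin/env bash
# Group stdin lines into events: a line starting with two digits opens a new
# event, any other line is appended to the current event.
join_multiline() {
  local line event=""
  while IFS= read -r line; do
    case $line in
      [0-9][0-9]*)
        # Pattern matched: flush the previous event and start a new one.
        if [ -n "$event" ]; then printf '%s\n' "$event"; fi
        event=$line
        ;;
      *)
        # No match: this is a continuation line (for example a stack trace).
        event="${event:+$event | }$line"
        ;;
    esac
  done
  if [ -n "$event" ]; then printf '%s\n' "$event"; fi
}
```

Feeding it a timestamped error line followed by stack trace lines yields a single event, which is why Java exceptions arrive in Kafka as one message instead of many.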

4.2: Modify the Tomcat Deployment and add a filebeat container to collect the logs

    [root@k8s-6-96 uap-admin]# cat dp.yaml
    kind: Deployment
    apiVersion: extensions/v1beta1
    metadata:
      name: gmrz-uap-admin
      namespace: system
      labels:
        name: gmrz-uap-admin
    spec:
      replicas: 1
      selector:
        matchLabels:
          name: gmrz-uap-admin
      template:
        metadata:
          labels:
            app: gmrz-uap-admin
            name: gmrz-uap-admin
        spec:
          containers:
          - name: gmrz-uap-admin
            image: harbor.auth.com/apps/uap-admin:v20200707_1628
            imagePullPolicy: IfNotPresent
            volumeMounts:
            - mountPath: /opt/logs/
              name: logm
            - mountPath: /opt/tomcat/conf/context.xml
              name: config-context
              subPath: context.xml
          - name: filebeat
            image: harbor.auth.com/public/filebeat:v7.8.0
            env:
            - name: ENV
              value: test
            - name: PROJ_NAME
              value: gmrz-uap-admin
            volumeMounts:
            - mountPath: /logm
              name: logm
          volumes:
          - emptyDir: {}
            name: logm
          - name: config-context
            configMap:
              name: gmrz-uap-config

    [root@k8s-6-96 uap-admin]# cat svc.yaml
    kind: Service
    apiVersion: v1
    metadata:
      name: gmrz-uap-admin
      namespace: system
    spec:
      ports:
      - protocol: TCP
        port: 8080
        targetPort: 8080
      selector:
        app: gmrz-uap-admin

    [root@k8s-6-96 uap-admin]# cat ingress.yaml
    kind: Ingress
    apiVersion: extensions/v1beta1
    metadata:
      name: gmrz-uap-admin
      namespace: system
    spec:
      rules:
      - host: uap-admin.auth.com
        http:
          paths:
          - path: /
            backend:
              serviceName: gmrz-uap-admin
              servicePort: 8080

4.3: Open http://km.auth.com in a browser

If the topic shows up in kafka-manager, log collection is working.

4.4: Verify the data

    [root@k8s-6-93 ~]# cd /opt/kafka/bin/
    [root@k8s-6-93 bin]# ./kafka-console-consumer.sh --bootstrap-server 192.168.6.93:9092 --topic k8s-fb-test-logm-gmrz-uap-admin --from-beginning
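The topic name consumed above follows from docker-entrypoint.sh: the output topic is `k8s-fb-$ENV-%{[topic]}`, and the `topic` field is `logm-${PROJ_NAME}` or `logu-${PROJ_NAME}` depending on the input. A minimal sketch that resolves the name with the same defaults as the entrypoint (`filebeat_topic` is a hypothetical helper, not part of filebeat):

```bash
#!/usr/bin/env bash
# filebeat_topic KIND
# KIND is "logm" or "logu". ENV and PROJ_NAME are read from the environment and
# fall back to the defaults used in docker-entrypoint.sh.
filebeat_topic() {
  local kind="$1"
  local env="${ENV:-test}"
  local proj="${PROJ_NAME:-no-define}"
  printf 'k8s-fb-%s-%s-%s\n' "$env" "$kind" "$proj"
}
```

With `ENV=test` and `PROJ_NAME=gmrz-uap-admin`, as set in the Deployment in 4.2, `filebeat_topic logm` gives the topic passed to `--topic` above.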

5: Install logstash

Official logstash image: https://hub.docker.com/_/logstash?tab=tags

5.1: Prepare the Docker image

Step 1: Pull the official image

    [root@k8s-6-96 ~]# docker pull logstash:7.8.0
    [root@k8s-6-96 ~]# docker images | grep logstash
    [root@k8s-6-96 ~]# docker tag 01979bbd06c9 harbor.auth.com/public/logstash:v7.8.0
    [root@k8s-6-96 ~]# docker push harbor.auth.com/public/logstash:v7.8.0

Step 2: Prepare the Dockerfile and the configuration file

    [root@k8s-6-96 uap-admin]# cd /data/dockerfile/logstash/
    [root@k8s-6-96 logstash]# cat Dockerfile
    FROM harbor.auth.com/public/logstash:v7.8.0
    ADD logstash.yml /usr/share/logstash/config
    [root@k8s-6-96 logstash]# cat logstash.yml
    http.host: "0.0.0.0"
    path.config: /etc/logstash
    xpack.monitoring.enabled: false

Step 3: Build the image and push it to the private registry. The tag has to match the image that is started in 5.2, so the custom image is built and pushed as harbor.auth.com/infra/logstash:v7.8.0.

    [root@k8s-6-96 logstash]# docker build . -t harbor.auth.com/infra/logstash:v7.8.0
    [root@k8s-6-96 logstash]# docker push harbor.auth.com/infra/logstash:v7.8.0

5.2: Start the Docker container

Step 1: Create the configuration file. Note that the date pattern in the index name uses lowercase dd (day of month); uppercase DD would mean day of year.

    [root@k8s-6-96 ~]# mkdir /etc/logstash/
    [root@k8s-6-96 ~]# cd /etc/logstash/
    [root@k8s-6-96 logstash]# cat logstash-test.conf
    input {
      kafka {
        bootstrap_servers => "192.168.6.93:9092"
        client_id => "192.168.6.96"
        consumer_threads => 4
        group_id => "k8s_test"
        topics_pattern => "k8s-fb-test-.*"
      }
    }

    filter {
      json {
        source => "message"
      }
    }

    output {
      elasticsearch {
        hosts => ["192.168.6.92:9200"]
        index => "k8s-test-%{+YYYY.MM.dd}"
      }
    }

Step 2: Start the logstash container

    [root@k8s-6-96 ~]# docker run -d --name logstash-test -v /etc/logstash:/etc/logstash harbor.auth.com/infra/logstash:v7.8.0 -f /etc/logstash/logstash-test.conf
    [root@k8s-6-96 ~]# docker ps -a | grep logstash

Step 3: Check the indices in Elasticsearch

    [root@k8s-6-96 ~]# curl 'http://192.168.6.92:9200/_cat/indices?v'
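Logstash writes one index per day, and the date suffix comes from a Joda-style pattern in which `dd` is the day of the month and `DD` is the day of the year. The sketch below shows which index name a daily `year.month.day` pattern should produce for a given date, which is handy when checking the `_cat/indices` output. It assumes GNU `date` (for the `-d` option), and `daily_index` is a hypothetical helper name.

```bash
#!/usr/bin/env bash
# daily_index PREFIX DAY
# Print the index name for DAY (YYYY-MM-DD), e.g. k8s-test-2020.07.08.
# Assumption: GNU date is available.
daily_index() {
  local prefix="$1" day="$2"
  printf '%s-%s\n' "$prefix" "$(date -u -d "$day" +%Y.%m.%d)"
}

# Example: the index expected for 8 July 2020.
daily_index k8s-test 2020-07-08
```

All of these names match the `k8s*` index template created in 1.8.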

6: Install Kibana

Official Kibana image: https://hub.docker.com/_/kibana?tab=tags

6.1: Prepare the Docker image

    [root@k8s-6-96 ~]# docker pull kibana:7.8.0
    [root@k8s-6-96 ~]# docker images
    [root@k8s-6-96 ~]# docker tag df0a0da46dd1 harbor.auth.com/infra/kibana:v7.8.0
    [root@k8s-6-96 ~]# docker push harbor.auth.com/infra/kibana:v7.8.0

6.2: Prepare the resource manifests

    [root@k8s-6-96 ~]# mkdir /data/k8s-yaml/kibana/
    [root@k8s-6-96 ~]# cd /data/k8s-yaml/kibana/
    [root@k8s-6-96 kibana]# cat cm.yaml
    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: kibana-config
      namespace: infra
    data:
      kibana.yml: |
        server.name: kibana
        server.host: "0"
        elasticsearch.hosts: [ "http://192.168.6.92:9200" ]
        monitoring.ui.container.elasticsearch.enabled: true

    [root@k8s-6-96 kibana]# cat dp.yaml
    kind: Deployment
    apiVersion: extensions/v1beta1
    metadata:
      name: kibana
      namespace: infra
      labels:
        name: kibana
    spec:
      replicas: 1
      selector:
        matchLabels:
          name: kibana
      template:
        metadata:
          labels:
            app: kibana
            name: kibana
        spec:
          volumes:
          - name: kibana-config
            configMap:
              name: kibana-config
          containers:
          - name: kibana
            image: harbor.auth.com/infra/kibana:v7.8.0
            imagePullPolicy: IfNotPresent
            volumeMounts:
            - name: kibana-config
              mountPath: /usr/share/kibana/config

    [root@k8s-6-96 kibana]# cat svc.yaml
    kind: Service
    apiVersion: v1
    metadata:
      name: kibana
      namespace: infra
    spec:
      ports:
      - protocol: TCP
        port: 5601
        targetPort: 5601
      selector:
        app: kibana
      clusterIP: None
      type: ClusterIP
      sessionAffinity: None

    [root@k8s-6-96 kibana]# cat ingress.yaml
    kind: Ingress
    apiVersion: extensions/v1beta1
    metadata:
      name: kibana
      namespace: infra
    spec:
      rules:
      - host: kibana.auth.com
        http:
          paths:
          - path: /
            backend:
              serviceName: kibana
              servicePort: 5601

6.3: Apply the resource manifests

Apply the manifests on any compute node:

    [root@k8s-6-94 ~]# kubectl apply -f http://k8s-yaml.auth.com/kibana/cm.yaml
    [root@k8s-6-94 ~]# kubectl apply -f http://k8s-yaml.auth.com/kibana/dp.yaml
    [root@k8s-6-94 ~]# kubectl apply -f http://k8s-yaml.auth.com/kibana/svc.yaml
    [root@k8s-6-94 ~]# kubectl apply -f http://k8s-yaml.auth.com/kibana/ingress.yaml

6.4: Add the DNS record on the DNS server

    [root@k8s-6-92 ~]# vi /var/named/auth.com.zone
    kibana             A    192.168.6.89

Note: increment the zone's serial number by 1.

    [root@k8s-6-92 ~]# systemctl restart named

6.5: Open http://kibana.auth.com in a browser and configure Kibana

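7: Using Kibana

Time picker

- Selects the time range of the logs
  - Quick
  - Absolute
  - Relative

Environment selector

- Selects the logs of the corresponding environment
  - k8s-test-
  - k8s-prod-

Project selector

- Corresponds to the PROJ_NAME value passed to filebeat
- Add a filter
- topic is ${PROJ_NAME}
  - dubbo-demo-service
  - dubbo-demo-web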
