Web Crawler Final Assignment

1.选一个自己感兴趣的主题或网站。(所有同学不能雷同)

2.用python 编写爬虫程序,从网络上爬取相关主题的数据。

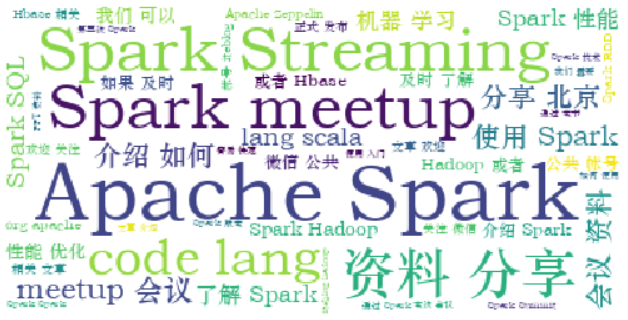

3.对爬了的数据进行文本分析,生成词云。

4.对文本分析结果进行解释说明。

5.写一篇完整的博客,描述上述实现过程、遇到的问题及解决办法、数据分析思想及结论。

6.最后提交爬取的全部数据、爬虫及数据分析源代码

import requests
from bs4 import BeautifulSoup as bs

# File that collects the scraped abstracts, one abstract per line
OUTPUT_FILE = 'D:\\python\\a.txt'

HEADERS = {
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/59.0.3071.115 Safari/537.36"}


def getHtml(url):
    # Send a browser-like User-Agent so the site serves the normal page
    resp = requests.get(url, headers=HEADERS, timeout=10)
    resp.raise_for_status()
    return resp.content.decode('utf-8')


def getUrls(pagehtml):
    soup = bs(pagehtml, 'html.parser')
    d = soup.select('.excerpt header h2 a')  # article title links
    # Save the URL of every article on the listing page
    urls = [j.get('href') for j in d]
    print(urls)
    return urls  # the original "return urls," returned a one-element tuple


def getDatailinfo(url):
    # Parse one listing page and append its article abstracts to OUTPUT_FILE
    try:
        html = getHtml(url)
    except requests.RequestException as err:
        # Report the failed page instead of silently swallowing every error
        print('failed to fetch', url, err)
        return
    soup = bs(html, "html.parser")
    e = soup.select('.excerpt .abstract')  # article abstracts
    f = soup.select('.auth-span')  # author, views, likes, etc. below each article
    zhaiyao = []  # abstracts, de-duplicated
    for i in e:
        text = i.get_text().strip()
        if text not in zhaiyao:
            zhaiyao.append(text)
            print(text)
    # Author / view count / like count for each article (collected, not used later)
    meta = [i.get_text().split() for i in f]
    # One abstract per line, so words from different abstracts do not run together
    with open(OUTPUT_FILE, 'a', encoding='utf-8') as out:
        for j in zhaiyao:
            out.write(j + '\n')


if __name__ == "__main__":
    firsturl = "https://www.iteblog.com/archives/category/spark/"
    # Listing pages 1 to 24 of the Spark category
    pageurls = [firsturl + 'page/' + str(num) + '/' for num in range(1, 25)]
    print(pageurls)
    for furl in pageurls:
        getDatailinfo(furl)
    for purl in pageurls:
        print('----------------------------------')
        pagehtml = getHtml(purl)
        # getUrls() prints the article links. The original then looped over the
        # characters of purl and called getAllinfo(), which this post never
        # defines, so that step is omitted here.
        urls = getUrls(pagehtml)
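The two CSS selectors can be checked without touching the site by running them on a small HTML snippet. The markup below is a hypothetical stand-in for an iteblog.com listing page: its `.excerpt` / `header h2 a` / `.abstract` structure is only inferred from the selectors in `getUrls` and `getDatailinfo`, and the helper name `parse_listing` is made up for this sketch.

```python
from bs4 import BeautifulSoup

# Hypothetical listing-page markup, shaped to match the selectors used by the crawler
SAMPLE_HTML = """
<article class="excerpt">
  <header><h2><a href="https://example.com/post-1">Post 1</a></h2></header>
  <p class="abstract"> First abstract. </p>
</article>
<article class="excerpt">
  <header><h2><a href="https://example.com/post-2">Post 2</a></h2></header>
  <p class="abstract">Second abstract.</p>
</article>
<article class="excerpt">
  <header><h2><a href="https://example.com/post-2">Post 2 (repeated)</a></h2></header>
  <p class="abstract">Second abstract.</p>
</article>
"""


def parse_listing(html):
    """Return (article links, de-duplicated abstracts) from one listing page."""
    soup = BeautifulSoup(html, 'html.parser')
    # Links are kept as-is (not de-duplicated), mirroring getUrls
    links = [a.get('href') for a in soup.select('.excerpt header h2 a')]
    abstracts = []
    for node in soup.select('.excerpt .abstract'):
        text = node.get_text().strip()
        if text not in abstracts:  # same de-duplication rule as getDatailinfo
            abstracts.append(text)
    return links, abstracts


links, abstracts = parse_listing(SAMPLE_HTML)
print(links)
print(abstracts)
```

Replacing SAMPLE_HTML with the output of getHtml() for a real page shows quickly whether the site's markup still matches the selectors: an empty list means it no longer does.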

Generating the word cloud

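import jieba
import matplotlib.pyplot as plt
from wordcloud import WordCloud

# Read all scraped abstracts as one string (jieba.lcut expects a str, not a list)
with open('D:\\python\\a.txt', 'r', encoding='utf-8') as fp:
    info = fp.read()
# Segment the Chinese text into words and join them with spaces for WordCloud
text = ' '.join(jieba.lcut(info))
# A Chinese font is required, otherwise the characters render as empty boxes
wc = WordCloud(font_path=r'C:\Windows\Fonts\STZHONGS.TTF', background_color='white', max_words=50)
wc.generate_from_text(text)
# The original also called wc.recolor(color_func=image_color), but image_color
# (normally an ImageColorGenerator built from a mask image) is never defined,
# so that line is dropped.
plt.imshow(wc, interpolation='bilinear')
plt.axis("off")
plt.show()
wc.to_file('dream.png')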
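A word cloud only shows relative sizes, so for step 4 (interpreting the results) it helps to print the actual top words and their counts. Below is a minimal sketch using `collections.Counter`, assuming a tokenizer is passed in: for the Chinese abstracts that would be `jieba.lcut`, while the example uses `str.split` on English text so it runs without jieba. The function name `top_words` and the stop-word set are hypothetical.

```python
from collections import Counter


def top_words(text, tokenize=str.split, stopwords=frozenset(), n=10, min_len=2):
    """Count tokens in text and return the n most common as (word, count) pairs.

    tokenize: any callable str -> list of str, e.g. jieba.lcut for Chinese text.
    Tokens shorter than min_len characters, and tokens in stopwords, are skipped.
    """
    counts = Counter(
        tok for tok in (t.strip() for t in tokenize(text))
        if len(tok) >= min_len and tok not in stopwords
    )
    return counts.most_common(n)


sample = "spark streaming and spark sql on yarn and spark"
result = top_words(sample, stopwords={"and", "on"}, n=3)
print(result)
```

For the real data, passing dict(top_words(info, tokenize=jieba.lcut, n=50)) to WordCloud.generate_from_frequencies() would draw the picture from exactly the words that were printed, so the list and the image stay consistent.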

浙公网安备 33010602011771号
