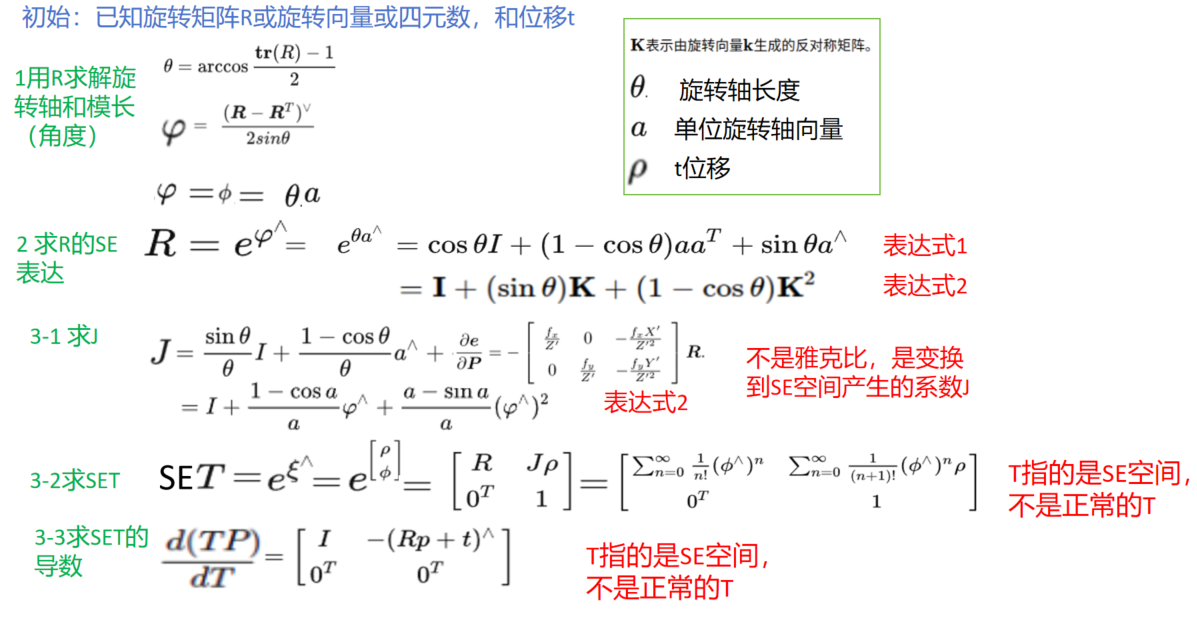

1 Theoretical mathematical foundations

https://www.cnblogs.com/gooutlook/p/17840013.html

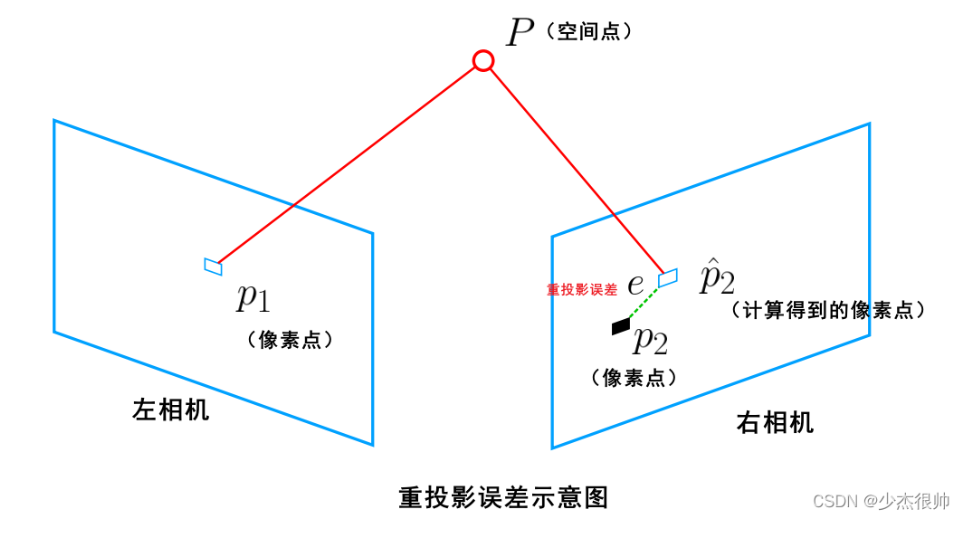

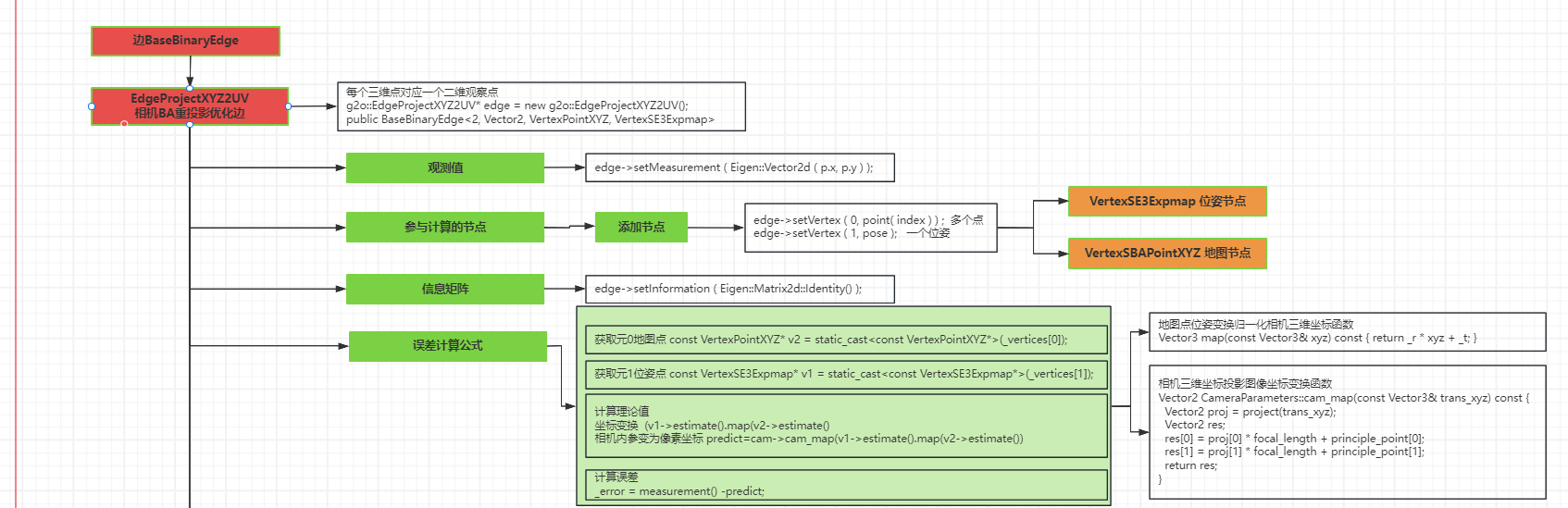

2 Principles of BA (bundle adjustment) optimization

https://www.cnblogs.com/gooutlook/p/17832164.html

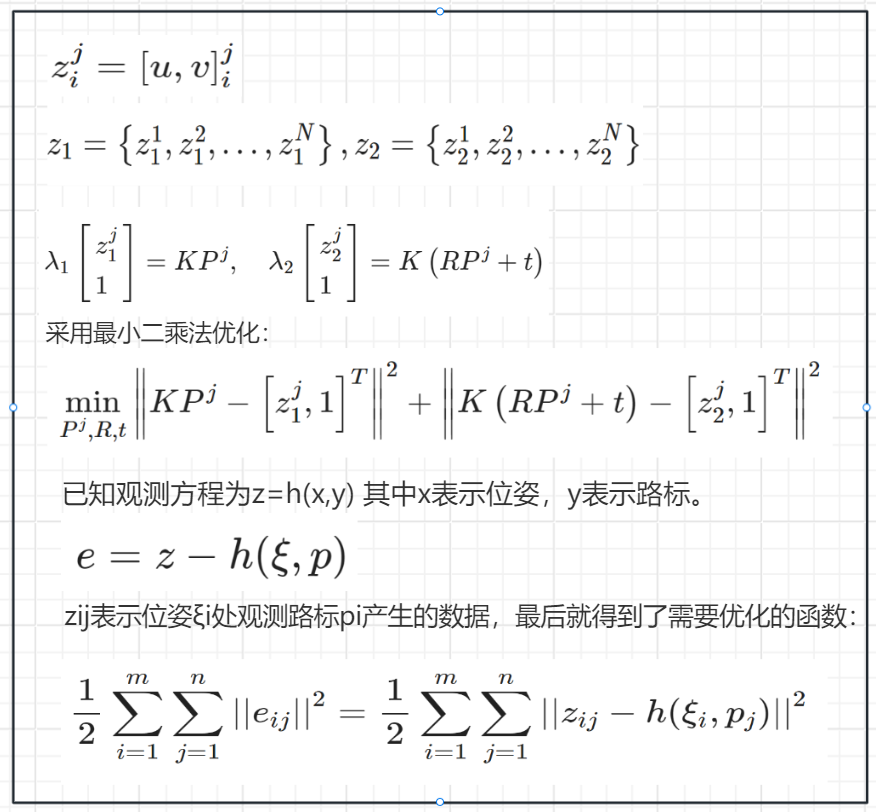

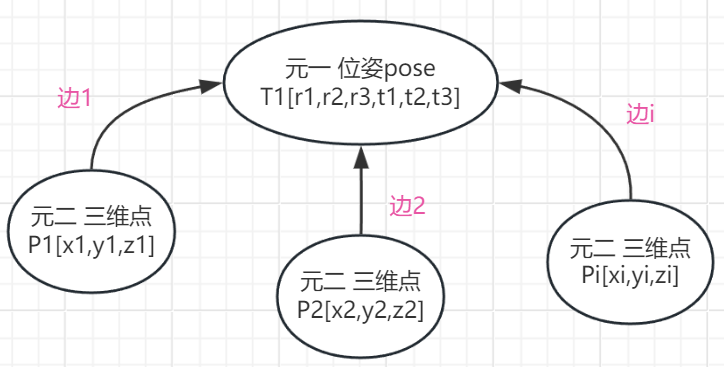

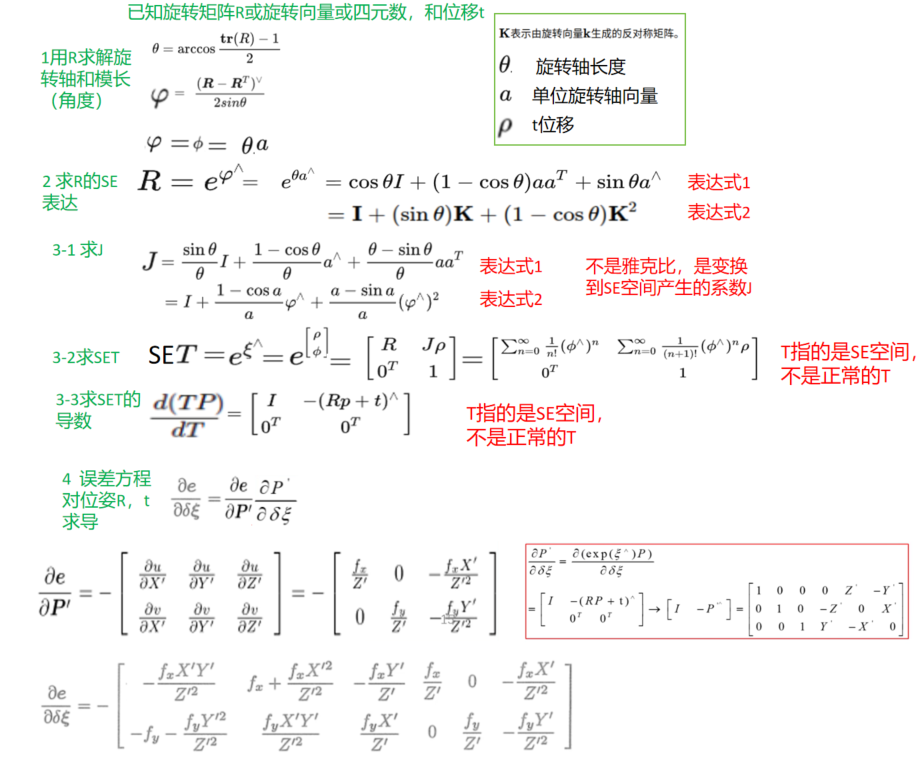

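Observation y_data[i]: the measured pixel coordinates (u_i, v_i).

Prediction y_predict[i]: the pixel coordinates obtained by projecting the 3D point P_i with the camera pose T_j = (R_j, t_j) and the intrinsics K:

$$[u_i, v_i, 1]^T = \frac{1}{z_i} K (R_j P_i + t_j)$$

where z_i is the depth of P_i in the camera frame (the third component of R_j P_i + t_j).

Residual: error = y_data[i] - y_predict[i]. This is the sign used by EdgeProjectXYZ2UV::computeError (measurement minus prediction); writing y_predict[i] - y_data[i] instead only flips the sign of the Jacobians.

How each quantity is passed to g2o:

y_data[i]: edge->setMeasurement ( Eigen::Vector2d ( p.x, p.y ) );

P_i, the 3D point vertex: point->setEstimate ( Eigen::Vector3d ( p.x, p.y, p.z ) );

T_j, the pose vertex: pose->setEstimate ( g2o::SE3Quat ( R, t ) ); the single-camera example in this post has only one pose, T_1.

K: the camera intrinsics, passed to the edges as a g2o::CameraParameters parameter.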
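To make the residual concrete, here is a minimal sketch in plain C++ (no Eigen, no g2o) that transforms a point with (R, t), projects it with a single focal length f and a principal point (cx, cy), as g2o::CameraParameters does, and returns measurement minus prediction. The types Pinhole, Vec2, Vec3, Mat3 and all function names are hypothetical, chosen only for illustration.

```cpp
#include <array>
#include <cassert>

// Hypothetical minimal types for illustration (not g2o's API).
using Vec2 = std::array<double, 2>;
using Vec3 = std::array<double, 3>;
using Mat3 = std::array<Vec3, 3>;  // row-major 3x3

// Single focal length plus principal point, like g2o::CameraParameters.
struct Pinhole {
  double f, cx, cy;
};

// Camera-frame point: P_c = R * P + t.
Vec3 transform(const Mat3& R, const Vec3& t, const Vec3& P) {
  Vec3 Pc{};
  for (int r = 0; r < 3; ++r)
    Pc[r] = R[r][0] * P[0] + R[r][1] * P[1] + R[r][2] * P[2] + t[r];
  return Pc;
}

// Pixel coordinates: divide by the depth z, then apply the intrinsics.
Vec2 project(const Pinhole& cam, const Vec3& Pc) {
  assert(Pc[2] > 0.0 && "point must lie in front of the camera");
  return {cam.f * Pc[0] / Pc[2] + cam.cx, cam.f * Pc[1] / Pc[2] + cam.cy};
}

// Residual with the sign convention of EdgeProjectXYZ2UV: measurement - prediction.
Vec2 reprojectionError(const Pinhole& cam, const Mat3& R, const Vec3& t,
                       const Vec3& P, const Vec2& observed) {
  const Vec2 predicted = project(cam, transform(R, t, P));
  return {observed[0] - predicted[0], observed[1] - predicted[1]};
}
```

With R = I, t = 0, f = 500 and (cx, cy) = (320, 240), the point (0.1, -0.2, 2.0) projects to (345, 190), so an observation of (346, 189) gives the residual (1, -1).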

1 Vertices

1-1 Pose vertex

vertex_se3_expmap.h

```cpp
// g2o - General Graph Optimization
#ifndef G2O_SBA_VERTEXSE3EXPMAP_H
#define G2O_SBA_VERTEXSE3EXPMAP_H

#include "g2o/core/base_vertex.h"
#include "g2o/types/slam3d/se3quat.h"
#include "g2o_types_sba_api.h"

namespace g2o {

/**
 * \brief SE3 Vertex parameterized internally with a transformation matrix
 * and externally with its exponential map
 */
class G2O_TYPES_SBA_API VertexSE3Expmap : public BaseVertex<6, SE3Quat> {
 public:
  EIGEN_MAKE_ALIGNED_OPERATOR_NEW

  VertexSE3Expmap();

  bool read(std::istream& is);
  bool write(std::ostream& os) const;

  void setToOriginImpl();
  void oplusImpl(const double* update_);
};

}  // namespace g2o

#endif
```

vertex_se3_expmap.cpp

```cpp
// g2o - General Graph Optimization
#include "vertex_se3_expmap.h"

#include "g2o/stuff/misc.h"

namespace g2o {

VertexSE3Expmap::VertexSE3Expmap() : BaseVertex<6, SE3Quat>() {}

bool VertexSE3Expmap::read(std::istream& is) {
  Vector7 est;
  internal::readVector(is, est);
  setEstimate(SE3Quat(est).inverse());
  return true;
}

bool VertexSE3Expmap::write(std::ostream& os) const {
  return internal::writeVector(os, estimate().inverse().toVector());
}

void VertexSE3Expmap::setToOriginImpl() { _estimate = SE3Quat(); }

void VertexSE3Expmap::oplusImpl(const double* update_) {
  Eigen::Map<const Vector6> update(update_);
  setEstimate(SE3Quat::exp(update) * estimate());
}

}  // namespace g2o
```

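Additional notes

Template arguments: 6 is the dimension of the minimal update, and SE3Quat is the type of the stored estimate.

An SE3Quat stores a rotation quaternion _r and a translation _t. It can be constructed from a rotation matrix R (3×3) and a translation t (3×1), from a quaternion and t, or from a 6-vector (translation first, then the vector part of the quaternion; see constructor 3 below). Note that the 6-dimensional update passed to SE3Quat::exp is ordered the other way round: rotation ω first, then translation υ.

Each update is a 6-dimensional increment, and the updated estimate is again an SE3Quat. The current estimate is read from the vertex with:

```cpp
SE3Quat T(vj->estimate());
```

The update itself is applied as:

```cpp
Eigen::Map<const Vector6> update(update_);
setEstimate(SE3Quat::exp(update) * estimate());
```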

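SE3Quat::exp(update) returns SE3Quat(Quaternion(R), V * upsilon), and this increment is multiplied onto the current estimate from the left.

operator* is overloaded to compose two transforms:

```cpp
inline SE3Quat operator*(const SE3Quat& tr2) const {
  SE3Quat result(*this);
  result._t += _r * tr2._t;
  result._r *= tr2._r;
  result.normalizeRotation();
  return result;
}
```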
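The composition rule in operator* (t = t1 + R1 t2, q = q1 q2) is what makes exp(update) * estimate() mean "apply the old transform, then the increment". The sketch below re-implements that rule with a hand-written Hamilton quaternion (the convention Eigen::Quaterniond uses) and can be used to check that (T1 * T2).map(p) equals T1.map(T2.map(p)). The Quat and SE3 types are hypothetical stand-ins, not g2o's classes.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Vec3 = std::array<double, 3>;

// Hypothetical unit quaternion, Hamilton convention (as used by Eigen::Quaterniond).
struct Quat {
  double w, x, y, z;
};

Vec3 cross(const Vec3& a, const Vec3& b) {
  return {a[1] * b[2] - a[2] * b[1], a[2] * b[0] - a[0] * b[2], a[0] * b[1] - a[1] * b[0]};
}

// Hamilton product a * b.
Quat mul(const Quat& a, const Quat& b) {
  return {a.w * b.w - a.x * b.x - a.y * b.y - a.z * b.z,
          a.w * b.x + a.x * b.w + a.y * b.z - a.z * b.y,
          a.w * b.y - a.x * b.z + a.y * b.w + a.z * b.x,
          a.w * b.z + a.x * b.y - a.y * b.x + a.z * b.w};
}

Quat normalized(const Quat& q) {
  const double n = std::sqrt(q.w * q.w + q.x * q.x + q.y * q.y + q.z * q.z);
  assert(n > 0.0);
  return {q.w / n, q.x / n, q.y / n, q.z / n};
}

// Rotate v by a unit quaternion: v' = v + 2w (u x v) + 2 u x (u x v), u = (x, y, z).
Vec3 rotate(const Quat& q, const Vec3& v) {
  const Vec3 u{q.x, q.y, q.z};
  const Vec3 uv = cross(u, v);
  const Vec3 uuv = cross(u, uv);
  return {v[0] + 2 * (q.w * uv[0] + uuv[0]), v[1] + 2 * (q.w * uv[1] + uuv[1]),
          v[2] + 2 * (q.w * uv[2] + uuv[2])};
}

// Hypothetical stand-in for SE3Quat: rotation r, translation t.
struct SE3 {
  Quat r;
  Vec3 t;

  // Same as SE3Quat::map: r * p + t.
  Vec3 map(const Vec3& p) const {
    const Vec3 rp = rotate(r, p);
    return {rp[0] + t[0], rp[1] + t[1], rp[2] + t[2]};
  }

  // Same rule as SE3Quat::operator*: t = t1 + r1 * t2, r = r1 * r2 (renormalized).
  SE3 operator*(const SE3& other) const {
    SE3 result(*this);
    const Vec3 rt = rotate(r, other.t);
    for (int i = 0; i < 3; ++i) result.t[i] += rt[i];
    result.r = normalized(mul(r, other.r));
    return result;
  }
};
```

Since the estimate maps world points into the camera frame and the increment sits on the left, exp(update) * estimate() perturbs the pose in the camera frame; the pose Jacobian in linearizeOplus() is derived for exactly this left perturbation.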

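The updated estimates are then used when the edge recomputes its error:

```cpp
_error = measurement() - cam->cam_map(v1->estimate().map(v2->estimate()));
```

Here v1->estimate() is an SE3Quat (the camera pose), while v2->estimate() is a Vector3 (the 3D point). SE3Quat::map() applies the rigid transform to the point:

```cpp
Vector3 map(const Vector3& xyz) const { return _r * xyz + _t; }
```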

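The SE3Quat class

Member functions

1 Construction

Constructor 1: from a rotation matrix R and a translation t

```cpp
SE3Quat(const Matrix3& R, const Vector3& t) : _r(Quaternion(R)), _t(t) {
  normalizeRotation();
}
```

Constructor 2: from a quaternion q and a translation t

```cpp
SE3Quat(const Quaternion& q, const Vector3& t) : _r(q), _t(t) {
  normalizeRotation();
}
```

Constructor 3: from a 6- or 7-dimensional vector. With 6 entries, the first three become _t and the last three become the vector part (x, y, z) of the quaternion _r; w is then recovered as the non-negative root of w² = 1 - (x² + y² + z²), or, if the vector part has norm greater than 1, it is normalized and w stays 0. With 7 entries, the layout is (tx, ty, tz, qx, qy, qz, qw).

```cpp
template <typename Derived>
explicit SE3Quat(const Eigen::MatrixBase<Derived>& v) {
  assert((v.size() == 6 || v.size() == 7) &&
         "Vector dimension does not match");
  if (v.size() == 6) {
    for (int i = 0; i < 3; i++) {
      _t[i] = v[i];
      _r.coeffs()(i) = v[i + 3];
    }
    _r.w() = 0.;  // recover the positive w
    if (_r.norm() > 1.) {
      _r.normalize();
    } else {
      double w2 = cst(1.) - _r.squaredNorm();
      _r.w() = (w2 < cst(0.)) ? cst(0.) : std::sqrt(w2);
    }
  } else if (v.size() == 7) {
    int idx = 0;
    for (int i = 0; i < 3; ++i, ++idx) _t(i) = v(idx);
    for (int i = 0; i < 4; ++i, ++idx) _r.coeffs()(i) = v(idx);
    normalizeRotation();
  }
}
```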
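The 6-vector branch of constructor 3 stores only the vector part of the quaternion and relies on the convention w ≥ 0 (q and -q describe the same rotation, so nothing is lost). A minimal stand-alone sketch of that recovery step follows; the Quat struct and the fromVector6 function are hypothetical names, not part of g2o.

```cpp
#include <array>
#include <cassert>
#include <cmath>

// Hypothetical quaternion type; g2o itself uses Eigen::Quaterniond.
struct Quat {
  double w, x, y, z;
};

// Mirrors the 6-vector branch of SE3Quat's constructor:
// v = (tx, ty, tz, qx, qy, qz), with w recovered under the convention w >= 0.
void fromVector6(const std::array<double, 6>& v, std::array<double, 3>& t, Quat& q) {
  t = {v[0], v[1], v[2]};
  q = {0.0, v[3], v[4], v[5]};
  const double n2 = q.x * q.x + q.y * q.y + q.z * q.z;
  if (n2 > 1.0) {
    // Vector part longer than 1: normalize it and keep w = 0.
    const double n = std::sqrt(n2);
    q.x /= n;
    q.y /= n;
    q.z /= n;
  } else {
    // Otherwise choose the non-negative w that makes the quaternion unit length.
    const double w2 = 1.0 - n2;
    q.w = (w2 < 0.0) ? 0.0 : std::sqrt(w2);
  }
}
```

For example, the vector part (0, 0, 0.6) yields w = 0.8, while (0, 0, 2) is normalized to (0, 0, 1) with w = 0.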

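2 Transforming a point

```cpp
Vector3 map(const Vector3& xyz) const { return _r * xyz + _t; }
```

Here _r and _t are the rotation and translation stored by the constructors above. (The form s * (r * xyz) + t belongs to g2o's Sim3, which carries an extra scale s; SE3Quat has no scale.)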

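3 Multiplicative update (exponential map)

SE3Quat::exp maps a 6-dimensional increment (ω, υ) to an SE3Quat. With θ = |ω| and Ω = [ω]× (the skew-symmetric matrix of ω), the closed form is $R = I + \frac{\sin\theta}{\theta}\Omega + \frac{1-\cos\theta}{\theta^2}\Omega^2$ and $V = I + \frac{1-\cos\theta}{\theta^2}\Omega + \frac{\theta-\sin\theta}{\theta^3}\Omega^2$; for θ < 1e-5 the code switches to the series $R \approx I + \Omega + \frac{1}{2}\Omega^2$, $V \approx I + \frac{1}{2}\Omega + \frac{1}{6}\Omega^2$. The translation of the result is V υ.

```cpp
static SE3Quat exp(const Vector6& update) {
  Vector3 omega;
  for (int i = 0; i < 3; i++) omega[i] = update[i];
  Vector3 upsilon;
  for (int i = 0; i < 3; i++) upsilon[i] = update[i + 3];

  double theta = omega.norm();
  Matrix3 Omega = skew(omega);

  Matrix3 R;
  Matrix3 V;
  if (theta < cst(0.00001)) {
    Matrix3 Omega2 = Omega * Omega;
    R = (Matrix3::Identity() + Omega + cst(0.5) * Omega2);
    V = (Matrix3::Identity() + cst(0.5) * Omega + cst(1.) / cst(6.) * Omega2);
  } else {
    Matrix3 Omega2 = Omega * Omega;
    R = (Matrix3::Identity() + std::sin(theta) / theta * Omega +
         (1 - std::cos(theta)) / (theta * theta) * Omega2);
    V = (Matrix3::Identity() +
         (1 - std::cos(theta)) / (theta * theta) * Omega +
         (theta - std::sin(theta)) / (std::pow(theta, 3)) * Omega2);
  }
  return SE3Quat(Quaternion(R), V * upsilon);
}
```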
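As a cross-check of the formulas in SE3Quat::exp, here is a dependency-free C++ sketch of the same map: R from the Rodrigues formula, V from the matching series, and the second-order branch below θ = 1e-5. The names se3Exp, RigidTransform, skew, mul and identityPlus are hypothetical; only the update ordering (ω, υ) and the coefficients are taken from the g2o code above.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Vec3 = std::array<double, 3>;
using Mat3 = std::array<Vec3, 3>;  // row-major
using Vec6 = std::array<double, 6>;

// Hypothetical result type: rotation matrix plus translation.
struct RigidTransform {
  Mat3 R;
  Vec3 t;
};

Mat3 skew(const Vec3& w) {
  return Mat3{{{0.0, -w[2], w[1]}, {w[2], 0.0, -w[0]}, {-w[1], w[0], 0.0}}};
}

Mat3 mul(const Mat3& A, const Mat3& B) {
  Mat3 C{};
  for (int i = 0; i < 3; ++i)
    for (int j = 0; j < 3; ++j)
      for (int k = 0; k < 3; ++k) C[i][j] += A[i][k] * B[k][j];
  return C;
}

// Returns I + a * A + b * B.
Mat3 identityPlus(double a, const Mat3& A, double b, const Mat3& B) {
  Mat3 M{};
  for (int i = 0; i < 3; ++i)
    for (int j = 0; j < 3; ++j) M[i][j] = (i == j ? 1.0 : 0.0) + a * A[i][j] + b * B[i][j];
  return M;
}

// update = (omega, upsilon): rotation first, translation second, as in SE3Quat::exp.
RigidTransform se3Exp(const Vec6& update) {
  const Vec3 omega{update[0], update[1], update[2]};
  const Vec3 upsilon{update[3], update[4], update[5]};
  const double theta = std::sqrt(omega[0] * omega[0] + omega[1] * omega[1] + omega[2] * omega[2]);
  assert(std::isfinite(theta));
  const Mat3 Omega = skew(omega);
  const Mat3 Omega2 = mul(Omega, Omega);

  // R = I + a*Omega + b*Omega^2 and V = I + b*Omega + c*Omega^2.
  double a, b, c;
  if (theta < 1e-5) {  // second-order series, same threshold as g2o
    a = 1.0;
    b = 0.5;
    c = 1.0 / 6.0;
  } else {
    a = std::sin(theta) / theta;
    b = (1.0 - std::cos(theta)) / (theta * theta);
    c = (theta - std::sin(theta)) / (theta * theta * theta);
  }
  const Mat3 R = identityPlus(a, Omega, b, Omega2);
  const Mat3 V = identityPlus(b, Omega, c, Omega2);

  Vec3 t{};
  for (int i = 0; i < 3; ++i)
    for (int k = 0; k < 3; ++k) t[i] += V[i][k] * upsilon[k];
  return {R, t};
}
```

For ω = (0, 0, π/2) the first column of R is (cos θ, sin θ, 0) ≈ (0, 1, 0); for a pure translation update the rotation stays the identity, V reduces to I, and t equals υ.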

1-2 Map point vertex

Simplified version (older API):

```cpp
class G2O_TYPES_SBA_API VertexSBAPointXYZ : public BaseVertex<3, Vector3>
{
 public:
  EIGEN_MAKE_ALIGNED_OPERATOR_NEW
  VertexSBAPointXYZ();
  virtual bool read(std::istream& is);
  virtual bool write(std::ostream& os) const;

  virtual void setToOriginImpl() {
    _estimate.fill(0);
  }

  virtual void oplusImpl(const number_t* update)
  {
    Eigen::Map<const Vector3> v(update);
    _estimate += v;
  }
};
```

Full version (current API):

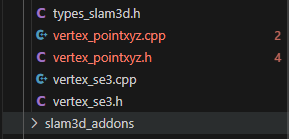

vertex_pointxyz.h

```cpp
// g2o - General Graph Optimization
#ifndef G2O_VERTEX_TRACKXYZ_H_
#define G2O_VERTEX_TRACKXYZ_H_

#include "g2o/core/base_vertex.h"
#include "g2o/core/hyper_graph_action.h"
#include "g2o_types_slam3d_api.h"

namespace g2o {

/**
 * \brief Vertex for a tracked point in space
 */
class G2O_TYPES_SLAM3D_API VertexPointXYZ : public BaseVertex<3, Vector3> {
 public:
  EIGEN_MAKE_ALIGNED_OPERATOR_NEW;
  VertexPointXYZ() {}
  virtual bool read(std::istream& is);
  virtual bool write(std::ostream& os) const;

  virtual void setToOriginImpl() { _estimate.fill(0.); }

  virtual void oplusImpl(const double* update_) {
    Eigen::Map<const Vector3> update(update_);
    _estimate += update;
  }

  virtual bool setEstimateDataImpl(const double* est) {
    Eigen::Map<const Vector3> estMap(est);
    _estimate = estMap;
    return true;
  }

  virtual bool getEstimateData(double* est) const {
    Eigen::Map<Vector3> estMap(est);
    estMap = _estimate;
    return true;
  }

  virtual int estimateDimension() const { return Dimension; }

  virtual bool setMinimalEstimateDataImpl(const double* est) {
    _estimate = Eigen::Map<const Vector3>(est);
    return true;
  }

  virtual bool getMinimalEstimateData(double* est) const {
    Eigen::Map<Vector3> v(est);
    v = _estimate;
    return true;
  }

  virtual int minimalEstimateDimension() const { return Dimension; }
};

class G2O_TYPES_SLAM3D_API VertexPointXYZWriteGnuplotAction
    : public WriteGnuplotAction {
 public:
  VertexPointXYZWriteGnuplotAction();
  virtual HyperGraphElementAction* operator()(
      HyperGraph::HyperGraphElement* element,
      HyperGraphElementAction::Parameters* params_);
};

#ifdef G2O_HAVE_OPENGL
/**
 * \brief visualize a 3D point
 */
class VertexPointXYZDrawAction : public DrawAction {
 public:
  VertexPointXYZDrawAction();
  virtual HyperGraphElementAction* operator()(
      HyperGraph::HyperGraphElement* element,
      HyperGraphElementAction::Parameters* params_);

 protected:
  FloatProperty* _pointSize;
  virtual bool refreshPropertyPtrs(
      HyperGraphElementAction::Parameters* params_);
};
#endif

}  // namespace g2o

#endif
```

vertex_pointxyz.cpp

```cpp
// g2o - General Graph Optimization
#include "vertex_pointxyz.h"

#include <stdio.h>

#ifdef G2O_HAVE_OPENGL
#include "g2o/stuff/opengl_primitives.h"
#include "g2o/stuff/opengl_wrapper.h"
#endif

#include <typeinfo>

namespace g2o {

bool VertexPointXYZ::read(std::istream& is) {
  return internal::readVector(is, _estimate);
}

bool VertexPointXYZ::write(std::ostream& os) const {
  return internal::writeVector(os, estimate());
}

#ifdef G2O_HAVE_OPENGL
VertexPointXYZDrawAction::VertexPointXYZDrawAction()
    : DrawAction(typeid(VertexPointXYZ).name()), _pointSize(nullptr) {}

bool VertexPointXYZDrawAction::refreshPropertyPtrs(
    HyperGraphElementAction::Parameters* params_) {
  if (!DrawAction::refreshPropertyPtrs(params_)) return false;
  if (_previousParams) {
    _pointSize = _previousParams->makeProperty<FloatProperty>(
        _typeName + "::POINT_SIZE", 1.);
  } else {
    _pointSize = nullptr;
  }
  return true;
}

HyperGraphElementAction* VertexPointXYZDrawAction::operator()(
    HyperGraph::HyperGraphElement* element,
    HyperGraphElementAction::Parameters* params) {
  if (typeid(*element).name() != _typeName) return nullptr;
  initializeDrawActionsCache();
  refreshPropertyPtrs(params);
  if (!_previousParams) return this;

  if (_show && !_show->value()) return this;
  VertexPointXYZ* that = static_cast<VertexPointXYZ*>(element);

  glPushMatrix();
  glPushAttrib(GL_ENABLE_BIT | GL_POINT_BIT);
  glDisable(GL_LIGHTING);
  glColor3f(LANDMARK_VERTEX_COLOR);
  float ps = _pointSize ? _pointSize->value() : 1.f;
  glTranslatef((float)that->estimate()(0), (float)that->estimate()(1),
               (float)that->estimate()(2));
  opengl::drawPoint(ps);
  glPopAttrib();
  drawCache(that->cacheContainer(), params);
  drawUserData(that->userData(), params);
  glPopMatrix();
  return this;
}
#endif

VertexPointXYZWriteGnuplotAction::VertexPointXYZWriteGnuplotAction()
    : WriteGnuplotAction(typeid(VertexPointXYZ).name()) {}

HyperGraphElementAction* VertexPointXYZWriteGnuplotAction::operator()(
    HyperGraph::HyperGraphElement* element,
    HyperGraphElementAction::Parameters* params_) {
  if (typeid(*element).name() != _typeName) return nullptr;
  WriteGnuplotAction::Parameters* params =
      static_cast<WriteGnuplotAction::Parameters*>(params_);
  if (!params->os) {
    return nullptr;
  }

  VertexPointXYZ* v = static_cast<VertexPointXYZ*>(element);
  *(params->os) << v->estimate().x() << " " << v->estimate().y() << " "
                << v->estimate().z() << " " << std::endl;
  return this;
}

}  // namespace g2o
```

2 Edges

Binary edge

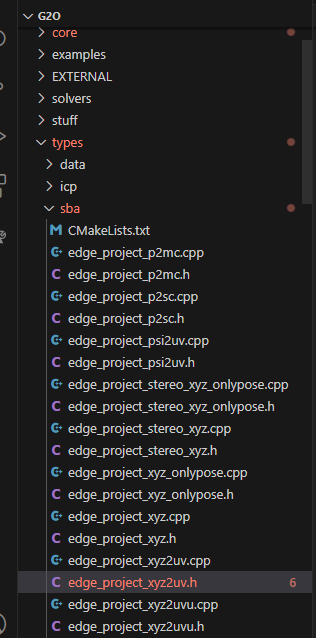

edge_project_xyz2uv.h

```cpp
// g2o - General Graph Optimization
#ifndef G2O_SBA_EDGEPROJECTXYZ2UV_H
#define G2O_SBA_EDGEPROJECTXYZ2UV_H

#include "g2o/core/base_binary_edge.h"
#include "g2o/types/slam3d/vertex_pointxyz.h"
#include "g2o_types_sba_api.h"
#include "parameter_cameraparameters.h"
#include "vertex_se3_expmap.h"

namespace g2o {

class G2O_TYPES_SBA_API EdgeProjectXYZ2UV
    : public BaseBinaryEdge<2, Vector2, VertexPointXYZ, VertexSE3Expmap> {
 public:
  EIGEN_MAKE_ALIGNED_OPERATOR_NEW;
  EdgeProjectXYZ2UV();
  bool read(std::istream& is);
  bool write(std::ostream& os) const;
  void computeError();
  virtual void linearizeOplus();

 public:
  CameraParameters* _cam;  // TODO make protected member?
};

}  // namespace g2o

#endif
```

edge_project_xyz2uv.cpp

```cpp
// g2o - General Graph Optimization
#include "edge_project_xyz2uv.h"

namespace g2o {

EdgeProjectXYZ2UV::EdgeProjectXYZ2UV()
    : BaseBinaryEdge<2, Vector2, VertexPointXYZ, VertexSE3Expmap>() {
  _cam = 0;
  resizeParameters(1);
  installParameter(_cam, 0);
}

bool EdgeProjectXYZ2UV::read(std::istream& is) {
  readParamIds(is);
  internal::readVector(is, _measurement);
  return readInformationMatrix(is);
}

bool EdgeProjectXYZ2UV::write(std::ostream& os) const {
  writeParamIds(os);
  internal::writeVector(os, measurement());
  return writeInformationMatrix(os);
}

void EdgeProjectXYZ2UV::computeError() {
  const VertexSE3Expmap* v1 = static_cast<const VertexSE3Expmap*>(_vertices[1]);
  const VertexPointXYZ* v2 = static_cast<const VertexPointXYZ*>(_vertices[0]);
  const CameraParameters* cam =
      static_cast<const CameraParameters*>(parameter(0));
  _error = measurement() - cam->cam_map(v1->estimate().map(v2->estimate()));
}
```

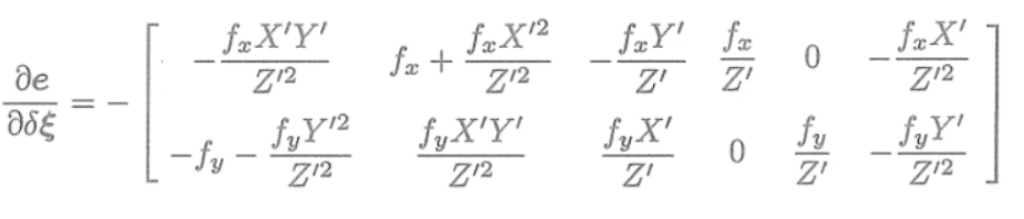

```cpp
void EdgeProjectXYZ2UV::linearizeOplus() {
  VertexSE3Expmap* vj = static_cast<VertexSE3Expmap*>(_vertices[1]);
  SE3Quat T(vj->estimate());
  VertexPointXYZ* vi = static_cast<VertexPointXYZ*>(_vertices[0]);
  Vector3 xyz = vi->estimate();
  Vector3 xyz_trans = T.map(xyz);

  double x = xyz_trans[0];
  double y = xyz_trans[1];
  double z = xyz_trans[2];
  double z_2 = z * z;

  const CameraParameters* cam =
      static_cast<const CameraParameters*>(parameter(0));

  Eigen::Matrix<double, 2, 3, Eigen::ColMajor> tmp;
  tmp(0, 0) = cam->focal_length;
  tmp(0, 1) = 0;
  tmp(0, 2) = -x / z * cam->focal_length;

  tmp(1, 0) = 0;
  tmp(1, 1) = cam->focal_length;
  tmp(1, 2) = -y / z * cam->focal_length;

  _jacobianOplusXi = -1. / z * tmp * T.rotation().toRotationMatrix();

  _jacobianOplusXj(0, 0) = x * y / z_2 * cam->focal_length;
  _jacobianOplusXj(0, 1) = -(1 + (x * x / z_2)) * cam->focal_length;
  _jacobianOplusXj(0, 2) = y / z * cam->focal_length;
  _jacobianOplusXj(0, 3) = -1. / z * cam->focal_length;
  _jacobianOplusXj(0, 4) = 0;
  _jacobianOplusXj(0, 5) = x / z_2 * cam->focal_length;

  _jacobianOplusXj(1, 0) = (1 + y * y / z_2) * cam->focal_length;
  _jacobianOplusXj(1, 1) = -x * y / z_2 * cam->focal_length;
  _jacobianOplusXj(1, 2) = -x / z * cam->focal_length;
  _jacobianOplusXj(1, 3) = 0;
  _jacobianOplusXj(1, 4) = -1. / z * cam->focal_length;
  _jacobianOplusXj(1, 5) = y / z_2 * cam->focal_length;
}

}  // namespace g2o
```
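A common way to validate hand-derived Jacobians such as those in linearizeOplus() is to compare them against central finite differences. The sketch below copies the analytic entries above, perturbs the point additively (as VertexPointXYZ::oplusImpl does) and the pose by a left-multiplied exp(δ) (as VertexSE3Expmap::oplusImpl does), and returns the largest discrepancy. Because the step is tiny (h = 1e-6), exp(δ) is evaluated with the same second-order series SE3Quat::exp uses for rotation angles below 1e-5. All type and function names here are hypothetical.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Vec2 = std::array<double, 2>;
using Vec3 = std::array<double, 3>;
using Vec6 = std::array<double, 6>;
using Mat3 = std::array<Vec3, 3>;    // row-major rotation matrix
using Jac2x3 = std::array<Vec3, 2>;  // d(error)/d(point)
using Jac2x6 = std::array<Vec6, 2>;  // d(error)/d(pose), columns ordered (omega, upsilon)

struct Cam {  // hypothetical: single focal length + principal point
  double f, cx, cy;
};

Vec3 cross(const Vec3& a, const Vec3& b) {
  return {a[1] * b[2] - a[2] * b[1], a[2] * b[0] - a[0] * b[2], a[0] * b[1] - a[1] * b[0]};
}

Vec3 transform(const Mat3& R, const Vec3& t, const Vec3& P) {
  Vec3 out{};
  for (int i = 0; i < 3; ++i) out[i] = R[i][0] * P[0] + R[i][1] * P[1] + R[i][2] * P[2] + t[i];
  return out;
}

// Residual as in computeError(): measurement minus projection of a camera-frame point.
Vec2 residual(const Cam& c, const Vec2& meas, const Vec3& Pc) {
  return {meas[0] - (c.f * Pc[0] / Pc[2] + c.cx), meas[1] - (c.f * Pc[1] / Pc[2] + c.cy)};
}

// exp(d) applied to a camera-frame point with the series SE3Quat::exp uses for
// |omega| < 1e-5: R = I + W + W^2/2, V = I + W/2 + W^2/6, where W p = omega x p.
Vec3 applySmallExp(const Vec6& d, const Vec3& p) {
  const Vec3 w{d[0], d[1], d[2]}, u{d[3], d[4], d[5]};
  const Vec3 wp = cross(w, p), wwp = cross(w, wp), wu = cross(w, u), wwu = cross(w, wu);
  Vec3 out{};
  for (int i = 0; i < 3; ++i)
    out[i] = p[i] + wp[i] + 0.5 * wwp[i] + u[i] + 0.5 * wu[i] + wwu[i] / 6.0;
  return out;
}

// Analytic Jacobians, entry by entry as in EdgeProjectXYZ2UV::linearizeOplus().
void analyticJacobians(const Cam& c, const Mat3& R, const Vec3& Pc, Jac2x3& Ji, Jac2x6& Jj) {
  const double x = Pc[0], y = Pc[1], z = Pc[2], z2 = z * z, f = c.f;
  const double tmp[2][3] = {{f, 0.0, -x / z * f}, {0.0, f, -y / z * f}};
  for (int r = 0; r < 2; ++r)
    for (int col = 0; col < 3; ++col) {
      Ji[r][col] = 0.0;
      for (int k = 0; k < 3; ++k) Ji[r][col] += -1.0 / z * tmp[r][k] * R[k][col];
    }
  Jj[0] = {x * y / z2 * f, -(1 + x * x / z2) * f, y / z * f, -1.0 / z * f, 0.0, x / z2 * f};
  Jj[1] = {(1 + y * y / z2) * f, -x * y / z2 * f, -x / z * f, 0.0, -1.0 / z * f, y / z2 * f};
}

// Largest absolute gap between the analytic and central-difference Jacobians.
double maxJacobianError(const Cam& c, const Mat3& R, const Vec3& t, const Vec3& P,
                        const Vec2& meas) {
  const double h = 1e-6;
  const Vec3 Pc = transform(R, t, P);
  assert(Pc[2] > 0.0 && "point must lie in front of the camera");
  Jac2x3 Ji{};
  Jac2x6 Jj{};
  analyticJacobians(c, R, Pc, Ji, Jj);
  double worst = 0.0;
  for (int k = 0; k < 3; ++k) {  // point: additive update
    Vec3 Pp = P, Pm = P;
    Pp[k] += h;
    Pm[k] -= h;
    const Vec2 ep = residual(c, meas, transform(R, t, Pp));
    const Vec2 em = residual(c, meas, transform(R, t, Pm));
    for (int r = 0; r < 2; ++r)
      worst = std::fmax(worst, std::fabs((ep[r] - em[r]) / (2 * h) - Ji[r][k]));
  }
  for (int k = 0; k < 6; ++k) {  // pose: exp(delta) * T, i.e. exp(delta) applied to P_c
    Vec6 dp{}, dm{};
    dp[k] = h;
    dm[k] = -h;
    const Vec2 ep = residual(c, meas, applySmallExp(dp, Pc));
    const Vec2 em = residual(c, meas, applySmallExp(dm, Pc));
    for (int r = 0; r < 2; ++r)
      worst = std::fmax(worst, std::fabs((ep[r] - em[r]) / (2 * h) - Jj[r][k]));
  }
  return worst;
}
```

If the residual sign or the (ω, υ) column order were changed without updating the Jacobian, the reported gap would jump from roundoff level to the size of the Jacobian entries themselves.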

Residual

Usage example

https://github.com/gaoxia

main.cpp (built as the pose_estimation_3d2d target in the CMakeLists.txt below)

```cpp
#include <iostream>
#include <vector>
#include <algorithm>
#include <chrono>
#include <cstdio>
#include <memory>

#include <opencv2/core/core.hpp>
#include <opencv2/features2d/features2d.hpp>
#include <opencv2/highgui/highgui.hpp>
#include <opencv2/calib3d/calib3d.hpp>

#include <Eigen/Core>
#include <Eigen/Geometry>

#include <g2o/core/base_vertex.h>
#include <g2o/core/base_unary_edge.h>
#include <g2o/core/block_solver.h>
#include <g2o/core/optimization_algorithm_levenberg.h>
#include <g2o/core/sparse_optimizer.h>
#include <g2o/solvers/csparse/linear_solver_csparse.h>
#include <g2o/types/sba/types_six_dof_expmap.h>

using namespace std;
using namespace cv;

void find_feature_matches (
    const Mat& img_1, const Mat& img_2,
    std::vector<KeyPoint>& keypoints_1,
    std::vector<KeyPoint>& keypoints_2,
    std::vector< DMatch >& matches );

// Convert pixel coordinates to normalized camera coordinates
Point2d pixel2cam ( const Point2d& p, const Mat& K );

void bundleAdjustment (
    const vector<Point3f> points_3d,
    const vector<Point2f> points_2d,
    const Mat& K,
    Mat& R, Mat& t
);

int main ( int argc, char** argv )
{
    if ( argc != 5 )
    {
        cout<<"usage: pose_estimation_3d2d img1 img2 depth1 depth2"<<endl;
        return 1;
    }
    //-- Read the images (IMREAD_* replaces the CV_LOAD_IMAGE_* constants removed in OpenCV 4)
    Mat img_1 = imread ( argv[1], IMREAD_COLOR );
    Mat img_2 = imread ( argv[2], IMREAD_COLOR );
    if ( img_1.empty() || img_2.empty() )
    {
        cerr<<"failed to read the input images"<<endl;
        return 1;
    }

    vector<KeyPoint> keypoints_1, keypoints_2;
    vector<DMatch> matches;
    find_feature_matches ( img_1, img_2, keypoints_1, keypoints_2, matches );
    cout<<"found "<<matches.size() <<" matched pairs in total"<<endl;

    // Build the 3D points from the depth image of the first frame
    Mat d1 = imread ( argv[3], IMREAD_UNCHANGED );       // depth image: single-channel, 16-bit unsigned
    if ( d1.empty() )
    {
        cerr<<"failed to read the depth image"<<endl;
        return 1;
    }
    Mat K = ( Mat_<double> ( 3,3 ) << 520.9, 0, 325.1, 0, 521.0, 249.7, 0, 0, 1 );
    vector<Point3f> pts_3d;
    vector<Point2f> pts_2d;
    for ( DMatch m:matches )
    {
        ushort d = d1.ptr<unsigned short> (int ( keypoints_1[m.queryIdx].pt.y )) [ int ( keypoints_1[m.queryIdx].pt.x ) ];
        if ( d == 0 )   // bad depth
            continue;
        float dd = d/5000.0;
        Point2d p1 = pixel2cam ( keypoints_1[m.queryIdx].pt, K );
        pts_3d.push_back ( Point3f ( p1.x*dd, p1.y*dd, dd ) );
        pts_2d.push_back ( keypoints_2[m.trainIdx].pt );
    }

    cout<<"3d-2d pairs: "<<pts_3d.size() <<endl;

    Mat r, t;
    solvePnP ( pts_3d, pts_2d, K, Mat(), r, t, false ); // OpenCV PnP solver; methods such as EPnP or DLS can be chosen via the flags argument
    Mat R;
    cv::Rodrigues ( r, R ); // r is a rotation vector; convert it to a rotation matrix with the Rodrigues formula

    cout<<"R="<<endl<<R<<endl;
    cout<<"t="<<endl<<t<<endl;

    cout<<"calling bundle adjustment"<<endl;

    bundleAdjustment ( pts_3d, pts_2d, K, R, t );
    return 0;
}
```

```cpp
void find_feature_matches ( const Mat& img_1, const Mat& img_2,
                            std::vector<KeyPoint>& keypoints_1,
                            std::vector<KeyPoint>& keypoints_2,
                            std::vector< DMatch >& matches )
{
    //-- Initialization
    Mat descriptors_1, descriptors_2;
    // used in OpenCV 3 (also works in OpenCV 4)
    Ptr<FeatureDetector> detector = ORB::create();
    Ptr<DescriptorExtractor> descriptor = ORB::create();
    // use this if you are in OpenCV2
    // Ptr<FeatureDetector> detector = FeatureDetector::create ( "ORB" );
    // Ptr<DescriptorExtractor> descriptor = DescriptorExtractor::create ( "ORB" );
    Ptr<DescriptorMatcher> matcher = DescriptorMatcher::create ( "BruteForce-Hamming" );

    //-- Step 1: detect Oriented FAST corner positions
    detector->detect ( img_1,keypoints_1 );
    detector->detect ( img_2,keypoints_2 );

    //-- Step 2: compute BRIEF descriptors at the corner positions
    descriptor->compute ( img_1, keypoints_1, descriptors_1 );
    descriptor->compute ( img_2, keypoints_2, descriptors_2 );

    //-- Step 3: match the BRIEF descriptors of the two images using the Hamming distance
    vector<DMatch> match;
    // BFMatcher matcher ( NORM_HAMMING );
    matcher->match ( descriptors_1, descriptors_2, match );

    //-- Step 4: filter the matched pairs
    double min_dist=10000, max_dist=0;

    // Find the minimum and maximum distance over all matches, i.e. the most and the least similar pairs
    for ( size_t i = 0; i < match.size(); i++ )
    {
        double dist = match[i].distance;
        if ( dist < min_dist ) min_dist = dist;
        if ( dist > max_dist ) max_dist = dist;
    }

    printf ( "-- Max dist : %f \n", max_dist );
    printf ( "-- Min dist : %f \n", min_dist );

    // A match is treated as wrong when its descriptor distance exceeds twice the minimum distance.
    // Because the minimum distance can be very small, an empirical lower bound of 30 is used.
    for ( size_t i = 0; i < match.size(); i++ )
    {
        if ( match[i].distance <= std::max ( 2*min_dist, 30.0 ) )
        {
            matches.push_back ( match[i] );
        }
    }
}
```
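The filtering rule in step 4 can be isolated from OpenCV. A minimal sketch, assuming the descriptor distances have already been copied into a std::vector<double> (filterMatches is a hypothetical helper, not an OpenCV function):

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical helper: returns the indices of the matches kept by the rule
// distance <= max(2 * min_dist, floorDist), with floorDist = 30 as in find_feature_matches().
std::vector<std::size_t> filterMatches(const std::vector<double>& distances,
                                       double floorDist = 30.0) {
  std::vector<std::size_t> kept;
  if (distances.empty()) return kept;
  const double minDist = *std::min_element(distances.begin(), distances.end());
  const double threshold = std::max(2.0 * minDist, floorDist);
  for (std::size_t i = 0; i < distances.size(); ++i)
    if (distances[i] <= threshold) kept.push_back(i);
  return kept;
}
```

With distances {5, 12, 31, 29, 60} the threshold is max(10, 30) = 30, so indices 0, 1 and 3 survive; with {20, 35, 41} the threshold becomes 40 and only the first two survive.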

```cpp
Point2d pixel2cam ( const Point2d& p, const Mat& K )
{
    return Point2d
           (
               ( p.x - K.at<double> ( 0,2 ) ) / K.at<double> ( 0,0 ),
               ( p.y - K.at<double> ( 1,2 ) ) / K.at<double> ( 1,1 )
           );
}
```
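pixel2cam() and the depth loop in main() together back-project a pixel with a depth reading into a 3D point; projecting that point with the same intrinsics should return the original pixel. A stand-alone sketch of this round trip follows, assuming the raw 16-bit depth is divided by 5000 as in main() (that scale depends on the dataset). Intrinsics, pixelToNormalized, backProject and projectToPixel are hypothetical names.

```cpp
#include <array>
#include <cassert>
#include <cstdint>

using Vec2 = std::array<double, 2>;
using Vec3 = std::array<double, 3>;

// Hypothetical intrinsics struct; main() stores the same values in a cv::Mat K.
struct Intrinsics {
  double fx, fy, cx, cy;
};

// Same arithmetic as pixel2cam(): pixel -> normalized image plane (z = 1).
Vec2 pixelToNormalized(const Vec2& px, const Intrinsics& K) {
  return {(px[0] - K.cx) / K.fx, (px[1] - K.cy) / K.fy};
}

// As in main(): metric depth = raw 16-bit value / depthScale, then scale the
// normalized coordinates by that depth. A raw value of 0 means "no depth".
Vec3 backProject(const Vec2& px, std::uint16_t rawDepth, const Intrinsics& K,
                 double depthScale = 5000.0) {
  assert(rawDepth != 0 && "zero depth marks an invalid measurement");
  const double d = rawDepth / depthScale;
  const Vec2 n = pixelToNormalized(px, K);
  return {n[0] * d, n[1] * d, d};
}

// Forward projection with the same intrinsics.
Vec2 projectToPixel(const Vec3& P, const Intrinsics& K) {
  assert(P[2] > 0.0);
  return {K.fx * P[0] / P[2] + K.cx, K.fy * P[1] / P[2] + K.cy};
}
```

With the K used in main() and a raw depth of 10000, the pixel (400, 300) is placed at depth 2.0 m and reprojects to (400, 300) up to rounding.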

```cpp
void bundleAdjustment (
    const vector< Point3f > points_3d,
    const vector< Point2f > points_2d,
    const Mat& K,
    Mat& R, Mat& t )
{
    // Set up g2o
    typedef g2o::BlockSolver< g2o::BlockSolverTraits<6,3> > Block;  // pose dimension 6, landmark dimension 3
    // Linear equation solver. Recent g2o versions take ownership through std::unique_ptr;
    // older versions (as in the original slambook code) passed raw pointers instead.
    std::unique_ptr<Block::LinearSolverType> linearSolver (
        new g2o::LinearSolverCSparse<Block::PoseMatrixType>() );
    std::unique_ptr<Block> solver_ptr ( new Block ( std::move ( linearSolver ) ) );  // block solver
    g2o::OptimizationAlgorithmLevenberg* solver =
        new g2o::OptimizationAlgorithmLevenberg ( std::move ( solver_ptr ) );
    g2o::SparseOptimizer optimizer;
    optimizer.setAlgorithm ( solver );

    // vertex
    g2o::VertexSE3Expmap* pose = new g2o::VertexSE3Expmap(); // camera pose
    Eigen::Matrix3d R_mat;
    R_mat <<
          R.at<double> ( 0,0 ), R.at<double> ( 0,1 ), R.at<double> ( 0,2 ),
          R.at<double> ( 1,0 ), R.at<double> ( 1,1 ), R.at<double> ( 1,2 ),
          R.at<double> ( 2,0 ), R.at<double> ( 2,1 ), R.at<double> ( 2,2 );
    pose->setId ( 0 );
    pose->setEstimate ( g2o::SE3Quat (
                            R_mat,
                            Eigen::Vector3d ( t.at<double> ( 0,0 ), t.at<double> ( 1,0 ), t.at<double> ( 2,0 ) )
                        ) );
    optimizer.addVertex ( pose );

    int index = 1;
    for ( const Point3f& p:points_3d )   // landmarks
    {
        // VertexPointXYZ replaces the older VertexSBAPointXYZ (see 1-2 above)
        g2o::VertexPointXYZ* point = new g2o::VertexPointXYZ();
        point->setId ( index++ );
        point->setEstimate ( Eigen::Vector3d ( p.x, p.y, p.z ) );
        point->setMarginalized ( true ); // in g2o the landmarks must be marginalized; see Lecture 10
        optimizer.addVertex ( point );
    }

    // parameter: camera intrinsics
    g2o::CameraParameters* camera = new g2o::CameraParameters (
        K.at<double> ( 0,0 ), Eigen::Vector2d ( K.at<double> ( 0,2 ), K.at<double> ( 1,2 ) ), 0
    );
    camera->setId ( 0 );
    optimizer.addParameter ( camera );

    // edges
    index = 1;
    for ( const Point2f& p:points_2d )
    {
        g2o::EdgeProjectXYZ2UV* edge = new g2o::EdgeProjectXYZ2UV();
        edge->setId ( index );
        edge->setVertex ( 0, dynamic_cast<g2o::VertexPointXYZ*> ( optimizer.vertex ( index ) ) );
        edge->setVertex ( 1, pose );
        edge->setMeasurement ( Eigen::Vector2d ( p.x, p.y ) );
        edge->setParameterId ( 0,0 );
        edge->setInformation ( Eigen::Matrix2d::Identity() );
        optimizer.addEdge ( edge );
        index++;
    }

    chrono::steady_clock::time_point t1 = chrono::steady_clock::now();
    optimizer.setVerbose ( true );
    optimizer.initializeOptimization();
    optimizer.optimize ( 100 );
    chrono::steady_clock::time_point t2 = chrono::steady_clock::now();
    chrono::duration<double> time_used = chrono::duration_cast<chrono::duration<double>> ( t2-t1 );
    cout<<"optimization costs time: "<<time_used.count() <<" seconds."<<endl;

    cout<<endl<<"after optimization:"<<endl;
    cout<<"T="<<endl<<Eigen::Isometry3d ( pose->estimate() ).matrix() <<endl;
}
```
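point->setMarginalized(true) lets the BlockSolver<6,3> solve the normal equations with the Schur complement: the landmark block C is block-diagonal (each landmark couples only to poses), so it is cheap to invert; the reduced pose system is solved first and each landmark is then recovered by back-substitution. The toy sketch below shows the idea with a scalar "pose" x and scalar landmarks y_i; in real BA each entry is a 6×6, 6×3 or 3×3 block. SchurResult and solveBySchur are hypothetical names, not g2o API.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical result type: the pose increment x and the landmark increments y.
struct SchurResult {
  double x;
  std::vector<double> y;
};

// Solves the toy normal equations
//   [ B    E^T ] [x]   [v]
//   [ E    C   ] [y] = [w]
// where x is a scalar "pose", y_i are scalar "landmarks", E_i couples landmark i
// to the pose, and C = diag(C_i) because landmarks do not couple to each other.
SchurResult solveBySchur(double B, const std::vector<double>& E, const std::vector<double>& C,
                         double v, const std::vector<double>& w) {
  assert(E.size() == C.size() && C.size() == w.size());
  // Reduced pose system: (B - sum E_i^2 / C_i) x = v - sum E_i w_i / C_i.
  double S = B;
  double rhs = v;
  for (std::size_t i = 0; i < C.size(); ++i) {
    assert(C[i] != 0.0);
    S -= E[i] * E[i] / C[i];
    rhs -= E[i] * w[i] / C[i];
  }
  assert(S != 0.0);
  const double x = rhs / S;
  // Back-substitution, one landmark at a time: y_i = (w_i - E_i x) / C_i.
  std::vector<double> y(C.size());
  for (std::size_t i = 0; i < C.size(); ++i) y[i] = (w[i] - E[i] * x) / C[i];
  return {x, y};
}
```

Only the reduced system has the size of the pose block, which is why marginalizing the (usually far more numerous) landmarks keeps each iteration cheap.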

```cmake
cmake_minimum_required( VERSION 2.8 )
project( vo1 )

set( CMAKE_BUILD_TYPE "Release" )
# Recent g2o releases (with VertexPointXYZ and the unique_ptr solver API) need C++17;
# -std=c++11 is only enough for older g2o versions
set( CMAKE_CXX_FLAGS "-std=c++17 -O3" )

# Add the cmake modules needed to find g2o
list( APPEND CMAKE_MODULE_PATH ${PROJECT_SOURCE_DIR}/cmake_modules )

find_package( OpenCV 3.1 REQUIRED )
# find_package( OpenCV REQUIRED ) # use this if in OpenCV2
find_package( G2O REQUIRED )
find_package( CSparse REQUIRED )

include_directories(
    ${OpenCV_INCLUDE_DIRS}
    ${G2O_INCLUDE_DIRS}
    ${CSPARSE_INCLUDE_DIR}
    "/usr/include/eigen3/"
)

add_executable( feature_extraction feature_extraction.cpp )
target_link_libraries( feature_extraction ${OpenCV_LIBS} )

# add_executable( pose_estimation_2d2d pose_estimation_2d2d.cpp extra.cpp ) # use this if in OpenCV2
add_executable( pose_estimation_2d2d pose_estimation_2d2d.cpp )
target_link_libraries( pose_estimation_2d2d ${OpenCV_LIBS} )

# add_executable( triangulation triangulation.cpp extra.cpp) # use this if in opencv2
add_executable( triangulation triangulation.cpp )
target_link_libraries( triangulation ${OpenCV_LIBS} )

add_executable( pose_estimation_3d2d pose_estimation_3d2d.cpp )
target_link_libraries( pose_estimation_3d2d
    ${OpenCV_LIBS}
    ${CSPARSE_LIBRARY}
    g2o_core g2o_stuff g2o_types_sba g2o_csparse_extension
)

add_executable( pose_estimation_3d3d pose_estimation_3d3d.cpp )
target_link_libraries( pose_estimation_3d3d
    ${OpenCV_LIBS}
    g2o_core g2o_stuff g2o_types_sba g2o_csparse_extension
    ${CSPARSE_LIBRARY}
)
```

Zhejiang Public Security filing No. 33010602011771
