InternLM第三期实战-进阶第四关

InternVL 多模态模型部署微调实践

教程链接:https://github.com/InternLM/Tutorial/blob/camp3/docs/L2/InternVL/joke_readme.md

InternVL简介

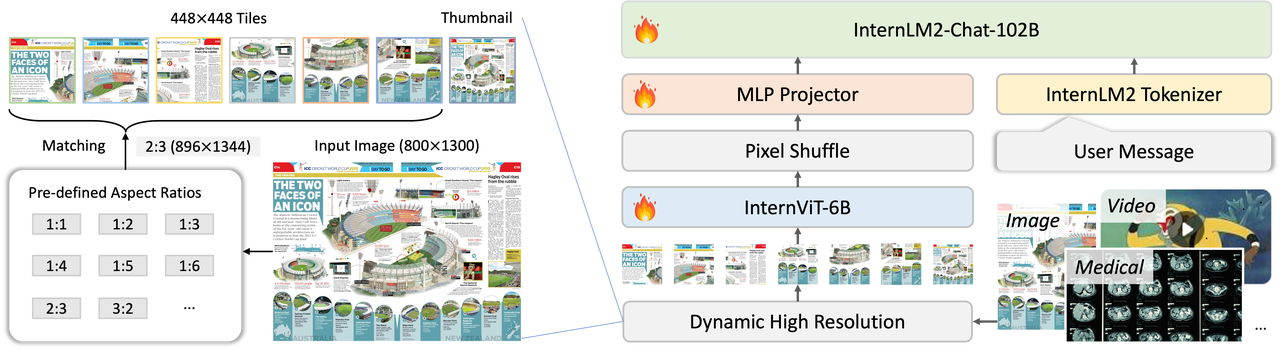

InternVL 是一种用于多模态任务的深度学习模型,旨在处理和理解多种类型的数据输入,如图像和文本。它结合了视觉和语言模型,能够执行复杂的跨模态任务,比如图文匹配、图像描述生成等。通过整合视觉特征和语言信息,InternVL 可以在多模态领域取得更好的表现.

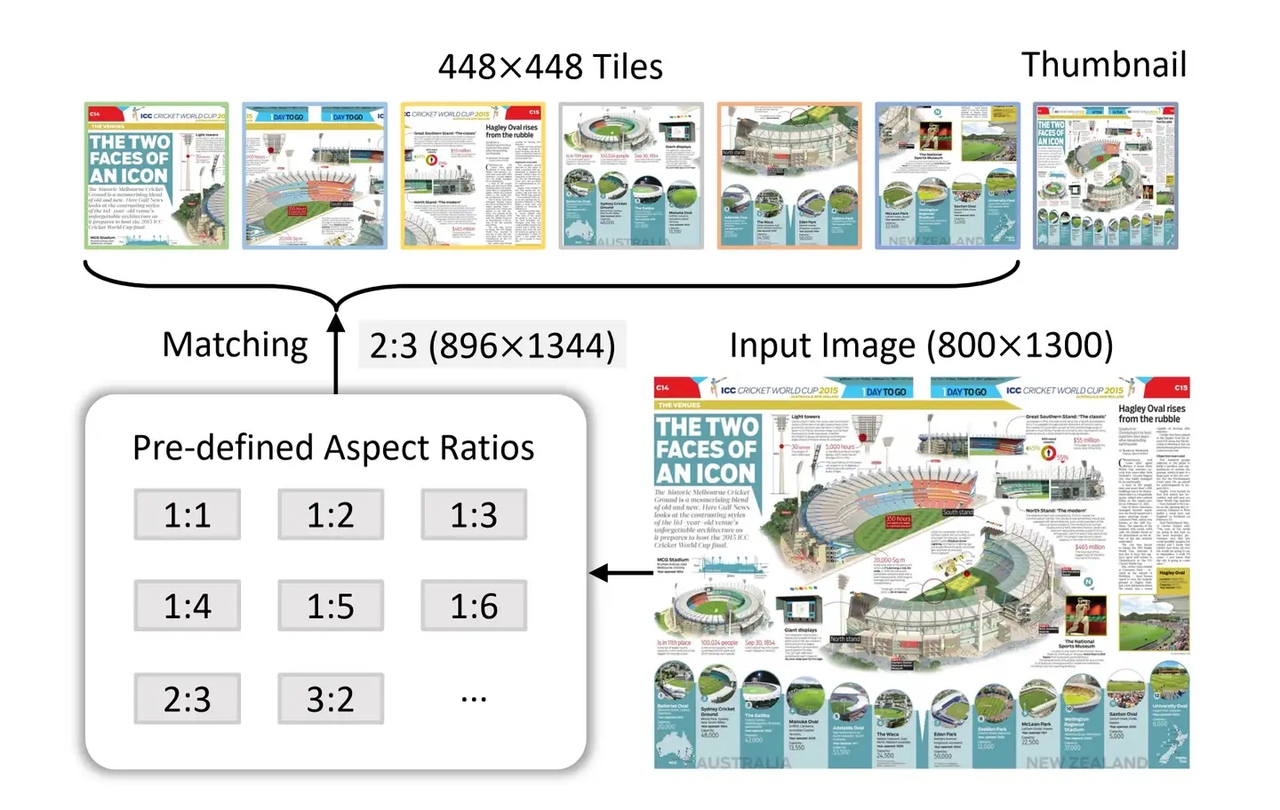

对于InternVL这个模型来说,它vision模块就是一个微调过的ViT,llm模块是一个InternLM的模型。对于视觉模块来说,它的特殊之处在Dynamic High Resolution。

InternVL独特的预处理模块:动态高分辨率(Dynamic High Resolution。),是为了让ViT模型能够尽可能获取到更细节的图像信息,提高视觉特征的表达能力。对于输入的图片,首先resize成448的倍数,然后按照预定义的尺寸比例从图片上crop对应的区域。

Pixel Shuffle在超分任务中是一个常见的操作,PyTorch中有官方实现,即nn.PixelShuffle(upscale_factor) 该类的作用就是将一个tensor中的元素值进行重排列,假设tensor维度为[B, C, H, W], PixelShuffle操作不仅可以改变tensor的通道数,也会改变特征图的大小。

InternVL 部署微调实践

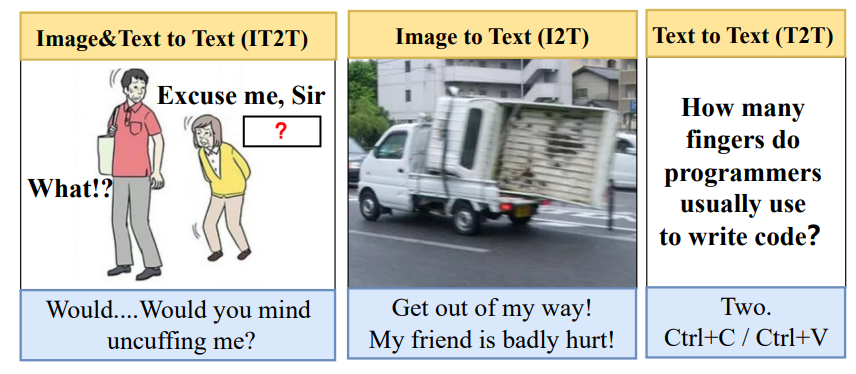

本次的任务是用VLM模型进行冷笑话生成,让你的模型说出很逗的冷笑话吧。在这里,我们微调InterenVL使用xtuner。部署InternVL使用lmdeploy。

环境配置

- 准备模型

cd /root

mkdir -p model

# 我们使用InternVL2-2B模型。该模型已在share文件夹下挂载好,现在让我们把移动出来。

cp -r /root/share/new_models/OpenGVLab/InternVL2-2B /root/model/

- 配置虚拟环境

conda create --name xtuner python=3.10 -y

# 激活虚拟环境(注意:后续的所有操作都需要在这个虚拟环境中进行)

conda activate xtuner

# 安装一些必要的库

conda install pytorch==2.1.2 torchvision==0.16.2 torchaudio==2.1.2 pytorch-cuda=12.1 -c pytorch -c nvidia -y

# 安装其他依赖

apt install libaio-dev

pip install transformers==4.39.3

pip install streamlit==1.36.0

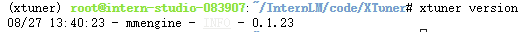

- 安装xtuner

# 创建一个目录,用来存放源代码

mkdir -p /root/InternLM/code

cd /root/InternLM/code

git clone -b v0.1.23 https://github.com/InternLM/XTuner

# 进入XTuner目录

cd /root/InternLM/code/XTuner

pip install -e '.[deepspeed]'

- 安装LMDeploy

pip install lmdeploy==0.5.3

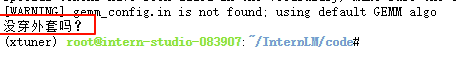

配置完成后验证一下Xtuner的版本.

准备微调数据集

我们这里使用huggingface上的zhongshsh/CLoT-Oogiri-GO数据集,数据集我们从官网下载下来并进行去重,只保留中文数据等操作。并制作成XTuner需要的形式。

## 首先让我们安装一下需要的包

pip install datasets matplotlib Pillow timm

## 让我们把数据集挪出来

cp -r /root/share/new_models/datasets/CLoT_cn_2000 /root/InternLM/datasets/

InternVL 推理部署

我们使用lmdeploy自带的pipeline工具进行开箱即用的推理流程,首先我们新建一个文件。

touch /root/InternLM/code/test_lmdeploy.py

cd /root/InternLM/code/

选取一张图片,然后在test_lmdeploy.py中贴入以下代码:

点击查看代码

from lmdeploy import pipeline

from lmdeploy.vl import load_image

pipe = pipeline('/root/model/InternVL2-2B')

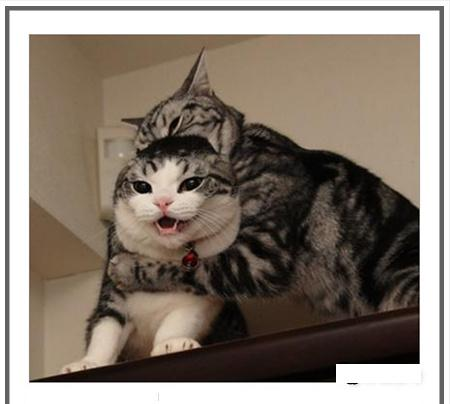

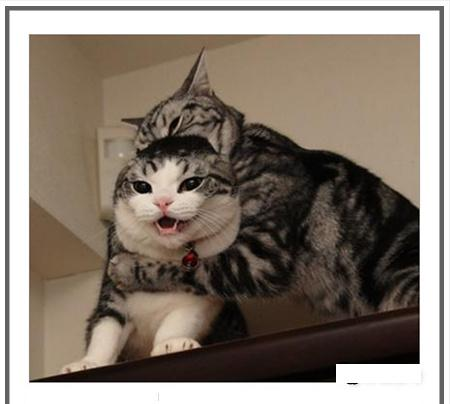

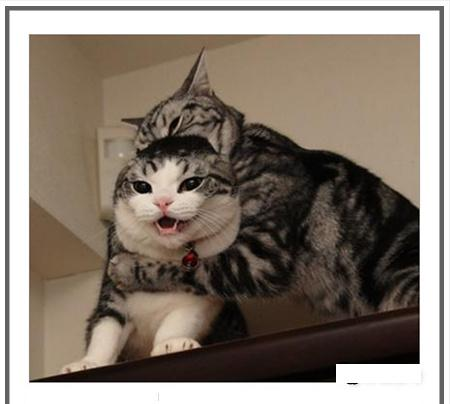

image = load_image('/root/InternLM/007aPnLRgy1hb39z0im50j30ci0el0wm.jpg')

response = pipe(('请你根据这张图片,讲一个脑洞大开的梗', image))

print(response.text)

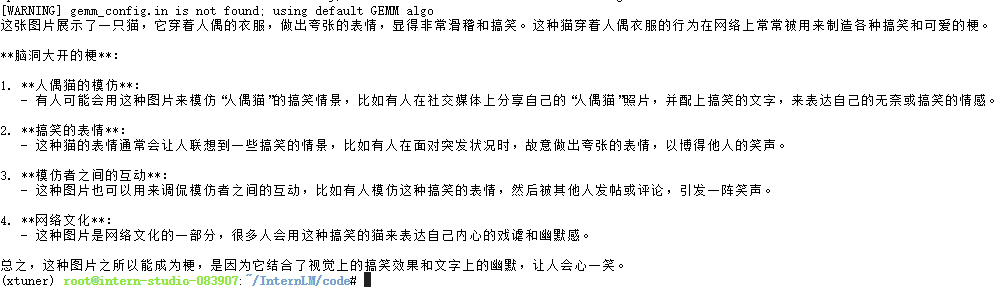

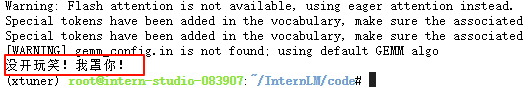

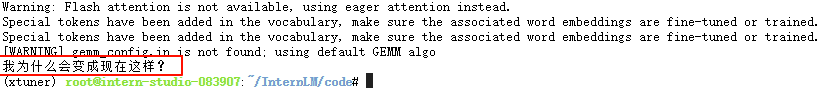

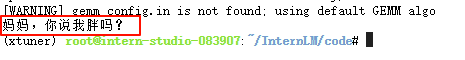

运行执行推理结果python3 test_lmdeploy.py.

推理后我们发现直接使用2b模型不能很好的讲出梗,现在我们要对这个2b模型进行微调。

InternVL 微调攻略

准备数据集

数据集格式为:

# 为了高效训练,请确保数据格式为:

{

"id": "000000033471",

"image": ["coco/train2017/000000033471.jpg"], # 如果是纯文本,则该字段为 None 或者不存在

"conversations": [

{

"from": "human",

"value": "<image>\nWhat are the colors of the bus in the image?"

},

{

"from": "gpt",

"value": "The bus in the image is white and red."

}

]

}

配置微调参数

修改模型路径以及数据集路径,也可根据自己微调的内容和数据集大小以及训练资源修改batchsize和lr等参数

完整config文件

# Copyright (c) OpenMMLab. All rights reserved.

from mmengine.hooks import (CheckpointHook, DistSamplerSeedHook, IterTimerHook,

LoggerHook, ParamSchedulerHook)

from mmengine.optim import AmpOptimWrapper, CosineAnnealingLR, LinearLR

from peft import LoraConfig

from torch.optim import AdamW

from transformers import AutoTokenizer

from xtuner.dataset import InternVL_V1_5_Dataset

from xtuner.dataset.collate_fns import default_collate_fn

from xtuner.dataset.samplers import LengthGroupedSampler

from xtuner.engine.hooks import DatasetInfoHook

from xtuner.engine.runner import TrainLoop

from xtuner.model import InternVL_V1_5

from xtuner.utils import PROMPT_TEMPLATE

#######################################################################

# PART 1 Settings #

#######################################################################

# Model

path = '/root/model/InternVL2-2B'

# Data

data_root = '/root/InternLM/datasets/CLoT_cn_2000/'

data_path = data_root + 'ex_cn.json'

image_folder = data_root

prompt_template = PROMPT_TEMPLATE.internlm2_chat

max_length = 6656

# Scheduler & Optimizer

batch_size = 4 # per_device

accumulative_counts = 4

dataloader_num_workers = 4

max_epochs = 6

optim_type = AdamW

# official 1024 -> 4e-5

lr = 2e-5

betas = (0.9, 0.999)

weight_decay = 0.05

max_norm = 1 # grad clip

warmup_ratio = 0.03

# Save

save_steps = 1000

save_total_limit = 1 # Maximum checkpoints to keep (-1 means unlimited)

#######################################################################

# PART 2 Model & Tokenizer & Image Processor #

#######################################################################

model = dict(

type=InternVL_V1_5,

model_path=path,

freeze_llm=True,

freeze_visual_encoder=True,

quantization_llm=True, # or False

quantization_vit=False, # or True and uncomment visual_encoder_lora

# comment the following lines if you don't want to use Lora in llm

llm_lora=dict(

type=LoraConfig,

r=128,

lora_alpha=256,

lora_dropout=0.05,

target_modules=None,

task_type='CAUSAL_LM'),

# uncomment the following lines if you don't want to use Lora in visual encoder # noqa

# visual_encoder_lora=dict(

# type=LoraConfig, r=64, lora_alpha=16, lora_dropout=0.05,

# target_modules=['attn.qkv', 'attn.proj', 'mlp.fc1', 'mlp.fc2'])

)

#######################################################################

# PART 3 Dataset & Dataloader #

#######################################################################

llava_dataset = dict(

type=InternVL_V1_5_Dataset,

model_path=path,

data_paths=data_path,

image_folders=image_folder,

template=prompt_template,

max_length=max_length)

train_dataloader = dict(

batch_size=batch_size,

num_workers=dataloader_num_workers,

dataset=llava_dataset,

sampler=dict(

type=LengthGroupedSampler,

length_property='modality_length',

per_device_batch_size=batch_size * accumulative_counts),

collate_fn=dict(type=default_collate_fn))

#######################################################################

# PART 4 Scheduler & Optimizer #

#######################################################################

# optimizer

optim_wrapper = dict(

type=AmpOptimWrapper,

optimizer=dict(

type=optim_type, lr=lr, betas=betas, weight_decay=weight_decay),

clip_grad=dict(max_norm=max_norm, error_if_nonfinite=False),

accumulative_counts=accumulative_counts,

loss_scale='dynamic',

dtype='float16')

# learning policy

# More information: https://github.com/open-mmlab/mmengine/blob/main/docs/en/tutorials/param_scheduler.md # noqa: E501

param_scheduler = [

dict(

type=LinearLR,

start_factor=1e-5,

by_epoch=True,

begin=0,

end=warmup_ratio * max_epochs,

convert_to_iter_based=True),

dict(

type=CosineAnnealingLR,

eta_min=0.0,

by_epoch=True,

begin=warmup_ratio * max_epochs,

end=max_epochs,

convert_to_iter_based=True)

]

# train, val, test setting

train_cfg = dict(type=TrainLoop, max_epochs=max_epochs)

#######################################################################

# PART 5 Runtime #

#######################################################################

# Log the dialogue periodically during the training process, optional

tokenizer = dict(

type=AutoTokenizer.from_pretrained,

pretrained_model_name_or_path=path,

trust_remote_code=True)

custom_hooks = [

dict(type=DatasetInfoHook, tokenizer=tokenizer),

]

# configure default hooks

default_hooks = dict(

# record the time of every iteration.

timer=dict(type=IterTimerHook),

# print log every 10 iterations.

logger=dict(type=LoggerHook, log_metric_by_epoch=False, interval=10),

# enable the parameter scheduler.

param_scheduler=dict(type=ParamSchedulerHook),

# save checkpoint per `save_steps`.

checkpoint=dict(

type=CheckpointHook,

save_optimizer=False,

by_epoch=False,

interval=save_steps,

max_keep_ckpts=save_total_limit),

# set sampler seed in distributed evrionment.

sampler_seed=dict(type=DistSamplerSeedHook),

)

# configure environment

env_cfg = dict(

# whether to enable cudnn benchmark

cudnn_benchmark=False,

# set multi process parameters

mp_cfg=dict(mp_start_method='fork', opencv_num_threads=0),

# set distributed parameters

dist_cfg=dict(backend='nccl'),

)

# set visualizer

visualizer = None

# set log level

log_level = 'INFO'

# load from which checkpoint

load_from = None

# whether to resume training from the loaded checkpoint

resume = False

# Defaults to use random seed and disable `deterministic`

randomness = dict(seed=None, deterministic=False)

# set log processor

log_processor = dict(by_epoch=False)

开始训练

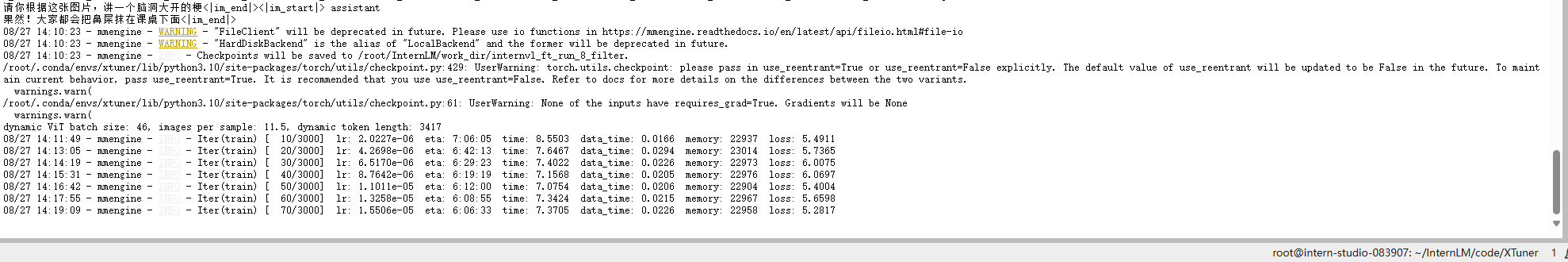

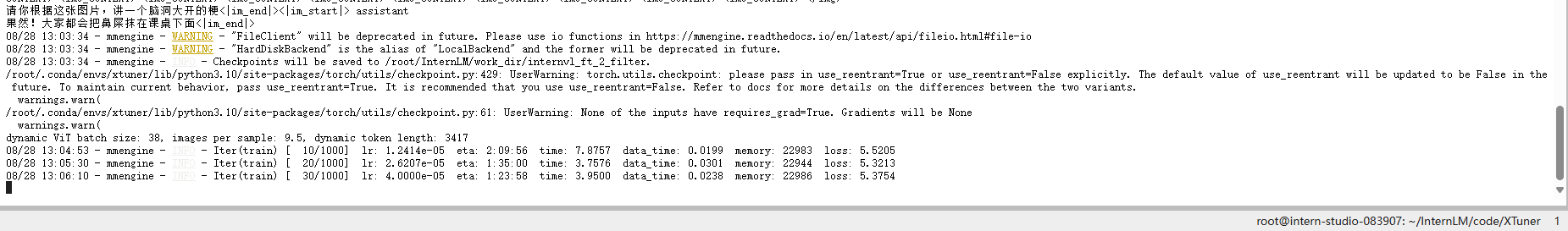

根据修改好的config文件,使用qlora进行训练.

cd XTuner

NPROC_PER_NODE=1 xtuner train /root/InternLM/code/XTuner/xtuner/configs/internvl/v2/internvl_v2_internlm2_2b_qlora_finetune.py --work-dir /root/InternLM/work_dir/internvl_ft_run_8_filter --deepspeed deepspeed_zero1

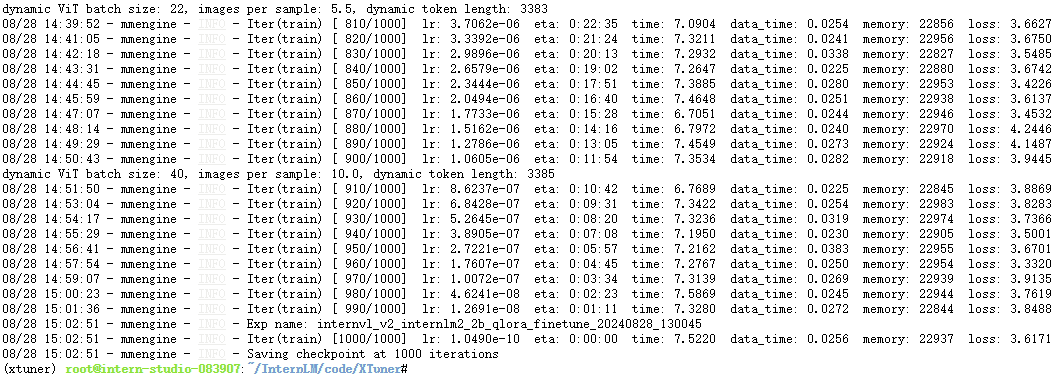

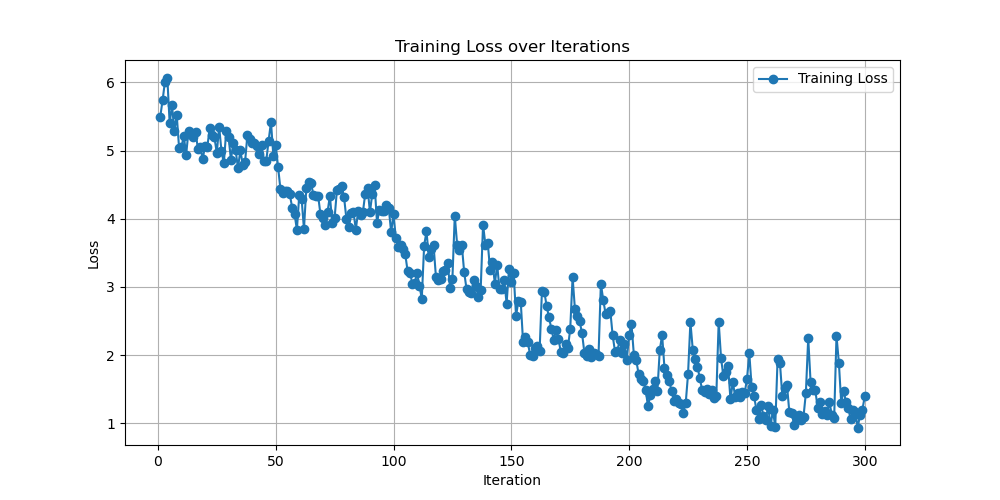

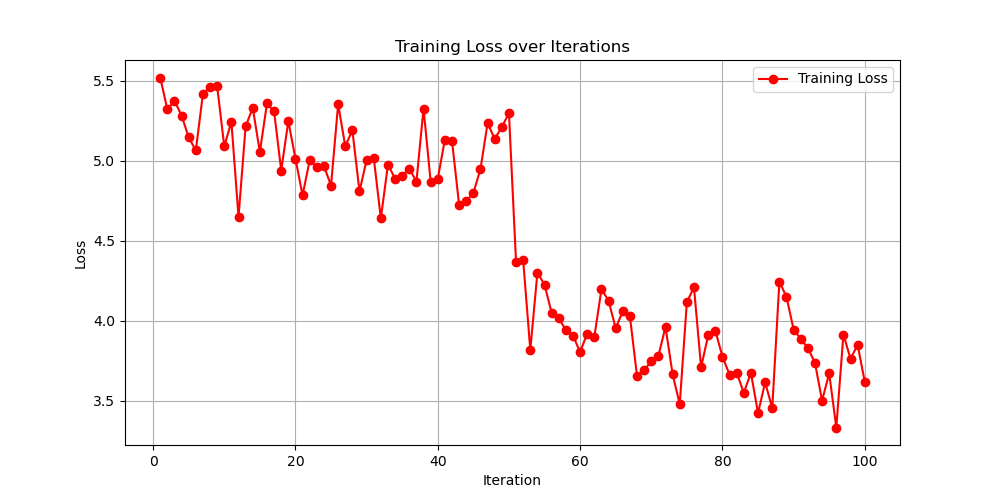

训练完成时的loss情况.

合并权重&&模型转换

用官方脚本进行权重合并

如果这里你执行的epoch不是6,是小一些的数字。你可能会发现internvl_ft_run_8_filter下没有iter_3000.pth, 那你需要把iter_3000.pth切换成你internvl_ft_run_8_filter目录下的pth即可

cd XTuner

# transfer weights

python3 xtuner/configs/internvl/v1_5/convert_to_official.py xtuner/configs/internvl/v2/internvl_v2_internlm2_2b_qlora_finetune.py /root/InternLM/work_dir/internvl_ft_run_8_filter/iter_3000.pth /root/InternLM/InternVL2-2B/

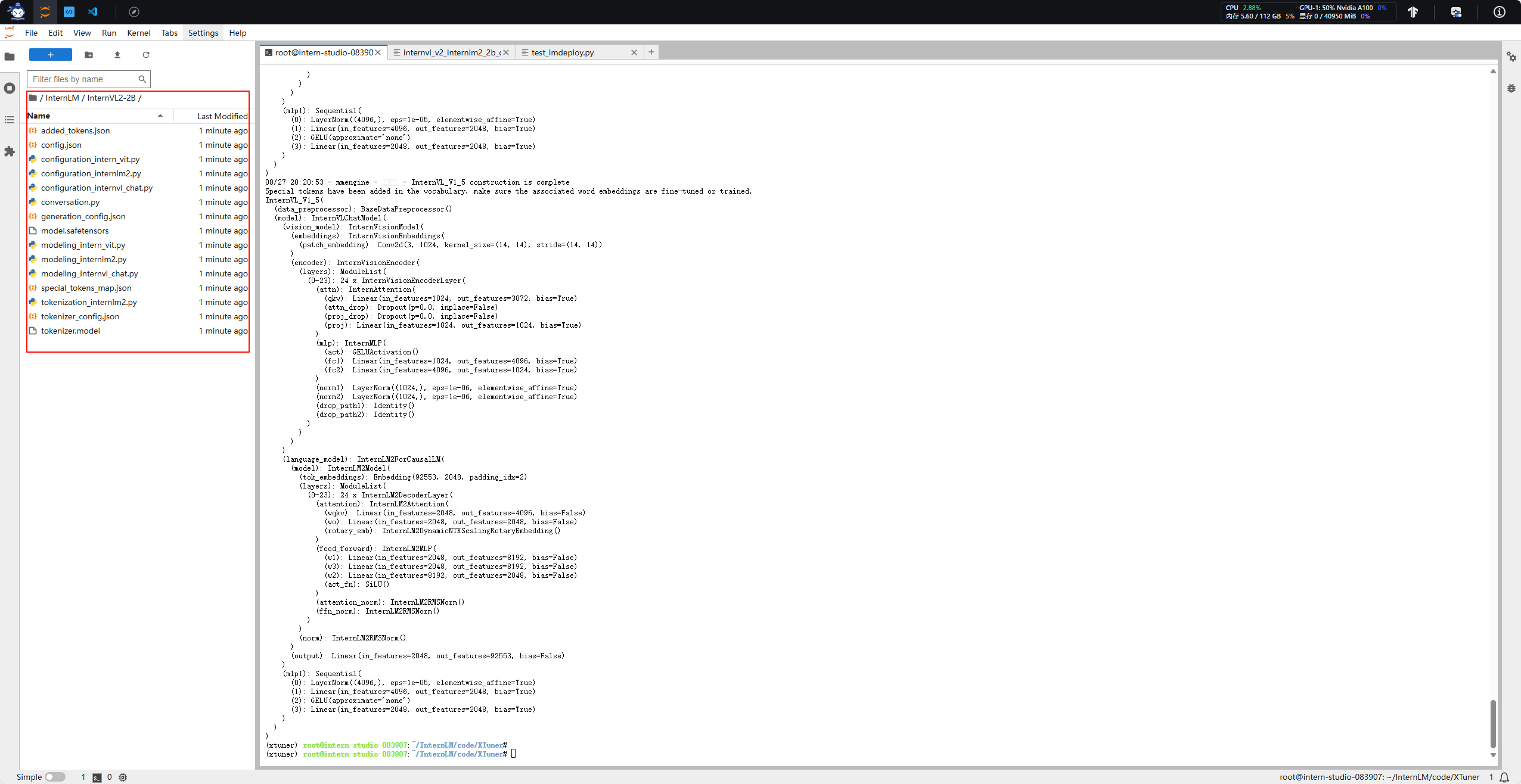

合并后的文件格式:

微调后效果对比

我们把下面的代码替换进test_lmdeploy.py中,然后跑一下效果。

from lmdeploy import pipeline

from lmdeploy.vl import load_image

pipe = pipeline('/root/InternLM/InternVL2-2B')

image = load_image('/root/InternLM/007aPnLRgy1hb39z0im50j30ci0el0wm.jpg')

response = pipe(('请你根据这张图片,讲一个脑洞大开的梗', image))

print(response.text)

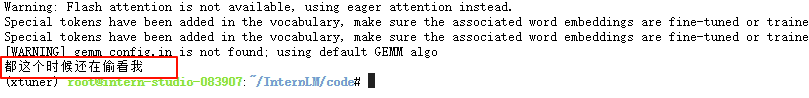

然后运行python3 test_lmdeploy.py.

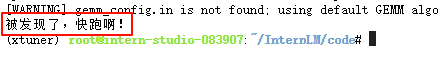

多测试几张图片:

eg1:

eg2:

可以看到效果比微调前好很多.

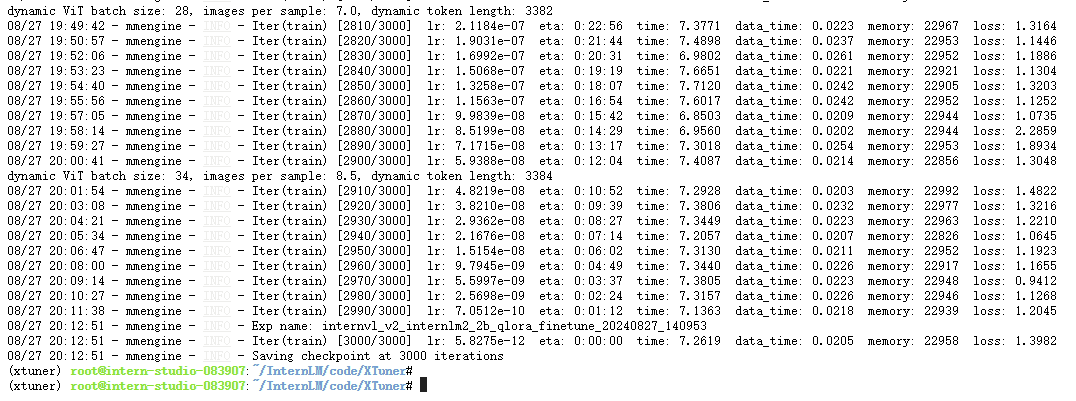

调整xtuner的config文件参数

# Scheduler & Optimizer

batch_size = 4 # per_device

accumulative_counts = 4

dataloader_num_workers = 4

- max_epochs = 6

+ max_epochs = 2

optim_type = AdamW

# official 1024 -> 4e-5

- lr = 2e-5

+ lr = 4e-5

betas = (0.9, 0.999)

weight_decay = 0.05

max_norm = 1 # grad clip

warmup_ratio = 0.03

减小了epoch,增大了lr,看看训练之后的效果怎么样.

可以看到训练完成后的loss值比之前训练的大.

对比一下两次训练的loss:

再来看看推理的效果.

只能说冷的各不相同..

浙公网安备 33010602011771号

浙公网安备 33010602011771号