Environment

Kafka 2.6.0 (see here for installation steps)

Add dependencies

<dependency>
    <groupId>org.springframework.boot</groupId>
    <artifactId>spring-boot-starter-web</artifactId>
</dependency>
<dependency>
    <groupId>org.springframework.kafka</groupId>
    <artifactId>spring-kafka</artifactId>
    <version>2.6.0</version>
</dependency>

Configure KafkaAdminClient

# Address of the Kafka server; for a cluster, list several addresses separated by commas

spring.kafka.bootstrap-servers=192.168.25.132:9092
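To make the comma-separated cluster format concrete, here is a minimal sketch that splits such a value into its host:port entries. It is only an illustration and not Kafka's own parser; the class name BootstrapServersSketch and the second and third addresses are made up for the example.

```java
import java.util.ArrayList;
import java.util.List;

// Illustrative only: splits a bootstrap-servers value into host:port entries.
// This is not Kafka's parser; the extra addresses in main are hypothetical.
final class BootstrapServersSketch {

    static List<String> split(String bootstrapServers) {
        List<String> servers = new ArrayList<>();
        for (String entry : bootstrapServers.split(",")) {
            String trimmed = entry.trim();
            if (!trimmed.isEmpty()) {
                servers.add(trimmed); // keep each non-empty host:port entry
            }
        }
        return servers;
    }

    public static void main(String[] args) {
        String value = "192.168.25.132:9092,192.168.25.133:9092,192.168.25.134:9092";
        System.out.println(split(value));
    }
}
```

The client only needs to reach one of the listed brokers to discover the rest of the cluster, so listing several mainly protects against one of them being down at startup.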

Define the bean:

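import java.util.Properties;

import org.apache.kafka.clients.admin.AdminClientConfig;
import org.apache.kafka.clients.admin.KafkaAdminClient;
import org.springframework.beans.factory.annotation.Value;
import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.Configuration;

@Configuration
public class KafkaConf {

    // Broker address list read from application.properties
    @Value("${spring.kafka.bootstrap-servers}")
    private String server;

    @Bean
    public KafkaAdminClient kafkaAdminClient() {
        Properties props = new Properties();
        props.put(AdminClientConfig.BOOTSTRAP_SERVERS_CONFIG, server);
        // create() is declared to return AdminClient, hence the cast
        return (KafkaAdminClient) KafkaAdminClient.create(props);
    }
}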

Create a topic:

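import java.util.Arrays;
import java.util.concurrent.ExecutionException;

import org.apache.kafka.clients.admin.CreateTopicsResult;
import org.apache.kafka.clients.admin.KafkaAdminClient;
import org.apache.kafka.clients.admin.NewTopic;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.web.bind.annotation.GetMapping;
import org.springframework.web.bind.annotation.RequestMapping;
import org.springframework.web.bind.annotation.RestController;

@RestController
@RequestMapping("/kafka")
public class KafkaController {

    @Autowired
    private KafkaAdminClient kafkaAdminClient;

    @GetMapping("/createTopic")
    public String createTopic() throws ExecutionException, InterruptedException {
        // "spring-topic" with 3 partitions and a replication factor of 1
        NewTopic newTopic = new NewTopic("spring-topic", 3, (short) 1);
        CreateTopicsResult result = kafkaAdminClient.createTopics(Arrays.asList(newTopic));
        // createTopics is asynchronous: wait for the broker to confirm before responding
        result.all().get();
        return "success";
    }
}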

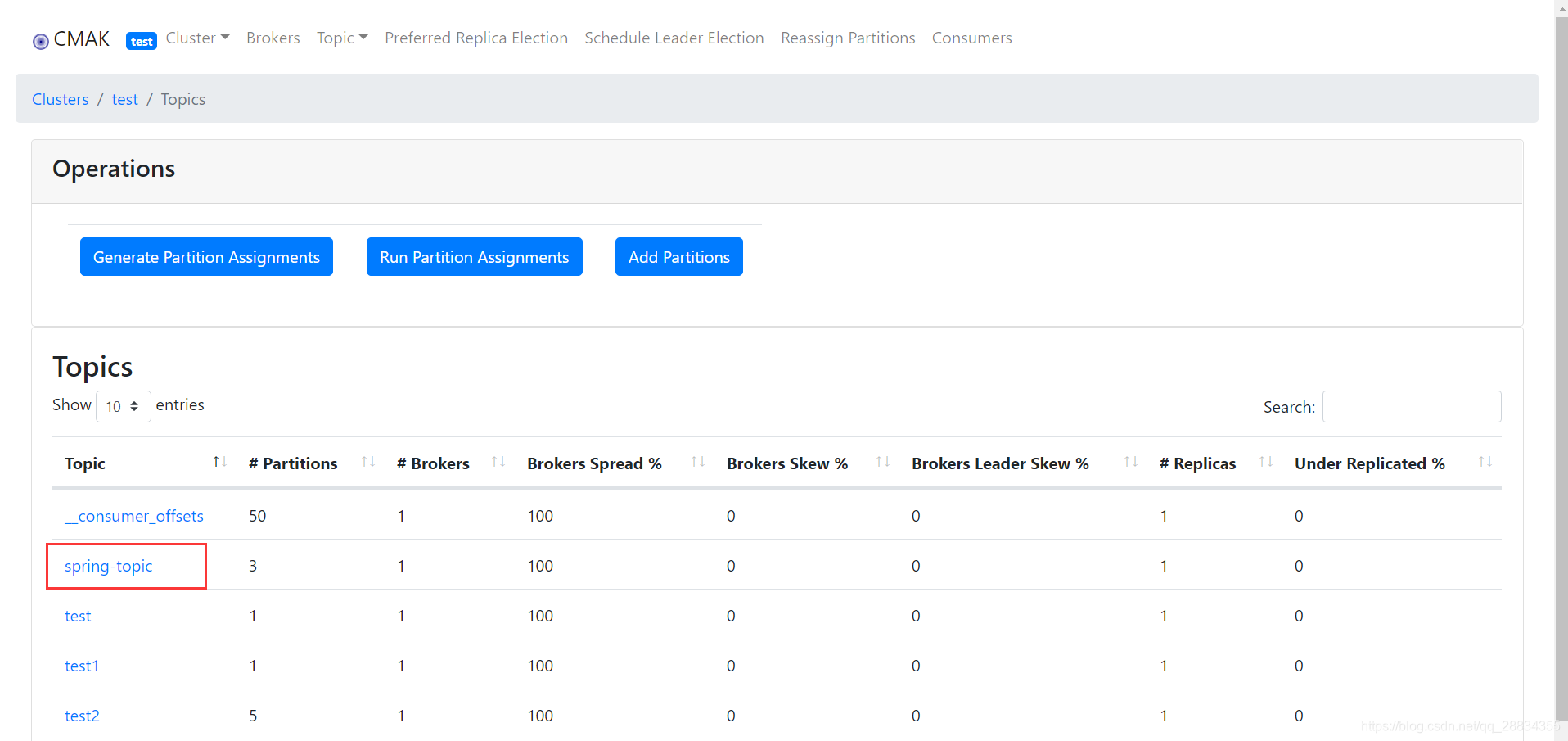

After calling the endpoint, kafka-manager shows that the topic named spring-topic has been created.

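Configure the producer

# Number of retries when a write fails. When the leader node fails, a replica takes over as leader, and writes may fail during the switch.
# With retries=0 the producer does not resend. With retries > 0 the message is resent once the replica has fully taken over as leader, so it is not lost.
spring.kafka.producer.retries=5

# Upper bound, in bytes, on one batch of records for a partition (not a message count); the producer accumulates records and sends them together
spring.kafka.producer.batch-size=16384

# Total memory, in bytes, the producer may use to buffer records waiting to be sent; once it is exhausted, send() blocks (up to max.block.ms)
spring.kafka.producer.buffer-memory=33554432

# Wait until all in-sync replicas have acknowledged the write
spring.kafka.producer.acks=all

spring.kafka.producer.key-serializer=org.apache.kafka.common.serialization.StringSerializer
spring.kafka.producer.value-serializer=org.apache.kafka.common.serialization.StringSerializer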
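The effect of the retries setting can be sketched with a small stand-alone model. This is a simplified illustration and not the Kafka client's implementation: RetrySketch, sendWithRetries and the simulated "flaky" broker are hypothetical names, and the real producer also applies backoff and timeouts that are left out here.

```java
import java.util.function.BooleanSupplier;

// Simplified model of producer retries; not the Kafka client's actual logic.
final class RetrySketch {

    /**
     * Tries the send up to (1 + retries) times.
     * Returns the attempt number that succeeded, or throws if all attempts fail.
     */
    static int sendWithRetries(BooleanSupplier trySend, int retries) {
        if (retries < 0) {
            throw new IllegalArgumentException("retries must be >= 0");
        }
        for (int attempt = 1; attempt <= retries + 1; attempt++) {
            if (trySend.getAsBoolean()) {
                return attempt; // the write was accepted
            }
            // otherwise: transient failure, e.g. a new leader is still being elected
        }
        throw new IllegalStateException("send failed after " + (retries + 1) + " attempts");
    }

    public static void main(String[] args) {
        int[] calls = {0};
        // Hypothetical broker: the first two writes fail during the leader switch.
        BooleanSupplier flakySend = () -> ++calls[0] > 2;
        System.out.println("attempts: " + sendWithRetries(flakySend, 5)); // prints "attempts: 3"
    }
}
```

With retries set to 0 the same simulated send would fail on the first attempt and the message would be lost unless the caller handled the error itself.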

Define the endpoint for sending messages:

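import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.kafka.core.KafkaTemplate;
import org.springframework.web.bind.annotation.GetMapping;
import org.springframework.web.bind.annotation.RequestMapping;
import org.springframework.web.bind.annotation.RequestParam;
import org.springframework.web.bind.annotation.RestController;

@RestController
@RequestMapping("/kafka")
public class KafkaController {

    // String key and value types match the StringSerializer settings of the producer
    @Autowired
    private KafkaTemplate<String, String> kafkaTemplate;

    @GetMapping("/send")
    public String send(@RequestParam String message) {
        // send() is asynchronous; "success" only means the message was handed to the producer
        kafkaTemplate.send("spring-topic", message);
        return "success";
    }
}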

Configure the consumer

# Default consumer group id. Within one group, each message is delivered to only one consumer; group.id sets the group name
spring.kafka.consumer.group-id=group-1

# enable.auto.commit=true: commit offsets automatically
spring.kafka.consumer.enable-auto-commit=true

# When enable.auto.commit is true, how often (in milliseconds) consumer offsets are auto-committed to Kafka. The default is 5000; it is set to 100 here.
spring.kafka.consumer.auto-commit-interval=100

spring.kafka.consumer.key-deserializer=org.apache.kafka.common.serialization.StringDeserializer
spring.kafka.consumer.value-deserializer=org.apache.kafka.common.serialization.StringDeserializer
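Consumers in the same group do not see the same message because each partition is owned by exactly one consumer of that group. The sketch below models this with a plain round-robin assignment. It is a simplified illustration, not one of Kafka's real assignors; GroupAssignmentSketch and the consumer names are hypothetical.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Simplified round-robin partition assignment within one consumer group.
// Kafka's real assignors are more involved; this only shows the ownership idea.
final class GroupAssignmentSketch {

    static Map<String, List<Integer>> assign(List<String> consumers, int partitions) {
        if (consumers.isEmpty()) {
            throw new IllegalArgumentException("the group needs at least one consumer");
        }
        Map<String, List<Integer>> assignment = new LinkedHashMap<>();
        for (String consumer : consumers) {
            assignment.put(consumer, new ArrayList<>());
        }
        for (int partition = 0; partition < partitions; partition++) {
            // each partition gets exactly one owner inside the group
            String owner = consumers.get(partition % consumers.size());
            assignment.get(owner).add(partition);
        }
        return assignment;
    }

    public static void main(String[] args) {
        // A topic with 3 partitions, as created for spring-topic, and two group members
        System.out.println(assign(Arrays.asList("consumer-a", "consumer-b"), 3));
        // prints {consumer-a=[0, 2], consumer-b=[1]}
    }
}
```

A second group with a different group.id would get its own full assignment, so it would receive every message again independently of group-1.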

Define the message listener:

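import org.springframework.kafka.annotation.KafkaListener;
import org.springframework.stereotype.Component;

@Component
public class ConsumerListener {

    // Listen on the topic that the /kafka/send endpoint writes to
    @KafkaListener(topics = "spring-topic")
    public void onListener(String message) {
        System.out.println(String.format("Received message: %s", message));
    }
}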

Test

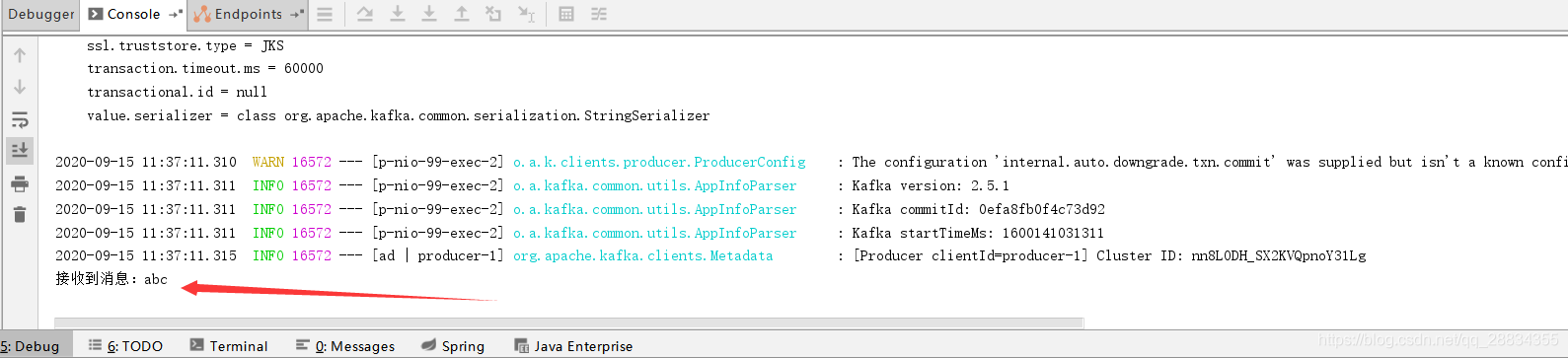

Call the send endpoint at http://localhost:99/kafka/send?message=abc, then check the IDE console: the consumer prints the message it received.
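When the message contains spaces or other special characters, the query parameter has to be URL-encoded before it is sent. The following sketch builds the request URL for the send endpoint; SendUrlSketch and buildSendUrl are hypothetical helper names, and the base URL is the one used in this test.

```java
import java.io.UnsupportedEncodingException;
import java.net.URLEncoder;

// Builds the URL for the /kafka/send endpoint with an encoded message parameter.
final class SendUrlSketch {

    static String buildSendUrl(String baseUrl, String message) {
        try {
            // URLEncoder applies form encoding, so a space becomes "+"
            return baseUrl + "/kafka/send?message=" + URLEncoder.encode(message, "UTF-8");
        } catch (UnsupportedEncodingException e) {
            // UTF-8 is a required charset on every JVM, so this should not happen
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println(buildSendUrl("http://localhost:99", "abc"));
        // prints http://localhost:99/kafka/send?message=abc
        System.out.println(buildSendUrl("http://localhost:99", "hello kafka"));
        // prints http://localhost:99/kafka/send?message=hello+kafka
    }
}
```

The printed URL can be opened in a browser or passed to any HTTP client while the application is running.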
