HDFS Read and Write Data Flow

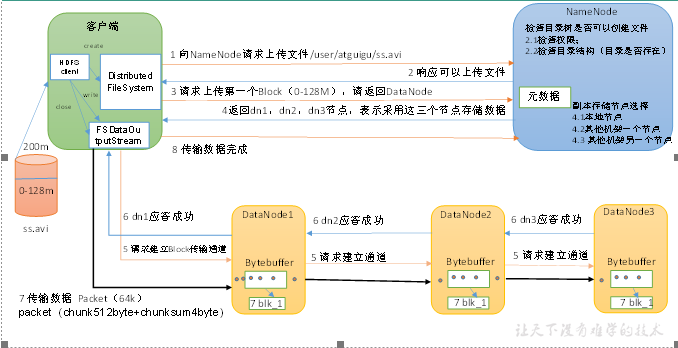

HDFS Write Data Flow

Anatomy of a File Write

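1. The client, through the DistributedFileSystem module, asks the NameNode to upload a file. The NameNode checks whether the target file already exists, whether the parent directory exists, and whether the client has the required permissions.

2. The NameNode replies whether the upload may proceed.

3. The client asks which DataNode servers the first Block should be uploaded to.

4. The NameNode returns three DataNode nodes: dn1, dn2, and dn3.

5. The client, through the FSDataOutputStream module, asks dn1 to receive the data. On receiving the request, dn1 calls dn2, and dn2 in turn calls dn3, which completes the communication pipeline.

6. The three nodes respond to the client stage by stage (dn3 to dn2, dn2 to dn1, dn1 to the client).

7. The client starts uploading the first Block to dn1 (it first reads the data from disk into a local memory cache), one Packet at a time. As soon as dn1 receives a Packet it forwards it to dn2, and dn2 forwards it to dn3. Every Packet that dn1 sends is placed in an ack queue until it is acknowledged. (A chunk is 512 bytes of data plus a 4-byte checksum.)

8. When a Block has been transferred, the client asks the NameNode again for the servers that will store the second Block (steps 3-7 are repeated).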
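The pipeline part of the write flow can be illustrated with a small toy model. This is only a sketch under simplifying assumptions: the class `PipelineSketch` and its methods are hypothetical names, the three DataNodes are plain in-memory lists, and everything runs synchronously in one thread, whereas a real HDFS pipeline streams packets and acknowledgments asynchronously over the network.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.List;

/** Toy model of the write pipeline: client -> dn1 -> dn2 -> dn3, acks flow back. */
final class PipelineSketch {

    /** Splits a block into fixed-size packets (the last one may be shorter). */
    static List<byte[]> toPackets(byte[] block, int packetSize) {
        List<byte[]> packets = new ArrayList<>();
        for (int off = 0; off < block.length; off += packetSize) {
            int len = Math.min(packetSize, block.length - off);
            byte[] packet = new byte[len];
            System.arraycopy(block, off, packet, 0, len);
            packets.add(packet);
        }
        return packets;
    }

    /**
     * Sends every packet down the pipeline in order. A packet sits in the ack
     * queue until the last DataNode has stored it, and is then acknowledged.
     * Returns the number of acknowledged packets.
     */
    static int writeBlock(byte[] block, int packetSize, List<List<byte[]>> dataNodes) {
        Deque<byte[]> ackQueue = new ArrayDeque<>();
        int acked = 0;
        for (byte[] packet : toPackets(block, packetSize)) {
            ackQueue.addLast(packet);            // sent, now waiting for an ack
            for (List<byte[]> dn : dataNodes) {  // dn1 forwards to dn2, dn2 to dn3
                dn.add(packet);
            }
            ackQueue.removeFirst();              // ack came back through the pipeline
            acked++;
        }
        if (!ackQueue.isEmpty()) {
            throw new IllegalStateException("unacknowledged packets remain");
        }
        return acked;
    }

    public static void main(String[] args) {
        List<List<byte[]>> pipeline = new ArrayList<>();
        for (int i = 0; i < 3; i++) {
            pipeline.add(new ArrayList<>());
        }
        // A 10-byte "block" with 4-byte packets is split into packets of 4, 4 and 2 bytes.
        int acked = writeBlock(new byte[10], 4, pipeline);
        System.out.println("packets acknowledged: " + acked);
    }
}
```

The tiny block and packet sizes are chosen only to keep the example readable; they are not HDFS defaults.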

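Network Topology: Node Distance Calculation

During an HDFS write, the NameNode selects the DataNodes closest to the data being uploaded to receive it.

Node distance: the sum of the distances from each of the two nodes to their nearest common ancestor.

Note: a rack, a cluster, and the outermost network node can each be treated as a parent node.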

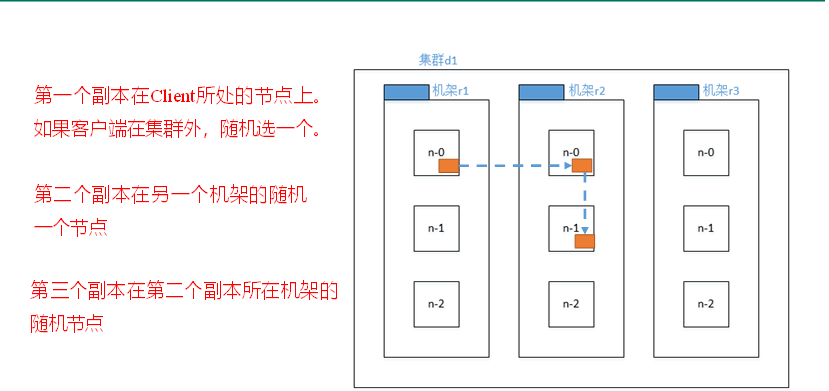
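The distance definition above can be made concrete with a short sketch. It assumes each node is described by a slash-separated path such as `/d1/r1/n0` (data center, rack, node); the class `NodeDistanceSketch` and the path names are hypothetical and chosen for illustration, and this is not Hadoop's own implementation.

```java
/** Computes node distance from slash-separated topology paths such as "/d1/r1/n0". */
final class NodeDistanceSketch {

    /** Both paths are assumed to start with "/" (an assumption of this sketch). */
    static int distance(String a, String b) {
        String[] pa = a.substring(1).split("/");
        String[] pb = b.substring(1).split("/");
        // Count the leading path components the two nodes share.
        int common = 0;
        while (common < pa.length && common < pb.length && pa[common].equals(pb[common])) {
            common++;
        }
        // Hops from each node up to the nearest common ancestor, added together.
        return (pa.length - common) + (pb.length - common);
    }

    public static void main(String[] args) {
        System.out.println(distance("/d1/r1/n0", "/d1/r1/n0")); // same node: 0
        System.out.println(distance("/d1/r1/n0", "/d1/r1/n1")); // same rack: 2
        System.out.println(distance("/d1/r1/n0", "/d1/r2/n0")); // same data center, other rack: 4
        System.out.println(distance("/d1/r1/n0", "/d2/r3/n0")); // different data centers: 6
    }
}
```

Each shared ancestor removes one hop from both sides, which is why two nodes on the same rack are at distance 2 and two nodes in different data centers are at distance 6.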

Rack Awareness (Replica Storage Node Selection)

Official Documentation

Link: Apache Hadoop 3.1.3 – HDFS Architecture

Key passage:

For the common case, when the replication factor is three, HDFS’s placement policy is to put one replica on the local machine if the writer is on a datanode, otherwise on a random datanode, another replica on a node in a different (remote) rack, and the last on a different node in the same remote rack. This policy cuts the inter-rack write traffic which generally improves write performance. The chance of rack failure is far less than that of node failure; this policy does not impact data reliability and availability guarantees. However, it does reduce the aggregate network bandwidth used when reading data since a block is placed in only two unique racks rather than three. With this policy, the replicas of a file do not evenly distribute across the racks. One third of replicas are on one node, two thirds of replicas are on one rack, and the other third are evenly distributed across the remaining racks. This policy improves write performance without compromising data reliability or read performance.

Source Code Walkthrough

    // In the BlockPlacementPolicyDefault class, find the chooseTargetInOrder method.
    protected Node chooseTargetInOrder(int numOfReplicas, Node writer, Set<Node> excludedNodes,
            long blocksize, int maxNodesPerRack, List<DatanodeStorageInfo> results,
            boolean avoidStaleNodes, boolean newBlock, EnumMap<StorageType, Integer> storageTypes)
            throws NotEnoughReplicasException {
        // Choose the first replica: local storage of the writer
        int numOfResults = results.size();
        if (numOfResults == 0) {
            DatanodeStorageInfo storageInfo = this.chooseLocalStorage((Node)writer, excludedNodes,
                    blocksize, maxNodesPerRack, results, avoidStaleNodes, storageTypes, true);
            writer = storageInfo != null ? storageInfo.getDatanodeDescriptor() : null;
            --numOfReplicas;
            if (numOfReplicas == 0) {
                return (Node)writer;
            }
        }
        // Choose the second replica: a node on a rack other than dn0's
        DatanodeDescriptor dn0 = ((DatanodeStorageInfo)results.get(0)).getDatanodeDescriptor();
        if (numOfResults <= 1) {
            this.chooseRemoteRack(1, dn0, excludedNodes, blocksize, maxNodesPerRack, results,
                    avoidStaleNodes, storageTypes);
            --numOfReplicas;
            if (numOfReplicas == 0) {
                return (Node)writer;
            }
        }
        // Choose the third replica
        if (numOfResults <= 2) {
            DatanodeDescriptor dn1 = ((DatanodeStorageInfo)results.get(1)).getDatanodeDescriptor();
            if (this.clusterMap.isOnSameRack(dn0, dn1)) {
                // The first two replicas share a rack, so pick a remote rack
                this.chooseRemoteRack(1, dn0, excludedNodes, blocksize, maxNodesPerRack, results,
                        avoidStaleNodes, storageTypes);
            } else if (newBlock) {
                // New block: pick a node on the same rack as the second replica
                this.chooseLocalRack(dn1, excludedNodes, blocksize, maxNodesPerRack, results,
                        avoidStaleNodes, storageTypes);
            } else {
                // Otherwise: pick a node on the writer's rack
                this.chooseLocalRack((Node)writer, excludedNodes, blocksize, maxNodesPerRack, results,
                        avoidStaleNodes, storageTypes);
            }
            --numOfReplicas;
            if (numOfReplicas == 0) {
                return (Node)writer;
            }
        }
        // Any remaining replicas are placed on randomly chosen nodes
        this.chooseRandom(numOfReplicas, "", excludedNodes, blocksize, maxNodesPerRack, results,
                avoidStaleNodes, storageTypes);
        return (Node)writer;
    }

Replica selection:

Hadoop 3.1.3 replica selection policy

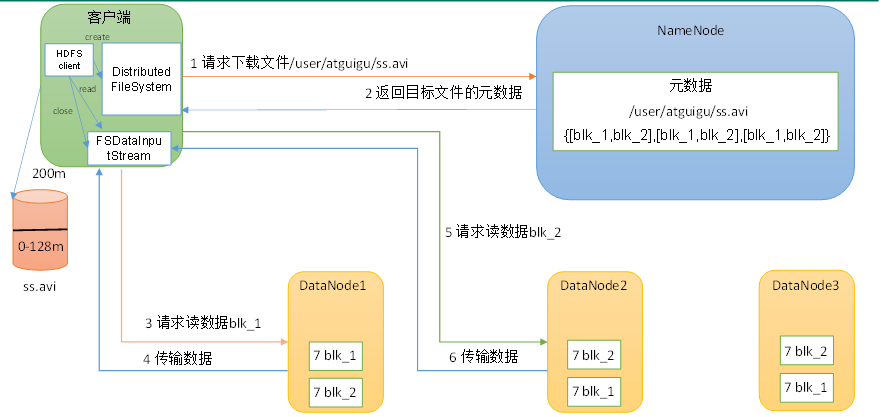
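The common three-replica case can be sketched as a standalone example. This is a deliberately simplified model, not the `BlockPlacementPolicyDefault` logic itself: the `ReplicaPlacementSketch` class, its `DataNode` type, and the `pick` helper are hypothetical, the writer is assumed to run on a DataNode, and the fallbacks, storage types, stale-node checks, and per-rack limits of the real policy are all left out.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Random;

final class ReplicaPlacementSketch {

    /** A DataNode identified by name and rack (hypothetical type for this sketch). */
    static final class DataNode {
        final String name;
        final String rack;
        DataNode(String name, String rack) { this.name = name; this.rack = rack; }
        @Override public String toString() { return rack + "/" + name; }
    }

    /** Picks a random unchosen node that is on the given rack (sameRack) or off it (!sameRack). */
    static DataNode pick(List<DataNode> cluster, List<DataNode> chosen,
                         String rack, boolean sameRack, Random rnd) {
        List<DataNode> candidates = new ArrayList<>();
        for (DataNode dn : cluster) {
            if (!chosen.contains(dn) && dn.rack.equals(rack) == sameRack) {
                candidates.add(dn);
            }
        }
        if (candidates.isEmpty()) {
            throw new IllegalStateException("no candidate DataNode");
        }
        return candidates.get(rnd.nextInt(candidates.size()));
    }

    /** Chooses three targets for a writer that is itself a DataNode. */
    static List<DataNode> chooseThree(List<DataNode> cluster, DataNode writer, Random rnd) {
        List<DataNode> chosen = new ArrayList<>();
        chosen.add(writer);                                                // replica 1: local node
        chosen.add(pick(cluster, chosen, writer.rack, false, rnd));        // replica 2: a different rack
        chosen.add(pick(cluster, chosen, chosen.get(1).rack, true, rnd));  // replica 3: same rack as replica 2
        return chosen;
    }

    public static void main(String[] args) {
        List<DataNode> cluster = new ArrayList<>();
        String[][] spec = {{"n1", "r1"}, {"n2", "r1"}, {"n3", "r2"},
                           {"n4", "r2"}, {"n5", "r3"}, {"n6", "r3"}};
        for (String[] s : spec) {
            cluster.add(new DataNode(s[0], s[1]));
        }
        System.out.println(chooseThree(cluster, cluster.get(0), new Random()));
    }
}
```

Because the second and third replicas are picked at random, the printed nodes vary between runs; what stays fixed is that the first replica is the writer's node and the other two share a rack different from the writer's.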

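HDFS Read Data Flow

1. The client, through DistributedFileSystem, asks the NameNode to download a file. The NameNode looks up its metadata to find the addresses of the DataNodes that hold the file's blocks.

2. The DataNode servers are sorted by node distance, and each block is read from the nearest server.

3. The DataNode starts transferring data to the client (it reads the data from disk as an input stream and verifies it Packet by Packet).

4. The client receives the data Packet by Packet, caches it locally first, and then writes it to the target file.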
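The verification mentioned in the read flow can be illustrated with per-chunk checksums. The sketch below assumes 512-byte chunks and uses `java.util.zip.CRC32` as a stand-in checksum; the class `ChunkChecksumSketch` and its methods are hypothetical, and the checksum algorithm HDFS actually uses depends on its configuration.

```java
import java.util.zip.CRC32;

final class ChunkChecksumSketch {

    static final int BYTES_PER_CHUNK = 512;

    /** Computes one CRC32 value per 512-byte chunk (the last chunk may be shorter). */
    static long[] checksums(byte[] data) {
        int chunks = (data.length + BYTES_PER_CHUNK - 1) / BYTES_PER_CHUNK;
        long[] sums = new long[chunks];
        CRC32 crc = new CRC32();
        for (int i = 0; i < chunks; i++) {
            int off = i * BYTES_PER_CHUNK;
            int len = Math.min(BYTES_PER_CHUNK, data.length - off);
            crc.reset();
            crc.update(data, off, len);
            sums[i] = crc.getValue();
        }
        return sums;
    }

    /** Returns the index of the first chunk whose checksum differs, or -1 if all match. */
    static int firstCorruptChunk(byte[] received, long[] expected) {
        long[] actual = checksums(received);
        if (actual.length != expected.length) {
            throw new IllegalArgumentException("chunk count mismatch");
        }
        for (int i = 0; i < actual.length; i++) {
            if (actual[i] != expected[i]) {
                return i;
            }
        }
        return -1;
    }

    public static void main(String[] args) {
        byte[] data = new byte[1300];            // spans three chunks: 512 + 512 + 276 bytes
        for (int i = 0; i < data.length; i++) {
            data[i] = (byte) i;
        }
        long[] sums = checksums(data);           // checksums computed by the sender

        byte[] received = data.clone();
        received[600] ^= 1;                      // flip one bit inside the second chunk
        System.out.println("first corrupt chunk: " + firstCorruptChunk(received, sums));
    }
}
```

Checking per chunk rather than per file means a reader can tell which part of the data is damaged and only needs to fetch that part again from another replica.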

Note: there is an error here. HDFS data reads are highly optimized, and the read in this flow is performed in parallel.

