Extending a Neutron core resource

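Background: since I started working on OpenStack I had only developed non-core resources, mostly plain CRUD operations, where the development process is fairly uniform. A while ago I received a requirement to add two new fields to the CRUD operations of the port resource. Without investigating first, I assumed it would be similar to non-core resource development and gave a very short estimate. Once I started, I found that the logic for core resources is far more tangled, and it took late nights and more than twice the estimated effort to finish. This post records that development process.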

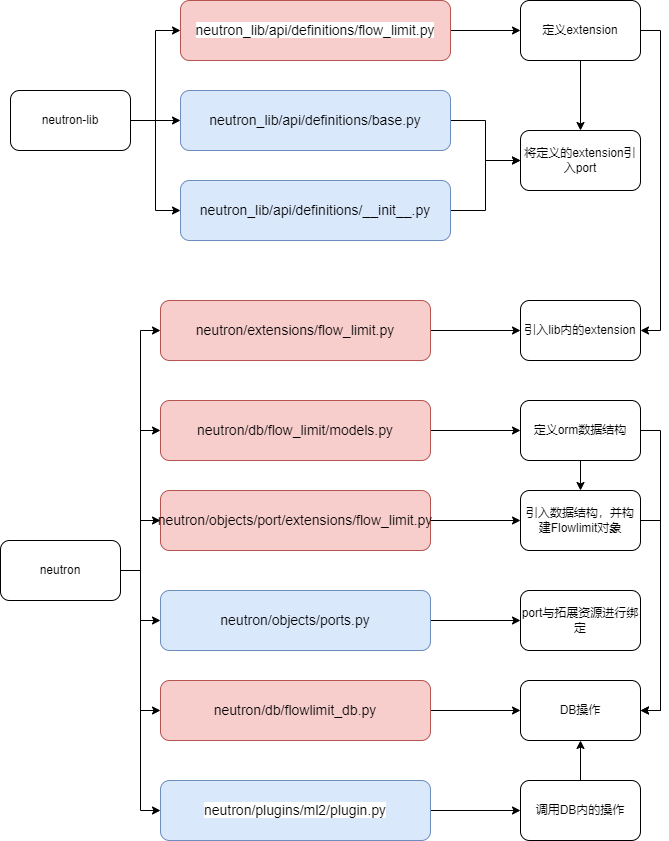

Flowchart: red marks newly created files, blue marks modified files.

- Changes in neutron_lib (in the original post, added code is highlighted in bold red)

- Files involved:

- `neutron_lib/api/definitions/__init__.py`

- `neutron_lib/api/definitions/base.py`

- `neutron_lib/api/definitions/flow_limit.py` (new file)

- `neutron_lib/api/definitions/flow_setup_limit.py` (new file)

- Code:

`flow_limit.py`:

    from neutron_lib.api import converters
    from neutron_lib.api.definitions import port

    # The alias of the extension.
    ALIAS = 'flow_limit'

    # The label to lookup the plugin in the plugin directory. It can match the
    # alias, as required.
    LABEL = 'flow_limit'

    # Whether or not this extension is simply signaling behavior to the user
    # or it actively modifies the attribute map.
    IS_SHIM_EXTENSION = False

    # Whether the extension is marking the adoption of standardattr model for
    # legacy resources, or introducing new standardattr attributes. False or
    # None if the standardattr model is adopted since the introduction of
    # resource extension.
    # If this is True, the alias for the extension should be prefixed with
    # 'standard-attr-'.
    IS_STANDARD_ATTR_EXTENSION = False

    # The name of the extension.
    NAME = 'Flow limit'

    # A prefix for API resources. An empty prefix means that the API is going
    # to be exposed at the v2/ level as any other core resource.
    API_PREFIX = ''

    # The description of the extension.
    DESCRIPTION = "Provides flow limit"

    # A timestamp of when the extension was introduced.
    UPDATED_TIMESTAMP = "2012-07-23T10:00:00-00:00"

    # Extension
    RESOURCE_ATTRIBUTE_MAP = {
        port.COLLECTION_NAME: {
            'flow_limit': {'allow_post': True,
                           'allow_put': True,
                           'default': 50000,
                           'convert_to': converters.convert_to_int,
                           'enforce_policy': True,
                           'validate': {'type:range_or_none': [1, 500000]},
                           'is_visible': True}
        }
    }

    # The subresource attribute map for the extension. It adds child resources
    # to main extension's resource. The subresource map must have a parent and
    # a parameters entry. If an extension does not need such a map, None can
    # be specified (mandatory).
    SUB_RESOURCE_ATTRIBUTE_MAP = {}

    # The action map: it associates verbs with methods to be performed on
    # the API resource.
    ACTION_MAP = {}

    # The action status.
    ACTION_STATUS = {}

    # The list of required extensions.
    REQUIRED_EXTENSIONS = []

    # The list of optional extensions.
    OPTIONAL_EXTENSIONS = []
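To make the `flow_limit` attribute definition above more concrete, here is a minimal sketch of what the `default`, `convert_to` and range check amount to for a single value. It deliberately does not import neutron_lib: `check_flow_limit`, `FLOW_LIMIT_RANGE`, `FLOW_LIMIT_DEFAULT` and `NOT_SPECIFIED` are hypothetical names, and the behaviour (coerce to int, then test an inclusive range) is my assumption about how the definition is applied, not the library's actual code path.

```python
# Hypothetical stand-ins that mirror the values in RESOURCE_ATTRIBUTE_MAP.
FLOW_LIMIT_RANGE = (1, 500000)   # from 'type:range_or_none': [1, 500000]
FLOW_LIMIT_DEFAULT = 50000       # from 'default': 50000
NOT_SPECIFIED = object()         # marks "attribute missing from the request"


def check_flow_limit(value=NOT_SPECIFIED):
    """Return the integer flow limit to store, or raise ValueError."""
    if value is NOT_SPECIFIED:
        # Attribute omitted on POST: fall back to the default.
        return FLOW_LIMIT_DEFAULT
    try:
        number = int(value)      # rough equivalent of convert_to_int
    except (TypeError, ValueError):
        raise ValueError("'%s' is not an integer" % (value,))
    low, high = FLOW_LIMIT_RANGE
    if not low <= number <= high:
        raise ValueError("%d is outside [%d, %d]" % (number, low, high))
    return number


if __name__ == '__main__':
    print(check_flow_limit())        # default is used
    print(check_flow_limit("1000"))  # string input is coerced to int
```

The same shape applies to `flow_setup_limit`, with a default of 2000 and a range of 1 to 40000.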

`flow_setup_limit.py`:

    from neutron_lib.api import converters
    from neutron_lib.api.definitions import port

    # The alias of the extension.
    ALIAS = 'flow_setup_limit'

    # The label to lookup the plugin in the plugin directory. It can match the
    # alias, as required.
    LABEL = 'flow_setup_limit'

    # Whether or not this extension is simply signaling behavior to the user
    # or it actively modifies the attribute map.
    IS_SHIM_EXTENSION = False

    # Whether the extension is marking the adoption of standardattr model for
    # legacy resources, or introducing new standardattr attributes. False or
    # None if the standardattr model is adopted since the introduction of
    # resource extension.
    # If this is True, the alias for the extension should be prefixed with
    # 'standard-attr-'.
    IS_STANDARD_ATTR_EXTENSION = False

    # The name of the extension.
    NAME = 'Flow Setup limit'

    # A prefix for API resources. An empty prefix means that the API is going
    # to be exposed at the v2/ level as any other core resource.
    API_PREFIX = ''

    # The description of the extension.
    DESCRIPTION = "Provides flow setup limit"

    # A timestamp of when the extension was introduced.
    UPDATED_TIMESTAMP = "2012-07-23T10:00:00-00:00"

    RESOURCE_ATTRIBUTE_MAP = {
        port.COLLECTION_NAME: {
            'flow_setup_limit': {'allow_post': True,
                                 'allow_put': True,
                                 'default': 2000,
                                 'convert_to': converters.convert_to_int,
                                 'enforce_policy': True,
                                 'validate': {'type:range_or_none': [1, 40000]},
                                 'is_visible': True}
        }
    }

    # The subresource attribute map for the extension. It adds child resources
    # to main extension's resource. The subresource map must have a parent and
    # a parameters entry. If an extension does not need such a map, None can
    # be specified (mandatory).
    SUB_RESOURCE_ATTRIBUTE_MAP = {}

    # The action map: it associates verbs with methods to be performed on
    # the API resource.
    ACTION_MAP = {}

    # The action status.
    ACTION_STATUS = {}

    # The list of required extensions.
    REQUIRED_EXTENSIONS = []

    # The list of optional extensions.
    OPTIONAL_EXTENSIONS = []

`__init__.py` (excerpt):

    from neutron_lib.api.definitions import agent
    from neutron_lib.api.definitions import auto_allocated_topology
    from neutron_lib.api.definitions import bgpvpn
    from neutron_lib.api.definitions import bgpvpn_routes_control
    from neutron_lib.api.definitions import data_plane_status
    from neutron_lib.api.definitions import dns
    from neutron_lib.api.definitions import dns_domain_ports
    from neutron_lib.api.definitions import expose_port_forwarding_in_fip
    from neutron_lib.api.definitions import external_net
    from neutron_lib.api.definitions import extra_dhcp_opt
    from neutron_lib.api.definitions import flow_limit
    from neutron_lib.api.definitions import flow_setup_limit
    from neutron_lib.api.definitions import fip64
    from neutron_lib.api.definitions import firewall
    from neutron_lib.api.definitions import firewall_v2
    from neutron_lib.api.definitions import firewallrouterinsertion
    from neutron_lib.api.definitions import floating_ip_port_forwarding
    from neutron_lib.api.definitions import ip_substring_port_filtering
    from neutron_lib.api.definitions import l3
    from neutron_lib.api.definitions import logging
    from neutron_lib.api.definitions import logging_resource
    from neutron_lib.api.definitions import network
    from neutron_lib.api.definitions import network_mtu
    from neutron_lib.api.definitions import port
    from neutron_lib.api.definitions import port_security
    from neutron_lib.api.definitions import portbindings
    from neutron_lib.api.definitions import portbindings_extended
    from neutron_lib.api.definitions import provider_net
    from neutron_lib.api.definitions import router_interface_fip
    from neutron_lib.api.definitions import subnet
    from neutron_lib.api.definitions import subnetpool
    from neutron_lib.api.definitions import trunk
    from neutron_lib.api.definitions import trunk_details


    _ALL_API_DEFINITIONS = {
        address_scope,
        agent,
        auto_allocated_topology,
        bgpvpn,
        bgpvpn_routes_control,
        data_plane_status,
        dns,
        dns_domain_ports,
        external_net,
        expose_port_forwarding_in_fip,
        extra_dhcp_opt,
        flow_limit,
        flow_setup_limit,
        fip64,
        firewall,
        firewall_v2,
        firewallrouterinsertion,
        floating_ip_port_forwarding,
        ip_substring_port_filtering,
        l3,
        logging,
        logging_resource,
        network,
        # ...

`base.py` (excerpt from the list of known extension aliases):

        'data-plane-status',
        'default-subnetpools',
        'dhcp_agent_scheduler',
        'dns-domain-ports',
        'dns-integration',
        'dvr',
        'expose-port-forwarding-in-fip',
        'ext-gw-mode',
        'external-net',
        'extra_dhcp_opt',
        'flow_limit',
        'flow_setup_limit',
        'extraroute',
        'flavors',
        'floating-ip-port-forwarding',
        'ip-substring-filtering',
        'l3-ha',
        'l3_agent_scheduler',
        'logging',
        'metering',
        'multi-provider',
        'net-mtu',
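The two registrations above have to agree: each new definition module is added to the set in `__init__.py`, and its `ALIAS` is added to the alias list in `base.py`. A quick way to catch a forgotten entry is a consistency check like the sketch below. It uses `SimpleNamespace` objects as stand-ins for the real definition modules, and `missing_aliases` is a hypothetical helper of mine, not something neutron_lib provides.

```python
from types import SimpleNamespace

# Stand-ins for the definition modules; only ALIAS matters for this check.
flow_limit = SimpleNamespace(ALIAS='flow_limit')
flow_setup_limit = SimpleNamespace(ALIAS='flow_setup_limit')
extra_dhcp_opt = SimpleNamespace(ALIAS='extra_dhcp_opt')

ALL_API_DEFINITIONS = [extra_dhcp_opt, flow_limit, flow_setup_limit]
KNOWN_EXTENSIONS = ('extra_dhcp_opt', 'flow_limit', 'flow_setup_limit')


def missing_aliases(definitions, known):
    """Return the aliases that are defined but not listed as known."""
    return sorted(d.ALIAS for d in definitions if d.ALIAS not in known)


if __name__ == '__main__':
    # An empty list means every definition has a matching alias entry.
    print(missing_aliases(ALL_API_DEFINITIONS, KNOWN_EXTENSIONS))
```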

Changes in neutron (using flow_limit as the example)

- Files involved:

`neutron/extensions/flow_limit.py`:

    from neutron_lib.api.definitions import flow_limit
    from neutron_lib.api import extensions


    class Flow_limit(extensions.APIExtensionDescriptor):
        api_definition = flow_limit
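The odd-looking class name `Flow_limit` is deliberate. As far as I can tell, Neutron's extension loader derives the class name it looks for from the file name by upper-casing only the first character, so `flow_limit.py` must contain `Flow_limit`. The sketch below reproduces just that naming rule; `expected_extension_class_name` is a hypothetical helper written for illustration, not a Neutron function.

```python
import os


def expected_extension_class_name(path):
    """Derive the class name expected for an extension file.

    Assumption: the loader upper-cases the first character of the module
    name and leaves the rest untouched.
    """
    mod_name, _ext = os.path.splitext(os.path.basename(path))
    return mod_name[0].upper() + mod_name[1:]


if __name__ == '__main__':
    print(expected_extension_class_name('neutron/extensions/flow_limit.py'))
```

Under that assumption, a class named `FlowLimit` in this file would not be picked up.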

`neutron/objects/port/extensions/flow_limit.py`, which defines the extension object bound to the port:

    #    Licensed under the Apache License, Version 2.0 (the "License"); you may
    #    not use this file except in compliance with the License. You may obtain
    #    a copy of the License at
    #
    #         http://www.apache.org/licenses/LICENSE-2.0
    #
    #    Unless required by applicable law or agreed to in writing, software
    #    distributed under the License is distributed on an "AS IS" BASIS, WITHOUT
    #    WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the
    #    License for the specific language governing permissions and limitations
    #    under the License.

    from oslo_versionedobjects import fields as obj_fields

    from neutron.db.flow_limit import models
    from neutron.objects import base
    from neutron.objects import common_types


    @base.NeutronObjectRegistry.register
    class FlowLimit(base.NeutronDbObject):
        # Version 1.0: Initial version
        VERSION = '1.0'

        db_model = models.FlowLimit

        fields = {
            'id': common_types.UUIDField(),
            'flow_limit': obj_fields.IntegerField(),
        }

        foreign_keys = {
            'Port': {'id': 'id'},
        }

        fields_need_translation = {'id': 'port_id'}
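The `fields_need_translation = {'id': 'port_id'}` entry above is what lets the object expose an `id` field while the table column is called `port_id`. The following sketch shows the renaming in both directions with plain dictionaries. `to_db` and `from_db` are hypothetical helpers that only approximate what the object base class does with this mapping.

```python
# Mapping copied from the object: object field name -> DB column name.
FIELDS_NEED_TRANSLATION = {'id': 'port_id'}


def to_db(fields, translation=FIELDS_NEED_TRANSLATION):
    """Rename object fields to DB column names before writing."""
    return {translation.get(name, name): value
            for name, value in fields.items()}


def from_db(columns, translation=FIELDS_NEED_TRANSLATION):
    """Rename DB columns back to object field names after reading."""
    reverse = {column: field for field, column in translation.items()}
    return {reverse.get(name, name): value
            for name, value in columns.items()}


if __name__ == '__main__':
    row = to_db({'id': 'port-uuid', 'flow_limit': 50000})
    print(row)            # keys are now 'port_id' and 'flow_limit'
    print(from_db(row))   # keys are back to 'id' and 'flow_limit'
```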

`neutron/objects/ports.py` (registers the extensions on the `Port` object; excerpt):

            ),
            'binding': obj_fields.ObjectField(
                'PortBinding', nullable=True
            ),
            'data_plane_status': obj_fields.ObjectField(
                'PortDataPlaneStatus', nullable=True
            ),
            'dhcp_options': obj_fields.ListOfObjectsField(
                'ExtraDhcpOpt', nullable=True
            ),
            'flow_limit': obj_fields.ObjectField(
                'FlowLimit', nullable=True
            ),
            'flow_setup_limit': obj_fields.ObjectField(
                'FlowSetupLimit', nullable=True
            ),
            'distributed_binding': obj_fields.ObjectField(
                'DistributedPortBinding', nullable=True
            ),
            'dns': obj_fields.ObjectField('PortDNS', nullable=True),
            'fixed_ips': obj_fields.ListOfObjectsField(
                'IPAllocation', nullable=True
            ),
            # TODO(ihrachys): consider converting to boolean
            'security': obj_fields.ObjectField(
                'PortSecurity', nullable=True
            ),
            'security_group_ids': common_types.SetOfUUIDsField(
                nullable=True,
                # TODO(ihrachys): how do we safely pass a mutable default?
                default=None,
            ),
            'qos_policy_id': common_types.UUIDField(nullable=True, default=None),

            'binding_levels': obj_fields.ListOfObjectsField(
                'PortBindingLevel', nullable=True
            ),

            # TODO(ihrachys): consider adding a 'dns_assignment' fully synthetic
            # field in later object iterations
        }

        extra_filter_names = {'security_group_ids'}

        fields_no_update = ['project_id', 'network_id']

        synthetic_fields = [
            'allowed_address_pairs',
            'binding',
            'binding_levels',
            'data_plane_status',
            'dhcp_options',
            'distributed_binding',
            'dns',
            'fixed_ips',
            'qos_policy_id',
            'security',
            'flow_limit',
            'flow_setup_limit',
            'security_group_ids',
        ]

        fields_need_translation = {
            'binding': 'port_binding',
            'dhcp_options': 'dhcp_opts',
            'distributed_binding': 'distributed_port_binding',
            'security': 'port_security',
            'flow_limit': 'flow_limit',
            'flow_setup_limit': 'flow_setup_limit',
        }

        def create(self):
            fields = self.obj_get_changes()
            with self.db_context_writer(self.obj_context):
                sg_ids = self.security_group_ids
                if sg_ids is None:
                    sg_ids = set()
                qos_policy_id = self.qos_policy_id
                super(Port, self).create()
                if 'security_group_ids' in fields:
                    self._attach_security_groups(sg_ids)
                if 'qos_policy_id' in fields:
                    self._attach_qos_policy(qos_policy_id)

        def update(self):
            fields = self.obj_get_changes()
            with self.db_context_writer(self.obj_context):
                super(Port, self).update()
                if 'security_group_ids' in fields:
                    self._attach_security_groups(fields['security_group_ids'])
                if 'qos_policy_id' in fields:
                    self._attach_qos_policy(fields['qos_policy_id'])

        def _attach_qos_policy(self, qos_policy_id):
            binding.QosPolicyPortBinding.delete_objects(
                self.obj_context, port_id=self.id)
            if qos_policy_id:
                port_binding_obj = binding.QosPolicyPortBinding(
                    self.obj_context, policy_id=qos_policy_id, port_id=self.id)
                port_binding_obj.create()

            self.qos_policy_id = qos_policy_id
            self.obj_reset_changes(['qos_policy_id'])

        def _attach_security_groups(self, sg_ids):
            # TODO(ihrachys): consider introducing an (internal) object for the
            # binding to decouple database operations a bit more
            obj_db_api.delete_objects(
                SecurityGroupPortBinding, self.obj_context, port_id=self.id)
            if sg_ids:
                for sg_id in sg_ids:
                    self._attach_security_group(sg_id)
            self.security_group_ids = sg_ids
            self.obj_reset_changes(['security_group_ids'])

        def _attach_security_group(self, sg_id):
            obj_db_api.create_object(
                SecurityGroupPortBinding, self.obj_context,
                {'port_id': self.id, 'security_group_id': sg_id}
            )

        # TODO(rossella_s): get rid of it once we switch the db model to using
        # custom types.
        @classmethod
        def modify_fields_to_db(cls, fields):
            result = super(Port, cls).modify_fields_to_db(fields)
            if 'mac_address' in result:
                result['mac_address'] = cls.filter_to_str(result['mac_address'])
            return result

        # TODO(rossella_s): get rid of it once we switch the db model to using
        # custom types.
        @classmethod
        def modify_fields_from_db(cls, db_obj):
            fields = super(Port, cls).modify_fields_from_db(db_obj)
            if 'mac_address' in fields:
                fields['mac_address'] = utils.AuthenticEUI(fields['mac_address'])
            distributed_port_binding = fields.get('distributed_binding')
            if distributed_port_binding:
                fields['distributed_binding'] = fields['distributed_binding'][0]
            else:
                fields['distributed_binding'] = None
            return fields

        def from_db_object(self, db_obj):
            super(Port, self).from_db_object(db_obj)
            # extract security group bindings
            if db_obj.get('security_groups', []):
                self.security_group_ids = {
                    sg.security_group_id
                    for sg in db_obj.security_groups
                }
            else:
                self.security_group_ids = set()
            self.obj_reset_changes(['security_group_ids'])

            # extract qos policy binding
            if db_obj.get('qos_policy_binding'):
                self.qos_policy_id = (
                    db_obj.qos_policy_binding.policy_id
                )
            else:
                self.qos_policy_id = None
            self.obj_reset_changes(['qos_policy_id'])

        def obj_make_compatible(self, primitive, target_version):
            _target_version = versionutils.convert_version_to_tuple(target_version)

            if _target_version < (1, 1):
                primitive.pop('data_plane_status', None)

        @classmethod
        def get_ports_by_router(cls, context, router_id, owner, subnet):
            rport_qry = context.session.query(models_v2.Port).join(
                l3.RouterPort)
            ports = rport_qry.filter(
                l3.RouterPort.router_id == router_id,
                l3.RouterPort.port_type == owner,
                models_v2.Port.network_id == subnet['network_id']
            )
            return [cls._load_object(context, db_obj) for db_obj in ports.all()]

        @classmethod
        def get_ports_by_vnic_type_and_host(
                cls, context, vnic_type, host):
            query = context.session.query(models_v2.Port).join(
                ml2_models.PortBinding)
            query = query.filter(
                ml2_models.PortBinding.vnic_type == vnic_type,
                ml2_models.PortBinding.host == host)
            return [cls._load_object(context, db_obj) for db_obj in query.all()]

`neutron/db/flow_limit/models.py`, which defines the model:

    from neutron_lib.db import model_base
    import sqlalchemy as sa
    from sqlalchemy import orm

    from neutron.db import models_v2


    class FlowLimit(model_base.BASEV2):
        port_id = sa.Column(sa.String(36),
                            sa.ForeignKey('ports.id', ondelete="CASCADE"),
                            primary_key=True)
        flow_limit = sa.Column(sa.Integer, nullable=False)

        # Add a relationship to the Port model in order to be able to
        # instruct SQLAlchemy to eagerly load port flow limit
        port = orm.relationship(
            models_v2.Port, load_on_pending=True,
            backref=orm.backref("flow_limit", uselist=False,
                                cascade='delete', lazy='joined'))
        revises_on_change = ('port', )
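The part of this model that matters most in practice is the foreign key with `ondelete="CASCADE"`: deleting a port should remove its flow limit row without any extra code. The sketch below demonstrates that behaviour with the standard-library `sqlite3` module instead of SQLAlchemy, so it runs anywhere. The table name `flowlimits` and the helper `cascade_demo` are assumptions made for the illustration.

```python
import sqlite3


def cascade_demo():
    """Create a port with a flow limit, delete the port, count leftovers."""
    conn = sqlite3.connect(':memory:')
    # SQLite only enforces foreign keys when this pragma is switched on.
    conn.execute('PRAGMA foreign_keys = ON')
    conn.execute('CREATE TABLE ports (id TEXT PRIMARY KEY)')
    conn.execute(
        'CREATE TABLE flowlimits ('
        ' port_id TEXT PRIMARY KEY REFERENCES ports(id) ON DELETE CASCADE,'
        ' flow_limit INTEGER NOT NULL)')
    conn.execute("INSERT INTO ports VALUES ('p1')")
    conn.execute("INSERT INTO flowlimits VALUES ('p1', 50000)")
    # Deleting the parent row should cascade to the child row.
    conn.execute("DELETE FROM ports WHERE id = 'p1'")
    remaining = conn.execute('SELECT COUNT(*) FROM flowlimits').fetchone()[0]
    conn.close()
    return remaining


if __name__ == '__main__':
    print(cascade_demo())
```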

`neutron/db/flowlimit_db.py`, which defines the db operations:

    from neutron_lib.api.definitions import port as port_def

    from neutron.db import api as db_api
    from neutron.db import _resource_extend as resource_extend
    from neutron.objects.port.extensions import flow_limit as obj_flow_limit


    @resource_extend.has_resource_extenders
    class FlowlimitDbMixin(object):
        """Mixin class to add extra options to the Flow limit file
        and associate them to a port.
        """

        def _process_port_create_flow_limit(self, context, port, flow_limit):
            with db_api.context_manager.writer.using(context):
                flow_limit_obj = obj_flow_limit.FlowLimit(
                    context, id=port['id'], flow_limit=flow_limit
                )
                flow_limit_obj.create()
            return self._extend_port_flow_limit_dict(context, port)

        def _extend_port_flow_limit_dict(self, context, port):
            port['flow_limit'] = self._get_port_flow_limit_binding(
                context, port['id'])

        def _get_port_flow_limit_binding(self, context, port_id):
            opt = obj_flow_limit.FlowLimit.get_object(
                context, id=port_id)
            return opt['flow_limit']

        def _update_flow_limit_on_port(self, context, id, port,
                                       updated_port=None):
            flt = port['port'].get('flow_limit')
            if flt:
                port_update = port['port']
                port_update["id"] = id
                opt = obj_flow_limit.FlowLimit.get_object(
                    context, id=id)
                if opt:
                    with db_api.context_manager.writer.using(context):
                        opt.flow_limit = flt
                        opt.update()
                else:
                    self._process_port_create_flow_limit(context, port_update,
                                                         flt)
            return bool(flt)

        @staticmethod
        @resource_extend.extends([port_def.COLLECTION_NAME])
        def _extend_port_dict_flow_limit(res, port):
            flow_limit = port.flow_limit
            if flow_limit:
                res['flow_limit'] = flow_limit['flow_limit']
            return res
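The update path in the mixin above is a create-or-update: if the request carries a `flow_limit`, the existing row is updated, otherwise a new row is created, and the return value says whether anything was touched. The sketch below replays that control flow against an in-memory dictionary so it can be run without a database. `InMemoryFlowLimitMixin` and its `_rows` store are hypothetical stand-ins for the real object layer.

```python
class InMemoryFlowLimitMixin(object):
    """Hypothetical stand-in that keeps flow limits in a dict."""

    def __init__(self):
        self._rows = {}  # port_id -> flow_limit

    def _process_port_create_flow_limit(self, port, flow_limit):
        # Create the row and reflect the value in the port dict.
        self._rows[port['id']] = flow_limit
        port['flow_limit'] = flow_limit

    def _update_flow_limit_on_port(self, port_id, body):
        flt = body['port'].get('flow_limit')
        if flt:
            if port_id in self._rows:
                # Row already exists: update it in place.
                self._rows[port_id] = flt
            else:
                # Port was created before the extension existed: create it.
                port_update = body['port']
                port_update['id'] = port_id
                self._process_port_create_flow_limit(port_update, flt)
        # True only when the request actually carried a flow_limit.
        return bool(flt)


if __name__ == '__main__':
    mixin = InMemoryFlowLimitMixin()
    print(mixin._update_flow_limit_on_port('p1', {'port': {'flow_limit': 100}}))
    print(mixin._update_flow_limit_on_port('p1', {'port': {'name': 'x'}}))
    print(mixin._rows)
```

Note that the `if flt:` test treats any falsy value as "not provided". Since the valid range starts at 1, that does not reject a legal value here.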

- neutron/plugins/ml2/plugin.py plugin内创建更新时,直接调用db内定义的方法即可

-

from neutron.db import _utils as db_utils from neutron.db import address_scope_db from neutron.db import agents_db from neutron.db import agentschedulers_db from neutron.db import allowedaddresspairs_db as addr_pair_db from neutron.db import api as db_api from neutron.db import db_base_plugin_v2 from neutron.db import dvr_mac_db from neutron.db import external_net_db from neutron.db import extradhcpopt_db from neutron.db import flowlimit_db from neutron.db import flowsetuplimit_db from neutron.db.models import securitygroup as sg_models from neutron.db import models_v2 from neutron.db import provisioning_blocks from neutron.db.quota import driver # noqa from neutron.db import securitygroups_rpc_base as sg_db_rpc from neutron.db import segments_db from neutron.db import subnet_service_type_db_models as service_type_db from neutron.db import vlantransparent_db from neutron.extensions import allowedaddresspairs as addr_pair from neutron.extensions import availability_zone as az_ext from neutron.extensions import netmtu_writable as mtu_ext from neutron.extensions import providernet as provider from neutron.extensions import vlantransparent from neutron.objects import ports as ports_obj from neutron.objects.qos import policy as policy_object from neutron.plugins.common import utils as p_utils from neutron.plugins.ml2.common import exceptions as ml2_exc from neutron.plugins.ml2 import config # noqa from neutron.plugins.ml2 import db from neutron.plugins.ml2 import driver_context from neutron.plugins.ml2.extensions import qos as qos_ext from neutron.plugins.ml2 import managers from neutron.plugins.ml2 import models from neutron.plugins.ml2 import ovo_rpc from neutron.plugins.ml2 import rpc from neutron.quota import resource_registry from neutron.services.qos import qos_consts from neutron.services.qos import qos_checker_ext from neutron.services.segments import plugin as segments_plugin from neutron.tooz import dist_lock LOG = log.getLogger(__name__) MAX_BIND_TRIES = 10 
SERVICE_PLUGINS_REQUIRED_DRIVERS = { 'qos': [qos_ext.QOS_EXT_DRIVER_ALIAS] } def _ml2_port_result_filter_hook(query, filters): values = filters and filters.get(portbindings.HOST_ID, []) if not values: return query bind_criteria = models.PortBinding.host.in_(values) return query.filter(models_v2.Port.port_binding.has(bind_criteria)) @resource_extend.has_resource_extenders @registry.has_registry_receivers class Ml2Plugin(db_base_plugin_v2.NeutronDbPluginV2, dvr_mac_db.DVRDbMixin, external_net_db.External_net_db_mixin, sg_db_rpc.SecurityGroupServerRpcMixin, agentschedulers_db.AZDhcpAgentSchedulerDbMixin, addr_pair_db.AllowedAddressPairsMixin, vlantransparent_db.Vlantransparent_db_mixin, extradhcpopt_db.ExtraDhcpOptMixin, flowlimit_db.FlowlimitDbMixin, flowsetuplimit_db.FlowsetuplimitDbMixin, address_scope_db.AddressScopeDbMixin, service_type_db.SubnetServiceTypeMixin): """Implement the Neutron L2 abstractions using modules. Ml2Plugin is a Neutron plugin based on separately extensible sets of network types and mechanisms for connecting to networks of those types. The network types and mechanisms are implemented as drivers loaded via Python entry points. Networks can be made up of multiple segments (not yet fully implemented). """ # This attribute specifies whether the plugin supports or not # bulk/pagination/sorting operations. 
Name mangling is used in # order to ensure it is qualified by class __native_bulk_support = True __native_pagination_support = True __native_sorting_support = True # List of supported extensions _supported_extension_aliases = ["provider", "external-net", "binding", "quotas", "security-group", "agent", "dhcp_agent_scheduler", "multi-provider", "allowed-address-pairs", "extra_dhcp_opt", "subnet_allocation", "net-mtu", "net-mtu-writable", "vlan-transparent", "flow_limit", "address-scope", "flow_setup_limit", "availability_zone", "network_availability_zone", "default-subnetpools", "subnet-service-types", "ip-substring-filtering"] @property def supported_extension_aliases(self): if not hasattr(self, '_aliases'): aliases = self._supported_extension_aliases[:] aliases += self.extension_manager.extension_aliases() sg_rpc.disable_security_group_extension_by_config(aliases) vlantransparent.disable_extension_by_config(aliases) self._aliases = aliases return self._aliases def __new__(cls, *args, **kwargs): model_query.register_hook( models_v2.Port, "ml2_port_bindings", query_hook=None, filter_hook=None, result_filters=_ml2_port_result_filter_hook) return super(Ml2Plugin, cls).__new__(cls, *args, **kwargs) @resource_registry.tracked_resources( network=models_v2.Network, port=models_v2.Port, subnet=models_v2.Subnet, subnetpool=models_v2.SubnetPool, security_group=sg_models.SecurityGroup, security_group_rule=sg_models.SecurityGroupRule) def __init__(self): # First load drivers, then initialize DB, then initialize drivers self.type_manager = managers.TypeManager() self.extension_manager = managers.ExtensionManager() self.mechanism_manager = managers.MechanismManager() super(Ml2Plugin, self).__init__() self.type_manager.initialize() self.extension_manager.initialize() self.mechanism_manager.initialize() self._setup_dhcp() self._start_rpc_notifiers() self.add_agent_status_check_worker(self.agent_health_check) self.add_workers(self.mechanism_manager.get_workers()) 
self._verify_service_plugins_requirements() self.qos_checker = cfg.CONF.qos_checker if cfg.CONF.tooz.enabled: dist_lock.COORDINATOR.start() LOG.info("Tooz lock has Start.") LOG.info("Modular L2 Plugin initialization complete") def _setup_rpc(self): """Initialize components to support agent communication.""" self.endpoints = [ rpc.RpcCallbacks(self.notifier, self.type_manager), securitygroups_rpc.SecurityGroupServerRpcCallback(), dvr_rpc.DVRServerRpcCallback(), dhcp_rpc.DhcpRpcCallback(), agents_db.AgentExtRpcCallback(), metadata_rpc.MetadataRpcCallback(), resources_rpc.ResourcesPullRpcCallback() ] def _setup_dhcp(self): """Initialize components to support DHCP.""" self.network_scheduler = importutils.import_object( cfg.CONF.network_scheduler_driver ) self.add_periodic_dhcp_agent_status_check() def _verify_service_plugins_requirements(self): for service_plugin in cfg.CONF.service_plugins: extension_drivers = SERVICE_PLUGINS_REQUIRED_DRIVERS.get( service_plugin, [] ) for extension_driver in extension_drivers: if extension_driver not in self.extension_manager.names(): raise ml2_exc.ExtensionDriverNotFound( driver=extension_driver, service_plugin=service_plugin ) @registry.receives(resources.PORT, [provisioning_blocks.PROVISIONING_COMPLETE]) def _port_provisioned(self, rtype, event, trigger, context, object_id, **kwargs): port_id = object_id port = db.get_port(context, port_id) if not port or not port.port_binding: LOG.debug("Port %s was deleted so its status cannot be updated.", port_id) return if port.port_binding.vif_type in (portbindings.VIF_TYPE_BINDING_FAILED, portbindings.VIF_TYPE_UNBOUND): # NOTE(kevinbenton): we hit here when a port is created without # a host ID and the dhcp agent notifies that its wiring is done LOG.debug("Port %s cannot update to ACTIVE because it " "is not bound.", port_id) return else: # port is bound, but we have to check for new provisioning blocks # one last time to detect the case where we were triggered by an # unbound port and the 
port became bound with new provisioning # blocks before 'get_port' was called above if provisioning_blocks.is_object_blocked(context, port_id, resources.PORT): LOG.debug("Port %s had new provisioning blocks added so it " "will not transition to active.", port_id) return self.update_port_status(context, port_id, const.PORT_STATUS_ACTIVE) @log_helpers.log_method_call def _start_rpc_notifiers(self): """Initialize RPC notifiers for agents.""" self.ovo_notifier = ovo_rpc.OVOServerRpcInterface() self.notifier = rpc.AgentNotifierApi(topics.AGENT) self.agent_notifiers[const.AGENT_TYPE_DHCP] = ( dhcp_rpc_agent_api.DhcpAgentNotifyAPI() ) @log_helpers.log_method_call def start_rpc_listeners(self): """Start the RPC loop to let the plugin communicate with agents.""" self._setup_rpc() self.topic = topics.PLUGIN self.conn = n_rpc.create_connection() self.conn.create_consumer(self.topic, self.endpoints, fanout=False) self.conn.create_consumer( topics.SERVER_RESOURCE_VERSIONS, [resources_rpc.ResourcesPushToServerRpcCallback()], fanout=True) # process state reports despite dedicated rpc workers self.conn.create_consumer(topics.REPORTS, [agents_db.AgentExtRpcCallback()], fanout=False) return self.conn.consume_in_threads() def start_rpc_state_reports_listener(self): self.conn_reports = n_rpc.create_connection() self.conn_reports.create_consumer(topics.REPORTS, [agents_db.AgentExtRpcCallback()], fanout=False) return self.conn_reports.consume_in_threads() def _filter_nets_provider(self, context, networks, filters): return [network for network in networks if self.type_manager.network_matches_filters(network, filters) ] def _check_mac_update_allowed(self, orig_port, port, binding): unplugged_types = (portbindings.VIF_TYPE_BINDING_FAILED, portbindings.VIF_TYPE_UNBOUND) new_mac = port.get('mac_address') mac_change = (new_mac is not None and orig_port['mac_address'] != new_mac) if (mac_change and binding.vif_type not in unplugged_types): raise exc.PortBound(port_id=orig_port['id'], 
vif_type=binding.vif_type, old_mac=orig_port['mac_address'], new_mac=port['mac_address']) return mac_change def _process_port_binding(self, mech_context, attrs): plugin_context = mech_context._plugin_context binding = mech_context._binding port = mech_context.current port_id = port['id'] changes = False host = const.ATTR_NOT_SPECIFIED if attrs and portbindings.HOST_ID in attrs: host = attrs.get(portbindings.HOST_ID) or '' original_host = binding.host if (validators.is_attr_set(host) and original_host != host): binding.host = host changes = True vnic_type = attrs.get(portbindings.VNIC_TYPE) if attrs else None if (validators.is_attr_set(vnic_type) and binding.vnic_type != vnic_type): binding.vnic_type = vnic_type changes = True # treat None as clear of profile. profile = None if attrs and portbindings.PROFILE in attrs: profile = attrs.get(portbindings.PROFILE) or {} if profile not in (None, const.ATTR_NOT_SPECIFIED, self._get_profile(binding)): binding.profile = jsonutils.dumps(profile) if len(binding.profile) > models.BINDING_PROFILE_LEN: msg = _("binding:profile value too large") raise exc.InvalidInput(error_message=msg) changes = True # Note(jetlee): other vnic_type haven't 'anti_affinity_group' # expect direct port. # Updating port, the vnic_type isn't in attrs, so we should # retrieve it from port info. if not validators.is_attr_set(vnic_type): vnic_type = port.get('binding:vnic_type') if 'anti_affinity_group' in binding.profile and vnic_type != \ portbindings.VNIC_DIRECT: msg = _("Normal port hasn't set anti_affinity_group!!!") raise exc.InvalidInput(error_message=msg) # Unbind the port if needed. 
if changes: binding.vif_type = portbindings.VIF_TYPE_UNBOUND binding.vif_details = '' db.clear_binding_levels(plugin_context, port_id, original_host) mech_context._clear_binding_levels() port['status'] = const.PORT_STATUS_DOWN super(Ml2Plugin, self).update_port( mech_context._plugin_context, port_id, {port_def.RESOURCE_NAME: {'status': const.PORT_STATUS_DOWN}}) if port['device_owner'] == const.DEVICE_OWNER_DVR_INTERFACE: binding.vif_type = portbindings.VIF_TYPE_UNBOUND binding.vif_details = '' db.clear_binding_levels(plugin_context, port_id, original_host) mech_context._clear_binding_levels() binding.host = '' self._update_port_dict_binding(port, binding) binding.persist_state_to_session(plugin_context.session) return changes @db_api.retry_db_errors def _bind_port_if_needed(self, context, allow_notify=False, need_notify=False): if not context.network.network_segments: LOG.debug("Network %s has no segments, skipping binding", context.network.current['id']) return context for count in range(1, MAX_BIND_TRIES + 1): if count > 1: # yield for binding retries so that we give other threads a # chance to do their work greenthread.sleep(0) # multiple attempts shouldn't happen very often so we log each # attempt after the 1st. LOG.info("Attempt %(count)s to bind port %(port)s", {'count': count, 'port': context.current['id']}) bind_context, need_notify, try_again = self._attempt_binding( context, need_notify) if count == MAX_BIND_TRIES or not try_again: if self._should_bind_port(context): # At this point, we attempted to bind a port and reached # its final binding state. Binding either succeeded or # exhausted all attempts, thus no need to try again. # Now, the port and its binding state should be committed. 
context, need_notify, try_again = ( self._commit_port_binding(context, bind_context, need_notify, try_again)) else: context = bind_context if not try_again: if allow_notify and need_notify: self._notify_port_updated(context) return context LOG.error("Failed to commit binding results for %(port)s " "after %(max)s tries", {'port': context.current['id'], 'max': MAX_BIND_TRIES}) return context def _should_bind_port(self, context): return (context._binding.host and context._binding.vif_type in (portbindings.VIF_TYPE_UNBOUND, portbindings.VIF_TYPE_BINDING_FAILED)) def _attempt_binding(self, context, need_notify): try_again = False if self._should_bind_port(context): bind_context = self._bind_port(context) if bind_context.vif_type != portbindings.VIF_TYPE_BINDING_FAILED: # Binding succeeded. Suggest notifying of successful binding. need_notify = True else: # Current attempt binding failed, try to bind again. try_again = True context = bind_context return context, need_notify, try_again def _bind_port(self, orig_context): # Construct a new PortContext from the one from the previous # transaction. port = orig_context.current orig_binding = orig_context._binding new_binding = models.PortBinding( host=orig_binding.host, vnic_type=orig_binding.vnic_type, profile=orig_binding.profile, vif_type=portbindings.VIF_TYPE_UNBOUND, vif_details='' ) self._update_port_dict_binding(port, new_binding) new_context = driver_context.PortContext( self, orig_context._plugin_context, port, orig_context.network.current, new_binding, None, original_port=orig_context.original) # Attempt to bind the port and return the context with the # result. 
        self.mechanism_manager.bind_port(new_context)
        return new_context

    def _commit_port_binding(self, orig_context, bind_context,
                             need_notify, try_again):
        port_id = orig_context.current['id']
        plugin_context = orig_context._plugin_context
        orig_binding = orig_context._binding
        new_binding = bind_context._binding

        # TODO(yamahata): revise what to be passed or new resource
        # like PORTBINDING should be introduced?
        # It would be addressed during EventPayload conversion.
        registry.notify(resources.PORT, events.BEFORE_UPDATE, self,
                        context=plugin_context, port=orig_context.current,
                        original_port=orig_context.current,
                        orig_binding=orig_binding, new_binding=new_binding)

        # After we've attempted to bind the port, we begin a
        # transaction, get the current port state, and decide whether
        # to commit the binding results.
        with db_api.context_manager.writer.using(plugin_context):
            # Get the current port state and build a new PortContext
            # reflecting this state as original state for subsequent
            # mechanism driver update_port_*commit() calls.
            try:
                port_db = self._get_port(plugin_context, port_id)
                cur_binding = port_db.port_binding
            except exc.PortNotFound:
                port_db, cur_binding = None, None
            if not port_db or not cur_binding:
                # The port has been deleted concurrently, so just
                # return the unbound result from the initial
                # transaction that completed before the deletion.
                LOG.debug("Port %s has been deleted concurrently", port_id)
                return orig_context, False, False
            # Since the mechanism driver bind_port() calls must be made
            # outside a DB transaction locking the port state, it is
            # possible (but unlikely) that the port's state could change
            # concurrently while these calls are being made. If another
            # thread or process succeeds in binding the port before this
            # thread commits its results, the already committed results are
            # used. If attributes such as binding:host_id, binding:profile,
            # or binding:vnic_type are updated concurrently, the try_again
            # flag is returned to indicate that the commit was unsuccessful.
            oport = self._make_port_dict(port_db)
            port = self._make_port_dict(port_db)
            network = bind_context.network.current
            if port['device_owner'] == const.DEVICE_OWNER_DVR_INTERFACE:
                # REVISIT(rkukura): The PortBinding instance from the
                # ml2_port_bindings table, returned as cur_binding
                # from port_db.port_binding above, is
                # currently not used for DVR distributed ports, and is
                # replaced here with the DistributedPortBinding instance from
                # the ml2_distributed_port_bindings table specific to the host
                # on which the distributed port is being bound. It
                # would be possible to optimize this code to avoid
                # fetching the PortBinding instance in the DVR case,
                # and even to avoid creating the unused entry in the
                # ml2_port_bindings table. But the upcoming resolution
                # for bug 1367391 will eliminate the
                # ml2_distributed_port_bindings table, use the
                # ml2_port_bindings table to store non-host-specific
                # fields for both distributed and non-distributed
                # ports, and introduce a new ml2_port_binding_hosts
                # table for the fields that need to be host-specific
                # in the distributed case. Since the PortBinding
                # instance will then be needed, it does not make sense
                # to optimize this code to avoid fetching it.
                cur_binding = db.get_distributed_port_binding_by_host(
                    plugin_context, port_id, orig_binding.host)
            cur_context = driver_context.PortContext(
                self, plugin_context, port, network, cur_binding, None,
                original_port=oport)

            # Commit our binding results only if port has not been
            # successfully bound concurrently by another thread or
            # process and no binding inputs have been changed.
            commit = ((cur_binding.vif_type in
                       [portbindings.VIF_TYPE_UNBOUND,
                        portbindings.VIF_TYPE_BINDING_FAILED]) and
                      orig_binding.host == cur_binding.host and
                      orig_binding.vnic_type == cur_binding.vnic_type and
                      orig_binding.profile == cur_binding.profile)

            if commit:
                # Update the port's binding state with our binding
                # results.
                cur_binding.vif_type = new_binding.vif_type
                cur_binding.vif_details = new_binding.vif_details
                db.clear_binding_levels(plugin_context, port_id,
                                        cur_binding.host)
                db.set_binding_levels(plugin_context,
                                      bind_context._binding_levels)
                # refresh context with a snapshot of updated state
                cur_context._binding = driver_context.InstanceSnapshot(
                    cur_binding)
                cur_context._binding_levels = bind_context._binding_levels

                # Update PortContext's port dictionary to reflect the
                # updated binding state.
                self._update_port_dict_binding(port, cur_binding)

                # Update the port status if requested by the bound driver.
                if (bind_context._binding_levels and
                        bind_context._new_port_status):
                    port_db.status = bind_context._new_port_status
                    port['status'] = bind_context._new_port_status

                # Call the mechanism driver precommit methods, commit
                # the results, and call the postcommit methods.
                self.mechanism_manager.update_port_precommit(cur_context)
        if commit:
            # Continue, using the port state as of the transaction that
            # just finished, whether that transaction committed new
            # results or discovered concurrent port state changes.
            # Also, Trigger notification for successful binding commit.
            kwargs = {
                'context': plugin_context,
                'port': self._make_port_dict(port_db),  # ensure latest state
                'mac_address_updated': False,
                'original_port': oport,
            }
            registry.notify(resources.PORT, events.AFTER_UPDATE,
                            self, **kwargs)
            self.mechanism_manager.update_port_postcommit(cur_context)
            need_notify = True
            try_again = False
        else:
            try_again = True

        return cur_context, need_notify, try_again

    def _update_port_dict_binding(self, port, binding):
        port[portbindings.VNIC_TYPE] = binding.vnic_type
        port[portbindings.PROFILE] = self._get_profile(binding)
        if port['device_owner'] == const.DEVICE_OWNER_DVR_INTERFACE:
            port[portbindings.HOST_ID] = ''
            port[portbindings.VIF_TYPE] = portbindings.VIF_TYPE_DISTRIBUTED
            port[portbindings.VIF_DETAILS] = {}
        else:
            port[portbindings.HOST_ID] = binding.host
            port[portbindings.VIF_TYPE] = binding.vif_type
            port[portbindings.VIF_DETAILS] = self._get_vif_details(binding)

    def _get_vif_details(self, binding):
        if binding.vif_details:
            try:
                return jsonutils.loads(binding.vif_details)
            except Exception:
                LOG.error("Serialized vif_details DB value '%(value)s' "
                          "for port %(port)s is invalid",
                          {'value': binding.vif_details,
                           'port': binding.port_id})
        return {}

    def _get_profile(self, binding):
        if binding.profile:
            try:
                return jsonutils.loads(binding.profile)
            except Exception:
                LOG.error("Serialized profile DB value '%(value)s' for "
                          "port %(port)s is invalid",
                          {'value': binding.profile,
                           'port': binding.port_id})
        return {}

    @staticmethod
    @resource_extend.extends([port_def.COLLECTION_NAME])
    def _ml2_extend_port_dict_binding(port_res, port_db):
        plugin = directory.get_plugin()
        # None when called during unit tests for other plugins.
        if port_db.port_binding:
            plugin._update_port_dict_binding(port_res, port_db.port_binding)

    # ML2's resource extend functions allow extension drivers that extend
    # attributes for the resources to add those attributes to the result.
    @staticmethod
    @resource_extend.extends([net_def.COLLECTION_NAME])
    def _ml2_md_extend_network_dict(result, netdb):
        plugin = directory.get_plugin()
        session = plugin._object_session_or_new_session(netdb)
        plugin.extension_manager.extend_network_dict(session, netdb, result)

    @staticmethod
    @resource_extend.extends([port_def.COLLECTION_NAME])
    def _ml2_md_extend_port_dict(result, portdb):
        plugin = directory.get_plugin()
        session = plugin._object_session_or_new_session(portdb)
        plugin.extension_manager.extend_port_dict(session, portdb, result)

    @staticmethod
    @resource_extend.extends([subnet_def.COLLECTION_NAME])
    def _ml2_md_extend_subnet_dict(result, subnetdb):
        plugin = directory.get_plugin()
        session = plugin._object_session_or_new_session(subnetdb)
        plugin.extension_manager.extend_subnet_dict(session, subnetdb, result)

    @staticmethod
    def _object_session_or_new_session(sql_obj):
        session = sqlalchemy.inspect(sql_obj).session
        if not session:
            session = db_api.get_reader_session()
        return session

    def _notify_port_updated(self, mech_context):
        port = mech_context.current
        segment = mech_context.bottom_bound_segment
        if not segment:
            # REVISIT(rkukura): This should notify agent to unplug port
            network = mech_context.network.current
            LOG.debug("In _notify_port_updated(), no bound segment for "
                      "port %(port_id)s on network %(network_id)s",
                      {'port_id': port['id'], 'network_id': network['id']})
            return
        self.notifier.port_update(mech_context._plugin_context, port,
                                  segment[api.NETWORK_TYPE],
                                  segment[api.SEGMENTATION_ID],
                                  segment[api.PHYSICAL_NETWORK])

    def _delete_objects(self, context, resource, objects):
        delete_op = getattr(self, 'delete_%s' % resource)
        for obj in objects:
            try:
                delete_op(context, obj['result']['id'])
            except KeyError:
                LOG.exception("Could not find %s to delete.",
                              resource)
            except Exception:
                LOG.exception("Could not delete %(res)s %(id)s.",
                              {'res': resource,
                               'id': obj['result']['id']})

    def _create_bulk_ml2(self, resource, context, request_items):
        objects = []
        collection = "%ss" % resource
        items = request_items[collection]
        obj_before_create = getattr(self, '_before_create_%s' % resource)
        for item in items:
            obj_before_create(context, item)
        with db_api.context_manager.writer.using(context):
            obj_creator = getattr(self, '_create_%s_db' % resource)
            for item in items:
                try:
                    attrs = item[resource]
                    # _create_port_db() in this listing returns a third
                    # value (the network), so keep only the first two here;
                    # otherwise bulk port creation fails to unpack.
                    result, mech_context = obj_creator(context, item)[:2]
                    objects.append({'mech_context': mech_context,
                                    'result': result,
                                    'attributes': attrs})

                except Exception as e:
                    with excutils.save_and_reraise_exception():
                        utils.attach_exc_details(
                            e, ("An exception occurred while creating "
                                "the %(resource)s:%(item)s"),
                            {'resource': resource, 'item': item})

        postcommit_op = getattr(self, '_after_create_%s' % resource)
        for obj in objects:
            try:
                postcommit_op(context, obj['result'], obj['mech_context'])
            except Exception:
                with excutils.save_and_reraise_exception():
                    resource_ids = [res['result']['id'] for res in objects]
                    LOG.exception("ML2 _after_create_%(res)s "
                                  "failed for %(res)s: "
                                  "'%(failed_id)s'. Deleting "
                                  "%(res)ss %(resource_ids)s",
                                  {'res': resource,
                                   'failed_id': obj['result']['id'],
                                   'resource_ids': ', '.join(resource_ids)})
                    # _after_handler will have deleted the object that threw
                    to_delete = [o for o in objects if o != obj]
                    self._delete_objects(context, resource, to_delete)
        return objects

    def _get_network_mtu(self, network_db, validate=True):
        mtus = []
        try:
            segments = network_db['segments']
        except KeyError:
            segments = [network_db]
        for s in segments:
            segment_type = s.get('network_type')
            if segment_type is None:
                continue
            try:
                type_driver = self.type_manager.drivers[segment_type].obj
            except KeyError:
                # NOTE(ihrachys) This can happen when type driver is not loaded
                # for an existing segment, or simply when the network has no
                # segments at the specific time this is computed.
                # In the former case, while it's probably an indication of
                # a bad setup, it's better to be safe than sorry here. Also,
                # several unit tests use non-existent driver types that may
                # trigger the exception here.
                if segment_type and s['segmentation_id']:
                    LOG.warning(
                        "Failed to determine MTU for segment "
                        "%(segment_type)s:%(segment_id)s; network "
                        "%(network_id)s MTU calculation may be not "
                        "accurate",
                        {
                            'segment_type': segment_type,
                            'segment_id': s['segmentation_id'],
                            'network_id': network_db['id'],
                        }
                    )
            else:
                mtu = type_driver.get_mtu(s['physical_network'])
                # Some drivers, like 'local', may return None; the assumption
                # then is that for the segment type, MTU has no meaning or
                # unlimited, and so we should then ignore those values.
                if mtu:
                    mtus.append(mtu)

        max_mtu = min(mtus) if mtus else p_utils.get_deployment_physnet_mtu()
        net_mtu = network_db.get('mtu')

        if validate:
            # validate that requested mtu conforms to allocated segments
            if net_mtu and max_mtu and max_mtu < net_mtu:
                msg = _("Requested MTU is too big, maximum is %d") % max_mtu
                raise exc.InvalidInput(error_message=msg)

        # if mtu is not set in database, use the maximum possible
        return net_mtu or max_mtu

    def _before_create_network(self, context, network):
        net_data = network[net_def.RESOURCE_NAME]
        registry.notify(resources.NETWORK, events.BEFORE_CREATE, self,
                        context=context, network=net_data)

    def _create_network_db(self, context, network):
        net_data = network[net_def.RESOURCE_NAME]
        tenant_id = net_data['tenant_id']
        with db_api.context_manager.writer.using(context):
            net_db = self.create_network_db(context, network)
            net_data['id'] = net_db.id
            self.type_manager.create_network_segments(context, net_data,
                                                      tenant_id)
            net_db.mtu = self._get_network_mtu(net_db, validate=False)

            result = self._make_network_dict(net_db, process_extensions=False,
                                             context=context)

            self.extension_manager.process_create_network(
                context,
                # NOTE(ihrachys) extensions expect no id in the dict
                {k: v for k, v in net_data.items() if k != 'id'},
                result)

            self._process_l3_create(context, result, net_data)
            self.type_manager.extend_network_dict_provider(context, result)

            # Update the transparent vlan if configured
            if utils.is_extension_supported(self, 'vlan-transparent'):
                vlt = vlantransparent.get_vlan_transparent(net_data)
                net_db['vlan_transparent'] = vlt
                result['vlan_transparent'] = vlt

            if az_ext.AZ_HINTS in net_data:
                self.validate_availability_zones(context, 'network',
                                                 net_data[az_ext.AZ_HINTS])
                az_hints = az_ext.convert_az_list_to_string(
                    net_data[az_ext.AZ_HINTS])
                net_db[az_ext.AZ_HINTS] = az_hints
                result[az_ext.AZ_HINTS] = az_hints
            registry.notify(resources.NETWORK, events.PRECOMMIT_CREATE, self,
                            context=context, request=net_data, network=result)

            resource_extend.apply_funcs('networks', result, net_db)
            mech_context = driver_context.NetworkContext(self, context,
                                                         result)
            self.mechanism_manager.create_network_precommit(mech_context)
        return result, mech_context

    @utils.transaction_guard
    @db_api.retry_if_session_inactive()
    def create_network(self, context, network):
        self._before_create_network(context, network)
        result, mech_context = self._create_network_db(context, network)
        return self._after_create_network(context, result, mech_context)

    def _after_create_network(self, context, result, mech_context):
        kwargs = {'context': context, 'network': result}
        registry.notify(resources.NETWORK, events.AFTER_CREATE, self, **kwargs)
        try:
            self.mechanism_manager.create_network_postcommit(mech_context)
        except ml2_exc.MechanismDriverError:
            with excutils.save_and_reraise_exception():
                LOG.error("mechanism_manager.create_network_postcommit "
                          "failed, deleting network '%s'", result['id'])
                self.delete_network(context, result['id'])

        return result

    @utils.transaction_guard
    @db_api.retry_if_session_inactive()
    def create_network_bulk(self, context, networks):
        objects = self._create_bulk_ml2(
            net_def.RESOURCE_NAME, context, networks)
        return [obj['result'] for obj in objects]

    @utils.transaction_guard
    @db_api.retry_if_session_inactive()
    def update_network(self, context, id, network):
        net_data = network[net_def.RESOURCE_NAME]
        provider._raise_if_updates_provider_attributes(net_data)
        need_network_update_notify = False

        with db_api.context_manager.writer.using(context):
            original_network = super(Ml2Plugin, self).get_network(context, id)
            updated_network = super(Ml2Plugin, self).update_network(context,
                                                                    id,
                                                                    network)
            self.extension_manager.process_update_network(context, net_data,
                                                          updated_network)
            self._process_l3_update(context, updated_network, net_data)

            # TODO(QoS): This would change once EngineFacade moves out
            db_network = self._get_network(context, id)
            # Expire the db_network in current transaction, so that the join
            # relationship can be updated.
            context.session.expire(db_network)

            if mtu_ext.MTU in net_data:
                db_network.mtu = self._get_network_mtu(db_network,
                                                       validate=False)
                # agents should now update all ports to reflect new MTU
                need_network_update_notify = True

            updated_network = self._make_network_dict(
                db_network, context=context)
            self.type_manager.extend_network_dict_provider(
                context, updated_network)

            kwargs = {'context': context, 'network': updated_network,
                      'original_network': original_network,
                      'request': net_data}
            registry.notify(
                resources.NETWORK, events.PRECOMMIT_UPDATE, self, **kwargs)

            # TODO(QoS): Move out to the extension framework somehow.
            qos_update_notify = (
                qos_consts.QOS_POLICY_ID in net_data and
                original_network[qos_consts.QOS_POLICY_ID] !=
                updated_network[qos_consts.QOS_POLICY_ID])
            # Run the QoS check if the policy changed and a checker is set.
            if self.qos_checker and qos_update_notify:
                self._qos_check(context,
                                original_network[qos_consts.QOS_POLICY_ID],
                                updated_network[qos_consts.QOS_POLICY_ID],
                                network=id)
            need_network_update_notify |= qos_update_notify

            mech_context = driver_context.NetworkContext(
                self, context, updated_network,
                original_network=original_network)
            self.mechanism_manager.update_network_precommit(mech_context)

        # TODO(apech) - handle errors raised by update_network, potentially
        # by re-calling update_network with the previous attributes. For
        # now the error is propagated to the caller, which is expected to
        # either undo/retry the operation or delete the resource.
        kwargs = {'context': context, 'network': updated_network,
                  'original_network': original_network}
        registry.notify(resources.NETWORK, events.AFTER_UPDATE, self, **kwargs)
        self.mechanism_manager.update_network_postcommit(mech_context)
        if need_network_update_notify:
            self.notifier.network_update(context, updated_network)
        return updated_network

    def _qos_check(self, context, original_qos_policy_id,
                   updated_qos_policy_id, network=None, port=None):
        # NOTE(jetlee): check whether the associated QoS policy may change.
        dvalue = {}
        original_qos_policy = policy_object.QosPolicy.get_object(
            context, id=original_qos_policy_id)
        updated_qos_policy = policy_object.QosPolicy.get_object(
            context, id=updated_qos_policy_id)
        if not updated_qos_policy:
            # The policy is being removed, so no extra bandwidth is needed
            # and there is nothing to validate.
            return
        for u_rule in updated_qos_policy.rules:
            direction = u_rule.direction
            o_rule_updated = False
            if original_qos_policy:
                for o_rule in original_qos_policy.rules:
                    if o_rule.direction != direction:
                        continue
                    o_rule_updated = True
                    value_tmp = u_rule.max_kbps - o_rule.max_kbps
                    # A decrease in max_kbps never needs extra bandwidth,
                    # so only increases are recorded.
                    if value_tmp > 0:
                        dvalue[direction] = value_tmp
            # The original policy has no rule for this direction.
            if not o_rule_updated:
                dvalue[direction] = u_rule.max_kbps
        if dvalue:
            self._validate_qos_policy_could_change(context,
                                                   updated_qos_policy,
                                                   dvalue,
                                                   network=network,
                                                   port=port)

    def _validate_qos_policy_could_change(self, context, policy, dvalue,
                                          network=None, port=None):
        if network:
            # get all ports associated with the network
            ports = super(Ml2Plugin, self).get_ports(
                context, filters={'network_id': [network]})
        else:
            # only the specified port is affected
            ports = [port]
        compute_nodes = []
        ports_host_map = {}
        ports_sub = []
        cn_bandwidth = {}
        # Collect the compute node names and keep only the ports that
        # belong to active instances.
        for port in ports:
            if (const.DEVICE_OWNER_COMPUTE_PREFIX in port.get('device_owner')
                    and port.get('status') == const.PORT_STATUS_ACTIVE):
                hostname = port.get('binding:host_id')
                compute_nodes.append(hostname)
                ports_sub.append(port)
                if hostname in ports_host_map:
                    ports_host_map[hostname].append(port.get('id'))
                else:
                    ports_host_map[hostname] = [port.get('id')]
        # get bandwidth information of all compute nodes
        cn_bandwidth = \
            qos_checker_ext.get_all_compute_nodes_bandwidth(compute_nodes)
        LOG.debug("Those ports %(ports)s remain in host, and these hosts "
                  "bandwidth are %(bandwidth)s.\n",
                  {'ports': ports_host_map, 'bandwidth': cn_bandwidth})
        # check whether each compute node has enough bandwidth left
        for port in ports_sub:
            hostname = port.get('binding:host_id')
            port_binding_profile = port.get("binding:profile")
            # NOTE(jetlee): skip hosts that report no bandwidth information
            if hostname not in cn_bandwidth:
                continue
            # SRIOV port
            if (port.get('binding:vif_type') ==
                    portbindings.VIF_TYPE_HW_VEB and
                    cn_bandwidth[hostname].get('pci_devices')):
                port_pci_slot = port_binding_profile.get('pci_slot')
                port_pci_slot = port_pci_slot.replace(':', '_')
                port_pci_slot = port_pci_slot.replace('.', '_')
                port_pci_slot = 'pci_' + port_pci_slot
                parent_addr = \
                    cn_bandwidth[hostname]['pci_devices'].get(port_pci_slot)
                if not parent_addr:
                    continue
                for nic in cn_bandwidth[hostname]:
                    # skip entries that carry no 'pci_slot'
                    if not cn_bandwidth[hostname][nic].get('pci_slot'):
                        continue
                    for direction in dvalue:
                        if parent_addr == \
                                cn_bandwidth[hostname][nic].get('pci_slot'):
                            cn_bandwidth[hostname][nic][direction] -= \
                                dvalue[direction]
                            if cn_bandwidth[hostname][nic][direction] < 0:
                                qos_updated_obj = network if network \
                                    else port.get('id')
                                raise n_exc.QosPolicyCannotUpdate(
                                    network_or_port=qos_updated_obj,
                                    policy_id=policy.id,
                                    hostname=hostname,
                                    ports=ports_host_map[hostname])
            # OVS port
            elif port.get('binding:vif_type') in (
                    portbindings.VIF_TYPE_OVS,
                    portbindings.VIF_TYPE_VHOST_USER):
                for nic in cn_bandwidth[hostname]:
                    for direction in dvalue:
                        cn_bandwidth[hostname][nic][direction] -= \
                            dvalue[direction]
                        if cn_bandwidth[hostname][nic][direction] < 0:
                            qos_updated_obj = network if network \
                                else port.get('id')
                            raise n_exc.QosPolicyCannotUpdate(
                                network_or_port=qos_updated_obj,
                                policy_id=policy.id,
                                hostname=hostname,
                                ports=ports_host_map[hostname])
            # unbound ports and other vif types are not considered
            else:
                continue

    @db_api.retry_if_session_inactive()
    def get_network(self, context, id, fields=None):
        with db_api.context_manager.reader.using(context):
            net_db = self._get_network(context, id)
            net_data = self._make_network_dict(net_db, context=context)
            self.type_manager.extend_network_dict_provider(context, net_data)

        return db_utils.resource_fields(net_data, fields)

    @db_api.retry_if_session_inactive()
    def get_networks(self, context, filters=None, fields=None,
                     sorts=None, limit=None, marker=None, page_reverse=False):
        with db_api.context_manager.reader.using(context):
            nets_db = super(Ml2Plugin, self)._get_networks(
                context, filters, None, sorts, limit, marker, page_reverse)

            net_data = []
            for net in nets_db:
                net_data.append(self._make_network_dict(net, context=context))

            self.type_manager.extend_networks_dict_provider(context, net_data)
            nets = self._filter_nets_provider(context, net_data, filters)
        return [db_utils.resource_fields(net, fields) for net in nets]

    def get_network_contexts(self, context, network_ids):
        """Return a map of network_id to NetworkContext for network_ids."""
        net_filters = {'id': list(set(network_ids))}
        nets_by_netid = {
            n['id']: n for n in self.get_networks(context,
                                                  filters=net_filters)
        }
        segments_by_netid = segments_db.get_networks_segments(
            context, list(nets_by_netid.keys()))
        netctxs_by_netid = {
            net_id: driver_context.NetworkContext(
                self, context, nets_by_netid[net_id],
                segments=segments_by_netid[net_id])
            for net_id in nets_by_netid.keys()
        }
        return netctxs_by_netid

    @utils.transaction_guard
    def delete_network(self, context, id):
        # the only purpose of this override is to protect this from being
        # called inside of a transaction.
        return super(Ml2Plugin, self).delete_network(context, id)

    @registry.receives(resources.NETWORK, [events.PRECOMMIT_DELETE])
    def _network_delete_precommit_handler(self, rtype, event, trigger,
                                          context, network_id, **kwargs):
        network = self.get_network(context, network_id)
        mech_context = driver_context.NetworkContext(self,
                                                     context,
                                                     network)
        # TODO(kevinbenton): move this mech context into something like
        # a 'delete context' so it's not polluting the real context object
        setattr(context, '_mech_context', mech_context)
        self.mechanism_manager.delete_network_precommit(
            mech_context)

    @registry.receives(resources.NETWORK, [events.AFTER_DELETE])
    def _network_delete_after_delete_handler(self, rtype, event, trigger,
                                             context, network, **kwargs):
        try:
            self.mechanism_manager.delete_network_postcommit(
                context._mech_context)
        except ml2_exc.MechanismDriverError:
            # TODO(apech) - One or more mechanism driver failed to
            # delete the network. Ideally we'd notify the caller of
            # the fact that an error occurred.
            LOG.error("mechanism_manager.delete_network_postcommit"
                      " failed")
        self.notifier.network_delete(context, network['id'])

    def _before_create_subnet(self, context, subnet):
        # TODO(kevinbenton): BEFORE notification should be added here
        pass

    def _create_subnet_db(self, context, subnet):
        with db_api.context_manager.writer.using(context):
            result, net_db, ipam_sub = self._create_subnet_precommit(
                context, subnet)
            self.extension_manager.process_create_subnet(
                context, subnet[subnet_def.RESOURCE_NAME], result)
            network = self._make_network_dict(net_db, context=context)
            self.type_manager.extend_network_dict_provider(context, network)
            mech_context = driver_context.SubnetContext(self, context,
                                                        result, network)
            self.mechanism_manager.create_subnet_precommit(mech_context)

        # TODO(kevinbenton): move this to '_after_subnet_create'
        # db base plugin post commit ops
        self._create_subnet_postcommit(context, result, net_db, ipam_sub)

        return result, mech_context

    @utils.transaction_guard
    @db_api.retry_if_session_inactive()
    def create_subnet(self, context, subnet):
        self._before_create_subnet(context, subnet)
        result, mech_context = self._create_subnet_db(context, subnet)
        return self._after_create_subnet(context, result, mech_context)

    def _after_create_subnet(self, context, result, mech_context):
        kwargs = {'context': context, 'subnet': result}
        registry.notify(resources.SUBNET, events.AFTER_CREATE, self, **kwargs)
        try:
            self.mechanism_manager.create_subnet_postcommit(mech_context)
        except ml2_exc.MechanismDriverError:
            with excutils.save_and_reraise_exception():
                LOG.error("mechanism_manager.create_subnet_postcommit "
                          "failed, deleting subnet '%s'", result['id'])
                self.delete_subnet(context, result['id'])
        return result

    @utils.transaction_guard
    @db_api.retry_if_session_inactive()
    def create_subnet_bulk(self, context, subnets):
        objects = self._create_bulk_ml2(
            subnet_def.RESOURCE_NAME, context, subnets)
        return [obj['result'] for obj in objects]

    @utils.transaction_guard
    @db_api.retry_if_session_inactive()
    def update_subnet(self, context, id, subnet):
        with db_api.context_manager.writer.using(context):
            updated_subnet, original_subnet = self._update_subnet_precommit(
                context, id, subnet)
            self.extension_manager.process_update_subnet(
                context, subnet[subnet_def.RESOURCE_NAME], updated_subnet)
            updated_subnet = self.get_subnet(context, id)
            mech_context = driver_context.SubnetContext(
                self, context, updated_subnet, network=None,
                original_subnet=original_subnet)
            self.mechanism_manager.update_subnet_precommit(mech_context)
            self._update_subnet_postcommit(context, original_subnet,
                                           updated_subnet)
        # TODO(apech) - handle errors raised by update_subnet, potentially
        # by re-calling update_subnet with the previous attributes. For
        # now the error is propagated to the caller, which is expected to
        # either undo/retry the operation or delete the resource.
        self.mechanism_manager.update_subnet_postcommit(mech_context)
        return updated_subnet

    @utils.transaction_guard
    def delete_subnet(self, context, id):
        # the only purpose of this override is to protect this from being
        # called inside of a transaction.
        return super(Ml2Plugin, self).delete_subnet(context, id)

    @registry.receives(resources.SUBNET, [events.PRECOMMIT_DELETE])
    def _subnet_delete_precommit_handler(self, rtype, event, trigger,
                                         context, subnet_id, **kwargs):
        record = self._get_subnet(context, subnet_id)
        subnet = self._make_subnet_dict(record, context=context)
        network = self.get_network(context, subnet['network_id'])
        mech_context = driver_context.SubnetContext(self, context,
                                                    subnet, network)
        # TODO(kevinbenton): move this mech context into something like
        # a 'delete context' so it's not polluting the real context object
        setattr(context, '_mech_context', mech_context)
        self.mechanism_manager.delete_subnet_precommit(mech_context)

    @registry.receives(resources.SUBNET, [events.AFTER_DELETE])
    def _subnet_delete_after_delete_handler(self, rtype, event, trigger,
                                            context, subnet, **kwargs):
        try:
            self.mechanism_manager.delete_subnet_postcommit(
                context._mech_context)
        except ml2_exc.MechanismDriverError:
            # TODO(apech) - One or more mechanism driver failed to
            # delete the subnet. Ideally we'd notify the caller of
            # the fact that an error occurred.
            LOG.error("mechanism_manager.delete_subnet_postcommit failed")

    # TODO(yalei) - will be simplified after security group and address pair be
    # converted to ext driver too.
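The port create and update methods in this listing call `_process_port_create_flow_limit`, `_update_flow_limit_on_port` and their `flow_setup_limit` twins, but the bodies of those helpers are not part of the excerpt. The block below is only a minimal sketch of what such a pair might do, assuming they follow the same create/update pattern as the extra DHCP options helpers. `FlowLimitStore`, its in-memory dict and its method names are hypothetical stand-ins for the real DB mixin; only the default of 50000 is taken from the `flow_limit.py` API definition.

```python
# Hypothetical sketch only: FlowLimitStore and its methods are illustrative
# names, not part of neutron. A real helper would write to a DB table inside
# the caller's writer transaction instead of a dict.

DEFAULT_FLOW_LIMIT = 50000  # default taken from the flow_limit API definition


class FlowLimitStore(object):
    """In-memory stand-in for the table holding each port's flow_limit."""

    def __init__(self):
        self._rows = {}  # port_id -> flow_limit

    def process_create(self, port, flow_limit):
        """Store the limit for a new port and mirror it into the result dict.

        Assumption: a missing value (None) falls back to the default.
        """
        if flow_limit is None:
            flow_limit = DEFAULT_FLOW_LIMIT
        self._rows[port['id']] = flow_limit
        port['flow_limit'] = flow_limit
        return flow_limit

    def update_on_port(self, port_id, request, updated_port):
        """Apply an update request; return True if the stored value changed.

        The boolean mirrors how update_port() ORs each helper's result into
        need_port_update_notify.
        """
        attrs = request['port']
        current = self._rows.get(port_id)
        if 'flow_limit' not in attrs:
            # Field absent from the request: keep the stored value.
            updated_port['flow_limit'] = current
            return False
        new_value = attrs['flow_limit']
        self._rows[port_id] = new_value
        updated_port['flow_limit'] = new_value
        return new_value != current
```

Returning a boolean from the update helper is the design choice worth noting: it lets the caller decide once, after all helpers have run, whether agents need a port update notification.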
    def _portsec_ext_port_create_processing(self, context, port_data, port):
        attrs = port[port_def.RESOURCE_NAME]
        port_security = ((port_data.get(psec.PORTSECURITY) is None) or
                         port_data[psec.PORTSECURITY])

        # allowed address pair checks
        if self._check_update_has_allowed_address_pairs(port):
            if not port_security:
                raise addr_pair.AddressPairAndPortSecurityRequired()
        else:
            # remove ATTR_NOT_SPECIFIED
            attrs[addr_pair.ADDRESS_PAIRS] = []

        if port_security:
            self._ensure_default_security_group_on_port(context, port)
        elif self._check_update_has_security_groups(port):
            raise psec_exc.PortSecurityAndIPRequiredForSecurityGroups()

    def _setup_dhcp_agent_provisioning_component(self, context, port):
        subnet_ids = [f['subnet_id'] for f in port['fixed_ips']]
        if (db.is_dhcp_active_on_any_subnet(context, subnet_ids) and
            len(self.get_dhcp_agents_hosting_networks(context,
                                                      [port['network_id']]))):
            # the agents will tell us when the dhcp config is ready so we setup
            # a provisioning component to prevent the port from going ACTIVE
            # until a dhcp_ready_on_port notification is received.
            provisioning_blocks.add_provisioning_component(
                context, port['id'], resources.PORT,
                provisioning_blocks.DHCP_ENTITY)
        else:
            provisioning_blocks.remove_provisioning_component(
                context, port['id'], resources.PORT,
                provisioning_blocks.DHCP_ENTITY)

    def _before_create_port(self, context, port):
        attrs = port[port_def.RESOURCE_NAME]
        if not attrs.get('status'):
            attrs['status'] = const.PORT_STATUS_DOWN

        registry.notify(resources.PORT, events.BEFORE_CREATE, self,
                        context=context, port=attrs)

    def _create_port_db(self, context, port):
        attrs = port[port_def.RESOURCE_NAME]
        with db_api.context_manager.writer.using(context):
            dhcp_opts = attrs.get(edo_ext.EXTRADHCPOPTS, [])
            # Added for this feature: read the two new fields from the
            # request body.
            flow_limit = attrs.get('flow_limit')
            flow_setup_limit = attrs.get('flow_setup_limit')
            # NOTE(jetlee): fetch the network info first, then create the
            # port in the DB.
            network = self.get_network(context, port['port']['network_id'])
            if network['router:external']:
                external_net_flag = True
            else:
                external_net_flag = False
            context.external_net_flag = external_net_flag
            port_db = self.create_port_db(context, port)
            result = self._make_port_dict(port_db, process_extensions=False)
            self.extension_manager.process_create_port(context, attrs, result)
            self._portsec_ext_port_create_processing(context, result, port)

            # sgs must be got after portsec checked with security group
            sgs = self._get_security_groups_on_port(context, port)
            self._process_port_create_security_group(context, result, sgs)
            # network = self.get_network(context, result['network_id'])
            binding = db.add_port_binding(context, result['id'])
            mech_context = driver_context.PortContext(self, context, result,
                                                      network, binding, None)
            self._process_port_binding(mech_context, attrs)

            result[addr_pair.ADDRESS_PAIRS] = (
                self._process_create_allowed_address_pairs(
                    context, result,
                    attrs.get(addr_pair.ADDRESS_PAIRS)))
            self._process_port_create_extra_dhcp_opts(context, result,
                                                      dhcp_opts)
            # Added for this feature: persist the two new fields and copy
            # them into the result dict.
            self._process_port_create_flow_limit(context, result,
                                                 flow_limit)
            self._process_port_create_flow_setup_limit(context, result,
                                                       flow_setup_limit)
            kwargs = {'context': context, 'port': result}
            # NOTE(jetlee): also pass the network's QoS policy to the
            # QoS plugin through the notification.
            kwargs['network_qos_policy_id'] = network.get('qos_policy_id')
            registry.notify(
                resources.PORT, events.PRECOMMIT_CREATE, self, **kwargs)
            self.mechanism_manager.create_port_precommit(mech_context)
            self._setup_dhcp_agent_provisioning_component(context, result)

        resource_extend.apply_funcs('ports', result, port_db)
        # NOTE(jetlee): return a new param - network
        return result, mech_context, network

    @utils.transaction_guard
    @db_api.retry_if_session_inactive()
    def create_port(self, context, port):
        self._before_create_port(context, port)
        try:
            result, mech_context, network = \
                self._create_port_db(context, port)
        finally:
            # NOTE(jetlee): the lock must be released whether or not
            # _create_port_db succeeds.
            if cfg.CONF.tooz.enabled and cfg.CONF.tooz.ip_lock and \
                    cfg.CONF.tooz.release_lock:
                # NOTE(zhaimengdong): If an IP address was configured, the
                # context will not have lock_dict, so check for it before
                # releasing the tooz lock.
                if hasattr(context, 'lock_dict'):
                    lockName = context.lock_dict[context.request_id]
                    dist_lock.release_tooz_lock(lockName)
        # NOTE(jetlee): pass the network on to the 'after' method
        return self._after_create_port(context, result, mech_context, network)

    def _after_create_port(self, context, result, mech_context, network=None):
        # notify any plugin that is interested in port create events
        kwargs = {'context': context, 'port': result}
        # NOTE(jetlee): deliver the network info to the 'after method'
        if network:
            kwargs['network'] = network
        registry.notify(resources.PORT, events.AFTER_CREATE, self, **kwargs)

        try:
            self.mechanism_manager.create_port_postcommit(mech_context)
        except ml2_exc.MechanismDriverError:
            with excutils.save_and_reraise_exception():
                LOG.error("mechanism_manager.create_port_postcommit "
                          "failed, deleting port '%s'", result['id'])
                self.delete_port(context, result['id'], l3_port_check=False)
        try:
            bound_context = self._bind_port_if_needed(mech_context)
        except ml2_exc.MechanismDriverError:
            with excutils.save_and_reraise_exception():
                LOG.error("_bind_port_if_needed "
                          "failed, deleting port '%s'", result['id'])
                self.delete_port(context, result['id'], l3_port_check=False)

        return bound_context.current

    @utils.transaction_guard
    @db_api.retry_if_session_inactive()
    def create_port_bulk(self, context, ports):
        objects = self._create_bulk_ml2(port_def.RESOURCE_NAME, context, ports)
        return [obj['result'] for obj in objects]

    # TODO(yalei) - will be simplified after security group and address pair be
    # converted to ext driver too.
    def _portsec_ext_port_update_processing(self, updated_port, context, port,
                                            id):
        port_security = ((updated_port.get(psec.PORTSECURITY) is None) or
                         updated_port[psec.PORTSECURITY])

        if port_security:
            return

        # check the address-pairs
        if self._check_update_has_allowed_address_pairs(port):
            # has address pairs in request
            raise addr_pair.AddressPairAndPortSecurityRequired()
        elif (not self._check_update_deletes_allowed_address_pairs(port)):
            # not a request for deleting the address-pairs
            updated_port[addr_pair.ADDRESS_PAIRS] = (
                self.get_allowed_address_pairs(context, id))

            # check if address pairs has been in db, if address pairs could
            # be put in extension driver, we can refine here.
            if updated_port[addr_pair.ADDRESS_PAIRS]:
                raise addr_pair.AddressPairAndPortSecurityRequired()

        # checks if security groups were updated adding/modifying
        # security groups, port security is set
        if self._check_update_has_security_groups(port):
            raise psec_exc.PortSecurityAndIPRequiredForSecurityGroups()
        elif (not self._check_update_deletes_security_groups(port)):
            if not utils.is_extension_supported(self, 'security-group'):
                return
            # Update did not have security groups passed in. Check
            # that port does not have any security groups already on it.
filters = {'port_id': [id]} security_groups = ( super(Ml2Plugin, self)._get_port_security_group_bindings( context, filters) ) if security_groups: raise psec_exc.PortSecurityPortHasSecurityGroup() @staticmethod def _add_port_security_enabled_attr(attrs, original_port): # Add 'port_security_enabled=True' to attrs for cloudos demands when # booting or updating instance ports with no security groups by # default. # The logic listed as follows: # 1. update port with sg, then update port_security_enabled to True # 2. remove port sg, then update port_security_enabled to False if 'security_groups' in attrs: if not attrs['security_groups'] and \ original_port['port_security_enabled']: # To allow all packets in/out from port attrs['port_security_enabled'] = False else: attrs['port_security_enabled'] = True @utils.transaction_guard @db_api.retry_if_session_inactive() def update_port(self, context, id, port): attrs = port[port_def.RESOURCE_NAME] need_port_update_notify = False bound_mech_contexts = [] original_port = self.get_port(context, id) if cfg.CONF.SECURITYGROUP.allow_all_traffic_with_no_security_groups: self._add_port_security_enabled_attr(attrs, original_port) registry.notify(resources.PORT, events.BEFORE_UPDATE, self, context=context, port=attrs, original_port=original_port) with db_api.context_manager.writer.using(context): port_db = self._get_port(context, id) binding = port_db.port_binding if not binding: raise exc.PortNotFound(port_id=id) mac_address_updated = self._check_mac_update_allowed( port_db, attrs, binding) need_port_update_notify |= mac_address_updated original_port = self._make_port_dict(port_db) updated_port = super(Ml2Plugin, self).update_port(context, id, port) self.extension_manager.process_update_port(context, attrs, updated_port) self._portsec_ext_port_update_processing(updated_port, context, port, id) if (psec.PORTSECURITY in attrs) and ( original_port[psec.PORTSECURITY] != updated_port[psec.PORTSECURITY]): need_port_update_notify = True # 
TODO(QoS): Move out to the extension framework somehow. # Follow https://review.openstack.org/#/c/169223 for a solution. if (qos_consts.QOS_POLICY_ID in attrs and original_port[qos_consts.QOS_POLICY_ID] != updated_port[qos_consts.QOS_POLICY_ID]): need_port_update_notify = True if self.qos_checker and \ const.DEVICE_OWNER_COMPUTE_PREFIX in \ original_port.get('device_owner') and \ original_port.get('status') == \ const.PORT_STATUS_ACTIVE: self._qos_check(context, original_port[qos_consts.QOS_POLICY_ID], updated_port[qos_consts.QOS_POLICY_ID], port=original_port) if addr_pair.ADDRESS_PAIRS in attrs: need_port_update_notify |= ( self.update_address_pairs_on_port(context, id, port, original_port, updated_port)) need_port_update_notify |= self.update_security_group_on_port( context, id, port, original_port, updated_port) network = self.get_network(context, original_port['network_id']) need_port_update_notify |= self._update_extra_dhcp_opts_on_port( context, id, port, updated_port) need_port_update_notify |= self._update_flow_limit_on_port( context, id, port, updated_port) need_port_update_notify |= self._update_flow_setup_limit_on_port( context, id, port, updated_port) levels = db.get_binding_levels(context, id, binding.host) # one of the operations above may have altered the model call # _make_port_dict again to ensure latest state is reflected so mech # drivers, callback handlers, and the API caller see latest state. # We expire here to reflect changed relationships on the obj. # Repeatable read will ensure we still get the state from this # transaction in spite of concurrent updates/deletes. context.session.expire(port_db) updated_port.update(self._make_port_dict(port_db)) mech_context = driver_context.PortContext(
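
The `_add_port_security_enabled_attr` static method in the listing only reads and writes plain dictionaries, so its branching can be checked without a running neutron server. Below is a minimal standalone sketch of that logic; the free-function name `add_port_security_enabled_attr` and the sample security group ID `sg-1` are assumptions made for illustration and do not exist in the plugin.

```python
def add_port_security_enabled_attr(attrs, original_port):
    """Standalone copy of the toggle logic; mutates and returns attrs."""
    if 'security_groups' in attrs:
        if not attrs['security_groups'] and \
                original_port['port_security_enabled']:
            # Security groups are being cleared on a port that currently
            # has port security enabled: disable it to allow all traffic.
            attrs['port_security_enabled'] = False
        else:
            # Every other request that mentions security_groups
            # ends up with port security enabled.
            attrs['port_security_enabled'] = True
    return attrs


# Clearing the groups on a secured port disables port security.
cleared = add_port_security_enabled_attr(
    {'security_groups': []}, {'port_security_enabled': True})

# Assigning a group (hypothetical ID) enables port security.
assigned = add_port_security_enabled_attr(
    {'security_groups': ['sg-1']}, {'port_security_enabled': False})

# A request that does not mention security_groups is left untouched.
untouched = add_port_security_enabled_attr(
    {'name': 'port-a'}, {'port_security_enabled': True})

# Clearing the groups on a port that is already unsecured takes the
# else branch and turns port security back on.
recleared = add_port_security_enabled_attr(
    {'security_groups': []}, {'port_security_enabled': False})
```

The last case is worth checking against the intended behavior: as the condition is written, sending an empty `security_groups` list to a port whose port security is already disabled re-enables it, which may or may not be what the two rules in the code comment were meant to express.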

- Files involved:

-

- flow_limit.py

- Files involved:
