案例一:Spark版的WordCount程序

案例一:Spark版的WordCount程序

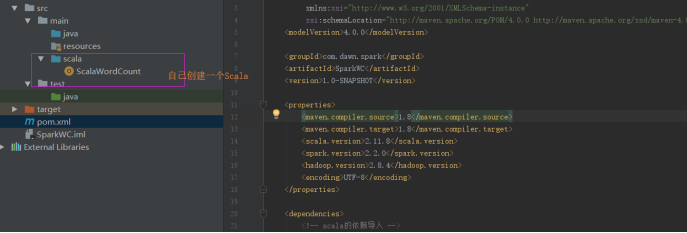

Step1:创建一个Maven工程。

编写Pom文件:

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>com.dawn.spark</groupId>

<artifactId>SparkWC</artifactId>

<version>1.0-SNAPSHOT</version>

<properties>

<maven.compiler.source>1.8</maven.compiler.source>

<maven.compiler.target>1.8</maven.compiler.target>

<scala.version>2.11.8</scala.version>

<spark.version>2.2.0</spark.version>

<hadoop.version>2.8.4</hadoop.version>

<encoding>UTF-8</encoding>

</properties>

<dependencies>

<!-- scala的依赖导入 -->

<dependency>

<groupId>org.scala-lang</groupId>

<artifactId>scala-library</artifactId>

<version>${scala.version}</version>

</dependency>

<!-- spark的依赖导入 -->

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-core_2.11</artifactId>

<version>${spark.version}</version>

</dependency>

<!-- hadoop-client API的导入 -->

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>${hadoop.version}</version>

</dependency>

</dependencies>

<build>

<pluginManagement>

<plugins>

<!-- scala的编译插件 -->

<plugin>

<groupId>net.alchim31.maven</groupId>

<artifactId>scala-maven-plugin</artifactId>

<version>3.2.2</version>

</plugin>

<!-- ava的编译插件 -->

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-compiler-plugin</artifactId>

<version>3.5.1</version>

</plugin>

</plugins>

</pluginManagement>

<plugins>

<plugin>

<groupId>net.alchim31.maven</groupId>

<artifactId>scala-maven-plugin</artifactId>

<executions>

<execution>

<id>scala-compile-first</id>

<phase>process-resources</phase>

<goals>

<goal>add-source</goal>

<goal>compile</goal>

</goals>

</execution>

<execution>

<id>scala-test-compile</id>

<phase>process-test-resources</phase>

<goals>

<goal>testCompile</goal>

</goals>

</execution>

</executions>

</plugin>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-compiler-plugin</artifactId>

<executions>

<execution>

<phase>compile</phase>

<goals>

<goal>compile</goal>

</goals>

</execution>

</executions>

</plugin>

<!-- 打jar包插件 -->

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-shade-plugin</artifactId>

<version>2.4.3</version>

<executions>

<execution>

<phase>package</phase>

<goals>

<goal>shade</goal>

</goals>

<configuration>

<filters>

<filter>

<artifact>*:*</artifact>

<excludes>

<exclude>META-INF/*.SF</exclude>

<exclude>META-INF/*.DSA</exclude>

<exclude>META-INF/*.RSA</exclude>

</excludes>

</filter>

</filters>

</configuration>

</execution>

</executions>

</plugin>

</plugins>

</build>

</project>

Step2:编写WordCount代码

|

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

|

import org.apache.spark.{SparkConf, SparkContext}/** * @author Dawn * 2019年6月18日15:39:39 * @version 1.0 * spark-WordCount本地模式测试 */object ScalaWordCount { def main(args: Array[String]): Unit = { //2.设置参数 setAppName设计程序名 setMaster本地测试设置线程数 *多个 val conf:SparkConf=new SparkConf().setAppName("ScalaWordCount").setMaster("local[*]") //1.创建spark执行程序的入口 val sc:SparkContext=new SparkContext(conf) //3.加载数据 并且处理 sc.textFile("f:/temp/data.txt").flatMap(_.split(" ")).map((_,1)) .reduceByKey(_+_) .sortBy(_._2,false) .foreach(println) //保存文件// .saveAsTextFile("f:/temp/scalaWC/") //4.关闭资源 sc.stop() }} |

注意:

运行结果如下:

浙公网安备 33010602011771号

浙公网安备 33010602011771号