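HDFS Shell Operations

1. Basic syntax

bin/hadoop fs <command> OR bin/hdfs dfs <command>

Both forms run the same file system shell. hadoop fs is the generic entry point and works with any file system Hadoop supports; hdfs dfs is the HDFS-specific form. Against HDFS the two behave identically.

2. Command reference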

[root@hadoop002 hadoop-2.7.2]# hdfs dfs
Usage: hadoop fs [generic options]
    [-appendToFile <localsrc> ... <dst>]
    [-cat [-ignoreCrc] <src> ...]
    [-checksum <src> ...]
    [-chgrp [-R] GROUP PATH...]
    [-chmod [-R] <MODE[,MODE]... | OCTALMODE> PATH...]
    [-chown [-R] [OWNER][:[GROUP]] PATH...]
    [-copyFromLocal [-f] [-p] [-l] <localsrc> ... <dst>]
    [-copyToLocal [-p] [-ignoreCrc] [-crc] <src> ... <localdst>]
    [-count [-q] [-h] <path> ...]
    [-cp [-f] [-p | -p[topax]] <src> ... <dst>]
    [-createSnapshot <snapshotDir> [<snapshotName>]]
    [-deleteSnapshot <snapshotDir> <snapshotName>]
    [-df [-h] [<path> ...]]
    [-du [-s] [-h] <path> ...]
    [-expunge]
    [-find <path> ... <expression> ...]
    [-get [-p] [-ignoreCrc] [-crc] <src> ... <localdst>]
    [-getfacl [-R] <path>]
    [-getfattr [-R] {-n name | -d} [-e en] <path>]
    [-getmerge [-nl] <src> <localdst>]
    [-help [cmd ...]]
    [-ls [-d] [-h] [-R] [<path> ...]]
    [-mkdir [-p] <path> ...]
    [-moveFromLocal <localsrc> ... <dst>]
    [-moveToLocal <src> <localdst>]
    [-mv <src> ... <dst>]
    [-put [-f] [-p] [-l] <localsrc> ... <dst>]
    [-renameSnapshot <snapshotDir> <oldName> <newName>]
    [-rm [-f] [-r|-R] [-skipTrash] <src> ...]
    [-rmdir [--ignore-fail-on-non-empty] <dir> ...]
    [-setfacl [-R] [{-b|-k} {-m|-x <acl_spec>} <path>]|[--set <acl_spec> <path>]]
    [-setfattr {-n name [-v value] | -x name} <path>]
    [-setrep [-R] [-w] <rep> <path> ...]
    [-stat [format] <path> ...]
    [-tail [-f] <file>]
    [-test -[defsz] <path>]
    [-text [-ignoreCrc] <src> ...]
    [-touchz <path> ...]
    [-truncate [-w] <length> <path> ...]
    [-usage [cmd ...]]

Generic options supported are
-conf <configuration file>                    specify an application configuration file
-D <property=value>                           use value for given property
-fs <local|namenode:port>                     specify a namenode
-jt <local|resourcemanager:port>              specify a ResourceManager
-files <comma separated list of files>        specify comma separated files to be copied to the map reduce cluster
-libjars <comma separated list of jars>       specify comma separated jar files to include in the classpath.
-archives <comma separated list of archives>  specify comma separated archives to be unarchived on the compute machines.

The general command line syntax is
bin/hadoop command [genericOptions] [commandOptions]

3. Common commands in practice

(0) Start the Hadoop cluster (needed for the tests that follow)

[atguigu@hadoop102 hadoop-2.7.2]$ sbin/start-dfs.sh

[atguigu@hadoop103 hadoop-2.7.2]$ sbin/start-yarn.sh

(1) -help: print the usage and options of a command

[atguigu@hadoop102 hadoop-2.7.2]$ hadoop fs -help rm

(2) -ls: list directory contents

[root@hadoop002 hadoop-2.7.2]# hadoop fs -ls /
Found 2 items
-rw-r--r--   3 root supergroup   18284613 2020-01-15 12:11 /tools.jar
-rw-r--r--   3 root supergroup         48 2020-01-15 12:05 /wc.input

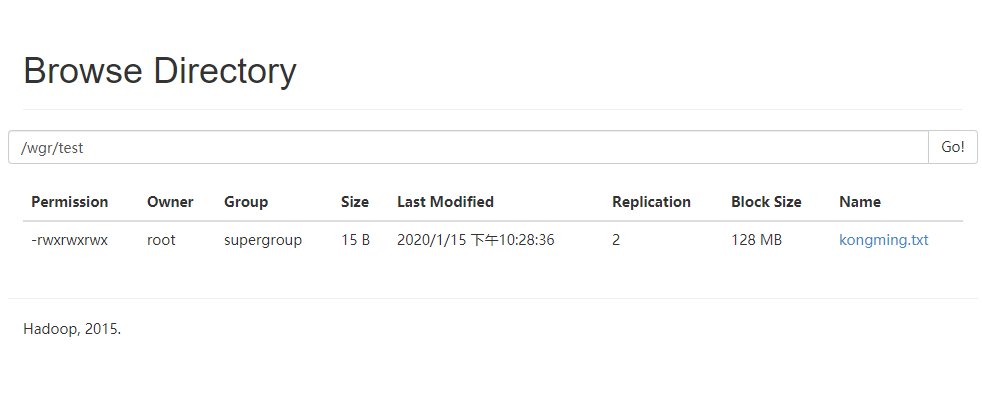
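When a script needs to consume the listing, the path is the last whitespace-separated field of each entry line. The following is a minimal sketch of that idea, not a Hadoop feature: the function name hdfs_ls_paths is hypothetical, and it assumes paths contain no spaces. The sample text is the listing shown above.

```shell
# hdfs_ls_paths: hypothetical helper, not part of Hadoop.
# Reads `hadoop fs -ls` output on stdin and prints only the paths.
# Assumption: paths contain no whitespace.
hdfs_ls_paths() {
    # Entry lines start with the permission string ("-" for files, "d" for
    # directories); the "Found N items" header starts with neither.
    awk '/^[-d]/ { print $NF }'
}

# Demo on the listing from this section. On a live cluster one would run:
#   hadoop fs -ls / | hdfs_ls_paths
sample='Found 2 items
-rw-r--r--   3 root supergroup   18284613 2020-01-15 12:11 /tools.jar
-rw-r--r--   3 root supergroup         48 2020-01-15 12:05 /wc.input'

printf '%s\n' "$sample" | hdfs_ls_paths
```

Filtering on the first character keeps the helper independent of how many items the header reports.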

(3) -mkdir: create a directory on HDFS (-p also creates missing parent directories)

[root@hadoop002 hadoop-2.7.2]# hadoop fs -mkdir -p /wgr/test

(4) -moveFromLocal: move (cut and paste) a file from the local file system to HDFS

[root@hadoop002 hadoop-2.7.2]# touch kongming.txt
[root@hadoop002 hadoop-2.7.2]# hadoop fs -moveFromLocal kongming.txt /wgr/test

(5) -appendToFile: append a local file to the end of an existing HDFS file

[root@hadoop002 hadoop-2.7.2]# echo "testtestetetst" >> test.txt
[root@hadoop002 hadoop-2.7.2]# ll
total 80
drwxr-xr-x 2 root root  4096 Jan 14 22:19 bin
drwxr-xr-x 3 root root  4096 Jan 14 23:35 data
drwxr-xr-x 3 root root  4096 Jan 14 22:13 etc
-rw-r--r-- 1 root root     0 Jan 14 22:13 hdfs-site.xml
drwxr-xr-x 2 root root  4096 Jan 14 22:13 include
drwxr-xr-x 2 root root  4096 Jan 14 22:13 input
drwxr-xr-x 3 root root  4096 Jan 14 22:19 lib
drwxr-xr-x 2 root root  4096 Jan 14 22:13 libexec
-rw-r--r-- 1 root root 15429 Jan 14 22:13 LICENSE.txt
drwxr-xr-x 3 root root  4096 Jan 15 11:59 logs
-rw-r--r-- 1 root root   101 Jan 14 22:20 NOTICE.txt
drwxr-xr-x 2 root root  4096 Jan 14 22:19 output
-rw-r--r-- 1 root root  1366 Jan 14 22:20 README.txt
drwxr-xr-x 2 root root  4096 Jan 14 22:19 sbin
drwxr-xr-x 4 root root  4096 Jan 14 22:13 share
-rw-r--r-- 1 root root    15 Jan 15 22:27 test.txt
drwxr-xr-x 2 root root  4096 Jan 14 22:13 wcinput
drwxr-xr-x 2 root root  4096 Jan 14 22:13 wcoutput
[root@hadoop002 hadoop-2.7.2]# hadoop fs -appendToFile test.txt /wgr/test/kongming.txt

(6) -cat: print the contents of a file

[root@hadoop002 hadoop-2.7.2]# hadoop fs -cat /wgr/test/kongming.txt
testtestetetst

(7) -chgrp, -chmod, -chown: used the same way as in the Linux file system, to change a file's group, permissions, and owner

[root@hadoop002 hadoop-2.7.2]# hadoop fs -chmod 777 /wgr/test/kongming.txt
[root@hadoop002 hadoop-2.7.2]# hadoop fs -cat /wgr/test/kongming.txt
testtestetetst
[root@hadoop002 hadoop-2.7.2]# hadoop fs -ls -R /
-rw-r--r--   3 root supergroup   18284613 2020-01-15 12:11 /tools.jar
-rw-r--r--   3 root supergroup         48 2020-01-15 12:05 /wc.input
drwxr-xr-x   - root supergroup          0 2020-01-15 21:49 /wgr
drwxr-xr-x   - root supergroup          0 2020-01-15 21:51 /wgr/test
-rwxrwxrwx   3 root supergroup         15 2020-01-15 22:28 /wgr/test/kongming.txt
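To relate the octal mode passed to -chmod to the permission string that -ls prints, each octal digit expands to one rwx triple (owner, group, others). The sketch below illustrates that mapping only; mode_to_rwx is a hypothetical helper, not a Hadoop command, and it handles plain three-digit modes without special bits.

```shell
# mode_to_rwx: hypothetical helper (not a Hadoop command) that expands an
# octal mode such as 777 into the rwx string shown in `hadoop fs -ls` output.
mode_to_rwx() {
    mode=$1
    out=""
    # Split the mode into single digits: "777" becomes "7 7 7".
    for digit in $(printf '%s' "$mode" | sed 's/./& /g'); do
        case $digit in
            0) out="${out}---" ;;
            1) out="${out}--x" ;;
            2) out="${out}-w-" ;;
            3) out="${out}-wx" ;;
            4) out="${out}r--" ;;
            5) out="${out}r-x" ;;
            6) out="${out}rw-" ;;
            7) out="${out}rwx" ;;
            *) echo "invalid octal digit: $digit" >&2; return 1 ;;
        esac
    done
    printf '%s\n' "$out"
}

mode_to_rwx 777   # kongming.txt after the chmod above
mode_to_rwx 644   # mode of the other files in the listing
mode_to_rwx 755   # mode of the directories in the listing
```

The first character of each -ls line (- or d) is the entry type and is not part of the mode.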

(8) -copyFromLocal: copy a file from the local file system to an HDFS path

[root@hadoop002 hadoop-2.7.2]# hadoop fs -copyFromLocal README.txt /

(9) -copyToLocal: copy a file from HDFS to the local file system

[root@hadoop002 hadoop-2.7.2]# hadoop fs -copyToLocal /README.txt /

(10) -cp: copy from one HDFS path to another HDFS path

[root@hadoop102 hadoop-2.7.2]$ hadoop fs -cp /sanguo/shuguo/kongming.txt /zhuge.txt

(11) -mv: move a file within HDFS

[root@hadoop102 hadoop-2.7.2]$ hadoop fs -mv /zhuge.txt /sanguo/shuguo/

(12) -get: equivalent to -copyToLocal; downloads a file from HDFS to the local file system

[root@hadoop102 hadoop-2.7.2]$ hadoop fs -get /sanguo/shuguo/kongming.txt ./

(13) -getmerge: download several files merged into one local file, for example when the HDFS directory /user/root/test contains log.1, log.2, log.3, ...

[root@hadoop102 hadoop-2.7.2]$ hadoop fs -getmerge /user/root/test/* ./zaiyiqi.txt
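The effect of -getmerge can be pictured with a purely local analogy: several files are concatenated into a single output file. The sketch below involves no HDFS at all; the file names follow the log.1, log.2, log.3 example, and their contents are made up for illustration.

```shell
# Local analogy only: no HDFS is involved. File contents are invented.
workdir=$(mktemp -d)
printf 'first\n'  > "$workdir/log.1"
printf 'second\n' > "$workdir/log.2"
printf 'third\n'  > "$workdir/log.3"

# Concatenate the files into one, which is the effect that
# `hadoop fs -getmerge <src> <localdst>` has on files stored in HDFS.
cat "$workdir"/log.* > "$workdir/merged.txt"

merged_content=$(cat "$workdir/merged.txt")
printf '%s\n' "$merged_content"

# Remove the scratch directory.
rm -rf "$workdir"
```

The usage text earlier in this document also lists a -nl option for -getmerge, which adds a newline after each merged file.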

(14) -put: equivalent to -copyFromLocal

[root@hadoop102 hadoop-2.7.2]$ hadoop fs -put ./zaiyiqi.txt /user/root/test/

(15) -tail: show the end of a file (the last kilobyte)

[root@hadoop102 hadoop-2.7.2]$ hadoop fs -tail /sanguo/shuguo/kongming.txt

(16) -rm: delete a file; add -r to delete a directory and its contents

[root@hadoop102 hadoop-2.7.2]$ hadoop fs -rm /user/root/test/jinlian2.txt

(17) -rmdir: delete an empty directory

[root@hadoop102 hadoop-2.7.2]$ hadoop fs -mkdir /test

[root@hadoop102 hadoop-2.7.2]$ hadoop fs -rmdir /test
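The three delete variants are easy to mix up, so here is a small sketch that picks one by the kind of target. Both run and hdfs_remove are hypothetical helpers written for this note. The sketch defaults to a dry run that only prints the command, so it can be tried without a cluster; the flags come from the usage text in section 2.

```shell
# run: print the command when DRY_RUN=1 (the default here), execute it
# otherwise. Hypothetical helper so the sketch works without a cluster.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

# hdfs_remove: hypothetical wrapper that picks the delete variant by kind.
#   file  -> -rm      (a single file)
#   empty -> -rmdir   (only succeeds on an empty directory)
#   tree  -> -rm -r   (a directory and everything under it)
hdfs_remove() {
    kind=$1
    path=$2
    case $kind in
        file)  run hadoop fs -rm "$path" ;;
        empty) run hadoop fs -rmdir "$path" ;;
        tree)  run hadoop fs -rm -r "$path" ;;
        *)     echo "usage: hdfs_remove file|empty|tree <path>" >&2; return 2 ;;
    esac
}

hdfs_remove file  /user/root/test/jinlian2.txt
hdfs_remove empty /test
hdfs_remove tree  /user/root/test
```

Setting DRY_RUN=0 makes the same calls execute against the cluster, so it is worth reviewing the printed commands first.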

(18) -du: show size information for a directory (-s prints one summary line, -h uses human-readable units)

[root@hadoop102 hadoop-2.7.2]$ hadoop fs -du -s -h /user/root/test

[root@hadoop102 hadoop-2.7.2]$ hadoop fs -du -h /user/root/test
1.3 K  /user/root/test/README.txt
15  /user/root/test/jinlian.txt
1.4 K  /user/root/test/zaiyiqi.txt

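(19) -setrep: set the replication factor of a file in HDFS

[root@hadoop002 hadoop-2.7.2]# hadoop fs -setrep 2 /wgr/test/kongming.txt
Replication 2 set: /wgr/test/kongming.txt

The replication factor set here is only recorded in the NameNode's metadata. Whether that many replicas actually exist depends on the number of DataNodes. For example, the current cluster has only 3 machines, so a file can have at most 3 replicas; a requested factor of 10 would only be reached once the cluster grows to 10 nodes.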
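The rule can be written down as a one-line calculation: the number of replicas that can exist is the smaller of the requested factor and the number of DataNodes. The sketch below only illustrates that arithmetic; effective_replicas is a hypothetical helper, and it ignores node failures and replicas still being copied.

```shell
# effective_replicas: hypothetical helper illustrating the rule above.
#   $1 = replication factor requested with -setrep
#   $2 = number of DataNodes currently in the cluster
# Prints the smaller of the two values.
effective_replicas() {
    requested=$1
    datanodes=$2
    if [ "$requested" -le "$datanodes" ]; then
        echo "$requested"
    else
        echo "$datanodes"
    fi
}

effective_replicas 2 3     # prints 2: the setting used in the example above
effective_replicas 10 3    # prints 3: capped by the 3 available machines
effective_replicas 10 10   # prints 10: reached once there are 10 nodes
```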
