Reading a file into Kafka in real time with Flume

Background: records written to a log file need to be streamed into Kafka in real time.

1. The ZooKeeper service must be running. Check its status (for installing and starting ZooKeeper, see https://www.cnblogs.com/cstark/p/14573395.html):

    [root@master kafka_2.11-0.11]# /opt/soft/zookeeper-3.4.13/bin/zkServer.sh status
    ZooKeeper JMX enabled by default
    Using config: /opt/soft/zookeeper-3.4.13/bin/../conf/zoo.cfg
    Mode: follower
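When this check is scripted rather than read by eye, the `Mode:` line is the part that matters. The following is a minimal sketch, not part of the original setup: `zk_mode` is a hypothetical helper name, and it assumes the status output contains a `Mode: ...` line as shown above. It prints nothing when no such line is present, for example when the service is not running.

```shell
# Hypothetical helper: read `zkServer.sh status` output from stdin and
# print the value of the "Mode:" line (e.g. leader, follower, standalone).
# Prints nothing if the output has no "Mode:" line.
zk_mode() {
    awk -F': ' '/^Mode:/ { print $2 }'
}
```

Possible usage: `/opt/soft/zookeeper-3.4.13/bin/zkServer.sh status 2>&1 | zk_mode`, which should print `follower` for the output above.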

2. The Kafka service must be running:

    /opt/soft/kafka_2.11-0.11/bin/kafka-server-start.sh /opt/soft/kafka_2.11-0.11/config/server.properties

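3. Flume configuration file and startup

Start Flume (the paths are relative, so run this from the Flume installation directory):

    bin/flume-ng agent --conf conf --conf-file ./conf/job/file_to_hdfs.conf --name a1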

Contents of the configuration file:

    a1.sources = r1
    a1.sinks = k1
    a1.channels = c1

    # Describe/configure the source
    a1.sources.r1.type = exec
    # File to monitor. The comment sits on its own line because a trailing
    # "#" in a properties file is read as part of the value, not as a comment.
    a1.sources.r1.command = tail -F /opt/data/mall/16/mall.log

    # Describe the sink
    #a1.sinks.k1.type = logger
    a1.sinks.k1.type = org.apache.flume.sink.kafka.KafkaSink
    a1.sinks.k1.topic = test
    a1.sinks.k1.brokerList = master:9092
    a1.sinks.k1.requiredAcks = 1
    a1.sinks.k1.batchSize = 20

    # Use a channel which buffers events in memory
    a1.channels.c1.type = memory
    a1.channels.c1.capacity = 1000
    a1.channels.c1.transactionCapacity = 100

    # Bind the source and sink to the channel
    a1.sources.r1.channels = c1
    a1.sinks.k1.channel = c1

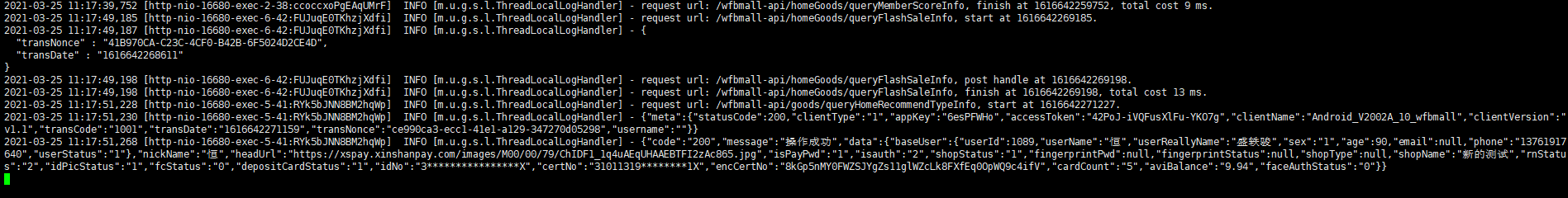

4. Check that the data has been synced into the corresponding Kafka topic:

    /opt/soft/kafka_2.11-0.11/bin/kafka-console-consumer.sh --bootstrap-server master:9092 --from-beginning --topic test
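The consumer only shows something once new lines reach the monitored file. To exercise the pipeline without waiting for real log traffic, a few marker lines can be appended by hand. The sketch below is one way to do that; `append_test_lines` and the `flume-kafka-test` marker text are hypothetical names introduced here for illustration and are not part of the original setup.

```shell
# Hypothetical helper: append COUNT numbered marker lines to LOG_FILE.
# Usage: append_test_lines LOG_FILE [COUNT]   (COUNT defaults to 5)
append_test_lines() {
    log_file=$1
    count=${2:-5}
    i=1
    while [ "$i" -le "$count" ]; do
        # Prefix each line with a timestamp so repeated runs can be told apart.
        printf '%s flume-kafka-test line %d\n' \
            "$(date '+%Y-%m-%d %H:%M:%S')" "$i" >> "$log_file"
        i=$((i + 1))
    done
}
```

With the Flume agent and the console consumer both running, calling `append_test_lines /opt/data/mall/16/mall.log 5` should make five new marker lines show up in the consumer, provided the source, channel and sink are wired as in the configuration from step 3.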
