Python Web Scraping Notes

Python Web Scraping

Environment

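In a cmd window:

python --version

pip --version

pip config set global.index-url https://pypi.tuna.tsinghua.edu.cn/simple (use the Tsinghua PyPI mirror as the default index)

pip install pymysql

pip list | find "requests" (check whether requests is installed)

python -m pip install pip -U (upgrade pip)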

VS Code

Install the Python, Kite, and Jupyter extensions

First program

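import requests

# Request Baidu's home page; r is the Response object
try:
    r = requests.get("http://www.baidu.com")
    # r.request.headers: the headers that were sent with the request
    r.encoding = 'utf-8'
    r.raise_for_status()   # raise requests.HTTPError for 4xx/5xx responses
    print(r.history)       # redirect responses that led here ([] if none)
    print(r.content[:60])  # r.content is the raw body as bytes
except requests.RequestException:
    print("error")
else:
    print(r.text)
    # Save to a txt file; `with` closes the file automatically
    with open("1.txt", "w", encoding="utf-8") as f:
        f.write(r.text)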

Chinese character-set lookup; Chinese character encodings: GB2312, BIG5, GBK, GB18030, Unicode (qqxiuzi.cn)
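The encodings listed above produce different bytes for the same characters. This is why `r.encoding` has to match the page. Below is a minimal sketch using only Python's built-in codecs. The sample text `中文` is arbitrary, and `encode_all` is a hypothetical helper name.

```python
def encode_all(text, encodings=("gb2312", "gbk", "gb18030", "big5", "utf-8")):
    """Return {encoding name: encoded bytes} for the given text."""
    return {enc: text.encode(enc) for enc in encodings}


if __name__ == "__main__":
    for enc, data in encode_all("中文").items():
        # decoding with the same encoding restores the original text
        print(f"{enc:8} {data!r} ({len(data)} bytes) -> {data.decode(enc)}")
```

Decoding GBK bytes as UTF-8, or the reverse, produces garbled text (mojibake). That is what appears when `r.encoding` is wrong.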

HTTP status codes (worth memorizing)
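Python's standard library already includes the official reason phrases, so you can print a quick reference table. Below is a minimal sketch. `COMMON` is just a selection of codes often seen while scraping, and `describe` is a hypothetical helper name. For reference, `r.raise_for_status()` in requests raises an exception for any 4xx or 5xx code.

```python
from http import HTTPStatus

# A selection of codes frequently met while scraping (not exhaustive)
COMMON = (200, 301, 302, 304, 400, 403, 404, 500, 502, 503)

CLASSES = {1: "informational", 2: "success", 3: "redirection",
           4: "client error", 5: "server error"}


def describe(code):
    """Return a one-line description such as '404 Not Found (4xx: client error)'."""
    return f"{code} {HTTPStatus(code).phrase} ({code // 100}xx: {CLASSES[code // 100]})"


if __name__ == "__main__":
    for code in COMMON:
        print(describe(code))
```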

Where imported packages are found

In the site-packages folder under Lib in the Python installation directory
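You do not have to browse for that folder; Python can report it. The sketch below prints the relevant paths for whichever interpreter runs it. On a Windows install this is typically `<install dir>\Lib\site-packages`; inside a virtual environment it points into the venv instead. `site_packages_dir` and `where_is` are hypothetical helper names.

```python
import importlib.util
import sys
import sysconfig


def site_packages_dir():
    """Directory for pure-Python third-party packages of the running interpreter."""
    return sysconfig.get_paths()["purelib"]


def where_is(module_name):
    """Path of the file a module would be imported from, or None if it is not installed."""
    spec = importlib.util.find_spec(module_name)
    return spec.origin if spec else None


if __name__ == "__main__":
    print(sys.executable)         # the python executable actually running this script
    print(site_packages_dir())    # typically ...\Lib\site-packages on Windows
    print(where_is("requests"))   # None if requests is not installed
    for path in sys.path:         # every directory searched by import, in order
        print(path)
```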

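*args and **kwargs parameters

One * : extra positional arguments are collected into a tuple

Two ** : extra keyword arguments are collected into a dict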
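This minimal sketch shows both directions: collecting arguments in a function definition, and unpacking a tuple or dict at a call site. `requests.get(url, params=None, **kwargs)` uses the same mechanism to pass options such as `headers` or `timeout` through. `show` and `get_like` are hypothetical names; `get_like` is an offline stand-in for `requests.get`.

```python
def show(*args, **kwargs):
    """args arrives as a tuple, kwargs as a dict."""
    return args, kwargs


def get_like(url, params=None, timeout=None):
    """Hypothetical stand-in for requests.get, so the example runs offline."""
    return url, params, timeout


if __name__ == "__main__":
    print(show(1, 2, a=3))   # ((1, 2), {'a': 3})
    options = {"params": {"wd": "python"}, "timeout": 3}
    print(get_like("http://www.baidu.com", **options))  # ** unpacks the dict into keyword arguments
    args = ("http://www.baidu.com",)
    print(get_like(*args))   # * unpacks the tuple into positional arguments
```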

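XHR

XMLHttpRequest: a request the page makes in the background through JavaScript to load data. The XHR filter in the browser DevTools Network panel lists these requests.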
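Once an XHR request has been found in DevTools, you can often call its URL directly and read the JSON it returns. Below is a sketch under assumptions:
- The endpoint `https://example.com/api/news` and its `page` parameter are made up.
- `build_xhr_request` is a hypothetical helper.
- The `X-Requested-With` header is the one jQuery-style AJAX calls send; some servers check for it, others ignore it.

The request is only prepared, not sent, so the example runs offline.

```python
import requests


def build_xhr_request(url, params=None):
    """Prepare (but do not send) a GET request resembling a browser XHR call."""
    headers = {
        "X-Requested-With": "XMLHttpRequest",  # sent by jQuery-style AJAX calls
        "Accept": "application/json",
    }
    return requests.Request("GET", url, params=params, headers=headers).prepare()


if __name__ == "__main__":
    req = build_xhr_request("https://example.com/api/news", {"page": 2})  # hypothetical endpoint
    print(req.url)  # query string built from params
    # To actually send it and parse the JSON body:
    # with requests.Session() as s:
    #     r = s.send(req, timeout=3)
    #     print(r.json())
```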

Logging in to the campus network

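import requests

# Form fields as submitted by the browser (copied from the DevTools Network panel)
payload = {"username": "11",
           "password": "xN94pkdfNwM=",  # value copied as the browser submitted it
           "authCode": "",
           "lt": "abcd1234",            # CAS login ticket, normally a one-time value from the login page
           "execution": "e3s2",         # also taken from the login page
           "_eventId": "submit",
           "isQrSubmit": "false",
           "qrValue": "",
           "isMobileLogin": "false"}

url = "http://a.cqie.edu.cn/cas/login?service=http://i.cqie.edu.cn/portal_main/toPortalPage"

r = requests.post(url, data=payload, timeout=3)
print(r.status_code)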
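Since `lt` and `execution` usually change on each visit, hard-coding them only works until they expire. A common pattern has three steps:
1. Fetch the login page with a `requests.Session`, which keeps cookies between requests.
2. Read the hidden form fields from that page.
3. Post them back together with the credentials.

This is a sketch under assumptions. `hidden_fields` and `cas_login` are hypothetical helper names, and the form is assumed to expose its tokens as `<input type="hidden">` elements. The `password` value in the payload above does not look like plain text. If the page transforms the password in JavaScript before submitting, that step would have to be reproduced separately.

```python
import requests
from lxml import etree


def hidden_fields(html):
    """Collect name -> value for every hidden <input> inside a <form>."""
    tree = etree.HTML(html)
    return {inp.get("name"): inp.get("value", "")
            for inp in tree.xpath('//form//input[@type="hidden"]')
            if inp.get("name")}


def cas_login(url, username, password):
    """Hypothetical helper: fetch the login page, reuse its hidden fields, then POST."""
    session = requests.Session()           # keeps cookies between the GET and the POST
    page = session.get(url, timeout=3)
    data = hidden_fields(page.text)        # e.g. lt, execution, _eventId on a CAS page
    data.update({"username": username, "password": password})
    r = session.post(url, data=data, timeout=3)
    return session, r                      # reuse `session` for pages behind the login
```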

Lab 2

1

import requests
from lxml import etree


def down_html(url):  # download the page content
    try:
        r = requests.get(url)  # send the request
        r.raise_for_status()  # raise an exception for 4xx/5xx responses
        r.encoding = r.apparent_encoding  # use the encoding detected from the content
        return r.text
    except Exception:
        print("download page error")
        return None


# parse the page
def parse_html(html):
    data = etree.HTML(html)
    titles = data.xpath('//div[@id="u1"]/a/text()')  # link texts
    urls = data.xpath('//div[@id="u1"]/a/@href')     # link targets
    # pair each link text with its URL
    return dict(zip(titles, urls))


if __name__ == '__main__':
    url = "http://www.baidu.com"
    html = down_html(url)
    if html:
        for k, v in parse_html(html).items():
            print(k + "-->" + v)
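`/a/text()` returns only the direct text nodes of each link. A link whose text sits inside a nested tag, or that has no text, can leave the text list and the URL list with different lengths, which shifts the pairs. Iterating over the `<a>` elements themselves keeps each text with its own `href`. Below is a minimal sketch: `parse_links` is a hypothetical name, and the `#u1` container is kept from the code above.

```python
from lxml import etree


def parse_links(html, container_xpath='//div[@id="u1"]'):
    """Return [(text, href), ...] with one tuple per <a>, so texts and URLs stay aligned."""
    tree = etree.HTML(html)
    links = []
    for a in tree.xpath(container_xpath + "/a"):
        text = a.xpath("string()").strip()  # all text inside the <a>, including nested tags
        href = a.get("href", "")            # empty string if the link has no href
        links.append((text, href))
    return links


if __name__ == "__main__":
    sample = '<div id="u1"><a href="/news">新闻</a><a href="/map"><span>地图</span></a></div>'
    for text, href in parse_links(sample):
        print(text + "-->" + href)
```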

2

import requests
import csv
from lxml import etree


# download the page
def download_page(url):
    try:
        r = requests.get(url)  # send the request
        r.raise_for_status()  # raise an exception for 4xx/5xx responses
        r.encoding = r.apparent_encoding  # use the encoding detected from the content
        return r.text  # the page's text content
    except Exception as e:
        print("download page error:", e)
        return None


# parse the page
def parse_html(html):
    data = etree.HTML(html)
    books = []
    for book in data.xpath('//*[@id="tag-book"]/div/ul/li'):
        name = book.xpath("div[2]/h4/a/text()")[0].strip()  # book title
        author = book.xpath("div[2]/div/span/text()")[0].strip()  # author
        price = book.xpath("div[2]/span/span/text()")[0].strip()  # price
        details_url = book.xpath("div[2]/h4/a/@href")[0].strip()  # detail page URL
        books.append([name, author, price, details_url])
    return books


# save the data
def save_data(file, data):  # file: output path; data: list of rows
    # newline="" prevents blank lines between rows on Windows;
    # utf-8-sig writes a BOM so Excel recognizes the Chinese text
    with open(file, "w", newline="", encoding="utf-8-sig") as f:
        writer = csv.writer(f)
        writer.writerows(data)
    print("data saved successfully")


if __name__ == '__main__':
    url = "https://www.ryjiaoyu.com/tag/details/7"
    html = download_page(url)
    if html:
        save_data("book4.csv", parse_html(html))
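`xpath(...)[0]` raises `IndexError` as soon as one book is missing a field (for example, no price), and that aborts the whole page. A small helper that falls back to a default is one way to guard against this. `first` is a hypothetical helper name, and the XPath expressions in the comment are the ones from `parse_html` above.

```python
from lxml import etree


def first(node, xpath, default=""):
    """Return the first XPath match as a stripped string, or `default` if nothing matches."""
    result = node.xpath(xpath)
    return str(result[0]).strip() if result else default


# Inside parse_html, each field could then be read without risking IndexError:
#     name = first(book, "div[2]/h4/a/text()")
#     price = first(book, "div[2]/span/span/text()", default="N/A")

if __name__ == "__main__":
    doc = etree.HTML('<li><div></div><div><h4><a href="/b/1"> Book A </a></h4></div></li>')
    print(first(doc, "//li/div[2]/h4/a/text()"))              # Book A
    print(first(doc, "//li/div[2]/span/span/text()", "N/A"))  # N/A
```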

Scraping static web pages

The robots protocol (robots.txt)

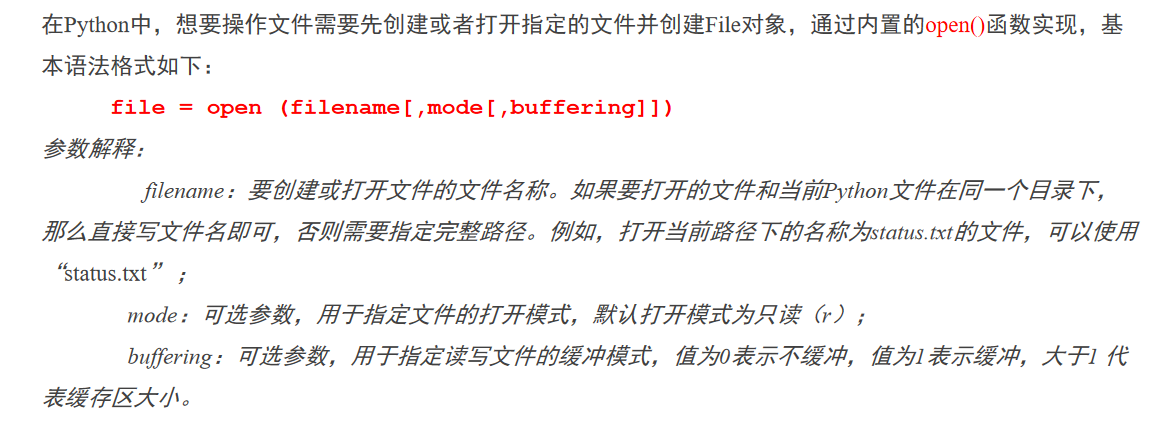
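Python's standard `urllib.robotparser` can check a URL against robots.txt rules before crawling it. Below is a minimal sketch. The rules are passed in as lines so it runs offline, `allowed` is a hypothetical helper name, and the example rules are made up.

```python
from urllib.robotparser import RobotFileParser


def allowed(robots_lines, url, agent="*"):
    """Return True if `agent` may fetch `url` under the given robots.txt lines."""
    rp = RobotFileParser()
    rp.parse(robots_lines)
    return rp.can_fetch(agent, url)


if __name__ == "__main__":
    rules = ["User-agent: *", "Disallow: /private/"]  # made-up example rules
    print(allowed(rules, "https://example.com/private/a.html"))  # False
    print(allowed(rules, "https://example.com/news/"))           # True

    # Against a real site, the file is downloaded instead:
    # rp = RobotFileParser("https://www.baidu.com/robots.txt")
    # rp.read()
    # print(rp.can_fetch("*", "https://www.baidu.com/s?wd=python"))
```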

File storage

Storing as txt

Storing as csv

import requests
from lxml import etree


# download the page
def download_html(url):
    try:
        r = requests.get(url)
        r.raise_for_status()
        r.encoding = r.apparent_encoding
        return r.text
    except Exception as e:
        print(e)
        return None


# parse the page: news titles and their dates
def parse_html(html):
    data = etree.HTML(html)
    titles = data.xpath('//*[@id="colR"]/div[2]/dl/dd/ul/li/a/text()')
    dates = data.xpath('//*[@id="colR"]/div[2]/dl/dd/ul/li/span/text()')
    return list(zip(titles, dates))


# save the data, one "title,date" line per item
def save_data(data):
    with open("news1.txt", "w", encoding="utf-8") as f:
        for item in data:
            f.write(",".join(item) + "\n")
    print("news titles saved successfully")


if __name__ == '__main__':
    url = "http://www.cqie.edu.cn/html/2/xydt/"
    html = download_html(url)
    if html:
        save_data(parse_html(html))
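Joining fields with `","` breaks as soon as a title itself contains a comma. The `csv` module quotes such fields automatically. Below is a minimal sketch that writes the same `(title, date)` pairs to a CSV file. `save_csv`, `to_csv_string`, the header names, `news1.csv`, and the sample row are assumptions.

```python
import csv
import io


def save_csv(rows, f, header=("title", "date")):
    """Write a header row and then the data rows to an already-open text file."""
    writer = csv.writer(f)
    writer.writerow(header)
    writer.writerows(rows)


def to_csv_string(rows):
    """Same output as save_csv, returned as a string (handy for checking)."""
    buf = io.StringIO()
    save_csv(rows, buf)
    return buf.getvalue()


if __name__ == "__main__":
    rows = [("Title, with a comma", "2021-10-01")]  # hypothetical scraped data
    # newline="" avoids blank lines between rows on Windows;
    # utf-8-sig writes a BOM so Excel recognizes the file as UTF-8
    with open("news1.csv", "w", newline="", encoding="utf-8-sig") as f:
        save_csv(rows, f)
    print(to_csv_string(rows))
```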

PyMySQL

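Four steps:

- Create a database connection object, con

- Get a cursor object, cursor

- Execute the SQL statement

- Commit the transaction and close the connection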

浙公网安备 33010602011771号
