Web Scraping with requests


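The Python standard library offers urllib, urllib2, and httplib for making HTTP requests (urllib2 and httplib are the Python 2 names; Python 3 folds them into urllib.request and http.client), but their APIs are clumsy, which is why the Requests library appeared. Requests is written in Python on top of urllib3.

Requests is an HTTP library for Python released under the Apache2 License. It wraps the lower-level modules in a much higher-level API, which makes network requests simple for Python developers and lets you reproduce most of the HTTP operations a browser performs.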

Example

    import requests

    response = requests.get("https://www.baidu.com")
    print(type(response))
    print(response.status_code)
    print(type(response.text))
    print(response.text)
    print(response.cookies)
    print(response.content)
    print(response.content.decode("utf-8"))

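On many sites, reading response.text directly produces garbled characters. In that case use response.content, which returns the raw bytes, and decode it as UTF-8 yourself with decode(). That avoids the garbled output from response.text.

After a request is sent, Requests makes an educated guess about the response encoding based on the HTTP headers. When you access response.text, Requests uses that guessed encoding. You can find out which encoding Requests chose, and change it, through the response.encoding attribute. For example: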

    import requests

    response = requests.get("http://www.baidu.com")
    response.encoding = "utf-8"
    print(response.text)

Both response.content.decode("utf-8") and response.encoding = "utf-8" avoid garbled text, as long as the page is actually encoded in UTF-8.
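The garbling comes from decoding bytes with the wrong codec. The sketch below reproduces it offline with a made-up UTF-8 body (the sample string is an assumption, not real Baidu output). ISO-8859-1 is used as the wrong codec because, to my knowledge, that is what Requests falls back to for text responses whose headers declare no charset.

```python
# Hypothetical response body: UTF-8 bytes, as response.content would hold them.
raw = "百度一下,你就知道".encode("utf-8")

# Decoding with the wrong codec yields mojibake instead of an error.
garbled = raw.decode("iso-8859-1")

# Decoding with the right codec recovers the text,
# which is what response.content.decode("utf-8") does.
text = raw.decode("utf-8")

print(garbled == text)  # False
print(text)
```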

Making requests

GET requests

    import requests

    response = requests.get('http://httpbin.org/get')
    print(response.text)

GET requests with parameters

    import requests

    response = requests.get("http://httpbin.org/get?name=charon&age=22")
    print(response.text)

To pass data in the URL query string you would normally write it out by hand, as in httpbin.org/get?key=val. Requests also accepts these parameters as a dictionary through the params keyword argument, for example:

    import requests

    data = {
        "name": "charon",
        "age": 22
    }
    response = requests.get("http://httpbin.org/get", params=data)
    print(response.url)
    print(response.text)

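Both approaches give the same result: passing a dictionary through params builds the URL for you.

Note: with the dictionary approach, any key whose value is None is not added to the URL.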
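The None rule can be checked without sending anything, by preparing a request and inspecting its URL. This is a small sketch; the "city" key is a hypothetical extra parameter added only to show that it is skipped.

```python
import requests

# "city" is a hypothetical extra key, present only to show that None is dropped.
params = {"name": "charon", "age": 22, "city": None}

# prepare() builds the final request without sending it over the network.
prepared = requests.Request("GET", "http://httpbin.org/get", params=params).prepare()

print(prepared.url)
```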

Parsing JSON

    import json

    import requests

    response = requests.get("http://httpbin.org/get")
    print(type(response.text))
    print(response.json())            # parsed by Requests
    print(json.loads(response.text))  # same result as the line above
    print(type(response.json()))

The output shows that the json() method built into Requests essentially calls json.loads(), so the two results are identical.
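Because json() behaves like json.loads() on the body, it also fails when the body is not JSON, for instance when a server returns an HTML error page. Here is a minimal offline sketch of a defensive parse using only the standard library; parse_body and the sample strings are hypothetical stand-ins for response.text.

```python
import json


def parse_body(text):
    """Return the decoded JSON value, or None if text is not valid JSON."""
    try:
        return json.loads(text)
    except json.JSONDecodeError:
        return None


# Sample bodies standing in for response.text.
print(parse_body('{"args": {"name": "charon"}}'))
print(parse_body("<html>502 Bad Gateway</html>"))
```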

Getting binary data

    import requests

    response = requests.get("https://github.com/favicon.ico")
    print(type(response.text), type(response.content))
    print(response.text)
    print(response.content)

Saving binary data

    import requests

    response = requests.get("https://github.com/favicon.ico")
    # The with block closes the file, so no explicit close() is needed.
    with open('favicon.ico', 'wb') as f:
        f.write(response.content)
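For a small icon, response.content is fine. For large files it may be better to stream the body in chunks so it never sits in memory all at once. The sketch below assumes the stream=True option and iter_content(); write_chunks and download are hypothetical helper names.

```python
import requests


def write_chunks(chunks, path):
    """Write an iterable of byte chunks to path and return the byte count."""
    total = 0
    with open(path, "wb") as f:
        for chunk in chunks:
            if chunk:  # skip empty chunks
                f.write(chunk)
                total += len(chunk)
    return total


def download(url, path, chunk_size=8192):
    """Stream url to path without holding the whole body in memory."""
    with requests.get(url, stream=True, timeout=10) as response:
        response.raise_for_status()
        return write_chunks(response.iter_content(chunk_size=chunk_size), path)
```

A call such as download("https://github.com/favicon.ico", "favicon.ico") would then save the same file as the example above.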

Adding headers

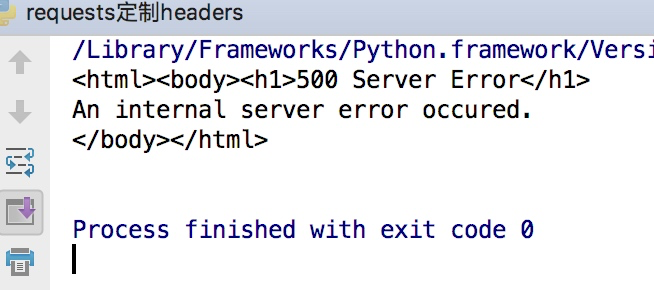

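Headers can be customized. For example, a plain Requests call to Zhihu is rejected by default; the request only succeeds once suitable request headers are added.

    import requests

    response = requests.get("https://www.zhihu.com")
    print(response.text)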

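This request fails with an error instead of returning the page.

Zhihu expects browser-like header information. Opening chrome://version in Google Chrome shows the browser's user agent string; adding that string to the request headers makes Zhihu accessible.

    import requests

    headers = {
        'User-Agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_11_4) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/52.0.2743.116 Safari/537.36'
    }
    response = requests.get("https://www.zhihu.com/explore", headers=headers)
    print(response.text)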
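It helps to see what Requests sends when no User-Agent is given, since that default value is what some sites reject. The sketch below runs offline; the shortened User-Agent string is a placeholder, not a recommended value.

```python
import requests

# The User-Agent Requests sends when none is supplied.
print(requests.utils.default_user_agent())  # starts with "python-requests/"

headers = {"User-Agent": "Mozilla/5.0 (X11; Linux x86_64)"}  # placeholder value
prepared = requests.Request(
    "GET", "https://www.zhihu.com/explore", headers=headers
).prepare()

# The header as it would appear on the outgoing request.
print(prepared.headers["User-Agent"])
```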

POST requests

To send a POST request, pass a data argument. It can be built from a dictionary, which makes POST requests very convenient.

    import requests

    data = {'name': 'charon', 'age': '22'}
    response = requests.post("http://httpbin.org/post", data=data)
    print(response.text)

Everything else works much as it does for GET requests.

    import requests

    data = {'name': 'charon', 'age': '22'}
    headers = {
        'User-Agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_11_4) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/52.0.2743.116 Safari/537.36'
    }
    response = requests.post("http://httpbin.org/post", data=data, headers=headers)
    print(response.json())
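What data= actually puts on the wire can be inspected by preparing the request instead of sending it. The sketch also shows the json= keyword, which is not covered in the text above and is included here only for contrast.

```python
import json

import requests

payload = {"name": "charon", "age": "22"}
url = "http://httpbin.org/post"

# data= form-encodes the dictionary.
form = requests.Request("POST", url, data=payload).prepare()
print(form.headers["Content-Type"], form.body)

# json= serializes the dictionary as a JSON document instead.
as_json = requests.Request("POST", url, json=payload).prepare()
print(as_json.headers["Content-Type"], json.loads(as_json.body))
```

Which one to use depends on what the server expects: HTML-style form endpoints usually want data=, while many JSON APIs want json=.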

Other request methods

    import requests

    requests.post('http://httpbin.org/post')
    requests.put('http://httpbin.org/put')
    requests.delete('http://httpbin.org/delete')
    requests.head('http://httpbin.org/get')
    requests.options('http://httpbin.org/get')

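Responses

Response attributes

    import requests

    response = requests.get('http://www.jianshu.com')
    print(type(response.status_code), response.status_code)
    print(type(response.headers), response.headers)
    print(type(response.cookies), response.cookies)
    print(type(response.url), response.url)
    print(type(response.history), response.history)

In the output, status_code is an int, headers is a case-insensitive dictionary, cookies is a RequestsCookieJar, url is a str, and history is a list of the responses from any redirects that were followed.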

Checking the status code

    import requests

    response = requests.get('http://www.jianshu.com/hello.html')
    if response.status_code == requests.codes.not_found:
        print('404 Not Found')
    else:
        exit()

Alternatively, compare against the numeric status code directly:

    import requests

    response = requests.get('http://www.jianshu.com')
    if response.status_code == 200:
        print('Request Successfully')
    else:
        exit()
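Instead of comparing codes by hand, a response can also raise an exception for any 4xx or 5xx status through raise_for_status(). The sketch below runs offline by building a Response object by hand to stand in for a real 404 reply; that hand-built object is an illustration device, not how responses are normally obtained.

```python
import requests
from requests.exceptions import HTTPError

# Named constants are plain integers.
print(requests.codes.ok, requests.codes.not_found)  # 200 404

# A hand-built Response stands in for a real 404 reply (no network needed).
response = requests.Response()
response.status_code = 404
response.url = "http://www.jianshu.com/hello.html"

print(response.ok)  # False for any 4xx or 5xx status

try:
    response.raise_for_status()
    outcome = "success"
except HTTPError as exc:
    outcome = "failed: {}".format(exc)

print(outcome)
```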

Status codes and their names

    _codes = {
        100: ('continue',),
        101: ('switching_protocols',),
        102: ('processing',),
        103: ('checkpoint',),
        122: ('uri_too_long', 'request_uri_too_long'),
        200: ('ok', 'okay', 'all_ok', 'all_okay', 'all_good', '\\o/', '✓'),
        201: ('created',),
        202: ('accepted',),
        203: ('non_authoritative_info', 'non_authoritative_information'),
        204: ('no_content',),
        205: ('reset_content', 'reset'),
        206: ('partial_content', 'partial'),
        207: ('multi_status', 'multiple_status', 'multi_stati', 'multiple_stati'),
        208: ('already_reported',),
        226: ('im_used',),

        # Redirection.
        300: ('multiple_choices',),
        301: ('moved_permanently', 'moved', '\\o-'),
        302: ('found',),
        303: ('see_other', 'other'),
        304: ('not_modified',),
        305: ('use_proxy',),
        306: ('switch_proxy',),
        307: ('temporary_redirect', 'temporary_moved', 'temporary'),
        308: ('permanent_redirect',
              'resume_incomplete', 'resume',),  # These 2 to be removed in 3.0

        # Client Error.
        400: ('bad_request', 'bad'),
        401: ('unauthorized',),
        402: ('payment_required', 'payment'),
        403: ('forbidden',),
        404: ('not_found', '-o-'),
        405: ('method_not_allowed', 'not_allowed'),
        406: ('not_acceptable',),
        407: ('proxy_authentication_required', 'proxy_auth', 'proxy_authentication'),
        408: ('request_timeout', 'timeout'),
        409: ('conflict',),
        410: ('gone',),
        411: ('length_required',),
        412: ('precondition_failed', 'precondition'),
        413: ('request_entity_too_large',),
        414: ('request_uri_too_large',),
        415: ('unsupported_media_type', 'unsupported_media', 'media_type'),
        416: ('requested_range_not_satisfiable', 'requested_range', 'range_not_satisfiable'),
        417: ('expectation_failed',),
        418: ('im_a_teapot', 'teapot', 'i_am_a_teapot'),
        421: ('misdirected_request',),
        422: ('unprocessable_entity', 'unprocessable'),
        423: ('locked',),
        424: ('failed_dependency', 'dependency'),
        425: ('unordered_collection', 'unordered'),
        426: ('upgrade_required', 'upgrade'),
        428: ('precondition_required', 'precondition'),
        429: ('too_many_requests', 'too_many'),
        431: ('header_fields_too_large', 'fields_too_large'),
        444: ('no_response', 'none'),
        449: ('retry_with', 'retry'),
        450: ('blocked_by_windows_parental_controls', 'parental_controls'),
        451: ('unavailable_for_legal_reasons', 'legal_reasons'),
        499: ('client_closed_request',),

        # Server Error.
        500: ('internal_server_error', 'server_error', '/o\\', '✗'),
        501: ('not_implemented',),
        502: ('bad_gateway',),
        503: ('service_unavailable', 'unavailable'),
        504: ('gateway_timeout',),
        505: ('http_version_not_supported', 'http_version'),
        506: ('variant_also_negotiates',),
        507: ('insufficient_storage',),
        509: ('bandwidth_limit_exceeded', 'bandwidth'),
        510: ('not_extended',),
        511: ('network_authentication_required', 'network_auth', 'network_authentication'),
    }

Advanced usage

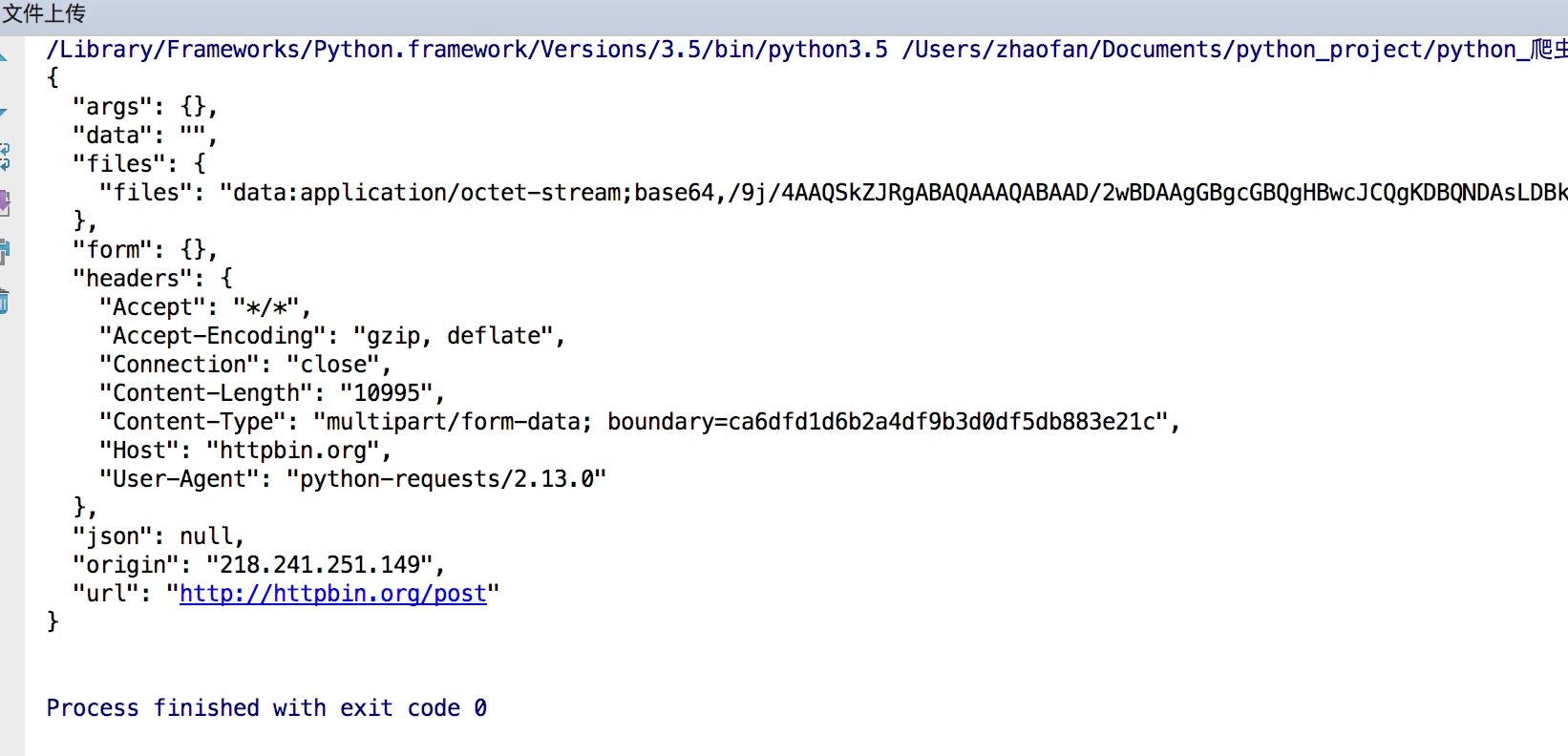

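File upload

Build a dictionary and pass it through the files argument.

    import requests

    # Open the file in binary mode and keep it open until the upload finishes.
    with open('favicon.ico', 'rb') as f:
        files = {'file': f}
        response = requests.post("http://httpbin.org/post", files=files)
    print(response.text)

httpbin echoes the uploaded file back in the files field of its JSON response.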
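The files dictionary also accepts a tuple per entry, which lets you set the filename and content type explicitly. The sketch below prepares such an upload offline; the in-memory bytes are placeholder data standing in for a real favicon.ico.

```python
import io

import requests

# In-memory stand-in for a real file; the bytes are placeholder data.
fake_icon = io.BytesIO(b"\x00\x00\x01\x00")

# Tuple form: (filename, file object, content type).
files = {"file": ("favicon.ico", fake_icon, "image/x-icon")}

prepared = requests.Request("POST", "http://httpbin.org/post", files=files).prepare()

print(prepared.headers["Content-Type"])  # multipart/form-data; boundary=...
print(b'filename="favicon.ico"' in prepared.body)
```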

Getting cookies

    import requests

    response = requests.get("https://www.baidu.com")
    print(response.cookies)
    for key, value in response.cookies.items():
        print(key + '=' + value)

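Session persistence

Simulating a login

One use of cookies is to simulate a login by keeping a session alive across requests.

    import requests

    s = requests.Session()
    s.get("http://httpbin.org/cookies/set/number/123456")
    response = s.get("http://httpbin.org/cookies")
    print(response.text)

A Session object is created and both requests go through it, so the cookie set by the first request is sent along with the second.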
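How a session carries state can be seen offline by preparing a request through it. In this sketch the cookie is stored by hand to mirror what the /cookies/set call does on a live request, and the User-Agent value is a hypothetical name.

```python
import requests

with requests.Session() as session:
    # Store a cookie by hand, mirroring what /cookies/set does on a live call.
    session.cookies.set("number", "123456")
    # Headers set here are sent with every request made through the session.
    session.headers.update({"User-Agent": "my-crawler/0.1"})  # hypothetical value

    request = requests.Request("GET", "http://httpbin.org/cookies")
    prepared = session.prepare_request(request)

print(prepared.headers["Cookie"])
print(prepared.headers["User-Agent"])
```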

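Certificate verification

Many sites are now served over HTTPS, which brings certificate verification into play.

    import requests

    response = requests.get("https://www.12306.cn")
    print(response.status_code)

The certificate that 12306 served by default was not trusted, so the request fails with an SSLError.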

Passing verify=False avoids this error.

The page is then reachable, but Requests prints a warning:

InsecureRequestWarning: Unverified HTTPS request is being made. Adding certificate verification is strongly advised. See: https://urllib3.readthedocs.io/en/latest/advanced-usage.html#ssl-warnings InsecureRequestWarning)

To silence the warning:

    import requests
    import urllib3

    urllib3.disable_warnings()
    response = requests.get('https://www.12306.cn', verify=False)
    print(response.status_code)
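Turning verification off removes the protection HTTPS provides, so where possible it may be better to keep it on and point verify at a CA bundle that trusts the site. The sketch below assumes you have such a PEM file; fetch_status and its ca_bundle parameter are hypothetical names.

```python
import requests

# Path of the CA bundle Requests verifies against by default.
print(requests.certs.where())


def fetch_status(url, ca_bundle=None):
    """GET url, verifying the server against ca_bundle if one is given.

    ca_bundle is a path to a PEM file; with None, the default bundle is used.
    """
    verify = ca_bundle if ca_bundle is not None else True
    response = requests.get(url, verify=verify, timeout=10)
    return response.status_code
```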

Proxy settings

    import requests

    proxies = {
        "http": "http://127.0.0.1:9999",
        "https": "http://127.0.0.1:8888"
    }
    response = requests.get("https://www.baidu.com", proxies=proxies)
    print(response.text)

If the proxy requires a username and password, change the dictionary to:

    proxies = {
        "http": "http://user:password@127.0.0.1:9999"
    }

If your proxy uses SOCKS, install the extra dependency first with pip install "requests[socks]".

    import requests

    proxies = {
        'http': 'socks5://127.0.0.1:9742',
        'https': 'socks5://127.0.0.1:9742'
    }
    response = requests.get("https://www.taobao.com", proxies=proxies)
    print(response.status_code)
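The keys of the proxies dictionary are matched against the scheme of the target URL, not of the proxy. The sketch below illustrates that offline with select_proxy, a helper in requests.utils; I am assuming it behaves the same across recent versions, and it is not something you normally need to call yourself.

```python
import requests

proxies = {
    "http": "http://127.0.0.1:9999",
    "https": "http://127.0.0.1:8888",
}

# select_proxy only looks up the matching entry; no request is sent here.
for url in ("http://httpbin.org/get", "https://www.baidu.com"):
    print(url, "->", requests.utils.select_proxy(url, proxies))
```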

Timeout settings

The timeout argument sets how long to wait, in seconds.

    import requests
    from requests.exceptions import ReadTimeout

    try:
        response = requests.get("http://httpbin.org/get", timeout=0.5)
        print(response.status_code)
    except ReadTimeout:
        print('Timeout')
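Catching only ReadTimeout misses the case where the connection itself takes too long, which raises ConnectTimeout. A sketch that covers both by catching their shared base class and that sets the two phases separately with a (connect, read) tuple; status_or_none and the default values are hypothetical.

```python
import requests
from requests.exceptions import ConnectTimeout, ReadTimeout, Timeout


def status_or_none(url, connect_timeout=3.05, read_timeout=10):
    """Return the status code, or None if either timeout expires."""
    try:
        # A (connect, read) tuple sets the two phases separately.
        response = requests.get(url, timeout=(connect_timeout, read_timeout))
    except Timeout:
        # Timeout is the common base of ConnectTimeout and ReadTimeout.
        return None
    return response.status_code


print(issubclass(ConnectTimeout, Timeout), issubclass(ReadTimeout, Timeout))  # True True
```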

Authentication

For sites that require authentication, use the requests.auth module.

    import requests
    from requests.auth import HTTPBasicAuth

    r = requests.get('http://120.27.34.24:9001', auth=HTTPBasicAuth('user', '123'))
    print(r.status_code)

Alternatively, pass a tuple, which Requests treats as HTTP Basic authentication:

    import requests

    r = requests.get('http://120.27.34.24:9001', auth=('user', '123'))
    print(r.status_code)
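Both forms end up adding the same Authorization header, which can be confirmed offline by preparing the request. The httpbin basic-auth URL below is an assumption chosen for illustration, not the server used above.

```python
import base64

import requests

prepared = requests.Request(
    "GET", "http://httpbin.org/basic-auth/user/123", auth=("user", "123")
).prepare()

# Basic auth is just "user:password", base64-encoded, in one header.
expected = "Basic " + base64.b64encode(b"user:123").decode("ascii")

print(prepared.headers["Authorization"])
print(prepared.headers["Authorization"] == expected)  # True
```

Since base64 is an encoding rather than encryption, Basic authentication is only safe over HTTPS.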

Exception handling

The Requests exceptions are documented in detail here:

http://www.python-requests.org/en/master/api/#exceptions

All of the exceptions live in requests.exceptions.

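The source code shows that:

RequestException inherits from IOError.

HTTPError, ConnectionError, and Timeout inherit from RequestException.

ProxyError and SSLError inherit from ConnectionError.

ReadTimeout inherits from Timeout.

Details of the inheritance relationships for the common exceptions:

http://cn.python-requests.org/zh_CN/latest/_modules/requests/exceptions.html#RequestException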

Example:

    import requests
    from requests.exceptions import ReadTimeout, ConnectionError, RequestException

    try:
        response = requests.get("http://httpbin.org/get", timeout=0.5)
        print(response.status_code)
    except ReadTimeout:
        print('Timeout')
    except ConnectionError:
        print('Connection error')
    except RequestException:
        print('Error')

Testing shows that the timeout exception is caught first; with the network disconnected, ConnectionError is caught instead; and anything the earlier clauses miss is still caught by RequestException.
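The order of the except clauses works because each one is more specific than the next. The inheritance relationships listed earlier can be verified directly, with no network access:

```python
from requests.exceptions import (
    ConnectionError,
    HTTPError,
    ProxyError,
    ReadTimeout,
    RequestException,
    SSLError,
    Timeout,
)

# Each pair is (subclass, parent) as described in the text.
pairs = [
    (RequestException, IOError),
    (HTTPError, RequestException),
    (ConnectionError, RequestException),
    (Timeout, RequestException),
    (ProxyError, ConnectionError),
    (SSLError, ConnectionError),
    (ReadTimeout, Timeout),
]

for child, parent in pairs:
    print(child.__name__, "->", parent.__name__, issubclass(child, parent))
```

If RequestException were listed first, it would swallow every other case, so the broadest class belongs last.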
