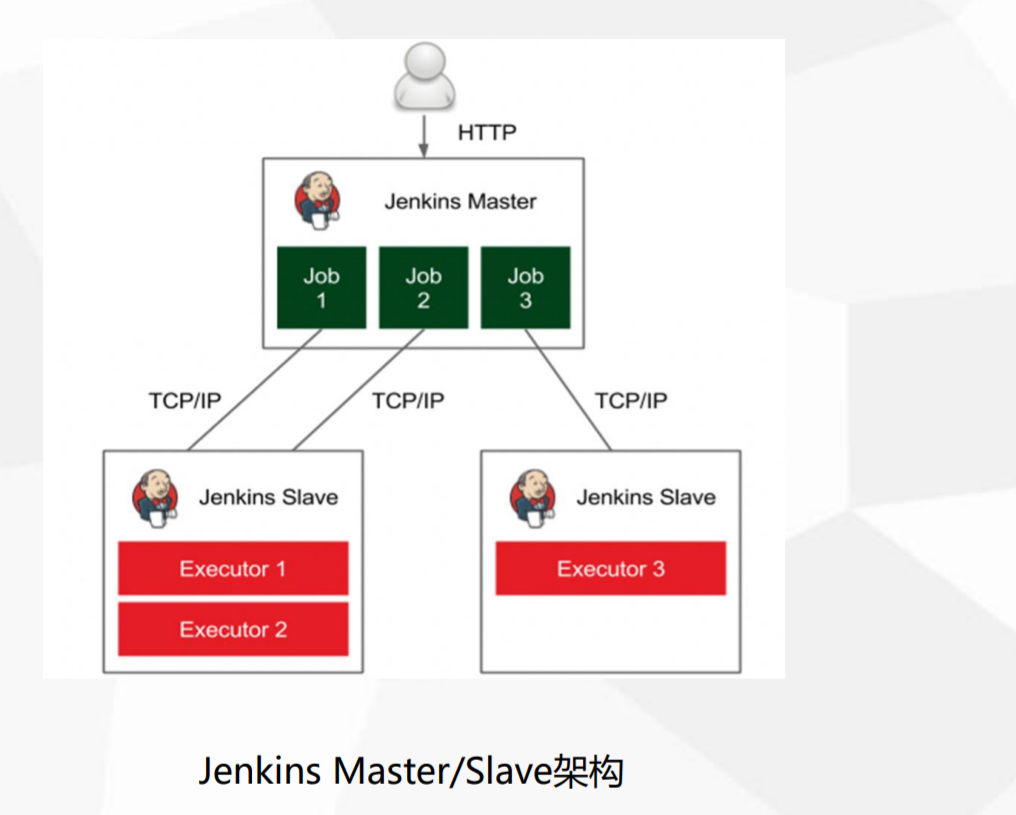

基于K8S构建企业级Jenkins CI/CD平台(master/slave架构)

搭建平台目的:

k8s中搭建jenkins master/slave架构,解决单jenkins执行效率低,资源不足等问题(jenkins master 调度任务到 slave上,并发执行任务,提升任务执行的效率)

CI/CD环境特点:

Slave弹性伸缩

基于镜像隔离构建环境

流水线发布,易维护

一、环境准备

| 服务名 | 地址 | 版本 |

| k8s-master | 10.48.14.100 | v1.22.3 |

| k8s-node1 | 10.48.14.50 | v1.22.3 |

| k8s-node2 | 10.48.14.51 | v1.22.3 |

| gogs代码仓库 | 10.48.14.50:30080 | |

| harbor镜像仓库 | 10.48.14.50:8888 | v1.8.1 |

K8S集群搭建参考:https://www.cnblogs.com/cfzy/p/16145576.html 使用gogs作为代码仓库,harbor作为镜像仓库:搭建参考(https://www.cnblogs.com/cfzy/p/16049885.html)

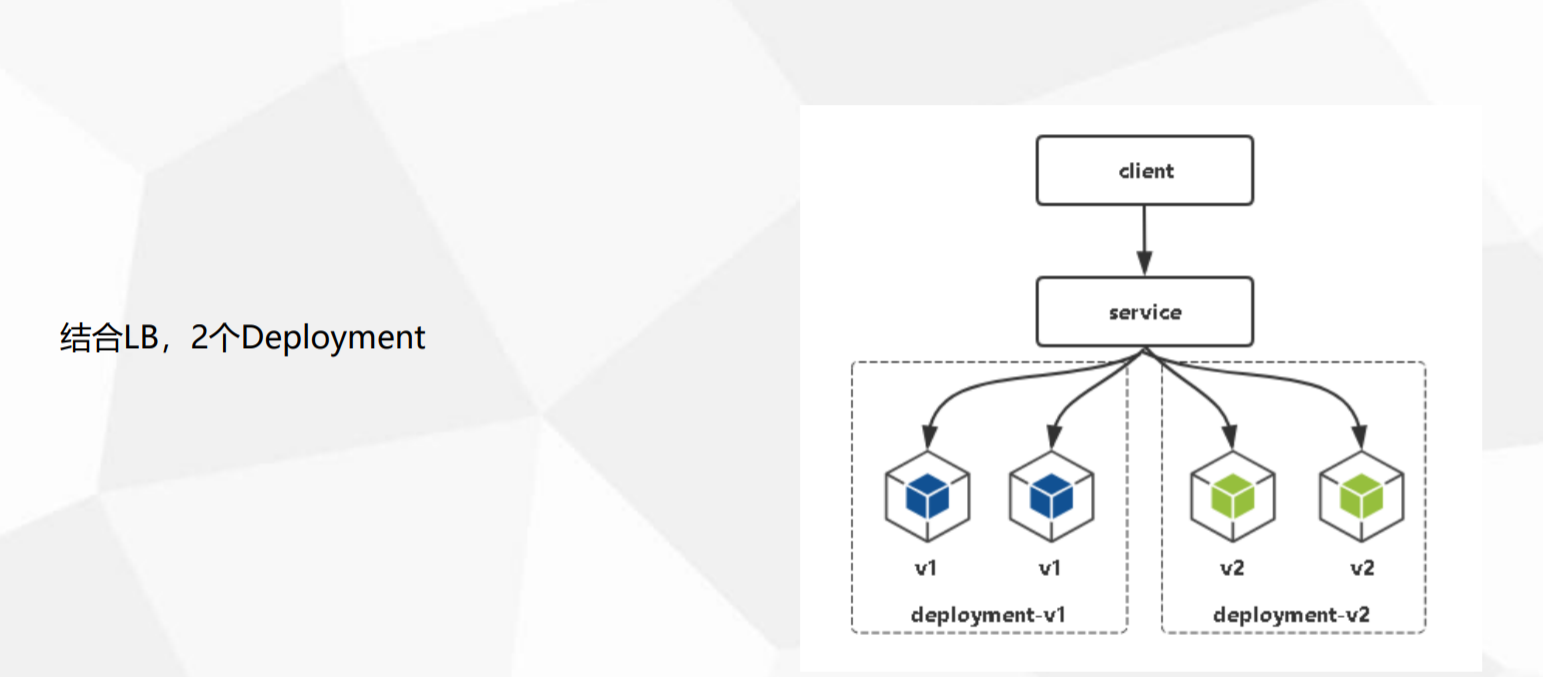

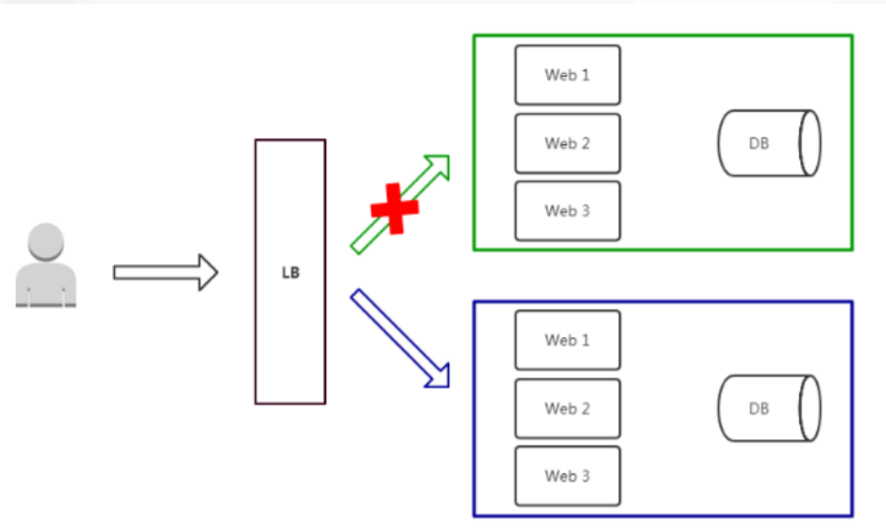

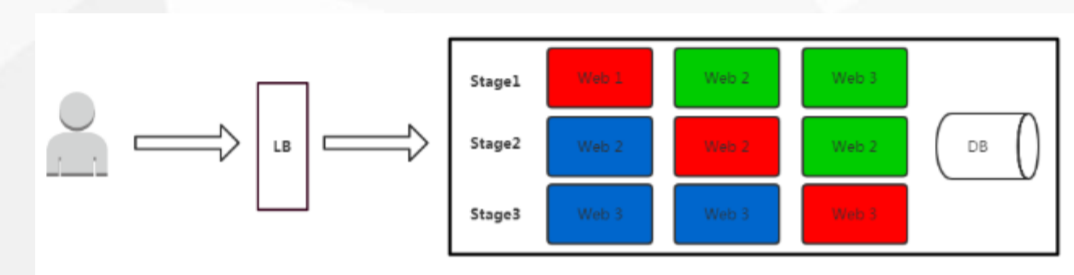

二、了解发布流程

1.蓝绿发布 项目逻辑上分为AB组,在项目升级时,首先把A组从负 载均衡中摘除,进行新版本的部署。 B组仍然继续提供 服务。A组升级完成上线,B组从负载均衡中摘除。 特点: 策略简单 升级/回滚速度快 用户无感知,平滑过渡 缺点: 需要两倍以上服务器资源 短时间内浪费一定资源成本

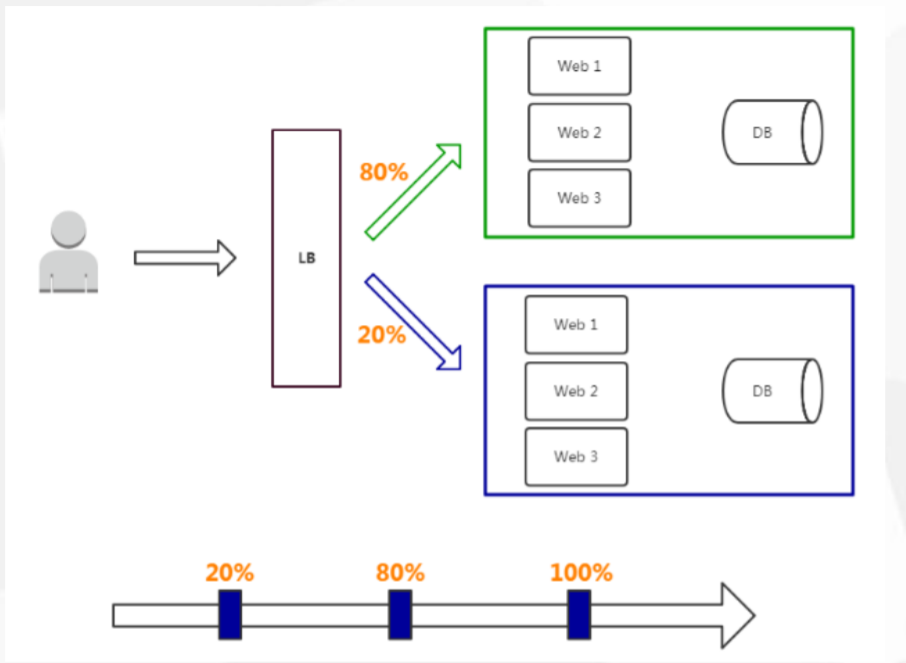

2.灰度发布 灰度发布:

只升级部分服务,即让一部分用户继续用 老版本,一部分用户开始用新版本,如果用户对新版 本没有什么意见,那么逐步扩大范围,把所有用户都 迁移到新版本上面来。 特点: 保证整体系统稳定性 用户无感知,平滑过渡 缺点: 自动化要求高

k8s中的落地方式

3.滚动发布 滚动发布: 每次只升级一个或多个服务,升级完成 后加入生产环境,不断执行这个过程,直到集群中 的全部旧版升级新版本。 特点: 用户无感知,平滑过渡 缺点: 部署周期长 发布策略较复杂 不易回滚

三、在Kubernetes中部署Jenkins

3.1 部署jenkins

创建动态PVC:为Jenkins提供持久化存储(因为之前创建了NFS作为后端存储的PVC"managed-nfs-storage",所以直接拿来用了)

apiVersion: v1

kind: PersistentVolumeClaim

metadata:

name: jenkins

spec:

storageClassName: "managed-nfs-storage"

accessModes:

- ReadWriteMany

resources:

requests:

storage: 5Gi

kubectl apply -f pvc.yml

创建deploy资源运行Jenkins服务:

apiVersion: apps/v1

kind: Deployment

metadata:

name: jenkins

labels:

name: jenkins

spec:

replicas: 1

selector:

matchLabels:

name: jenkins

template:

metadata:

name: jenkins

labels:

name: jenkins

spec:

serviceAccountName: jenkins

containers:

- name: jenkins

image: jenkins/jenkins:lts-jdk11

ports:

- containerPort: 8080

- containerPort: 50000

resources:

limits:

cpu: 2

memory: 2Gi

requests:

cpu: 1

memory: 1Gi

env:

- name: TZ

value: Asia/Shanghai

- name: LIMITS_MEMORY

valueFrom:

resourceFieldRef:

resource: limits.memory

divisor: 1Mi

volumeMounts:

- name: jenkins-home

mountPath: /var/jenkins_home

securityContext:

fsGroup: 1000

volumes:

- name: jenkins-home

persistentVolumeClaim:

claimName: jenkins

kubectl apply -f deployment.yml

创建名为Jenkins的SA,并授权:

# 创建名为jenkins的ServiceAccount apiVersion: v1 kind: ServiceAccount metadata: name: jenkins --- # 创建名为jenkins的Role,授予允许管理API组的资源Pod kind: Role apiVersion: rbac.authorization.k8s.io/v1 metadata: name: jenkins rules: - apiGroups: [""] resources: ["pods"] verbs: ["create","delete","get","list","patch","update","watch"] - apiGroups: [""] resources: ["pods/exec"] verbs: ["create","delete","get","list","patch","update","watch"] - apiGroups: [""] resources: ["pods/log"] verbs: ["get","list","watch"] - apiGroups: [""] resources: ["secrets"] verbs: ["get"] --- # 将名为jenkins的Role绑定到名为jenkins的ServiceAccount apiVersion: rbac.authorization.k8s.io/v1 kind: RoleBinding metadata: name: jenkins roleRef: apiGroup: rbac.authorization.k8s.io kind: Role name: jenkins subjects: - kind: ServiceAccount name: jenkins

kubectl apply -f rbac.yaml

暴露Jenkins服务端口:

apiVersion: v1

kind: Service

metadata:

name: jenkins

spec:

selector:

name: jenkins

type: NodePort

ports:

-

name: http

port: 80

targetPort: 8080

protocol: TCP

nodePort: 30006

-

name: agent

port: 50000

protocol: TCP

targetPort: 50000

kubectl apply -f service.yml

为Jenkins的URL设置域名访问:

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

name: jenkins

annotations:

kubernetes.io/ingress.class: "nginx"

nginx.ingress.kubernetes.io/ssl-redirect: "true"

kubernetes.io/tls-acme: "true"

nginx.ingress.kubernetes.io/client_max_body_size: 100m

nginx.ingress.kubernetes.io/proxy-body-size: 50m

nginx.ingress.kubernetes.io/proxy-request-buffering: "off"

spec:

rules:

- host: jenkins.test.com

http:

paths:

- path: /

pathType: Prefix

backend:

service:

name: jenkins

port:

number: 80

kubectl apply -f ingress.yml

在host文件添加dns记录

访问jenkins:jenkins.test.com

登录页面,安装推荐插件,如果安装失败就更换成国内源地址

3.2 配置jenkins下载插件地址,并安装必要插件

cd $jenkins_home/ sed -i 's#https://updates.jenkins.io/update-center.json#http://mirrors.tuna.tsinghua.edu.cn/jenkins/updates/update-center.json#g' hudson.model.UpdateCenter.xml cd $jenkins_home/updates 替换插件源地址: sed -i 's#https://updates.jenkins.io/download#http://mirrors.aliyun.com/jenkins#g' default.json 替换谷歌地址: sed -i 's#http://www.google.com#http://www.baidu.com#g' default.json 安装插件:Git/Git Parameter/Pipeline/Kubernetes/Kubernetes Continuous Deploy/Config File Provider Kubernetes Continuous Deploy: 用于将资源配置部署到Kubernetes Config File Provider:用于存储kubectl用于连接k8s集群的kubeconfig配置文件

注意:Kubernetes Continuous Deploy插件因为版本原因,在构建过程中报错,所以被我弃用了,用Config File Provider插件代替也可以达到目的

3.3 Jenkins在K8S中动态创建代理

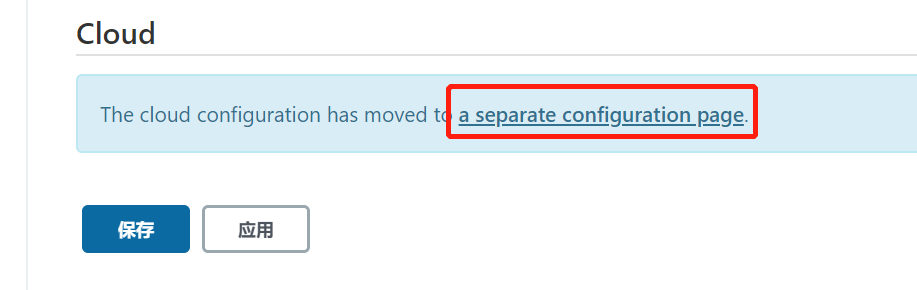

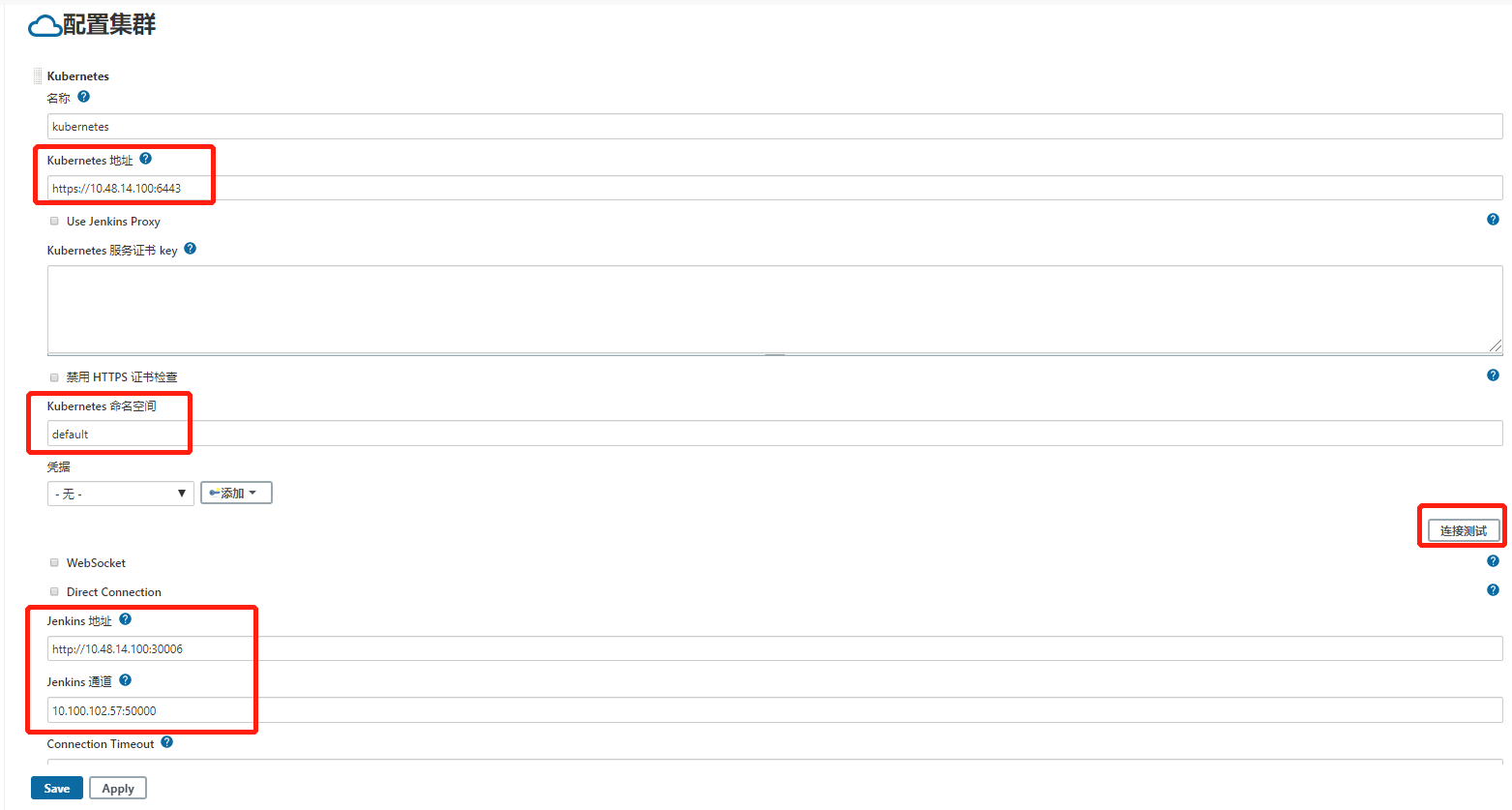

3.3.1 配置Kubernetes plugin Jenkins页面配置k8s集群信息:系统管理——系统配置——Cloud——配置集群

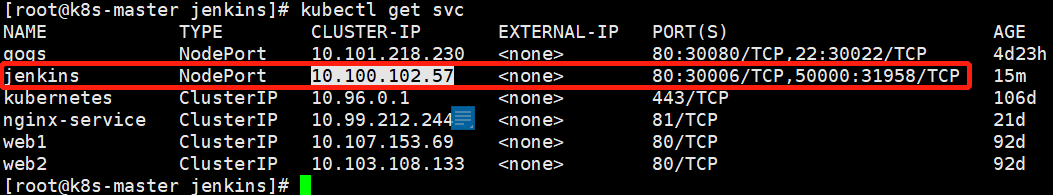

注意:这个是最重要的一个配置,决定整个安装的成败,"kubernetes地址" 用"https://kubernetes.default"或者"https://k8s集群主节点的ip+端口", 然后点击"连接测试",连接成功会出现k8s版本号。 为什么连k8s不需要凭证:jenkins是在k8s内部搭建的,所以不需要k8s凭证,如果是在外部搭建的就需要添加k8s凭证 jenkins地址: kubectl get svc #查看jenkins的端口 jenkins通道:这个参数是Jenkins Master和Jenkins Slave之间通信必须配置的,kubectl get svc #查看ip和端口

3.3.2 构建Jenkins—slave镜像(Dockerfile) #jenkins 官方有jenkins-slave 制作好的镜像,可以直接 docker pull jenkins/jnlp-slave 下载到本地并上传本地私有镜像厂库。

官方的镜像好处就是不需要再单独安装maven,kubectl 这样的命令了,如果项目是maven构建就可以直接使用了。

#我们要用gradle构建项目,所以需要安装gradle,jdk等,构建镜像所需要Dockerfile如下:(需要先下载jdk和gradle二进制文件)

构建镜像所需文件在:https://github.com/fxkjnj/kubernetes/tree/main/jenkins-for_kubernetes/jenkins-slave

FROM centos:7 MAINTAINER liang ENV JAVA_HOME=/usr/local/java

ENV PATH=$JAVA_HOME/bin:/usr/local/gradle/bin:$PATH

RUN yum install -y maven curl git libtool-ltdl-devel && \ yum clean all && \ rm -rf /var/cache/yum/* && \ mkdir -p /usr/share/jenkins COPY jdk-11.0.9 /usr/local/java

COPY gradle6.4 /usr/local/gradle COPY slave.jar /usr/share/jenkins/slave.jar COPY jenkins-slave /usr/bin/jenkins-slave COPY settings.xml /etc/maven/settings.xml

RUN chmod +x /usr/bin/jenkins-slave COPY kubectl /usr/bin ENTRYPOINT ["jenkins-slave"]

jenkins-slave:shell脚本,用于启动slave.jar

settings.xml: 修改maven官方源为阿里云源

slave.jar: agent程序,接收master下发的任务

kubectl: 让jenkins-slave可以执行kubectl命令,cp /usr/bin/kubectl ./

构建dockerfile,生成slave-agent镜像

docker build -t jenkins-slave-jdk:11 .

上传到harbor仓库

docker tag jenkins-slave-jdk:11 10.48.14.50:8888/library/jenkins-slave-jdk:11

docker login -u admin 10.48.14.50:8888

docker push 10.48.14.50:8888/library/jenkins-slave-jdk:11

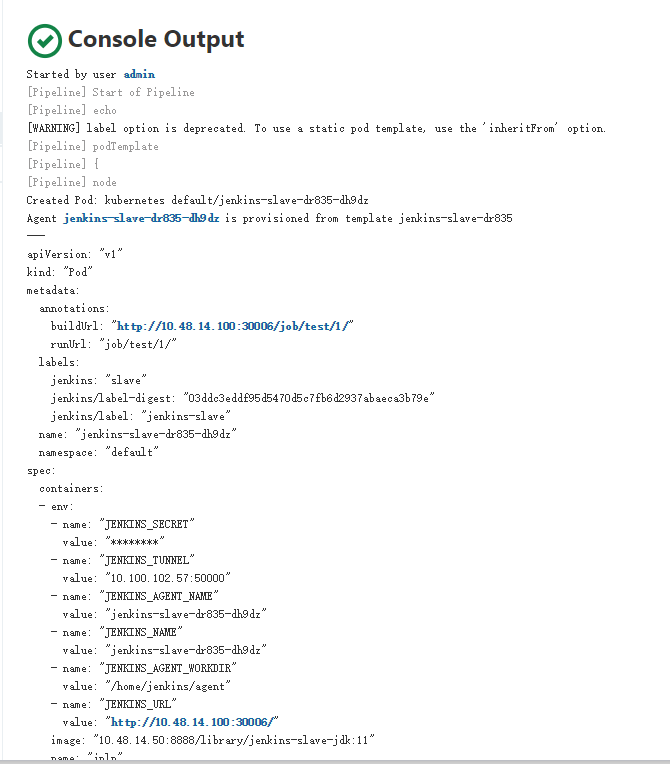

3.3.3 创建一个流水线任务,测试jenkins-slave功能(在k8s中动态创建代理)

创建流水线任务 "test"

编写pipeline测试脚本(声明式脚本)

需要注意的是,spec中定义containers名字一定要写jnlp

pipeline {

agent {

kubernetes {

label "jenkins-slave"

yaml """

kind: Pod

metadata:

name: jenkins-slave

spec:

containers:

- name: jnlp

image: "10.48.14.50:8888/library/jenkins-slave-jdk:11"

"""

}

}

stages {

stage('测试'){

steps {

sh """

echo hello

"""

}

}

}

}

保存,然后构建任务,查看日志信息

测试完成,功能正常。

在构建的时候,k8s集群default命名空间下,会临时启动一个pod(jenkins-slave-dr835-dh9dz),这个pod就是jenkins动态创建的代理,

用于执行jenkins-master下发的构建任务,当jenkins构建完成后,这个pod自动销毁

四、Jenkins在K8S中持续部署(完整流程)

4.1 持续集成和持续部署流程

持续集成CI:提交代码——代码构建——可部署的包——打包镜像——推送镜像仓库 持续部署CD:kubectl命令行/yaml文件——创建资源——暴露应用——更新镜像/回滚/扩容——删除资源 jenkins在k8s中持续集成部署流程 拉取代码:git checkout 代码编译:mvn clean

构建镜像并推送远程仓库

部署到K8S

开发测试

用kubectl命令行持续部署 1、创建资源(deployment) kubectl create deployment tomcat --image=tomcat:v1 kubectl get pods,deploy 2、发布服务(service) kubectl expose deployment tomcat --port=80 --target-port=8080 --name=tomcat-service --type=NodePort --port 集群内部访问的service端口,即通过clusterIP:port可以访问到某个service --target-port 是pod的端口,从port和nodeport来的流量经过kube-proxy流入到后端pod的targetport上,最后进入容器 nodeport:外部访问k8s集群中service的端口,如果不定义端口号会默认分配一个 containerport:是pod内部容器的端口,targetport映射到containerport(一般在deployment中设置) kubectl get service 3、升级 kubectl set image deployment tomcat 容器名称=tomcat:v2 --record=true #查看升级状态 kubectl rollout status deployment/tomcat 4、扩容缩容 kubectl scale deployment tomcat --replicas=10 5、回滚 kubectl rollout history deployment/tomcat #查看版本发布历史 kubectl rollout undo deployment/tomcat #回滚到上一版本 kubectl rollout undo deployment/tomcat --to-revision=2 #回滚到指定版本 6、删除 kubectl delete deployment/tomcat #删除deployment资源 kubectl delete service/tomcat-service #删除service资源

4.2 分步生成CI/CDpipeline语法

4.2.1 拉取代码(git checkout)

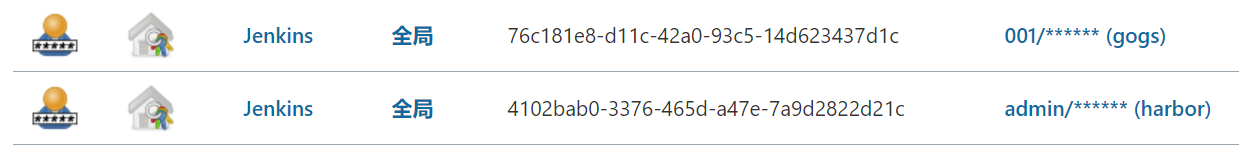

添加凭证(git仓库、harbor仓库):系统管理——凭据配置——新增harbor、git仓库的用户名/密码

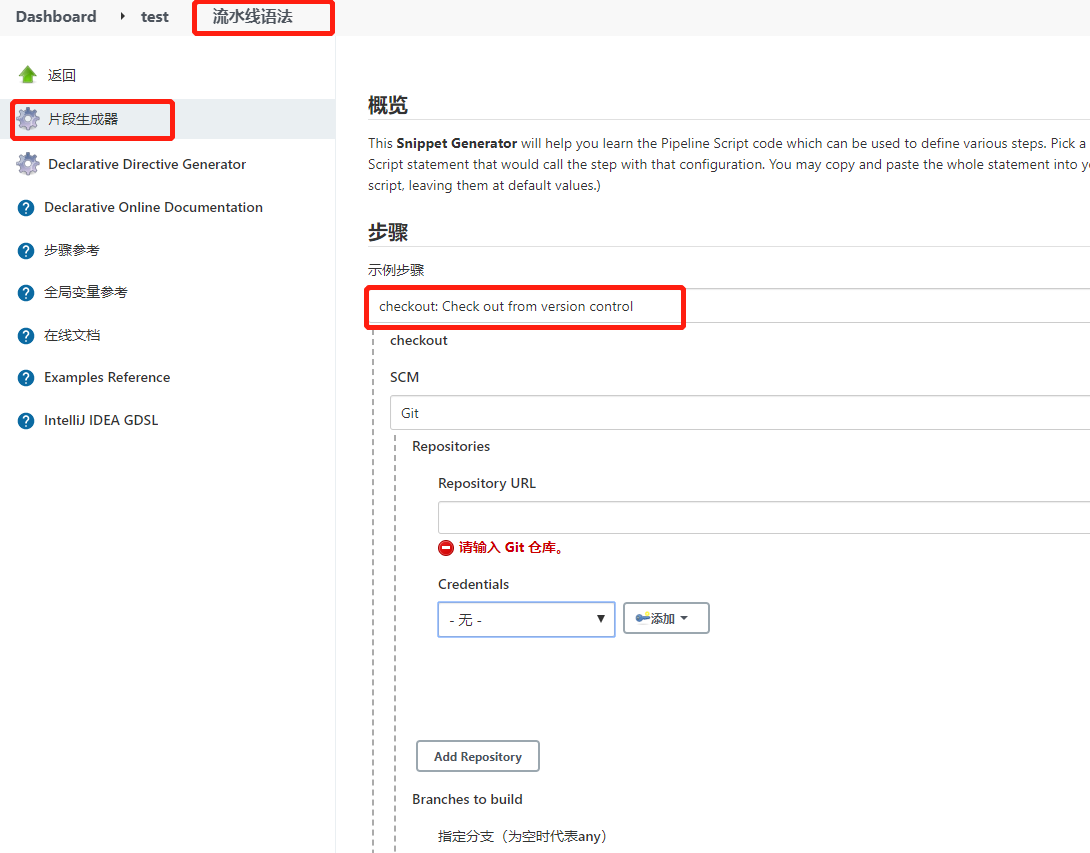

用pipeline语法生成器,生成拉取代码步骤的pipeline语法:

根据自己的代码仓库信息填写,然后点击生成流水线脚本,就有了拉取代码的脚本语法:(credentialsID、URL等信息可以定义成变量传输)

checkout([$class: 'GitSCM', branches: [[name: '*/master']], extensions: [], userRemoteConfigs: [[credentialsId: 'a5ec87ae-87a1-418e-aa49-53c4aedcd261', url: 'http://10.48.14.100:30080/001/java-demo.git']]])

4.2.2 代码编译

mvn clean package -Dmaven.test.skip=true

4.2.3 构建镜像

制作一个tomcat镜像,因为java服务要跑着tomcat中:下载安装包apache-tomcat-8.5.34.tar.gz,并编写Dockerfile

FROM centos:7

MAINTAINER liang

ENV VERSION=8.5.34

RUN yum install -y java-1.8.0-openjdk wget curl unzip iproute net-tools && \

yum clean all && \

rm -rf /var/cache/yum/*

COPY apache-tomcat-${VERSION}.tar.gz /

RUN tar -zxf apache-tomcat-${VERSION}.tar.gz && \

mv apache-tomcat-${VERSION} /usr/local/tomcat && \

rm -rf apache-tomcat-${VERSION}.tar.gz /usr/local/tomcat/webapps/* && \

mkdir /usr/local/tomcat/webapps/test && \

echo "ok" > /usr/local/tomcat/webapps/test/status.html && \

sed -i '1a JAVA_OPTS="-Djava.security.edg=file:/dev/./urandom"' /usr/local/tomcat/bin/catalina.sh && \

ln -sf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime

ENV PATH $PATH:/usr/local/tomcat/bin

WORKDIR /usr/local/tomcat

EXPOSE 8080

CMD ["catalina.sh","run"]

构建镜像,并上传镜像到harbor仓库

docker build -t tomcat:v1 .

docker tag tomcat:v1 10.48.14.50:8888/library/tomcat:v1

docker login -u admin 10.48.14.50:8888

docker push 10.48.14.50:8888/library/tomcat:v1

以tomcat:v1为基础镜像,构建项目镜像,并上传到harbor仓库

FROM 10.48.14.50:8888/library/tomcat:v1 LABEL maitainer lizhenliang RUN rm -rf /usr/local/tomcat/webapps/* ADD target/*.war /usr/local/tomcat/webapps/ROOT.war

docker build -t 10.48.14.50:8888/dev/java-demo:v1 .

docker login -u admin 10.48.14.50:8888

docker push 10.48.14.50:8888/dev/java-demo:v1

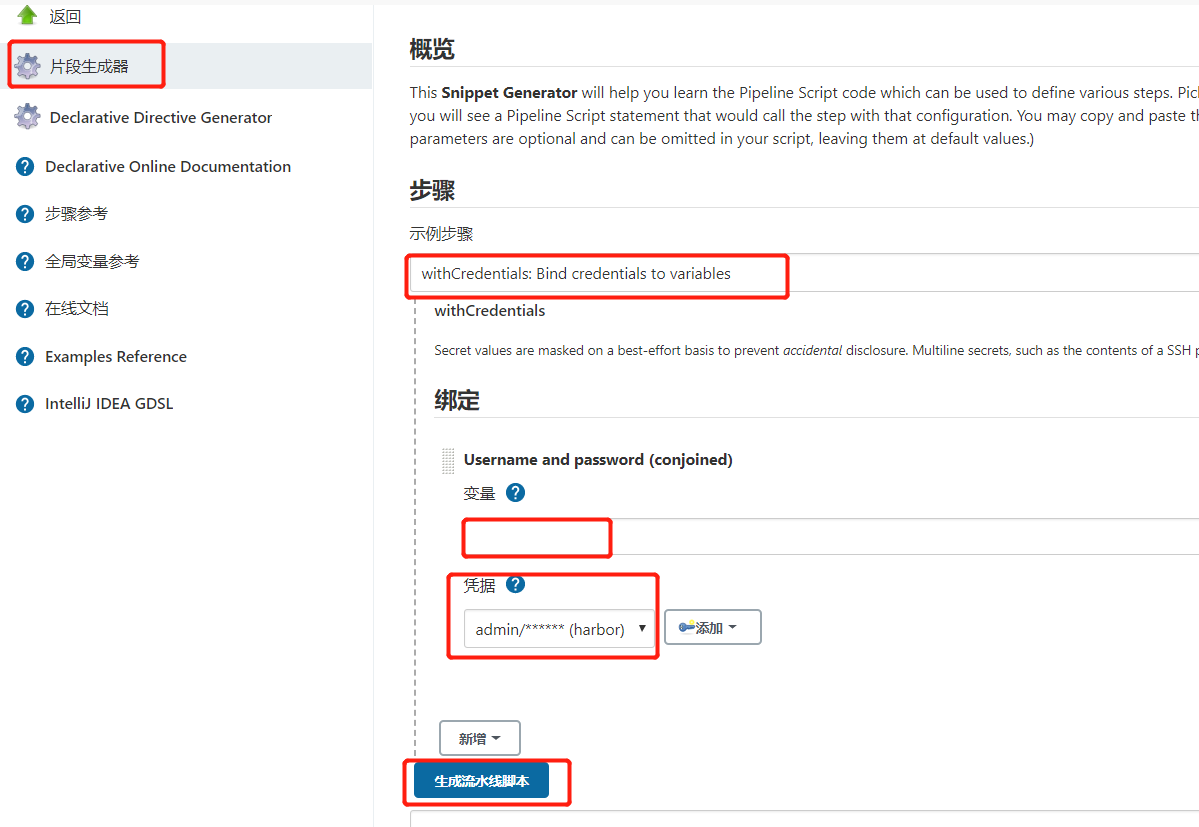

通过credential插件生成ID号来隐藏harbor用户名密码,生成pipeline语法:

pipeline中构建镜像并上传到harbor仓库:

#其中涉及的变量可以pipeline中定义

stage('构建镜像'){

steps {

withCredentials([usernamePassword(credentialsId: "${docker_registry_auth}", passwordVariable: 'password', usernameVariable: 'username')]) {

sh """

echo '

FROM ${registry}/library/tomcat:v1

MAINTAINER liang

RUN rm -rf /usr/local/tomcat/webapps/*

ADD target/*.war /usr/local/tomcat/webapps/ROOT.war

' > Dockerfile

docker build -t ${image_name} .

docker login -u ${username} -p '${password}' ${registry}

docker push ${image_name}

"""

}

}

}

4.2.4 部署服务到k8s

在k8s中为用户admin授权,生成kubeconfig文件。或者直接复制/root/.kube/config(这是一个kubeconfig文件)

Jenkins-slave镜像已经有kubectl命令,只需要kubeconfig就可以连接k8s集群

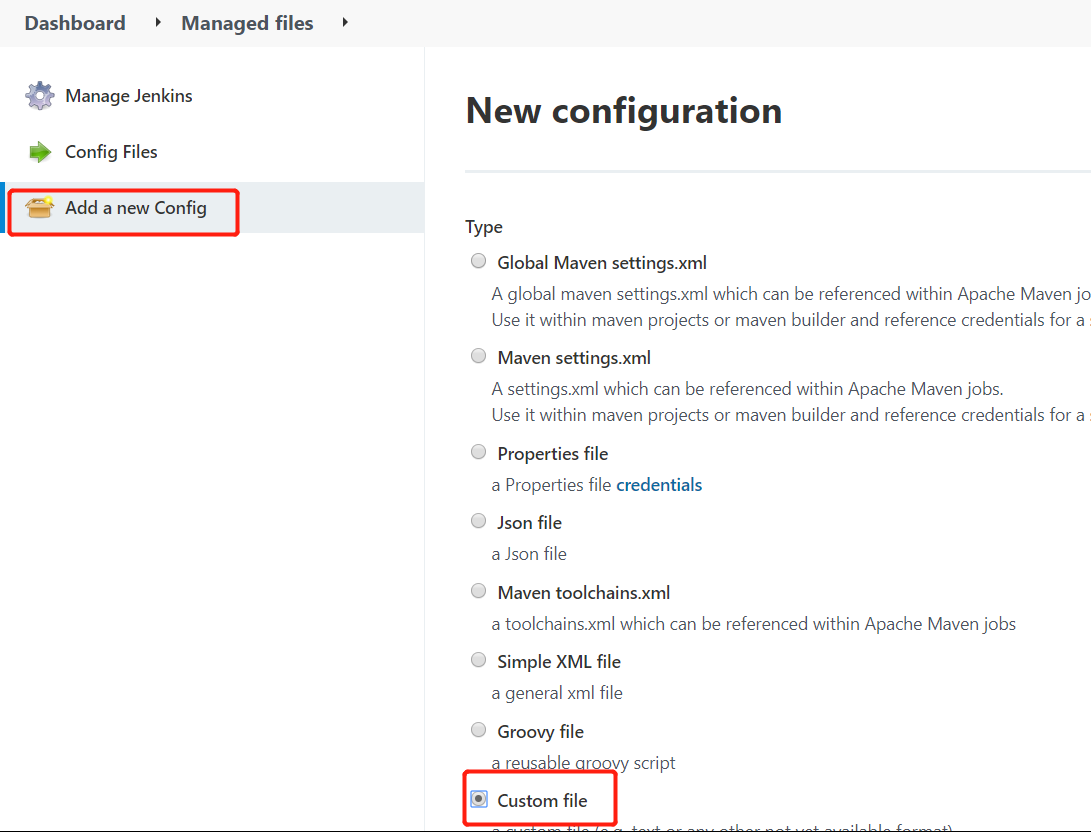

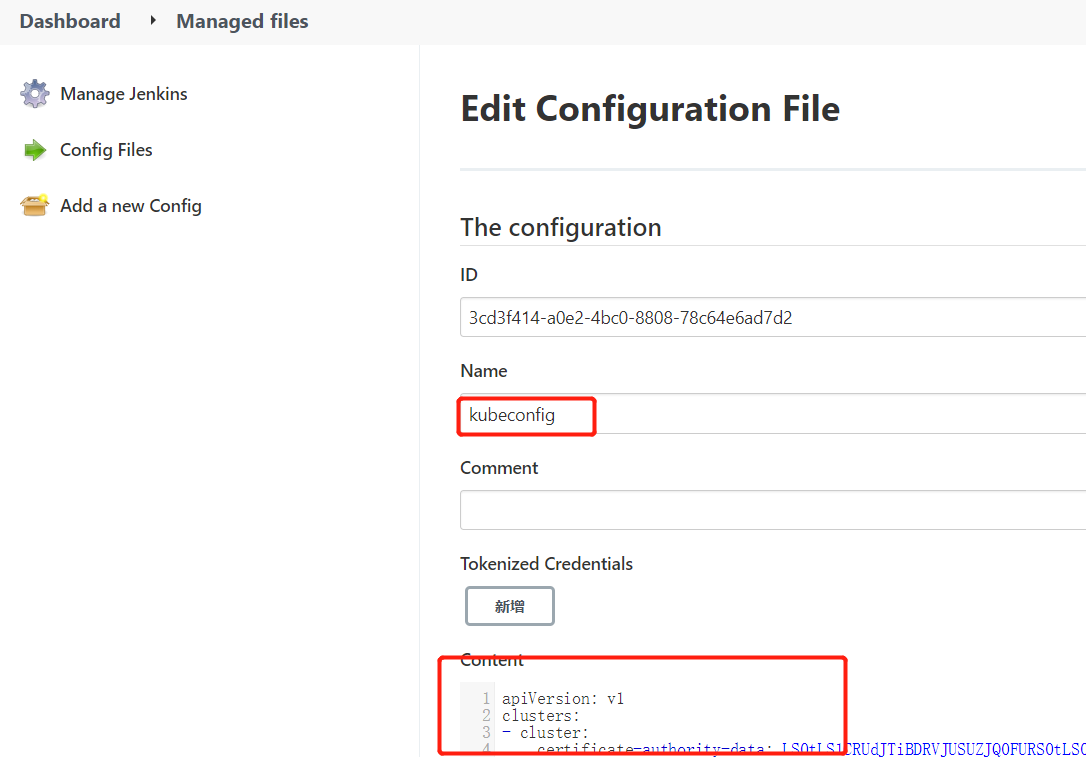

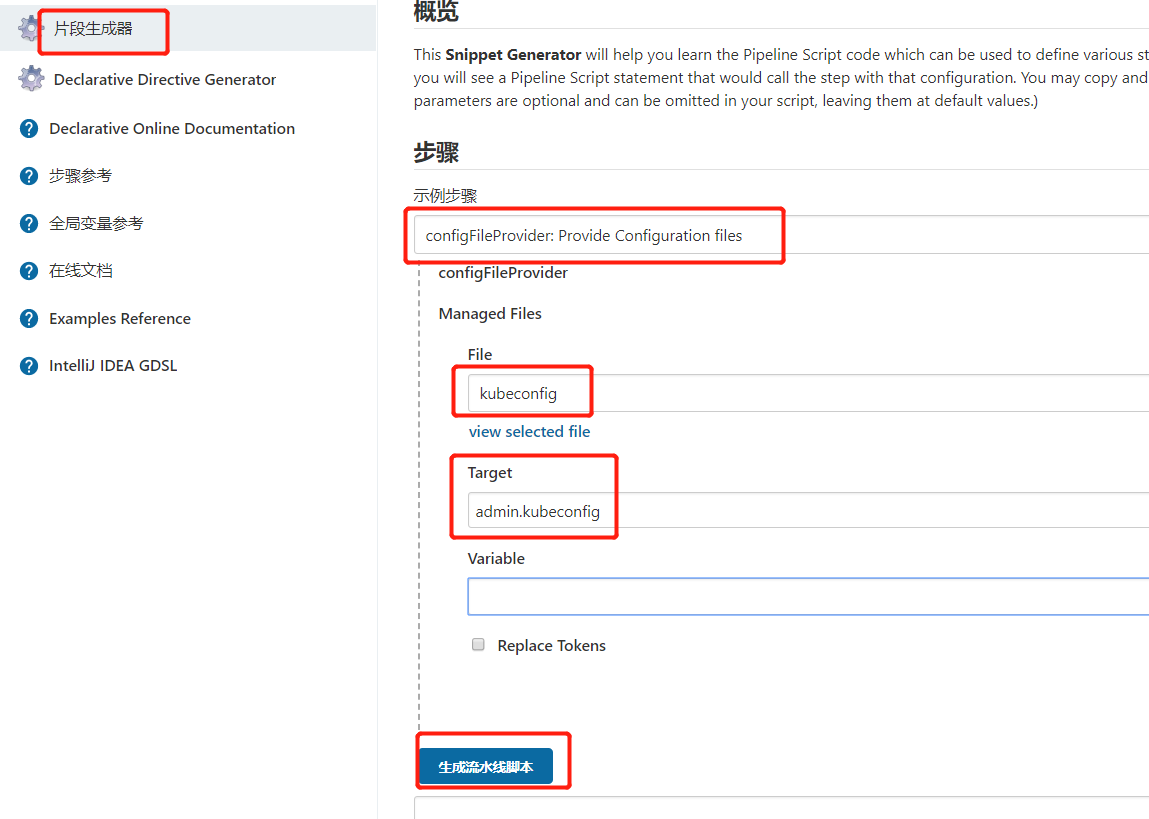

把生成的kubeconfig文件放到Jenkins中:需要安装Config File Provider插件,在Mansged files中配置

Manage Jenkins -> Managed files -> Add a new Config -> Custom file(自定义文件)

将生成的kubeconfig文件内容复制进去,复制ID号,在pipeline脚本定义变量:def k8s_auth = "ID号"

用pipeline语法生成器,生成部署资源到k8s的pipeline语法:其中Target参数可以自定义

pipeline中部署资源到k8s:

stage('部署到K8S平台'){

steps {

configFileProvider([configFile(fileId: "${k8s_auth}", targetLocation: 'admin.kubeconfig')]) {

sh """

kubectl apply -f deploy.yaml -n ${Namespace} --kubeconfig=admin.kubeconfig

sleep 10

kubectl get pod -n ${Namespace} --kubeconfig=admin.kubeconfig

"""

}

}

}

4.3 编写创建项目资源的deploy文件

项目是使用Jenkins在Kubernetes中持续部署一个无状态的tomcat pod应用;涉及到deployment控制器 以及采用NodePort 的方式去访问pod

deploy.yaml文件必须和项目代码在同一个路径下(否则kubectl无法指定yaml文件就无法创建pod),所以编写完yaml后,上传到项目仓库中

apiVersion: apps/v1

kind: Deployment

metadata:

labels:

name: java-demo

name: java-demo

namespace: NS

spec:

replicas: RSCOUNT

selector:

matchLabels:

name: java-demo

template:

metadata:

labels:

name: java-demo

spec:

imagePullSecrets:

- name: SECRET_NAME

containers:

- image: IMAGE_NAME

name: java-demo

---

apiVersion: v1

kind: Service

metadata:

namespace: NS

labels:

name: java-demo

name: java-demo

spec:

type: NodePort

ports:

- port: 80

protocol: TCP

targetPort: 8080

selector:

name: java-demo

4.4 定义环境变量,进行参数化构建,以及一些脚本优化

4.4.1 对jenkins-slave创建pod进行优化 每次maven 打包会产生依赖的库文件,为了加快每次编译打包的速度,我们可以创建一个pvc或挂载目录,用来存储maven每次打包产生的依赖文件。 以及我们需要将 k8s 集群 node 主机上的docker 命令挂载到Pod 中,用于镜像的打包 ,推送,修改后的jenkins-salve如下:

kubernetes {

label "jenkins-slave"

yaml """

kind: Pod

metadata:

name: jenkins-slave

spec:

containers:

- name: jnlp

image: "10.48.14.50:8888/library/jenkins-slave-jdk:11"

imagePullPolicy: Always

env:

- name: TZ

value: Asia/Shanghai

volumeMounts:

- name: docker-cmd

mountPath: /usr/bin/docker

- name: docker-sock

mountPath: /var/run/docker.sock

- name: maven-cache

mountPath: /root/.m2

- name: gradle-cache

mountPath: /root/.gradle

volumes:

- name: docker-cmd

hostPath:

path: /usr/bin/docker

- name: docker-sock

hostPath:

path: /var/run/docker.sock

- name: maven-cache

hostPath:

path: /tmp/m2

- name: gradle-cache

hostPath:

path: /tmp/gradle

"""

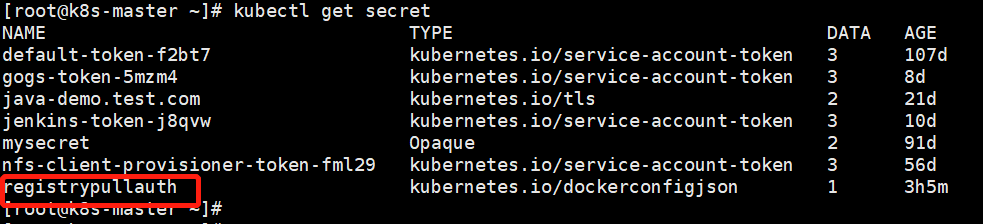

4.4.2 创建一个登录harbor仓库的secret凭证(部署项目的yaml文件要从harbor拉取镜像需要认证) kubectl create secret docker-registry registrypullauth --docker-username=admin --docker-password=Harbor12345 --docker-server=10.48.14.50:8888

4.4.3 定义环境变量,修改项目的deploy.yaml文件,进行参数化构建

定义环境变量,在pipeline语法中引用变量: def registry = "10.48.14.50:8888" #harbor仓库地址 def project = "dev" #harbor存放镜像的仓库名 def app_name = "java-demo" #项目名 def image_name = "${registry}/${project}/${app_name}:${BUILD_NUMBER}" #编译打包成的镜像名 def git_address = "http://10.48.14.100:30080/001/java-demo.git" // 认证 def secret_name = "registrypullauth" #harbor用户名密码生成的secret def docker_registry_auth = "b07ed5ba-e191-4688-9ed2-623f4753781c" #harbor用户密码生成的id def git_auth = "a5ec87ae-87a1-418e-aa49-53c4aedcd261" def k8s_auth = "3cd3f414-a0e2-4bc0-8808-78c64e6ad7d2" def JAVA_OPTS = "-Xms128m -Xmx256m -Dfile.encoding=UTF8 -Duser.timezone=GMT+08 -Dspring.profiles.active=test"

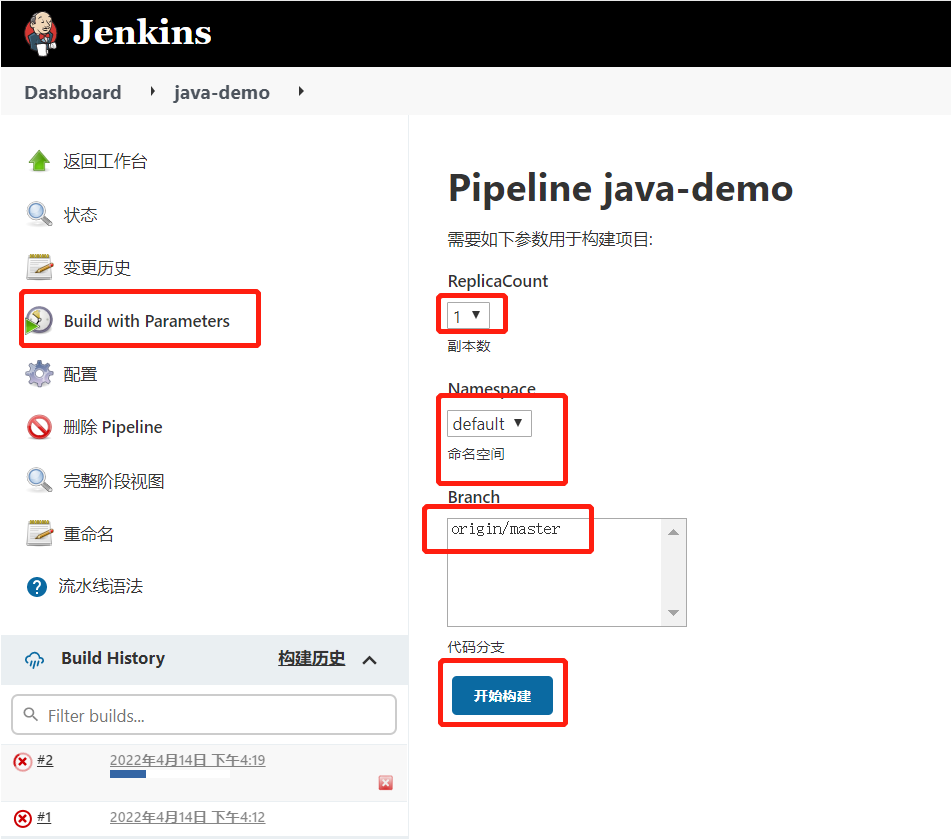

参数化构建过程中,交互内容:

代码分支(prod,dev,test)

副本数(1,3,5,7)

命名空间(prod,dev,test)

修改项目的deploy.yaml文件,替换成参数变量:

sed -i 's#IMAGE_NAME#${image_name}#' deploy.yaml

sed -i 's#SECRET_NAME#${secret_name}#' deploy.yaml

sed -i 's#RSCOUNT#${ReplicaCount}#' deploy.yaml

sed -i 's#NS#${Namespace}#' deploy.yaml

指定kubeconfig,运行项目pod

kubectl apply -f deploy.yaml -n ${Namespace} --kubeconfig=admin.kubeconfig

4.5 完整的pipeline脚本

def registry = "10.48.14.50:8888"

// 项目

def project = "dev"

def app_name = "java-demo"

def image_name = "${registry}/${project}/${app_name}:${BUILD_NUMBER}"

def git_address = "http://10.48.14.100:30080/001/java-demo.git"

// 认证

def secret_name = "registrypullauth"

def docker_registry_auth = "b07ed5ba-e191-4688-9ed2-623f4753781c"

def git_auth = "a5ec87ae-87a1-418e-aa49-53c4aedcd261"

def k8s_auth = "3cd3f414-a0e2-4bc0-8808-78c64e6ad7d2"

def JAVA_OPTS = "-Xms128m -Xmx256m -Dfile.encoding=UTF8 -Duser.timezone=GMT+08 -Dspring.profiles.active=test"

pipeline {

agent {

kubernetes {

label "jenkins-slave"

yaml """

kind: Pod

metadata:

name: jenkins-slave

spec:

containers:

- name: jnlp

image: "${registry}/library/jenkins-slave-jdk:11"

imagePullPolicy: Always

env:

- name: TZ

value: Asia/Shanghai

volumeMounts:

- name: docker-cmd

mountPath: /usr/bin/docker

- name: docker-sock

mountPath: /var/run/docker.sock

- name: gradle-cache

mountPath: /root/.gradle

- name: maven-cache

mountPath: /root/.m2

volumes:

- name: docker-cmd

hostPath:

path: /usr/bin/docker

- name: docker-sock

hostPath:

path: /var/run/docker.sock

- name: gradle-cache

hostPath:

path: /tmp/gradle

- name: maven-cache

hostPath:

path: /tmp/m2

"""

}

}

parameters {

choice (choices: ['1', '3', '5', '7'], description: '副本数', name: 'ReplicaCount')

choice (choices: ['dev','test','prod','default'], description: '命名空间', name: 'Namespace')

}

stages {

stage('拉取代码'){

steps {

checkout([$class: 'GitSCM',

branches: [[name: "${params.Branch}"]],

doGenerateSubmoduleConfigurations: false,

extensions: [], submoduleCfg: [],

userRemoteConfigs: [[credentialsId: "${git_auth}", url: "${git_address}"]]

])

}

}

stage('代码编译'){

steps {

sh """

pwd

mvn clean package -Dmaven.test.skip=true

"""

}

}

stage('构建镜像'){

steps {

withCredentials([usernamePassword(credentialsId: "${docker_registry_auth}", passwordVariable: 'password', usernameVariable: 'username')]) {

sh """

echo '

FROM ${registry}/library/tomcat:v1

LABEL maitainer lizhenliang

RUN rm -rf /usr/local/tomcat/webapps/*

ADD target/*.war /usr/local/tomcat/webapps/ROOT.war

' > Dockerfile

docker build -t ${image_name} .

docker login -u ${username} -p '${password}' ${registry}

docker push ${image_name}

"""

}

}

}

stage('部署到K8S平台'){

steps {

configFileProvider([configFile(fileId: "${k8s_auth}", targetLocation: 'admin.kubeconfig')]) {

sh """

pwd

ls

sed -i 's#IMAGE_NAME#${image_name}#' deploy.yaml

sed -i 's#SECRET_NAME#${secret_name}#' deploy.yaml

sed -i 's#RSCOUNT#${ReplicaCount}#' deploy.yaml

sed -i 's#NS#${Namespace}#' deploy.yaml

kubectl apply -f deploy.yaml -n ${Namespace} --kubeconfig=admin.kubeconfig

sleep 10

kubectl get pod -n ${Namespace} --kubeconfig=admin.kubeconfig

"""

}

}

}

}

}

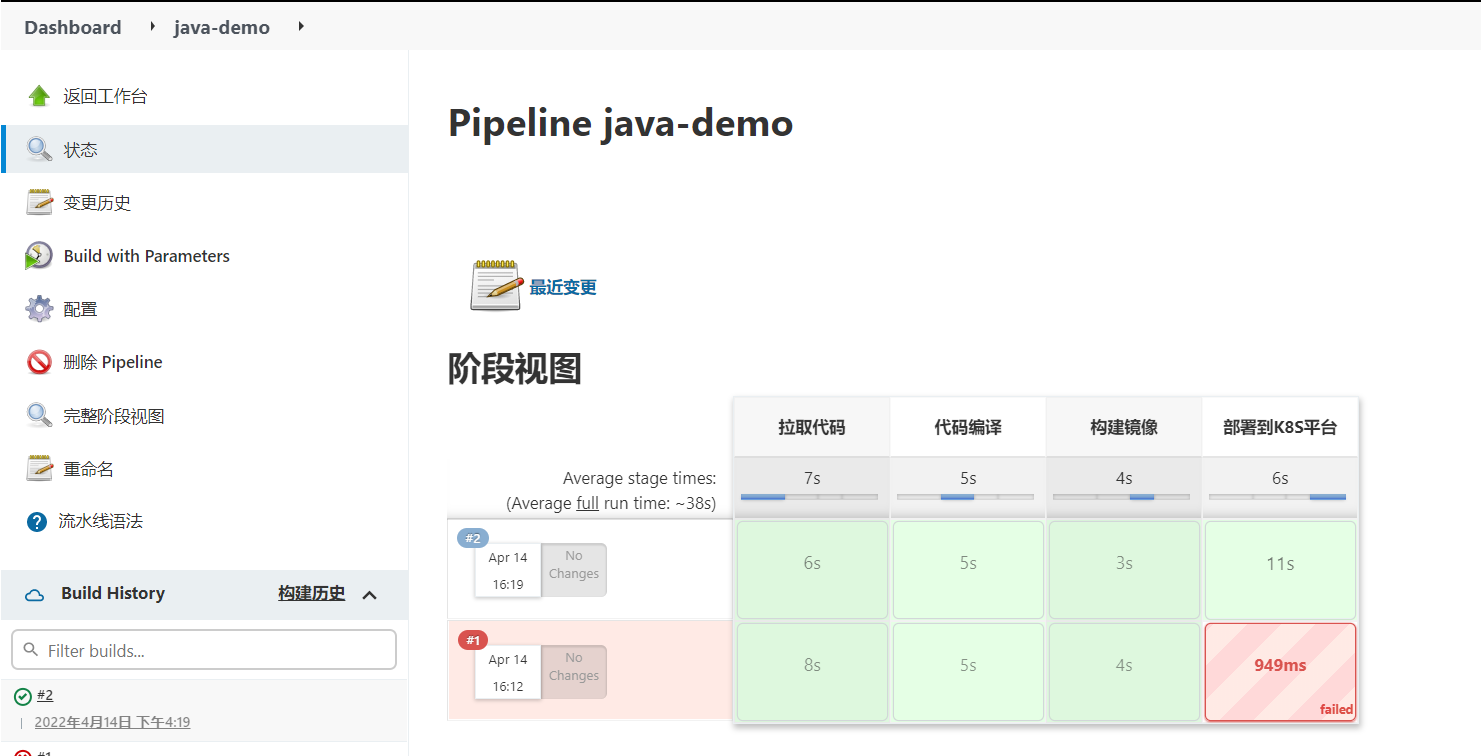

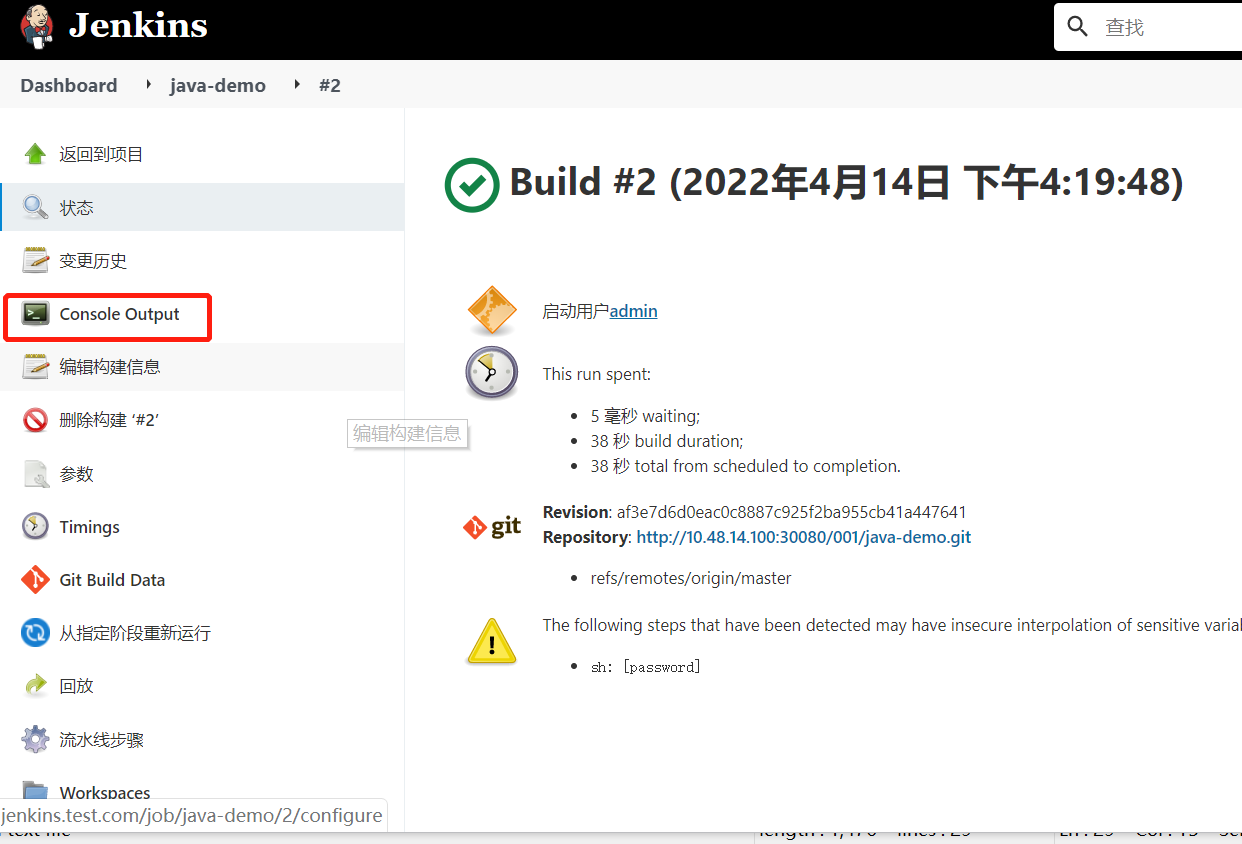

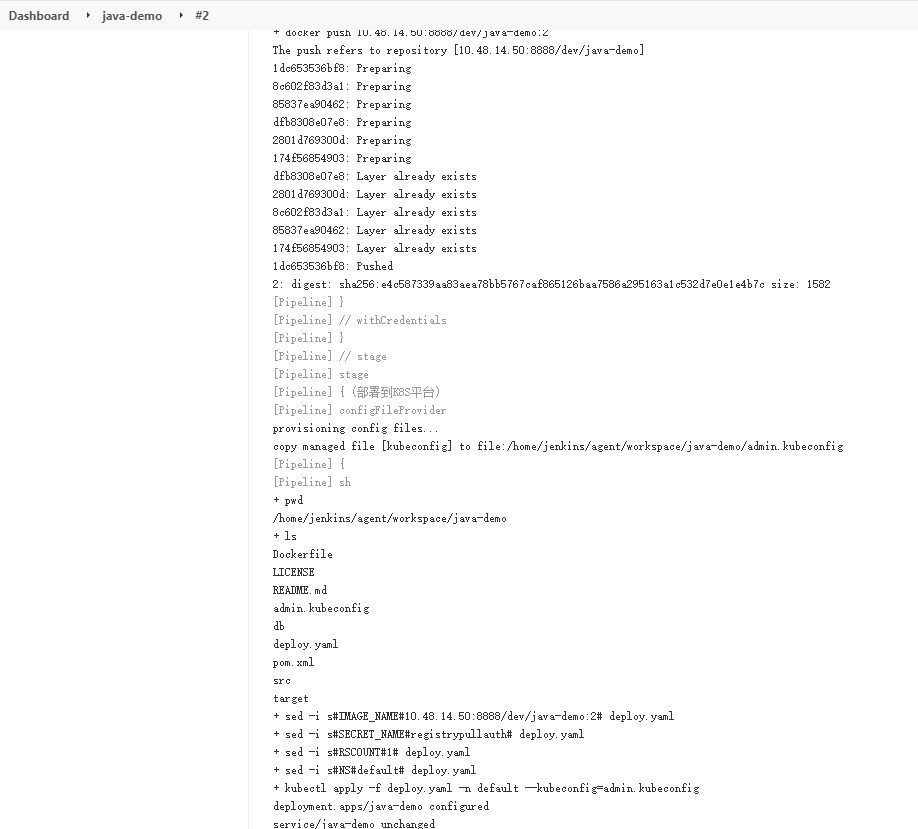

4.6 构建项目,查询日志

查看构建过程和日志

原文链接:https://www.cnblogs.com/cfzy/p/15692758.html

作者:等风来~~

本博客所有文章仅用于学习、研究和交流目的,欢迎转载。

如果觉得文章写得不错,或者帮助到您了,请点个赞。

如果文章有写的不足的地方,请你一定要指出,因为这样不光是对我写文章的一种促进,也是一份对后面看此文章的人的责任。谢谢。

浙公网安备 33010602011771号

浙公网安备 33010602011771号