在一台服务器里面通过docker-compose部署zookeeper,kafka集群

在同一台服务器里面部署zk,kafka集群

1、首先在服务器安装docker和docker-compose环境(这里不多说)

2、拉取zookeeper和kafka镜像到服务器上

可以通过以下命令来查找相关的镜像

docker search 镜像名称

docker search zookeeper

docker search kafka

3、通过 命令docker pull 镜像名称:版本号 拉取镜像

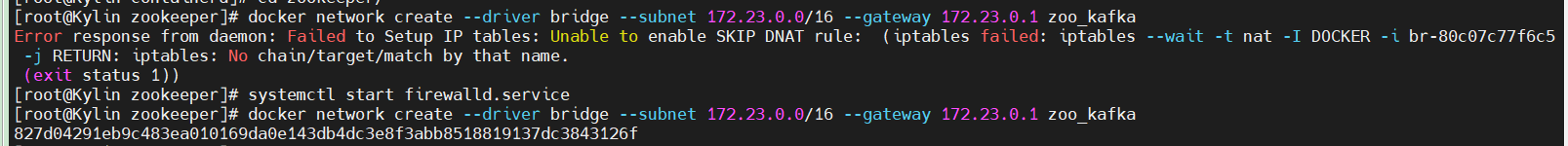

4、创建集群虚拟网络

docker network create --driver bridge --subnet 172.23.0.0/16 --gateway 172.23.0.1 zoo_kafka

如果报错:(开启防火墙)

5、分别创建目录/zookeeper, /kafka,并在相应的目录里面新建docker-compose.yml文件

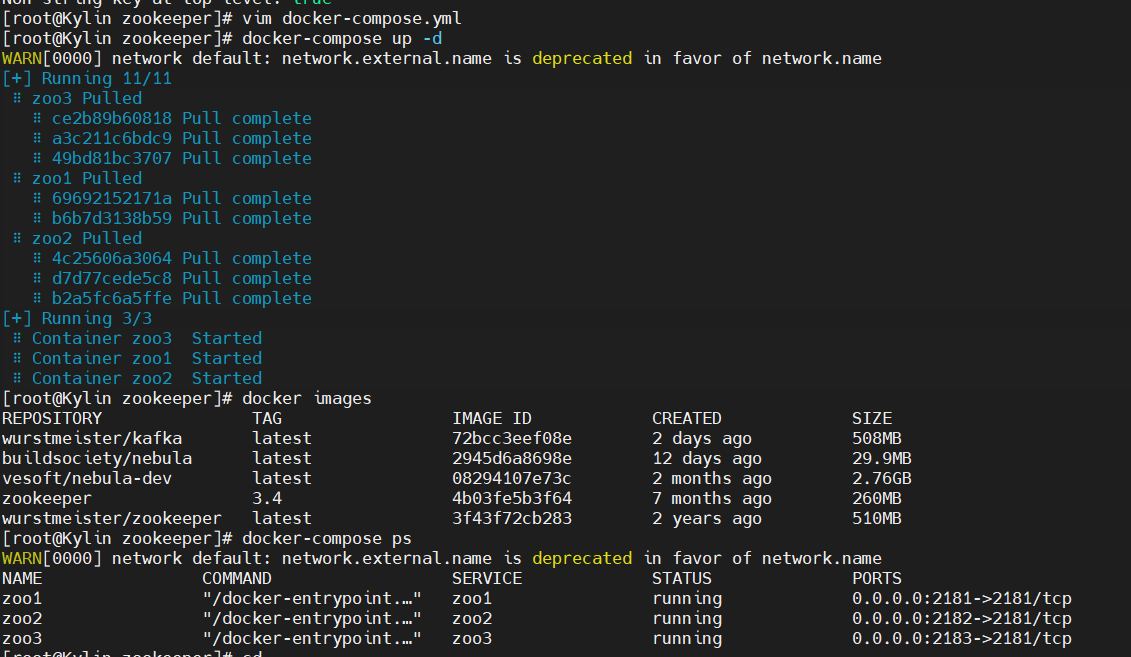

6、部署zookeeper

docker-compose.yml内容如下:

version: '2' services: zoo1: image: zookeeper:3.4 # 镜像名称 restart: always # 当发生错误时自动重启 hostname: zoo1 container_name: zoo1 privileged: true ports: # 端口 - 2181:2181 volumes: # 挂载数据卷 - ./zoo1/data:/data - ./zoo1/datalog:/datalog environment: TZ: Asia/Shanghai ZOO_MY_ID: 1 # 节点ID ZOO_PORT: 2181 # zookeeper端口号 ZOO_SERVERS: server.1=zoo1:2888:3888 server.2=zoo2:2888:3888 server.3=zoo3:2888:3888 # zookeeper节点列表 networks: default: ipv4_address: 172.23.0.11 zoo2: image: zookeeper:3.4 restart: always hostname: zoo2 container_name: zoo2 privileged: true ports: - 2182:2181 volumes: - ./zoo2/data:/data - ./zoo2/datalog:/datalog environment: TZ: Asia/Shanghai ZOO_MY_ID: 2 ZOO_PORT: 2181 ZOO_SERVERS: server.1=zoo1:2888:3888 server.2=zoo2:2888:3888 server.3=zoo3:2888:3888 networks: default: ipv4_address: 172.23.0.12 zoo3: image: zookeeper:3.4 restart: always hostname: zoo3 container_name: zoo3 privileged: true ports: - 2183:2181 volumes: - ./zoo3/data:/data - ./zoo3/datalog:/datalog environment: TZ: Asia/Shanghai ZOO_MY_ID: 3 ZOO_PORT: 2181 ZOO_SERVERS: server.1=zoo1:2888:3888 server.2=zoo2:2888:3888 server.3=zoo3:2888:3888 networks: default: ipv4_address: 172.23.0.13 networks: default: external: name: zoo_kafka

在/zookeeper路径下执行:

docker-compose up -d

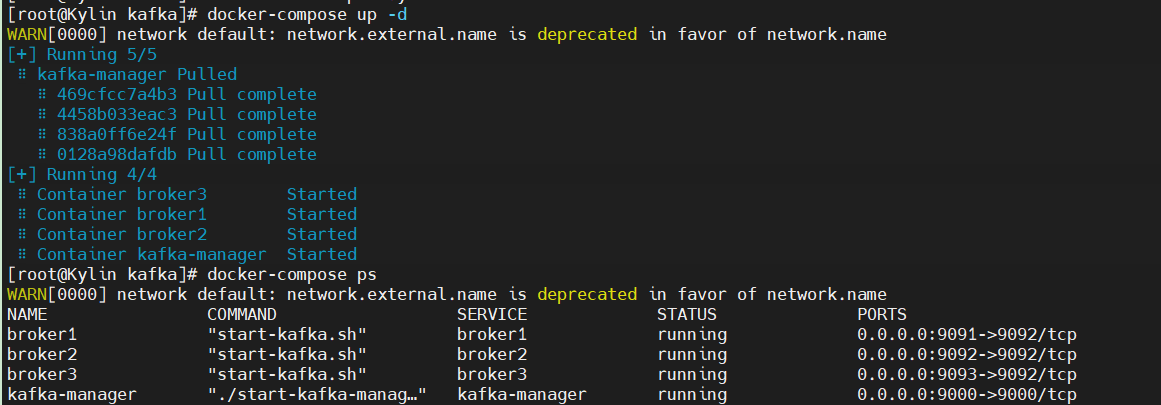

7、部署kafka

docker-compose.yml内容如下:

version: '2' services: broker1: image: wurstmeister/kafka restart: always hostname: broker1 container_name: broker1 privileged: true ports: - "9091:9092" environment: KAFKA_BROKER_ID: 1 KAFKA_LISTENERS: PLAINTEXT://broker1:9092 KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://broker1:9092 KAFKA_ADVERTISED_HOST_NAME: broker1 KAFKA_ADVERTISED_PORT: 9092 KAFKA_ZOOKEEPER_CONNECT: zoo1:2181,zoo2:2181,zoo3:2181 volumes: - /var/run/docker.sock:/var/run/docker.sock - ./broker1:/kafka/kafka\-logs\-broker1 external_links: - zoo1 - zoo2 - zoo3 networks: default: ipv4_address: 172.23.0.14 broker2: image: wurstmeister/kafka restart: always hostname: broker2 container_name: broker2 privileged: true ports: - "9092:9092" environment: KAFKA_BROKER_ID: 2 KAFKA_LISTENERS: PLAINTEXT://broker2:9092 KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://broker2:9092 KAFKA_ADVERTISED_HOST_NAME: broker2 KAFKA_ADVERTISED_PORT: 9092 KAFKA_ZOOKEEPER_CONNECT: zoo1:2181,zoo2:2181,zoo3:2181 volumes: - /var/run/docker.sock:/var/run/docker.sock - ./broker2:/kafka/kafka\-logs\-broker2 external_links: # 连接本compose文件以外的container - zoo1 - zoo2 - zoo3 networks: default: ipv4_address: 172.23.0.15 broker3: image: wurstmeister/kafka restart: always hostname: broker3 container_name: broker3 privileged: true ports: - "9093:9092" environment: KAFKA_BROKER_ID: 3 KAFKA_LISTENERS: PLAINTEXT://broker3:9092 KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://broker3:9092 KAFKA_ADVERTISED_HOST_NAME: broker3 KAFKA_ADVERTISED_PORT: 9092 KAFKA_ZOOKEEPER_CONNECT: zoo1:2181,zoo2:2181,zoo3:2181 volumes: - /var/run/docker.sock:/var/run/docker.sock - ./broker3:/kafka/kafka\-logs\-broker3 external_links: # 连接本compose文件以外的container - zoo1 - zoo2 - zoo3 networks: default: ipv4_address: 172.23.0.16 kafka-manager: image: sheepkiller/kafka-manager:latest restart: always container_name: kafka-manager hostname: kafka-manager ports: - "9000:9000" links: # 连接本compose文件创建的container - broker1 - broker2 - broker3 external_links: # 连接本compose文件以外的container - zoo1 - zoo2 - zoo3 environment: ZK_HOSTS: zoo1:2181,zoo2:2181,zoo3:2181 KAFKA_BROKERS: broker1:9092,broker2:9092,broker3:9092 APPLICATION_SECRET: letmein KM_ARGS: -Djava.net.preferIPv4Stack=true networks: default: ipv4_address: 172.23.0.10 networks: default: external: # 使用已创建的网络 name: zoo_kafka

在/kafka路径下执行:

docker-compose up -d

8、测试是否搭建成功

进入kafka容器

docker exec -it broker1 /bin/bash

bash-5.1# kafka-topics.sh --create --zookeeper 172.23.0.11:2181 --replication-factor 1 --partitions 1 --topic test Created topic test. bash-5.1# kafka-console-producer.sh --broker-list 172.23.0.16:9092 --topic test >sdfdsf >dsf bash-5.1# kafka-console-consumer.sh --bootstrap-server 172.23.0.16:9092 --topic test --from-beginning sdfdsf dsf

浙公网安备 33010602011771号

浙公网安备 33010602011771号